NVIDIA GeForce 6800 Ultra: The Next Step Forward

by Derek Wilson on April 14, 2004 8:42 AM EST, posted in GPUs

Anisotropic, Trilinear, and Antialiasing

There was a great deal of controversy last year over some of the "optimizations" NVIDIA included in its drivers. We have

NVIDIA's new driver defaults to the same adaptive anisotropic filtering and trilinear filtering optimizations that NVIDIA currently uses in its 50-series drivers, but users can now disable these features. Trilinear filtering optimizations can be turned off (so full trilinear filtering is performed all the time), and a new "High Quality" rendering mode turns off adaptive anisotropic filtering. This means that anyone who wants (or needs) accurate trilinear and anisotropic filtering can have it. The option to disable trilinear optimizations is currently available in the 56.72

Unfortunately, it seems that NVIDIA is switching to a method of calculating anisotropic filtering based on a weighted Manhattan distance calculation. We appreciated the fact that NVIDIA's previous implementation of anisotropic filtering employed a Euclidean distance calculation, which is less sensitive to the orientation of a surface than a weighted Manhattan calculation.
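Anisotropic filtering hardware has to estimate how long a pixel's footprint is in texture space in order to decide how many samples to take and which mip level to read. As a minimal Python sketch of why the choice of distance metric matters, the code below measures one fixed-length vector at several angles. The Euclidean length stays constant, while a weighted Manhattan estimate of the form a·max(|x|, |y|) + b·min(|x|, |y|) drifts with orientation. The weights a = 1 and b = 0.5 are assumptions chosen for illustration; they are not NVIDIA's or ATI's actual hardware formula.

```python
import math


def euclidean_length(dx: float, dy: float) -> float:
    """Exact length of a texture-space vector; independent of its orientation."""
    return math.hypot(dx, dy)


def weighted_manhattan_length(dx: float, dy: float,
                              a: float = 1.0, b: float = 0.5) -> float:
    """Hypothetical weighted Manhattan estimate: a * max(|dx|, |dy|) + b * min(|dx|, |dy|).

    The weights a and b are illustrative assumptions, not vendor values.
    """
    big = max(abs(dx), abs(dy))
    small = min(abs(dx), abs(dy))
    return a * big + b * small


def sweep(length: float = 1.0, steps: int = 6) -> list[tuple[float, float, float]]:
    """Rotate a vector of fixed length from 0 to 90 degrees and measure it both ways.

    Returns (angle_in_degrees, euclidean_length, weighted_manhattan_length) rows.
    """
    rows = []
    for i in range(steps + 1):
        theta = (math.pi / 2) * i / steps
        dx, dy = length * math.cos(theta), length * math.sin(theta)
        rows.append((math.degrees(theta),
                     euclidean_length(dx, dy),
                     weighted_manhattan_length(dx, dy)))
    return rows


if __name__ == "__main__":
    for angle, euclid, manhattan in sweep():
        print(f"{angle:5.1f} deg  euclidean={euclid:.3f}  weighted_manhattan={manhattan:.3f}")
```

With these assumed weights the estimate is exact along the axes but overshoots by up to about 12% in between, so any filtering decision built on it varies with the orientation of the surface, while the Euclidean measure does not.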

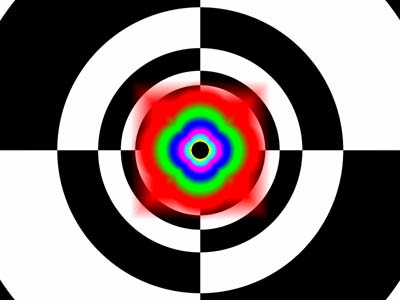

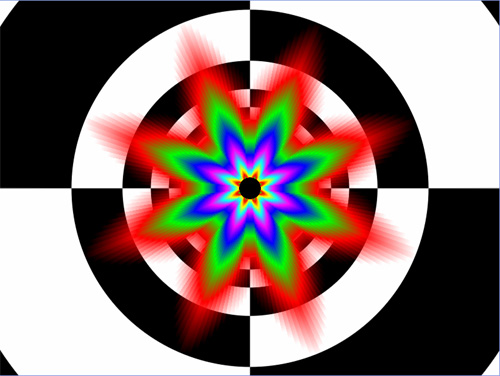

This is how NVIDIA used to do anisotropic filtering.

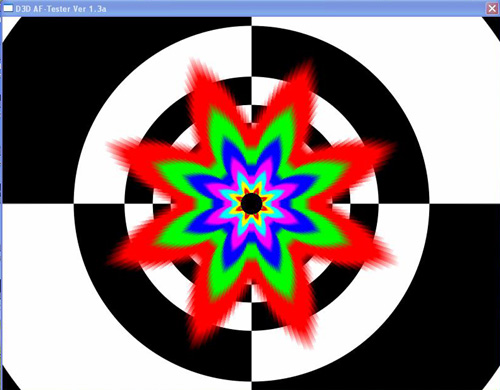

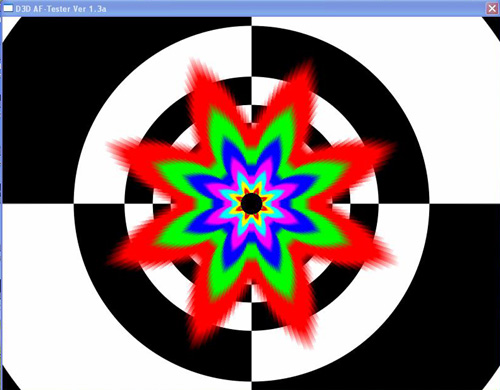

This is anisotropic filtering under the 60.72 driver.

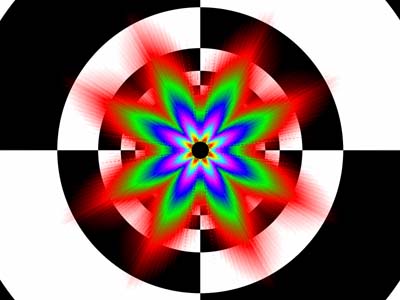

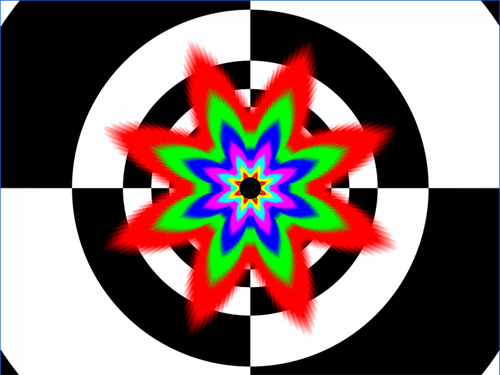

This is how ATI does anisotropic filtering.

The advantage is that NVIDIA now takes a smaller performance hit when enabling anisotropic filtering, and our anisotropic filtering comparisons will also be more apples to apples (ATI also uses a weighted Manhattan scheme for distance calculations). In games where angled, textured surfaces rotate around the z-axis (the axis that comes "out" of the monitor) in a 3D world, both ATI and NVIDIA will show the same fluctuations in anisotropic rendering quality. We would have liked to see ATI alter its implementation rather than NVIDIA, but there is something to be said for both companies doing the same thing.

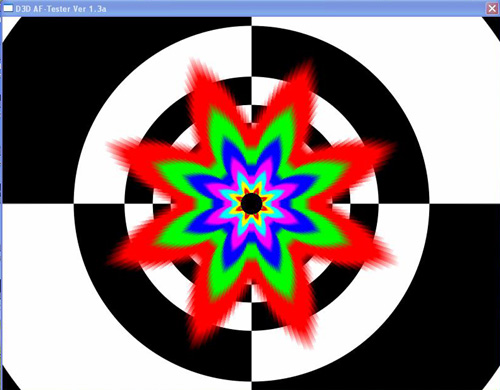

We had a little time to play with the D3D AF Tester that we used in last year's image quality article. We can confirm that turning off the trilinear filtering optimizations results in full trilinear filtering being performed all the time. Previously, neither ATI nor NVIDIA did this much trilinear filtering; check out the screenshots.

Trilinear optimizations enabled.

Trilinear optimizations disabled.
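Full trilinear filtering blends the two nearest mip levels using the fractional part of the level of detail (LOD). The trilinear optimizations of this era were widely described (often as "brilinear" filtering) as confining that blend to a narrow band around each mip transition and using plain bilinear filtering elsewhere. The minimal Python sketch below compares the two blend weights under that description; the band width of 0.25 LOD is a hypothetical value, since the width NVIDIA actually uses is not stated here.

```python
import math


def full_trilinear_weight(lod: float) -> float:
    """Weight given to the next (coarser) mip level under full trilinear filtering.

    The blend is simply the fractional part of the LOD, so every pixel that falls
    between two mip levels mixes both of them.
    """
    return lod - math.floor(lod)


def reduced_trilinear_weight(lod: float, band: float = 0.25) -> float:
    """Hypothetical 'optimized' blend: bilinear outside a band around the transition.

    `band` is the assumed width (in LOD units) of the blended region, centred on
    the halfway point between two mip levels; it is an illustrative value only.
    """
    frac = lod - math.floor(lod)
    lo = 0.5 - band / 2
    hi = 0.5 + band / 2
    if frac <= lo:
        return 0.0  # pure bilinear from the finer mip level
    if frac >= hi:
        return 1.0  # pure bilinear from the coarser mip level
    return (frac - lo) / band  # linear ramp inside the narrow band


if __name__ == "__main__":
    for lod in [2.0, 2.1, 2.3, 2.4, 2.5, 2.6, 2.7, 2.9]:
        print(f"LOD {lod:.1f}: full={full_trilinear_weight(lod):.2f} "
              f"reduced={reduced_trilinear_weight(lod):.2f}")
```

In a colored-mipmap test such as the D3D AF Tester, a reduced blend like this would appear as wide solid-color bands with abrupt edges, while full trilinear filtering produces a smooth gradient between every pair of levels.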

When comparing "Quality" mode to "High Quality" mode, we didn't observe any difference in anisotropic rendering fidelity. Of course, this is still a beta driver, so not everything may be working as intended yet. We'll definitely keep checking this as the driver matures. For now, take a look.

Quality Mode.

High Quality Mode.

On a very positive note, NVIDIA has finally adopted a rotated grid antialiasing scheme. Here we can take a glimpse at what the new method does for rendering quality in Jedi Knight: Jedi Academy.

Jedi Knight without AA

Jedi Knight with 4x AA

It's nice to finally see such smooth near-vertical and near-horizontal lines from a graphics company other than ATI. Of course, ATI has yet to throw its offering into the ring, and it is very possible that it has raised its own bar for filtering quality.
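Why a rotated grid helps near-vertical and near-horizontal edges can be shown by counting coverage levels. A near-vertical edge drifts sideways slightly from one scanline to the next, so the number of shading steps along it is limited by how many distinct horizontal sample offsets the pattern has. The Python sketch below sweeps a vertical edge across one pixel for two 4x patterns. The ordered grid is the textbook 2x2 layout, and the rotated-grid positions are an illustrative assumption (a commonly used 4x rotated layout), not NVIDIA's documented sample locations.

```python
from fractions import Fraction

# Sample positions inside a unit pixel, as (x, y) in [0, 1).
# Both patterns are illustrative assumptions, not NVIDIA's documented positions.
ORDERED_GRID_4X = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
ROTATED_GRID_4X = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def coverage_vertical_edge(samples, edge_x):
    """Fraction of samples lying to the left of a vertical edge at x = edge_x."""
    inside = sum(1 for x, _ in samples if x < edge_x)
    return Fraction(inside, len(samples))


def distinct_coverage_levels(samples, steps=100):
    """Sweep a vertical edge across the pixel and collect every coverage value seen."""
    levels = {coverage_vertical_edge(samples, i / steps) for i in range(steps + 1)}
    return sorted(levels)


if __name__ == "__main__":
    for name, pattern in [("ordered grid", ORDERED_GRID_4X),
                          ("rotated grid", ROTATED_GRID_4X)]:
        levels = distinct_coverage_levels(pattern)
        print(name, [str(level) for level in levels])
```

Run as a script, it prints three coverage levels (0, 1/2, 1) for the ordered grid and five (0, 1/4, 1/2, 3/4, 1) for the rotated grid. Because the rotated positions also use four distinct y offsets, the same advantage holds for near-horizontal edges.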

77 Comments

Regs - Wednesday, April 14, 2004

Wow, very impressive. Yet very costly. I'm very displeased with the power requirements, however. I'm also hoping newer drivers will boost performance even more in games like Far Cry. I was hoping to see at least 60 FPS at 1280x1024 with 4x/8x. Even though that's not really needed for such a game and might be overkill, it would have knocked me off my feet enough that I could overlook the PSU requirement. But ripping my system apart yet again just for a video card seems unreasonable at the asking price of 400-500 dollars.

Verdant - Wednesday, April 14, 2004

I don't think the power issue is as big as some make it out to be. Some review sites used a 350 W PSU with two connectors on the same lead and had no problems under load.

dragonballgtz - Wednesday, April 14, 2004

I can't wait till December, when I build myself a new computer and use this card. But maybe by then it'll be the PCI-E version.

DerekWilson - Wednesday, April 14, 2004

#11, you are correct ... I seem to have lost an image somewhere ... I'll try to get that back up. Sorry about that.

RyanVM - Wednesday, April 14, 2004

Just so you guys know, Damage (Tech Report) actually used a watt meter to determine the power consumption of the 6800. Turns out it's not much higher than a 5950. Also, it makes me cry that my poor 9700 Pro is getting more than doubled up in a lot of the benchmarks :(

CrystalBay - Wednesday, April 14, 2004

Hi Derek, what kind of voltage fluctuations were you seeing? Just kinda curious about the PSU...

PrinceGaz - Wednesday, April 14, 2004

A couple of comments so far... Page 6, "Again, the antialiasing done in this unit is rotated grid multisample": nVidia used an ordered grid before; only ATI previously used the superior rotated grid.

Page 8: both pictures are the same; I think the link for the 4xAA one needs changing :)

Can't wait to get to the rest :)

ZobarStyl - Wednesday, April 14, 2004

Dang, I've got a 450W... sigh. That power consumption is really gonna kill the upgradability of this card (but then again, the X800 is slated for double Molex as well). I know it's a bit strange, but I'd like to see which of these cards (the top-end ones) can provide the best dual-screen capability. Any GPU worth its salt comes with dual-screen capabilities, my dual-monitor config needs a new vid card, and I don't even know where to look for that. As for cost, these cards blow away 9800XTs and 5950s; it won't be 3-4 fps one way or the other that makes me pick between an X800 and a 6800, it will be the price. Jeez, what are they slated to hit the market at, 450?

Icewind - Wednesday, April 14, 2004

Upgrade my PSU? I think not, Nvidia! Let's see what you've got, ATI.

LoneWolf15 - Wednesday, April 14, 2004

It looks like NVidia has listened to its customer base. I'm particularly interested in the hardware MPEG 1/2/4 encoder/decoder. Even so, I don't run anything that comes close to maxing out my Sapphire Radeon 9700, so I don't think I'll buy a new card any time soon. I bought that card as a "future-proof" card like this one is, and guess what? The two games I wanted to play with it have not been released yet (HL2 and Doom3, of course), and who knows when they will be? At the time, Carmack and the programmers at Valve screamed that this would be the card to get for these games. Now they're saying different things. I don't game enough any more to justify top-end cards; frankly, an All-In-Wonder 9600XT would probably be the best current card for me, replacing both the 9700 and my TV Wonder PCI.