Zen and Vega DDR4 Memory Scaling on AMD's APUs

by Gavin Bonshor on June 28, 2018 9:00 AM EST

Posted in: CPUs, Memory, G.Skill, AMD, DDR4, DRAM, APU, Ryzen, Raven Ridge, Scaling, Ryzen 3 2200G, Ryzen 5 2400G

CPU Performance

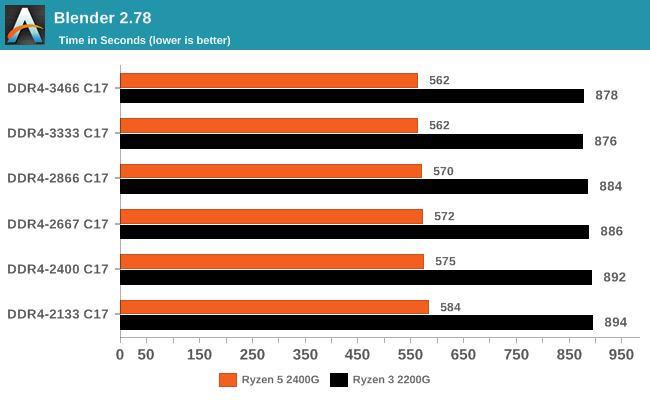

Rendering - Blender 2.78: link

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. As Blender is one of the bigger open source tools out there, both AMD and Intel work actively to help improve the codebase, for better or for worse on their own or each other's microarchitecture.

Memory speed does consistently shave seconds off our final render time, but the effect is small: the jump from DDR4-2133 to DDR4-3333 equates to a 2% reduction overall on the Ryzen 3 2200G, and the last step from 3333 to 3466 is actually a regression. The Ryzen 5 2400G experienced a reduction of just under 4%.
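To put these small gains in context, it can help to look at how much theoretical bandwidth each memory strap adds. The sketch below is not part of the test suite; it assumes a dual-channel configuration with standard 64-bit DDR4 channels, and the function names are illustrative. It computes peak figures only, which real workloads never fully reach.

```python
# Theoretical peak DDR4 bandwidth: a back-of-the-envelope sketch, not a measurement.
# Assumption: dual-channel operation, 64-bit (8-byte) channels.

BYTES_PER_TRANSFER = 8  # one DDR4 channel moves 64 bits per transfer


def peak_bandwidth_gbs(mt_per_s: int, channels: int = 2) -> float:
    """Return peak bandwidth in GB/s (10^9 bytes/s) for a data rate in MT/s."""
    return mt_per_s * BYTES_PER_TRANSFER * channels / 1000


def percent_change(old: float, new: float) -> float:
    """Return the relative change from old to new, in percent."""
    return (new - old) / old * 100


# Memory straps named in the text above.
for rate in (2133, 3333, 3466):
    print(f"DDR4-{rate}: {peak_bandwidth_gbs(rate):.1f} GB/s peak")

gain = percent_change(peak_bandwidth_gbs(2133), peak_bandwidth_gbs(3333))
print(f"DDR4-2133 -> DDR4-3333: {gain:.1f}% more peak bandwidth")
```

Under these assumptions, DDR4-3333 offers roughly 56% more peak bandwidth than DDR4-2133, so a render-time reduction of a few percent suggests this workload is limited far more by compute than by memory.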

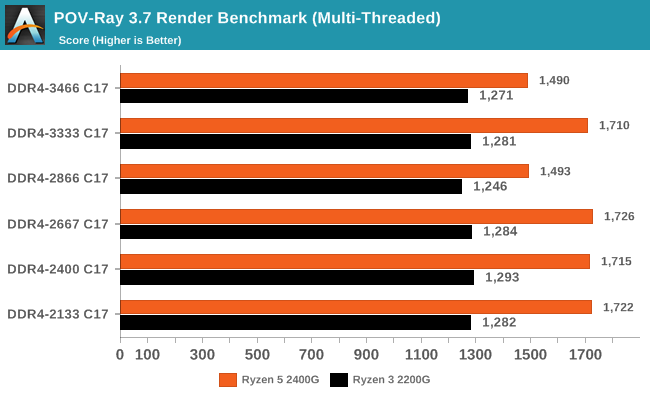

Rendering – POV-Ray 3.7: link

The Persistence of Vision Ray Tracer, or POV-Ray, is a freeware package for, as the name suggests, ray tracing. It is a pure renderer, rather than modeling software, but the latest beta version contains a handy benchmark for stressing all processing threads on a platform. We have been using this test in motherboard reviews to test memory stability at various CPU speeds to good effect: if it passes the test, the IMC in the CPU is stable for a given CPU speed. As a CPU test, it runs for approximately 1-2 minutes on high-end platforms.

Our results in POV-Ray 3.7 were a little hit and miss and showed irregularities at different memory clock speeds, perhaps because the additional power required by the memory controller at higher frequencies takes away from the power available to the CPU cores. This means POV-Ray isn’t really influenced by memory speed itself; its performance is determined far more by core count and CPU frequency, as the difference between the 2200G and 2400G shows.

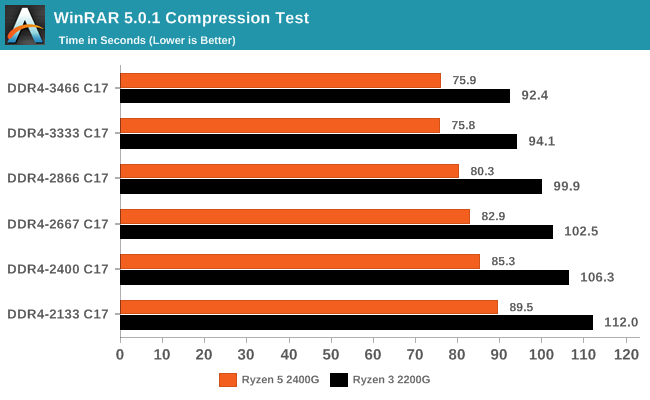

WinRAR 5.4: link

Our WinRAR test from 2013 is updated to the latest version of WinRAR at the start of 2014. We compress a set of 2867 files across 320 folders totaling 1.52 GB in size – 95% of these files are small typical website files, and the rest (90% of the size) are small 30-second 720p videos.

As the memory frequency was increased, the compression time dropped with almost every step up in memory strap. WinRAR is one of our most DRAM-affected CPU tests, and we have seen this behavior before with other CPU generations. The 2200G saw a 21% increase in throughput, while the 2400G had an 18% gain. However, it is worth noting that the DDR4-3466 time was again slightly slower than the DDR4-3333 time.
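Because this test measures compression time while the gains are quoted as throughput, it is worth keeping the two apart: for a fixed workload, a given throughput gain corresponds to a smaller percentage drop in run time. The sketch below illustrates the conversion. The function names are illustrative and the 100 s and 80 s timings are hypothetical, not measured results.

```python
# Converting between run-time reduction and throughput gain for a fixed workload.
# All timings used here are hypothetical and only illustrate the arithmetic.


def throughput_gain_pct(time_before: float, time_after: float) -> float:
    """Throughput increase (%) implied by a change in run time."""
    return (time_before / time_after - 1) * 100


def time_reduction_pct(time_before: float, time_after: float) -> float:
    """Run-time reduction (%) for the same pair of timings."""
    return (1 - time_after / time_before) * 100


def time_reduction_from_gain(gain_pct: float) -> float:
    """Run-time reduction (%) that corresponds to a throughput gain (%)."""
    return (1 - 1 / (1 + gain_pct / 100)) * 100


# Hypothetical example: a job that drops from 100 s to 80 s.
print(f"time reduction:  {time_reduction_pct(100, 80):.1f}%")
print(f"throughput gain: {throughput_gain_pct(100, 80):.1f}%")

# Throughput gains of the size quoted above, expressed as time saved.
for gain in (21, 18):
    print(f"+{gain}% throughput ~ {time_reduction_from_gain(gain):.1f}% less time")
```

Read this way, a throughput gain of 21% would correspond to a run time roughly 17% shorter, assuming the quoted figure is indeed a throughput number.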

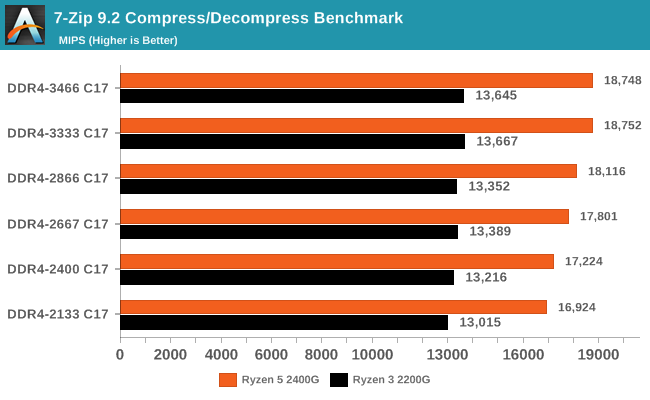

7-Zip 9.2: link

As an open source compression tool, 7-Zip is a popular tool for making sets of files easier to handle and transfer. The software offers its own benchmark, for which we report the result.

The 7-Zip results are interesting in that both Ryzen-based APUs saw the biggest benefit in compression and decompression at the DDR4-3333 setting. Again, the top strap in our testing was not the most performant. Overall gains were +11% for the 2400G and +5% for the 2200G, in line with thread counts.

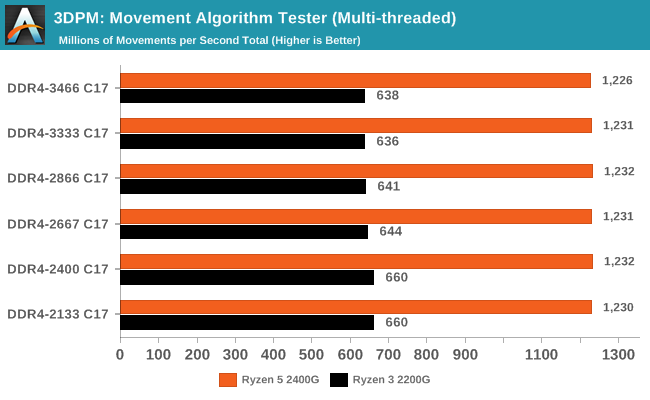

Point Calculations – 3D Movement Algorithm Test: link

3DPM is a self-penned benchmark, taking basic 3D movement algorithms used in Brownian Motion simulations and testing them for speed. High floating point performance, MHz, and IPC win in the single thread version, whereas the multithread version has to handle the threads and loves more cores. For a brief explanation of the platform agnostic coding behind this benchmark, see my forum post here.

With our 3DPM Brownian Motion simulator benchmark, memory frequency made little difference to performance; if anything, as our results show, the slower the memory, the better the performance we saw on the Ryzen 3 2200G. The Ryzen 5 2400G produced a similar range of inconsistent and inconsequential results, showing little to no improvement as the memory strap was increased.

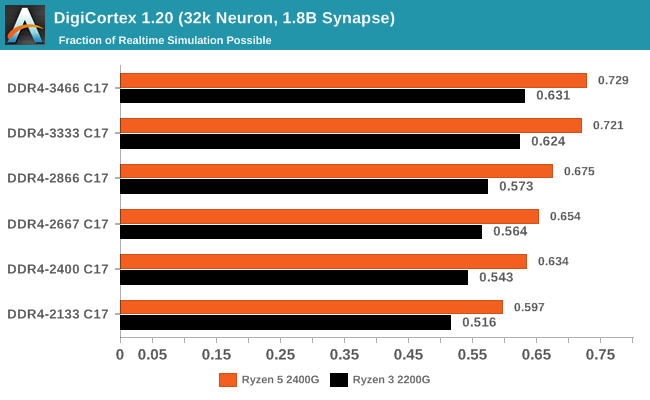

Neuron Simulation - DigiCortex v1.20: link

The newest benchmark in our suite is DigiCortex, a simulation of biologically plausible neural network circuits that models the activity of neurons and synapses. DigiCortex relies heavily on a mix of DRAM speed and computational throughput, indicating that systems which apply memory profiles properly should benefit and those that play fast and loose with overclocking settings might get some extra speed up. Results are taken during the steady state period in a 32k neuron simulation and represented as a function of the ability to simulate in real time (1.000x equals real-time).
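One way to read the score is as the ratio of simulated time to wall-clock time. The sketch below shows that interpretation; it is an assumption about how such a factor could be computed, not DigiCortex's actual implementation, and the timings are hypothetical.

```python
# Real-time factor: simulated time divided by wall-clock time.
# Assumption: this mirrors the "1.000x equals real-time" convention described above;
# the function name and the sample timings are hypothetical.


def real_time_factor(simulated_seconds: float, wall_clock_seconds: float) -> float:
    """Return how fast the simulation runs relative to real time."""
    if wall_clock_seconds <= 0:
        raise ValueError("wall_clock_seconds must be positive")
    return simulated_seconds / wall_clock_seconds


# Hypothetical run: 10 s of simulated activity takes 12.5 s to compute.
factor = real_time_factor(10.0, 12.5)
print(f"{factor:.3f}x real time")
```

A value below 1.000x means the system cannot keep up with real time, so higher is better.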

DigiCortex saw a consistent increase across the tested memory straps on both Ryzen APUs, with a nice jump in performance of 22% on the 2200G and on the 2400G.

74 Comments


PeachNCream - Thursday, June 28, 2018 - link

Oh wow, that's a terrible result! Thanks for sharing the information. I was expecting something more like 25% less performance in worst case scenarios, but that was clearly optimistic.

Lolimaster - Friday, June 29, 2018 - link

GT1030 2133 DDR4 is basically 3x less bandwidth than the GDDR5 version, which is like giving single channel DDR4 2133 to an APU.

TheWereCat - Saturday, June 30, 2018 - link

The funny thing is that even if it had "only" 25% less performance, the price difference (at least in my country) is only €4, so it wouldn't make sense anyway.

eastcoast_pete - Thursday, June 28, 2018 - link

Yes, thanks for that. I looked it up. That was REALLY BAD. The 1030 DDR4 card paired with an i7 7700K got a serious butt kicking by the stock Ryzen 2200G with dual channel. The only thing slightly slower than that 1030 setup was if they hogtied the 2200G with single channel memory only, which one would only do if truly desperate. Eye opening, really. Much better off with the 2200G (CPU+iGPU) for about the price of the 1030 DDR4 dGPU alone. Nvidia really made one stinker of a card here.

Lolimaster - Friday, June 29, 2018 - link

With DDR4 the GT1030 can lose so much performance that even the 2200G walks over it.

Lolimaster - Friday, June 29, 2018 - link

Simple, 64bit + DDR vs dual channel DDR on the APU.

29a - Thursday, June 28, 2018 - link

Please remove 3DPM from the benchmark suite, it would have been much better to include video encoding benchmarks. Seems the 3DPM benchmark is only included for ego purposes because as usual it offered no beneficial information.

boeush - Thursday, June 28, 2018 - link

Yeah, for budget/consumer CPUs/APUs such science/engineering oriented benchmarks seem to be off-topic. Conversely, if you're going to test STEAM aspects, then commit all the way and include the Chrome compilation benchmark, and maybe also include something about molecular dynamics and electronic circuit simulation...

lightningz71 - Thursday, June 28, 2018 - link

First of all, thank you for putting together this series of articles. I really respect the time and effort that went into all of this. I don't know if you have any more articles planned, but I would like to offer some constructive criticism.

My first point is that you claim to focus on real world scenarios and expectations for the two processors, then proceeded to go right into a scenario that was anything but. The vast majority of the benchmarks that were run were set to 1080p at high detail. It is widely accepted that the performance target of the two processors is either 720p at mid-high detail or 1080p at very low detail. Most people aim for about 60fps for these budget solutions to be considered well playable. Most of your gaming benchmarks never made it north of 30fps.

My second point is that this review neglected to show what the processors would achieve with memory scaling while the iGPU is also overclocked. If the user is going to go to the effort to turn their memory clocks up to 3400 Mxfrs, they are also very likely to overclock their iGPU at the same time. Another point in support of this is the unusual memory scaling that was seen between the highest two memory clock settings. The higher clocked iGPU case might have made better use of the higher clocked memory and shown more linear scaling.

I suggest that you make a follow-on article that focuses on the gaming and content creation aspects with both processors, run at both 720p high and 1080p low with the iGPU at stock and overclocked to 1500 MHz. I suspect that the numbers would be very enlightening.

Again, thank you. I hope that you can take the time to look at my suggestions and try a follow on article.

eastcoast_pete - Thursday, June 28, 2018 - link

Yes, thanks to Gavin for this continued exploration of the Ryzen chips with Vega iGPUs. Glad to see that your hand has healed up.

About Lightningz71's points: +1. I fully agree, the real world scenario for anyone who goes through the trouble to boost the memory clock would be to OC the iGPUs to 1500 MHz and maybe even higher. A German site had a test where they sort of did that, found some strange unstable behavior at mild overclock of the iGPUs, but regained stability once they overvolted a bit more and hit 1450 MHz and up. They did find quite substantial increases in frame rates, making some games playable at settings that previously failed to get even close to 30fps. But, their 2200G and 2400G Ryzens were on stock heatsinks and NOT delidded, so I have even higher hopes for Gavin's setups.

Lastly, given the current crazy high prices for memory, I'd love to see at least some data for 2x4 GB, representing the true ~$300 potato with a 2200G.