Improving The Exynos 9810 Galaxy S9: Part 2 - Catching Up With The Snapdragon

by Andrei Frumusanu on April 20, 2018 9:00 AM EST

Posted in: Mobile, Samsung, Smartphones, Exynos 9810, Exynos M3, Galaxy S9

Scheduler mechanisms: WALT & PELT

Over the years, it seems Arm noticed the slow progress and now appears to be working more closely with Google in developing the Android common kernel, utilizing out-of-tree (meaning outside of the official Linux kernel) modifications that benefit performance and battery life of mobile devices. Qualcomm also has been a great contributor as WALT is now integrated into the Android common kernel, and there’s a lot of work going on from these parties as well as other SoC manufacturers to advance the platform in a way that benefits commercial devices a lot more.

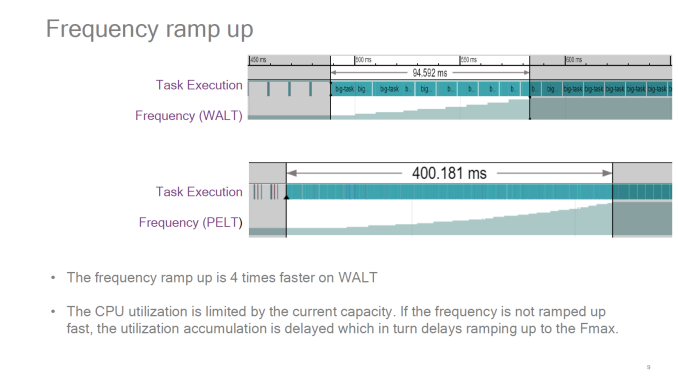

Samsung LSI’s situation here seems very puzzling. The Exynos 9810 is the first flagship SoC to actually make use of EAS, and they are basing the BSP (board support package) kernel on the Android common kernel. The issue here is that instead of choosing to optimise the SoC through WALT, they chose to fall back to fully PELT-dictated task utilisation. That’s still fine in terms of core migrations; however, they also chose to use a very vanilla schedutil CPU frequency driver. This meant that the frequency ramp-up of the Exynos 9810 CPUs could have the same characteristics as PELT, which would also bring with it one of the existing disadvantages of PELT: a relatively slow ramp-up.
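To put a rough number on that slow ramp-up, here is a minimal sketch of a PELT-style geometric average. It assumes the 32ms half-life of the stock kernel and a task that starts from zero utilisation and then runs without interruption; the function names are made up for illustration and the model ignores everything else the real scheduler does.

```python
import math

def pelt_util(run_ms: float, half_life_ms: float = 32.0) -> float:
    """Normalised utilisation (0..1) seen for a task that has been running
    continuously for run_ms, starting from zero, under a geometric-decay
    average whose contributions halve every half_life_ms."""
    return 1.0 - 0.5 ** (run_ms / half_life_ms)

def ms_to_reach(target: float, half_life_ms: float = 32.0) -> float:
    """Continuous run time needed before the signal reaches target (0..1)."""
    return half_life_ms * math.log2(1.0 / (1.0 - target))

if __name__ == "__main__":
    for ms in (8, 16, 32, 64, 128):
        print(f"{ms:4d} ms of running -> utilisation {pelt_util(ms):.2f}")
    print(f"80% utilisation is reached after {ms_to_reach(0.8):.1f} ms")
```

Under these assumptions the signal only reaches half of the task's real demand after 32ms, and a frequency governor that follows it closely would lag behind by a similar amount.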

Source: BKK16-208: EAS

Source: WALT vs PELT : Redux – SFO17-307

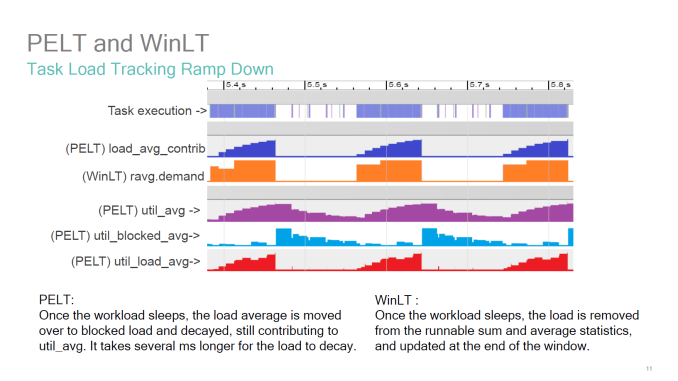

One of the best resources on the issue actually comes from Qualcomm, as they had spearheaded the topic years ago. In the above presentation, given at Linaro Connect 2016 in Bangkok, we see a visual representation of the behaviour of PELT vs WinLT (as WALT was called at the time). The metrics to note here in the context of the Exynos 9810 are util_avg (which is the default behaviour on the Galaxy S9) and its contrast to WALT’s ravg.demand and actual task execution. So out of all the possible options in terms of BSP configurations, Samsung seems to have chosen the worst one for performance. And I do think this was a conscious choice, as Samsung had added mechanisms to both the scheduler (eHMP) and schedutil (freqvar) to counteract this very slow behaviour caused by PELT.
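The contrast between the two signals can be sketched with a toy model. The version below is a deliberate simplification and not the kernel code: the PELT side is a plain geometric average with a 32ms half-life, and the WALT side assumes 20ms windows, a history of five windows, and a demand equal to the larger of the most recent window and the history average. Those WALT parameters and the function name are assumptions chosen for illustration.

```python
from collections import deque

def simulate(run_pattern, half_life_ms=32, window_ms=20, history=5):
    """run_pattern: one 0/1 entry per millisecond (1 = the task was running).
    Returns two per-millisecond traces (pelt, walt) with values in 0..1."""
    y = 0.5 ** (1.0 / half_life_ms)            # per-millisecond decay factor
    pelt = 0.0
    windows = deque([0.0] * history, maxlen=history)
    busy_in_window = 0
    walt = 0.0
    pelt_trace, walt_trace = [], []
    for t, running in enumerate(run_pattern, start=1):
        # PELT-like: decay the old value, add this millisecond's contribution
        pelt = pelt * y + (1.0 - y) * running
        # WALT-like: count busy time, update the demand when a window closes
        busy_in_window += running
        if t % window_ms == 0:
            windows.append(busy_in_window / window_ms)
            busy_in_window = 0
            walt = max(windows[-1], sum(windows) / len(windows))
        pelt_trace.append(pelt)
        walt_trace.append(walt)
    return pelt_trace, walt_trace

if __name__ == "__main__":
    # A task that wakes up and runs flat out for 40 ms, then stops
    pelt, walt = simulate([1] * 40 + [0] * 20)
    for ms in (20, 40, 60):
        print(f"t={ms} ms  pelt={pelt[ms - 1]:.2f}  walt={walt[ms - 1]:.2f}")
```

In this model the window-based signal reports full demand as soon as the first busy window closes, while the geometric average is still climbing, which is the gap the presentation slide illustrates.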

In trying to resolve this whole issue, instead of adding additional logic on top of everything I looked into fixing the issue at the source.

The first thing I tried was perhaps the most obvious route: enabling WALT and seeing where that goes. While using WALT as a CPU utilisation signal for the Exynos S9 gave outstandingly good performance, it also very badly degraded battery life. I had a look at the Snapdragon 845 Galaxy S9’s scheduler, but here it seems Qualcomm diverges significantly from the Google common kernel which the Exynos is based on. This being far too much work to port, I had another look at the Pixel 2’s kernel, which luckily was a lot nearer to Samsung’s. I ported all relevant patches which were also applied to the Pixel 2 devices, along with porting EAS to a January state of the 4.9-eas-dev branch. This improved WALT’s behaviour while keeping performance; however, there was still significant battery life degradation compared to the previous configuration. I didn’t want to spend more time on this, so I looked into other avenues.

Source : LKML Estimate_Utilization (With UtilEst)

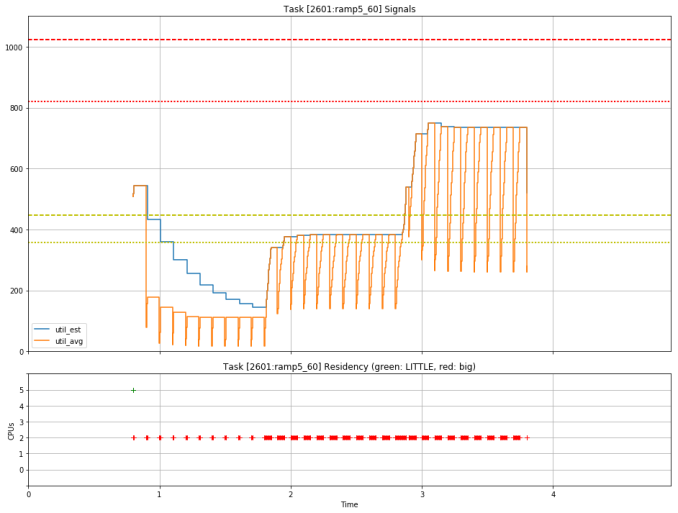

Looking through Arm's resources, it looks very much like the company is aware of the performance issues and is actively trying to improve the behaviour of PELT to more closely match that of WALT. One significant change is a new utilisation signal called util_est (utilisation estimation), which is added on top of PELT and is meant to be used for CPU frequency selection. I backported the patch and immediately saw a significant improvement in responsiveness due to the higher CPU frequency state utilisation. Another simple way of improving PELT was reducing the ramp/decay timings, which incidentally also got an upstream patch very recently. I backported this to the kernel as well, and after testing an 8ms half-life setting for a bit and judging it to not be good for battery life, I settled on a 16ms setting, which is an improvement over the 32ms of the stock kernel and gives the best compromise between performance and battery life.
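The idea behind util_est can be sketched as follows. This is a simplified model rather than the backported patch: it assumes the estimate is a snapshot of util_avg taken when the task goes to sleep, smoothed with an EWMA that moves a quarter of the way towards each new sample, and that the value used for frequency selection is the largest of the decayed util_avg, the snapshot, and the EWMA. The function name and the 16ms-run, 64ms-sleep workload are invented for the example.

```python
def wakeup_signals(cycles, run_ms, sleep_ms, half_life_ms=32.0):
    """Simulate a periodic task (run_ms busy, sleep_ms idle per cycle).
    Returns (plain, estimated): the utilisation visible at each wake-up
    with the decayed average alone and with the estimate on top."""
    y = 0.5 ** (1.0 / half_life_ms)   # per-millisecond decay factor
    util_avg = 0.0
    enqueued = 0.0                    # snapshot taken at the last sleep
    ewma = 0.0                        # smoothed history of the snapshots
    plain, estimated = [], []
    for _cycle in range(cycles):
        plain.append(util_avg)
        estimated.append(max(util_avg, enqueued, ewma))
        for _ms in range(run_ms):     # task runs: the average ramps up
            util_avg = util_avg * y + (1.0 - y)
        enqueued = util_avg           # task sleeps: remember where it got to
        ewma += (enqueued - ewma) / 4.0
        for _ms in range(sleep_ms):   # the average decays while sleeping
            util_avg *= y
    return plain, estimated

if __name__ == "__main__":
    for half_life in (32.0, 16.0):
        plain, est = wakeup_signals(6, run_ms=16, sleep_ms=64,
                                    half_life_ms=half_life)
        print(f"half-life {half_life:.0f} ms:",
              "plain", [f"{v:.2f}" for v in plain],
              "est", [f"{v:.2f}" for v in est])
```

In this model the plain average has mostly decayed away by the time the task wakes up again, whereas the estimate remembers how busy the task was the last time it ran. Passing `half_life_ms=16.0` shows the second change: the average both ramps and decays twice as fast.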

Because of these significant changes in the way the scheduler is fed utilisation statistics, the existing tunings from Samsung obviously weren’t valid anymore. I adapted most of them as best I could, which basically involved just disabling most of them as they were no longer needed. I also significantly changed the EAS capacity and cost tables, as I do not think that the way Samsung populated the tables is correct or representative of actual power usage, which is very unfortunate. Incidentally, this last bit was one of the reasons that performance changed when I limited the CPU frequency in part 1, as it shifted the whole capacity table and changed the scheduler heuristic.
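Why the contents of those tables matter can be shown with a small sketch of an energy-aware placement decision. Everything here is hypothetical: the frequencies, capacities and costs are invented round numbers rather than Samsung's or measured values, and the energy estimate (cost of the lowest operating point that fits the task, scaled by how much of that capacity the task uses) is only a rough approximation of what EAS does.

```python
from typing import NamedTuple, Optional

class Opp(NamedTuple):
    freq_mhz: int
    capacity: int   # compute capacity at this frequency (1024 = fastest point)
    cost: int       # relative busy power at this frequency, arbitrary units

# Hypothetical tables, sorted by ascending frequency
CLUSTERS = {
    "little": [Opp(500, 60, 20), Opp(1000, 130, 55), Opp(1800, 250, 140)],
    "big": [Opp(700, 280, 200), Opp(1500, 560, 600), Opp(2700, 1024, 2400)],
}

def pick_opp(table, util) -> Optional[Opp]:
    """Lowest operating point whose capacity can hold the utilisation."""
    for opp in table:
        if opp.capacity >= util:
            return opp
    return None

def energy(table, util) -> Optional[float]:
    """Rough energy estimate for running util on this cluster."""
    opp = pick_opp(table, util)
    if opp is None:
        return None
    return opp.cost * util / opp.capacity

def cheapest_cluster(util, clusters):
    """Return (name, energy) of the cheapest cluster that fits, else None."""
    best = None
    for name, table in clusters.items():
        e = energy(table, util)
        if e is not None and (best is None or e < best[1]):
            best = (name, e)
    return best

if __name__ == "__main__":
    for util in (100, 400):
        print(util, cheapest_cluster(util, CLUSTERS))
```

With these numbers a task of utilisation 100 lands on the little cluster and one of 400 on the big cluster. Overstating a single little-core cost entry is enough to flip the first decision, which is the kind of effect an unrepresentative table can have on task placement.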

But of course, what most of you are here for is not how this was done but rather the hard data on the effects of my experimenting, so let's dive into the results.

76 Comments

N Zaljov - Sunday, April 22, 2018 - link

For this part, Spock should’ve used a tunnel bore instead of a mere chainsaw, because a chainsaw doesn’t seem to accommodate the right amount of „POWER!“ that one would require in order to deal with Samsung’s crapload of shady implementation. SCNR

djayjp - Friday, April 20, 2018 - link

Wow, amazing work. The definition of above and beyond. I would submit this data to Samsung.

StormyParis - Friday, April 20, 2018 - link

Fascinating. Thank you!

mad_one - Friday, April 20, 2018 - link

Lovely article!

Software can still play a part in the web workloads, as the Javascript and browser engines are probably better tuned for the ARM cores. Of course it could also be the M3 core struggling to extract enough ILP out of the branchy and cache-unfriendly JS code; ARM has certainly tuned their cores for this over the years.

repoman27 - Friday, April 20, 2018 - link

Outstanding work, and much appreciated. Thank you, Andrei!

I fully recognize that one only has so much time to commit to writing pieces like this, and that it requires a fair amount of personal interest in the subject matter at hand to do it right. However, it would be great to see Anandtech do a deep dive into the iPhone battery issue. Obviously Apple is intentionally opaque regarding a fair amount of the iPhone and iOS internals, which would make it somewhat more challenging. But I have yet to see anyone publish anything that even quantifies the basics of the reduced performance modes introduced by the various software updates. I can't imagine it would be too hard to figure out what the maximum clocks are with a new battery vs. what appear to be four distinct lower performance modes, or to determine what metrics are used to trigger those modes. Maybe the folks at the kiosk that does aftermarket battery replacements at the local mall would be willing to set aside a box of pulled batteries for testing? Such an article would arrive late as far as the media cycle is concerned, but this is an issue that continues to affect hundreds of millions of people worldwide.

A few years back, Brian Klug used to catch a lot of flak for being biased, before he (and Anand) left to join Apple. And maybe you'll end up at Samsung in the future, like Kristian. But while I think your tone was quite even-handed and appropriate in this article, you seemed much more inclined to denounce Apple as deserving of a class-action lawsuit before doing any in-depth testing in regards to their recent power / performance issues. In your opinion, what is the appropriate response from Samsung in this situation? I mean, obviously pushing out a software update that improves performance would be a good start, but should owners of Exynos 9810 variants of the Galaxy S9 sue for damages and extract their pound of flesh?

BurntMyBacon - Monday, April 23, 2018 - link

@repoman27: "But while I think your tone was quite even handed and appropriate in this article, you seemed much more inclined to denounce Apple as deserving of a class-action lawsuit before doing any in-depth testing in regards to their recent power / performance issues."

I think the difference in tone reflects the difference in situation. In Samsung's situation, the product came to market with a set level of performance and battery life. Very early in the lifecycle of the product, it was discovered that better software would improve their situation to a greater or lesser extent on one or both fronts. In Apple's situation, the product came to market with a set level of performance and battery life. After a certain time period and as a direct result of a software update, performance suddenly and inexplicably (until it was investigated) dropped for older phones (more cycles on the battery).

Samsung's situation left their solution looking immature at best and their engineers looking incompetent at worst. In either case, their misstep appears to be unintentional and only serves to hurt sales of a new product. Apple's situation left them looking misguided at best and abusive(?) at worst. In either case, their actions appear to be intentional (well meaning or not). The actions only affect phones later in their life cycle and, whether as an unintentional side effect of their actions or as the primary goal of their actions, Apple stands to gain by virtue of prompting upgrades. Some people tend to believe the upgrades were the primary goal due to Apple's locked in ecosystem design and marketing strategy, but it could just as easily have been an (un)fortunate side effect of them trying to mitigate another potentially serious blemish to their user experience. Perhaps a case of the cure being worse than the disease. Like you, I wouldn't mind a more in depth look at the situation that could help clarify this.

In any case, I think the difference in tone comes down to the clear lack of intention on Samsung's part (they don't benefit from this) and potentially well meaning but certainly intentional actions on Apple's part.

B3an - Friday, April 20, 2018 - link

Excellent article Andrei. One of the best on here in a long time.

Glad I got an import Snapdragon S9+, because it seems no software update will ever properly fix the poor battery life and *actual daily usage* performance of the Exynos version. Absolutely pathetic how poorly Samsung handled all this though. So many poor decisions. Honestly the people responsible need to be fired. People shouldn't have to go out of their way to buy imports.

serendip - Friday, April 20, 2018 - link

This article will probably be used as a chainsaw by Samsung top management to get rid of everyone who screwed up the Exynos S9. It's sad how seemingly chasing synthetic benchmarks led to bad decisions from chip design onwards.

madurko - Saturday, April 21, 2018 - link

AFAIK they are comparing the smaller S9 Exynos (which has 3000mAh battery) vs. S9+ S845 US version (3500mAh). So the battery life obviously will be better with the bigger bro. But, anyway, this doesn't make the Exynos version any better.

mczak - Friday, April 20, 2018 - link

Very interesting article indeed.

I agree the M3 core looks a bit oversized for a smartphone - but a tweaked version of it on 7nm might fare a lot better. For this generation, it definitely wasn't worth the effort over standard A75.

And, by the looks of it, recognizing the power issues, Samsung made things worse with an inadequate scheduler.

I wonder if actually the SD845 could get better battery life at very little performance cost by disabling the highest frequency state - that sure looks inefficient as hell. (Albeit that could only widen the battery life gap between the two S9 versions...).

The discrepancy between web tests and microbenchmarks (and spec) in efficiency is also quite interesting - while I'd agree it might be more difficult to extract good IPC from these web tests (putting wide cores at a disadvantage), Apple seems to have successfully done so, though I don't know whether through software or hardware means.