The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST

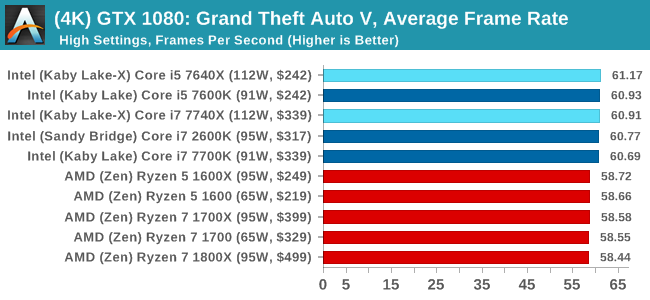

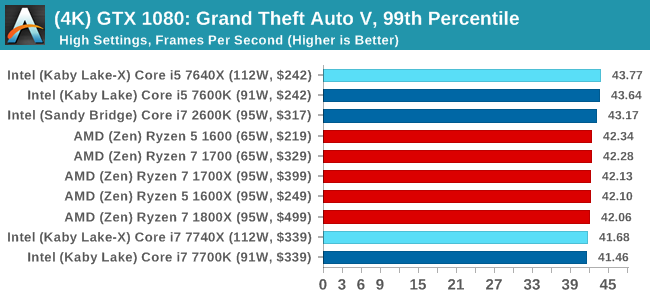

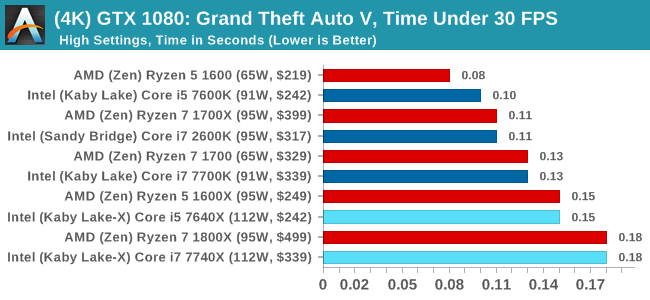

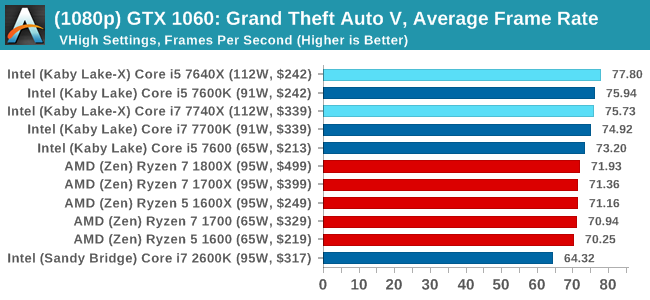

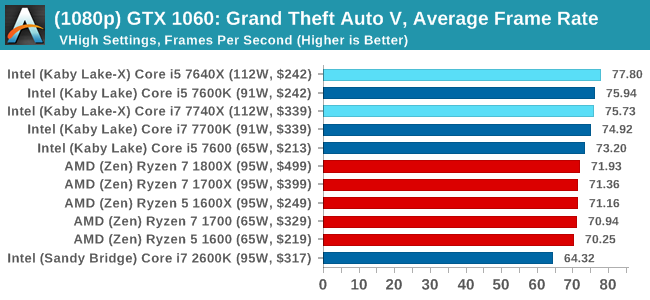

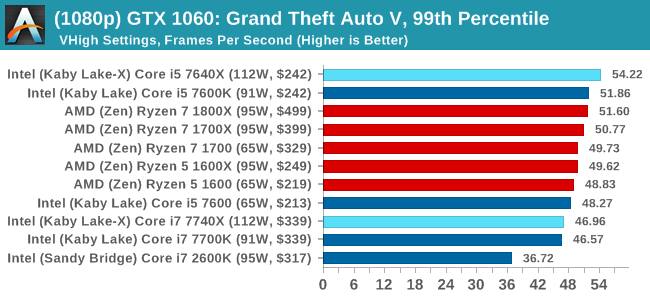

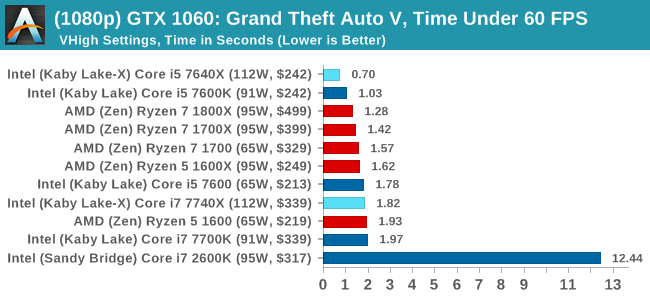

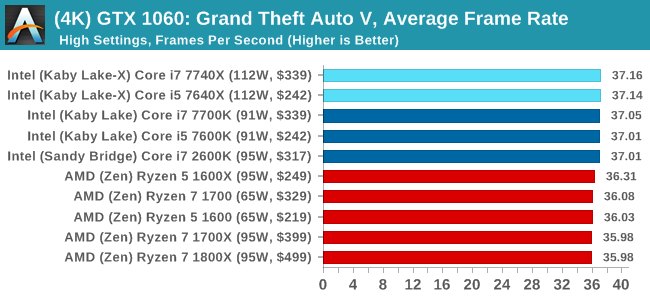

Grand Theft Auto

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and extends the boundaries by pushing even the hardest systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner city drive-by through several intersections, and finally ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

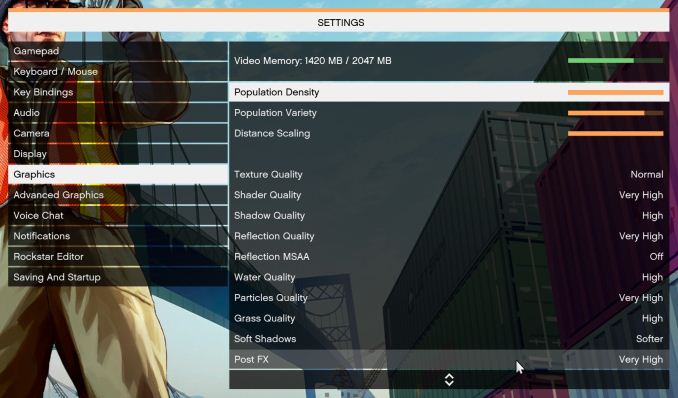

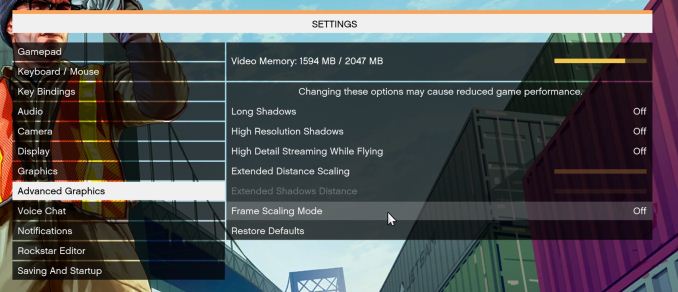

There are no presets for the graphics options in GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, and others such as texture/shadow/shader/water quality from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low end GPU with lots of GPU memory, like an R7 240 4GB).

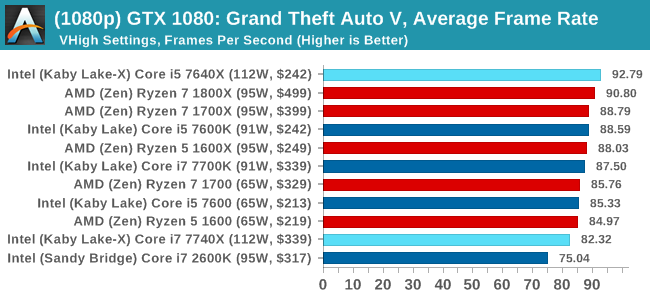

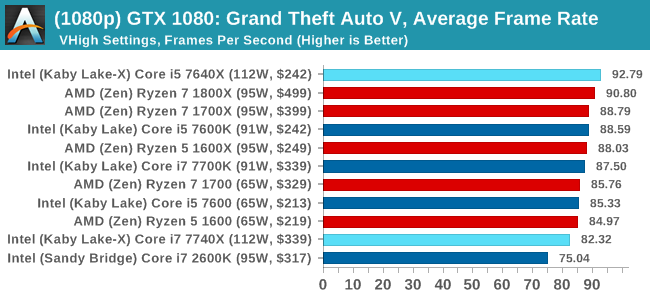

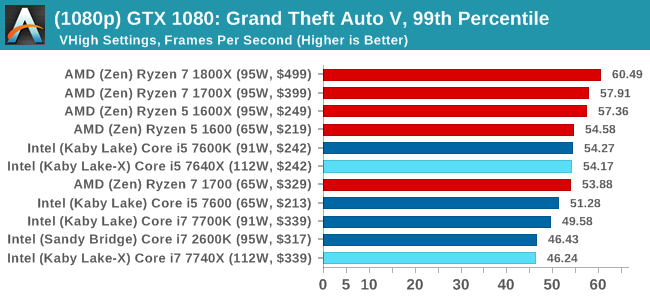

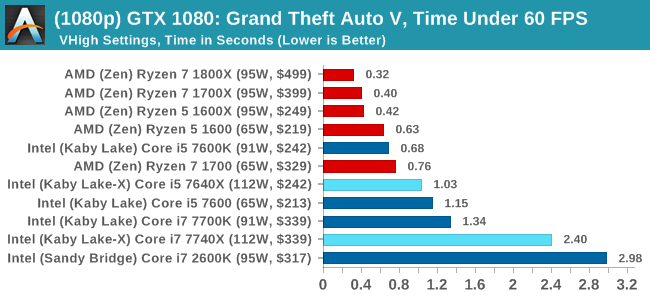

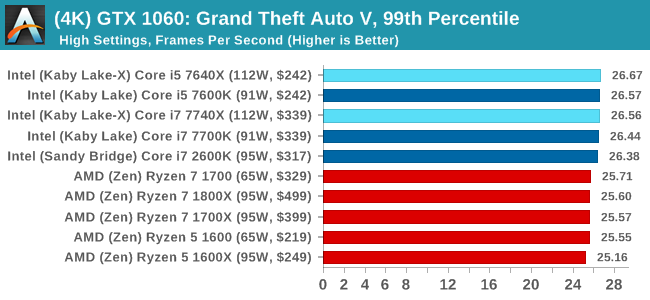

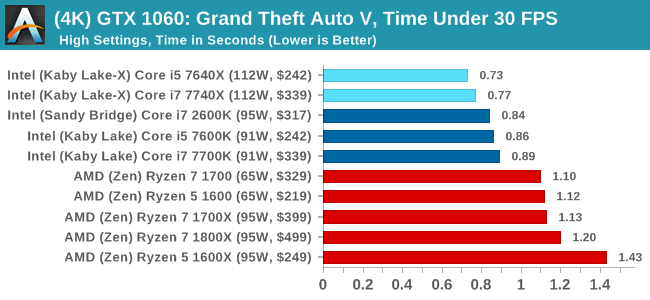

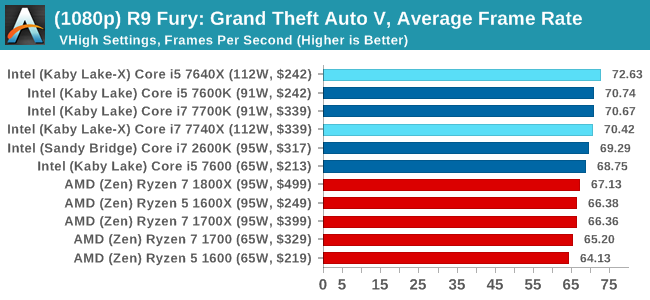

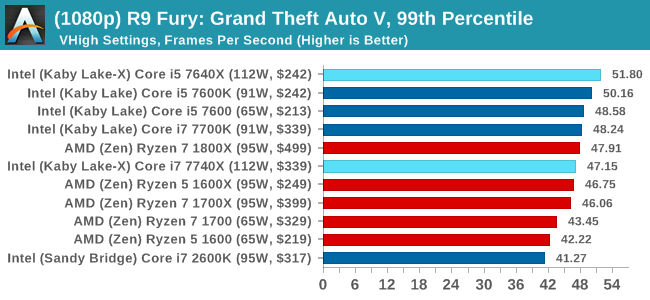

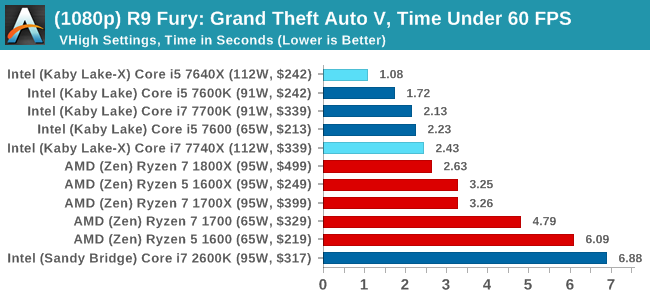

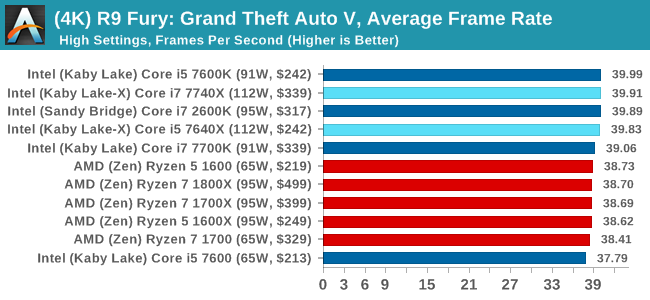

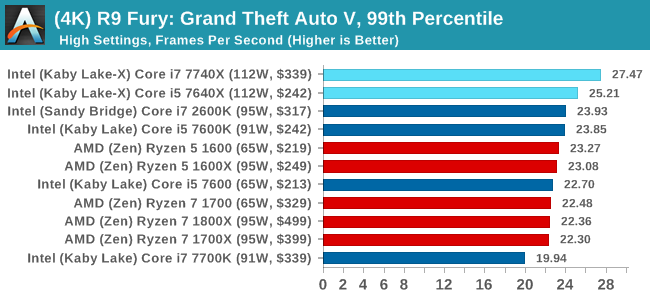

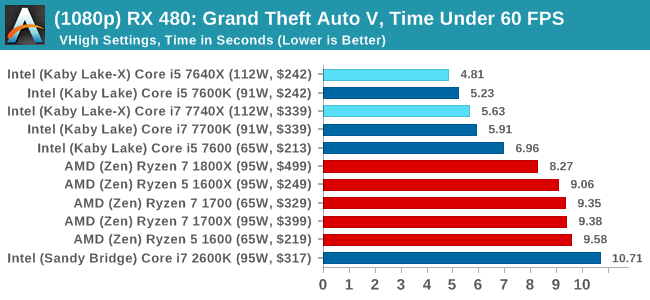

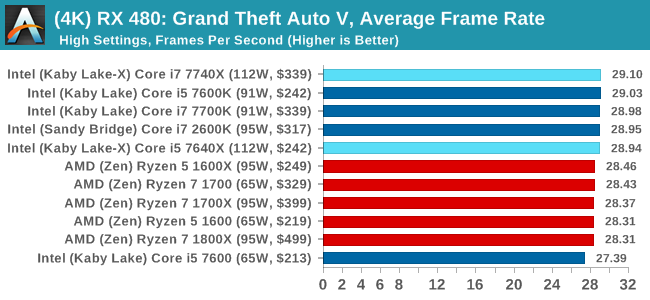

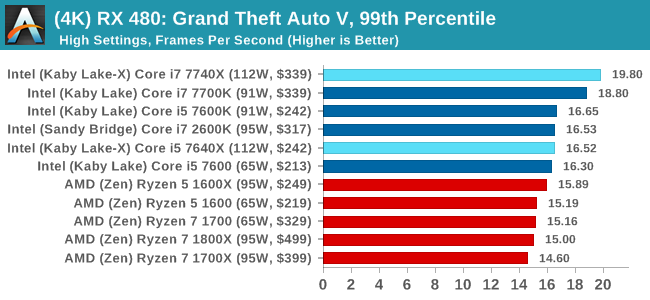

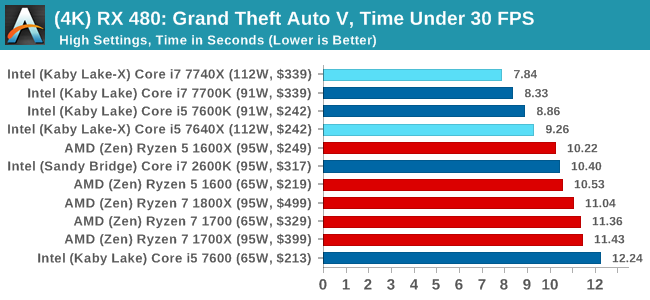

To that end, we run the benchmark at 1920x1080 using an average of Very High on the settings, and also at 4K using High on most of them. We take the average results of four runs, reporting frame rate averages, 99th percentiles, and our time under analysis.
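As a rough illustration of how these three metrics can be derived from per-frame time data, here is a minimal Python sketch. It is not the actual test harness: the function names, the nearest-rank percentile method, the 60 FPS threshold, and the reading of 'Time Under' as the share of run time spent on frames slower than a threshold are all assumptions made for the example.

```python
from statistics import mean


def average_fps(frame_times_ms):
    """Average frame rate: frames rendered divided by elapsed seconds."""
    if not frame_times_ms:
        raise ValueError("no frame times recorded")
    return len(frame_times_ms) / (sum(frame_times_ms) / 1000.0)


def percentile_99_fps(frame_times_ms):
    """Frame rate at the 99th percentile frame time (nearest-rank method)."""
    if not frame_times_ms:
        raise ValueError("no frame times recorded")
    ordered = sorted(frame_times_ms)
    # 1-based nearest rank: ceil(0.99 * n), computed with integer arithmetic.
    rank = (99 * len(ordered) + 99) // 100
    return 1000.0 / ordered[rank - 1]


def time_under_percent(frame_times_ms, threshold_fps=60.0):
    """Percent of run time spent on frames slower than threshold_fps.

    Assumption: this is one plausible reading of a 'Time Under' metric.
    """
    if not frame_times_ms:
        raise ValueError("no frame times recorded")
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    return 100.0 * slow_ms / sum(frame_times_ms)


def summarize(runs, threshold_fps=60.0):
    """Average each metric across several runs (four in the text above)."""
    return {
        "avg_fps": mean(average_fps(r) for r in runs),
        "p99_fps": mean(percentile_99_fps(r) for r in runs),
        "time_under_pct": mean(time_under_percent(r, threshold_fps) for r in runs),
    }
```

Each run is a list of frame times in milliseconds, so `summarize([run1, run2, run3, run4])` would return the three averaged figures for one CPU and GPU pairing.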

For all our results, we show the average frame rate at 1080p first. Mouse over the other graphs underneath to see 99th percentile frame rates and 'Time Under' graphs, as well as results for other resolutions. All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

ASUS GTX 1060 Strix 6GB Performance

1080p

4K

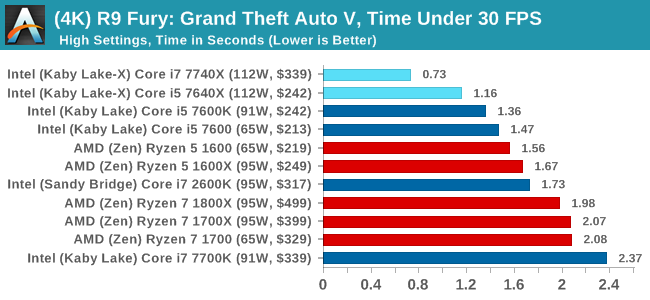

Sapphire R9 Fury 4GB Performance

1080p

4K

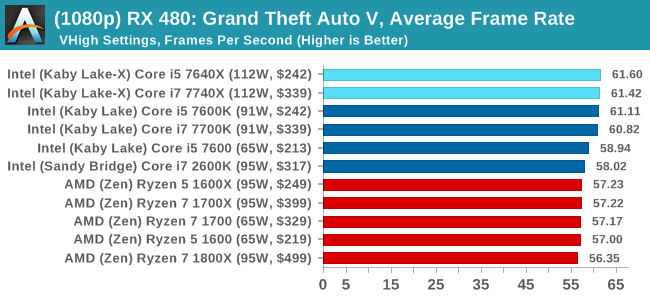

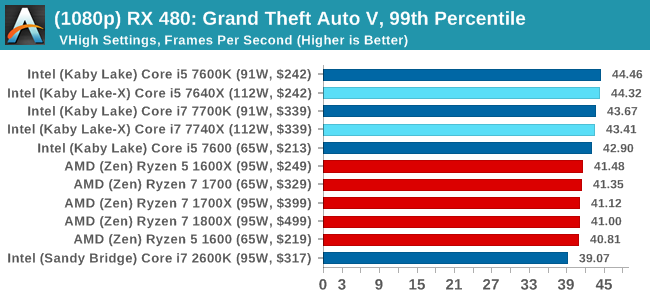

Sapphire RX 480 8GB Performance

1080p

4K

Grand Theft Auto Conclusions

Looking through the data, there seems to be a difference between the results with an AMD GPU and those with an NVIDIA GPU. With the GTX 1080, there's a mix of AMD and Intel results there, but Intel takes a beating in the Time Under analysis at 1080p. The GTX 1060 is a mix at 1080p, but Intel takes the lead at 4K. When an AMD GPU is paired with the processor, all flags fly Intel.

176 Comments

mapesdhs - Monday, July 24, 2017 - link

Ok, you get a billion points for knowing Commodore BASIC. 8)

IanHagen - Monday, July 24, 2017 - link

Dr. Ian, I would like to apologize for my poor choice of words. Reading it again, it sounds like I accused you of something which is not the case.

I'm merely puzzled by how Ryzen performs poorly using msvc compared to other compilers. To be honest, your findings are very relevant to anyone using Visual Studio. But again, I find Microsoft's VS compiler to be a bit of an oddball.

A few weeks ago I was running my own tests to determine whether my Core i5 4690K was up to my compiling tasks. Since most of my professional job sits on top of programming languages with either short compile times or no compilation needed at all, I never bothered much about it. But recently I've been using C++ more and more during my game development hobby and compile times started to bother me. What I found puzzling is that after running a few tests I couldn't manage to get any gains through parallelism, even after verifying that msvc was indeed spawning all 4 threads to compile files. Then I tried disabling two cores and clocking the thing higher and... it was faster! Not by a lot, but faster still. How could it be faster with a 50% decrease in the number of active cores and consequently threads doing compile jobs? I'm fully aware that linking is single threaded, but at least a few seconds should be gained with two extra cores, at least in theory. Today I had the chance to compile the same project on a Core i7 7700HQ and it was substantially slower than my Core i5 4690K even with clocks capped to 3.2 GHz. In fact, it was 33% slower than my Core i5 at stock speeds.

Anyhow… Dr. Ian’s findings are a very good thing to point out to those compiling C++ using msvc: Skylake-X is probably worth it over Ryzen. For my particular case, it would appear that Kaby Lake-X with the Core i7 7740X could even be the best choice, since my project somehow only scales nicely with clocks.

I just would like to see the wording pointing out that Skylake-X isn’t a better compiling core. It’s a better compiling core using msvc at this particular workload. On the GCC side of things, Ryzen is very competitive to it and a much better value in my humble opinion.

As for the suggestion, I’d say that since Windows is a requirement, trying to script something to benchmark compile times using GCC would be daunting and unrealistic. Not a lot of people are using GCC to work on the Windows side of things. If Linux could be thrown into the equation, I’d suggest a project based on CMake. That would make it somewhat easy to write a simple script to set up, create a makefile and compile the project. Unfortunately, I cannot readily think of any big name projects such as Chromium that fulfill that requirement without having to meddle with eventual dependency problems as time goes by.
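A CMake-based timing script of the kind suggested here could look roughly like the following Python sketch. It is only an illustration under stated assumptions: the helper names (`build_steps`, `time_command`, `benchmark`) are hypothetical, the `cmake -S ... -B ...` form assumes CMake 3.13 or newer, and `--parallel` assumes CMake 3.12 or newer.

```python
import subprocess
import time


def build_steps(source_dir, build_dir, jobs):
    """Return the configure and build commands as (name, argv) pairs."""
    configure = [
        "cmake", "-S", source_dir, "-B", build_dir,
        "-G", "Unix Makefiles",          # generate a makefile, as suggested
        "-DCMAKE_BUILD_TYPE=Release",
    ]
    build = ["cmake", "--build", build_dir, "--parallel", str(jobs)]
    return [("configure", configure), ("build", build)]


def time_command(cmd):
    """Run a command and return its wall-clock time in seconds.

    Raises subprocess.CalledProcessError if the command fails.
    """
    start = time.perf_counter()
    subprocess.run(cmd, check=True, stdout=subprocess.DEVNULL)
    return time.perf_counter() - start


def benchmark(source_dir, build_dir, jobs):
    """Time each step of a clean configure-and-build cycle."""
    return {name: time_command(cmd)
            for name, cmd in build_steps(source_dir, build_dir, jobs)}
```

Calling `benchmark("path/to/project", "build", jobs=4)` on a fresh build directory (both paths are placeholders) would return the configure and build times separately. Repeating it with different `jobs` values is one way to check whether a project scales with thread count or mostly with clock speed.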

Kevin G - Monday, July 24, 2017 - link

These chips edge out their LGA 1151 counterparts at stock, with overclocking also carrying a razor-thin edge over LGA 1151 overclocks. There are gains but ultimately these really don't seem worth it, especially in light of the fragmentation that this causes the X299 platform. Hard to place real figures on this but I'd wager that the platform confusion is going to cost Intel more than they will gain with these chips. Intel should have kept these in the lab until they could offer something a bit more substantial.

mapesdhs - Monday, July 24, 2017 - link

I wonder if it would have been at least a tad better received if they hadn't crippled the on-die gfx, etc.

DanNeely - Tuesday, July 25, 2017 - link

LGA2066 doesn't have video out pins because it was originally designed only for the bigger dies that don't include them; and even if Intel had some 'spare' pins it could use, adding video out would only make already expensive mobos with a wide set of features that vary based on the CPU model even more expensive and more confusing. Unless they add a GPU either to future CPUs in the family or (IMO a bit more likely) a very basic one to a chipset variant (to remove the crappy one some server boards add for KVM support), keeping the IGP fully off in mainstream dies on the platform is the right call IMO.

DrKlahn - Monday, July 24, 2017 - link

Great article, but the conclusion feels off:

"The benefits in the benchmarks are clear against the nearest competition: these are the fastest CPUs to open a complex PDF, at the top for office work, and at the top for most web interactions by a noticeable amount."

In most cases you're talking about a second or less between the Intel and AMD systems. That will not be noticeable to the average office worker. You're much more likely to run into scenarios where the extra cores or threads will make an impact. I know in my own user base shaving a couple of seconds off opening a large PDF will pale in comparison to running complex reports with 2 (4 threads) extra cores for less money. I have nothing against Intel, but I struggle to see anything in here that makes their product worth the premium for an Office environment. The conclusion seems a stretch to me.

mapesdhs - Monday, July 24, 2017 - link

Indeed, and for those dealing with office work it makes more sense to emphasise investment where it makes the biggest difference to productivity, which for PCs is having an SSD (ie. don't buy a cheap grunge box for office work), but more generally dear god just make sure employees have a damn good chair to sit on and a decent IPS display that'll be kind to their eyes. Plus/minus 1s opening a PDF is a nothingburger compared to good ergonomics for office productivity.

DrKlahn - Tuesday, July 25, 2017 - link

Yeah, an SSD is by far the best bang for the buck. From a CPU standpoint there are more use cases for the Ryzen 1600 than there are for the i5/i7 options we have from HP/Dell. Even the Ryzen 1500 series would probably be sufficient and allow even more per unit savings to put into other areas that would benefit folks more.

JimmiG - Monday, July 24, 2017 - link

The 7740X runs at just over a 2% higher clock speed than the 7700K. It can overclock maybe 4% higher than the 7700K. You'd really have to be a special kind of stupid to pay hundreds more for an X299 mobo just for those gains that are nearly within the margin of error.

It doesn't make sense as a "stepping stone" onto HEDT either, because you're much better off simply buying a real HEDT right away. You'll pay a lot more in total if you first get the 7740X and then the 7820X for example.

mapesdhs - Monday, July 24, 2017 - link

Intel seems to think there's a market for people who buy a HEDT platform but can't afford a relevant CPU, but would upgrade later. Highly unlikely such a market exists. By the time such a theoretical user would be in a position to upgrade, more than likely they'd want a better platform anyway, given how fast the tech is changing.