The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST

Posted in: CPUs, Intel, Kaby Lake, X299, Basin Falls, Kaby Lake-X, i7-7740X, i5-7640X

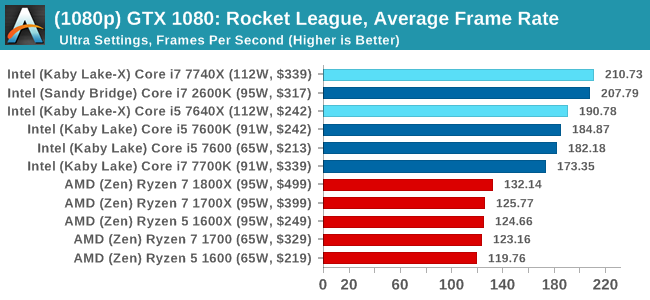

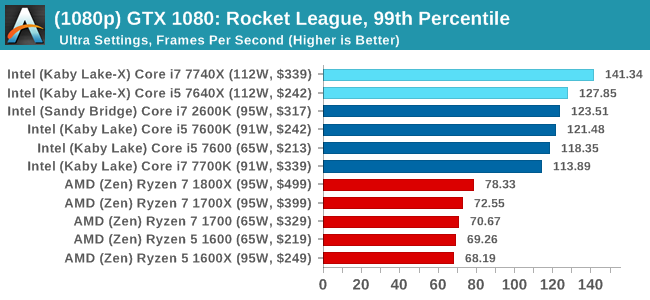

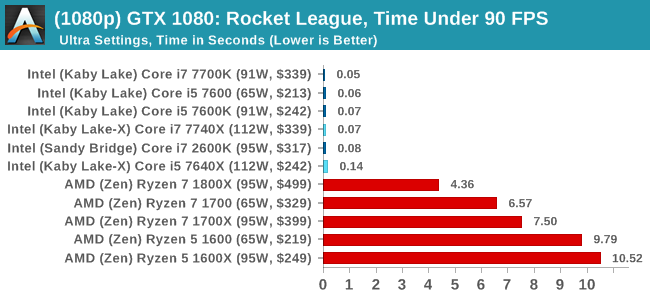

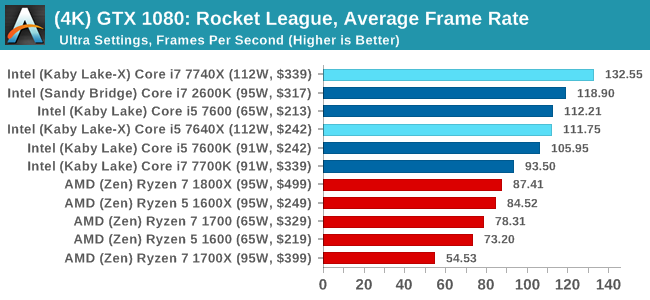

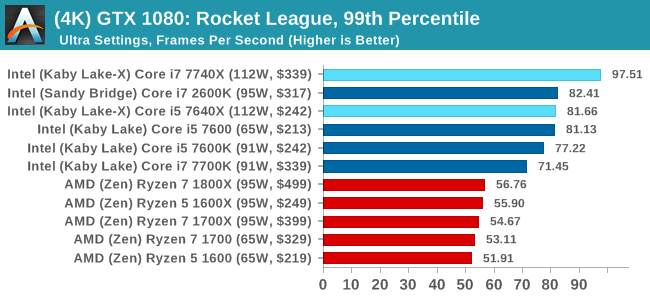

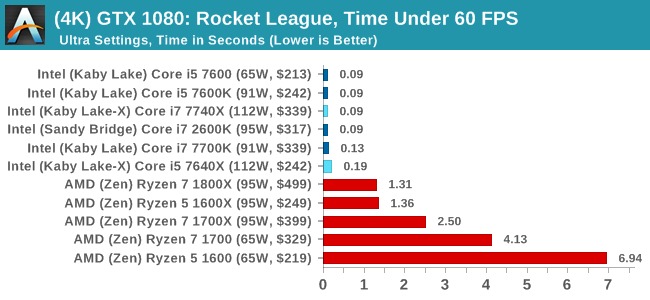

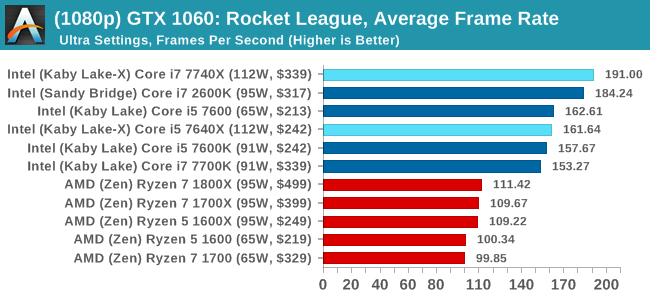

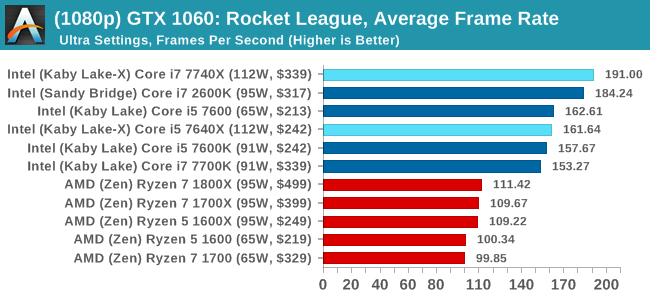

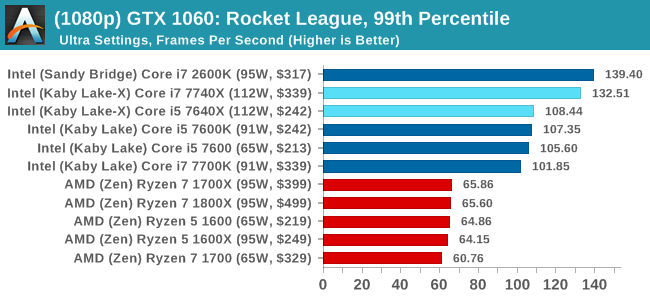

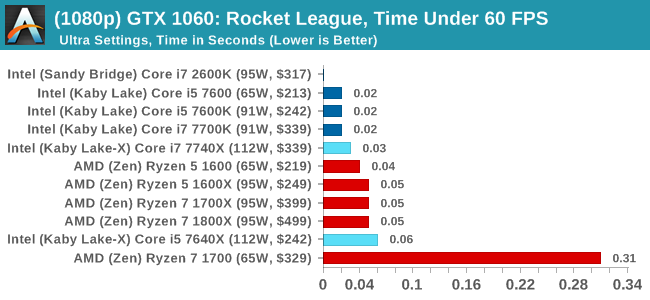

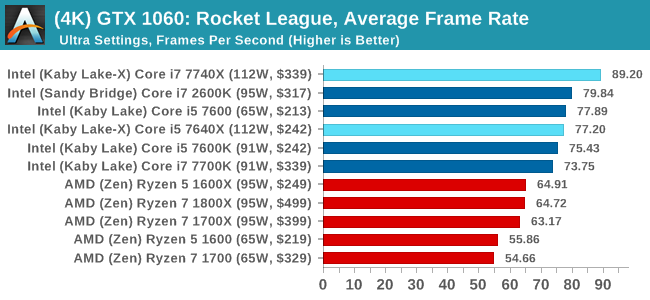

Rocket League

Hilariously simple pick-up-and-play games are great fun. I'm a massive fan of the Katamari franchise for that reason: pressing start on a controller and rolling around, picking up things to get bigger, is extremely simple. Until we get a PC version of Katamari that I can benchmark, we'll focus on Rocket League.

Rocket League is pick-up-and-play at its core: users jump into a game with other people (or bots) to play football with cars and zero rules. The title is built on Unreal Engine 3, which is somewhat old at this point, but this allows the game to run on super-low-end systems while still taxing the big ones. Since its release in 2015, it has sold over 5 million copies and seems to be a fixture at LANs and game shows. Users who train get very serious, playing in teams and leagues, and because there are very few settings to configure, everyone is on the same level. Rocket League is quickly becoming one of the favored titles for e-sports tournaments, especially as e-sports contests can be viewed directly from the game interface.

Based on these factors, plus the fact that it is an extremely fun title to load and play, we set out to find the best way to benchmark it. Unfortunately, automatic benchmark modes for games are, for the most part, few and far between. Partly because of this, but also because it is built on Unreal Engine 3, Rocket League does not have a benchmark mode. In this case, we have to develop a consistent run and record the frame rate.

Our initial analysis of this Rocket League benchmark, run on low-end graphics, is covered in a separate article.

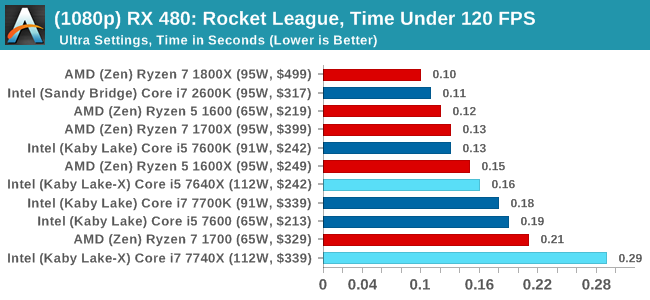

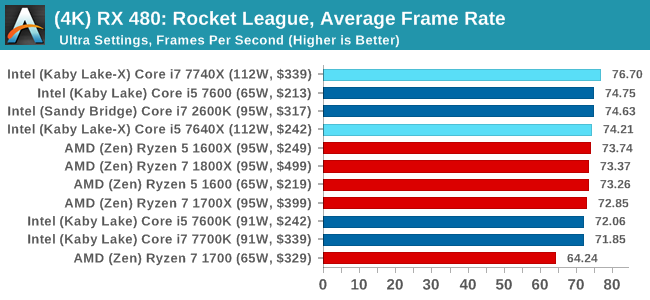

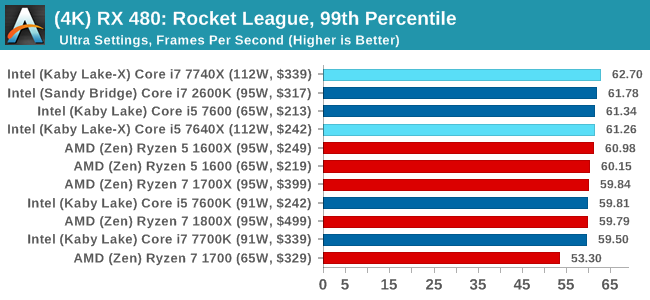

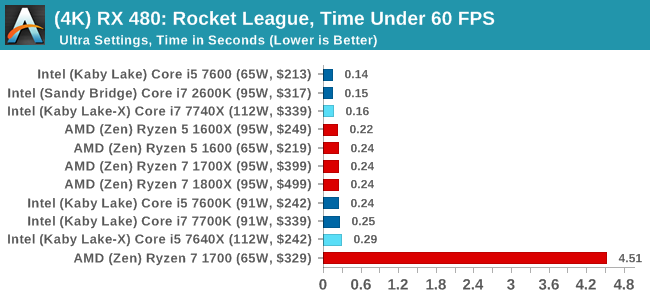

With Rocket League, there is no benchmark mode, so we have to perform a series of automated actions, similar to a racing game having a fixed number of laps. We take the following approach: Using Fraps to record the time taken to show each frame (and the overall frame rates), we use an automation tool to set up a consistent 4v4 bot match on easy, with the system applying a series of inputs throughout the run, such as switching camera angles and driving around.

It turns out that this method is nicely indicative of a real bot match, driving up walls, boosting and even putting in the odd assist, save and/or goal, as weird as that sounds for an automated set of commands. To maintain consistency, the commands we apply are not random but time-fixed, and we also keep the map the same (Aquadome, known to be a tough map for GPUs due to water/transparency) and the car customization constant. We start recording just after a match starts, and record for 4 minutes of game time (think 5 laps of a DIRT: Rally benchmark), with average frame rates, 99th percentile and frame times all provided.
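To illustrate what "time-fixed" inputs mean in practice, here is a minimal Python sketch of replaying a schedule of actions at fixed offsets from the start of the recording. It is not the automation tool used for this review: the action names, the `EXAMPLE_SCHEDULE` values, and the `replay` function are all hypothetical, and the input-sending function is passed in rather than tied to any real library. The only number taken from the text is the 4-minute (240-second) run length.

```python
from typing import Callable, List, Tuple

# Each entry is (seconds from start of recording, action name).
# All values here are hypothetical placeholders, not the review's real script.
Schedule = List[Tuple[float, str]]

EXAMPLE_SCHEDULE: Schedule = [
    (0.0, "accelerate"),
    (2.5, "boost"),
    (5.0, "switch_camera"),
    (7.5, "jump"),
]


def replay(
    schedule: Schedule,
    send: Callable[[str], None],
    clock: Callable[[], float],
    sleep: Callable[[float], None],
    duration_s: float = 240.0,  # 4 minutes of game time, as in the text
) -> int:
    """Send each action at its fixed offset; return how many were sent."""
    start = clock()
    sent = 0
    for offset, action in sorted(schedule):
        if offset >= duration_s:
            break  # anything scheduled past the end of the run is skipped
        remaining = offset - (clock() - start)
        if remaining > 0:
            sleep(remaining)  # wait until the action's fixed time arrives
        send(action)
        sent += 1
    return sent
```

In a real run, `clock` and `sleep` would typically be `time.monotonic` and `time.sleep`, and `send` would be whatever call the automation tool exposes for injecting inputs. Because the offsets are fixed rather than random, every CPU and GPU combination sees the same sequence of actions at the same points in the match.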

The graphics settings for Rocket League come in four broad, generic settings: Low, Medium, High and High FXAA. There are advanced settings in place for shadows and details; however, for these tests, we keep to the generic settings. For both 1920x1080 and 4K resolutions, we test at the High preset with an unlimited frame cap.

For all our results, we show the average frame rate at 1080p first. Mouse over the other graphs underneath to see 99th percentile frame rates and 'Time Under' graphs, as well as results for other resolutions. All of our benchmark results can also be found in our benchmark engine, Bench.
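For readers who want to reproduce these metrics from their own frame-time logs, the following Python sketch shows one way to derive them from a list of per-frame durations in milliseconds. It rests on several assumptions that the article does not spell out: the 99th percentile uses the nearest-rank method, "Time Under" is taken to mean the share of total run time spent in frames slower than a chosen frame-rate threshold, and the `summarize` function and its 60 FPS default are illustrative names and values only.

```python
from typing import Dict, List


def summarize(frame_times_ms: List[float], threshold_fps: float = 60.0) -> Dict[str, float]:
    """Summarize a run given the duration of every frame in milliseconds."""
    if not frame_times_ms:
        raise ValueError("no frames recorded")

    total_ms = sum(frame_times_ms)

    # Average frame rate: frames rendered divided by total time.
    avg_fps = 1000.0 * len(frame_times_ms) / total_ms

    # 99th percentile frame time (nearest-rank, an assumption):
    # the frame time that 99% of frames are at or below.
    ordered = sorted(frame_times_ms)
    rank = -(-99 * len(ordered) // 100)  # integer ceil(0.99 * n)
    p99_ms = ordered[rank - 1]
    p99_fps = 1000.0 / p99_ms

    # "Time Under" (assumed definition): percentage of the run spent in
    # frames that took longer than the threshold's frame time.
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    time_under_pct = 100.0 * slow_ms / total_ms

    return {
        "avg_fps": avg_fps,
        "p99_fps": p99_fps,
        "time_under_pct": time_under_pct,
    }
```

Expressing the 99th percentile as a frame rate rather than a frame time keeps it on the same axis as the average, which makes the gap between typical and worst-case smoothness easy to read off a chart.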

MSI GTX 1080 Gaming 8G Performance

1080p

4K

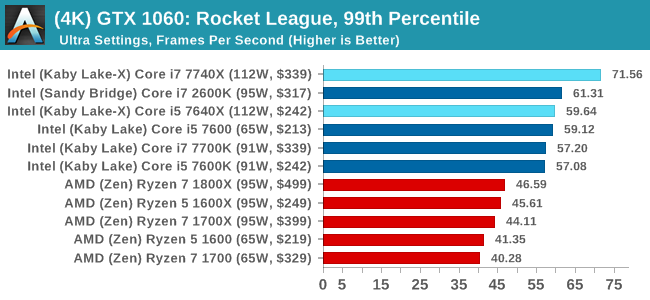

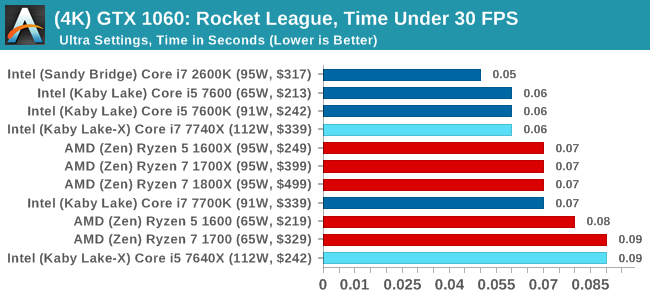

ASUS GTX 1060 Strix 6GB Performance

1080p

4K

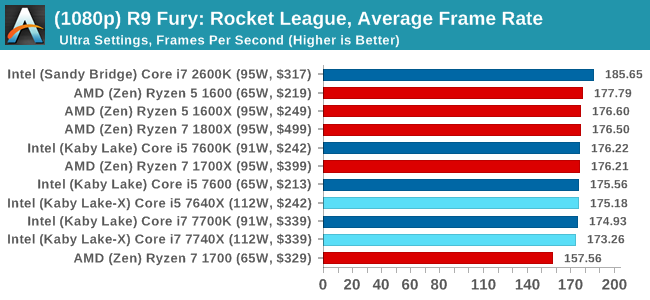

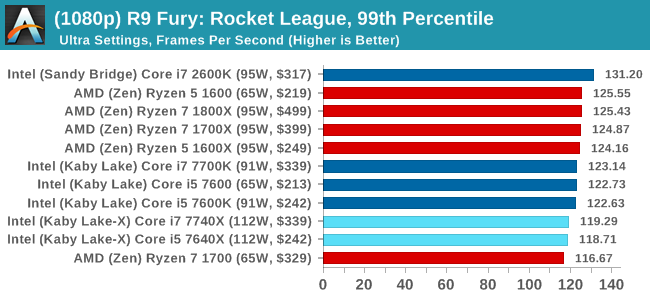

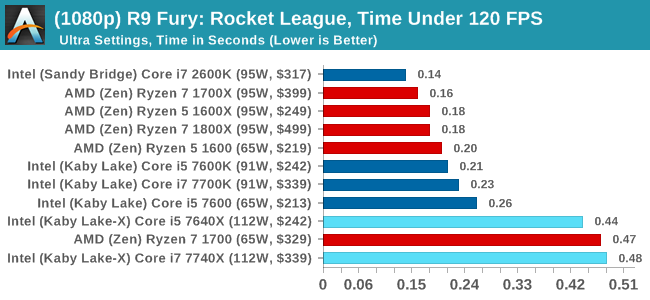

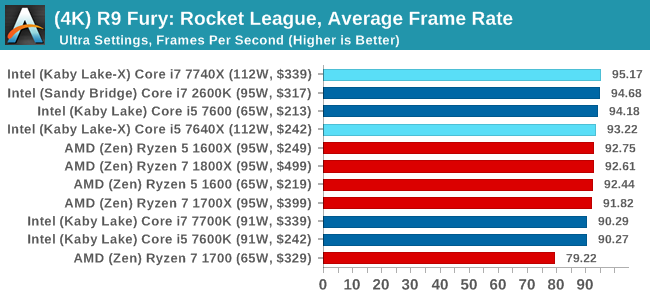

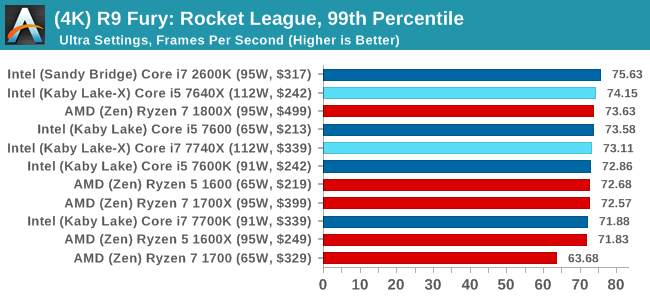

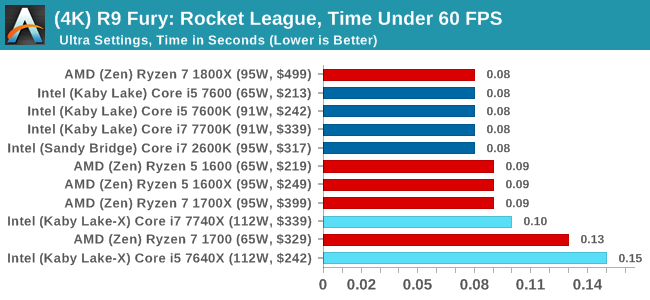

Sapphire R9 Fury 4GB Performance

1080p

4K

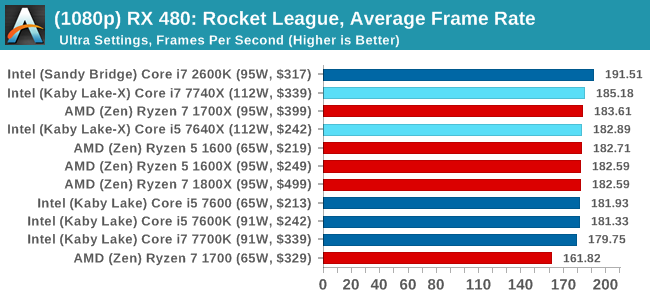

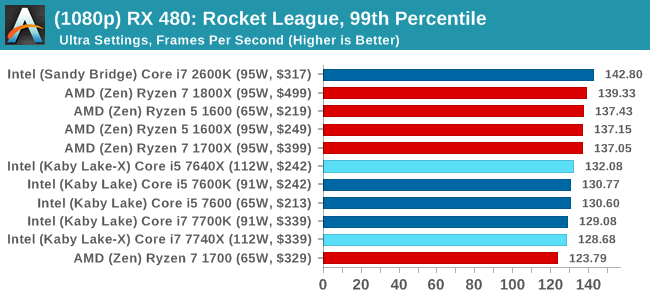

Sapphire RX 480 8GB Performance

1080p

4K

Rocket League Conclusions

The map we use in our testing, Aquadome, is known to be strenuous on a system, hence we see frame rates lower than what people expect for Rocket League - we're trying to cover the worst-case scenario. But the results also show that AMD CPUs and NVIDIA GPUs do not seem to be playing ball with each other, which we've been told is likely related to drivers. The AMD GPUs work fine here regardless of resolution, and both AMD and Intel CPUs get in the mix.

176 Comments

Gulagula - Wednesday, July 26, 2017 - link

Can anyone explain to me how the 7600k, and in some cases the 7600, is beating the 7700k almost consistently? I don't doubt the Ryzen results but the Intel side of the results confuses the heck out of me.

Ian Cutress - Wednesday, July 26, 2017 - link

Sustained turbo, temperatures, quality of chips from binning (a good 7600 chip will turbo much longer than a 7600K will), time of day (air temperature is sometimes a pain - air conditioning doesn't really exist in the UK, especially in an old flat in London), speed shift response, uncore response, data locality (how often does the system stall, how long does it take to get the data), how clever the prefetchers are, how a motherboard BIOS ramps up and down the turbos or how accurate its thermal sensors are (I try and keep the boards constant for a full generation because of this). If it's only a small margin between the data, there's not much to discuss.

Funyim - Thursday, August 10, 2017 - link

Are you absolutely sure your 7700k isn't broken? It sure looks like it is. I understand your point about margins but numbers are numbers and yours look wrong. No other benchmarks I've seen to date align with your findings. And please for the love of god amend this article if it is.

Hurr Durr - Monday, July 24, 2017 - link

One wonders why would you relegate yourself to subpar performance of AMD processors.

Alistair - Tuesday, July 25, 2017 - link

Your constant refrain belonged in the Bulldozer era (when the single threaded performance difference was on the order of 80-100 percent). Apparently you can't move past the Ryzen launch. If a different company such as Samsung had launched these CPUs the reception would have been very different. I've never bought AMD before but my Ryzen 1700 is incredible for its price, and I had to be disillusioned by my terrible Skylake upgrade first before I was willing to purchase from AMD.

Gothmoth - Tuesday, July 25, 2017 - link

don't argue with trolls....

StevoLincolnite - Tuesday, July 25, 2017 - link

Why would Intel enable HT when they could sell it as DLC?

https://www.engadget.com/2010/09/18/intel-wants-to...

coolhardware - Tuesday, July 25, 2017 - link

Glad to hear that the benchmarking is (becoming) less of a chore :-) Kudos and thank you for the great article!

fallaha56 - Tuesday, July 25, 2017 - link

Surely that AVX drop -10 when overclocking was too much? What about delidding?

Samus - Monday, July 24, 2017 - link

It still stands that the best value in this group is the Ryzen 1600X, mostly because its platform cost is 1/3rd that of Intel's HEDT. So unless you need those platform advantages (PCIe, which even X299 doesn't completely have on these KBL-X CPUs) it really won't justify spending $300 more on a system, even if single threaded performance is 15-20% better.

Just the fact an AMD system of less than half the cost can ice a high end Intel system in WinRAR speaks a lot to AMD's credibility here.