The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST

Posted in: CPUs, Intel, Kaby Lake, X299, Basin Falls, Kaby Lake-X, i7-7740X, i5-7640X

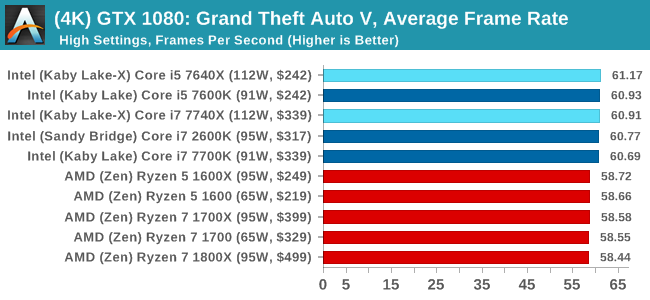

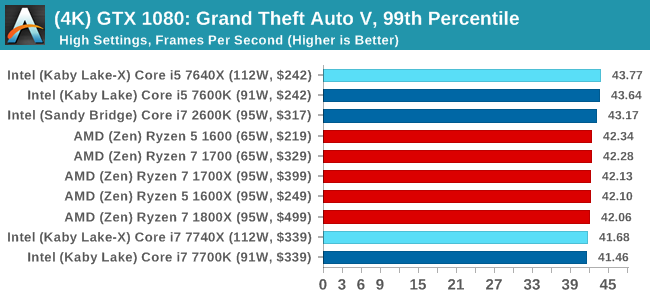

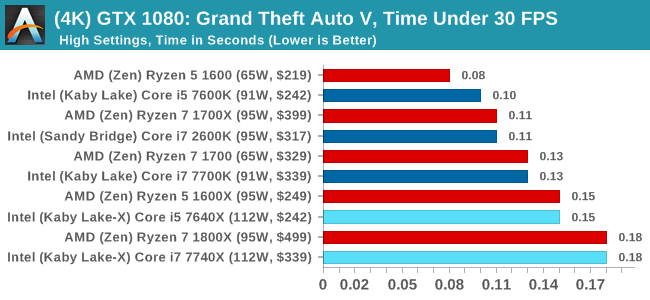

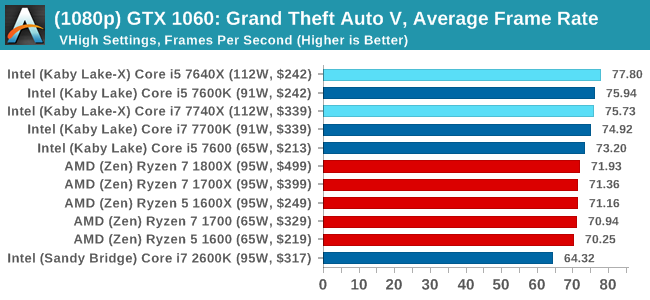

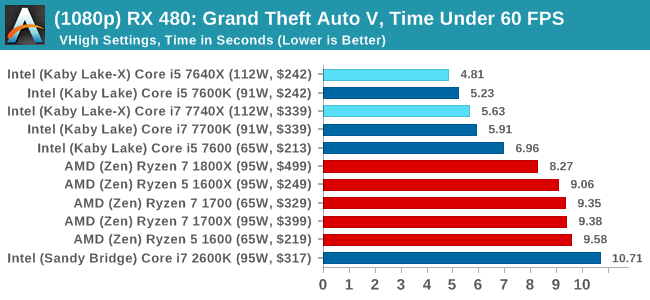

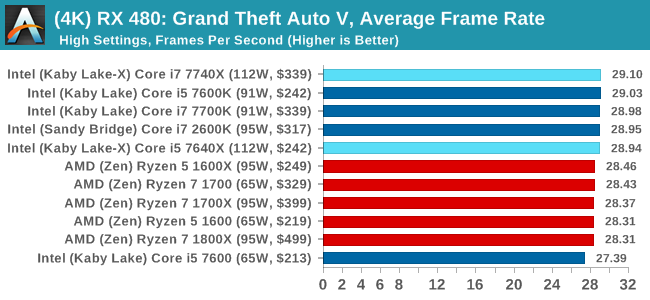

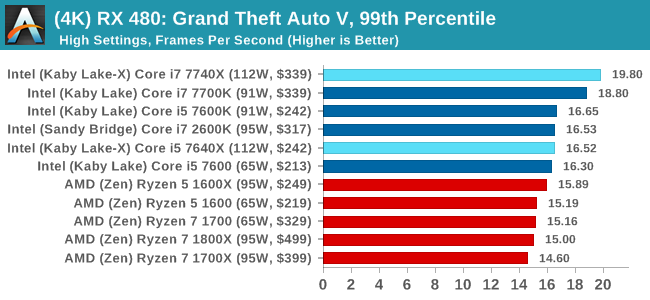

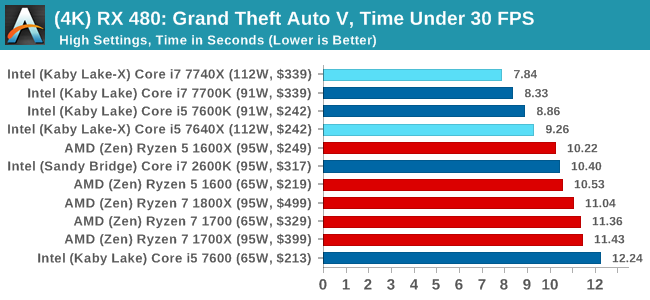

Grand Theft Auto

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets; instead it opens up the options to users and pushes even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, cranking the settings up to maximum creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner city drive-by through several intersections, and finally the ramming of a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

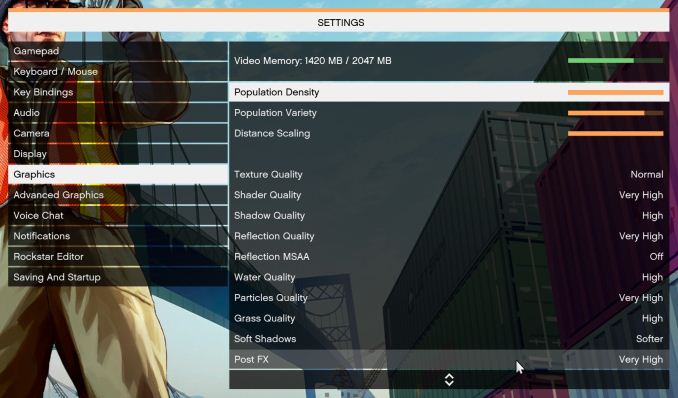

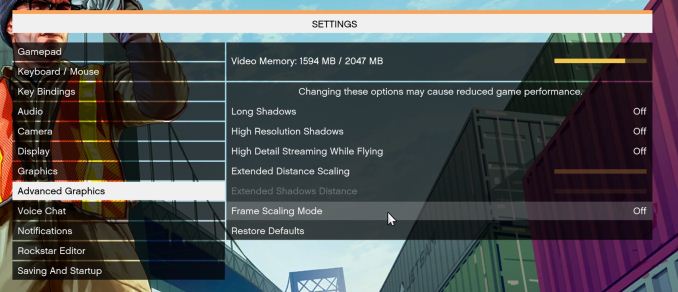

There are no presets for the graphics options on GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, and sets others such as texture/shadow/shader/water quality from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication of a problem if you have a low-end GPU with lots of GPU memory, like an R7 240 4GB).

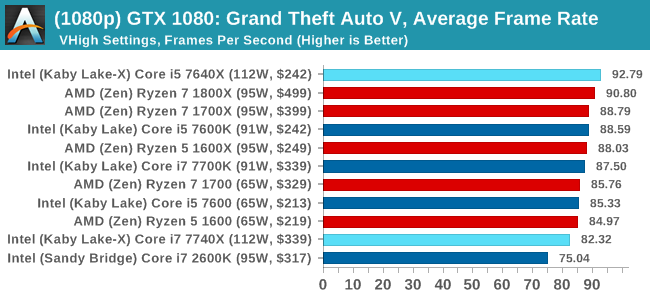

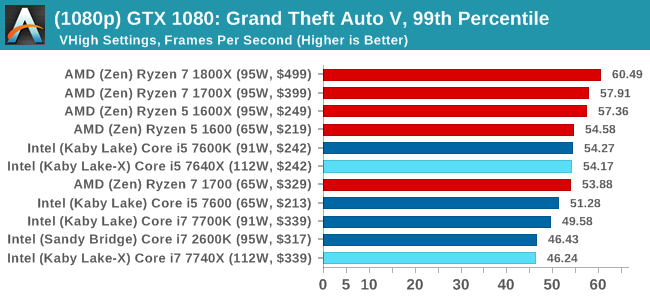

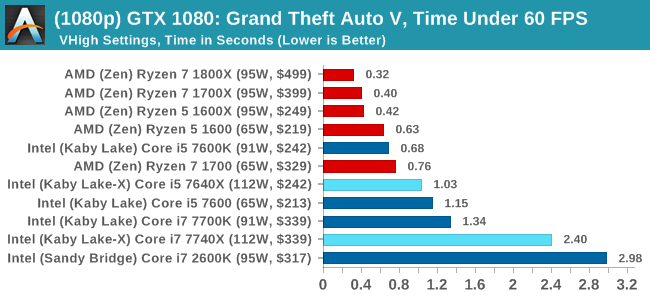

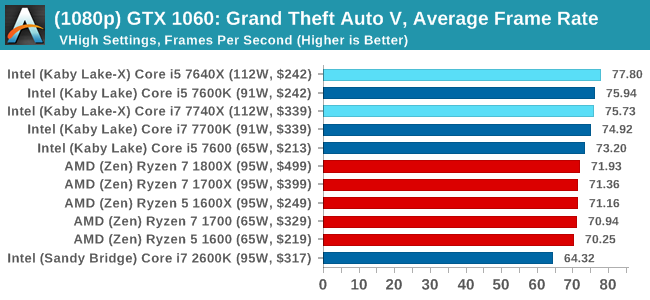

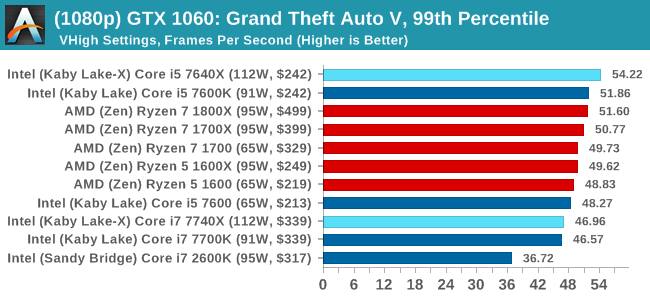

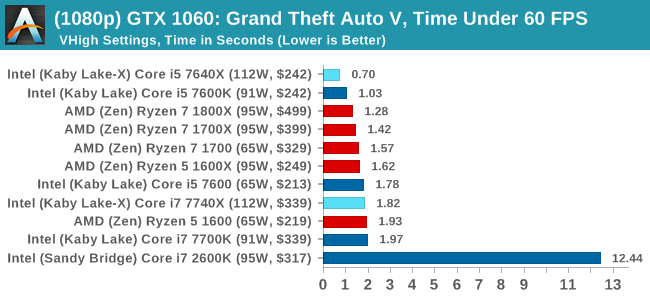

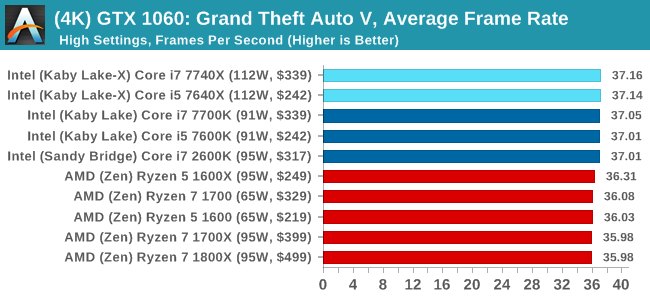

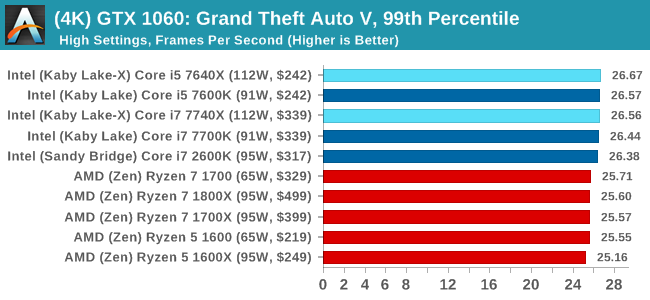

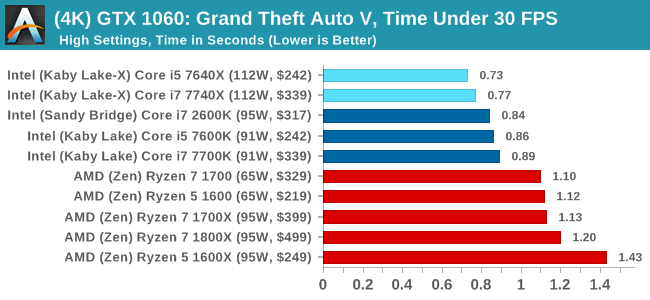

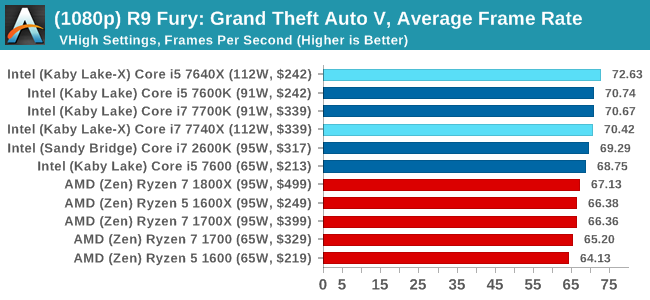

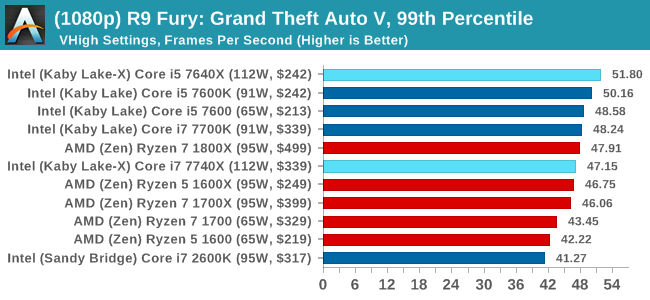

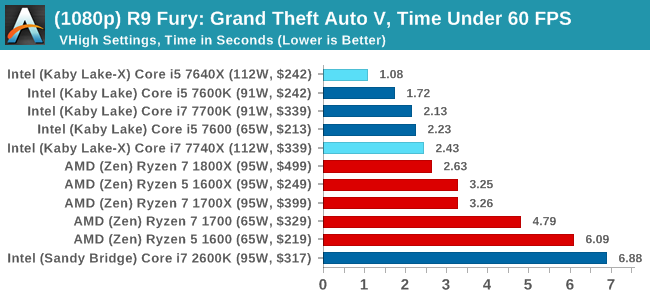

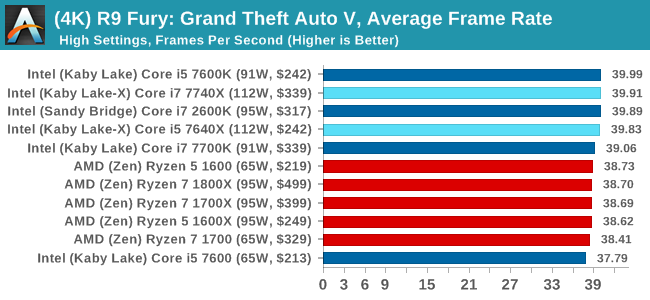

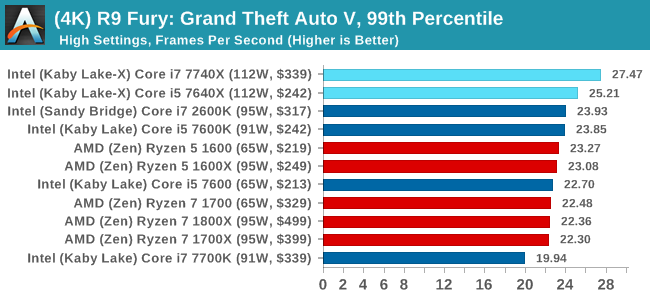

To that end, we run the benchmark at 1920x1080 using an average of Very High on the settings, and also at 4K using High on most of them. We take the average results of four runs, reporting frame rate averages, 99th percentiles, and our 'Time Under' analysis.
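As a rough illustration of how the three reported figures can be derived from frame time data, here is a minimal Python sketch. It is not our actual tooling: the function names, the nearest-rank percentile method, the 60 FPS threshold, and the definition of 'Time Under' as the share of benchmark time spent on frames slower than that threshold are all assumptions made for the example.

```python
import math
from statistics import mean


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(len(ordered) * pct / 100))
    return ordered[rank - 1]


def summarize_run(frame_times_ms, threshold_fps=60.0):
    """Summarize one benchmark run given per-frame render times in milliseconds."""
    total_ms = sum(frame_times_ms)
    # Average frame rate: frames rendered divided by total elapsed time.
    avg_fps = 1000.0 * len(frame_times_ms) / total_ms
    # 99th percentile frame rate: the rate implied by the 99th percentile frame time.
    p99_fps = 1000.0 / percentile(frame_times_ms, 99)
    # Assumed 'Time Under' definition: percentage of run time spent on frames
    # that took longer than the threshold frame time.
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    time_under_pct = 100.0 * slow_ms / total_ms
    return {"avg_fps": avg_fps, "p99_fps": p99_fps, "time_under_pct": time_under_pct}


def summarize_runs(runs, threshold_fps=60.0):
    """Average each metric across several runs (for example, four)."""
    per_run = [summarize_run(run, threshold_fps) for run in runs]
    return {key: mean([result[key] for result in per_run]) for key in per_run[0]}
```

Calling `summarize_runs` with a list of four frame time lists, one per run, returns the three metrics averaged across the runs. Averaging the per-run metrics, as done here, is one option; pooling all frames before computing percentiles is another, and the two can give slightly different 99th percentile figures.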

For all our results, we show the average frame rate at 1080p first. Mouse over the other graphs underneath to see 99th percentile frame rates and 'Time Under' graphs, as well as results for other resolutions. All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance [graphs: 1080p, 4K]

ASUS GTX 1060 Strix 6GB Performance [graphs: 1080p, 4K]

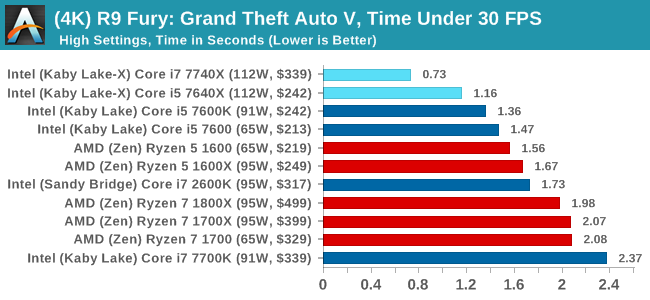

Sapphire R9 Fury 4GB Performance [graphs: 1080p, 4K]

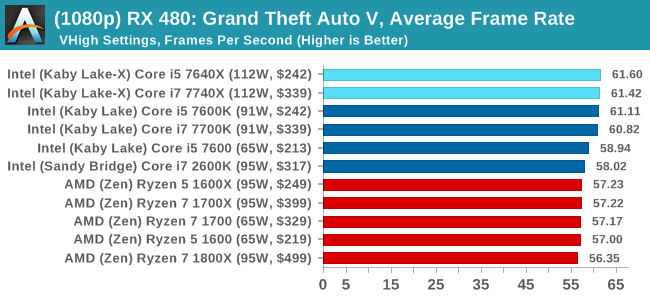

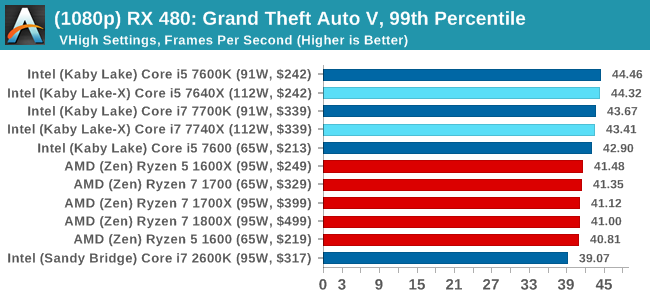

Sapphire RX 480 8GB Performance [graphs: 1080p, 4K]

Grand Theft Auto Conclusions

Looking through the data, the results appear to differ depending on whether an AMD or an NVIDIA GPU is installed. With the GTX 1080, AMD and Intel processors are mixed throughout the results, but Intel takes a beating in the Time Under analysis at 1080p. With the GTX 1060, the results are mixed at 1080p, but Intel takes the lead at 4K. When an AMD GPU is paired with the processor, all flags fly Intel.

176 Comments


mapesdhs - Monday, July 24, 2017 - link

Let the memes collide, focus the memetic radiation, aim it at IBM and get them to jump into the x86 battle. :D

dgz - Monday, July 24, 2017 - link

Man, I could really use an edit button. my brain has shit itself

mapesdhs - Monday, July 24, 2017 - link

Have you ever posted a correction because of a typo, then realised there was a typo in the correction? At that point my head explodes. :D

Glock24 - Monday, July 24, 2017 - link

"The second is for professionals that know that their code cannot take advantage of hyperthreading and are happy with the performance. Perhaps in light of a hyperthreading bug (which is severely limited to minor niche edge cases), Intel felt a non-HT version was required."

This does not make any sense. All motherboards I've used since Hyper Threading exists (yes, all the way back to the P4) lets you disable HT. There is really no reason for the X299 i5 to exist.

Ian Cutress - Monday, July 24, 2017 - link

Even if the i5 was $90-$100 cheaper? Why offer i5s at all?

yeeeeman - Monday, July 24, 2017 - link

First interesting point to extract from this review is that i7 2600K is still good enough for most gaming tasks. Another point that we can extract is that games are not optimized for more than 4 core so all AMD offerings are yet to show what they are capable of, since all of them have more than 4 cores / 8 threads.

I think single threading argument absolute performance argument is plain air, because the differences in single thread performance between all top CPUs that you can currently buy is slim, very slim. Kaby Lake CPUs are best in this just because they are sold with high clocks out of the box, but this doesn't mean that if AMD tweaks its CPUs and pushes them to 5Ghz it won't get back the crown. Also, in a very short time there will be another uArch and another CPU that will have again better single threaded performance so it is a race without end and without reason.

What is more relevant is the multi-core race, which sooner or later will end up being used more and more by games and software in general. And when games will move to over 4 core usage then all these 4 cores / 8 threads overpriced "monsters" will become useless. That is why I am saying that AMD has some real gems on their hands with the Ryzen family. I bet you that the R7 1700 will be a much better/competent CPU in 3 years time compared to 7700K or whatever you are reviewing here. Dirt cheap, push it to 4Ghz and forget about it.

Icehawk - Monday, July 24, 2017 - link

They have been saying for years that we will use more cores. Here we are almost 20 years down the road and there are few non professional apps and almost no games that use more than 4 cores and the vast majority use just two. Yes, more cores help with running multiple apps & instances but if we are just looking at the performance of the focused app less cores and more MHz is still the winner. From all I have read the two issues are that not everything is parallelizable and that coding for more cores/threads is more difficult and neither of those are going away.

mapesdhs - Monday, July 24, 2017 - link

Thing is, until now there hasn't been a mainstream-affordable solution. It's true that parallel coding requires greater skill, but that being the case then the edu system should be teaching those skills. Instead the time is wasted on gender studies nonsense. Intel could have kick started this whole thing years ago by releasing the 3930K for what it actually was, an 8-core CPU (it has 2 cores disabled), but they didn't have to because back then AMD couldn't even compete with mid-range SB 2500K (hence why they never bothered with a 6-core for mainstream chipsets). One could argue the lack of market sw evolvement to exploit more cores is Intel's fault, they could have helped promote it a long time ago.

cocochanel - Tuesday, July 25, 2017 - link

+1!!!

twtech - Monday, July 24, 2017 - link

What can these chips do with a nice watercooling setup, and a goal of 24x7 stability? Maybe 4.7? 4.8?

These seem like pretty moderate OCs overall, but I guess we were a bit spoiled by Sandy Bridge, etc., where a 1GHz overclock wasn't out of the question.