The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST - Posted in

- CPUs

- Intel

- Kaby Lake

- X299

- Basin Falls

- Kaby Lake-X

- i7-7740X

- i5-7640X

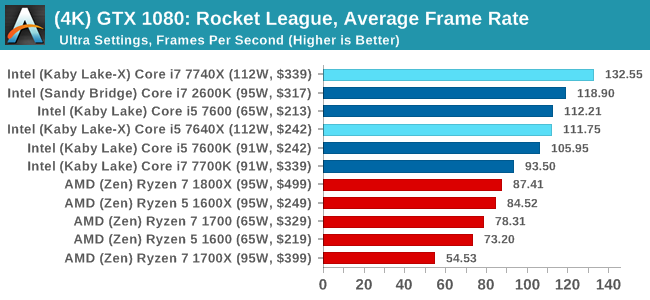

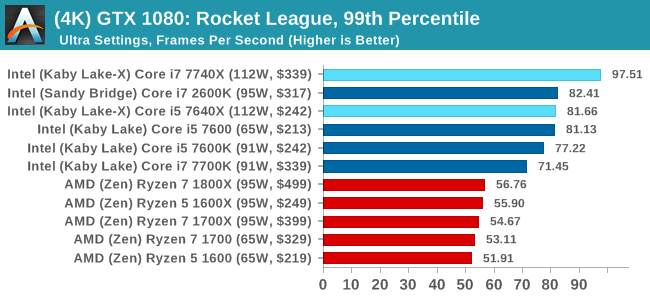

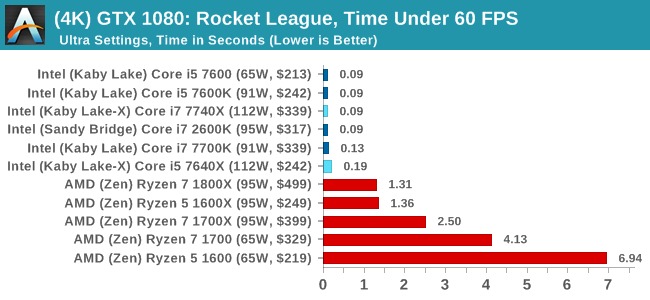

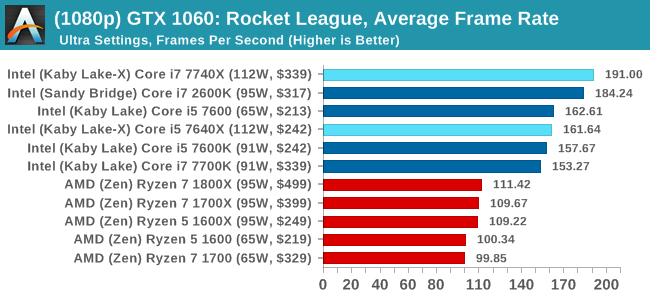

Rocket League

Hilariously simple pick-up-and-play games are great fun. I'm a massive fan of the Katamari franchise for that reason: pressing start on a controller and rolling around, picking up things to get bigger, is extremely simple. Until we get a PC version of Katamari that I can benchmark, we'll focus on Rocket League.

Rocket League combines the elements of pick-up-and-play, allowing users to jump into a game with other people (or bots) to play football with cars and zero rules. The title is built on Unreal Engine 3, which is somewhat old at this point, but this lets the game run on super-low-end systems while still taxing the big ones. Since its release in 2015, it has sold over 5 million copies and seems to be a fixture at LANs and game shows. Users who train get very serious, playing in teams and leagues; with very few settings to configure, everyone is on the same level. Rocket League is quickly becoming one of the favored titles for e-sports tournaments, especially as e-sports contests can be viewed directly from the game interface.

Based on these factors, plus the fact that it is an extremely fun title to load and play, we set out to find the best way to benchmark it. Unfortunately, automatic benchmark modes in games are for the most part few and far between. Partly because of this, but also because it is built on Unreal Engine 3, Rocket League does not have a benchmark mode. In this case, we have to develop a consistent run and record the frame rate ourselves.

Our initial analysis of this Rocket League benchmark on low-end graphics is available in a separate article.

As Rocket League has no benchmark mode, we perform a series of automated actions, similar to a racing game having a fixed number of laps. Our approach is as follows: using Fraps to record the time taken to show each frame (and the overall frame rates), we use an automation tool to set up a consistent 4v4 bot match on Easy, with the system applying a series of inputs throughout the run, such as switching camera angles and driving around.

It turns out that this method is nicely indicative of a real bot match: the car drives up walls, boosts and even puts in the odd assist, save and/or goal, as weird as that sounds for an automated set of commands. To maintain consistency, the commands we apply are not random but time-fixed, and we also keep the map (Aquadome, known to be a tough map for GPUs due to water/transparency) and the car customization constant. We start recording just after a match starts and record for 4 minutes of game time (think 5 laps of a DIRT: Rally benchmark), providing average frame rates, 99th percentile frame rates and frame times.
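To make these summary statistics concrete, here is a minimal sketch that turns a list of per-frame render times (the kind of data a Fraps frametime log provides) into an average frame rate and a 99th percentile frame rate. It assumes the frame times are already parsed into milliseconds and uses the nearest-rank percentile method; the function names are hypothetical, and the exact method behind our published numbers may differ.

```python
import math


def average_fps(frame_times_ms):
    """Average frame rate: number of frames divided by total elapsed seconds."""
    total_s = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_s


def percentile_fps(frame_times_ms, pct=99):
    """Frame rate corresponding to the pct-th percentile frame time.

    Nearest-rank method (an assumption): sort the frame times and take the
    value at 1-based rank ceil(pct * n / 100). For pct=99 this is the frame
    time that 99% of frames match or beat, converted back to frames/second.
    """
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))  # 1-based rank
    return 1000.0 / ordered[rank - 1]


if __name__ == "__main__":
    # Made-up example run: 196 frames at 10 ms plus 4 stutters at 25 ms.
    frames = [10.0] * 196 + [25.0] * 4
    print(f"average: {average_fps(frames):.1f} fps")
    print(f"99th percentile: {percentile_fps(frames):.1f} fps")
```

Because the 99th percentile value is driven by the slowest 1% of frames, a handful of stutters pulls it well below the average, which is why it is a useful companion to the average figure.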

The graphics settings for Rocket League come as four broad, generic presets: Low, Medium, High and High FXAA. There are advanced settings for shadows and details; however, for these tests we keep to the generic presets. For both 1920x1080 and 4K resolutions, we test at the High preset with the frame rate cap disabled.

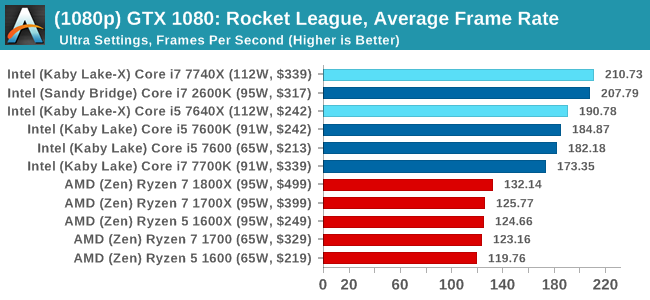

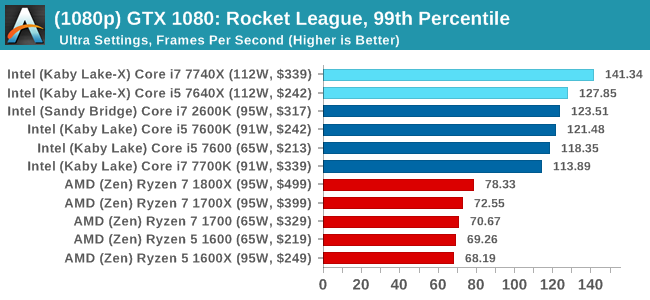

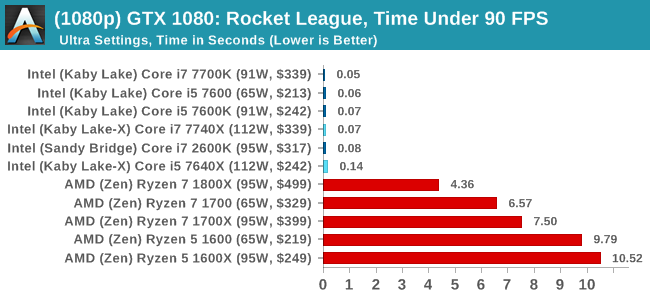

For all our results, we show the average frame rate at 1080p first. The other graphs show 99th percentile frame rates and 'Time Under' graphs, as well as results for other resolutions. All of our benchmark results can also be found in our benchmark engine, Bench.
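As an illustration of what a 'Time Under' figure can express, the sketch below reports the percentage of total run time spent on frames slower than a given frame rate threshold. The definition used here (summing the duration of every frame whose instantaneous rate falls below the threshold) is an assumption, as is the hypothetical `time_under_pct` name; the published graphs may define or bin the metric differently.

```python
def time_under_pct(frame_times_ms, fps_threshold):
    """Percentage of run time spent on frames slower than fps_threshold.

    Assumed definition: a frame counts as 'under' the threshold when its
    instantaneous rate (1000 / frame time in ms) is below fps_threshold.
    The durations of those frames are summed and divided by the total time.
    """
    limit_ms = 1000.0 / fps_threshold  # frames longer than this are too slow
    total_ms = sum(frame_times_ms)
    slow_ms = sum(ft for ft in frame_times_ms if ft > limit_ms)
    return 100.0 * slow_ms / total_ms


if __name__ == "__main__":
    # Made-up example run: 196 fast 10 ms frames and 4 slow 25 ms frames.
    frames = [10.0] * 196 + [25.0] * 4
    for target in (30, 60, 120):
        print(f"time under {target} fps: {time_under_pct(frames, target):.2f}%")
```

Weighting by frame duration rather than counting frames means a few long hitches register in proportion to how long the player actually sees them.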

MSI GTX 1080 Gaming 8G Performance (1080p and 4K)

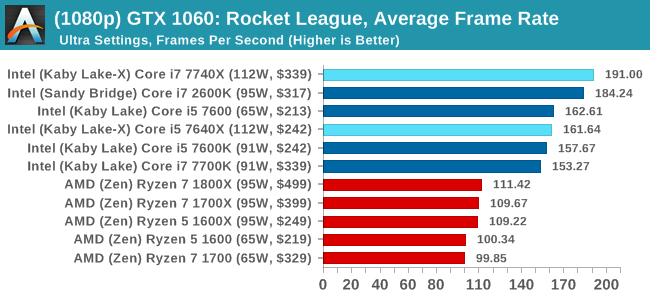

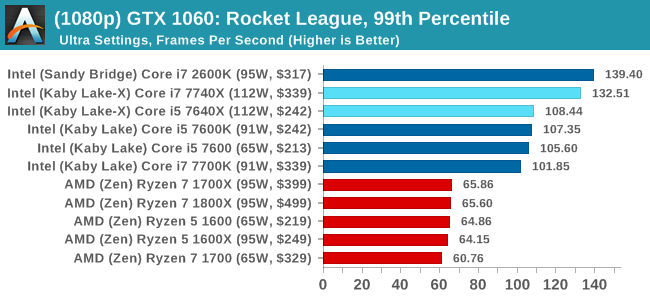

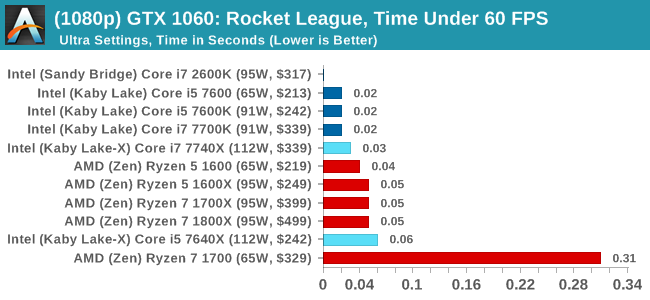

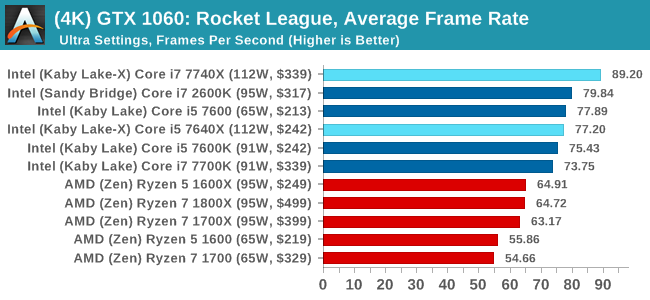

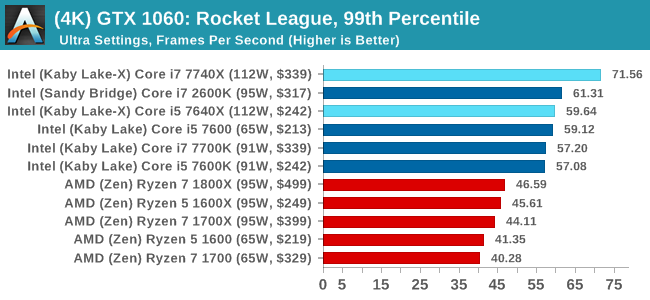

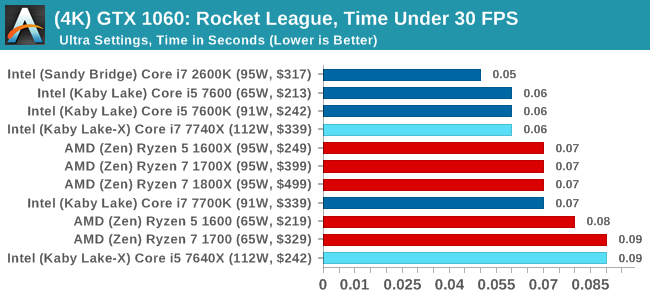

ASUS GTX 1060 Strix 6GB Performance (1080p and 4K)

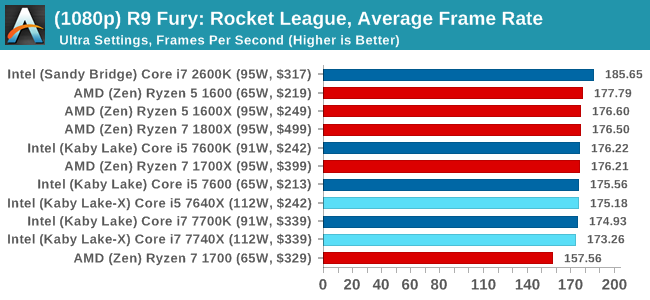

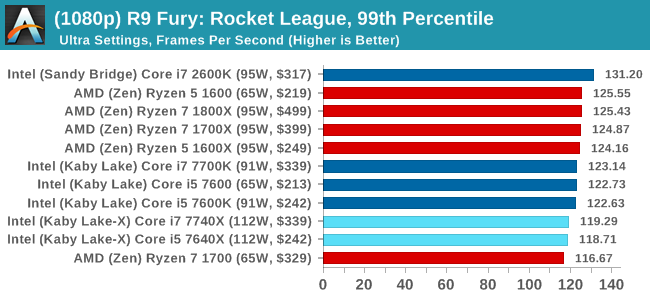

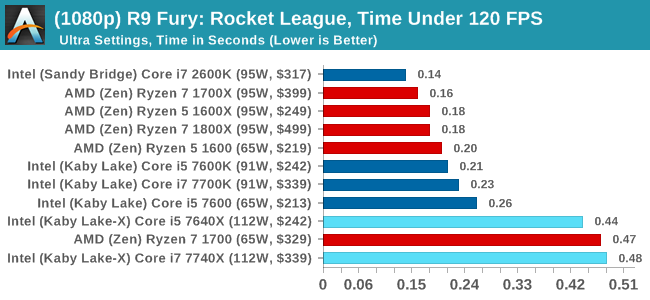

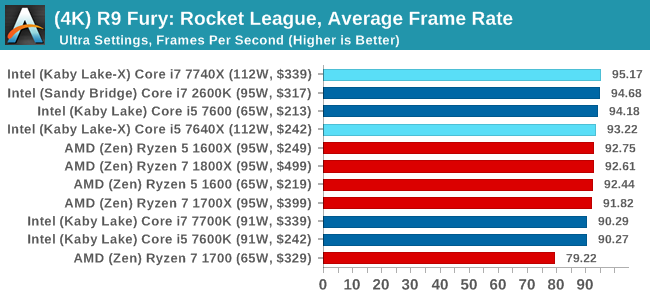

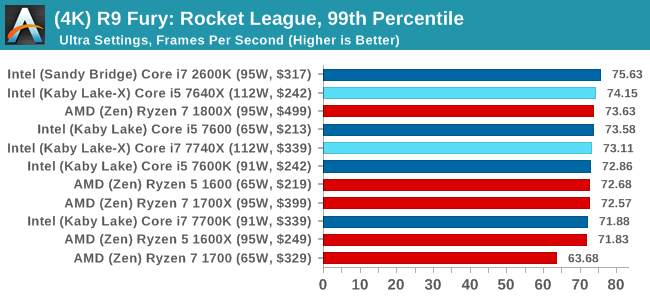

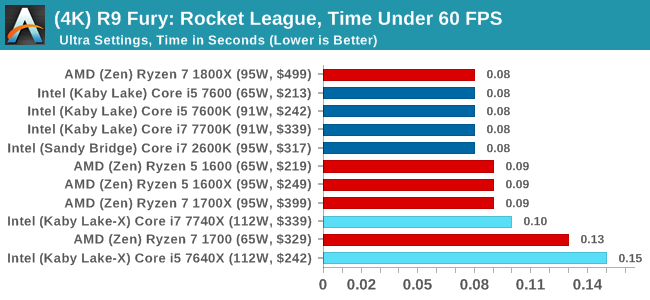

Sapphire R9 Fury 4GB Performance (1080p and 4K)

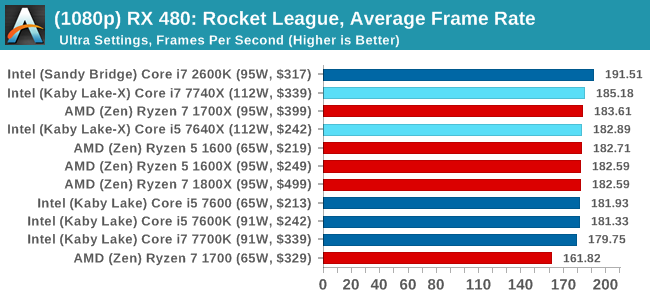

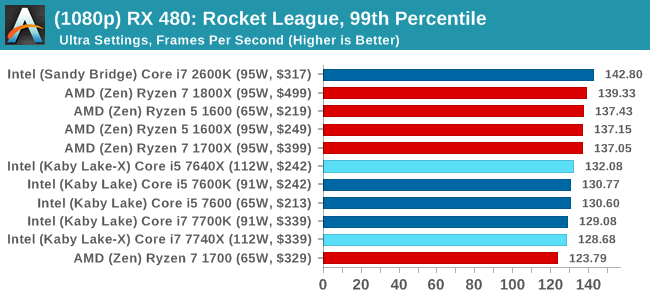

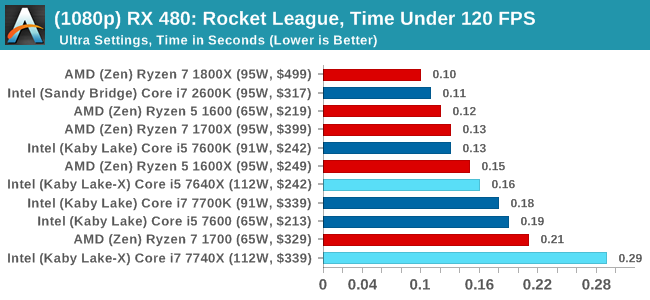

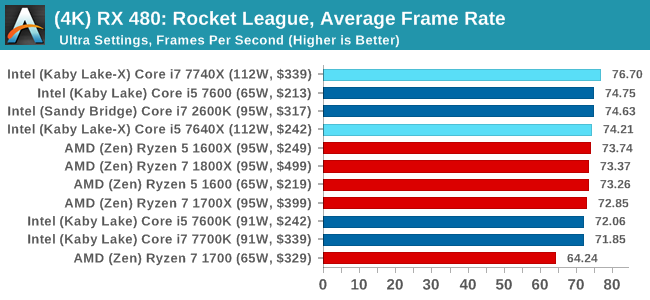

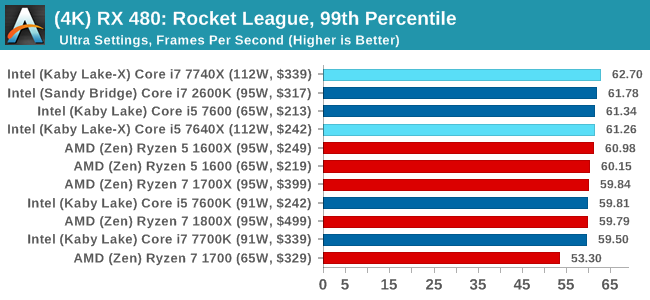

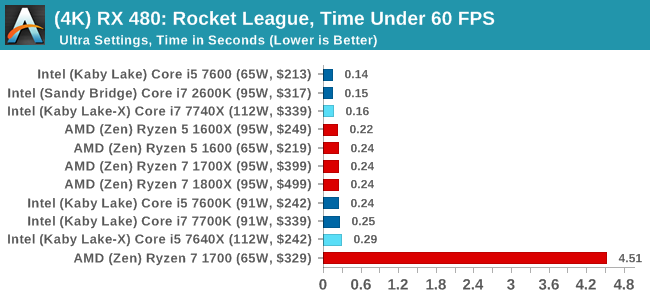

Sapphire RX 480 8GB Performance (1080p and 4K)

Rocket League Conclusions

The map we use in our testing, Aquadome, is known to be strenuous on a system, hence we see frame rates lower than what people expect for Rocket League - we're trying to cover the worst case scenario. But the results also show how AMD CPUs and NVIDIA GPUs do not seem to be playing ball with each other, which we've been told is likely related to drivers. The AMD GPUs work fine here regardless of resolution, and both AMD and Intel CPUs get in the mix.

176 Comments

mapesdhs - Monday, July 24, 2017

Let the memes collide, focus the memetic radiation, aim it at IBM and get them to jump into the x86 battle. :D

dgz - Monday, July 24, 2017

Man, I could really use an edit button. My brain has shit itself.

mapesdhs - Monday, July 24, 2017

Have you ever posted a correction because of a typo, then realised there was a typo in the correction? At that point my head explodes. :D

Glock24 - Monday, July 24, 2017

"The second is for professionals that know that their code cannot take advantage of hyperthreading and are happy with the performance. Perhaps in light of a hyperthreading bug (which is severely limited to minor niche edge cases), Intel felt a non-HT version was required."

This does not make any sense. All motherboards I've used since Hyper-Threading has existed (yes, all the way back to the P4) let you disable HT. There is really no reason for the X299 i5 to exist.

Ian Cutress - Monday, July 24, 2017

Even if the i5 was $90-$100 cheaper? Why offer i5s at all?

yeeeeman - Monday, July 24, 2017

The first interesting point to extract from this review is that the i7 2600K is still good enough for most gaming tasks. Another point is that games are not optimized for more than 4 cores, so all AMD offerings have yet to show what they are capable of, since all of them have more than 4 cores / 8 threads.

I think the absolute single-threaded performance argument is hot air, because the differences in single-threaded performance between the top CPUs you can currently buy are slim, very slim. Kaby Lake CPUs are best at this just because they are sold with high clocks out of the box, but this doesn't mean that if AMD tweaks its CPUs and pushes them to 5GHz it won't take back the crown. Also, in a very short time there will be another uArch and another CPU with even better single-threaded performance, so it is a race without end and without reason.

What is more relevant is the multi-core race, as games and software in general will sooner or later use more and more cores. And when games move to using more than 4 cores, all these 4-core / 8-thread overpriced "monsters" will become useless. That is why I say AMD has some real gems on its hands with the Ryzen family. I bet you that the R7 1700 will be a much better, more competent CPU in 3 years' time than the 7700K or whatever you are reviewing here. Dirt cheap: push it to 4GHz and forget about it.

Icehawk - Monday, July 24, 2017

They have been saying for years that we will use more cores. Here we are almost 20 years down the road, and there are few non-professional apps and almost no games that use more than 4 cores, while the vast majority use just two. Yes, more cores help with running multiple apps & instances, but if we are just looking at the performance of the focused app, fewer cores and more MHz is still the winner. From all I have read, the two issues are that not everything is parallelizable and that coding for more cores/threads is more difficult, and neither of those is going away.

mapesdhs - Monday, July 24, 2017

Thing is, until now there hasn't been a mainstream-affordable solution. It's true that parallel coding requires greater skill, but that being the case, the edu system should be teaching those skills. Instead the time is wasted on gender studies nonsense. Intel could have kick-started this whole thing years ago by releasing the 3930K for what it actually was, an 8-core CPU (it has 2 cores disabled), but they didn't have to, because back then AMD couldn't even compete with the mid-range SB 2500K (hence why they never bothered with a 6-core for mainstream chipsets). One could argue the lack of software evolution in the market to exploit more cores is Intel's fault; they could have helped promote it a long time ago.

cocochanel - Tuesday, July 25, 2017

+1!!!

twtech - Monday, July 24, 2017

What can these chips do with a nice watercooling setup, and a goal of 24x7 stability? Maybe 4.7? 4.8?

These seem like pretty moderate OCs overall, but I guess we were a bit spoiled by Sandy Bridge, etc., where a 1GHz overclock wasn't out of the question.