The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST. Posted in:

- CPUs

- Intel

- Kaby Lake

- X299

- Basin Falls

- Kaby Lake-X

- i7-7740X

- i5-7640X

Benchmarking Performance: CPU Rendering Tests

Rendering tests are a long-time favorite of reviewers and benchmarkers, as the code used by rendering packages is usually highly optimized to squeeze out every last bit of performance. Sometimes rendering programs end up being heavily memory dependent as well: when you have that many threads flying about with a ton of data, low-latency memory can be key to everything. Here we take a few of the usual rendering packages under Windows 10, as well as a few interesting new benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

Corona 1.3: link

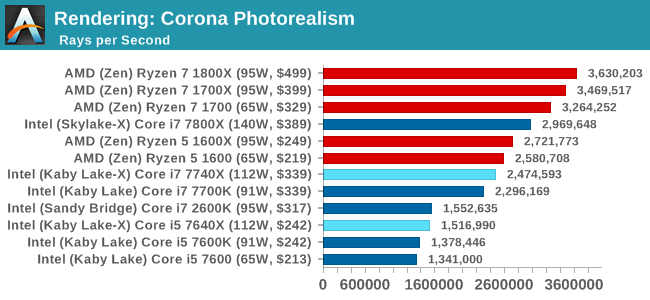

Corona is a standalone package designed to assist software like 3ds Max and Maya with photorealism via ray tracing. It's simple - shoot rays, get pixels. OK, it's more complicated than that, but the benchmark renders a fixed scene six times and offers results in terms of time and rays per second. The official benchmark tables list user-submitted results in terms of time. However, I feel rays per second is a better metric (in general, scores where higher is better seem to be easier to explain anyway). Corona likes to pile on the threads, so the results end up being very staggered based on thread count.
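As a rough illustration of why the two metrics carry the same information for a fixed scene, the sketch below converts a render time into a throughput figure. The total ray count and both render times are hypothetical values chosen only to make the arithmetic easy to follow; they are not Corona's actual workload or any result from this review.

```python
def rays_per_second(total_rays: float, render_seconds: float) -> float:
    """Convert a fixed-workload render time into a throughput figure."""
    if render_seconds <= 0:
        raise ValueError("render_seconds must be positive")
    return total_rays / render_seconds


# Hypothetical workload size (assumption, not Corona's real ray count).
TOTAL_RAYS = 600_000_000

# Hypothetical render times for two imaginary CPUs.
fast = rays_per_second(TOTAL_RAYS, 120.0)
slow = rays_per_second(TOTAL_RAYS, 240.0)

# Halving the time doubles the rays-per-second score, so "higher is better".
print(f"fast: {fast:,.0f} rays/s, slow: {slow:,.0f} rays/s")
```

Because the scene is fixed, ranking by time (lower is better) and ranking by rays per second (higher is better) give the same order; only the direction of the scale flips.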

More threads win the day, although the Core i7 does knock at the door of the Ryzen 5 (presumably with $110 in hand as well). It is worth noting that the Core i5-7640X and the older Core i7-2600K are on equal terms.

Blender 2.78: link

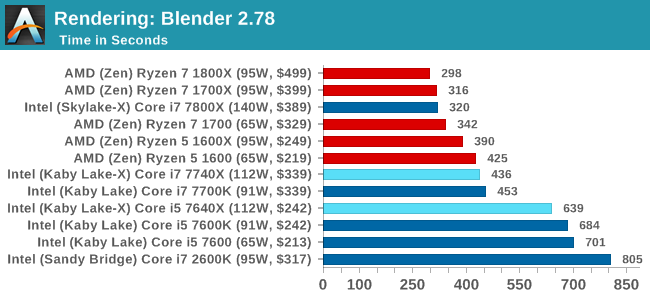

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. As it is one of the bigger open source tools out there, both AMD and Intel work actively to help improve the codebase, for better or for worse on their own/each other's microarchitecture.

Similar to Corona, more threads mean a faster time.

LuxMark v3.1: Link

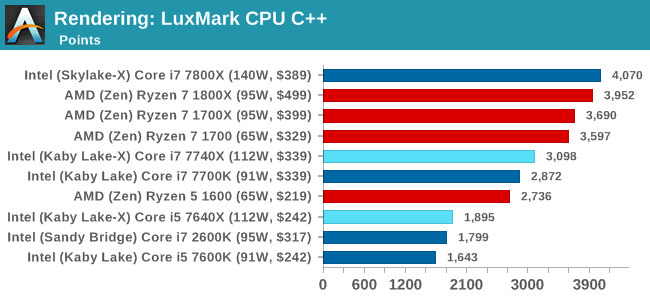

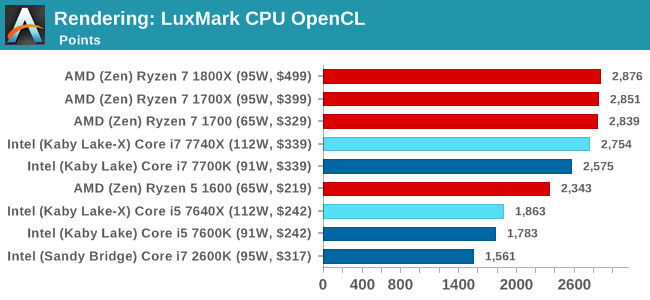

As a synthetic, LuxMark might come across as somewhat arbitrary as a renderer, given that it's mainly used to test GPUs, but it does offer both an OpenCL and a standard C++ mode. In this instance, aside from seeing the comparison in each coding mode for cores and IPC, we also get to see the difference in performance moving from a C++ based code-stack to an OpenCL one with a CPU as the main host.

LuxMark is more thread and cache dependent, and so the Core i7 nips at the heels of the AMD parts with double the threads. The Core i5 sits behind the Ryzen 5 parts though, due to the 1:3 thread difference.

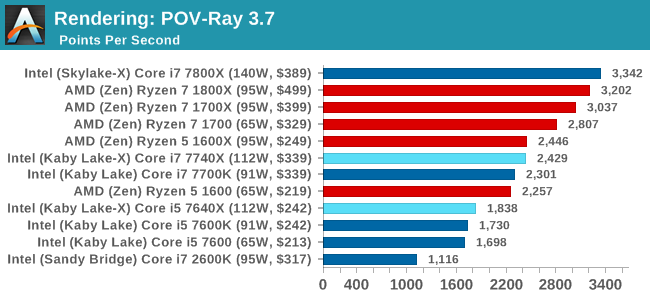

POV-Ray 3.7.1b4: link

A regular benchmark in most suites, POV-Ray is another ray tracer, one that has been around for many years. It just so happens that during the run up to AMD's Ryzen launch, the code base started to get active again, with developers making changes to the code and pushing out updates. Our version and benchmarking started just before that happened, but given time we will see where the POV-Ray code ends up and adjust in due course.

Mirror Mirror on the wall...

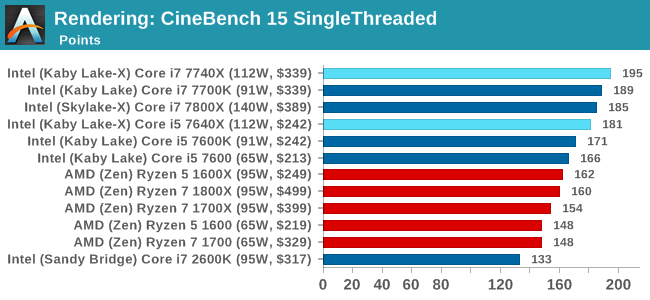

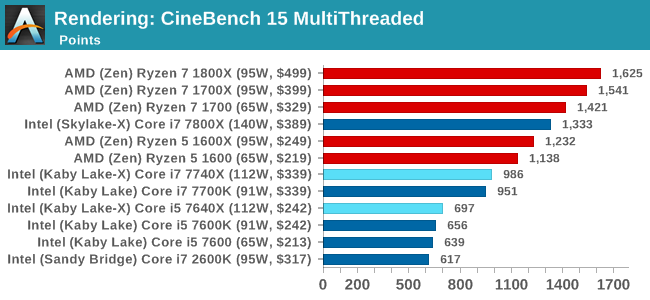

Cinebench R15: link

The latest version of CineBench has also become one of those 'used everywhere' benchmarks, particularly as an indicator of single thread performance. High IPC and high frequency give performance in ST, whereas having good scaling and many cores is where the MT test wins out.
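One way to relate the ST and MT results is to look at how far the multithreaded score scales beyond the single-threaded one. The sketch below is a minimal illustration of that arithmetic; the function name and the scores are hypothetical and are not taken from the charts in this review.

```python
def mt_scaling(st_score: float, mt_score: float, cores: int):
    """Return (speedup, per-core efficiency) of an MT score relative to ST."""
    if st_score <= 0 or cores <= 0:
        raise ValueError("st_score and cores must be positive")
    speedup = mt_score / st_score   # how many 'single threads' the MT run is worth
    efficiency = speedup / cores    # 1.0 means perfect per-core scaling
    return speedup, efficiency


# Hypothetical quad-core scores, for illustration only.
speedup, efficiency = mt_scaling(st_score=150.0, mt_score=600.0, cores=4)
print(f"speedup: {speedup:.2f}x, per-core efficiency: {efficiency:.2f}")
```

An efficiency above 1.0 is possible on parts with simultaneous multithreading, since each core runs two threads, while a value below 1.0 usually points to lower all-core clocks or scaling limits.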

CineBench gives us single-threaded numbers, and it is clear who rules the roost, almost scoring 200. The Core i7-2600K, due to its lack of instruction support, sits in the corner.

176 Comments


MTEK - Monday, July 24, 2017 - link

Random amusement: Sandy Bridge got 1st place in the Shadow of Mordor bench w/ a GTX 1060.

shabby - Monday, July 24, 2017 - link

That's funny and sad at the same time unfortunately.

mapesdhs - Monday, July 24, 2017 - link

S'why I love my 5GHz 2700K (daily system). And the other one (gaming PC). And the third (benchmarking rig), the two I've sold to companies, another built for a friend, another set aside to sell, another on a shelf awaiting setup... :D 5GHz every time. M4E, TRUE, one fan, 5 mins, done.

GeorgeH - Monday, July 24, 2017 - link

Those decreased overclocking performance numbers aren't just red flags, they're blinding red flashing lights with the power of a thousand suns.

Seriously, that should have been the entire article - this platform is a disaster if it loses performance under sustained load. That's not hyperbole, it's cold hard truth. Sustained load is part of what HEDT is about, and with X299 you're spending more money for significantly less performance?

I sincerely hope you're going to get to the bottom of this and not just shrug and let it slide away as a mystery. Hopefully it's just platform immaturity that gets ironed out, but at the present time I have absolutely no clue how you could recommend X299 in any way. Significantly less sustained performance is a do not pass go, do not collect $200, turn the car around, oh hell no, all caps showstopper.

deathBOB - Monday, July 24, 2017 - link

But they're big AVX workloads. We know heat and power get a bit crazy with the AVX, and at some point we should just step back and realize that overclocking may not be appropriate for these workloads.

GeorgeH - Monday, July 24, 2017 - link

But other AVX workloads didn't have the issue.

Until we know exactly what is going on and what will be required to fix it, I can't comprehend how anyone can regard X299, at least with the quad core CPUs, as anything but "Nope". Maybe a BIOS update will help, or tuning the overclock, but maybe it'll require new motherboard revisions or delidding the CPU. I'm sure it'll get fixed/understood at some point, but for now recommending this platform is really hard to accept as a good idea.

MrSpadge - Monday, July 24, 2017 - link

> But other AVX workloads didn't have the issue.

Using a few of those instructions is different from hammering the CPU with them. Not sure what this software does, but this could easily explain it.

Icehawk - Monday, July 24, 2017 - link

I do a lot of Handbrake encoding to HEVC which will peg all cores on my O/C'd 3770, it uses AVX but obviously a much older version with less functionality, and I can have it going indefinitely without issue.

I've looked at the 7800\7820 as an upgrade but if they cannot sustain performance with a reasonable cooling setup then there is no point. The KBL-X parts don't offer enough of a performance improvement to be worth the cost of the X299 mobo which also seem to be having teething problems.

Future proofing is laughable, let's say you bought a 7740x today with the thought of upgrading in two years to a higher core count proc - how likely is it that your motherboard and the new proc will have the same pinout? History says it ain't happening at Camp Intel.

At this point I'm giving a hard pass to this generation of Intel products and hope that v2 will fix these issues. By then AMD may have come close enough in ST performance where I would consider them again, I really want the best ST & MT performance I can get in the $350 CPU zone which has traditionally been the top i7. AMD's MT performance almost tempts me to just build an encoding box.

I loved my Athlon back in the day, anyone remember Golden Fingers? :D

mapesdhs - Monday, July 24, 2017 - link

Golden Fingers... I had to look that up, blimey! :D

DrKlahn - Tuesday, July 25, 2017 - link

I recently went from a 4.6GHz 3770K to a 1700X @ 4GHz at home. I play some older games that don't thread well (WoW being one of them). The Ryzen is at least as fast or faster in those workloads. Run Handbrake or Sony Movie Studio and the Ryzen is MUCH faster. We use built 6 core 5820K stations at work for some users and have recently added Ryzen 1600 stations due to the tremendous cost savings. We have yet to run into any tangible difference between the two platforms.

Intel does have a lead in ST, but tests like these emphasize it to the point it seems like a bigger advantage than it is in reality. The only time I could see the premium worth it is if you have a task that needs ST the majority of the time (or a program is simply very poorly optimized for Ryzen). Otherwise AMD is offering an extraordinary value and as you point out AM4 will at least be supported for 2 more spins.