The Intel Kaby Lake-X i7 7740X and i5 7640X Review: The New Single-Threaded Champion, OC to 5GHz

by Ian Cutress on July 24, 2017 8:30 AM EST. Posted in: CPUs, Intel, Kaby Lake, X299, Basin Falls, Kaby Lake-X, i7-7740X, i5-7640X

Rise of the Tomb Raider

One of the newest games in the gaming benchmark suite is Rise of the Tomb Raider (RoTR), developed by Crystal Dynamics as the sequel to the popular Tomb Raider, which was loved for its automated benchmark mode. But don’t let that fool you: the benchmark mode in RoTR is very different this time around.

Visually, the previous Tomb Raider pushed realism to the limits with features such as TressFX, and the new RoTR goes one stage further when it comes to graphics fidelity. This leads to an interesting set of requirements in hardware: some sections of the game are typically GPU limited, whereas others with a lot of long-range physics can be CPU limited, depending on how the driver can translate the DirectX 12 workload.

Where the old game had one benchmark scene, the new game has three scenes with different requirements: Geothermal Valley (1-Valley), Prophet’s Tomb (2-Prophet) and Spine of the Mountain (3-Mountain), and we test all three. These three scenes are taken from the game itself, but it has been noted that a scene like 2-Prophet, as shown in the benchmark, can be the most CPU-limited part of its entire level, and the scene shown is only a small portion of that level. Because of this, we report the results for each scene on each graphics card separately.

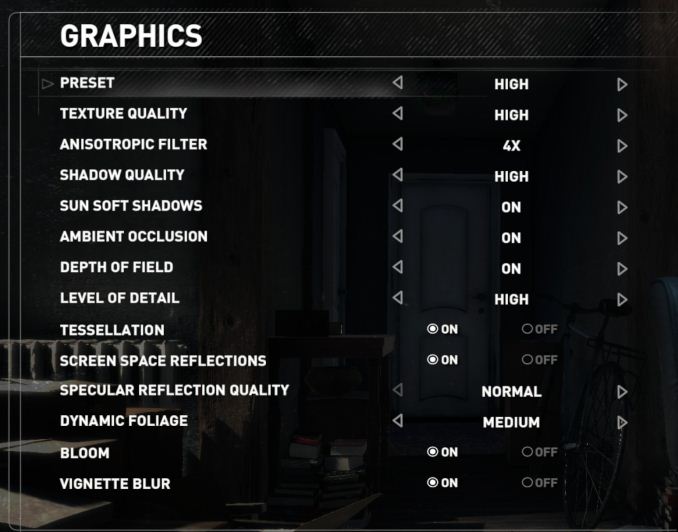

Graphics options for RoTR are similar to other games of this type, offering some presets or allowing the user to configure texture quality, anisotropic filter levels, shadow quality, soft shadows, occlusion, depth of field, tessellation, reflections, foliage, bloom, and features like PureHair, which builds on TressFX from the previous game.

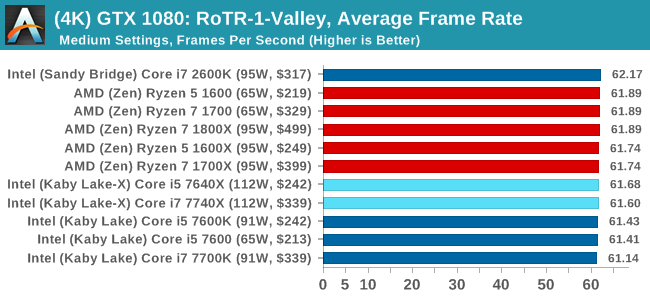

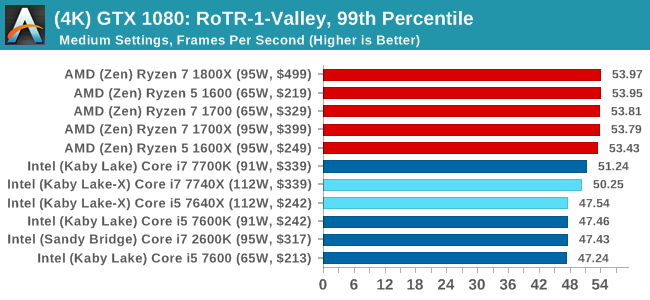

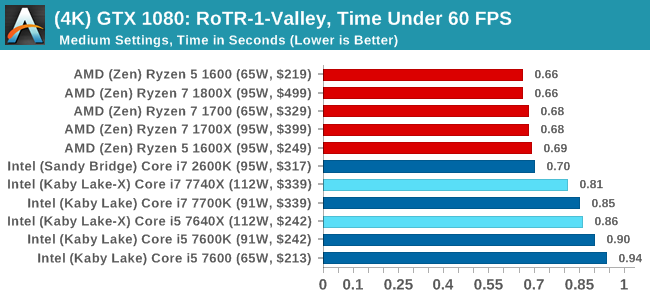

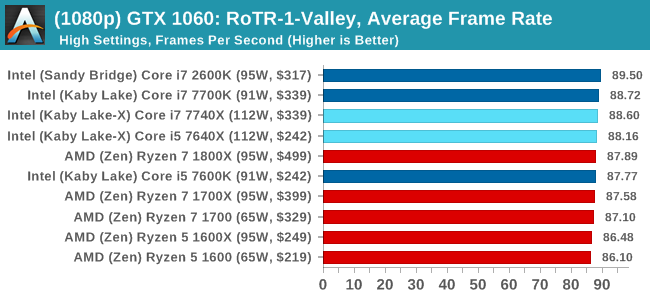

Again, we test at 1920x1080 and 4K using our native 4K displays. At 1080p we run the High preset, while at 4K we use the Medium preset which still takes a sizable hit in frame rate.

It is worth noting that RoTR is a little different to our other benchmarks in that it keeps its graphics settings in the registry rather than a standard ini file, and unlike the previous TR game, the benchmark cannot be called from the command line. Nonetheless, we scripted around these issues to automate the benchmark, running it four times and parsing the results. From the frame time data, we report the averages, 99th percentiles, and our 'Time Under' analysis.
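As a rough illustration of how those three figures can be derived from frame time data, here is a minimal Python sketch. It is not the script used for this review: the function names `summarize_run` and `average_runs`, the 60 FPS threshold, the nearest-rank percentile method, and the reading of 'Time Under' as the share of run time spent on frames slower than the threshold are all assumptions made for the example.

```python
from statistics import mean


def summarize_run(frame_times_ms, threshold_fps=60.0):
    """Summarize one benchmark run from per-frame times in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    n = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average frame rate: frames rendered divided by elapsed seconds.
    average_fps = 1000.0 * n / total_ms

    # 99th percentile frame time by nearest rank (an assumed method),
    # converted to a frame rate so it reads alongside the average.
    rank = (99 * n + 99) // 100  # integer ceiling of 0.99 * n
    p99_fps = 1000.0 / sorted(frame_times_ms)[rank - 1]

    # "Time Under" (assumed definition): percentage of the run's duration
    # spent on frames slower than the threshold frame rate.
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    time_under_pct = 100.0 * slow_ms / total_ms

    return {
        "average_fps": average_fps,
        "p99_fps": p99_fps,
        "time_under_pct": time_under_pct,
    }


def average_runs(runs, threshold_fps=60.0):
    """Average each metric across repeated runs (for example, four passes)."""
    summaries = [summarize_run(run, threshold_fps) for run in runs]
    return {key: mean(s[key] for s in summaries) for key in summaries[0]}
```

Calling `average_runs` with four lists of frame times, one per pass, would give a single set of numbers per scene and graphics card. A percentile taken over frame times rather than frame rates keeps the slowest frames at the top of the sorted list, which is why the 99th percentile figure ends up lower than the average.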

For all our results, we show the average frame rate at 1080p first. Mouse over the other graphs underneath to see 99th percentile frame rates and 'Time Under' graphs, as well as results for other resolutions. All of our benchmark results can also be found in our benchmark engine, Bench.

#1 Geothermal Valley

MSI GTX 1080 Gaming 8G Performance

1080p

4K

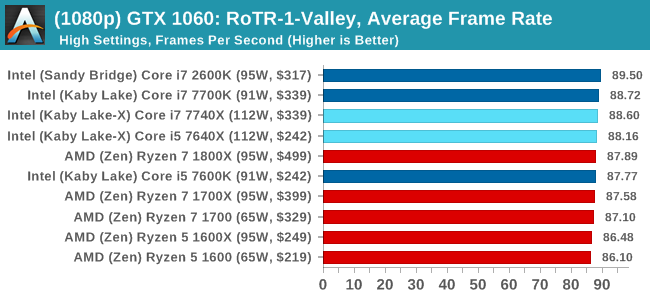

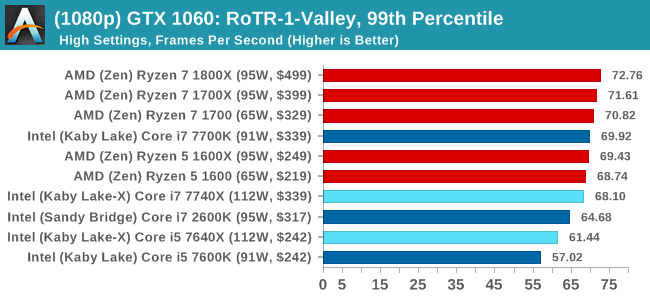

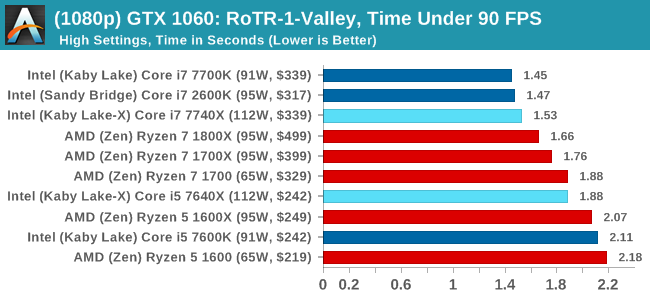

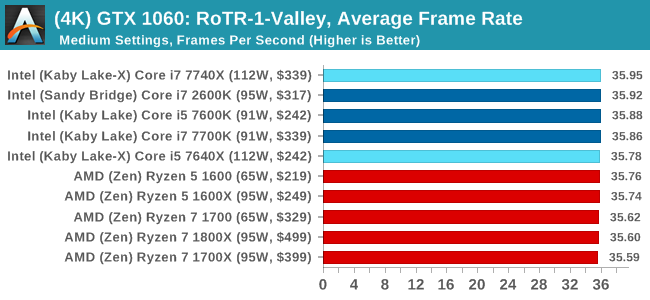

ASUS GTX 1060 Strix 6GB Performance

1080p

4K

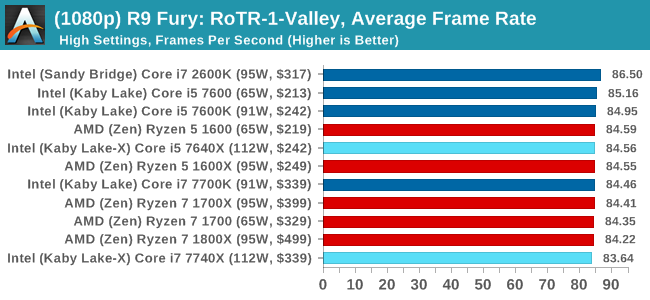

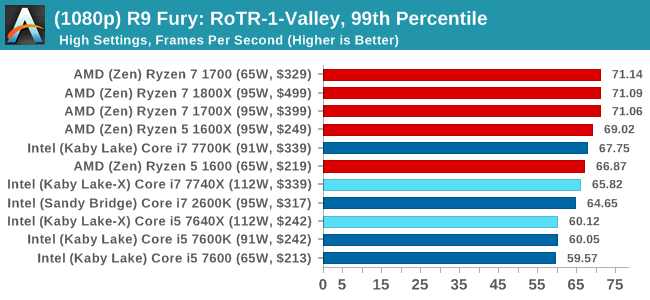

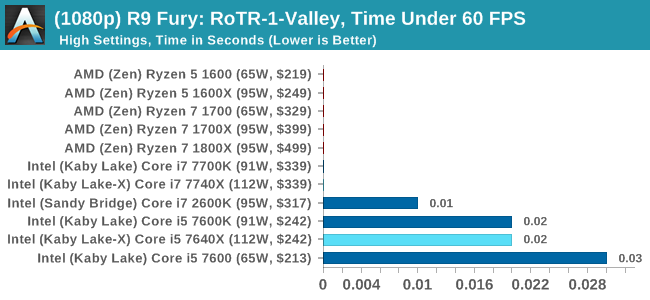

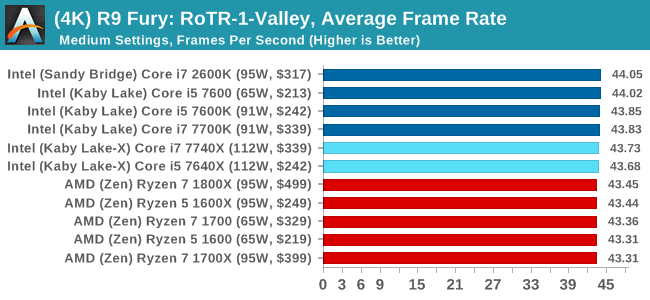

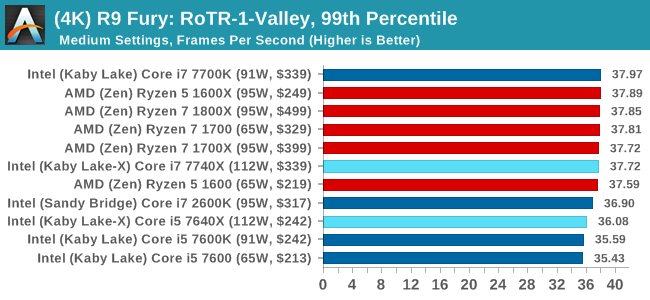

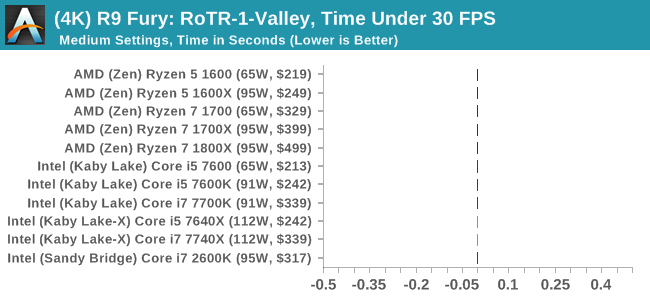

Sapphire R9 Fury 4GB Performance

1080p

4K

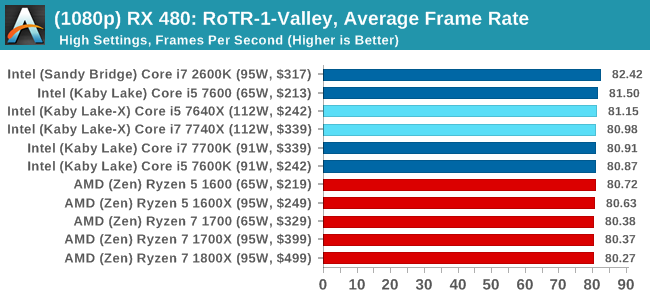

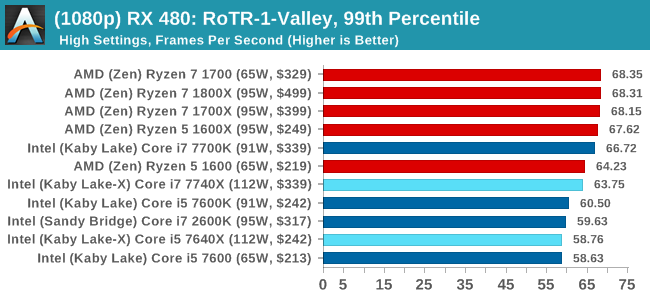

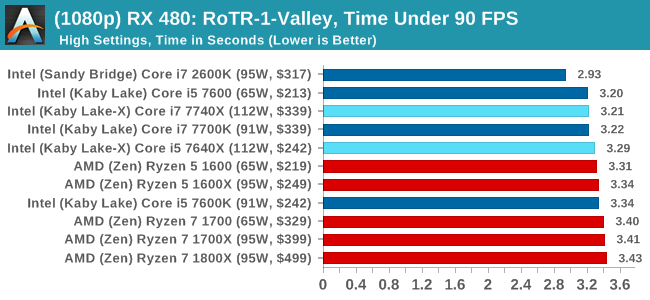

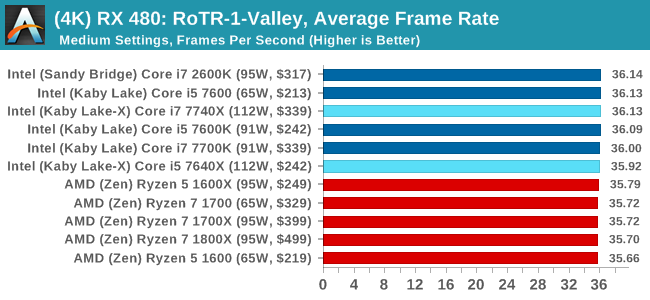

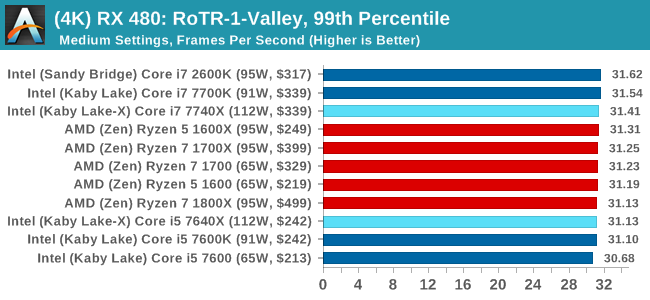

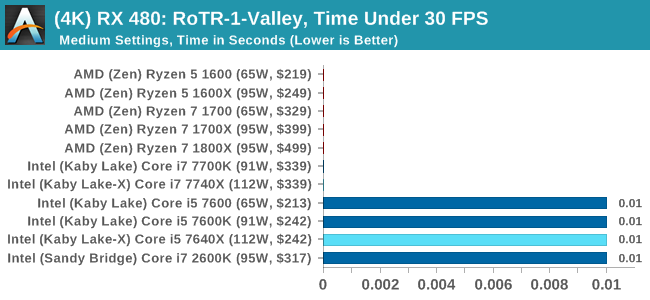

Sapphire RX 480 8GB Performance

1080p

4K

RoTR: Geothermal Valley Conclusions

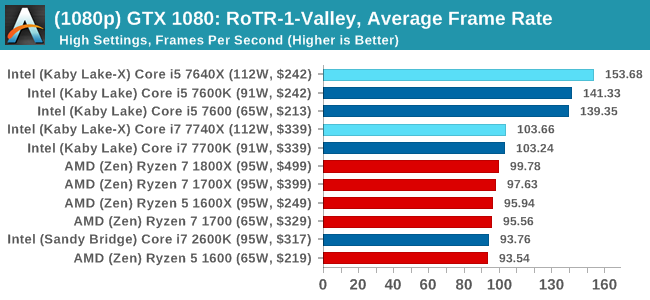

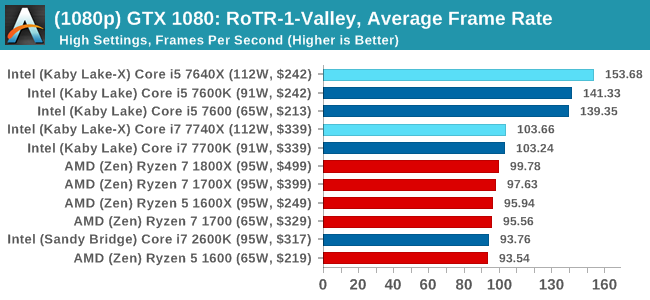

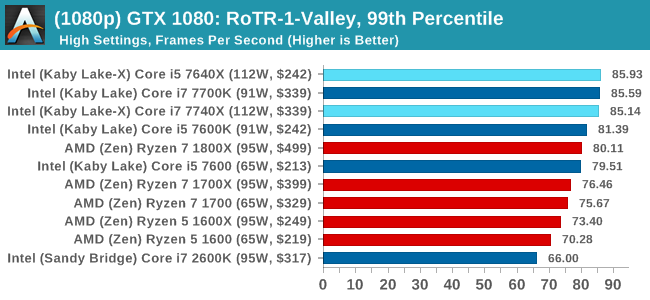

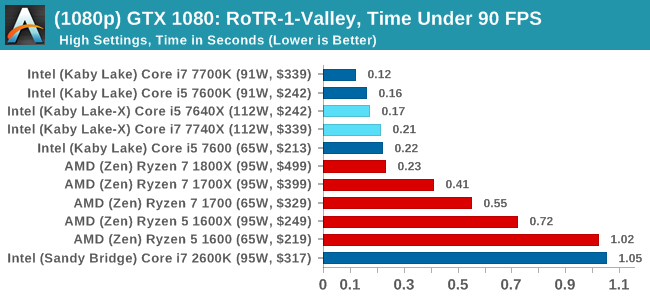

If we were testing only a single GTX 1080 at 1080p, you might think that the graph looks a little odd. All the quad-core, non-HT processors (so, the Core i5s) get the best frame rates and percentiles on this specific test on this specific hardware by a good margin. The rest of the tests do not mirror that result though, with the results ping-ponging between Intel and AMD depending on the resolution and the graphics card.

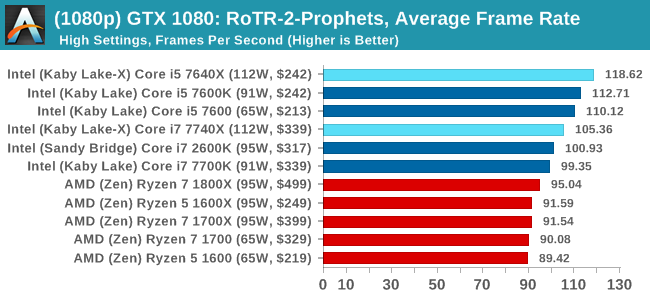

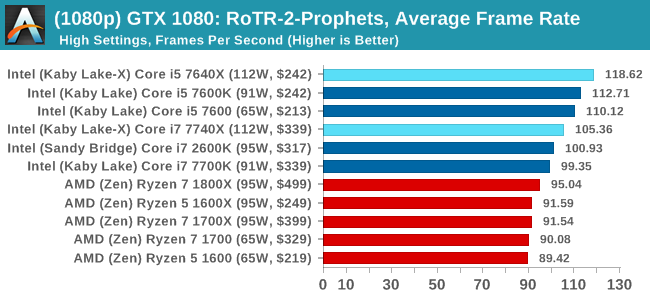

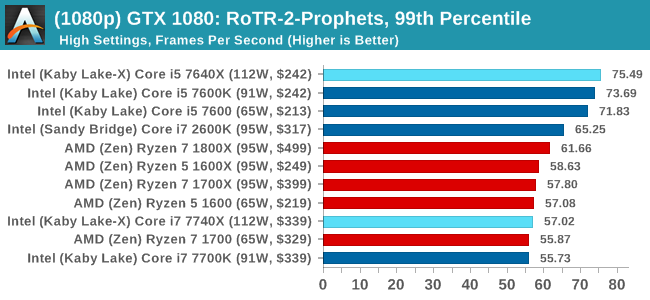

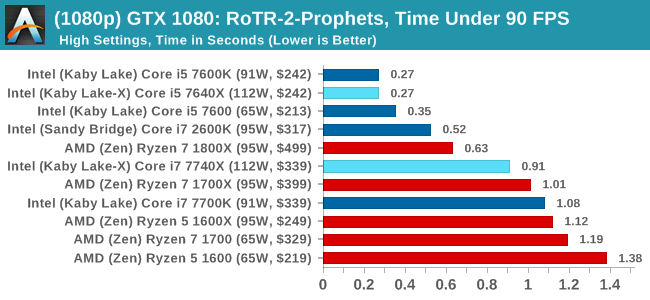

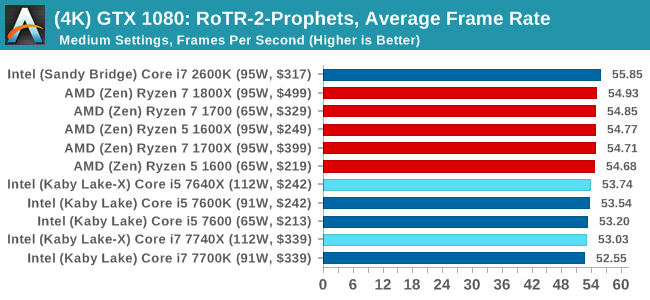

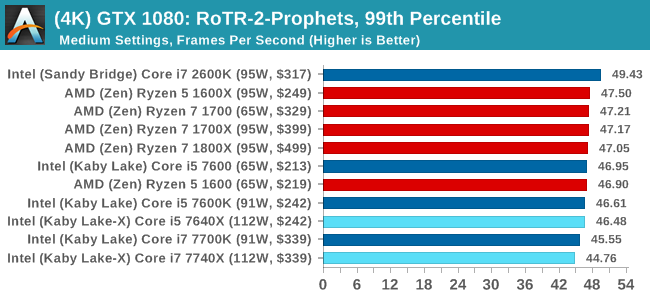

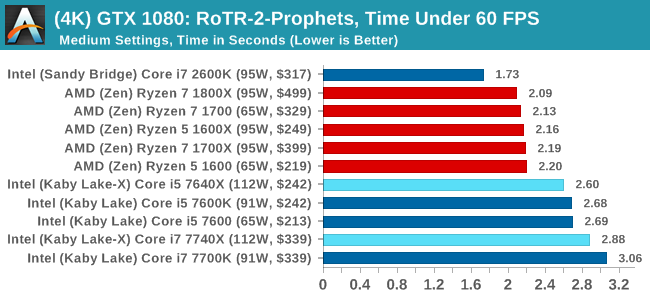

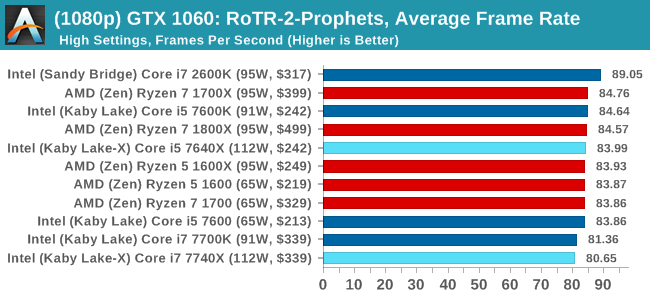

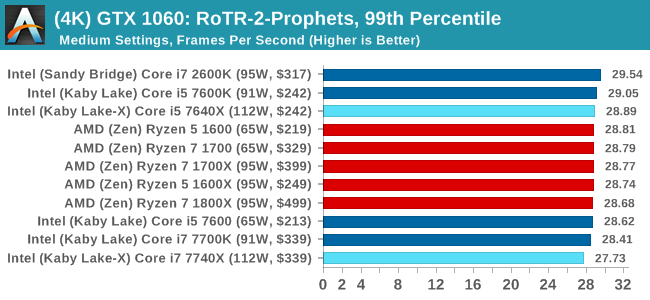

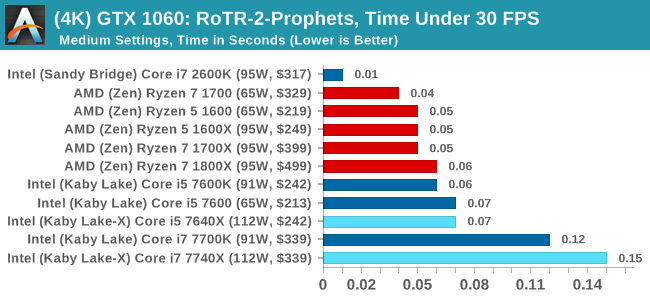

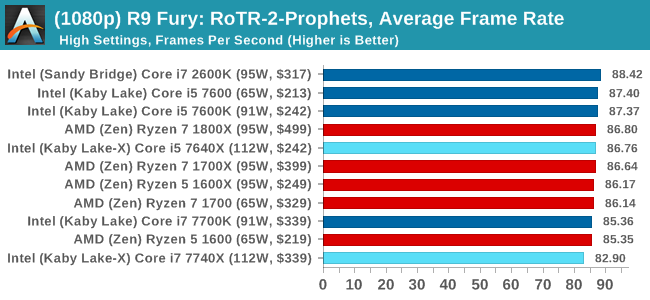

#2 Prophet's Tomb

MSI GTX 1080 Gaming 8G Performance

1080p

4K

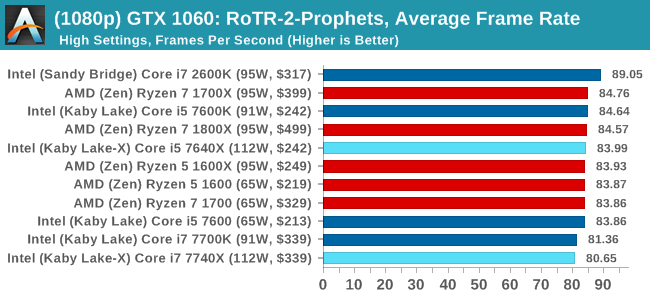

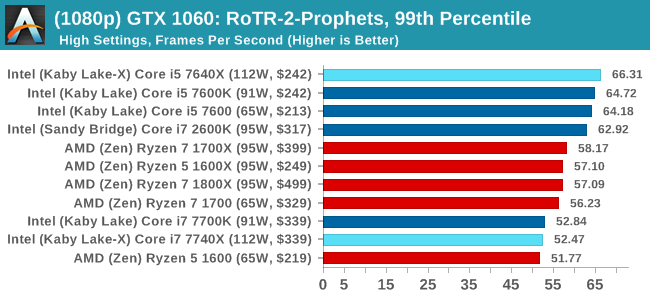

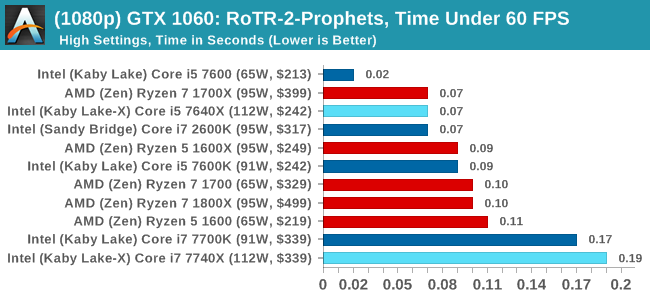

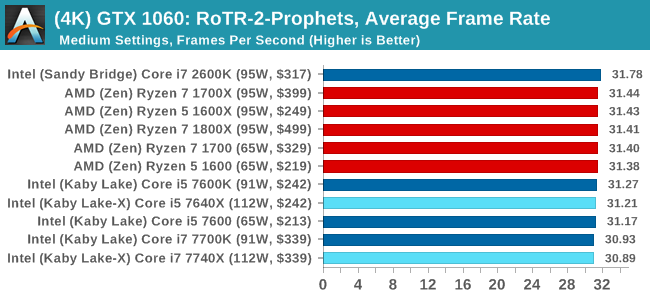

ASUS GTX 1060 Strix 6GB Performance

1080p

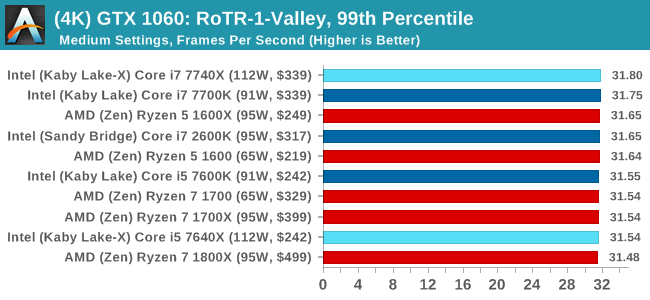

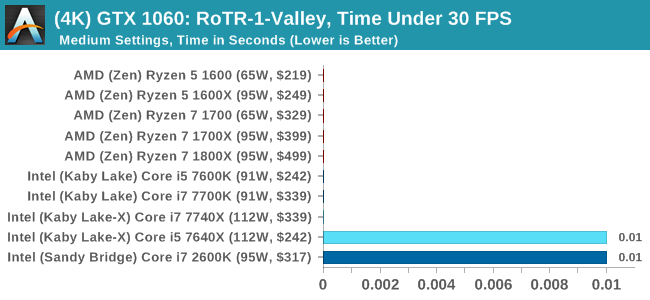

4K

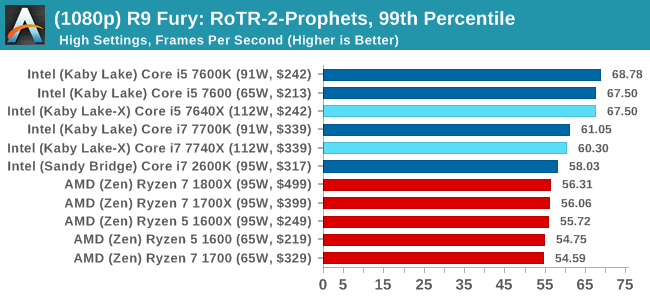

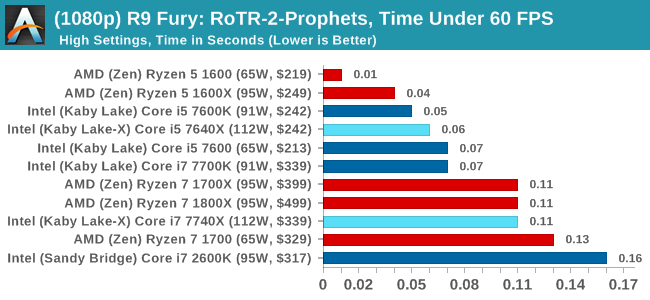

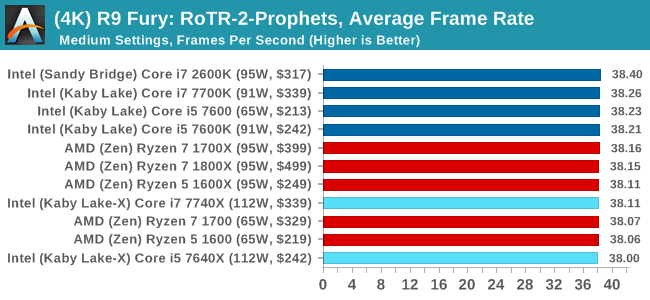

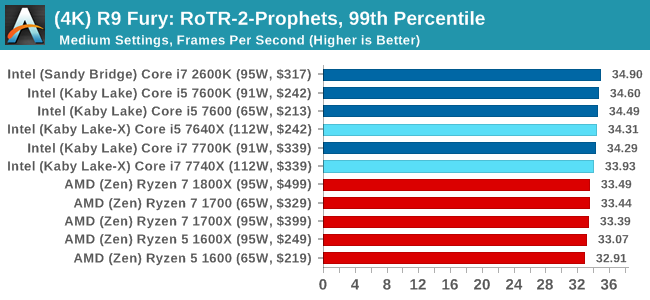

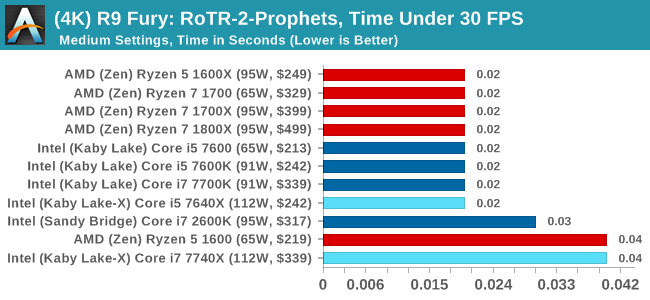

Sapphire R9 Fury 4GB Performance

1080p

4K

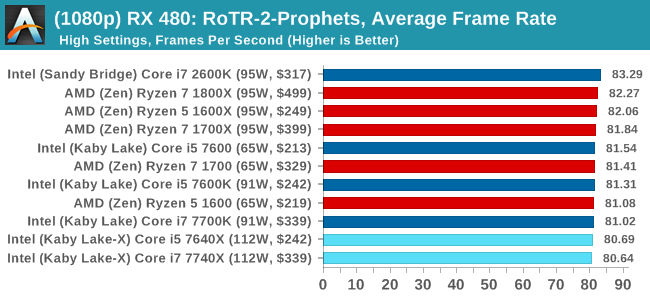

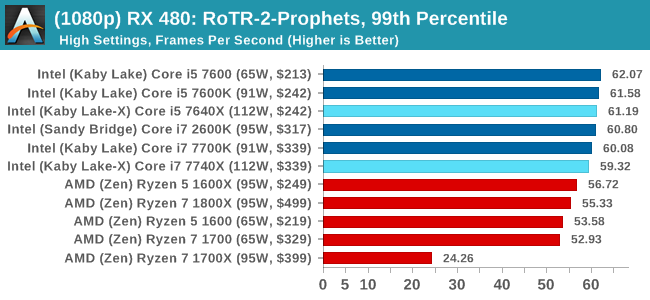

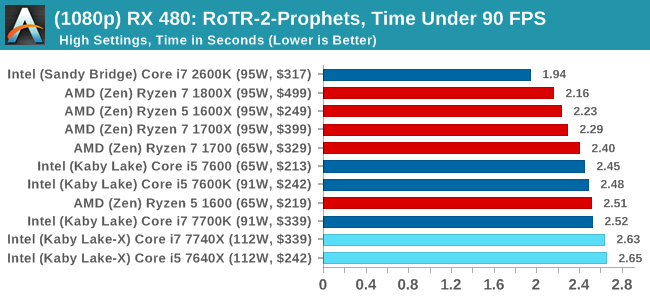

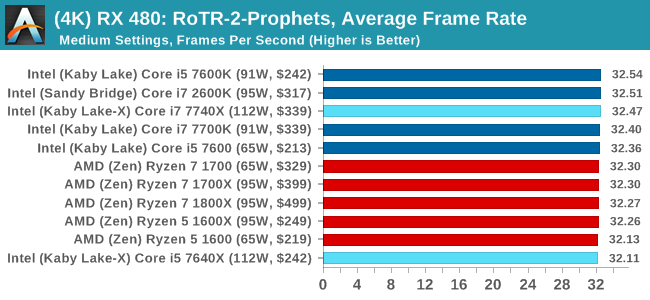

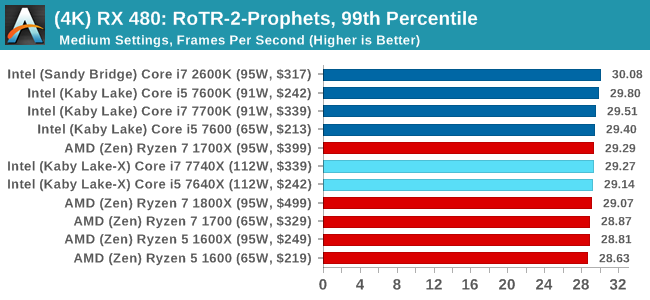

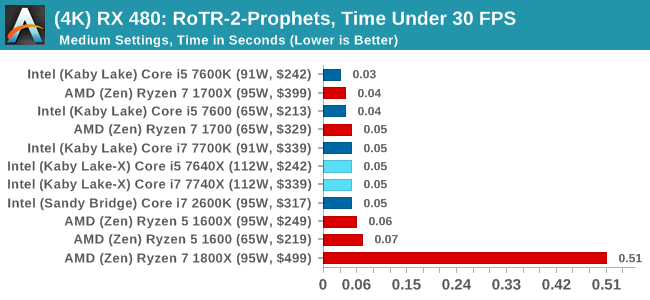

Sapphire RX 480 8GB Performance

1080p

4K

RoTR: Prophet's Tomb Conclusions

For Prophet's Tomb, we again see the Core i5s pull a win at 1080p using the GTX 1080, but the rest of the tests are a mix of results, some siding with AMD and others with Intel. There is the odd outlier in the Time Under analysis, which may warrant further inspection.

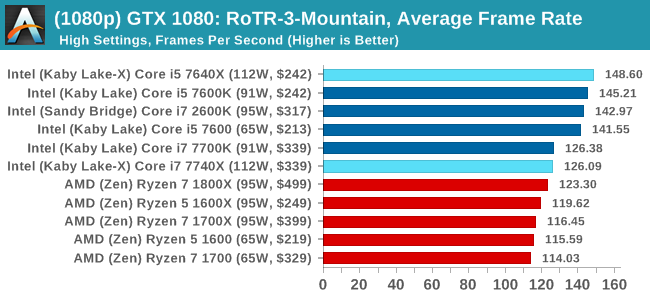

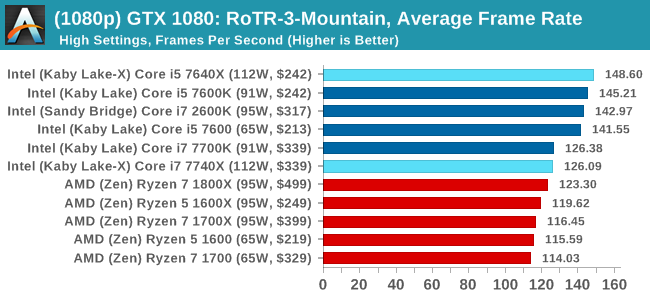

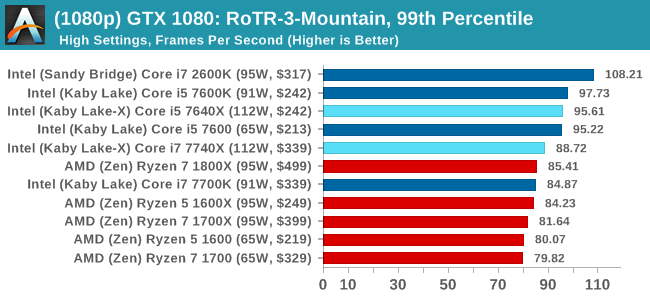

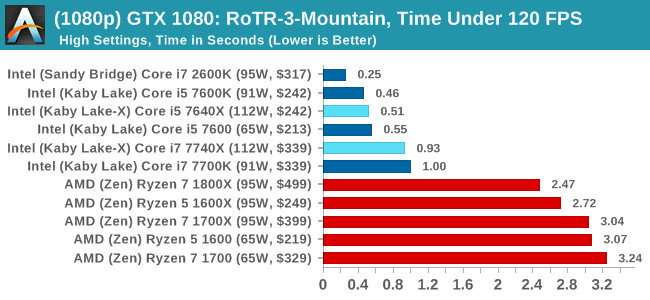

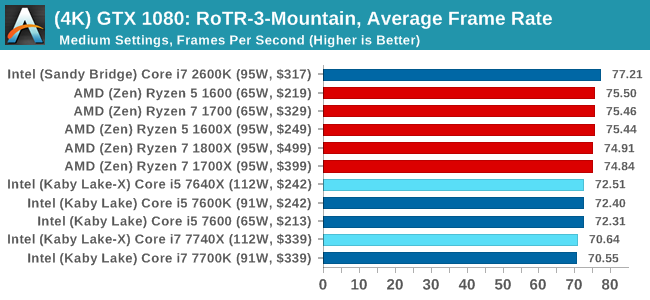

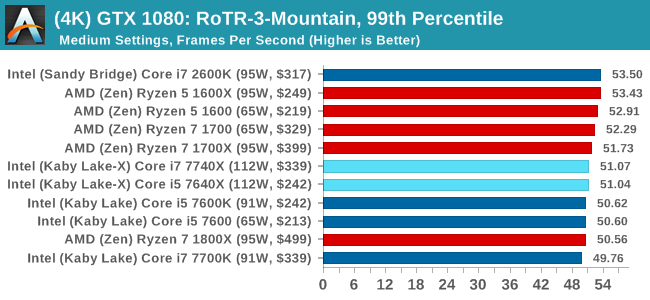

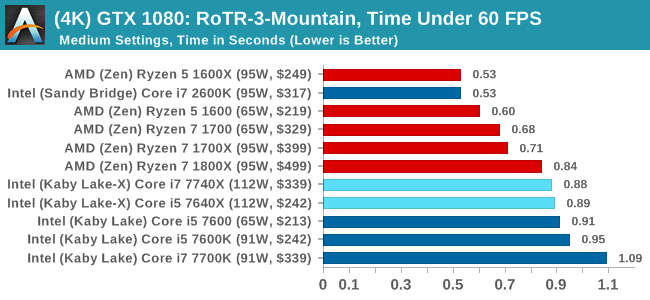

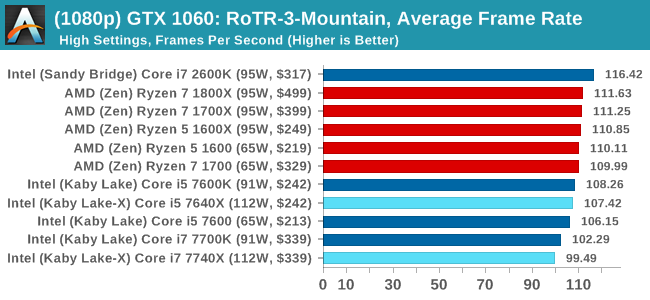

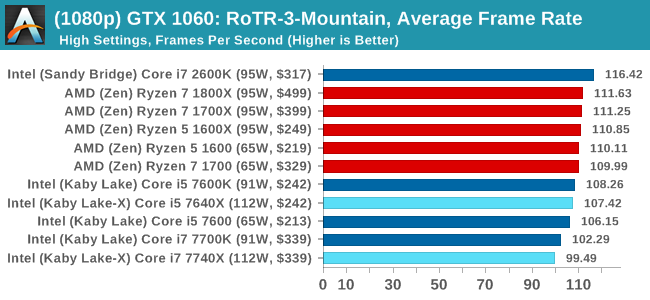

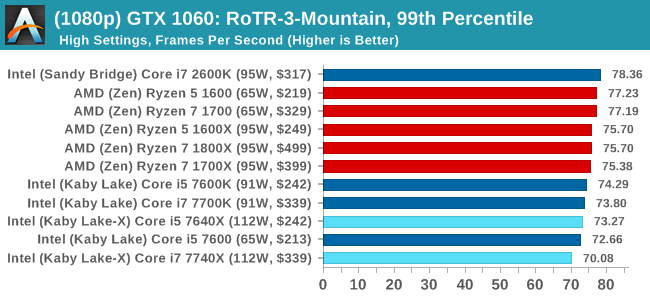

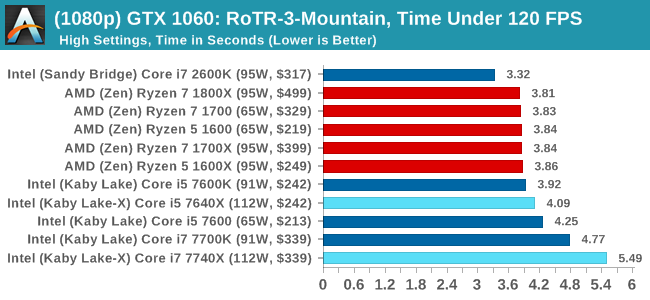

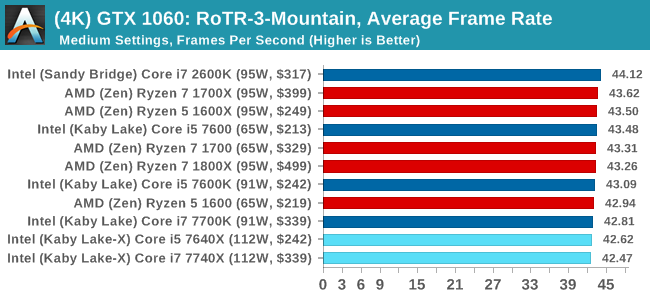

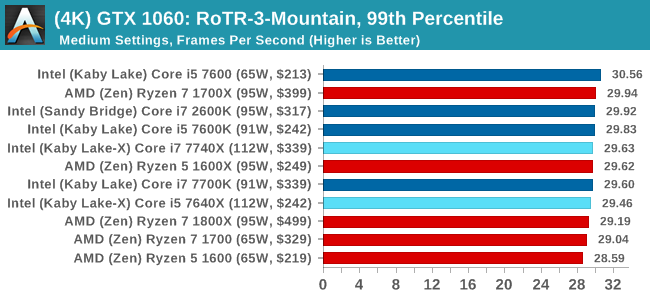

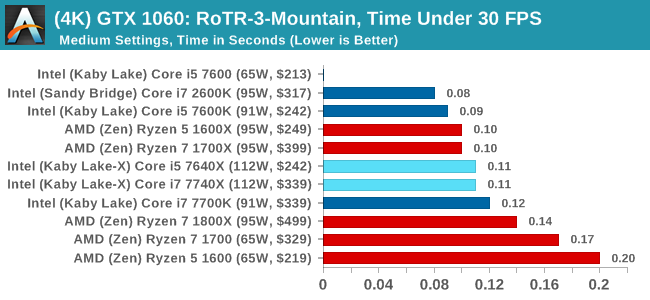

#3 Spine of the Mountain

MSI GTX 1080 Gaming 8G Performance

1080p

4K

ASUS GTX 1060 Strix 6GB Performance

1080p

4K

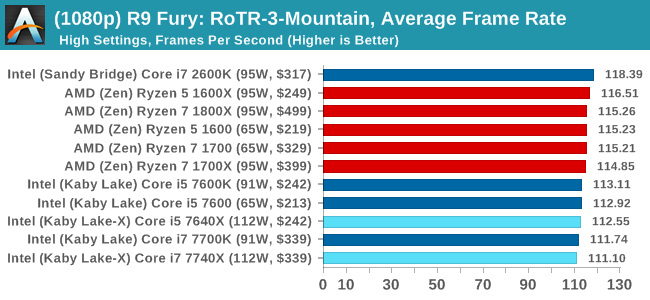

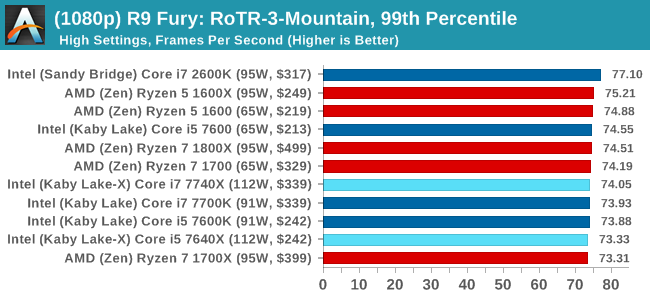

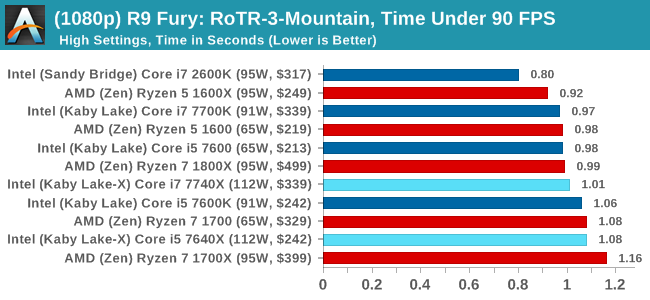

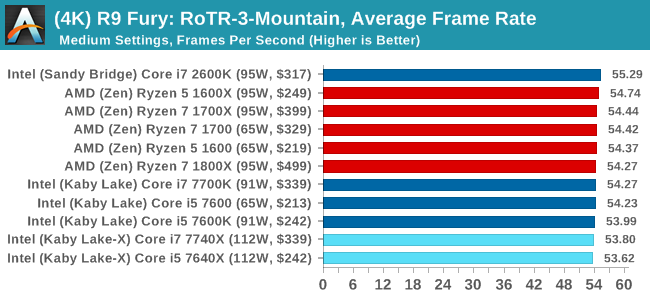

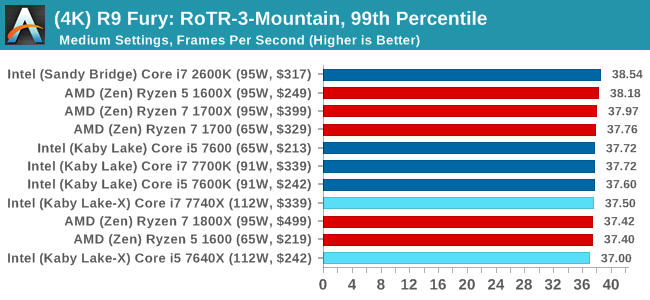

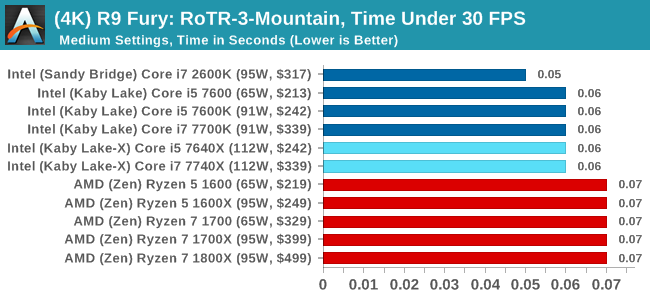

Sapphire R9 Fury 4GB Performance

1080p

4K

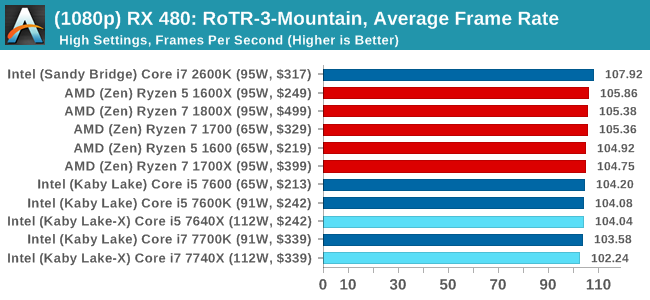

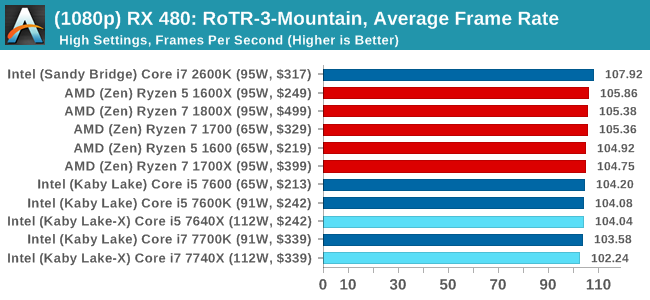

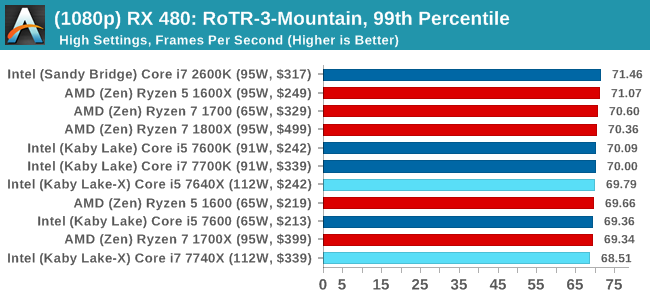

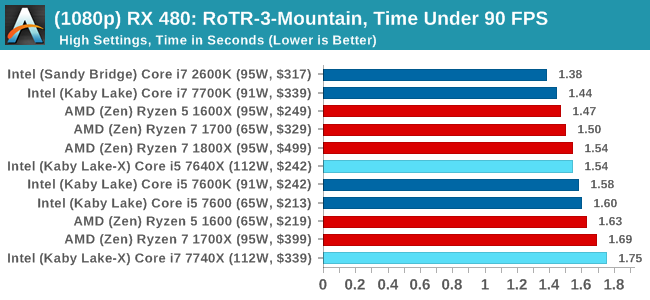

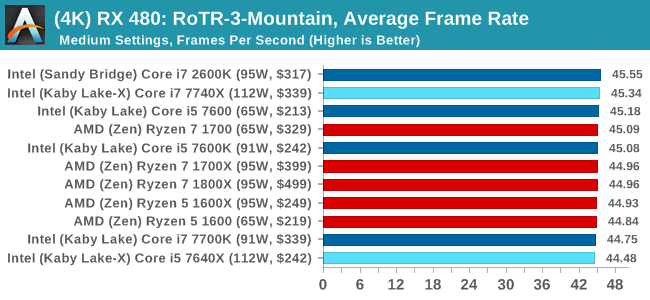

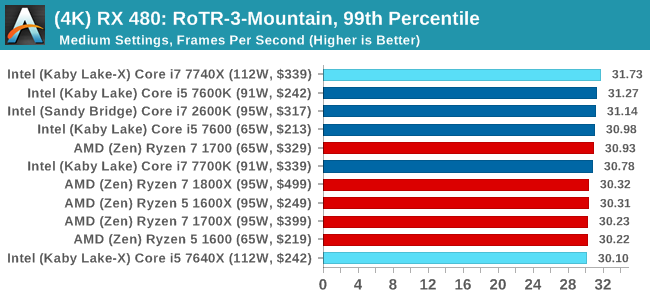

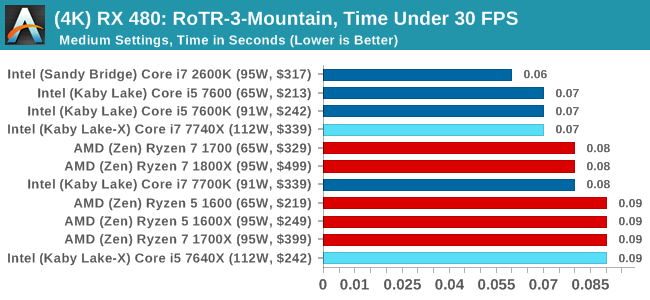

Sapphire RX 480 8GB Performance

1080p

4K

RoTR: Spine of the Mountain Conclusions

Core i5, we're assigning you to run at 1080p with a GTX 1080. That's an order. The rest of you, stand easy.

176 Comments


MTEK - Monday, July 24, 2017 - link

Random amusement: Sandy Bridge got 1st place in the Shadow of Mordor bench w/ a GTX 1060.

shabby - Monday, July 24, 2017 - link

That's funny and sad at the same time unfortunately.

mapesdhs - Monday, July 24, 2017 - link

S'why I love my 5GHz 2700K (daily system). And the other one (gaming PC). And the third (benchmarking rig), the two I've sold to companies, another built for a friend, another set aside to sell, another on a shelf awaiting setup... :D 5GHz every time. M4E, TRUE, one fan, 5 mins, done.

GeorgeH - Monday, July 24, 2017 - link

Those decreased overclocking performance numbers aren't just red flags, they're blinding red flashing lights with the power of a thousand suns.

Seriously, that should have been the entire article - this platform is a disaster if it loses performance under sustained load. That's not hyperbole, it's cold hard truth. Sustained load is part of what HEDT is about, and with X299 you're spending more money for significantly less performance?

I sincerely hope you're going to get to the bottom of this and not just shrug and let it slide away as a mystery. Hopefully it's just platform immaturity that gets ironed out, but at the present time I have absolutely no clue how you could recommend X299 in any way. Significantly less sustained performance is a do not pass go, do not collect $200, turn the car around, oh hell no, all caps showstopper.

deathBOB - Monday, July 24, 2017 - link

But they're big AVX workloads. We know heat and power get a bit crazy with the AVX, and at some point we should just step back and realize that overclocking may not be appropriate for these workloads.

GeorgeH - Monday, July 24, 2017 - link

But other AVX workloads didn't have the issue.

Until we know exactly what is going on and what will be required to fix it, I can't comprehend how anyone can regard X299, at least with the quad core CPUs, as anything but "Nope". Maybe a BIOS update will help, or tuning the overclock, but maybe it'll require new motherboard revisions or delidding the CPU. I'm sure it'll get fixed/understood at some point, but for now recommending this platform is really hard to accept as a good idea.

MrSpadge - Monday, July 24, 2017 - link

> But other AVX workloads didn't have the issue.

Using a few of those instructions is different from hammering the CPU with them. Not sure what this software does, but this could easily explain it.

Icehawk - Monday, July 24, 2017 - link

I do a lot of Handbrake encoding to HEVC which will peg all cores on my O/C'd 3770, it uses AVX but obviously a much older version with less functionality, and I can have it going indefinitely without issue.

I've looked at the 7800\7820 as an upgrade but if they cannot sustain performance with a reasonable cooling setup then there is no point. The KBL-X parts don't offer enough of a performance improvement to be worth the cost of the X299 mobo which also seem to be having teething problems.

Future proofing is laughable, let's say you bought a 7740x today with the thought of upgrading in two years to a higher core count proc - how likely is it that your motherboard and the new proc will have the same pinout? History says it ain't happening at Camp Intel.

At this point I'm giving a hard pass to this generation of Intel products and hope that v2 will fix these issues. By then AMD may have come close enough in ST performance where I would consider them again, I really want the best ST & MT performance I can get in the $350 CPU zone which has traditionally been the top i7. AMD's MT performance almost tempts me to just build an encoding box.

I loved my Athlon back in the day, anyone remember Golden Fingers? :D

mapesdhs - Monday, July 24, 2017 - link

Golden Fingers... I had to look that up, blimey! :D

DrKlahn - Tuesday, July 25, 2017 - link

I recently went from a 4.6GHz 3770K to a 1700X @ 4GHz at home. I play some older games that don't thread well (WoW being one of them). The Ryzen is at least as fast or faster in those workloads. Run Handbrake or Sony Movie Studio and the Ryzen is MUCH faster. We use built 6 core 5820K stations at work for some users and have recently added Ryzen 1600 stations due to the tremendous cost savings. We have yet to run into any tangible difference between the two platforms.

Intel does have a lead in ST, but tests like these emphasize it to the point it seems like a bigger advantage than it is in reality. The only time I could see the premium worth it is if you have a task that needs ST the majority of the time (or a program is simply very poorly optimized for Ryzen). Otherwise AMD is offering an extraordinary value and as you point out AM4 will at least be supported for 2 more spins.