The Intel Optane SSD DC P4800X (375GB) Review: Testing 3D XPoint Performance

by Billy Tallis on April 20, 2017 12:00 PM EST

Test Configurations

So while the Intel SSD DC P4800X is technically launching today, 3D XPoint memory is still in short supply. Only the 375GB add-in card model has been shipped, and only as part of an early limited release program. The U.2 version of the 375GB model and the add-in card 750GB model are planned for a Q2 release, and the U.2 750GB model and the 1.5TB model are expected in the second half of 2017. Intel's biggest enterprise customers, such as the Super Seven, have had access to Optane devices throughout the development process, but broad retail availability is still a little ways off.

Citing the current limited supply, Intel has taken a different approach to review sampling for this product. Their general desire for secrecy regarding the low-level details of 3D XPoint has also likely been a factor. Instead of shipping us the Optane SSD DC P4800X to test on our own system, as is normally the case with our storage testing, this time around Intel has only provided us with remote access to a DC P4800X system housed in their data center. Their Non-Volatile Memory Solutions Group maintains a pool of servers to provide partners and customers with access to the latest storage technologies, and their software partners have been using these systems for months to develop and optimize applications to take advantage of Optane SSDs.

Intel provisioned one of these servers for our exclusive use during the testing period, and equipped it with a 375GB Optane SSD DC P4800X and an 800GB SSD DC P3700 for comparison. The P3700 was the U.2 version of the drive and was connected through a PLX PEX 9733 PCIe switch. The Optane SSD under test was initially going to be a U.2 version connected to the same backplane, but Intel found that the PCIe switch was introducing some inconsistency in the access latency on the order of a microsecond or two, which is a problem when trying to benchmark a drive with ~8µs best-case latency. Intel swapped out the U.2 Optane SSD for an add-in card version that uses PCIe lanes directly from the processor, but the P3700 was still potentially subject to whatever problems the PCIe switch may have caused. Clearly, there's some work to be done to ensure the ecosystem is ready to take full advantage of the performance promised by Optane SSDs, but debugging such issues is beyond the scope of this review.
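To put the switch-induced inconsistency in perspective, the short sketch below expresses one or two microseconds of added latency as a fraction of the roughly 8µs best-case access time quoted above. The function name and the exact 8.0 figure are illustrative assumptions; the review only gives approximate numbers.

```python
def relative_jitter(base_latency_us: float, jitter_us: float) -> float:
    """Return the added latency as a fraction of the base access latency."""
    if base_latency_us <= 0:
        raise ValueError("base latency must be positive")
    return jitter_us / base_latency_us


# Approximate best-case latency from the text (assumed to be exactly 8 us here).
BASE_LATENCY_US = 8.0

for jitter_us in (1.0, 2.0):
    share = relative_jitter(BASE_LATENCY_US, jitter_us)
    print(f"{jitter_us:.0f} us of switch jitter is {share:.1%} "
          f"of an {BASE_LATENCY_US:.0f} us access")
```

Under these assumptions the switch could account for roughly an eighth to a quarter of each measured access, which is why it mattered for the Optane SSD.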

| Intel NSG Marketing Test Server | |
| --- | --- |
| CPU | 2x Intel Xeon E5 2699 v4 |
| Motherboard | Intel S2600WTR2 |
| Chipset | Intel C612 |
| Memory | 256GB total, Kingston DDR4-2133 CL11 16GB modules |
| OS | Ubuntu Linux 16.10, kernel 4.8.0-22 |

The system was running a clean installation of Ubuntu 16.10, with no Intel or Optane-specific software or drivers installed, and the rest of the system configuration was as expected. We had full administrative access to tweak the software to our liking, but chose to leave it mostly in its default state.

Our benchmarks are a variety of synthetic workloads generated and measured using fio version 2.19. There are quite a few operating system and fio options that can be tuned, but we generally left them alone: for example, the NVMe driver wasn't manually switched to polling mode, CPU affinity was not manually set, and nothing was tweaked about power management or CPU turbo clock speeds. There is work underway to switch fio over to using nanosecond-precision time measurement, but it has not reached a usable state yet. Our tests only record latencies in whole-microsecond increments, so mean latencies that report fractional microseconds are just weighted averages of, e.g., how many operations were closer to 8µs than 9µs.
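As a rough illustration of how a fractional mean arises from whole-microsecond measurements, the sketch below averages a latency histogram weighted by operation count. The histogram values are made up for the example and are not taken from our results.

```python
from typing import Mapping


def mean_latency_us(histogram: Mapping[int, int]) -> float:
    """Weighted mean of whole-microsecond latencies.

    `histogram` maps a latency in whole microseconds to the number of
    operations recorded at that latency.
    """
    total_ops = sum(histogram.values())
    if total_ops == 0:
        raise ValueError("histogram contains no operations")
    weighted_sum = sum(latency * count for latency, count in histogram.items())
    return weighted_sum / total_ops


# Hypothetical counts: 600 operations recorded at 8 us, 400 at 9 us.
sample = {8: 600, 9: 400}
print(mean_latency_us(sample))  # 8.4
```

A reported mean of 8.4µs in this scheme says only that more operations landed in the 8µs bucket than the 9µs bucket, not that any single operation was timed at 8.4µs.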

All tests were run directly on the SSD with no intervening filesystem. Real-world applications will almost always be accessing the drive through a filesystem, but will also be benefiting from the operating system's cache in main RAM, which is bypassed with this testing methodology.
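For readers who want to try something similar, the sketch below assembles an fio command line that targets a raw block device and bypasses the page cache. It is not the job configuration used for this review: the device path, job name, I/O engine, block size, and runtime are all assumptions chosen for illustration. The default workload is read-only, since writing to a raw device destroys any data on it.

```python
import shlex
from typing import List


def raw_device_fio_args(device: str,
                        rw: str = "randread",
                        block_size: str = "4k",
                        iodepth: int = 1,
                        threads: int = 1,
                        runtime_s: int = 60) -> List[str]:
    """Build an fio argument list for testing a block device directly."""
    return [
        "fio",
        "--name=raw-device-test",   # hypothetical job name
        f"--filename={device}",     # the block device itself, no filesystem
        "--direct=1",               # O_DIRECT: bypass the OS page cache
        "--ioengine=libaio",        # assumed engine; the review does not say
        f"--rw={rw}",
        f"--bs={block_size}",
        f"--iodepth={iodepth}",
        f"--numjobs={threads}",
        "--thread",                 # run jobs as threads, not processes
        "--time_based",
        f"--runtime={runtime_s}",
        "--group_reporting",
    ]


# /dev/nvme0n1 is a placeholder; substitute the device you intend to test.
args = raw_device_fio_args("/dev/nvme0n1", iodepth=4, threads=4)
print(" ".join(shlex.quote(arg) for arg in args))
```

The function only prints the command rather than running it, so it can be reviewed before being pointed at a real drive.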

To provide an extra point of comparison, we also tested the Micron 9100 MAX 2.4TB on one of our systems, using a Xeon E3 1240 v5 processor. To avoid unfairly disadvantaging the Micron 9100, most of the tests were limited to at most 4 threads. Our test system was running the same Linux kernel as the Intel NSG marketing test server and used a comparable configuration, with the Micron 9100 connected directly to the CPU's PCIe lanes rather than through the PCH.

| AnandTech Enterprise SSD Testbed | |
| --- | --- |
| CPU | Intel Xeon E3 1240 v5 |
| Motherboard | ASRock Fatal1ty E3V5 Performance Gaming/OC |
| Chipset | Intel C232 |
| Memory | 4x 8GB G.SKILL Ripjaws DDR4-2400 CL15 |
| OS | Ubuntu Linux 16.10, kernel 4.8.0-22 |

Because this was not a hands-on test of the Optane SSD on our own equipment, we were unable to conduct any power consumption measurements. Due to the limited time available for testing, we were unable to make any systematic test of write endurance or the impact of extra overprovisioning on performance. We hope to have the opportunity to conduct a full hands-on review later in the year to address these topics.

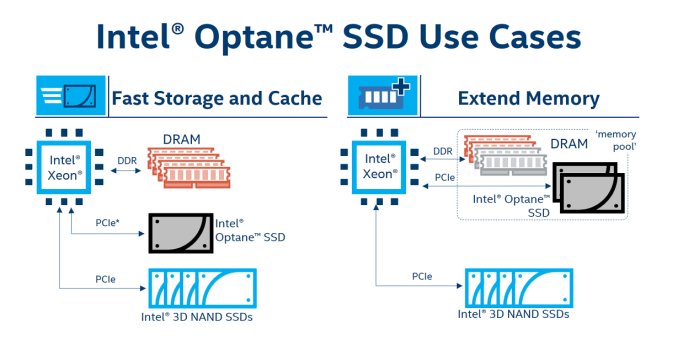

Due to time constraints, we were unable to cover Intel's new Memory Drive Technology software. This is an optional software add-on that can be purchased with the Optane SSD. The Memory Drive Technology software is a minimal virtualization system that presents the Optane SSD to software as if it were RAM. The hypervisor will present to the guest OS a pool of memory equal to the amount of available DRAM plus up to 320GB of the Optane SSD's 375GB capacity. The hypervisor manages the placement of data to automatically cache hot data in DRAM, so applications and the guest OS cannot explicitly address or allocate Optane storage. We may get a chance to look at this in the future, as it offers an interesting look at the ways multi-tiered storage will affect the enterprise market over the next few years.
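The pool-size arithmetic can be sketched as follows. The 320GB cap comes from the description above; using the test server's 256GB of installed DRAM as the "available" figure is an assumption for illustration, since the hypervisor's own memory overhead is not specified.

```python
# Maximum contribution of one 375GB Optane SSD to the pool, per the text.
OPTANE_CONTRIBUTION_CAP_GB = 320


def guest_memory_pool_gb(dram_gb: int,
                         optane_share_gb: int = OPTANE_CONTRIBUTION_CAP_GB) -> int:
    """Size of the memory pool presented to the guest OS, in GB."""
    if dram_gb < 0:
        raise ValueError("DRAM size cannot be negative")
    if not 0 <= optane_share_gb <= OPTANE_CONTRIBUTION_CAP_GB:
        raise ValueError("Optane share must be between 0 and the 320GB cap")
    return dram_gb + optane_share_gb


# Assumes all 256GB of the test server's DRAM counts as available.
print(guest_memory_pool_gb(256))  # 576
```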

117 Comments

lilmoe - Thursday, April 20, 2017 - link

With all the Intel hype and PR, I was expecting the charts to be a bit more, um, flat? Looking at the deltas from start to finish of each benchmark, it looks like the drive has lots of characteristics similar to current flash based SSDs for the same price.

Not impressed. I'll wait for your hands on review before bashing it more.

DrunkenDonkey - Thursday, April 20, 2017 - link

This is what the reviews don't explain and leave people in total darkness. You think your shiny new samsung 960 pro with 2.5g/s will be faster than your dusty old 840 evo barely scratching 500? Yes? Then you are in for a surprise - graphs look great, but check on loading times and real program/game benches and see it is exactly the same. That is why SSD reviews should always either divide to sections for the different usage or explain in great simplicity and detail what you need to look for in a PART of the graph. This one is about 8-10 times faster than your SSD so it IS impressive a lot, but price is equally impressive.

lilmoe - Friday, April 21, 2017 - link

Yes, that's the problem with readers. They're comparing this to the 960 Pro and other M.2 and even SATA drives. Um.... NO. You compare this with similar form factor SSDs with similar price tags and heat sinks.

And no, even QD1 benches aren't that big of a difference.

lilmoe - Friday, April 21, 2017 - link

"And no, even QD1 benches aren't that big of a difference"

This didn't sound right, I meant to say that even QD1 isn't very different **compared to enterprise full PCIe SSDs*** at similar prices.

sor - Friday, April 21, 2017 - link

You're crazy. This thing is great. The current weak spot of NAND is on full display here, and xpoint is decimating it. We all know SSDs chug when you throw a lot of writes at them, all of Anandtech "performance consistency" benchmarks show that iops take a nose dive if you benchmark for more than a few seconds. Xpoint doesn't break a sweat and is orders of magnitude faster.

I'm also pleasantly surprised at the consistency of sequential. A lot of noise was made about their sequential numbers not being as good as the latest SSDs, but one thing not considered is that SSDs don't hit that number until you get to high queue depths. For individual transfers xpoint seems to actually come closer to max performance.

tuxRoller - Friday, April 21, 2017 - link

I think the controllers have a lot to do with the perf.

Its perf profile is eerily similar to the p3700 in too many cases.

Meteor2 - Thursday, April 20, 2017 - link

So... what is a queue depth? And what applications result in short or long QDs?

DrunkenDonkey - Thursday, April 20, 2017 - link

Queue depth is concurrent access to the drive, at the same time.

For desktop/gaming you are looking at 4k random read (95-99% of the time), QD=1

For movie processing you are looking at sequential read/write at QD=1

For light file server you are looking at both higher blocks, say 64k random read and also sequential read, at QD=2/4

For heavy file server you go for QD=8/16

For light database you are looking for QD=4, random read/random write (depends on db type)

For heavy database you are looking for QD=16/more, random read/random write (depends on db type)

Meteor2 - Thursday, April 20, 2017 - link

Thank you!

bcronce - Thursday, April 20, 2017 - link

A heavy file server only has such a small queue depth if using spinning rust, to keep down latency. When using SSDs, file servers have QDs in 64-256 range.