The AMD Zen and Ryzen 7 Review: A Deep Dive on 1800X, 1700X and 1700

by Ian Cutress on March 2, 2017 9:00 AM EST

Fetch

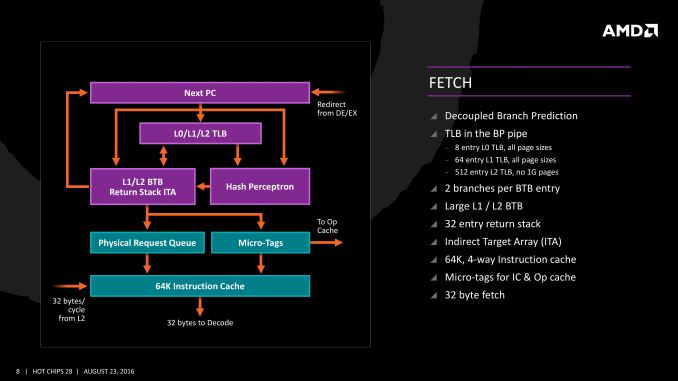

For Zen, AMD has implemented a decoupled branch predictor. This allows the core to speculate on incoming instruction pointers to fill a queue, as well as look for direct and indirect targets. The branch target buffer (BTB) for Zen is described as ‘large’ but with no numbers as of yet; however, there is an L1/L2 hierarchical arrangement for the BTB. For comparison, Bulldozer afforded a 512-entry, 4-way L1 BTB with single-cycle latency, and a 5120-entry, 5-way L2 BTB with additional latency; AMD doesn’t state that Zen’s is larger, just that it is large and supports dual branches. A 32-entry return stack is listed, but the structure for indirect targets is also devoid of entry numbers at this point.
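As a rough illustration of how an L1/L2 BTB hierarchy behaves, here is a minimal Python sketch using the Bulldozer figures quoted above (512-entry, 4-way L1 and 5120-entry, 5-way L2). The class names, the modulo indexing, the LRU replacement and the promote-on-L2-hit policy are all assumptions made for illustration; AMD has not disclosed how either design indexes or replaces entries.

```python
from collections import OrderedDict


class SetAssociativeBTB:
    """Toy branch target buffer: maps a branch address to a predicted target."""

    def __init__(self, entries, ways):
        assert entries % ways == 0
        self.ways = ways
        self.num_sets = entries // ways
        # One LRU-ordered dict per set: branch address -> target address.
        self.sets = [OrderedDict() for _ in range(self.num_sets)]

    def _set_for(self, pc):
        # Assumed indexing: simple modulo on the branch address.
        return self.sets[pc % self.num_sets]

    def lookup(self, pc):
        s = self._set_for(pc)
        if pc in s:
            s.move_to_end(pc)  # mark as most recently used
            return s[pc]
        return None

    def insert(self, pc, target):
        s = self._set_for(pc)
        if pc in s:
            s.move_to_end(pc)
        elif len(s) >= self.ways:
            s.popitem(last=False)  # evict the least recently used entry
        s[pc] = target


class TwoLevelBTB:
    """Small fast L1 backed by a larger, slower L2 (sizes from the Bulldozer figures)."""

    def __init__(self):
        self.l1 = SetAssociativeBTB(entries=512, ways=4)
        self.l2 = SetAssociativeBTB(entries=5120, ways=5)

    def predict(self, pc):
        """Return (target, level); level is 'L1', 'L2', or None on a miss."""
        target = self.l1.lookup(pc)
        if target is not None:
            return target, "L1"
        target = self.l2.lookup(pc)
        if target is not None:
            self.l1.insert(pc, target)  # assumed policy: promote into L1
            return target, "L2"
        return None, None

    def update(self, pc, target):
        """Record a resolved branch in both levels."""
        self.l1.insert(pc, target)
        self.l2.insert(pc, target)
```

In this model a branch that has been pushed out of the small L1 by conflicting entries can still be predicted from the L2, at the cost of the extra latency the text mentions.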

The decoupled branch predictor can also run ahead of instruction fetches and fill the queues based on its internal algorithms. Running too far down a branch that turns out to be mispredicted will incur a power penalty, but successful predictions help with latency and memory parallelism.

The Translation Lookaside Buffer (TLB) in the branch prediction unit caches recent translations of virtual addresses to physical addresses to reduce load latency, and operates in three levels: L0 with 8 entries of any page size, L1 with 64 entries of any page size, and L2 with 512 entries and support for 4K and 2M pages only. The L2 won’t support 1G pages as the L1 can already support 64 of them, and implementing 1G support at the L2 level is a more complex addition (there may also be power/die area benefits to leaving it out).
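The three-level lookup described above can be sketched as follows. This is a toy model under stated assumptions: each level is treated as fully associative with LRU replacement, a hit in a slower level refills the faster ones, and `page_walk` is a hypothetical callback standing in for the page-table walk. Only the entry counts and the L2's lack of 1G support come from the text.

```python
from collections import OrderedDict


class TLBLevel:
    """Toy fully associative TLB level with LRU replacement (an assumption)."""

    def __init__(self, name, entries, rejects=()):
        self.name = name
        self.entries = entries
        self.rejects = set(rejects)  # page sizes this level cannot hold
        self.map = OrderedDict()     # (virtual page, page size) -> physical frame

    def lookup(self, key):
        if key in self.map:
            self.map.move_to_end(key)  # mark as most recently used
            return self.map[key]
        return None

    def fill(self, key, frame):
        if key[1] in self.rejects:
            return False
        if key not in self.map and len(self.map) >= self.entries:
            self.map.popitem(last=False)  # evict the least recently used entry
        self.map[key] = frame
        self.map.move_to_end(key)
        return True


def make_levels():
    # Entry counts follow the text; only the L2 rejects 1G pages.
    return [
        TLBLevel("L0", 8),
        TLBLevel("L1", 64),
        TLBLevel("L2", 512, rejects={"1G"}),
    ]


def translate(levels, vpage, page_size, page_walk):
    """Return (frame, source), where source is the level that hit or 'walk'."""
    key = (vpage, page_size)
    for i, level in enumerate(levels):
        frame = level.lookup(key)
        if frame is not None:
            for upper in levels[:i]:
                upper.fill(key, frame)  # refill the faster levels
            return frame, level.name
    frame = page_walk(vpage)  # hypothetical page-table walk callback
    for level in levels:
        level.fill(key, frame)
    return frame, "walk"
```

A 1G translation in this model lives only in L0 and L1, so once it falls out of the 64-entry L1 the next access has to walk the page tables again.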

When the instruction comes through as a recently used one, it acquires a micro-tag and is sent via the op-cache; otherwise it is placed into the instruction cache for decode. The L1 instruction cache can also accept 32 bytes/cycle from the L2 cache, while other instructions are placed through the load/store unit for another cycle around for execution.

Decode

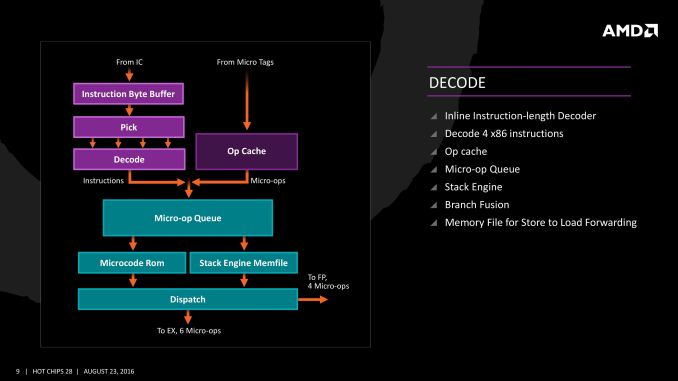

The instruction cache will then send the data through the decoder, which can decode four instructions per cycle. As mentioned previously, the decoder can fuse operations together in a fast-path, such that a single micro-op will go through to the micro-op queue but still represent two instructions; these will be split when hitting the schedulers. The purpose of this is to allow the system to fit more into the micro-op queue and afford a higher throughput when possible.
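To make the queue-occupancy benefit concrete, here is a small Python sketch of fusing at the queue and splitting again at the schedulers. The function names are hypothetical, and the set of fusible pairs is a placeholder: the text does not say which instruction pairs Zen can fuse.

```python
def enqueue(instructions, fusible_pairs):
    """Pack instructions into queue entries, fusing adjacent fusible pairs."""
    queue = []
    i = 0
    while i < len(instructions):
        pair = tuple(instructions[i:i + 2])
        if len(pair) == 2 and pair in fusible_pairs:
            queue.append(pair)  # one queue entry that represents two instructions
            i += 2
        else:
            queue.append((instructions[i],))
            i += 1
    return queue


def split_for_schedulers(queue):
    """Undo the fusion: every instruction reaches the schedulers separately."""
    return [op for entry in queue for op in entry]


# Placeholder pair for illustration only, not a statement about Zen.
EXAMPLE_PAIRS = {("cmp", "jne")}
stream = ["add", "cmp", "jne", "mov"]
queued = enqueue(stream, EXAMPLE_PAIRS)
```

Here four instructions occupy three queue entries, and `split_for_schedulers(queued)` returns the original four in order.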

The new Stack Engine comes into play between the queue and the dispatch, allowing for low-power address generation when the address is already known from previous cycles. This saves the power that would otherwise be spent going through the AGU and cycling back around to the caches.
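The general idea behind a stack engine can be sketched as tracking pushes and pops as a running offset from a known stack pointer, so each stack address is a simple add rather than a full address-generation step. The sketch below shows that general technique only; the function name, the 8-byte slot size and the string-based operation list are assumptions, and nothing here describes AMD's actual implementation.

```python
def resolve_stack_addresses(base_sp, ops, word=8):
    """Resolve push/pop addresses as offsets from a known stack pointer.

    Follows the x86-64 convention: push decrements the stack pointer and
    then stores; pop loads and then increments.
    Returns (addresses touched, final stack pointer).
    """
    offset = 0
    addresses = []
    for op in ops:
        if op == "push":
            offset -= word
            addresses.append(base_sp + offset)
        elif op == "pop":
            addresses.append(base_sp + offset)
            offset += word
        else:
            raise ValueError(f"unsupported stack operation: {op}")
    return addresses, base_sp + offset


touched, final_sp = resolve_stack_addresses(0x1000, ["push", "push", "pop"])
```

For the sequence above, the two pushes write to 0xFF8 and 0xFF0, the pop reads 0xFF0, and the stack pointer ends at 0xFF8.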

Finally, the dispatch unit can send six instructions per cycle, at a maximum rate of 6/cycle to the INT scheduler or 4/cycle to the FP scheduler. We confirmed with AMD that the dispatch unit can simultaneously dispatch to both INT and FP inside the same cycle, which can maximize throughput (the alternative would be to alternate each cycle, which reduces efficiency). We are told that the operations used in Zen for the uOp cache are ‘pretty dense’, and equivalent to x86 operations in most cases.
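The dispatch limits can be expressed as a short Python sketch. The per-cycle caps (six in total, up to six to INT, up to four to FP, both sides in the same cycle) come from the text; the strictly in-order policy that stops at the first operation that cannot go is a simplifying assumption, as are the function names.

```python
def dispatch_cycle(pending, total_limit=6, int_limit=6, fp_limit=4):
    """Dispatch one cycle's worth of ops from a list of 'INT'/'FP' labels.

    Returns (dispatched this cycle, ops still pending).
    """
    limits = {"INT": int_limit, "FP": fp_limit}
    counts = {"INT": 0, "FP": 0}
    dispatched = []
    for kind in pending:
        if len(dispatched) == total_limit or counts[kind] == limits[kind]:
            break  # assumed in-order: stop at the first op that cannot go
        counts[kind] += 1
        dispatched.append(kind)
    return dispatched, pending[len(dispatched):]


def cycles_to_drain(pending):
    """Count how many cycles it takes to dispatch every pending op."""
    cycles = 0
    while pending:
        _, pending = dispatch_cycle(pending)
        cycles += 1
    return cycles
```

With these limits a mix of three INT and three FP operations goes out in a single cycle, while six FP operations need two cycles because of the 4/cycle FP cap.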

574 Comments


Notmyusualid - Saturday, March 4, 2017 - link

Can't disagree with you pal. They look like exceptional value for money.

I, on the other hand, am already on the LGA2011-v3 platform, so I won't be changing, but the main point here is - AMD are back. And we welcome them too.

Alexvrb - Saturday, March 4, 2017 - link

Yeah... if the pricing is as good as rumored for the Ryzen 5, I may pick up a quad-core model. Gives me an upgrade path too, maybe a Ryzen+ hexa or octa-core down the road. For budget builds that Ryzen 3 non-SMT quad-core is going to be hard to argue with though.

wut - Sunday, March 5, 2017 - link

You're really optimistically assuming things.

Kaby Lake Core i5 7400 $170

Ryzen 5 1600X $259

...and single thread benchmark shows Core i5 to be firmly ahead, just as Core i7 is. The story doesn't seem to change much in the mid range.

Meteor2 - Tuesday, March 7, 2017 - link

@wut spot-on. It also seems that Zen on GloFlo 14 nm doesn't clock higher than 4.0 GHz. Zen has lower IPC and lower actual clocks than Intel KBL.

Whichever way you cut it, however many cores in a chip are being considered, in terms of performance, Intel leads. Intel's pricing on >4 core parts is stupid and AMD gives them worthy price competition here. But at 4C and below, Intel still leads. AMD isn't price-competitive here either. No wonder Intel haven't responded to Zen. A small clock bump with Coffee Lake and a slow move to 10 nm starting with Cannon Lake for mobile CPUs (alongside or behind the introduction of 10 nm 'datacentre' chips) is all they need to do over the next year.

After all, if Intel used the same logic as TSMC and GloFlo in naming their process nodes, i.e. using the equivalent nanometre number as if finFETs weren't being used, Intel would say they're on a 10 nm process. They have a clear lead over GloFlo and thus anything AMD can do.

Cooe - Sunday, February 28, 2021 - link

I'm here from the future to tell you that you were wrong about literally everything though. AMD is kicking Intel's ass up and down the block with no end in sight.

Cooe - Sunday, February 28, 2021 - link

Hahahaha. I really fucking hope nobody actually took your "buying advice". The 6-core/12-thread Ryzen 5 1600 was about as fast at 1080p gaming as the 4c/4t i5-7400 ON RELEASE in 2017, and nowadays with modern games/engines it's like TWICE AS FAST.

deltaFx2 - Saturday, March 4, 2017 - link

I think the reviewer you're quoting is Gamers Nexus. He doesn't come across as being a particularly erudite person on matters of computer architecture. He throws a bunch of tests at it, and then spews a few untutored opinions, which may or may not be true. Tom's Hardware does a lot of the same thing, and more, and their opinions are far more nuanced. Although they too could have tried to use an AMD graphics card to see if the problems persist there as well, but perhaps time was the constraint.

There's the other question of whether running the most expensive GPU at 1080p is representative of real-world performance. Gaming, after all, is visual and largely subjective. Will you notice a drop of (say) 10 FPS at 150 FPS? How do you measure goodness of output? Let's contrive something.

All CPUs have bottlenecks, including Intel's. The cases where AMD does better than Intel are where AMD doesn't have the bottlenecks Intel has, but nobody has noticed it before because there wasn't anything else to stack up against it. The question that needs to be answered in the following weeks and months is, are AMD's bottlenecks fixable with (say) a compiler tweak or library change? I'd expect much of it is, but let's see. There was a comment on some forum (can't remember) that said that back when Athlon64 (K8) came out, the gaming community was certain that it was terrible for gaming, and Netburst was the way to go. That opinion changed pretty quickly.

Notmyusualid - Saturday, March 4, 2017 - link

Gamers Nexus seem 'OK' to me. I don't know the site like I do Anandtech, but since Anand missed out the games... I am forced to form my opinions elsewhere. And funny you mention Tom's, they seem to back it up to some degree too, and I know these two sites are cross-owned.

But still, when Anand get around to benching games with Ryzen, only then will I draw my final conclusions.

deltaFx2 - Sunday, March 5, 2017 - link

@ Notmyusualid: I'm sure Gamers Nexus numbers are reasonable. I think they and Tom's (and other reviewers) see a valid bottleneck that I can only guess is software optimization related. The issue with GN was the bizarre and uninformed editorializing. Comments like, the workloads that AMD does well at are not important because they can be accelerated on GPU (not true, but if true, why on earth did GN use it in the first place?). There are other cases where he drops i5s from evaluation for "methodological reasons" but then says R7 == i5. Even based on the tests he ran, this is not true. Anyway, the reddit link goes over this in far more detail than I could (or would).

Meteor2 - Tuesday, March 7, 2017 - link

@DeltaFX2 in what way was GamersNexus' conclusion, that tasks that can be pushed to GPUs should be, incorrect? Are you saying Premiere and Blender can't be used on GPUs?

GN's conclusion was:

"If you’re doing something truly software accelerated and cannot push to the GPU, then AMD is better at the price versus its Intel competition. AMD has done well with its 1800X strictly in this regard. You’ll just have to determine if you ever use software rendering, considering the workhorse that a modern GPU is when OpenCL/CUDA are present. If you know specific instances where CPU acceleration is beneficial to your workflow or pipeline, consider the 1800X."

I think that's very fair and a very good summary of Ryzen.