Ten Year Anniversary of Core 2 Duo and Conroe: Moore’s Law is Dead, Long Live Moore’s Law

by Ian Cutress on July 27, 2016 10:30 AM EST- Posted in

- CPUs

- Intel

- Core 2 Duo

- Conroe

- ITRS

- Nostalgia

- Time To Upgrade

Core: Decoding, and Two Goes Into One

The role of the decoder is to decipher the incoming instruction (opcode, addresses), and translate the 1-15 byte variable length instruction into a fixed-length RISC-like instruction that is easier to schedule and execute: a micro-op. The Core microarchitecture has four decoders – three simple and one complex. The simple decoder can translate instructions into single micro-ops, while the complex decoder can convert one instruction into four micro-ops (and long instructions are handled by a microcode sequencer). It’s worth noting that simple decoders are lower power and have a smaller die area to consider compared to complex decoders. This style of pre-fetch and decode occurs in all modern x86 designs, and by comparison AMD’s K8 design has three complex decoders.

The Core design came with two techniques to assist this part of the core. The first is macro-op fusion. When two common x86 instructions (or macro-ops) can be decoded together, they can be combined to increase throughput, and allows one micro-op to hold two instructions. The grand scheme of this is that four decoders can decode five instructions in one cycle.

According to Intel at the time, for a typical x86 program, 20% of macro-ops can be fused in this way. Now that two instructions are held in one micro-op, further down the pipe this means there is more decode bandwidth for other instructions and less space taken in various buffers and the Out of Order (OoO) queue. Adjusting the pipeline such that 1-in-10 instructions are fused with another instruction should account for an 11% uptick in performance for Core. It’s worth noting that macro-op fusion (and macro-op caches) has become an integral part of Intel’s microarchitecture (and other x86 microarchitectures) as a result.

The second technique is a specific fusion of instructions related to memory addresses rather than registers. An instruction that requires an addition of a register to a memory address, according to RISC rules, would typically require three micro-ops:

| Pseudo-code | Instructions |

| read contents of memory to register2 | MOV EBX, [mem] |

| add register1 to register2 | ADD EBX, EAX |

| store result of register2 back to memory | MOV [mem], EBX |

However, since Banias (after Yonah) and subsequently in Core, the first two of these micro-ops can be fused. This is called micro-op fusion. The pre-decode stage recognizes that these macro-ops can be kept together by using smarter but larger circuitry without lowering the clock frequency. Again, op fusion helps in more ways than one – more throughput, less pressure on buffers, higher efficiency and better performance. Alongside this simple example of memory address addition, micro-op fusion can play heavily in SSE/SSE2 operations as well. This is primarily where Core had an advantage over AMD’s K8.

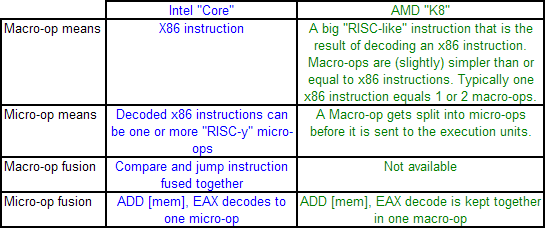

AMD’s definitions of macro-ops and micro-ops differ to that of Intel, which makes it a little confusing when comparing the two:

However, as mentioned above, AMD’s K8 has three complex decoders compared to Core’s 3 simple + 1 complex decoder arrangement. We also mentioned that simple decoders are smaller, use less power, and spit out one Intel micro-op per incoming variable length instruction. AMD K8 decoders on the other hand are dual purpose: it can implement Direct Path decoding, which is kind of like Intel’s simple decoder, or Vector decoding, which is kind of like Intel’s complex decoder. In almost all circumstances, the Direct Path is preferred as it produces fewer ops, and it turns out most instructions go down the Direct Path anyway, including floating point and SSE instructions in K8, resulting in fewer instructions over K7.

While extremely powerful in what they do, AMD’s limitation for K8, compared to Intel’s Core, is two-fold. AMD cannot perform Intel’s version of macro-op fusion, and so where Intel can pack one fused instruction to increase decode throughput such as the load and execute operations in SSE, AMD has to rely on two instructions. The next factor is that by virtue of having more decoders (4 vs 3), Intel can decode more per cycle, which expands with macro-op fusion – where Intel can decode five instructions per cycle, AMD is limited to just three.

As Johan pointed out in the original article, this makes it hard for AMD’s K8 to have had an advantage here. It would require three instructions to be fetched for the complex decoder on Intel, but not kick in the microcode sequencer. Since the most frequent x86 instructions map to one Intel micro-op, this situation is pretty unlikely.

158 Comments

View All Comments

bcronce - Wednesday, July 27, 2016 - link

My AMD 2500+XP lasted me until a Nahalem i7 2.66ghz. It was a slight.... upgradeartk2219 - Friday, July 29, 2016 - link

Very minor, im sure you barely noticed :).jjpcat@hotmail.com - Wednesday, July 27, 2016 - link

I have a Q6600 in my household and it's still running well.In term on performance, E6400 is about the same as the CPUs (e.g. z3735f/z3745f) used in nearly all cloudbook these days.

Michael Bay - Thursday, July 28, 2016 - link

Yep, I was surprised at that when looking through the benchmarks. Turns out Atom is not so slow after all.stardude82 - Wednesday, July 27, 2016 - link

I've just finished decommissioning all my Core 2 Duo parts, several of which have been upgraded with 2nd hand Sandy Bridge components.Yeah, CPU performance has been relatively stagnant. CPUs have come to where commercial jets are now in their technological development. Jets now fly slower than they did the 1960s, but have much better fuel economy per seat.

Not noted in the E6400 v. i5-6600 comparison is that they both have the same TDP which is pretty impressive. Also, you've got to take inflation into account which would bring the CPU price up to $256 or there about, enough for a i5-6600K.

ScottAD - Wednesday, July 27, 2016 - link

One could argue that while Core put Intel on top of the heap again, Sandy Bridge was a more important shift in design and as a result, many users went from Conroe to Sandy Bridge and have stayed there.That pretty much defines my PC currently. Haven't needed to upgrade. Crazy a decade like nothing.

ianmills - Wednesday, July 27, 2016 - link

When a website has trouble keeping up with current content and instead recycles decades old content.... things that make you go hmm...Ian Cutress - Wednesday, July 27, 2016 - link

I'm the CPU editor, we've been up to date for every major CPU launch for the last couple of years, sourcing units that Intel haven't sourced other websites and have done comprehensive and extensive reviews of every leading x86 development. We have had every Haswell-K (2), Haswell-E(3) Broadwell (2), Broadwell E3 Xeon (3), Broadwell-E (4) and Skylake-K (2) CPU tested and reviewed on each official day of launch. We have covered Kaveri and Carrizo in deep repeated detail over the last few years as well.This is an important chip and today marks in an important milestone.

Hmm...?

smilingcrow - Wednesday, July 27, 2016 - link

Ananand do CPUs very well, can't think of anyone better. Kudos and thanks to you 'guys'."This primarily leaves ARM (who was recently acquired by Softbank)"

They are under offer so not guaranteed to go through and ARM isn't a person. :)

ianmills - Wednesday, July 27, 2016 - link

I agree you do a good job with CPU's. Its some of the other topics that this site has been slowed down in when compared to previous years