Ten Year Anniversary of Core 2 Duo and Conroe: Moore’s Law is Dead, Long Live Moore’s Law

by Ian Cutress on July 27, 2016 10:30 AM EST

Posted in: CPUs, Intel, Core 2 Duo, Conroe, ITRS, Nostalgia, Time To Upgrade

Core: Decoding, and Two Goes Into One

The role of the decoder is to decipher the incoming instruction (opcode, addresses) and translate the 1-15 byte variable-length instruction into a fixed-length, RISC-like instruction that is easier to schedule and execute: a micro-op. The Core microarchitecture has four decoders: three simple and one complex. A simple decoder translates an instruction into a single micro-op, while the complex decoder can convert one instruction into up to four micro-ops (longer instructions are handled by a microcode sequencer). It’s worth noting that simple decoders draw less power and take up less die area than complex decoders. This style of pre-fetch and decode occurs in all modern x86 designs; by comparison, AMD’s K8 design has three complex decoders.
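To make the three-simple-plus-one-complex arrangement concrete, here is a minimal sketch of how such a decoder group might chew through an instruction stream. It is a toy model, not Intel's implementation: the function name `decode_cycles`, the in-order slot assignment, and the rule that a microcoded instruction takes a cycle of its own are all simplifying assumptions made for illustration.

```python
# Toy model of a 3-simple + 1-complex decoder group (simplifying assumptions).
MAX_COMPLEX_UOPS = 4   # complex decoder: up to four micro-ops per instruction
NUM_SIMPLE = 3         # simple decoders: exactly one micro-op each


def decode_cycles(uop_counts):
    """Group instructions into decode cycles.

    uop_counts: micro-ops produced by each instruction, in program order.
    Assumptions (not from the article): decoding is strictly in order, the
    first instruction of a cycle takes the complex decoder, and anything
    above four micro-ops goes to the microcode sequencer for its own cycle.
    Returns a list of cycles; each cycle is a list of (slot, uop_count).
    """
    cycles = []
    i = 0
    while i < len(uop_counts):
        n = uop_counts[i]
        if n > MAX_COMPLEX_UOPS:
            cycles.append([("microcode", n)])
            i += 1
            continue
        cycle = [("complex", n)]
        i += 1
        # Fill the simple decoders with following single-micro-op instructions.
        while (len(cycle) - 1 < NUM_SIMPLE and i < len(uop_counts)
               and uop_counts[i] == 1):
            cycle.append(("simple", 1))
            i += 1
        cycles.append(cycle)
    return cycles


# Four single-micro-op instructions fit in one cycle; two 2-micro-op
# instructions back to back need two, because only one decoder is complex.
print(len(decode_cycles([1, 1, 1, 1])), len(decode_cycles([2, 2])))
```

Under these assumptions, a stream made up mostly of single-micro-op instructions keeps all four decoders busy, which is why trading complex decoders for cheaper simple ones costs little in practice.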

The Core design came with two techniques to assist this part of the core. The first is macro-op fusion. When two common x86 instructions (or macro-ops) can be decoded together, they are combined into a single micro-op, which increases throughput. The upshot is that four decoders can decode five instructions in one cycle.
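As a rough illustration of how five instructions can fit into four decoder slots, the sketch below pairs a compare or test with a conditional jump that directly follows it. The pairing rule, the mnemonic sets and the function name `fuse_macro_ops` are assumptions chosen for the example; the text above does not spell out which instruction pairs Core actually fuses.

```python
# Hypothetical pairing rule: a compare/test followed directly by a conditional jump.
FUSIBLE_FIRST = {"cmp", "test"}
COND_JUMPS = {"je", "jne", "jl", "jle", "jg", "jge", "jb", "jbe", "ja", "jae"}


def fuse_macro_ops(mnemonics):
    """Merge adjacent fusible pairs; return one tuple per decoder slot used."""
    slots = []
    i = 0
    while i < len(mnemonics):
        if (i + 1 < len(mnemonics)
                and mnemonics[i] in FUSIBLE_FIRST
                and mnemonics[i + 1] in COND_JUMPS):
            slots.append((mnemonics[i], mnemonics[i + 1]))  # two instructions, one slot
            i += 2
        else:
            slots.append((mnemonics[i],))
            i += 1
    return slots


stream = ["mov", "add", "cmp", "jne", "mov"]
slots = fuse_macro_ops(stream)
print(len(stream), "instructions ->", len(slots), "decoder slots")
# prints: 5 instructions -> 4 decoder slots
```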

According to Intel at the time, for a typical x86 program, 20% of macro-ops can be fused in this way. Because two instructions are now held in one micro-op, there is more decode bandwidth for other instructions, and further down the pipe less space is taken in the various buffers and the Out of Order (OoO) queue. If 20% of macro-ops pair up, one instruction in ten is folded into its neighbor, so the same work needs only 90% of the ops; that should account for an 11% uptick in performance for Core. It’s worth noting that macro-op fusion (and micro-op caches) has since become an integral part of Intel’s microarchitectures (and other x86 microarchitectures).
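The step from 20% fusible macro-ops to an 11% uptick is simple arithmetic, sketched below. The helper `fusion_speedup` is a name invented here, and the calculation assumes decode bandwidth is the only bottleneck, which real workloads will not satisfy exactly.

```python
def fusion_speedup(fused_share):
    """Idealized throughput gain from macro-op fusion.

    fused_share: fraction of macro-ops that take part in a fused pair.
    Assumption: performance scales purely with the number of ops decoded.
    """
    eliminated = fused_share / 2     # each fused pair turns two ops into one
    remaining = 1.0 - eliminated     # ops left for the same amount of work
    return 1.0 / remaining


speedup = fusion_speedup(0.20)
print(f"{(speedup - 1) * 100:.0f}% uptick")
# prints: 11% uptick
```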

The second technique is a specific fusion of instructions that operate on memory addresses rather than registers. An instruction that adds a register to a value in memory would, according to RISC rules, typically require three micro-ops:

| Pseudo-code | Instructions |
| --- | --- |
| read contents of memory to register2 | MOV EBX, [mem] |
| add register1 to register2 | ADD EBX, EAX |
| store result of register2 back to memory | MOV [mem], EBX |

However, since Banias (the Pentium M core that preceded Yonah) and subsequently in Core, the first two of these micro-ops can be fused. This is called micro-op fusion. The pre-decode stage recognizes that these micro-ops can be kept together, using smarter but larger circuitry without lowering the clock frequency. Again, op fusion helps in more ways than one: more throughput, less pressure on buffers, higher efficiency and better performance. Beyond this simple example of adding to a memory address, micro-op fusion also plays heavily in SSE/SSE2 operations. This is primarily where Core had an advantage over AMD’s K8.
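The buffer-pressure benefit can be sketched with the three micro-ops from the table. This is an illustrative model only: the `MicroOp` type, the `kind` labels and the rule "a load directly followed by an ALU op shares one entry" are assumptions standing in for whatever the real hardware checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MicroOp:
    kind: str   # "load", "alu" or "store" (labels invented for this sketch)
    text: str   # the instruction from the table, for readability


def fuse_load_op(uops):
    """Let each load that is directly followed by an ALU op share one entry."""
    entries = []
    i = 0
    while i < len(uops):
        if (i + 1 < len(uops)
                and uops[i].kind == "load"
                and uops[i + 1].kind == "alu"):
            entries.append((uops[i], uops[i + 1]))  # fused: one buffer entry
            i += 2
        else:
            entries.append((uops[i],))
            i += 1
    return entries


add_to_memory = [
    MicroOp("load", "MOV EBX, [mem]"),
    MicroOp("alu", "ADD EBX, EAX"),
    MicroOp("store", "MOV [mem], EBX"),
]
entries = fuse_load_op(add_to_memory)
print(len(add_to_memory), "micro-ops ->", len(entries), "buffer entries")
# prints: 3 micro-ops -> 2 buffer entries
```

In this model the fused entry still carries both operations; the saving is in how many slots the pair occupies on its way through the pipeline.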

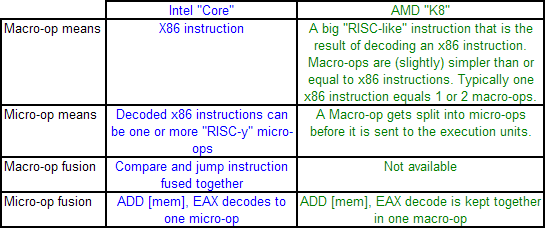

AMD’s definitions of macro-ops and micro-ops differ from Intel’s, which makes it a little confusing when comparing the two.

However, as mentioned above, AMD’s K8 has three complex decoders compared to Core’s arrangement of three simple decoders plus one complex decoder. We also mentioned that simple decoders are smaller, use less power, and spit out one Intel micro-op per incoming variable-length instruction. AMD’s K8 decoders, on the other hand, are dual purpose: each can implement Direct Path decoding, which is kind of like Intel’s simple decoder, or Vector decoding, which is kind of like Intel’s complex decoder. In almost all circumstances the Direct Path is preferred as it produces fewer ops, and it turns out most instructions go down the Direct Path anyway, including floating point and SSE instructions in K8, resulting in fewer instructions than on K7.

While extremely powerful in what they do, AMD’s decoders in K8 have a two-fold limitation compared to Intel’s Core. First, AMD cannot perform Intel’s version of macro-op fusion, so where Intel can pack one fused instruction to increase decode throughput, such as the load and execute operations in SSE, AMD has to rely on two instructions. Second, by virtue of having more decoders (4 vs 3), Intel can decode more per cycle, and the gap widens with macro-op fusion: where Intel can decode five instructions per cycle, AMD is limited to just three.

As Johan pointed out in the original article, this makes it hard for AMD’s K8 to have had an advantage here. For K8 to come out ahead, three instructions that all need Intel’s complex decoder, but none of which kick in the microcode sequencer, would have to be fetched together. Since the most frequent x86 instructions map to one Intel micro-op, this situation is pretty unlikely.

158 Comments


Icehawk - Wednesday, July 27, 2016

I replaced my C2D a couple of years ago only because it needed yet another mobo and PSU and I do like shiny things, I'd bet if it was still around I could pop in my old 660GTX and run most games just fine at 1080. At work there are some C2Ds still kicking around... and a P4 w XP! Of course a lot of larger businesses have legacy gear & apps but it made me chuckle when I saw the P4.

With the plateau in needed performance on the average desktop there just isn't much reason to upgrade these days other than video card if you are a gamer. Same thing with phones and tablets - why aren't iPads selling? Everyone got one and doesn't see a need to upgrade! My wife has an original iPad and it works just fine for what she uses it for so why spend $600 on a new one?

zepi - Wednesday, July 27, 2016

You are not mentioning FPGA's and non-volatile memory revolution which could very well be coming soon (not just flash, but x-point and other similar stuff).

Personally I see FPGAs as a clear use for all the transistors we might want to give them.

Program stuff, let it run through a compiler-profiler and let its adaptive cloud trained AI create an optimal "core" for your most performance intensive code. This recipe is then baked together with the executable, which will get programmed near-realtime to the FPGA portion of the SOC you are using. Only to be reprogrammed when you "alt-tab" to another program.

Obviously we'll still need massively parallel "GPU" portion in chip, ASIC-blocks for H265 encode / decode with 8K 120Hz HDR support, encryption / decryption + other similar ASIC usages and 2-6 "XYZlake" CPU's. Rest of the chip will be FPGA with ever more intelligent libraries + compilers + profilers used to determine at software compile time the optimal recipe for the FPGA programming.

Not to mention the paradigm changes that non-volatile fast memory (x-point and future competitors) could bring.

wumpus - Thursday, August 4, 2016

FPGAs are old hat. Granted, it might be nice if they could replace maybe half of their 6T SRAM waste (probably routing definitions, although they might get away with 4T), but certainly the look-up needs to be 6T SRAM. I'd claim that the non-volatile revolution happened in FPGAs (mainly off chip) at least 10 years ago.

But at least they can take advantage of the new processes. So don't count them out.

lakerssuperman - Wednesday, July 27, 2016

I'm reading this from my old Sony laptop with a Core 2 Duo and Nvidia GPU in it. With an SSD added in, the basic task performance is virtually indistinguishable from my other computers with much newer and more powerful CPU's. Granted it can get loud when under load, but the Core 2 era was still a ways away from the new mobile focused Intel we have now.

I guess my basic point is that I got this laptop in 2009 and for regular browsing tasks etc, it is still more than adequate which is a testament to both the quality and longevity of the Core 2 family, but where we are with CPU power in general. Good stuff.

jeffry - Monday, August 1, 2016

I agree. Got me a Sony SZ1m in 2007 (i think?), flip switched the core duo yonah with a core2duo T7200 merom. Because its 64 bit and now i can run 64 bit os and 64 bit software on it.

boozed - Wednesday, July 27, 2016

Funny to think that despite four process shrinks, there's been minimal power and clock improvement since then.

UtilityMax - Wednesday, July 27, 2016

To some of you it may sound like a surprise, but a Core2Duo desktop can still be fairly usable as a media consumption device running Windows 10. I am friends with a couple who are financially struggling graduate students. The other day, they brought an ancient Gateway PC with LCD from work, and they were wondering if they could rebuild it into a PC for their kid. The specs were 2GB of memory and Pentium E2180 CPU. I found inside of a box of ancient computer parts which I never throw away an old Radeon graphics card and a 802.11n USB adapter. I told them to buy a Core2Duo E4500 processor online because it cost just E4500. After installing Windows 10, the PC runs fairly smoothly. Good enough for web browsing and video streaming. I could even load some older games like Quake 3 and UrbanTerror 4.2 and play them with no glitch.

UtilityMax - Wednesday, July 27, 2016

I mean, the E4500 cost just 5 bucks..

DonMiguel85 - Wednesday, July 27, 2016

Still using a Core 2 Quad 9550. It bottlenecks most modern games with my GTX 960, but can still run DOOM at 60FPS.

metayoshi - Wednesday, July 27, 2016

Wow. Actually, just last holiday season, I replaced my parents' old P4 system (with 512 MB RAM! and 250 GB SATA Maxtor HDD!) with my old Core i7-860 since I upgraded to a system with a Core i7-4790K that I got on a black friday sale. The old 860 could definitely still run well for everyday tasks and even gaming, so it was more than good enough for my parents, but the video processing capabilities of the more recent chips are a lot better, which is the main reason I updated. Also, the single threaded performance was amazing for the 4790K, and the Dolphin emulator did not run so well on my 860, so there was that.

Speaking of Core 2, though, I owned an ASUS UL30Vt with the Core 2 Duo SU7300 CPU and an Nvidia GeForce G 210M. While the weak GPU was not so great for high end gaming, the overall laptop was amazing. It was more than powerful enough for everyday tasks, and had amazing battery life. It was pretty much what every ultrabook today desires to be: sleek, slim, but powerful enough with great battery life. That laptop to me was the highlight of the Core 2 era. I was kind of sad to let it go when I upgraded to a more powerful laptop with an Ivy Bridge CPU and 640M LE GPU. I don't think any laptop I owned gave me as much satisfaction as that old Asus. Good times.