The AMD Radeon RX 480 Preview: Polaris Makes Its Mainstream Mark

by Ryan Smith on June 29, 2016 9:00 AM EST

The Polaris Architecture: In Brief

For today’s preview I’m going to quickly hit the highlights of the Polaris architecture.

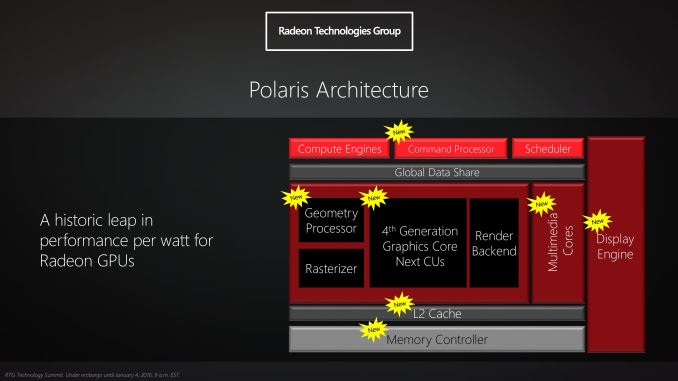

In their announcement of the architecture this year, AMD laid out a basic overview of what components of the GPU would see major updates with Polaris. Polaris is not a complete overhaul of past AMD designs, but AMD has combined targeted performance upgrades with a chip-wide energy efficiency upgrade. As a result Polaris is a mix of old and new, and a lot more efficient in the process.

At its heart, Polaris is based on AMD’s 4th generation Graphics Core Next architecture (GCN 4). GCN 4 is not significantly different from GCN 1.2 (Tonga/Fiji), and in fact GCN 4’s ISA is identical to that of GCN 1.2. So everything we see here today comes not from broad, architectural changes, but from low-level microarchitectural changes that improve how instructions execute under the hood.

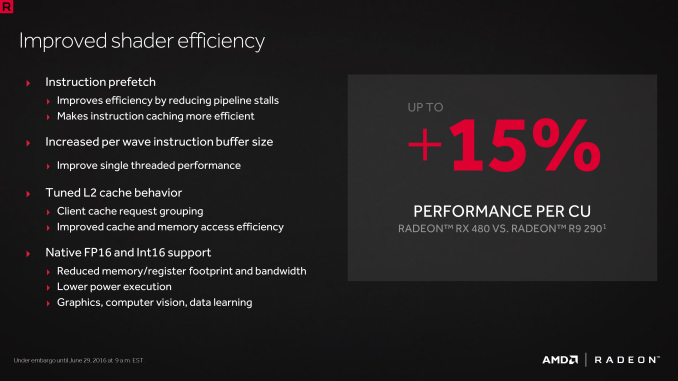

Overall AMD is claiming that GCN 4 (via RX 480) offers a 15% improvement in shader efficiency over GCN 1.1 (R9 290). This comes from two changes: instruction prefetching and a larger instruction buffer. In the case of the former, GCN 4 can, with the driver’s assistance, attempt to prefetch future instructions, something GCN 1.x could not do. When done correctly, this reduces or eliminates the need for a wave to stall while waiting on an instruction fetch, keeping the CU fed and active more often. Meanwhile the per-wave instruction buffer (which is separate from the register file) has been increased from 12 DWORDs to 16 DWORDs, allowing more instructions to be buffered and, according to AMD, improving single-threaded performance.

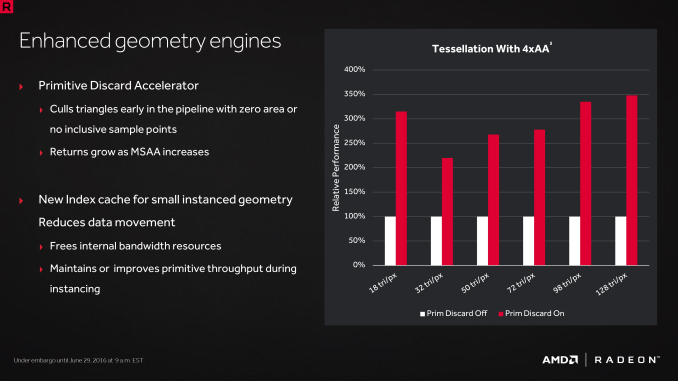

Outside of the shader cores themselves, AMD has also made enhancements to the graphics front-end for Polaris. AMD’s latest architecture integrates what AMD calls a Primitive Discard Accelerator. True to its name, the job of the discard accelerator is to remove (cull) triangles that are too small to be used, and to do so early enough in the rendering pipeline that the rest of the GPU is spared from having to deal with these unnecessary triangles. Degenerate triangles are culled before they even hit the vertex shader, while small triangles are culled a bit later, after the vertex shader but before they hit the rasterizer. There’s no visual quality impact to this (only triangles that can’t be seen/rendered are culled), and as claimed by AMD, the benefits of the discard accelerator increase with MSAA levels, as MSAA otherwise exacerbates the small triangle problem.
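To make the two culling stages a bit more concrete, here is a minimal software sketch of the idea. It is not AMD's hardware algorithm, which is not described in detail here; the function names, the repeated-index test for degenerate triangles, and the conservative bounding-box test against pixel-center sample points are all assumptions chosen for illustration.

```python
import math


def is_degenerate(i0, i1, i2):
    """Index-only test (assumed): a triangle that repeats a vertex index
    has zero area, so it can be dropped before any vertex is shaded."""
    return i0 == i1 or i1 == i2 or i0 == i2


def covers_no_sample(tri, samples=((0.5, 0.5),)):
    """Conservative post-transform test (assumed): if the triangle's
    screen-space bounding box contains no sample point, the triangle
    cannot produce any fragments and is safe to cull.

    tri     -- three (x, y) screen-space positions
    samples -- per-pixel sample offsets; one pixel-center sample by default
    """
    xs = [p[0] for p in tri]
    ys = [p[1] for p in tri]
    min_x, max_x = min(xs), max(xs)
    min_y, max_y = min(ys), max(ys)
    # Visit every pixel the bounding box touches and look for a sample inside it.
    for px in range(math.floor(min_x), math.floor(max_x) + 1):
        for py in range(math.floor(min_y), math.floor(max_y) + 1):
            for sx, sy in samples:
                if min_x <= px + sx <= max_x and min_y <= py + sy <= max_y:
                    return False
    return True


tiny = [(10.1, 10.1), (10.3, 10.1), (10.1, 10.3)]    # sits between pixel centers
large = [(10.0, 10.0), (12.0, 10.0), (10.0, 12.0)]   # spans several pixel centers
print(is_degenerate(3, 3, 7), covers_no_sample(tiny), covers_no_sample(large))
```

The first test needs only index data, which is why it can run ahead of the vertex shader, while the second needs transformed positions and so has to run after it.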

Along these lines, Polaris also implements a new index cache, again meant to improve geometry performance. The index cache is designed specifically to accelerate geometry instancing performance, allowing small instanced geometry to stay close by in the cache, avoiding the power and bandwidth costs of shuffling this data around to other caches and VRAM.

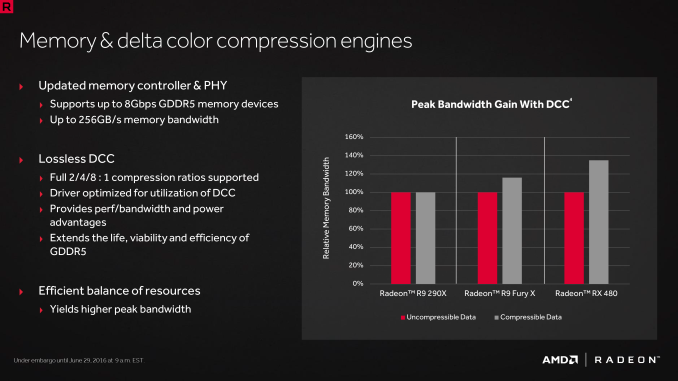

Finally, at the back-end of the GPU, the ROP/L2/Memory controller partitions have also received their own updates. Chief among these is that Polaris implements the next generation of AMD’s delta color compression technology, which uses pattern matching to reduce the size and resulting memory bandwidth needs of frame buffers and render targets. Because less data has to be moved, color compression delivers a de facto increase in available memory bandwidth and a decrease in power consumption, at least so long as the buffer is compressible. With Polaris, AMD supports a larger pattern library to better compress more buffers more often, improving on GCN 1.2 color compression by around 17%.
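The general principle behind delta compression can be shown with a toy example. This is only a sketch of the concept: AMD's actual patterns and block formats are not described here, and the single-channel blocks, the 4-bit delta width, and the function names below are assumptions made for illustration.

```python
def compress_block(pixels, delta_bits=4):
    """Store the first pixel as a base plus small signed deltas.

    Returns (base, deltas) when every delta fits in `delta_bits` bits,
    otherwise None, meaning the block has to stay uncompressed.
    """
    base = pixels[0]
    lo = -(1 << (delta_bits - 1))
    hi = (1 << (delta_bits - 1)) - 1
    deltas = [p - base for p in pixels[1:]]
    if all(lo <= d <= hi for d in deltas):
        return base, deltas
    return None


def decompress_block(base, deltas):
    """Rebuild the original pixel values exactly (the scheme is lossless)."""
    return [base] + [base + d for d in deltas]


def compressed_bits(n_pixels, pixel_bits=8, delta_bits=4):
    """Bits needed for one full-width base value plus the deltas."""
    return pixel_bits + (n_pixels - 1) * delta_bits


smooth = [100, 101, 99, 102, 100, 100, 98, 103]   # gentle gradient: compressible
noisy = [0, 255, 0, 255]                          # high contrast: not compressible

packed = compress_block(smooth)
print(packed, compressed_bits(len(smooth)), "bits vs", 8 * len(smooth), "raw")
print(compress_block(noisy))
```

In this toy scheme a smooth block shrinks while a noisy one falls back to its raw form, which is the sense in which the bandwidth savings depend on the buffer being compressible.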

Otherwise we’ve already covered the increased L2 cache size, which is now at 2MB. Paired with this is AMD’s latest generation memory controller, which can now officially go to 8Gbps, and even a bit more than that when overclocking.
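As a quick back-of-the-envelope check on what that data rate means, peak memory bandwidth is the per-pin rate multiplied by the bus width. The 256-bit bus width used below is the RX 480's published specification rather than something stated on this page, so treat it as an assumption, and the function name is purely illustrative.

```python
def peak_bandwidth_gb_s(data_rate_gbps, bus_width_bits):
    """Theoretical peak bandwidth in GB/s.

    data_rate_gbps -- per-pin data rate in gigabits per second
    bus_width_bits -- memory bus width in bits (one data pin per bit)
    """
    return data_rate_gbps * bus_width_bits / 8  # 8 bits per byte


# Assumed 256-bit bus at the officially supported 8Gbps
print(peak_bandwidth_gb_s(8, 256), "GB/s")
```

This is a theoretical ceiling; delivered bandwidth will be lower, and color compression effectively stretches whatever is available.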

449 Comments


D1v1n3D - Friday, July 1, 2016 - link

I think it is funny: did all these Nvidia people forget what the 970 landed on cost-wise, and how it then limped along with 3.5GB of RAM? AMD has many more models to come, for instance the GDDR5X models and the HBM2 models. AMD is just bringing in cash flow off a very capable mid-range card. I will be buying one for my mini-ITX that is currently running a 1GB 6950 HD. Talk about an upgrade, and at such an amazing value, and allowing me to not stress my 450W PSU. You guys and your unrealistic CrossFire: the latency alone would drive me up the wall, too much fluctuation from so many sources, GPU x 2 and CPU. Maybe when it's two HBM2 cards crossed the latency will be nonexistent. Currently waiting for an FM2+ refresh; I sure hope they have one more before FM3/+ boards come out.

monohouse - Sunday, July 3, 2016 - link

it's a prayr-view, pray for AMD !

monohouse - Sunday, July 3, 2016 - link

"Wise gamers" ? is such a thing even exists ?, do you know I play Doom 2 on a GTX 780 Ti ? so what ? more graphic resources are never a bad thing, I also play many DX9 games on windows XP so what ? a technologically fast video card benefits not only the bloat-filled spyware-infested windows 10, it's also good for accelerating old software (that is, if you can make it work / are given drivers to make it work)

monohouse - Sunday, July 3, 2016 - link

but now that you mention smart gamers ? this is how I see it: there are 2 types of gamers:

the first type are the ones that don't know what they are going to run on the system, their load is variable and they jump from one game to another, never knowing which game they will run next because they wait for its release

and the second are the ones that do know what they will run ahead of time and will not run anything but what they know

I classify them as static and dynamic types of gamers, so the RX 480, is it good or not good ? the question in my opinion is more complex than it seems, because I believe that there is more to video cards than just performance, watts, heat, noise and price. you have to look at the bigger picture, at things like OpenCL (and you know that AMD are pretty good at that), at things like hardware quality (how precise is the graphic calculation, which is also a department where AMD is usually better, excluding the brilinear filtering of the 9600XT), and you also have to look at image quality produced by the card(s), and look into stuttering (if there is any, and how much). all these aspects are just hardware related, but hardware does not exist on its own, it exists together with the driver, so no less important than hardware quality is software quality (a department which is usually pretty bad with AMD): how stable is the driver ? is there any BSOD ? are there any rendering bugs ? and of course the performance of the driver.

how does this relate to type of gamers ? consider it: you know what you are going to run on the card, it could be an OpenCL program or some very specific games - then all you need is to find out how the card (with its driver) is handling this specific load - and based on that decide if you want to buy/use it

but for the dynamic gamer this is much more complex because there is no way to predict what the software/load is going to be, so the decision is more difficult whether or not to buy, so here is my way:

static gamer: buy what suits your load. if the RX 480 can run your OpenCL/games well enough, stable and fast enough and looking good, then you have no problem buying it

but for dynamic gamer I have to disagree with all the posts mentioned here, and all the estimation cliches like "1080p gaming". there is no line you can draw with all games having an equal load at a given resolution, there is no such thing as "this card will last me 2 years at 1080p". for the dynamic gamer this strategy is flawed, because nobody knows the future (that being said, console generations do have an impact because modern games are console games), and in addition some games are pre-built for a specific FPS target, and game engines are differently designed. so for the dynamic gamer I would not recommend RX 480, because its performance already struggles with games that exist in the present, so for the dynamic gamer the best bet is the highest performing card (only under the condition that it runs every already released game at higher than the required FPS for you)

K_Space - Saturday, July 9, 2016 - link

Apologies for reading your comment so late, however it was not posted under the parent thread:

1) why wouldn't wise gamers exist? :) "the gamer self" does not exist in a vacuum, a wise human who plays games is a wise gamer.

2) your analysis of the static vs dynamic gamer is really well put, though your use cases are broader, so user is more apt than gamer (and really fits with the "gamer does not exist in a vacuum" statement earlier). You have put in so many caveats that we essentially agree: whilst no one can predict the future precisely, one can certainly develop some foresight by looking at trends as well as typical use scenarios to predict future use cases. It's what IT departments do all the time anyway, "wise" gamers are no different :) Just as you'd predict after DX11 was released that most if not all AAA games will feature the buzzword tessellation, you would rightly predict that with the current gen of consoles future AAA games will be more VRAM heavy than current ones, multi-core CPUs will be utilised more than they are in current DX11 games, etc. Ditto with the 1080p statement: if I'm planning a future GFX purchase I'd factor in whether I'm going to stick to a native 1080p display or a denser display and act accordingly. By your definition a dynamic gamer does not know what their future games will demand, but their interests are typically static even if the games change; thus if, hypothetically speaking, I like MOBA or indie games I'd hazard a good guess that a 480 and not a 1070 card is what I need for future indie/MOBA games. Remember, these "predictions" don't need to be pinpoint accurate, just something that will get me to my next upgrade cycle.

3) RTG has been quite spectacular with their driver support releases. Previous GCN cards have been reaping these benefits to this day. I've the additional benefit of hindsight in considering how they promptly dealt with the 480 powergate fiasco.

dmark07 - Sunday, July 3, 2016 - link

I'm a little confused as to when benchmarks were performed for the NVIDIA 1070FE card. The only articles on Anandtech for this generation of NVIDIA cards only have benchmarks for the 1080. I wish your team would have written an article about the 1070 first before adding numbers for them to this article. As it appears, this either indicates someone used the 1080 numbers and labeled them as 1070FE or this was a biased move with unpublished numbers that could have been pulled out of thin air... This is very uncharacteristic of Anandtech. Don't get me wrong, I've been looking forward to a full breakdown of the 1070 but the Anandtech team used to stick to the standard of performing a write up on a card prior to using benchmarks for that card in comparisons.

Ranger1065 - Tuesday, July 5, 2016 - link

Speaking as someone who has happily visited this site for many years, it's clear the Anandtech "team" is not what it used to be. I'm tired of visiting here only to NOT find the reviews I'm interested in and so I visit Anandtech less and less frequently. It's a shame because I know the staff are capable of writing really good reviews but my patience and faith in Anandtech is just about exhausted. Other sites may not have the detail that Anandtech does (if and when they actually post a review) but the difference is not huge and at least they do actually post reviews that people care about.

Murloc - Tuesday, July 5, 2016 - link

this is not a scientific publication, they can put whatever "unpublished" numbers they want in there. But yes, the issue is that they've become slow at posting and are unable to solve the issue.

Keinz - Tuesday, July 5, 2016 - link

Each and every time I bother reading the comments I'm reminded why free speech for the dumb is a very, very, VERY bad thing.

beast6228 - Wednesday, July 6, 2016 - link

Too bad you didn't add the R9 295X2 to the benchmarks, it would have destroyed most of the cards.