Assessing IBM's POWER8, Part 1: A Low Level Look at Little Endian

by Johan De Gelas on July 21, 2016 8:45 AM EST

Memory Subsystem: Bandwidth

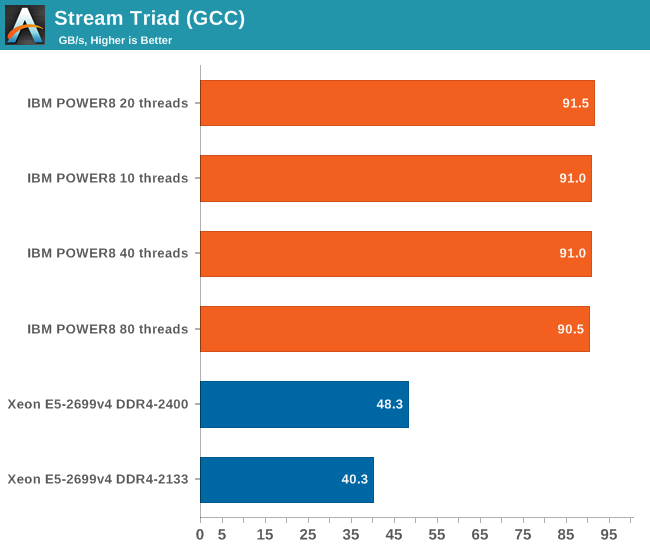

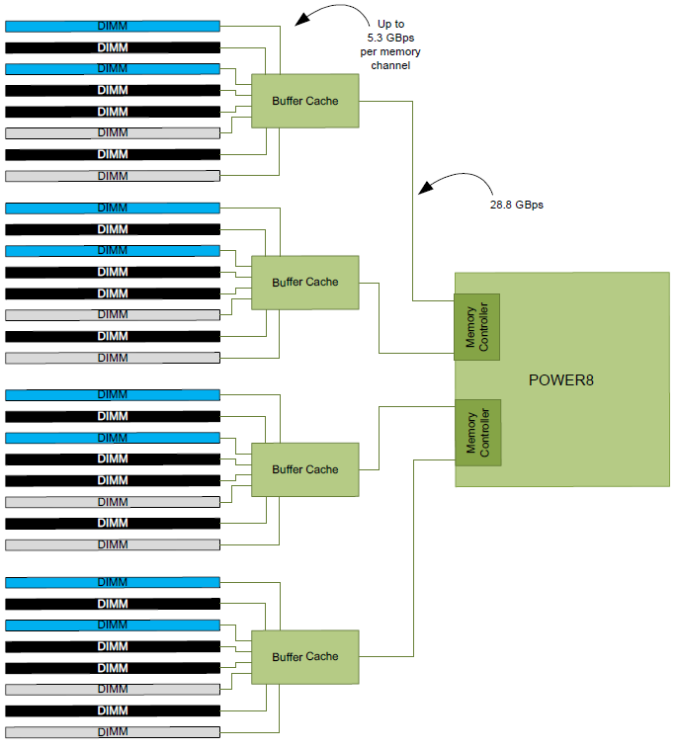

As we mentioned before, the IBM POWER8 has a memory subsystem which is more similar to the Xeon E7's than the E5's. The IBM POWER8 connects to 4 "Centaur" buffer cache chips, each of which has both a 19.2 GB/s read and a 9.6 GB/s write link to the processor, or 28.8 GB/s in total. So the 105 GB/s aggregate bandwidth of the POWER8 is not comparable to Intel's peak bandwidth. Intel's peak bandwidth is the result of 4 channels of DDR4-2400 that can either write or read at 76.8 GB/s (2.4 GT/s x 8 bytes per channel x 4 channels).
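The peak figures above are simple products, and a minimal sketch makes the arithmetic explicit. The function and variable names are illustrative only. Note that multiplying the per-Centaur link figures by four gives 115.2 GB/s, which is not identical to the 105 GB/s aggregate quoted above; the sketch makes no attempt to account for that difference.

```python
def ddr_peak_gbs(gigatransfers_per_s, bytes_per_transfer, channels):
    """Peak DDR bandwidth in GB/s: transfer rate x bus width x channel count."""
    return gigatransfers_per_s * bytes_per_transfer * channels


def buffered_link_peak_gbs(read_gbs, write_gbs, links):
    """Combined read + write link bandwidth in GB/s across a number of buffer chips."""
    return (read_gbs + write_gbs) * links


# Xeon: 4 channels of DDR4-2400, 8 bytes wide, read OR write (shared bus).
xeon_peak = ddr_peak_gbs(2.4, 8, 4)

# POWER8: each Centaur has separate read and write links to the CPU.
power8_per_centaur = buffered_link_peak_gbs(19.2, 9.6, 1)
power8_all_links = buffered_link_peak_gbs(19.2, 9.6, 4)

print(f"Xeon peak (read or write): {xeon_peak:.1f} GB/s")
print(f"POWER8 per Centaur:        {power8_per_centaur:.1f} GB/s")
print(f"POWER8, 4 Centaurs:        {power8_all_links:.1f} GB/s")
```

The two numbers describe different things: the Xeon figure is a ceiling for traffic in either direction on a shared bus, while the POWER8 figure is only reachable with a 2:1 mix of reads and writes.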

Bandwidth is of course measured with John McCalpin's Stream bandwidth benchmark. We compiled the stream 5.10 source code with gcc 5.2.1 64 bit. The following compiler switches were used on gcc:

-Ofast -fopenmp -static -DSTREAM_ARRAY_SIZE=120000000

The last option makes sure that Stream runs with arrays that are far too large to fit in the Xeons' huge L3 caches.
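To see why an array size of 120 million elements is safely out of cache, here is a rough footprint calculation. It assumes Stream's defaults of three arrays of 8-byte doubles, and the commonly cited guideline that each array should be at least four times the size of the last-level cache. The 55 MB L3 size is an assumed value for illustration, not a figure taken from this page.

```python
BYTES_PER_ELEMENT = 8   # assumption: Stream's default element type (64-bit double)
ARRAYS = 3              # assumption: Stream's three working arrays (a, b, c)

STREAM_ARRAY_SIZE = 120_000_000        # from the -DSTREAM_ARRAY_SIZE switch above
ASSUMED_L3_BYTES = 55 * 1024 ** 2      # hypothetical 55 MB L3, for illustration only


def array_bytes(elements):
    """Size of a single Stream array in bytes."""
    return elements * BYTES_PER_ELEMENT


def total_footprint_bytes(elements):
    """Combined size of all Stream arrays in bytes."""
    return array_bytes(elements) * ARRAYS


def defeats_cache(elements, cache_bytes, factor=4):
    """True if each array is at least `factor` times larger than the cache."""
    return array_bytes(elements) >= factor * cache_bytes


print(f"Per array: {array_bytes(STREAM_ARRAY_SIZE) / 1e6:.0f} MB")
print(f"Total:     {total_footprint_bytes(STREAM_ARRAY_SIZE) / 1e9:.2f} GB")
print(f"Out of cache: {defeats_cache(STREAM_ARRAY_SIZE, ASSUMED_L3_BYTES)}")
```

At roughly 960 MB per array, even a much larger cache than the one assumed here would hold only a small fraction of the working set.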

It is important to note why we use the GCC compiler and not the vendors' specialized compilers: GCC is not as good at vectorizing the code. Intel's ICC compiler does that very well, and as a result shows the bandwidth available to highly optimized HPC code. That is great for such code in the real world, but it is not realistic for multi-threaded server applications.

With ICC, Intel can use the very wide 256-bit load units to their full potential and we measured up to 65 GB/s per socket. But you also have to consider that ICC is not free, and GCC is much easier to integrate and automate into the daily operations of any developer. No licensing headaches, no time-consuming registrations.

The combination of the powerful four load and two store subsystem of the POWER8 and the read/write interconnect between the CPU and the Centaur chips makes it much easier to offer more bandwidth. The IBM POWER8 delivers a solid 90 GB/s despite using old DDR3-1333 memory technology.
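As a rough illustration of how a Stream figure like the 90 GB/s above is arrived at: the benchmark times each kernel and divides the bytes it moved by the elapsed time. The sketch below assumes the byte counts used by the reference stream.c (two arrays touched per element for Copy and Scale, three for Add and Triad, 8-byte elements). The 0.032 s timing is a made-up value chosen to land on 90 GB/s, not a measurement from this review.

```python
BYTES_PER_ELEMENT = 8  # assumption: 64-bit doubles

# Arrays read or written per element by each Stream kernel.
ARRAYS_TOUCHED = {
    "Copy": 2,   # c[i] = a[i]
    "Scale": 2,  # b[i] = q * c[i]
    "Add": 3,    # c[i] = a[i] + b[i]
    "Triad": 3,  # a[i] = b[i] + q * c[i]
}


def stream_bandwidth_gbs(kernel, elements, seconds):
    """Bandwidth in GB/s (10^9 bytes/s) for one timed pass of a Stream kernel."""
    bytes_moved = ARRAYS_TOUCHED[kernel] * BYTES_PER_ELEMENT * elements
    return bytes_moved / seconds / 1e9


# Hypothetical timing: one Triad pass over 120 million elements in 32 ms.
triad = stream_bandwidth_gbs("Triad", 120_000_000, 0.032)
print(f"Triad: {triad:.1f} GB/s")
```

Because Triad moves 24 bytes per element and Copy only 16, the same elapsed time yields different bandwidth figures per kernel, which is why Stream results are always reported per kernel.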

Intel claims higher bandwidth numbers, but those numbers can only be delivered in vectorized software.

124 Comments

Michael Bay - Sunday, July 24, 2016 - link

Hardware does not exist for its own sake, it exists to run software. AT is entirely correct in their methodology.

jospoortvliet - Tuesday, July 26, 2016 - link

I'd argue it is the other way around, GCC might leave 5-10% performance on the table in some niche cases but does just fine most of the time. There's a reason Intel and IBM contribute to GCC - to make sure it doesn't get too far behind as they know very well most of their customers use these compilers and not their proprietary ones.

Of course, for scientific computing and other niches it makes all the difference and one can argue these heavy systems ARE for niche markets but I still think it was a sane choice to go with GCC.

abufrejoval - Thursday, August 4, 2016 - link

Actually exercising 90% of all transistors on a CPU die these days is both very hard to do (next to impossible) and will only slow the clock to avoid overstepping TDP.

And I seriously doubt that the GCC will underuse a CPU at 10% of its computational capacity.

Actually from what I saw the GCC by itself (compiling) was best at exploiting the full 8T potential of the Power8. And since the GCC is compiled by itself, that speaks for the quality of machine code that it can produce, if the source allows it. And that speaks for the quality of the GCC source code, ergo prove you can do better before you rant.

abufrejoval - Thursday, August 4, 2016 - link

Well this is part 1 and describes one scenario. What you want is another scenario and of course it's a valid, if very distinct, one.

Actually distinct is the word here: You'd be using a vendor's compiler if your main job is a distinct workload, because you'd want to squeeze every bit of performance out of that.

The problem with that is of course, that any distinct workload makes it rather boring for the general public because they cannot translate the benchmark to their environment.

AT aims to satisfy the broadest meaningful audience and Johan has done a great, great job at that.

I'm sure he'll also write a part 4711 for you specifically, if you make it economically attractive.

Hell, even I'd do that given the proper incentive!

Zan Lynx - Sunday, July 24, 2016 - link

Using GCC as the compiler is also why (in my opinion) the Intel chips aren't using their full TDP. Large areas of Intel chips are dedicated to vector operations in SSE and AVX. If you don't issue those instructions then half the chip isn't even being used.

Some gamers who love their overclocked Intel chips have actually complained to game engine developers who add AVX to the game engine. Because it ruins their overclock even if the game runs much faster. Then they're in the situation of being forced to clock down from 4.5 GHz to 3.7 in order to avoid lockups or thermal throttling.

Kevin G - Sunday, July 24, 2016 - link

The Xeon E3 v3's had different clock speeds for AVX code: it consumed too much power and got too hot while under total load.

This holds true on the E5 v4's but the AVX penalty is done on a core-by-core basis, not across the entire chip. The result is improved performance in mixed workloads. This is a good thing as AVX hasn't broken out much beyond the HPC markets.

talonted - Monday, July 25, 2016 - link

For those interested in getting a Power8 workstation: check out Talos.

https://www.raptorengineering.com/TALOS/prerelease...

137ben - Monday, July 25, 2016 - link

I made an account to say that this article (along with the subsequent stock-cooler comparison article) is why I really love Anandtech. A lot of the code I run/write for my research is CPU-bottlenecked. Still, until the last year or so, I didn't know very much about hardware. Now, reading Anandtech, I have learned so much more about the hardware I depend on from this website than from any other website. Most just repeat announcements or run meaningless cursory synthetic benchmarks. The fact that Johan De Gelas has written such a deep dive into the inner workings of something as complex as a server CPU architecture, and done it in a way that I can understand, is remarkable. Great job Anandtech, keep it up and I'll always come back.

JohanAnandtech - Thursday, July 28, 2016 - link

You made me a happy man, I achieved my goal :-)

alpha754293 - Wednesday, July 27, 2016 - link

Excellent work and review as always Johan. I would have been interested to see how the two processors perform in floating point intensive benchmarks though...