ARM Unveils Next Generation Bifrost GPU Architecture & Mali-G71: The New High-End Mali

by Ryan Smith on May 30, 2016 7:00 AM EST

Over the last few years the SoC GPU space has taken an interesting path, and one I admittedly wasn’t expecting. At the start of this decade the playing field for SoC-class GPUs was rather diverse, with everyone from NVIDIA to Broadcom (and everyone in between) participating in it. Consolidation in the GPU space was inevitable, something we’ve already seen with SoC vendors dropping out, but I am surprised by just how quickly it has happened. In just six years, the number of GPU vendors with a major presence in high-end Android phones has been whittled down to only two: the vertically integrated Qualcomm, and the IP-licensing ARM.

That ARM has managed to secure most of the licensed GPU market for itself is a testament to both its engineering and its IP licensing efforts. ARM’s path into this market has been non-traditional: the company acquired an essentially unknown GPU vendor a decade ago and grew it into the 800 lb gorilla it has now become. ARM’s expertise in IP licensing, coupled with a somewhat unusual GPU architecture, has proven to be a powerful combination for the company, which has secured a number of significant wins from the high end to the low end.

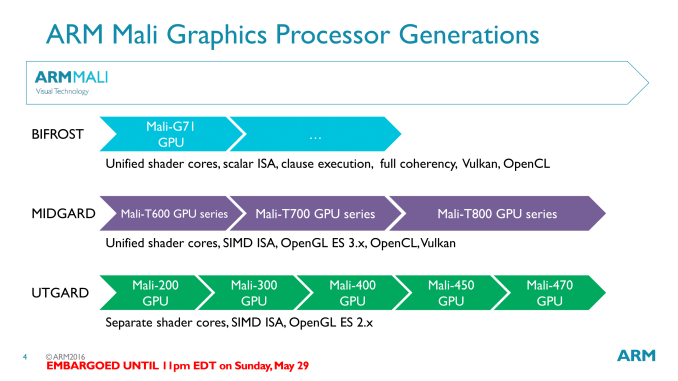

Much of this growth was built on the back of the company’s GPU architecture of the last few years, Midgard. Initially launched in 2012, Midgard has been the cornerstone of ARM’s Mali 600, 700, and 800 series designs. As ARM’s first unified shader design for GPUs, Midgard has been extended over the years to support newer features such as geometry tessellation and 10bpc color, along with newer APIs such as OpenGL ES 3.1/3.2 and Vulkan.

However, as Midgard approaches its fourth birthday and the SoC GPU landscape evolves, Midgard’s time at the top will soon be coming to an end. Against the backdrop of Computex 2016 and alongside its new Cortex-A73 CPU, ARM is announcing its next generation GPU architecture, Bifrost. A significant update to ARM’s GPU architecture, Bifrost will first be deployed in ARM’s Mali-G71 GPU.

Recap: Mali & VLIW

One of the interesting aspects of SoC GPU development over the years is that it has been a very distinct echo of larger discrete GPU development. Many innovations and changes that first show up with dGPUs will show up in SoC GPUs a few years later, as newer manufacturing processes allow for those developments to fit within the extreme space and power requirements of an SoC-class GPU. At the same time mobile games/graphics development follows a similar path, with mobile application developers picking up rendering techniques first used elsewhere.

ARM’s architectural development, in turn, has been a good example of this process. The non-unified Utgard architecture gave way to the unified Midgard architecture in 2012, about 6 years after dGPUs first made the transition. And as we learned when we examined the Midgard architecture in depth, Midgard was an architecture well suited for the rendering paradigms of the time.

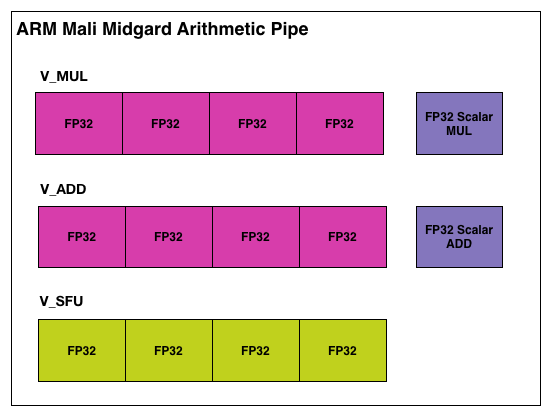

Midgard’s shader core, in short, was an Instruction Level Parallelism-centric design, employing a Very Long Instruction Word (VLIW) instruction format. To achieve maximum utilization out of Midgard’s shader cores, you needed to be able to extract a significant amount of ILP (4 concurrent instructions) in order to fill all of the slots in a shader core. This sort of design maps well to basic graphics workloads, as 4-component RGBA color is a natural fit for the 4 lanes of ARM’s VLIW-4 design. Furthermore, VLIW designs are traditionally very space efficient, as there’s relatively little overhead logic, which is always a boon for the tight constraints of the SoC space.

However, getting back to what we said earlier about SoC GPUs being an echo of discrete GPUs: as we’ve seen with dGPUs, VLIW has a limited shelf life. Newer rendering paradigms often work with just 1 or 2 components at once, which leaves open lanes that need to be filled to achieve full GPU utilization. A good shader compiler can help here, but it becomes an escalating technology war over time, as getting good performance becomes increasingly compiler-centric, and writing a compiler that can extract the necessary ILP is a challenge in and of itself. What history has shown us, and what is going to happen again in the mobile market, is that rendering workloads will continue to shift away from a style that is suitable for VLIW.
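To make the lane-filling problem concrete, here is a minimal Python sketch of a toy 4-lane VLIW model. It is not ARM's compiler or Midgard's actual issue logic: the function names (`utilization`, `packed_bundles`) are hypothetical, each operation is reduced to the number of components it touches, and all operations are assumed to be independent so they can be packed freely. A real compiler also has to respect data dependencies, which makes the job harder.

```python
VLIW_WIDTH = 4  # lanes per bundle in this toy model, mirroring the VLIW-4 design above


def _check(op_widths, width):
    """Reject component counts that cannot fit in a single bundle."""
    for w in op_widths:
        if not 1 <= w <= width:
            raise ValueError(f"op width {w} does not fit in {width} lanes")


def utilization(op_widths, width=VLIW_WIDTH):
    """Fraction of lanes doing useful work with one operation per bundle.

    op_widths lists how many components (1 to width) each operation uses.
    This is the no-packing case: every operation gets its own bundle.
    """
    _check(op_widths, width)
    if not op_widths:
        return 0.0
    return sum(op_widths) / (len(op_widths) * width)


def packed_bundles(op_widths, width=VLIW_WIDTH):
    """Number of bundles after greedy first-fit-decreasing packing.

    Assumption: all operations are independent, so any of them may share
    a bundle. This stands in for the ILP a shader compiler must extract.
    """
    _check(op_widths, width)
    free_lanes = []  # remaining free lanes in each open bundle
    for w in sorted(op_widths, reverse=True):
        for i, free in enumerate(free_lanes):
            if free >= w:
                free_lanes[i] -= w
                break
        else:
            free_lanes.append(width - w)
    return len(free_lanes)
```

In this model, a run of full RGBA operations such as `[4, 4, 4]` fills every lane, while a mix of 1- and 2-component operations such as `[1, 1, 2, 1]` uses only 5 of 16 lanes without packing. Packing those same operations takes two bundles, so 5 of 8 lanes are used, which is better but still short of full utilization, and only achievable because the toy model ignores dependencies.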

57 Comments


mdriftmeyer - Tuesday, May 31, 2016 - link

Aren't you glad you commented yesterday? See the update to HSA.

prisonerX - Wednesday, June 1, 2016 - link

You're confusing OpenCL C with OpenCL. SPIR-V is an intermediate language also supported by OpenCL.

pencea - Monday, May 30, 2016 - link

How about the review for the GTX 1080? It's been days since the card came out. Other major sites have already posted their reviews on both the 1080 and 1070, while AnandTech still haven't posted one yet. Only a pathetic preview.

Quake - Monday, May 30, 2016 - link

Quantity over quality my friend. Anandtech is known to write some concise, detailed and thorough articles. For me, sites like Engadget are tabloid newspapers while Anandtech is a respected newspaper that takes its time to write some thorough and intelligent reviews.

Tabalan - Monday, May 30, 2016 - link

True, but they could split the review into 2 parts - the 1st one would consist of tests, benchmarks, etc (like other websites do), the other would be about the uarch on its own. This way almost everyone would be happy.

funkforce - Monday, May 30, 2016 - link

Engadget?! Come on! PCWorld, PCGamesHardware, HardOCP, KitGuru, Hothardware, Tomshardware, TechSpot, HardwareCanucks, TweakTown all posted their reviews, many almost as thorough as Anandtech... 13 days ago! And we could forgive Mr Smith if it was a one time thing, but it's been like this every GPU review since he took over as Editor in Chief. When Anand was in charge, no review was late like this and it still was as thorough.

Now I LOVE Ryan's writing, it's the best bar none, he is an awesome guy for sure!

But please! For the love of GOD, step down as Editor and focus on writing only, delivering on time without a boss to push you is not your thing...

Just check earlier reviews, same thing, 1-2 weeks late, even though promised so many times it would come out a week or two before actually published. (Except GTX 960 which never got published at all after 7 weeks of promises and then just silence)

Alexa shows this website has lost an insane amount of readers in 1 year. I have been here for almost 20 years and I just want AT to be great again. Please someone do something! Anyone?! <3 Please save AT!

r3loaded - Tuesday, May 31, 2016 - link

Because while other sites will be satisfied with a review that covers "zomg runs Crysis 3 and GTA V on max settings at xyz FPS, temps and noise are pretty good", Anandtech doesn't really roll that way. They won't be satisfied with their review until they've completed a deep dive on the Pascal architecture, the merits of GDDR5X and how it compares with GDDR5 and HBM/HBM2, and quantifying frame latency and consistency. Other reviews are written by gamers and computer enthusiasts. Anandtech reviews are written by computer engineers.

prisonerX - Tuesday, May 31, 2016 - link

1080 whiners like you are really tedious. I hope they cancel the review.

jjj - Monday, May 30, 2016 - link

Any clue about cache sizes and if a reduction there is factored into the perf density math? Also wondering about thermals; some of the mentioned changes will help but some more details would be nice.

allanmac - Monday, May 30, 2016 - link

Nice review. Delivering a full-featured Vulkan/SPIR-V 1.1 GPU to the masses is something we're all ready for.