The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

GP104’s Architecture

Looking at an architecture diagram for GP104, Pascal ends up looking a lot like Maxwell, and this is not by chance. After making more radical changes to their architecture with Maxwell, for Pascal NVIDIA is taking a bit of a breather. Which is not to say that Pascal is Maxwell on 16nm – this is very much a major feature update – but when it comes to discussing the core SM architecture itself, there is significant common ground with Maxwell.

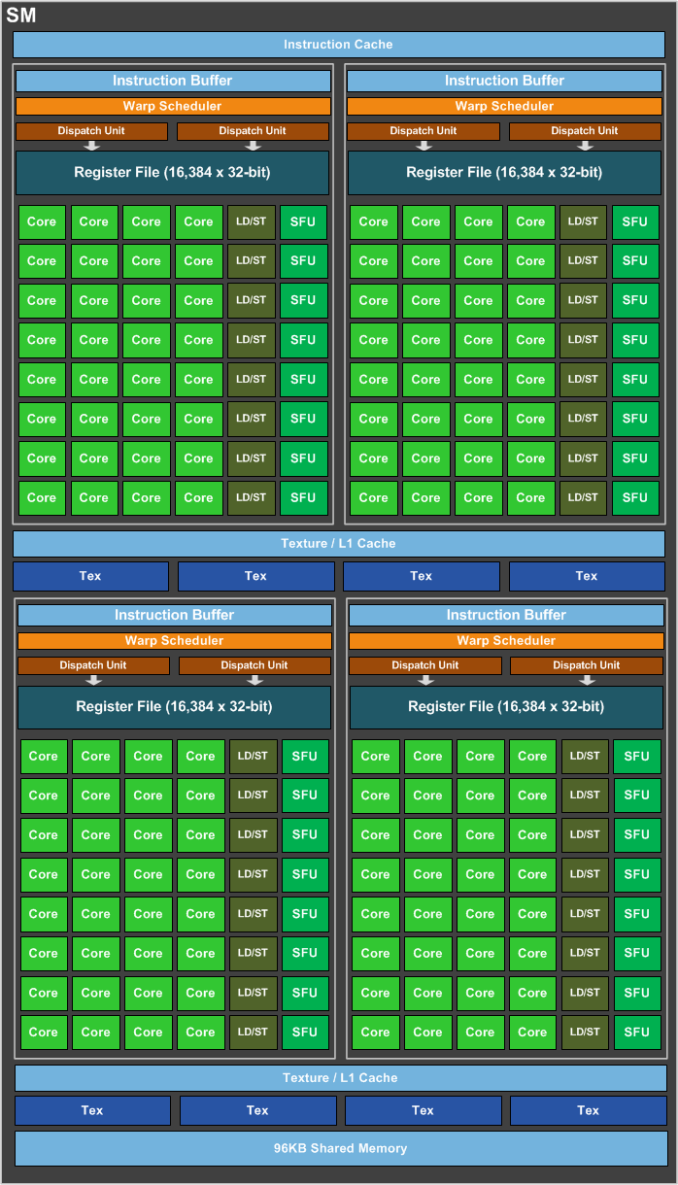

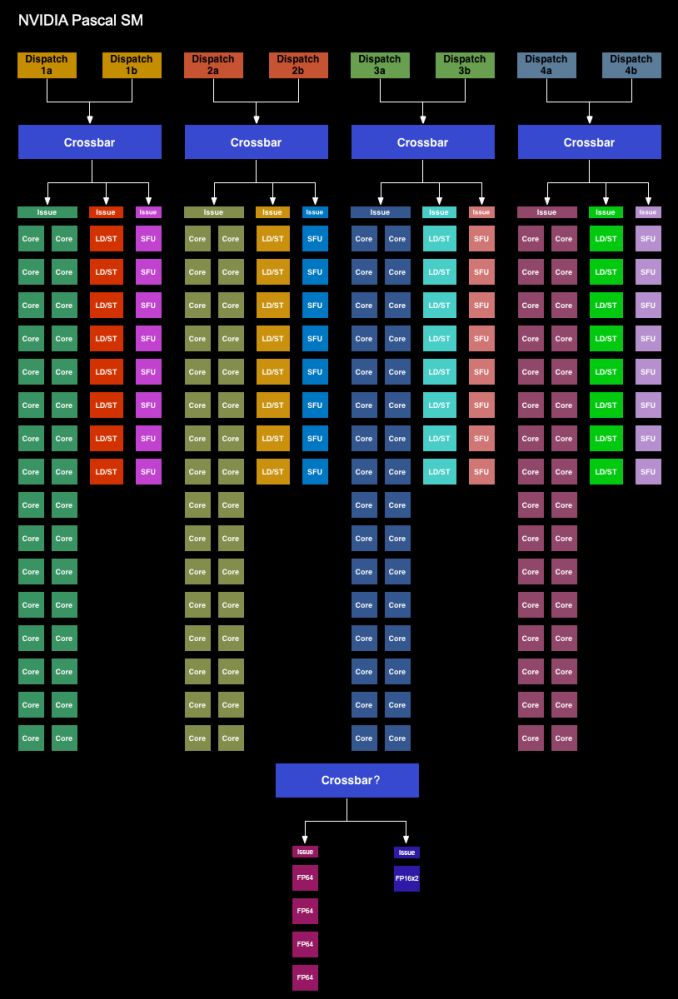

We’ll start with the GP104 SM. Simply named the SM for this generation – NVIDIA has ditched the generational suffix due to the potential for confusion with the used-elsewhere SMP – the GP104 SM is very similar to the Maxwell SM. We’re still looking at a single SM partially sub-divided into four pieces, each containing a single warp scheduler that’s responsible for feeding 32 CUDA cores, 8 load/store units, and 8 Special Function Units, backed by a 64KB register file. There are two dispatch ports per warp scheduler, so when an instruction stream allows it, a warp scheduler can extract a limited amount of ILP from that stream by issuing a second instruction to an unused resource.

Meanwhile, shared between every pair of sub-partitions are 4 texture units and the combined L1/texture cache, again unchanged from Maxwell. Finally, we have the resources shared throughout the whole SM: the 96KB shared memory, the instruction cache, and, not pictured on NVIDIA’s diagrams, the 4 FP64 CUDA cores and 1 FP16x2 CUDA core.

Overall then at the diagram level the GP104 SM looks almost identical to the Maxwell SM, but with one exception: the PolyMorph Engine. Although the distinction is largely arbitrary for GP104, the PolyMorph Engine has been moved up a level; it’s no longer part of the SM, but rather part of the newly re-introduced TPC, which itself sits between the GPC and the SM.

The TPC exists because although GP104 still has a 1:1 ratio between PolyMorph Engines and SMs, the Pascal architecture itself allows for different SM configurations, which is in turn used on GP100 to allow it to have multiple smaller SMs of 64 CUDA Cores. For GP100 the TPC allows for multiple SMs to share a PolyMorph Engine, but for GP104 there’s no sharing involved. To that end the TPC as an organizational unit technically exists across all Pascal parts, but it has no real significance for GP104. In fact it doesn’t even have a real name; NVIDIA reused the acronym from earlier DX10 architectures, where the TPC was the name assigned to the Texture Processor Cluster.

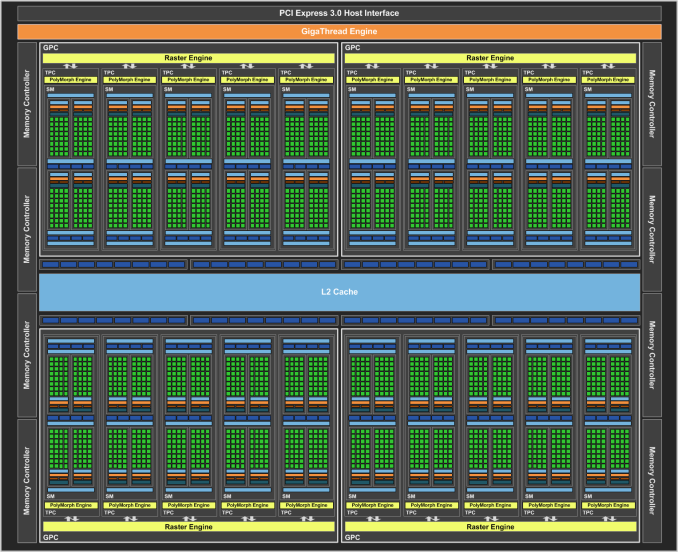

Looking at the bigger picture of the complete GP104 GPU, the similarities continue between GP104 and GM204. GP104’s SMs are clustered five-a-piece inside of the GPC, with each cluster sharing a single Raster Engine. Overall there are 4 such GPCs, giving us 20 SMs altogether. Compared to GM204 then, we’re looking at the same number of GPCs, with each GPC having gained 1 SM.
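As a quick sanity check on these counts, the unit totals can be derived from the hierarchy described so far (GPC, SM, sub-partition). The sketch below is purely illustrative: the `GpuLayout` class and its field names are hypothetical, and the inputs are just the per-unit figures quoted in the text for a fully enabled GP104 and GM204.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuLayout:
    """Hypothetical container for the per-unit counts quoted in the article."""
    gpcs: int
    sms_per_gpc: int
    partitions_per_sm: int = 4        # each SM is split into four pieces
    cores_per_partition: int = 32     # one warp scheduler feeds 32 CUDA cores
    texture_units_per_pair: int = 4   # 4 texture units per pair of sub-partitions

    @property
    def sms(self) -> int:
        return self.gpcs * self.sms_per_gpc

    @property
    def cuda_cores(self) -> int:
        return self.sms * self.partitions_per_sm * self.cores_per_partition

    @property
    def texture_units(self) -> int:
        pairs_per_sm = self.partitions_per_sm // 2
        return self.sms * pairs_per_sm * self.texture_units_per_pair


# Fully enabled dies: same GPC count, GP104 has one more SM per GPC.
GP104 = GpuLayout(gpcs=4, sms_per_gpc=5)
GM204 = GpuLayout(gpcs=4, sms_per_gpc=4)

for name, gpu in (("GP104", GP104), ("GM204", GM204)):
    print(name, gpu.sms, "SMs,", gpu.cuda_cores, "CUDA cores,",
          gpu.texture_units, "texture units")
```

Under these figures the extra SM per GPC is the whole story at this level: 20 SMs against 16, with every per-SM quantity unchanged.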

Things get more interesting when we look at the back end of the rendering/execution pipeline, which is comprised of the L2 cache, ROPs, and memory controllers. The ROP/L2 count has not changed relative to GM204 – we still have 64 ROPs paired up with a total of 2MB of L2 cache – however the memory controller count has, and with it the logical configuration of the ROP/L2 blocks has changed as well.

Whereas GM204 had 4 64-bit GDDR5 memory controllers, each connected to 2 or 4 memory chips, GP104 breaks that down further to 8 32-bit GDDR5X memory controllers, each of which is connected to 1 memory chip on GTX 1080. I’ll go into greater detail on GDDR5X a bit later, but this backend reorganization is a direct consequence of how GDDR5X accesses memory. Because GDDR5X reads and writes data in 64B amounts (versus 32B amounts on GDDR5), NVIDIA has reorganized the memory controllers to ensure that each memory controller still operates on the same amount of data. With GDDR5 they teamed up two GDDR5 channels to get 64B operations, whereas with GDDR5X this can be accomplished with a single memory channel.
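To make the access-size arithmetic concrete, here is a minimal sketch. It assumes the standard burst lengths for the two memory types on a 32-bit channel (8 transfers per access for GDDR5, 16 for GDDR5X), which is where the 32B and 64B figures come from. The function and constant names are made up for illustration and do not correspond to anything NVIDIA has published.

```python
CHANNEL_WIDTH_BITS = 32

# Assumed burst lengths (transfers per access) for each memory type.
BURST_LENGTH = {"GDDR5": 8, "GDDR5X": 16}


def bytes_per_access(memory_type: str, channels_per_controller: int) -> int:
    """Bytes moved by one controller in a single access."""
    bits = CHANNEL_WIDTH_BITS * BURST_LENGTH[memory_type] * channels_per_controller
    return bits // 8


def bus_width_bits(controllers: int, channels_per_controller: int) -> int:
    """Total memory bus width across all controllers."""
    return controllers * channels_per_controller * CHANNEL_WIDTH_BITS


# GM204: 4 controllers, each teaming two 32-bit GDDR5 channels (64-bit).
gm204_access = bytes_per_access("GDDR5", channels_per_controller=2)
gm204_bus = bus_width_bits(controllers=4, channels_per_controller=2)

# GP104 on GTX 1080: 8 controllers, each a single 32-bit GDDR5X channel.
gp104_access = bytes_per_access("GDDR5X", channels_per_controller=1)
gp104_bus = bus_width_bits(controllers=8, channels_per_controller=1)

print(gm204_access, gm204_bus)  # bytes per access, total bus width in bits
print(gp104_access, gp104_bus)
```

Both configurations come out to 64B per controller access and a 256-bit bus overall; only the number of controllers sharing that bus differs.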

This in turn is where the ROP reorganization comes from. As there’s a 1:1 relationship between ROP partitions and memory controllers, the 64 ROPs are now broken up into 8 partitions for GP104, as opposed to 4 partitions on GM204. There are some performance tradeoffs that come from having more ROP partitions, but to the best of my knowledge these should not be significant.
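The partition sizes follow directly from that 1:1 relationship. The sketch below assumes, for illustration, that both the ROPs and the 2MB of L2 cache are divided evenly across partitions; the helper name `per_partition` is hypothetical.

```python
TOTAL_ROPS = 64
TOTAL_L2_KB = 2 * 1024  # 2MB of L2 cache


def per_partition(partitions: int) -> dict:
    """Split ROPs and L2 evenly across partitions (assumed even split)."""
    return {
        "rops": TOTAL_ROPS // partitions,
        "l2_kb": TOTAL_L2_KB // partitions,
    }


gm204 = per_partition(4)  # one partition per 64-bit memory controller
gp104 = per_partition(8)  # one partition per 32-bit memory controller

print("GM204:", gm204)
print("GP104:", gp104)
```

With the totals held constant, doubling the partition count halves what each partition holds: 8 ROPs per partition on GP104 versus 16 on GM204.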

Meanwhile the new GDDR5X memory controllers are also backwards compatible with traditional GDDR5, which in turn is used to drive the GTX 1070 with its 8Gbps GDDR5. The difference in operation between GDDR5 and GDDR5X does make the ROP situation a bit trickier overall for NVIDIA’s architects – now they need to be able to handle two different memory access patterns – though for NVIDIA this isn’t a wholly new problem. Previous generation architectures have supported both GDDR5 and DDR3, the two of which have their own differences in memory access patterns.
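For a sense of what the two memory types mean in practice, peak bandwidth is just bus width times per-pin data rate. In the sketch below the 256-bit bus follows from the 8 32-bit controllers, and the 8Gbps figure for GTX 1070 is quoted above. The 10Gbps rate for GTX 1080's GDDR5X is not stated in this section and is taken as an assumption from NVIDIA's published specifications. The function name is hypothetical.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak memory bandwidth in GB/s."""
    return bus_width_bits * data_rate_gbps / 8


BUS_WIDTH_BITS = 8 * 32  # 8 controllers, 32 bits each

gtx_1070 = peak_bandwidth_gb_s(BUS_WIDTH_BITS, 8)   # 8Gbps GDDR5
gtx_1080 = peak_bandwidth_gb_s(BUS_WIDTH_BITS, 10)  # assumed 10Gbps GDDR5X

print(f"GTX 1070: {gtx_1070:.0f} GB/s")
print(f"GTX 1080: {gtx_1080:.0f} GB/s")
```

These are theoretical peaks only; they say nothing about how efficiently either card uses that bandwidth.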

In a by-the-numbers comparison then, Pascal does not bring any notable changes in throughput relative to Maxwell. CUDA cores, texture units, PolyMorph Engines, Raster Engines, and ROPs all have identical theoretical throughput-per-clock as compared to Maxwell. So on a clock-for-clock, unit-for-unit basis, Pascal is not any faster on paper. And while NVIDIA does not disclose the size/speed of most of their internal datapaths, so far I haven’t seen anything to suggest that these have radically changed. This continuity means that outside of its new features, GP104 behaves a lot like GM204. Though it should be noted that real world efficiency isn’t quite as cut and dried, as various factors such as the increased SM count and changes in memory technology can greatly influence this.

200 Comments

eddman - Wednesday, July 20, 2016 - link

That puts a lid on the comments that Pascal is basically a Maxwell die-shrink. It's obviously based on Maxwell but the addition of dynamic load balancing and preemption clearly elevates it to a higher level.

Still, seeing that using async with Pascal doesn't seem to be as effective as GCN, the question is how much of a role will it play in DX12 games in the next 2 years. Obviously async isn't be-all and end-all when it comes to performance but can Pascal keep up as a whole going forward or not.

I suppose we won't know until more DX12 games are out that are also optimized properly for Pascal.

javishd - Wednesday, July 20, 2016 - link

Overwatch is extremely popular right now, it deserves to be a staple in gaming benchmarks.

jardows2 - Wednesday, July 20, 2016 - link

Except that it really is designed as an e-sport style game, and can run very well with low-end hardware, so isn't really needed for reviewing flagship cards. In other words, if your primary desire is to find a card that will run Overwatch well, you won't be looking at spending $200-$700 for the new video cards coming out.

Ryan Smith - Wednesday, July 20, 2016 - link

And this is why I really wish Overwatch was more demanding on GPUs. I'd love to use it and DOTA 2, but 100fps at 4K doesn't tell us much of use about the architecture of these high-end cards.

Scali - Wednesday, July 20, 2016 - link

Thanks for the excellent write-up, Ryan!

Especially the parts on asynchronous compute and pre-emption were very thorough.

A lot of nonsense was being spread about nVidia's alleged inability to do async compute in DX12, especially after Time Spy was released, and actually showed gains from using multiple queues.

Your article answers all the criticism, and proves the nay-sayers wrong.

Some of them went so far in their claims that they said nVidia could not even do graphics and compute at the same time. Even Maxwell v2 could do that.

I would say you have written the definitive article on this matter.

The_Assimilator - Wednesday, July 20, 2016 - link

Sadly that won't stop the clueless AMD fanboys from continuing to harp on that NVIDIA "doesn't have async compute" or that it "doesn't work". You've gotta feel for them though, NVIDIA's poor performance in a single tech demo... written with assistance from AMD... is really all the red camp has to go on. Because they sure as hell can't compete in terms of performance, or power usage, or cooler design, or adhering to electrical specifications...

tipoo - Wednesday, July 20, 2016 - link

Pretty sure critique was of Maxwell. Pascals async was widely advertised. It's them saying "don't worry, Maxwell can do it" to questions about it not having it, and then when Pascal is released, saying "oh yeah, performance would have tanked with it on Maxwell", that bugs people as it should

Scali - Wednesday, July 20, 2016 - link

Nope, a lot of critique on Time Spy was specifically *because* Pascal got gains from the async render path. People said nVidia couldn't do it, so FutureMark must be cheating/bribed.

darkchazz - Thursday, July 21, 2016 - link

It won't matter much though because they won't read anything in this article or Futuremark's statement on Async use in Time Spy.

And they will keep linking some forum posts that claim nvidia does not support Async Compute.

Nothing will change their minds that it is a rigged benchmark and the developers got bribed by nvidia.

Scali - Friday, July 22, 2016 - link

Yea, not even this official AMD post will: http://radeon.com/radeon-wins-3dmark-dx12/