The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Battlefield 4

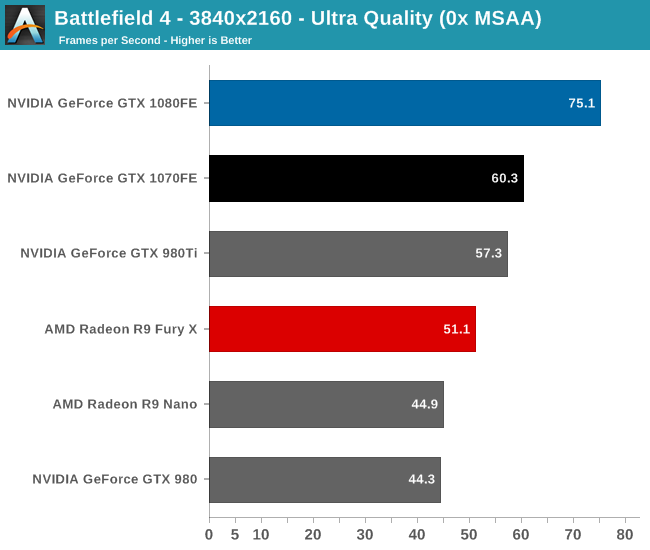

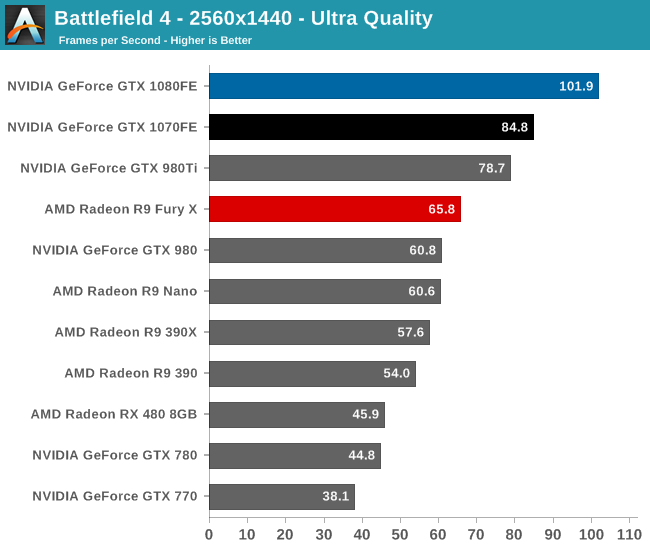

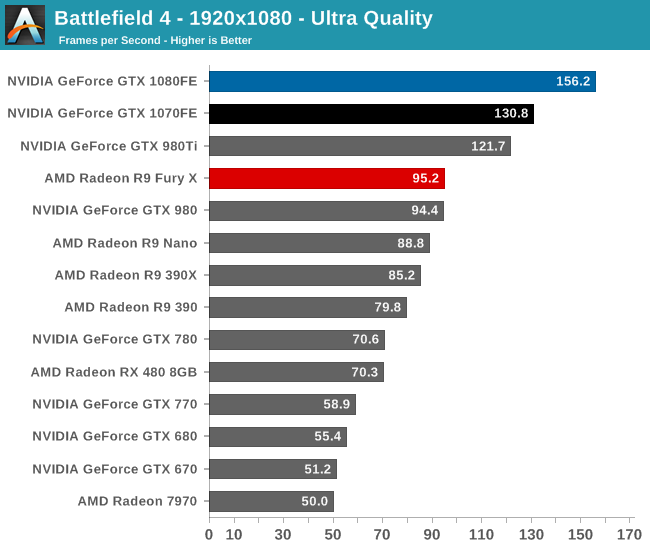

One of the older games in our benchmark suite, DICE’s Battlefield 4 remains a staple of multiplayer gaming. Even at its age, Battlefield 4 remains a challenging game in its own right, as very few mass market multiplayer shooters push the envelope on graphics quality right now. These benchmarks are from single player mode, and based on our experience, our rule of thumb is that multiplayer framerates will dip to half our single player framerates. That means a card needs to be able to average at least 60fps if it’s to hold up in multiplayer.
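The rule of thumb above is simple arithmetic, and can be sketched as follows. This is only an illustration: the function names are hypothetical, the 0.5 factor is the "dip to half" rule stated above, and the 30fps floor is the multiplayer minimum this page uses as its target.

```python
def estimated_multiplayer_fps(single_player_fps: float, dip_factor: float = 0.5) -> float:
    """Estimate the multiplayer framerate floor from a single player average.

    dip_factor=0.5 encodes the rule of thumb that multiplayer framerates
    dip to half of the single player result (an empirical rule, not a measurement).
    """
    return single_player_fps * dip_factor


def holds_up_in_multiplayer(single_player_fps: float, floor_fps: float = 30.0) -> bool:
    """Return True if the estimated multiplayer framerate stays at or above floor_fps."""
    return estimated_multiplayer_fps(single_player_fps) >= floor_fps


if __name__ == "__main__":
    # 60fps in single player is the break-even point for a 30fps multiplayer floor.
    for fps in (45.0, 60.0, 75.0):
        print(fps, estimated_multiplayer_fps(fps), holds_up_in_multiplayer(fps))
```

Under these assumptions, any single player average of 60fps or more clears the 30fps multiplayer floor, which is why 60fps is the number to watch in the charts.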

As a game that has traditionally favored NVIDIA, Battlefield 4 makes for a very clean sweep of the field. The GTX 1080 takes top honors with the GTX 1070 some distance behind it. Notably, the two Pascal cards become the first cards to cross 60fps at 4K, which means that they’re the first cards we can be reasonably sure won’t have framerate dips below 30fps in multiplayer.

Looking at our standard generational comparisons, both GTX 1080 and GTX 1070 improve upon their predecessors by about what we’d expect: 67% and 58% respectively. As for how GTX 1080 and GTX 1070 compare, we find that the GTX 1080 leads its cut-down sibling by between 20% and 25%, with the gap increasing with the resolution. This is consistent with what we know about GTX 1080, as its bandwidth advantage means that it’s going to have an easier time pushing pixels at 4K, as is the case here.

Finally, to check in on the GTX 680, we find the GTX 1080 has only improved in performance by 2.8x, which is actually a bit less of a gain than the average. Nonetheless, we’ve gone from a card that can’t quite muster 1080p with 4xMSAA to a card that can easily handle 4K without any MSAA.
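Since this page quotes some gains as percentages (67%, 58%) and others as multipliers (2.8x), it can help to put them on a common scale. The following is a minimal sketch of that conversion; the helper names are hypothetical, and the only figures used are the ones quoted in the text above.

```python
def percent_gain_to_multiplier(percent_gain: float) -> float:
    """Convert a percentage improvement (e.g. 67 for +67%) to a speedup multiplier."""
    return 1.0 + percent_gain / 100.0


def multiplier_to_percent_gain(multiplier: float) -> float:
    """Convert a speedup multiplier (e.g. 2.8 for 2.8x) to a percentage improvement."""
    return (multiplier - 1.0) * 100.0


if __name__ == "__main__":
    # Generational gains quoted in the text, expressed as multipliers.
    for label, gain in (("GTX 1080 vs. predecessor", 67.0),
                        ("GTX 1070 vs. predecessor", 58.0)):
        print(f"{label}: +{gain:.0f}% = {percent_gain_to_multiplier(gain):.2f}x")

    # The GTX 680 comparison quoted in the text, expressed as a percentage.
    print(f"GTX 1080 vs. GTX 680: 2.8x = +{multiplier_to_percent_gain(2.8):.0f}%")
```

Put this way, a 2.8x improvement is a 180% gain, while the 67% single-generation gain is a 1.67x multiplier.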

200 Comments

grrrgrrr - Wednesday, July 20, 2016 - link

Solid review! Some nice architecture introductions.

euskalzabe - Wednesday, July 20, 2016 - link

The HDR discussion of this review was super interesting, but as always, there's one key piece of information missing: WHEN are we going to see HDR monitors that take advantage of these new GPU abilities?

I myself am stuck at 1080p IPS because more resolution doesn't entice me, and there's nothing better than IPS. I'm waiting for HDR to buy my next monitor, but being 5 years old my Dell ST2220T is getting long in the teeth...

ajlueke - Wednesday, July 20, 2016 - link

Thanks for the review Ryan,

I think the results are quite interesting, and the games chosen really help show the advantages and limitations of the different architectures. When you compare the GTX 1080 to its price predecessor, the 980 Ti, you are getting an almost universal ~25%-30% increase in performance.

Against rival AMD's R9 Fury X, there is more of a mixed bag. As the resolutions increase, the bandwidth provided by the HBM memory on the Fury X really narrows the gap, sometimes trimming the margin to less than 10%, specifically in games optimized more for DX12 ("Hitman", "AotS"). But in other games, specifically "Rise of the Tomb Raider" which boasts extremely high res textures, the 4GB memory size on the Fury X starts to limit its performance in a big way. On average, there is again a ~25%-30% performance increase with much higher game to game variability.

This data lets a little bit of air out of the argument I hear a lot that AMD makes more "future proof" cards. While many Nvidia 900 series users may have to upgrade as more and more games switch to DX12 based programming, AMD Fury users will be in the same boat as those same games come with higher and higher res textures, due to the smaller amount of memory on board.

While Pascal still doesn't show the jump in DX12 versus DX11 that AMD's GPUs enjoy, it does at least show an increase or at least remain at parity.

So what you have is a card that wins in every single game tested, at every resolution over the price predecessors from both companies, all while consuming less power. That is a win pretty much any way you slice it. But there are elements of Nvidia’s strategy and the card I personally find disappointing.

I understand Nvidia wants to keep features specific to the higher margin professional cards, but avoiding HBM2 altogether in the consumer space seems to be a missed opportunity. I am a huge fan of the mini ITX gaming machines. And the Fury Nano, at the $450 price point, is a great card. With an NVMe motherboard and NAS storage the need for drive bays in the case is eliminated, and the Fury Nano at only 6” leads to some great forward thinking, and tiny designs. I was hoping to see an explosion of cases that cut out the need for supporting 10-11” cards and tons of drive bays if both Nvidia and AMD put out GPUs in the Nano space, but it seems not to be. HBM2 seems destined to remain on professional cards, as Nvidia won’t take the risk of adding it to a consumer Titan or GTX 1080 Ti card and potentially again cannibalizing the higher margin professional card market. Now case makers don’t really have the same incentive to build smaller cases if the Fury Nano will still be the only card at that size. It’s just unfortunate that it had to happen because Nvidia decided HBM2 was something they could slap on a pro card and sell for thousands extra.

But what is also disappointing about Pascal stems from the GTX 1080 vs GTX 1070 data Ryan has shown. The GTX 1070 drops off far more than one would expect based off CUDA core numbers as the resolution increases. The GDDR5 memory versus the GDDR5X is probably at fault here, leading me to believe that Pascal can gain even further if the memory bandwidth is increased more, again with HBM2. So not only does the card limit you to the current mini-ITX monstrosities (I’m looking at you bulldog) by avoiding HBM2, it also very likely is costing us performance.

Now for the rank speculation. The data does present some interesting scenarios for the future. With the Fury X able to approach the GTX 1080 at high resolutions, most specifically in DX12 optimized games, it seems extremely likely that the Vega GPU will be able to surpass the GTX 1080, especially if the greatest limitation (4GB HBM) is removed with the supposed 8GB of HBM2 and games move more and more to DX12. I imagine when it launches it will be the 4K card to get, as the Fury X already acquits itself very well there. For me personally, I will have to wait for the Vega Nano to realize my Mini-ITX dreams, unless of course, AMD doesn’t make another Nano edition card and the dream is dead. A possibility I dare not think about.

eddman - Wednesday, July 20, 2016 - link

The gap getting narrower at higher resolutions probably has more to do with chips' designs rather than bandwidth. After all, Fury is the big GCN chip optimized for high resolutions. Even though GP104 does well, it's still the middle Pascal chip.

P.S. Please separate the paragraphs. It's a pain, reading your comment.

Eidigean - Wednesday, July 20, 2016 - link

The GTX 1070 is really just a way for Nvidia to sell GP104's that didn't pass all of their tests. Don't expect them to put expensive memory on a card where they're only looking to make their money back. Keeping the card cost down, hoping it sells, is more important to them.

If there's a defect anywhere within one of the GPC's, the entire GPC is disabled and the chip is sold at a discount instead of being thrown out. I would not buy a 1070 which is really just a crippled 1080.

I'll be buying a 1080 for my 2560x1600 desktop, and an EVGA 1060 for my Mini-ITX build; which has a limited power supply.

mikael.skytter - Wednesday, July 20, 2016 - link

Thanks Ryan! Much appreciated.

chrisp_6@yahoo.com - Wednesday, July 20, 2016 - link

Very good review. One minor comment to the article writers - do a final check on grammer - granted we are technical folks, but it was noticeable especially on the final words page.

madwolfa - Wednesday, July 20, 2016 - link

It's "grammar", though. :)

Eden-K121D - Thursday, July 21, 2016 - link

Oh the irony

chrisp_6@yahoo.com - Thursday, July 21, 2016 - link

oh snap, that is some funny stuff right there