The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

SLI: The Abridged Version

Alongside its efforts to reduce input lag, NVIDIA is also rolling out some fairly important changes to SLI for Pascal. These operate at both the hardware level and the software level, and gamers fortunate enough to own multiple Pascal cards will want to pay close attention to them.

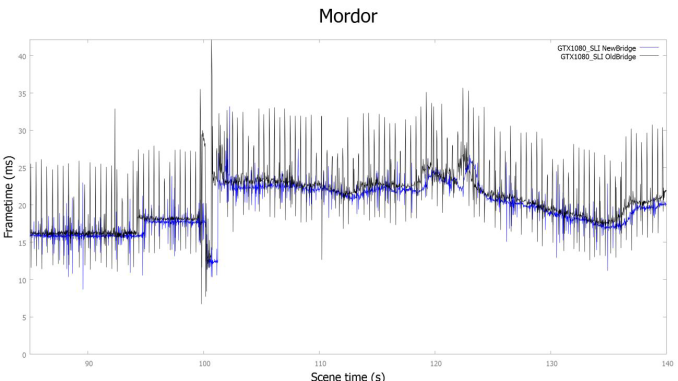

On the hardware side of matters, NVIDIA is boosting the speed of the SLI connection. Previously with Maxwell 2 it operated at up to 400MHz, but with Pascal it can now operate at up to 650MHz. This is a substantial 63% increase in link speed.

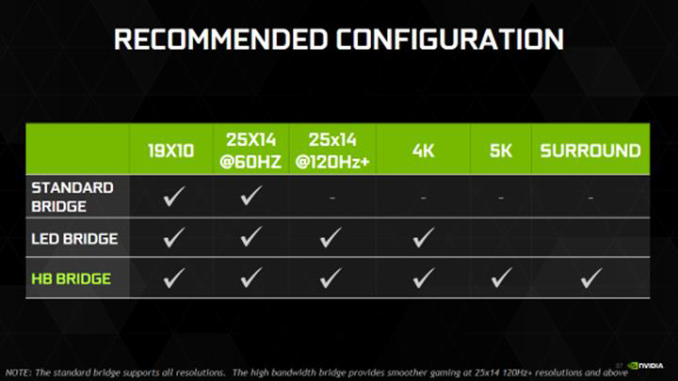

However, to actually get the faster link speed, in many cases new(er) SLI bridges are needed. The older bridges, particularly the flexible bridges, are neither rated for nor capable of supporting 650MHz. Only the more recent (and relatively rare) LED bridge and NVIDIA’s brand new High Bandwidth (HB) bridge are capable of 650MHz.

And while the older LED bridge is 650MHz capable, NVIDIA is still going to be phasing it out in favor of the new HB bridge. The reason is that the HB bridge adds support for Pascal’s second SLI hardware feature: SLI link teaming.

With previous GPU generations, a GPU could only use a single SLI link to communicate with another GPU. The purpose of including multiple SLI links on a high-end card then was to allow it to communicate with multiple (3+) cards. But if you had a more basic 2-way SLI setup, then the second link on each card would go unused.

Pascal changes this up by allowing the SLI links to be teamed. Now two cards can connect to each other over two links, almost doubling the amount of bandwidth between the cards. Combined with the higher frequency of the SLI link itself, the effective increase in bandwidth between cards in a 2-way SLI setup is 170%, or just short of 3x the previous bandwidth.
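To put those figures side by side, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above (400MHz, 650MHz, and the 170% overall increase) and assumes, purely for illustration, that the overall gain is simply the clock gain multiplied by the teaming gain. The function and constant names are hypothetical.

```python
# Link clocks quoted in the article (MHz).
MAXWELL2_LINK_MHZ = 400
PASCAL_LINK_MHZ = 650

# The article's quoted overall 2-way gain: a 170% increase, i.e. 2.7x.
QUOTED_TOTAL_INCREASE = 1.70


def clock_ratio(old_mhz: float, new_mhz: float) -> float:
    """Return new/old as a plain multiplier."""
    return new_mhz / old_mhz


def implied_teaming_factor(total_increase: float, clock_gain: float) -> float:
    """Back out the teaming gain implied by the quoted total.

    Assumption (not from NVIDIA): total gain = clock gain * teaming gain.
    """
    return (1.0 + total_increase) / clock_gain


clock_gain = clock_ratio(MAXWELL2_LINK_MHZ, PASCAL_LINK_MHZ)
teaming = implied_teaming_factor(QUOTED_TOTAL_INCREASE, clock_gain)

print(f"clock gain: {clock_gain:.3f}x ({(clock_gain - 1) * 100:.1f}% increase)")
print(f"implied teaming factor: {teaming:.2f}x")
```

Under that simple multiplicative assumption, a perfect doubling from teaming would work out to 3.25x overall, so the quoted 2.7x suggests that the second link contributes somewhat less than a full doubling in practice.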

The purpose of teaming SLI links is that even though the bandwidth boost from the higher link frequency is significant, for the highest resolutions and refresh rates it’s still not enough. By NVIDIA’s own admission, SLI performance at better than 1440p60 was subpar, as the SLI interface would get saturated. The faster link gets NVIDIA enough bandwidth to comfortably handle 2-way SLI at 1440p120 and 4Kp60, but that’s it. Once you go past that, to configurations that essentially require DisplayPort 1.3+ (4Kp120, 5Kp60, and multi-monitor surround), then even a single 650MHz link isn’t enough. Ergo NVIDIA has started link teaming to get yet more bandwidth.
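For a sense of scale, the sketch below compares the raw pixel rates of the display modes mentioned above, relative to 1440p60. It is only a rough proxy: it assumes the common 16:9 definitions of each resolution and ignores color depth, blanking, and however the driver actually packs frames onto the SLI link, none of which are covered here.

```python
# Display modes named in the article: (width, height, refresh in Hz).
# The pixel dimensions are the common 16:9 definitions, assumed here.
MODES = {
    "1440p60": (2560, 1440, 60),
    "1440p120": (2560, 1440, 120),
    "4Kp60": (3840, 2160, 60),
    "4Kp120": (3840, 2160, 120),
    "5Kp60": (5120, 2880, 60),
}


def pixel_rate(width: int, height: int, hz: int) -> int:
    """Pixels that must be delivered per second for this mode."""
    return width * height * hz


baseline = pixel_rate(*MODES["1440p60"])
relative = {name: pixel_rate(*mode) / baseline for name, mode in MODES.items()}

for name, ratio in relative.items():
    print(f"{name:>8}: {ratio:.2f}x the pixel rate of 1440p60")
```

The modes that the single faster link is said to handle sit at roughly twice the 1440p60 pixel rate, while 4Kp120 and 5Kp60 are at four times or more, which is the territory link teaming is meant to cover.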

Getting back to the new HB bridge then, the new bridge is being introduced to provide a bridge suitable for link teaming. Previous bridges simply weren’t wired to have multiple links connect the same video cards – the cards didn’t support such a thing – whereas HB bridges are. Meanwhile as these are fixed (PCB) bridges, NVIDIA is offering their reference bridges in 3 sizes: 2 (40mm), 3 (60mm), and 4 (80mm) slot spacing, to mesh with cards that are either directly next to each other, have 1 empty slot between them, or 2 empty slots between them. NVIDIA is selling the new HB bridge for $40 over on their store, and NVIDIA’s partners are also preparing their own custom bridges. EVGA has announced an LED-lit HB bridge, as the LED bridges proved rather popular with both system builders and customers looking for a bit more flair for their windowed cases.

Meanwhile, on a brief aside, I asked NVIDIA why they were still using SLI bridges instead of just routing everything over PCI Express. While I doubt they mind selling $40 bridges, the technical answer is that all things considered, this gave them more bandwidth. Rather than having to share potentially valuable PCIe bandwidth with CPU-GPU communication, the SLI links are dedicated links, eliminating any contention and potentially making them more reliable. The SLI links are also directly routed to the display controller, so there’s a bit more straightforward (lower latency) path as well.

Deprecated: 3-Way & 4-Way SLI

These aforementioned hardware updates to SLI are also having a major impact on the kinds of SLI configurations NVIDIA is going to be able (and willing) to support in the future. With both available SLI links on a Pascal card now teamed together for a single card, it’s not possible to do 3-way/4-way SLI and link teaming at the same time, as there aren’t enough links for both. As a result, NVIDIA is going to be deprecating 3-way and 4-way SLI.

Until shortly after the GTX 1080 launch, NVIDIA’s plans here were actually a bit more complex – involving a feature the company called an Enthusiast Key – but thankfully things have been simplified some. As it stands, NVIDIA is not going to be eliminating support for 3-way and 4-way SLI entirely; if you have a 3/4-way bridge, you can still set up a 3+ card configuration, bandwidth limitations and all. But for the Pascal generation they are going to be focusing their development resources on 2-way SLI, hence making 3-way and 4-way SLI deprecated.

In practice the way this will work is that NVIDIA will only be supporting 3 and 4-way SLI for a small number of programs – things like Unigine and 3DMark that are used by competitive benchmarkers/overclockers, so that they may continue their practices. For actual gamer use they are strongly discouraging anything over 2-way SLI, and in fact NVIDIA will not be enabling 3+ card configurations in their drivers for the vast majority of games (unless a developer specifically comes to them and asks). This all but puts an end to 3-way and 4-way SLI on consumer gaming setups.

As for why NVIDIA would want to do this, the answer boils down to two factors. The first of course is the introduction of SLI link teaming, while the second has to do with games themselves. As we’ve discussed in the past, game engines are increasingly becoming AFR-unfriendly, which is making it harder and harder to get performance benefits out of SLI. 2-way SLI is hard enough, never mind 3/4-way SLI where upwards of 4 frames need to be rendered concurrently. Consequently, with greater bandwidth requirements necessitating link teaming, Pascal is as good a point as any to deprecate larger SLI card configurations.
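As a toy illustration of why more GPUs means more frames in flight, here is a minimal sketch of alternate frame rendering (AFR) as a simple round-robin assignment. This is a simplified model for illustration only, not how NVIDIA's driver actually schedules work, and the function names are hypothetical.

```python
def afr_assign(frame_index: int, gpu_count: int) -> int:
    """Return which GPU renders a frame under simple round-robin AFR."""
    return frame_index % gpu_count


def frames_in_flight(first_frame: int, gpu_count: int) -> list[int]:
    """Frames being rendered at once when every GPU is kept busy."""
    return [first_frame + i for i in range(gpu_count)]


for gpus in (2, 4):
    schedule = [afr_assign(f, gpus) for f in range(8)]
    print(f"{gpus}-way: GPU per frame {schedule}, "
          f"concurrent frames {frames_in_flight(0, gpus)}")
```

In this model, a 4-way setup has frames 0 through 3 all being rendered at once, so any frame that needs results from the frame before it would have to wait on, or fetch data from, another GPU.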

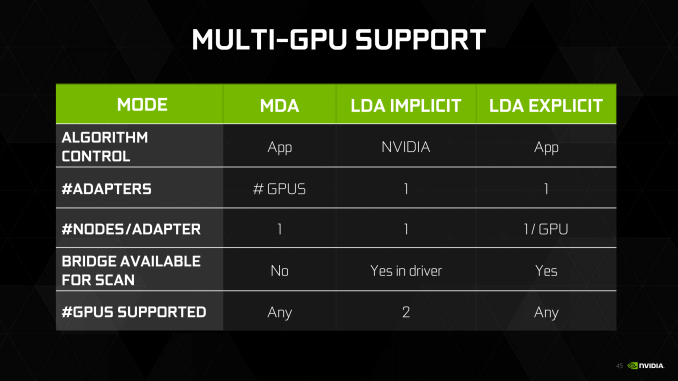

Now with all of that said, however, DirectX 12 makes the picture a little more complex still. Because DirectX 12 adds new multi-GPU modes – some of which radically change how mGPU works – NVIDIA’s own changes only impact specific scenarios. All DX9/10/11 games are impacted by the new 2-way SLI limit. However, whether a DX12 game is impacted depends on the mGPU mode used.

In implicit mode, which essentially recreates DX11 style mGPU under DX12, the 2-way SLI limit is in play. This mode is, by design, under the control of the GPU vendor and relies on all of the same mGPU technologies as are already in use today. This means traffic passes over the SLI bridge, and NVIDIA will only be working to optimize mGPU for 2-way SLI.

However with explicit mode, the 2-way limit is lifted. In explicit mode it’s the game developer that has control over how mGPU works – NVIDIA has no responsibility here – and it’s up to them to decide if they want to support more than 2 GPUs. In unlinked explicit mode this is all relatively straightforward, with the game addressing each GPU separately and working over the PCIe bus.

Meanwhile in explicit linked mode, where the relevant GPUs are presented as a single linked adapter, the GPU limit is still up to the developer. In this mode developers can even use the SLI bridge if they want – though again keeping in mind the bandwidth limitations – and it’s the most powerful mode for matching GPUs.
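The rules described above can be summarized in a small lookup, sketched here in Python. The enum and function names are made up for this illustration and do not correspond to any real DirectX or NVIDIA API; the sketch also leaves out the exceptions NVIDIA is making for benchmarks and for developers who specifically ask.

```python
from enum import Enum
from typing import Optional


class MgpuMode(Enum):
    LEGACY_SLI = "DX9/10/11 driver-managed SLI"
    DX12_IMPLICIT = "DX12 implicit"
    DX12_EXPLICIT_UNLINKED = "DX12 explicit, unlinked"
    DX12_EXPLICIT_LINKED = "DX12 explicit, linked"


def nvidia_gpu_limit(mode: MgpuMode) -> Optional[int]:
    """Return 2 where the GPU vendor controls mGPU, else None.

    None means the GPU count is up to the game developer.
    """
    if mode in (MgpuMode.LEGACY_SLI, MgpuMode.DX12_IMPLICIT):
        return 2
    return None


def can_use_sli_bridge(mode: MgpuMode) -> bool:
    """Whether traffic may go over the SLI bridge, per the text above.

    Unlinked explicit mode works over the PCIe bus instead.
    """
    return mode is not MgpuMode.DX12_EXPLICIT_UNLINKED


for mode in MgpuMode:
    limit = nvidia_gpu_limit(mode)
    cap = f"{limit}-way" if limit is not None else "developer's choice"
    print(f"{mode.value}: limit={cap}, SLI bridge={can_use_sli_bridge(mode)}")
```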

As for whether developers will actually want to support 3+ GPUs using DX12 explicit multiadapter, this remains to be seen. So far of the small number of games to even use it, none support 3+ GPUs, and as with NVIDIA-managed mGPU, the larger the number of GPUs the harder the task of keeping them all productive. We will have to see what developers decide to do, but outside of dedicated benchmarks (e.g. 3DMark) I would be a bit surprised to see developers support anything more than 2 GPUs.

200 Comments

grrrgrrr - Wednesday, July 20, 2016 - link

Solid review! Some nice architecture introductions.

euskalzabe - Wednesday, July 20, 2016 - link

The HDR discussion of this review was super interesting, but as always, there's one key piece of information missing: WHEN are we going to see HDR monitors that take advantage of these new GPU abilities?

I myself am stuck at 1080p IPS because more resolution doesn't entice me, and there's nothing better than IPS. I'm waiting for HDR to buy my next monitor, but being 5 years old my Dell ST2220T is getting long in the teeth...

ajlueke - Wednesday, July 20, 2016 - link

Thanks for the review Ryan,

I think the results are quite interesting, and the games chosen really help show the advantages and limitations of the different architectures. When you compare the GTX 1080 to its price predecessor, the 980 Ti, you are getting an almost universal ~25%-30% increase in performance.

Against rival AMD's R9 Fury X, there is more of a mixed bag. As the resolutions increase the bandwidth provided by the HBM memory on the Fury X really narrows the gap, sometimes trimming the margin to less than 10%, specifically in games optimized more for DX12 ("Hitman", "AotS"). But in other games, specifically "Rise of the Tomb Raider" which boasts extremely high res textures, the 4Gb memory size on the Fury X starts to limit its performance in a big way. On average, there is again a ~25%-30% performance increase with much higher game to game variability.

This data lets a little bit of air out of the argument I hear a lot that AMD makes more "future proof" cards. While many Nvidia 900 series users may have to upgrade as more and more games switch to DX12 based programming. AMD Fury users will be in the same boat as those same games come with higher and higher res textures, due to the smaller amount of memory on board.

While Pascal still doesn't show the jump in DX12 versus DX11 that AMD's GPUs enjoy, it does at least show an increase or at least remain at parity.

So what you have is a card that wins in every single game tested, at every resolution over the price predecessors from both companies, all while consuming less power. That is a win pretty much any way you slice it. But there are elements of Nvidia’s strategy and the card I personally find disappointing.

I understand Nvidia wants to keep features specific to the higher margin professional cards, but avoiding HBM2 altogether in the consumer space seems to be a missed opportunity. I am a huge fan of the mini ITX gaming machines. And the Fury Nano, at the $450 price point is a great card. With an NVMe motherboard and NAS storage the need for drive bays in the case is eliminated, the Fury Nano at only 6” leads to some great forward thinking, and tiny designs. I was hoping to see an explosion of cases that cut out the need for supporting 10-11” cards and tons of drive bays if both Nvidia and AMD put out GPUs in the Nano space, but it seems not to be. HBM2 seems destined to remain on professional cards, as Nvidia won’t take the risk of adding it to a consumer Titan or GTX 1080 Ti card and potentially again cannibalize the higher margin professional card market. Now case makers don’t really have the same incentive to build smaller cases if the Fury Nano will still be the only card at that size. It’s just unfortunate that it had to happen because NVidia decided HBM2 was something they could slap on a pro card and sell for thousands extra.

But also what is also disappointing about Pascal stems from the GTX 1080 vs GTX 1070 data Ryan has shown. The GTX 1070 drops off far more than one would expect based off CUDA core numbers as the resolution increases. The GDDR5 memory versus the GDDR5X is probably at fault here, leading me to believe that Pascal can gain even further if the memory bandwidth is increased more, again with HBM2. So not only does the card limit you to the current mini-ITX monstrosities (I’m looking at you bulldog) by avoiding HBM2, it also very likely is costing us performance.

Now for the rank speculation. The data does present some interesting scenarios for the future. With the Fury X able to approach the GTX 1080 at high resolutions, most specifically in DX12 optimized games. It seems extremely likely that the Vega GPU will be able to surpass the GTX 1080, especially if the greatest limitation (4 Gb HBM) is removed with the supposed 8Gb of HBM2 and games move more and more the DX12. I imagine when it launches it will be the 4K card to get, as the Fury X already acquits itself very well there. For me personally, I will have to wait for the Vega Nano to realize my Mini-ITX dreams, unless of course, AMD doesn’t make another Nano edition card and the dream is dead. A possibility I dare not think about.

eddman - Wednesday, July 20, 2016 - link

The gap getting narrower at higher resolutions probably has more to do with chips' designs rather than bandwidth. After all, Fury is the big GCN chip optimized for high resolutions. Even though GP104 does well, it's still the middle Pascal chip.

P.S. Please separate the paragraphs. It's a pain, reading your comment.

Eidigean - Wednesday, July 20, 2016 - link

The GTX 1070 is really just a way for Nvidia to sell GP104's that didn't pass all of their tests. Don't expect them to put expensive memory on a card where they're only looking to make their money back. Keeping the card cost down, hoping it sells, is more important to them.

If there's a defect anywhere within one of the GPC's, the entire GPC is disabled and the chip is sold at a discount instead of being thrown out. I would not buy a 1070 which is really just a crippled 1080.

I'll be buying a 1080 for my 2560x1600 desktop, and an EVGA 1060 for my Mini-ITX build; which has a limited power supply.

mikael.skytter - Wednesday, July 20, 2016 - link

Thanks Ryan! Much appreciated.

chrisp_6@yahoo.com - Wednesday, July 20, 2016 - link

Very good review. One minor comment to the article writers - do a final check on grammer - granted we are technical folks, but it was noticeable especially on the final words page.

madwolfa - Wednesday, July 20, 2016 - link

It's "grammar", though. :)

Eden-K121D - Thursday, July 21, 2016 - link

Oh the irony

chrisp_6@yahoo.com - Thursday, July 21, 2016 - link

oh snap, that is some funny stuff right there