Micron Confirms Mass Production of GDDR5X Memory

by Anton Shilov on May 12, 2016 3:00 PM EST

Micron Technology this week confirmed that it had begun mass production of GDDR5X memory. As revealed last week, the first graphics card to use the new type of graphics DRAM will be NVIDIA’s upcoming GeForce GTX 1080 graphics adapter powered by the company’s new high-performance GPU based on its Pascal architecture.

Micron’s first production GDDR5X chips (or, as NVIDIA calls them, G5X) will operate at 10 Gbps and will enable up to 320 GB/s of memory bandwidth for the GeForce GTX 1080. That is only slightly less than the 336 GB/s of NVIDIA’s current-generation flagships, the GeForce GTX Titan X and GTX 980 Ti, which use a much wider 384-bit memory bus. NVIDIA’s GeForce GTX 1080 video cards are expected to hit the market on May 27, 2016, and presumably Micron has been helping NVIDIA stockpile memory chips for the launch for some time now.

NVIDIA GPU Specification Comparison

| | GTX 1080 | GTX 1070 | GTX 980 Ti | GTX 980 | GTX 780 |
|---|---|---|---|---|---|
| TFLOPs (FMA) | 9 TFLOPs | 6.5 TFLOPs | 5.6 TFLOPs | 5 TFLOPs | 4.1 TFLOPs |
| Memory Clock | 10Gbps GDDR5X | GDDR5 | 7Gbps GDDR5 | 7Gbps GDDR5 | 6Gbps GDDR5 |
| Memory Bus Width | 256-bit | ? | 384-bit | 256-bit | 384-bit |
| VRAM | 8 GB | 8 GB | 6 GB | 4 GB | 3 GB |
| VRAM Bandwidth | 320 GB/s | ? | 336 GB/s | 224 GB/s | 288 GB/s |
| Est. VRAM Power Consumption | ~20 W | ? | ~31.5 W | ~20 W | ? |
| TDP | 180 W | ? | 250 W | 165 W | 250 W |
| GPU | "GP104" | "GP104" | GM200 | GM204 | GK110 |
| Manufacturing Process | TSMC 16nm | TSMC 16nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Launch Date | 05/27/2016 | 06/10/2016 | 05/31/2015 | 09/18/2014 | 05/23/2013 |
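Each entry in the VRAM Bandwidth row follows from the per-pin data rate and the memory bus width: peak bandwidth in GB/s equals the data rate in Gbps times the bus width in bits, divided by 8 bits per byte. A minimal Python sketch of that arithmetic, using the values from the table (the function name `peak_bandwidth_gb_s` is ours, chosen for illustration); it yields theoretical peak figures, not measured throughput:

```python
def peak_bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth: per-pin data rate x number of pins / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8


# (card, per-pin data rate in Gbps, memory bus width in bits), from the table
CARDS = [
    ("GTX 1080", 10, 256),
    ("GTX 980 Ti", 7, 384),
    ("GTX 980", 7, 256),
    ("GTX 780", 6, 384),
]

for name, rate_gbps, width_bits in CARDS:
    # Prints 320, 336, 224 and 288 GB/s, matching the VRAM Bandwidth row
    print(f"{name}: {peak_bandwidth_gb_s(rate_gbps, width_bits):.0f} GB/s")
```

The GTX 1070 is left out because its data rate and bus width are still listed as unknown.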

Earlier this year Micron began sampling GDDR5X chips rated for 10 Gb/s, 11 Gb/s and 12 Gb/s per pin in quad data rate (QDR) mode with a 16n prefetch. However, it appears that NVIDIA decided to be conservative and run the chips only at the lowest of those rated data rates, 10 Gb/s.
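To put those ratings in perspective, here is a simplified model: in QDR mode each pin makes four transfers per cycle of the data clock (WCK), and a 16n prefetch means each internal memory-array access supplies 16 bits per pin, so the array runs at 1/16 of the per-pin data rate. The Python sketch below assumes exactly that model plus the GTX 1080's 256-bit bus from the table; the function name is hypothetical, and real devices add command-clock and timing details it ignores.

```python
QDR_TRANSFERS_PER_WCK = 4    # quad data rate: 4 transfers per data-clock (WCK) cycle
PREFETCH_BITS_PER_PIN = 16   # 16n prefetch: 16 bits per pin per internal array access
GTX_1080_BUS_BITS = 256      # memory bus width of the GTX 1080, from the table


def gddr5x_figures(data_rate_gbps: float) -> tuple[float, float, float]:
    """Return (WCK in GHz, array access rate in MHz, GTX 1080 peak bandwidth in GB/s)."""
    wck_ghz = data_rate_gbps / QDR_TRANSFERS_PER_WCK
    array_mhz = data_rate_gbps / PREFETCH_BITS_PER_PIN * 1000
    bandwidth_gb_s = data_rate_gbps * GTX_1080_BUS_BITS / 8
    return wck_ghz, array_mhz, bandwidth_gb_s


# The three speed grades Micron sampled
for rate in (10, 11, 12):
    wck, array, bw = gddr5x_figures(rate)
    print(f"{rate} Gbps: WCK {wck:.2f} GHz, array {array:.1f} MHz, {bw:.0f} GB/s")
```

Under this model, the 12 Gbps grade on the same 256-bit bus would reach 384 GB/s, compared with 320 GB/s at the 10 Gbps NVIDIA chose.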

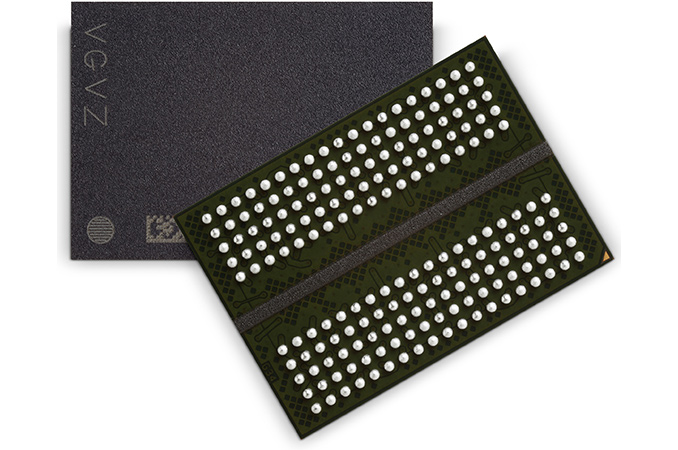

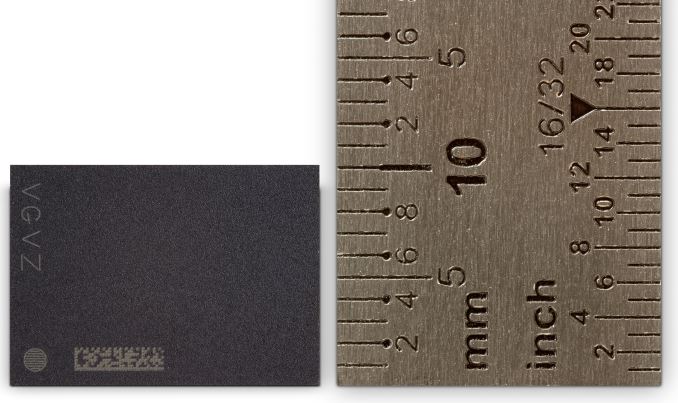

As reported, Micron’s first GDDR5X memory ICs (integrated circuits) have an 8 Gb (1 GB) capacity and a 32-bit interface, and they use a 1.35 V supply and I/O voltage as well as a 1.8 V pump voltage (Vpp). The chips come in 14×10 mm 190-ball BGA packages, so they take up a little less space on graphics cards than GDDR5 ICs.
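These per-chip figures line up with the GTX 1080's specifications. Assuming one chip per 32-bit slice of the memory bus, eight 1 GB chips give the card its 8 GB and its 256-bit bus; combined with the 16n prefetch, each access to one chip moves 16 × 32 bits = 64 bytes. A minimal Python check of that arithmetic (the constant names are ours, for illustration):

```python
CARD_VRAM_GB = 8            # GTX 1080 VRAM, from the table
CHIP_CAPACITY_GBIT = 8      # 8 Gb per Micron GDDR5X IC
CHIP_INTERFACE_BITS = 32    # per-chip interface width
PREFETCH_BITS_PER_PIN = 16  # 16n prefetch

chip_capacity_gb = CHIP_CAPACITY_GBIT // 8                # 8 Gb = 1 GB
chip_count = CARD_VRAM_GB // chip_capacity_gb             # chips needed for 8 GB
bus_width_bits = chip_count * CHIP_INTERFACE_BITS         # assumes one 32-bit slice per chip
access_bytes = PREFETCH_BITS_PER_PIN * CHIP_INTERFACE_BITS // 8  # bytes per access per chip

print(chip_count, bus_width_bits, access_bytes)
```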

The announcement by Micron indicates that the company will be the only supplier of GDDR5X memory for NVIDIA’s GeForce GTX 1080 graphics adapters, at least initially. More importantly, it confirms that GDDR5X is real: it is now in mass production, and it can indeed replace GDDR5 as a cost-efficient memory for gaming graphics cards. How affordable is GDDR5X? It should not be too expensive, particularly as it is designed as an alternative to more complex technologies such as HBM, but this early in the game it is definitely a premium product compared with tried-and-true (and widely available) GDDR5.

Source: Micron

59 Comments


BurntMyBacon - Friday, May 13, 2016

@Yojimbo: "Purposely holding it back? ... They have to worry too much about competition for that."

Current evidence to the contrary. AMD and nVidia seem to be targeting two completely different market spaces. AMD states that Polaris 10 will be R9-390(X) level performance where nVidia is targeting the space above the GTX980Ti.

@Yojimbo: "Their gross margins would plummet if they spend billions of dollars developing and manufacturing a highly capable chip and then don't take full advantage of it by pairing it with sub-optimal memory configurations, because their selling prices would be lower."

Why? They are selling these things for $600/$700. Using an inferior memory pairing doesn't magically lower that price. The selling price won't need to drop until competition enters the field so the only thing an inferior memory pairing might do at this point is lower costs and increase their gross margins.

That all said, I don't think nVidia is "purposely holding back" either. Add-In-Board partners pairing the GPU with higher speed GDDR5X is entirely likely (Micron can go up to 12Gbps). This feels more like Kepler (600 / 700 series) where nVidia had to wait until yields were high enough for such large chips to be cost effective in the consumer market. We'll no doubt see higher bandwidth memory configurations when they get to market.

@Yojimbo: "I doubt HBM will find its way onto a consumer graphics-oriented GPU that has a die size close to that of GP104. GDDR5X still has headroom above its implementation in the 1080."

Agreed. Perhaps with later iterations and lower costs, but not as the technology currently stands.

Yojimbo - Friday, May 13, 2016

"Current evidence to the contrary. AMD and nVidia seem to be targeting two completely different market spaces. AMD states that Polaris 10 will be R9-390(X) level performance where nVidia is targeting the space above the GTX980Ti."

First of all that isn't "evidence to the contrary", that's just an opening of opportunity. It's like saying you have "evidence" that John will rob a bank because the vault door will be left unlocked. Secondly, the money spent on R&D on Polaris is a sunk cost. The amount it costs to manufacture doesn't change significantly by purposely gimping the chip with not enough memory bandwidth. The higher the performance of the GPU relative to what else is out there the more they can charge for the GPU. Since the costs are mostly fixed, what do they have to gain by gimping the performance and selling the card for lower margins? As an aside, AMD isn't targeting the 390(X) level of performance with their flagship Polaris card, they are targeting the market segment that the 390(X) currently occupies. NVIDIA is not targeting the 980Ti market segment with the 1080, it's targeting the 980 market segment. The new x80 market segment will have a performance greater than the 980Ti. The new x90(X) market segment will have a performance greater than that of the 390(X).

"Why? They are selling these things for $600/$700. Using an inferior memory pairing doesn't magically lower that price. The selling price won't need to drop until competition enters the field so the only thing an inferior memory pairing might do at this point is lower costs and increase their gross margins."

You're right. Pairing the cards with debilitating memory doesn't magically lower the price they can charge for the GPUs, it does so in a quite logical and understandable way. Even without AMD competing directly in the segment (although they will be with Fury), the greater the performance delta between the 1080 and Polaris 10, the more NVIDIA can charge for the 1080. Also consider that AMD will eventually release Vega, which NVIDIA will eventually have to compete with. If NVIDIA has to re-engineer a new card and worry about properly clearing the inventory of the 1080 in order to compete with the Vega that's going to be enormously inefficient. It just makes a whole lot more sense to engineer and sell a non-gimped card to begin with.

"That all said, I don't think nVidia is "purposely holding back" either."

You also said:

"@Yojimbo: "Purposely holding it back? ... They have to worry too much about competition for that."

Current evidence to the contrary. AMD and nVidia seem to be targeting two completely different market spaces. AMD states that Polaris 10 will be R9-390(X) level performance where nVidia is targeting the space above the GTX980Ti."

Which is it? Do you think they are purposely holding it back or not? Why are you bothering to try to provide "evidence" for something you don't believe?

xthetenth - Tuesday, May 17, 2016

1080 is priced much less on price/performance and more on being the fastest card right now. Taking extra time to come out with faster memory to make it faster is directly counterproductive to that appeal.

xthetenth - Tuesday, May 17, 2016

They're not purposely holding back Pascal; they're building it to get it out now rather than in 2017, which is when HBM2 is going to be available in enough supply to sell to customers, or however long it takes to get better clocks out of GDDR5X.

aznchum - Thursday, May 12, 2016

I believe the GK110 was a 384-bit bus with 288 GB/s of VRAM bandwidth.

LarsonP - Friday, May 13, 2016

GDDR5X is right here... right now. See you in Q2 2018, HBM 2!

Yojimbo - Friday, May 13, 2016

You mean Q2 2017?

willis936 - Saturday, May 14, 2016

We're in Q2 2017 right now :p

Yojimbo - Saturday, May 14, 2016

Only if you're operating on NVIDIA fiscal year time.