Intel Unveils New Low-Cost PC Platform: Apollo Lake with 14nm Goldmont Cores

by Anton Shilov on April 15, 2016 6:00 PM EST

This week, at IDF Shenzhen, Intel has formally introduced its Apollo Lake platform for the next generation of Atom-based notebook SoCs. The platform will feature a new x86 microarchitecture as well as a new-generation graphics core for increased performance. Intel’s Apollo Lake is aimed at affordable all-in-ones, miniature PCs, hybrid devices, notebooks and tablet PCs in the second half of this year.

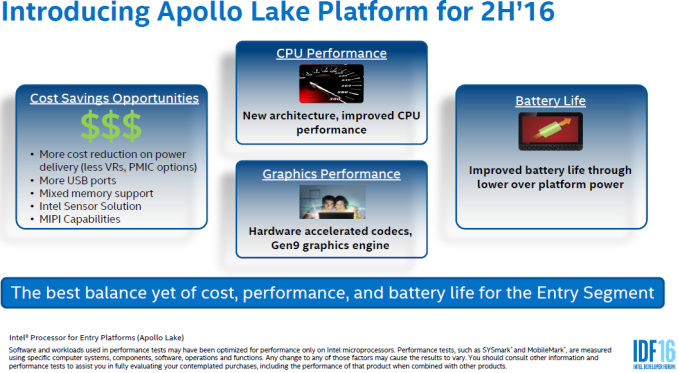

The Apollo Lake systems-on-chip for PCs are based on the new Atom-based x86 microarchitecture, named Goldmont, as well as a new graphics core that features Intel’s ninth-generation architecture (Gen9), which is currently used in Skylake processors. Intel claims that due to microarchitectural enhancements the new SoCs will be faster in general-purpose tasks, but at this stage Intel has not quantified the improvements. The new graphics core is listed as being more powerful (most likely due to both a better architecture and a higher count of execution units), and will also integrate more codecs, enabling hardware-accelerated playback of 4K video through hardware decoding of HEVC and VP9. The SoCs will support dual-channel DDR4, DDR3L and LPDDR3/4 memory, which will let PC makers choose DRAM based on performance and cost. As for storage, Apollo Lake will support traditional SATA drives, PCIe x4 drives and eMMC 5.0 options to appeal to all types of form-factors. When it comes to I/O, Intel proposes to use USB Type-C along with wireless technologies in Apollo Lake-powered systems.

Comparison of Intel's Entry-Level PC and Tablet Platforms

| | Bay Trail | Braswell | Cherry Trail | Apollo Lake |
|---|---|---|---|---|
| Microarchitecture | Silvermont | Airmont | Airmont | Goldmont |
| SoC Code-Name | Valleyview | Braswell | Cherryview | unknown |
| Core Count | Up to 4 | Up to 4 | Up to 4 | Up to 4 |
| Graphics Architecture | Gen7 | Gen8 | Gen8 | Gen9 |
| EU Count | unknown | 16 | 12/16 | unknown (24?) |
| Multimedia Codecs | MPEG-2, MPEG-4 AVC, VC-1, WMV9, HEVC (software only), VP9 (software only) | MPEG-2, MPEG-4 AVC, VC-1, WMV9, HEVC (8-bit software/hybrid), VP9 (software/hybrid) | MPEG-2, MPEG-4 AVC, VC-1, WMV9, HEVC (8-bit software/hybrid), VP9 (software/hybrid) | MPEG-2, MPEG-4 AVC, VC-1, WMV9, HEVC, VP9 |
| Process Technology | 22 nm | 14 nm | 14 nm | 14 nm |
| Launch | Q1 2014 | H1 2015 | 2015 | H2 2016 |

In its IDF presentation, Intel shares only a few brief details regarding its new Apollo Lake design platform, and does not disclose exact specifications or performance numbers. At this point, based on 14nm Airmont designs, it is pretty safe to assume that the new SoCs will contain up to four Goldmont cores in consumer devices but perhaps 8+ in communications and embedded systems. Intel has not specified the TDP of its new processors but claims that power management features of the platform will help it to improve battery life compared to previous-gen systems (which might point to a Speed Shift-like arrangement similar to what we see on Skylake, perhaps). While Intel does not reveal specifics of its own SoCs, the company shares its vision for the upcoming PCs powered by the Apollo Lake platform.

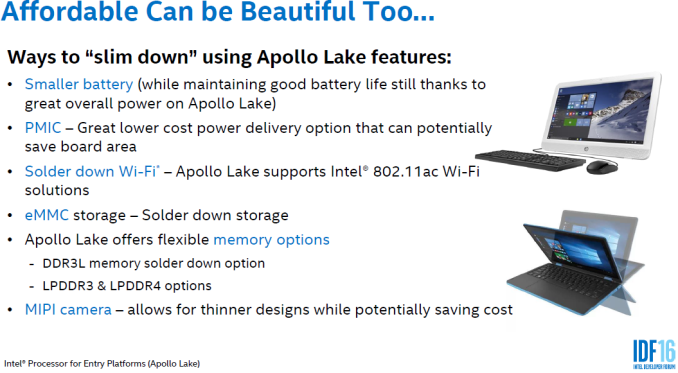

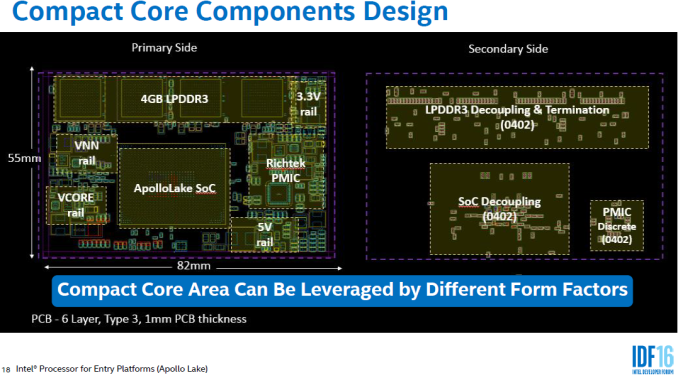

Firstly, Intel believes that the upcoming affordable PCs, whether these are all-in-one desktops, miniature systems, 2-in-1 hybrids, laptops or tablets, should be very thin. According to the company's market research, this will make the devices more attractive to buyers. To make systems thinner, Intel traditionally proposes to use either M.2 or solder-down eMMC solid-state storage options instead of 2.5” HDDs/SSDs. In addition, the company believes that it makes sense to use solder-down Wi-Fi instead of a separate module. Intel seems to be especially proud of the compactness of the Apollo Lake SoC (as well as other core components) and thus the whole platform, which is another factor that will help to make upcoming systems thinner. For the first time in recent years, Intel also proposes the use of smaller batteries, arguing that devices can maintain long battery life by cutting the power consumption of the entire platform. While in many cases a reduction of battery size makes sense, it should be noted that high-resolution displays typically consume a lot of energy, which is why it is hard to reduce the size of batteries while maintaining the visual experience along with a long battery life.

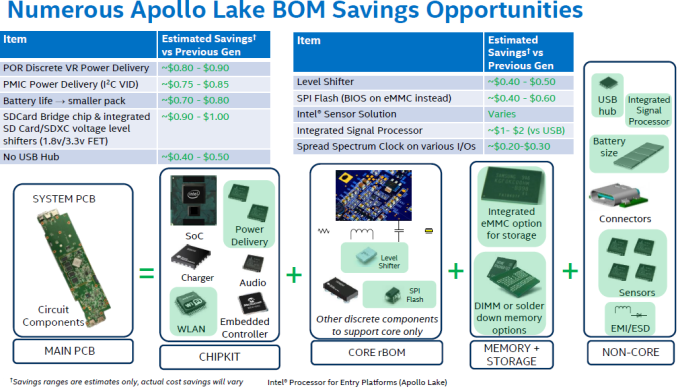

Secondly, PCs based on Apollo Lake should be very affordable, which is why Intel’s reference core components design can be used for different form-factors (AiO and mobile). Additionally, the company reveals a number of BOM (bill of materials) savings opportunities, which are a result of a higher level of SoC integration as well as a recommended choice of components. In the slide above, using all the savings can make a difference in BOM of between $5.55 and $7.35, which could mean double the memory or a better display for the same price in the new generation.

Intel’s reference design for Apollo Lake-based PCs seems to be a tablet/2-in-1 hybrid system with an 11.6” full-HD (1920x1080) 10-point multi-touch display, 4 GB of LPDDR3-1866 memory, 64 GB M.2 SATA3 SSD or 32 GB eMMC storage, an M.2 wireless module supporting 802.11ac, an optional M.2 LTE modem, an integrated USB 2 camera, a host of sensors (accelerometer, ambient light, proximity detection, and magnetic switching) as well as a USB Type-C connector supporting USB power delivery and alternate modes. Such a reference design can power not only mobile devices, but also AiO and even small form-factor desktop PCs. Still, given the fact that we are talking about low-cost systems, do not expect retail computers to feature multiple storage devices and LTE modems. However, PC makers may opt for more advanced displays as well as better integrated cameras, or an SI might plump for a half-price 'Macbook-like' device design using Type-C, albeit on the Atom microarchitecture. This is Intel's vision for the next generation of Chromebooks: the 'cloud book' market.

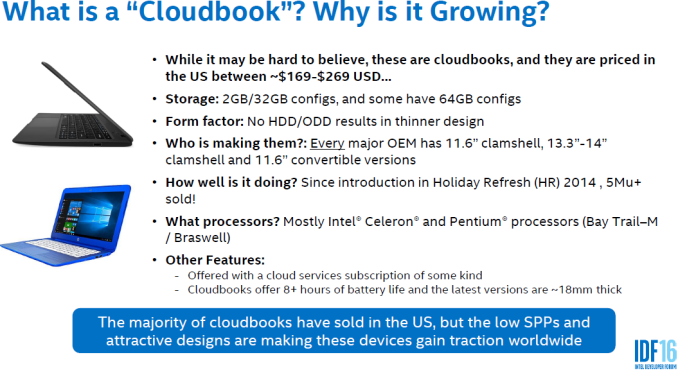

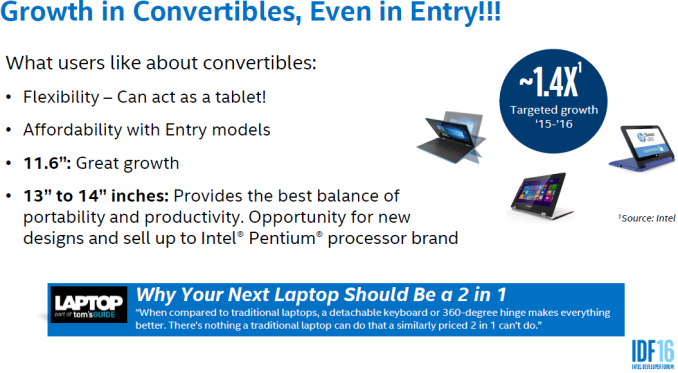

PCs based on Intel’s Apollo Lake platforms will emerge in the second half of the year and will carry Celeron and Pentium-branded processors. At present, entry-level notebooks (which Intel calls Cloudbooks) offer 2 GB of memory, 32 GB of storage, 8+ hours of battery life and ~18mm thick designs. With Apollo Lake, OEMs should be able to increase the amount of RAM and/or storage capacity, make systems generally thinner, but maintain their $169 - $269 price-points. Intel also believes that its Apollo Lake presents great opportunities to build 2-in-1 hybrid PCs (convertibles) and capitalize on higher margins of such systems.
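To put the quoted BOM savings in perspective, the short Python sketch below expresses the $5.55 to $7.35 range from Intel's slide as a share of the $169 to $269 price band. Both ranges come from the figures above; comparing a BOM saving directly against a retail price is our own simplifying assumption (retail prices also include margins, logistics and taxes), and the function names are purely illustrative.

```python
# BOM savings range quoted from Intel's slide (USD per system).
BOM_SAVINGS = (5.55, 7.35)
# Price band Intel cites for entry-level Cloudbooks (USD).
RETAIL_PRICES = (169.0, 269.0)


def savings_share(saving: float, price: float) -> float:
    """Return the BOM saving as a percentage of the system price."""
    return 100.0 * saving / price


def savings_table() -> dict:
    """Map every (saving, price) pair to its percentage share, rounded to 2 dp."""
    return {
        (s, p): round(savings_share(s, p), 2)
        for s in BOM_SAVINGS
        for p in RETAIL_PRICES
    }


if __name__ == "__main__":
    for (saving, price), share in savings_table().items():
        print(f"${saving:.2f} saved on a ${price:.0f} system = {share:.2f}% of the price")
```

Under these assumptions the savings work out to roughly 2% to a little over 4% of the price of a system, which is a small amount per unit but meaningful at the thin margins typical of this segment.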

Traditionally, Intel discusses options and its vision, but not actual PCs, at its IDF trade-shows. It remains to be seen whether PC makers decide to build low-cost convertibles or ultra-thin notebook designs, or will stick to more traditional clamshell notebooks. In fact, we will learn more about BTS (back-to-school) plans of major PC OEMs regarding Apollo Lake at the upcoming Computex trade-show in early June. There's also IDF San Francisco in August where Intel may open some lids on how the new Goldmont core differs from Airmont.

Source: Intel

95 Comments


name99 - Friday, April 15, 2016 - link

Except that the product segmentation is what is made so problematic BECAUSE of their insistence on x86 ISA... If they used a different ISA, there would be much less fear about Atom cannibalizing the high end, and they wouldn't need such strong crippling of Atom.

And the delay in implementation is because of the x86 ISA... Implementing a high end ARM CPU takes, worst case, around 4 years. The equivalent figure for x86 was 7 years at the time of Nehalem, and is now probably 8 years or more.

x86 is not ORTHOGONAL to the issues you have identified as a problem, it is a CAUSE of them.

Reflex - Saturday, April 16, 2016 - link

I do not agree with this. Part of what makes Intel chips so expensive is an advantage mentioned above: Their class leading process technology. You cannot escape that cost. Intel has the most expensive to operate fabs in the world, if they produced a completely generic ARM chip with no special features it would still have to be sold for more money than what any of their competitors could sell theirs for.

Given that, Intel has to have an advantage that makes that higher initial cost worthwhile. The advantage they attempted to leverage was x86 compatibility. And it is a powerful lever. But their lack of full commitment for a variety of reasons made it not worth it to vendors to consider. If they had had a highly integrated SoC based on x86 in 2010, ARM would likely already be reduced to the very low end. But instead they avoided that for reasons you are correct about above (product segmentation).

Simply aping the ARM ISA and making a cheap SOC out of it would have still produced an overpriced product for these market segments. And even then, you still aren't addressing the fact that they have repeatedly failed to create any of the necessary supporting logic for a chip, such as a decent LTE modem. That does not change, even if it was an ARM chip.

jasonelmore - Saturday, April 16, 2016 - link

well, when you shrink transistors and go to a smaller process, you save money per transistor. That was the main reason we advanced these lithography processes. Now we are shrinking for power savings.

Intel has a 20% lead over Samsung and TSMC 14/16nm.. Nobody can touch their SRAM tech, which determines size of cache. ARM/Samsung are marketing 14/16nm, but their size is really 20nm.

https://www.semiwiki.com/forum/content/3759-intel-...

epobirs - Saturday, April 16, 2016 - link

And why would anyone buy a non-x86 compatible product from Intel instead of a different ISA from another vendor? Running existing x86 code is a huge, huge asset they aren't going to let go unless they can find some great advantage elsewhere they can leverage. Remember, Intel was already in the ARM business a long time ago and sold it off. This was before mobile turned huge but it seems unlikely they were completely blind to that prospect. They just didn't see any advantage for them to wield against other ARM vendors that was greater than x86 compatibility.

name99 - Saturday, April 16, 2016 - link

You're missing the point. Intel had the chance to create mobile chips that were superior to what was available from ARM (because they had superior process and circuits). Why would companies have bought them? Uh, because they were SUPERIOR.

Instead, by sticking with x86, they were late to the party, shipping garbage that no-one actually wants (how many phones with x86? how many watches do you expect to see with quark?) but the stupidity doesn't end there, because not only did they not acquire the market they hoped for, they're slowly hurting the market they actually care about.

Look, if it were such a great strategy, then why do we keep seeing headlines like this:

http://www.oregonlive.com/silicon-forest/index.ssf...

(and that follows layoffs last year...)

Intel is not going to disappear tomorrow. But there is a difference between simply hanging on doing the same old same old as ten years ago, and being part of the future. Intel is now in the same sort of place as a company like IBM --- they have their niche, they make plenty of money, but for the most part they're just not very interesting, and they're unlikely ever to be so. They've swapped innovation for repetition; they've swapped what could have been more spectacular growth for flat profits.

Basically textbook Clayton Christensen stuff.

Reflex - Sunday, April 17, 2016 - link

I'm not sure how many different ways I can explain this, but let's just make some bullet points:

- The ISA is irrelevant here. x86 does not cost any more or less power than ARM inherently. x86 does not perform any better or worse than ARM inherently. x86 is not any harder or easier to develop new designs for inherently.

- The reason Intel's designs take years to complete has nothing to do with the ISA. It has to do with the fact that a given design has to serve more markets. In other words, it has to scale. ARM, on the other hand, serves only very specific markets, and they do not have to worry about how scalable their designs are.

- The reason scaling is important is that it permits Intel to spread the cost of fab research and upgrades across more products, which permits them to fund continuing research to stay ahead of their competition in this space. Take away that advantage and they would soon NOT be class leading on process tech.

- The argument that if Intel just used their existing 14nm fabs to build ARM chips they would have a superior product is misleading. Given the R&D cost and build cost that went into those fabs, and given that they cannot sell silicon at a loss, the base price on those chips would be around 2x the base price of a comparable Qualcomm design. While it is true that the CPU's would likely be able to perform at a higher level while consuming less power, no one will double their bill of materials cost for that when the end result is going into a tablet or phone. There is a reason why AMD's ARM efforts are aimed at the server room, where absolute lowest cost is not important.

- Given that simply having higher performance can't justify a higher price, why jettison x86 compatibility at all? It's a huge potential asset.

- And finally, none of that addresses the fact that Intel's real issue is lack of integration. They have no products that contain all that is needed in one simple SoC. Their efforts at a LTE modem have been crap, and numerous other typically integrated parts simply don't exist for Intel, meaning that if you go Atom you are stuck not only paying more for the CPU, but paying more for all the extra logic you have to buy to make it functional in a phone design vastly inflating your cost and reducing your power efficiency. That aspect is the largest impediment to success, and it is not addressed by taking the Atom and making it ARM.

name99 - Sunday, April 17, 2016 - link

The problem is that you keep thinking I am using arguments that I am not.

I am NOT saying that x86 imposes a power or a performance barrier.

I am saying that it

(a) imposes a COMPLEXITY barrier. To design a performant x86 CPU seems to take 1.5 to 2x as long as designing an equivalent RISC CPU (POWER, SPARC, ARM, I don't care). We have two pieces of evidence for this:

- we know how long it takes to design Intel CPUs, and we have a pretty good idea how long it takes to design their competitors

- we've had AMD say words to this effect when asked why they were considering designing an ARM server chip

(b) the use of a more-or-less identical instruction set for Intel's low-end as their high-end means that it is OBVIOUS that the low-end will compete against the high-end. This is not the case when a different instruction set is used. Again, this is a business fact, it is not a matter of opinion. The alternative instruction set Intel used did not have to be ARM (though given Intel's track record with designing instruction sets, they should DEFINITELY have outsourced the problem to someone with competence in this space), it just had to be different from x86. One weak alternative, for example, which would still somewhat solve the complexity problem of (a) would have been to use a maximally simplified version of the x86-64 instruction set and machine mode. Dump everything from the 16 and 32-bit world, dump x87, dump PAE and SMM and segment registers and 4 rings and all the rest of it.

Present a user facing instruction set that accepts purely 64-bit code (though, if you insist, with the same assembly language), and an OS-facing model that looks like the standard sort of RISC model --- hypervisor, OS, user, hierarchical page tables --- and dump the obsolete IO stuff that is still hanging around --- broken timer models, broken interrupt models, etc.

This would have allowed for very rapid creation/porting of compilers, assemblers, and other dev tools; and would have allowed any modern code to be compiled to this new target just fine.

But Intel couldn't figure out what the actual goal was. Was the goal to create a performant phone chip and build a completely new business every bit the equal of the existing PC business? Or was the goal to run Windows and DOS code from 1980 on small form factor devices?

For some reason (my theory being that they are utterly deluded about the value of absolute x86 compatibility) they assumed the second goal was vastly more important than the first. Well, we've seen how that turned out.

I'll point out that we have an example of a company that does exactly what I've suggested and handles it just fine, namely IBM. IBM sells z-series at the high end and POWER at the (for IBM) low end. Completely different instruction sets and machine models, with completely different constraints on how each can be evolved. I suspect there is a fair bit of sharing between the two teams as appropriate (process technology, packaging, circuits, verification, perhaps even some aspects of the micro-architecture like the branch predictors and pre-fetchers); and this is the sort of sharing I'd expect Intel likewise to have engaged in.

Reflex - Sunday, April 17, 2016 - link

I'm sorry but you really don't understand Intel's fabrication facilities. They have no ability to simply slap an ARM design out at 14nm. Their facilities are designed hand in hand with their CPU designs, each complementing the other. The reason their designs take so long has nothing to do with the complexity of x86, indeed they have been producing revamped designs every 2 years until just recently. The reason it takes so long (and I submit the tick-tock schedule was as fast as anything ARM produced) is that they have considerations from the top to the bottom, and that scalability adds complexity.

As for the rest where you say what Intel 'should' do, well all I can say is that CPU design is not that simple, and you just made a design that is nothing like any other ARM player, which would mean a lot of work would have to go into it and it would not be compatible with a lot of existing software up and down the stack.

And you still seem to be avoiding the obvious: Even if everything you say there were true, how does any of that have anything to do with the fact that Intel does not have the component pieces, such as integrated LTE modems, required to make a competitive product? Even if they could magically by adopting ARM create a CPU that permitted annual updates on their latest and greatest processes, that really does not get them past the problem that the product is not what OEM's actually need.

name99 - Sunday, April 17, 2016 - link

Jesus! I tell you MULTIPLE times that I am NOT talking about Intel buying an ARM physical design and fabbing that, and yet you keep claiming that is my suggestion. I mean, half the above comment (the one you replied to) talks about an Intel solution based on (and only on) the x86-64 instruction set!

This suggests that you are more interested in pushing an agenda than in understanding the world, or your fellow commenters.

Likewise, you may have noticed that Apple has not (so far) included an LTE modem on their SoC. Hmm. Seems to suggest that it is quite possible to create a compelling (and low enough power solution) by using external radio components. Hmm. And the rumors are that the A10 will come with an LTE baseband on the SoC. Provided by Intel no less. Hmm.

So once again, I have to wonder what's going on in your head, given your eagerness to

(a) utterly misrepresent what you read (even when it is repeatedly explained to you that you ARE misrepresenting it), and that you

(b) seem more than a little unaware of just what exists in Intel's product portfolio.

Strunf - Monday, April 18, 2016 - link

X86 is what keeps Intel relevant. Intel already tried the other ISA approach it was the IA-64... how did it go? A new ISA means a huge risk, you need invested partners and to provide them with a cost competitive solution, even if it was better at twice the price no one cares.

Why do you think MS and SONY went the AMD way despite Intel+nVIDIA probably was a more performant solution?

Even if Intel was making ARM SOCs why would Samsung buy from them when they can make their own SOCs, why would Apple buy from Intel when they can design their own SOCs and then shop around for a foundry?...