The Intel Xeon E5 v4 Review: Testing Broadwell-EP With Demanding Server Workloads

by Johan De Gelas on March 31, 2016 12:30 PM EST - Posted in CPUs, Intel, Xeon, Enterprise, Enterprise CPUs, Broadwell

TSX

TSX, or Transactional Synchronization Extensions, is Intel's cache-based transactional memory system. Intel launched TSX with Haswell, but a bug threw a spanner in the works, and Intel disabled the feature with a microcode update. Broadwell in turn got it right. The chicken is finally here; now it's time to enjoy the eggs.

Faster Virtualization

Virtualization overhead is, for most people, a thing of the past. The performance overhead of bare-metal hypervisors (ESXi, Hyper-V, Xen, KVM...) is no more than a few percent. There is one exception, however: applications where I/O dominates, with packet-switching telco applications as the prime example. Intel, VMware and the server vendors really want to convert the telcos from their firewall/router/VPN "black boxes" to virtual ones running on Software Defined Networking (SDN) infrastructure. To that end, Intel has continued to work on reducing virtualization overhead, which can be described as the number of VM exits (the VM stops and the hypervisor takes over) times the VM exit latency. In I/O-intensive applications, VM exits happen frequently, which leads to high and hard-to-predict I/O latency: exactly what telcos hate.
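The overhead model described above can be written as a simple product. The symbols are our own shorthand, not Intel's: $N_{\text{exit}}$ is the number of VM exits in an interval, $L_{\text{exit}}$ the average latency of one exit in cycles, $R_{\text{exit}}$ the exit rate per second and $f_{\text{clk}}$ the core clock in Hz.

```latex
T_{\text{overhead}} = N_{\text{exit}} \cdot \frac{L_{\text{exit}}}{f_{\text{clk}}},
\qquad
\text{overhead fraction} = \frac{R_{\text{exit}} \cdot L_{\text{exit}}}{f_{\text{clk}}}
```

Both factors enter linearly, so halving either the exit count or the per-exit latency halves the direct overhead. This sketch leaves out indirect costs such as cache and TLB pollution after an exit.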

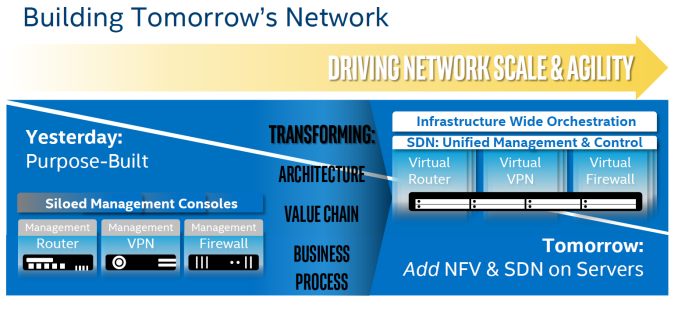

Intel wants to conquer the telcos' datacenters by turning them into an SDN

Intel has worked on both factors. In Broadwell-EP, VM exit latency is once again reduced, from 500 cycles to 400.
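Because the direct overhead is exit rate times exit latency, a lower per-exit latency helps every workload in proportion. The minimal sketch below plugs the 500 and 400 cycle figures into that product. The exit rate (100,000 exits per second) and the 2.2 GHz clock are hypothetical values chosen only for illustration, and the function name is our own.

```python
def exit_overhead_fraction(exits_per_sec: float, exit_latency_cycles: float,
                           clock_hz: float) -> float:
    """Share of one core's cycles spent on VM exit transitions.

    Simple model from the text: overhead = number of exits x latency per exit.
    Indirect costs (cache/TLB pollution, hypervisor handler work) are ignored.
    """
    return exits_per_sec * exit_latency_cycles / clock_hz


# Hypothetical workload parameters, chosen only for illustration.
EXITS_PER_SEC = 100_000
CLOCK_HZ = 2.2e9

before = exit_overhead_fraction(EXITS_PER_SEC, 500, CLOCK_HZ)  # 500-cycle exits
after = exit_overhead_fraction(EXITS_PER_SEC, 400, CLOCK_HZ)   # 400-cycle exits

print(f"500-cycle exits: {before:.3%} of a core")
print(f"400-cycle exits: {after:.3%} of a core")
print(f"relative reduction: {1 - after / before:.0%}")
```

With these assumed numbers the direct exit overhead drops from roughly 2.3% to 1.8% of a core. Whatever exit rate you pick, the ratio stays at 400/500, a 20% reduction.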

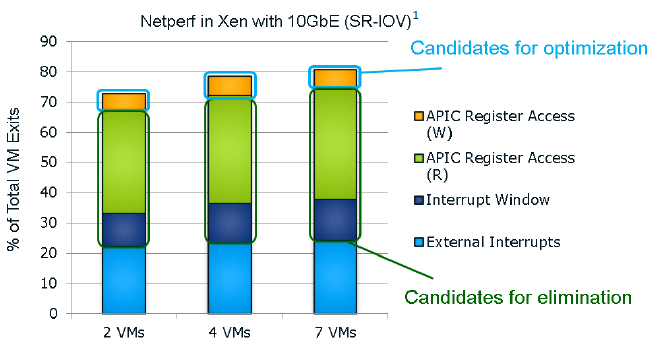

It seems that the "ticks" (Intel's die shrinks) also get a VM exit reduction. This slide from the Ivy Bridge-EP presentation gives a very good overview of the VM exits in a network-intensive application, in this case a network bandwidth benchmark.

I quote from our Ivy Bridge-EP review:

The Ivy Bridge core is now capable of eliminating the VMexits due to "internal" interrupts, interrupts that originate from within the guest OS (for example inter-vCPU interrupts and timers). Handling these interrupts requires the virtual processor to access the APIC registers, which traditionally causes a VMexit. Apparently, the current Virtual Machine Monitors do not handle this very well, as they need somewhere between 2000 and 7000 cycles per exit, which is high compared to other exits.

The solution is Advanced Programmable Interrupt Controller virtualization (APICv). The new Xeon has microcode support that lets the guest OS read the APIC registers without any VMexit, though writing still causes an exit. Some tests in Intel's labs show up to 10% better performance.
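The effect of APICv on exit counts can be illustrated with a toy model that follows the description quoted above: without APICv every guest access to an APIC register traps, while with APICv reads are handled without a VM exit and writes still exit. The access trace, the 4000-cycle cost (picked from the quoted 2000 to 7000 range) and all names are hypothetical, and the real hardware feature set is richer than this two-rule sketch.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class ExitStats:
    exits: int   # number of VM exits caused by APIC accesses
    cycles: int  # exits multiplied by the assumed cost per exit


def apic_exit_cost(trace: Iterable[str], apicv: bool,
                   cycles_per_exit: int) -> ExitStats:
    """Count VM exits caused by guest APIC register accesses.

    Two-rule toy model following the quoted description:
      - without APICv, every APIC register access causes a VM exit;
      - with APICv, reads are exit-free but writes still exit.
    """
    exits = 0
    for access in trace:
        if access not in ("read", "write"):
            raise ValueError(f"unknown access type: {access!r}")
        if not apicv or access == "write":
            exits += 1
    return ExitStats(exits=exits, cycles=exits * cycles_per_exit)


# Hypothetical, read-heavy trace of guest APIC register accesses.
trace = ["read"] * 7 + ["write"] * 3
COST = 4000  # assumed cycles per exit, inside the quoted 2000-7000 range

print(apic_exit_cost(trace, apicv=False, cycles_per_exit=COST))
print(apic_exit_cost(trace, apicv=True, cycles_per_exit=COST))
```

With this made-up 7:3 read/write mix, exits drop from 10 to 3; the real saving depends entirely on how read-heavy the guest's APIC traffic is.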

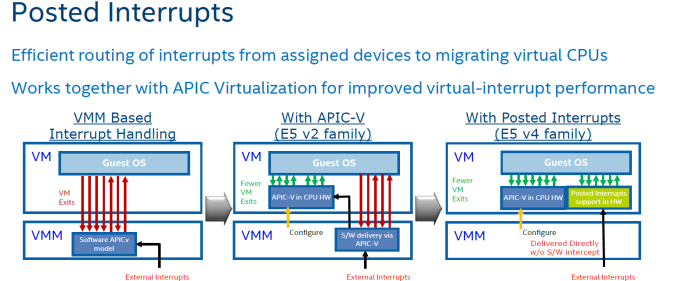

In summary, with APICv Intel eliminated the green and dark blue components of the VM exit overhead. Broadwell now tackles the VM exits caused by external interrupts.

The technology in Broadwell-EP that does this is called posted interrupts. Essentially, posted interrupts enable direct interrupt delivery to the virtual machine without incurring a VM exit, courtesy of an interrupt remapping table. This builds on VT-d, which already offered DMA remapping through a table that translates guest-physical to host-physical addresses. Telco applications, among others, are very latency sensitive. Intel's Edwin Verplancke gave us one such example: before posted interrupts, a telco application had a latency varying from 4 to 47 (!) µs, depending on the load. Posted interrupts made this a lot less variable: latency varied from 2.4 to 5.2 µs.
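The bookkeeping behind posted interrupts can be sketched with a simplified model. Instead of exiting to the hypervisor, an interrupt destined for a vCPU is recorded in a per-vCPU posted-interrupt descriptor; Intel's VT-d specification describes this descriptor as holding a 256-bit Posted Interrupt Requests (PIR) bitmap, one bit per vector, plus an Outstanding Notification (ON) bit. The class below mimics only those two fields with plain Python integers instead of atomic memory updates, and its method names are hypothetical.

```python
from typing import List


class PostedInterruptDescriptor:
    """Toy model of a posted-interrupt descriptor: PIR bitmap plus ON bit.

    Real descriptors are 64-byte memory structures updated atomically by
    the IOMMU and the CPU; plain Python attributes stand in for them here.
    """

    NUM_VECTORS = 256

    def __init__(self) -> None:
        self.pir = 0       # bit v set => interrupt vector v is pending
        self.on = False    # Outstanding Notification already sent

    def post(self, vector: int) -> bool:
        """Record an interrupt; return True if a notification must be sent."""
        if not 0 <= vector < self.NUM_VECTORS:
            raise ValueError(f"vector out of range: {vector}")
        self.pir |= 1 << vector
        if self.on:
            return False   # the outstanding notification covers this vector too
        self.on = True
        return True

    def drain(self) -> List[int]:
        """Mimic the CPU handling a notification: take and clear all pending vectors."""
        pending = [v for v in range(self.NUM_VECTORS) if (self.pir >> v) & 1]
        self.pir = 0
        self.on = False
        return pending


pid = PostedInterruptDescriptor()
print(pid.post(0x41))  # first interrupt: a notification is needed
print(pid.post(0x42))  # coalesced: ON is already set, no second notification
print(pid.drain())     # both vectors handed to the guest in one go
```

The ON bit is what keeps a burst of device interrupts from turning into a burst of notifications: only the first post after a drain needs one.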

As far as we are aware, KVM and Xen already support posted interrupts.

112 Comments

JohanAnandtech - Saturday, April 2, 2016

Ok, thanks, time to sleep a little longer. I have fixed the error.

xrror - Friday, April 1, 2016

It's depressing to see the mobile-first design philosophy really gutting into the last bastion of x86 performance.

I mean I get it - a 22 (20) core xeon wouldn't even exist without the aggressive power management tech needed to keep it from melting or needing exotic cooling. But it's still depressing to see ALL of the arch improvements immediately negated with lowered clock speeds, or worse "turbo speeds" you will never actually see once the machine is running production loads.

The engineering behind these big core count chips though is always very impressive. Also, did Intel ever say how they "fixed" TSX?

FunBunny2 - Friday, April 1, 2016

"It's depressing to see the mobile-first design philosophy really gutting into the last bastion of x86 performance."

welcome to the world of laissez-faire capitalism: do what makes the most money today, regardless of future consequences. used to be, Intel could rely on M$ making the next versions of Windoze and Office impossible to run on existing Pentiums, thus driving sales of the next Pentium (a whole machine, at that). these days it's up to gamers and data centres. not taking any bets on which turns out to be in the driver's seat.

xrror - Friday, April 1, 2016

Well, considering that "computer gaming" has degraded to whatever the kids are running on their smartphones, or the parent's tablet, I'm not hopeful for any new resurgence in demand for high performance PCs in the mass market.

So the future consequences for Intel prioritizing power efficiency over performance, or possibly developing a separate fabrication tech for performance, are... likely not very much. So there really is no "future consequence" for Intel. Sure they could go out and actually try and make a 10GHz 9nm part possible, but nobody in 2020 would buy it because... it probably would go into whatever iDevice they care about. And HPC market I dunno. Maybe if it datamines marketing data faster or can microtrade on the stock market faster or something. meh.

The general public really doesn't care about performance anymore (honestly, they may never have), only how portable it is and if a device is good enough to run their stuff on the go.

The high end market like these multi-core xeons though, is strange because you'd think this is where Intel would go all in, but I guess when your only competitors are IBM Power and (currently non-competitive) AMD I dunno...

I mean it's sad, even Intel has to beg to justify its R&D expenses to shareholders - which is stupid because Intel's R&D is one of its biggest strengths. But such as it is. Apr 1 rant over ;)

abufrejoval - Friday, April 1, 2016

Johan, you keep bemoaning the fact that lack of competition seems to stop "real progress" and I wonder where you expect that progress to happen.

More specifically you seem to desire more GHz and I can understand that desire, which may originate from that crazy 40MHz to 4GHz rush we all experienced somewhere in the decade starting in the mid nineties.

I understand the emotion, but I wonder how it fits the scientific mind I see everywhere else in your work, because 8, 16 or 32 GHz is simply not going to happen, competition or not.

Sure, 8GHz is possible; you can even purchase 5GHz off the shelf. But it simply doesn't deliver in terms of oomph/$. And web scale, the main driver of server evolution today, is all about value/€.

We'll still see radical speedups where it counts, but it will have to be via special purpose function blocks either on SoCs, or by adding a couple of extra instructions or by doing something as radical as Micron's Automata Processor.

But general-purpose von Neumann computing hit the gigahertz wall years ago, and nothing can change that except a different model of compute.

I liked the reference to Andreas Stiller, but I'm not sure everybody here has been subscribed to c't since the early 1990s, like I have. There could also be the tiny issue that not everyone outside Belgium is quadrilingual.

Make no mistake: I love your work! It's a pleasure to read for form, style and the content!

The Von Matrices - Saturday, April 2, 2016

Any indication of the QPI speed of these chips? Did Intel increase it from the 9.6 GT/s in Haswell-EP?

Ian Cutress - Saturday, April 2, 2016

Most of the high end are 9.6 GT/s. https://twitter.com/IanCutress/status/715582714099...

watersb - Saturday, April 2, 2016

Johan, this is fantastic work. Thanks very much.

Any way to address RAS features?

isrv - Saturday, April 2, 2016

well, i'm completely disappointed. web servers want higher clock speeds.

single-threaded loads (like PHP) become even slower on these E5 v4s due to the drop in GHz.

still, the best CPUs for that are the E3-1290v2, E3-1281v3 (and 1286v3), E3-1280v5, E5-1630v3, E5-1620v2 and the only 6-core one, the E5-1660v2

all those are 3.7GHz (pointless to look at turbo speed since we're under constant 24/7 load).

i was hoping for at least one at 3.8GHz or even higher.

so no changes here, E5-1660v2 is still the fastest web-server CPU.

or the E5-1630v3, sacrificing 2 cores for slightly faster memory.

patrickjp93 - Sunday, April 3, 2016

For those 4-8 core chips, the turbo boost is maintainable for 24/7 workloads if your cooling is sufficient. You seem to know far less about this environment than you let on. And who the hell still uses single-threaded PHP? And you're not taking into account better caching algorithms and other architectural improvements that make the 200MHz slower V4 run faster than your V2.