The Intel Xeon E5 v4 Review: Testing Broadwell-EP With Demanding Server Workloads

by Johan De Gelas on March 31, 2016 12:30 PM EST

Posted in: CPUs, Intel, Xeon, Enterprise, Enterprise CPUs, Broadwell

TSX

TSX, or Transactional Synchronization Extensions, is Intel's cache-based transactional memory system. Intel launched TSX with Haswell, but a bug threw a spanner in the works. Broadwell in turn got it right. The chicken is finally there; now it's time to enjoy the eggs.

Faster Virtualization

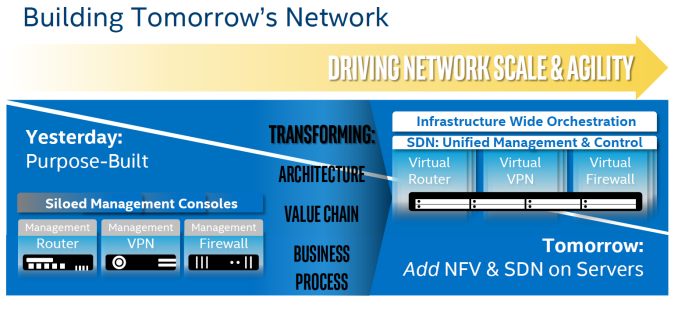

Virtualization overhead is (for most people) a thing of the past. The performance overhead with bare metal hypervisors (ESXi, Hyper-V, Xen, KVM, ...) is only a few percent. There is one exception, however: applications where I/O dominates. And of course, the packet switching telco applications are the prime examples. Intel, VMware and the server vendors really want to convert the telcos from their firewall/router/VPN "black boxes" to virtual ones using Software Defined Networking (SDN) infrastructure. To that end, Intel has continued to work on reducing the virtualization performance overhead. Virtualization overhead can be described as the number of VM exits (the VM stops and the hypervisor takes over) times the VM exit latency. In I/O-intensive applications, VM exits happen frequently, which in turn leads to high and hard-to-predict I/O latency, exactly what the telco people hate.

Intel wants to conquer the telco datacenter by turning it into an SDN

So Intel worked on both factors. On Broadwell-EP, VM exit latency has once again been reduced, from 500 cycles to 400.
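To make the "number of exits times exit latency" product concrete, here is a minimal sketch in Python. The 500 and 400 cycle latencies come from the text; the exit rate and the core clock are made-up illustration values, and the model deliberately ignores the time the hypervisor spends actually handling each exit.

```python
def exit_overhead_fraction(exits_per_second, exit_latency_cycles, clock_hz):
    """Fraction of one core's cycles lost to VM exit latency alone."""
    return exits_per_second * exit_latency_cycles / clock_hz


CLOCK_HZ = 2.2e9            # assumed core clock, not a figure from the article
EXITS_PER_SECOND = 100_000  # assumed exit rate for an I/O-heavy VM

before = exit_overhead_fraction(EXITS_PER_SECOND, 500, CLOCK_HZ)
after = exit_overhead_fraction(EXITS_PER_SECOND, 400, CLOCK_HZ)

print(f"500-cycle exits: {before:.2%} of core time")
print(f"400-cycle exits: {after:.2%} of core time")
print(f"relative reduction: {1 - after / before:.0%}")
```

Whatever exit rate and clock you plug in, the relative reduction from the latency factor alone stays at 20%, since 400/500 = 0.8. Cutting the other factor, the number of exits, is what the rest of this page is about.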

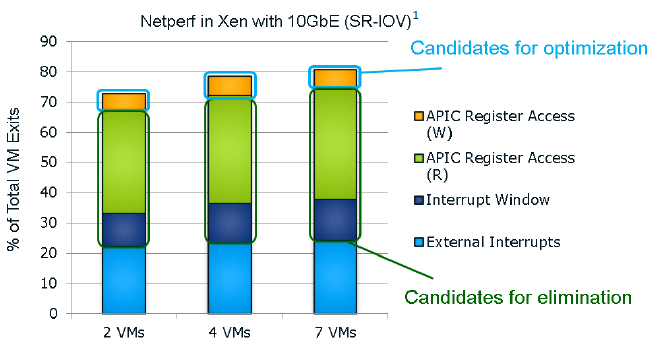

It seems that the "ticks" also get a VM exit reduction. This slide from the Ivy Bridge-EP presentation gives you a very good overview of the VM exits in a network-intensive application; in this case, a network bandwidth benchmark.

I quote from our Ivy Bridge-EP review:

The Ivy Bridge core is now capable of eliminating the VMexits due to "internal" interrupts, interrupts that originate from within the guest OS (for example inter-vCPU interrupts and timers). The virtual processor will then need to access the APIC registers, which will require a VMexit. Apparently, the current Virtual Machine Monitors do not handle this very well, as they need somewhere between 2000 and 7000 cycles per exit, which is high compared to other exits.

The solution is the Advanced Programmable Interrupt Controller virtualization (APICv). The new Xeon has microcode that can be read by the Guest OS without any VMexit, though writing still causes an exit. Some tests inside the Intel labs show up to 10% better performance.

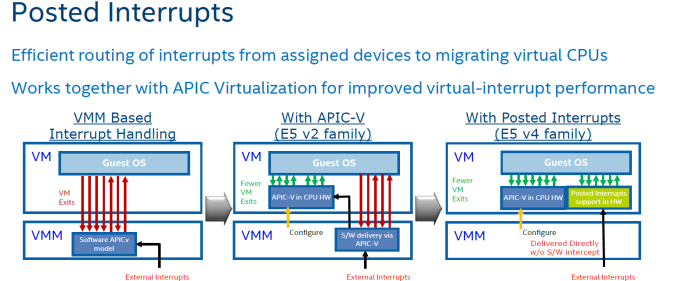

In summary, Intel eliminated the green and dark blue components of the VM exit overhead with APICv. Broadwell now takes on the VM exits due to the external interrupts.

The technology on Broadwell-EP that does this is called posted interrupts. Essentially, posted interrupts enable direct interrupt delivery to the virtual machine without incurring a VM exit, courtesy of an interrupt remapping table. It is very similar to VT-d, which allowed DMA remapping thanks to the physical to virtual memory mapping table. Telco applications, among others, are very latency sensitive. Intel's Edwin Verplancke gave us one such example: before posted interrupts, a telco application had a latency varying from 4 to 47 (!) µs, depending on the load. Posted interrupts made this a lot less variable, with latency ranging from 2.4 to 5.2 µs.
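A quick back-of-the-envelope check of those latency figures, written as a small Python sketch. It uses only the four numbers quoted above; the "spread" metric (maximum minus minimum) is our own simple way of expressing variability, not something Intel reported.

```python
# Latency ranges in microseconds, as quoted in the text.
before_min, before_max = 4.0, 47.0  # without posted interrupts
after_min, after_max = 2.4, 5.2     # with posted interrupts

# Spread = worst case minus best case, a crude measure of jitter.
before_spread = before_max - before_min
after_spread = after_max - after_min

print(f"spread before: {before_spread:.1f} us")
print(f"spread after:  {after_spread:.1f} us")
print(f"worst case improves by {before_max / after_max:.1f}x")
print(f"spread shrinks by {before_spread / after_spread:.1f}x")
```

In other words, the worst-case latency drops by roughly 9x and the spread between best and worst case by roughly 15x, which matters more to latency-sensitive telco workloads than the modest gain in the best case.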

As far as we are aware, KVM and Xen seem to have already implemented support for posted interrupts.

112 Comments

xrror - Tuesday, April 5, 2016

Even at 3.3 GHz though, they shouldn't be that slow. I'm taking a guess - if this was a student lab, and they bothered to specifically order Xeon (or Opteron back in the day) workstations - I'm guessing this was a CAD/CAM lab or something running a boatload of expensive licenced software (like Autodesk, SolidWorks, etc.) and some of that stuff is horrible at thrashing on the hard drive, constantly.

And I doubt your school could spring the cash for SSD drives in them (because Workstation SKU == you pay dearly OEM workstation 'certified' drive cost).

This is all guesses though. And not trying to defend - it does suck when you have what should be a sweet machine choking for whatever reason, and you're there trying to get your assignments done and you just want to smash the screen cause it just chhhuuuuuuggggsss... ;p

SkipPerk - Friday, April 8, 2016

I have seen this many times, even in the for-profit sector. I once saw a compute cluster that was choking on a server with slow storage. They had a 10 Gb network and fast Xeon machines running on flash, but the primary storage was too slow. When they got a proper SAN, it was an order of magnitude improvement.

Back in the day storage was often the bottleneck, but it still comes up today.

someonesomewherelse - Thursday, September 1, 2016

We ran everything in virtual machines with the actual disk images not stored locally... and the LANs in the classrooms were 100 Mbit; idk about the connection from the classroom to the server with the image. How's that for slow?

I would have loved it if our stuff was as 'slow' as yours. The wifi in the classrooms was very fast too... especially since I doubt anyone bothered with turning off their torrents. Well, I mean it's completely understandable: you are going to watch the new episode of your favorite show once you are back home, and not everyone had (well, has, but most people can get it now) FTTH with at least a 100 Mbit line. Ideally symmetrical, but some ISPs are too greedy with upload speeds, so 300/50 is cheaper than 100/100, and good luck getting 1000/1000 on a residential package. The hardware isn't the problem, since you can get 1000/1000 with a commercial (aka overpriced) package using the same hardware; basically I would just need to sign a new contract, send it back, and enjoy the faster line in 1 business day or less.

Well, at least there are no bandwidth caps (if I didn't read foreign boards, bandwidth caps on non-mobile connections would be something I'd think no ISP could do without losing all its customers), and we have no DMCA (or something similar) and afaik no plans for one either. If they tried to pass such a law, I can imagine that you'd have enough support for a referendum, which you would win with a huge majority. Even better, the methods used to catch people downloading torrents are illegal anyway, so any evidence obtained with them or derived from them is inadmissible, and just by presenting it you have admitted to several crimes which the police and prosecution are obliged to investigate/prosecute. Copyright infringement, however, is a civil matter.

donwilde1 - Tuesday, April 5, 2016

One of the more interesting Intel features, in my opinion, is that Broadwell carries an on-board encryption engine with its own interpreter similar to a small-memory, embedded JVM. This enables full Trusted Boot capability, which I view as a necessity in today's hackable world. Would you consider a follow-on article on this? The project was a clean-room development called BeiHai, done in China.

JamesAnthony - Wednesday, April 6, 2016

From what I can tell in looking over the benchmarks, there is not much of an increase at all in core vs core performance going from the V1 CPUs to the V4 CPUs. If you look at the benchmarks and calculate that you are comparing 16 cores to 44 cores, the 44 core setup is not 2.75x faster.

So while your overall speed goes up, your work accomplished per core is not increasing at the same rate.

Why does this matter? Well thanks to software licensing costs, as you add cores it gets very expensive quickly. So if your software costs (which can easily exceed the hardware costs very quickly) go up with each core you add, but the work done does not, you quickly wind up in a negative cost / performance ratio.

For quite a few people the E5-2667 v2 CPU with 8 cores at 3.5 GHz (Turbo 4) comes out around the best value for the software licensing cost.

So while Intel puts out processors that overall can do more work than the previous ones, the move to per core software licensing is making it a negative value proposition. This is why people keep wanting higher clock speed lower core count processors, but we seem stuck around 3.5 GHz for many years.

SkipPerk - Friday, April 8, 2016

Although you are right for workstations, so much demand is for generic virtualized machines. Many buyers are fine with 2 GHz with as many cores as they can get. They load as little RAM as the spec requires and throw out the cheapest single core, dual thread 2 GB RAM VM they can. This is how call centers work, not to mention many low-level office jobs. They do not care about performance because this is more than enough.

I have had specialty applications where prosumer 6-core or 8-core CPUs were the better deal (usually liquid cooled and overclocked), but not many buyers are licensing insanely expensive analytical software by the core.

SeanJ76 - Sunday, April 10, 2016

@Xeon chips!! TOTAL GARBAGE!

legolasyiu - Wednesday, April 20, 2016

The ASUS Workstation/Server board with V4 boards are very stable and they have 10% OC. I am very interested in how the processor performs with those boards.

Bulat Ziganshin - Saturday, May 7, 2016

>This increases AES (symmetric) encryption performance by 20-25%

PCLMULQDQ implements part of Galois Field multiplication, and BDW actually improved only the GCM part of the AES-GCM algo. Neither AES nor other popular symmetric encryption algos became faster.

oceanwave1000 - Monday, May 9, 2016

This article mentioned that the Broadwell-EP E5 v4 family has 3 die configurations. I got the 306mm2 and 454mm2. Did anyone catch the third one?

Thanks.