The Samsung Galaxy S7 & S7 Edge Review, Part 1

by Joshua Ho on March 8, 2016 9:00 AM EST

GPU Performance

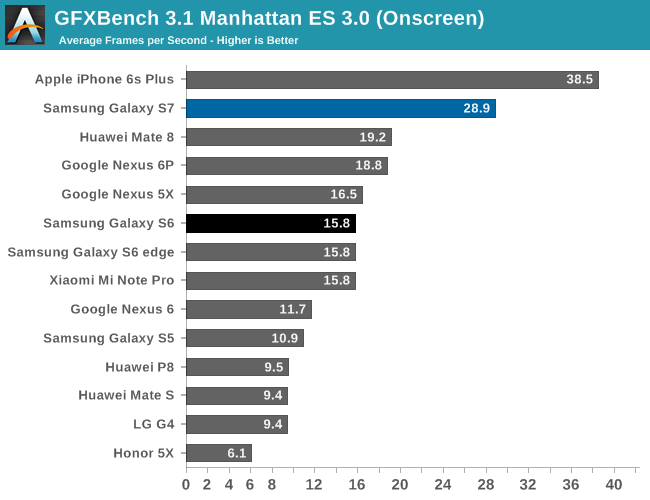

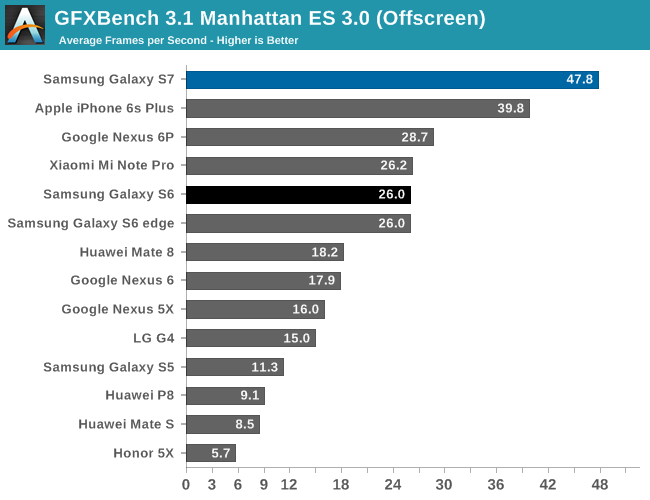

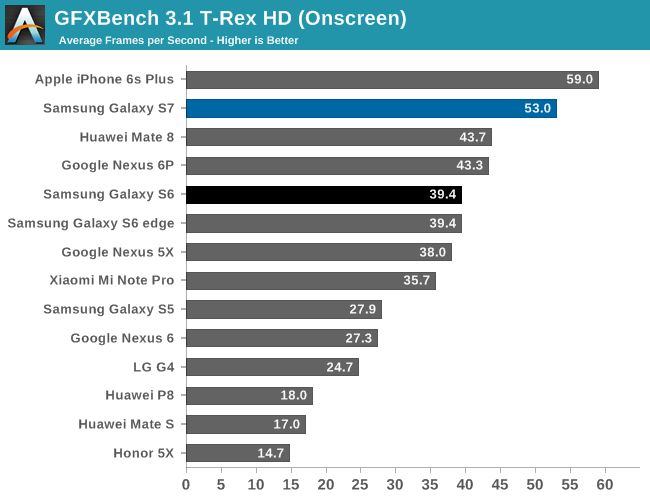

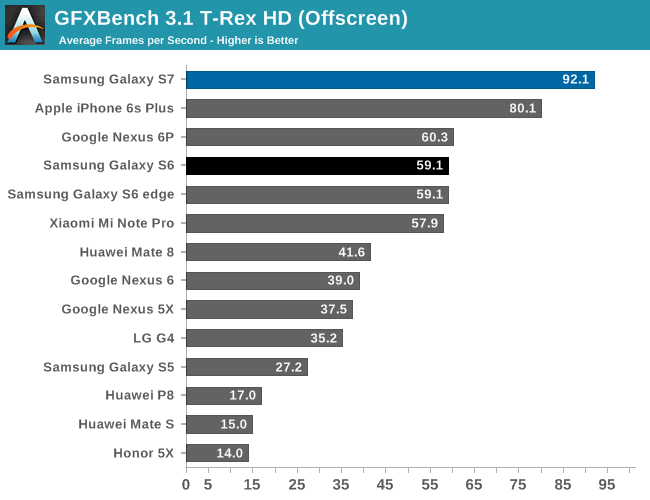

On the GPU side of things, Qualcomm's Snapdragon 820 is equipped with the Adreno 530 clocked at 624 MHz. In order to see how it performs, we ran it through our standard 2015 suite. Once we have tested more devices on our new benchmark suite, we should be able to discuss how the Galaxy S7 performs relative to them in that context.

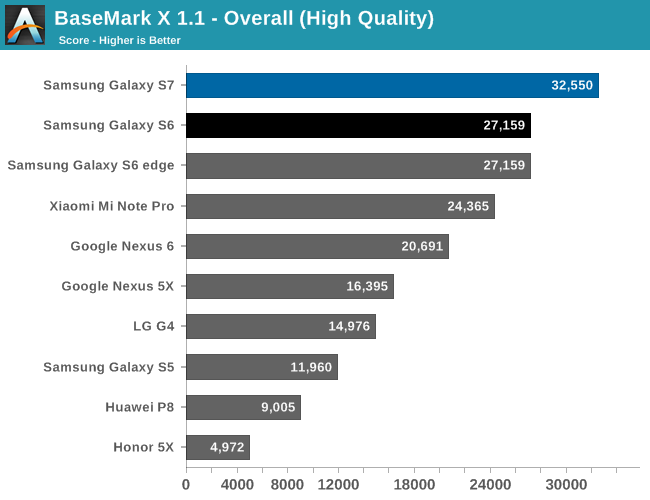

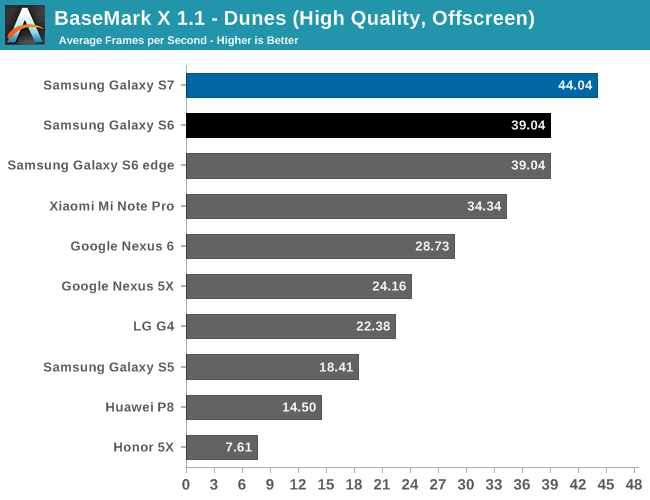

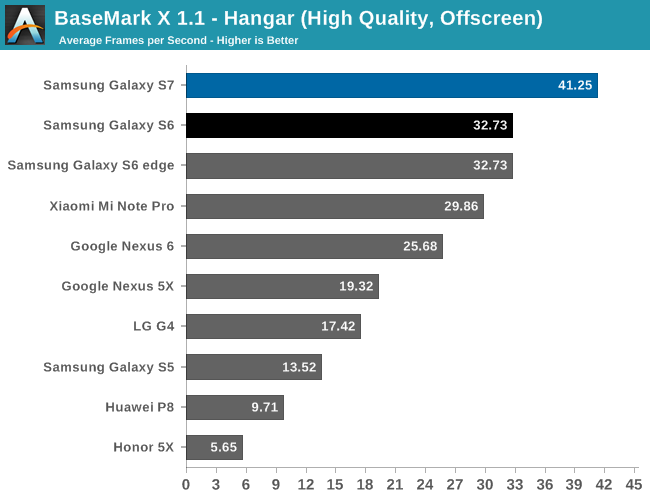

At a high level, GPU performance appears to be mostly unchanged when comparing the Galaxy S7 to the Snapdragon 820 MDP. Performance in general is quite favorable assuming that the render resolution doesn't exceed 2560x1440.

Overall, the Adreno 530 is clearly one of the best GPUs you can get in a mobile device today. The Kirin 950's GPU really falls short in comparison. One could argue that turbo frequencies in a GPU don't make a lot of sense, but given that mobile gaming workloads can be quite bursty in nature and that gaming sessions tend to be quite short, I would argue that having a GPU that can achieve significant levels of overdrive performance makes a lot of sense. The A9 is comparable if you consider the resolution of iOS devices, but when looking at the off-screen results the Adreno 530 pulls away. Of course, the real question now is how the Adreno 530 compares to the Exynos 8890's GPU in the international Galaxy S7, but that's a question that will have to be left for another day.

202 Comments


jjj - Tuesday, March 8, 2016 - link

As for battery tests, as long as you don't simulate a bunch of social, IM, news apps in the background, you can't get close to good results when you got so many diff core configs.

retrospooty - Tuesday, March 8, 2016 - link

"As long as you don't simulate a bunch of social, IM, news apps in the background, you can't get close to good results when you got so many diff core configs"

You have to have consistent methodology to test against other phones. The point is not to show what you might get with your particular social/newsfeed apps running, the point is to test against other phones to see how it compares under the same set of circumstances so you know which one gets better life.

jjj - Tuesday, March 8, 2016 - link

Sorry but you failed to understand my points. It would be consistent, if it is simulated.

The point is to have relatively relevant data and only by including at least that you can get such data. These apps have major impact on battery life- and it's not just FB even if they were the only ones that got a lot of bad press recently.

The different core configs - 2 big cores, 4 big cores in 2+2, then 4+4, 2+4+4, or just 8 will result in very different power usage for these background apps, as a % of total battery life, sometimes changing the rankings. Here for example, chances are the Exynos would get a significant boost vs the SD820 if such tasks were factored in.

How many such simulated tasks should be included in the test is debatable and also depends on the audience, chances are that the AT reader has a few on average.

retrospooty - Tuesday, March 8, 2016 - link

And you are missing mine as well... If you have a million users, you will have 10,000 different sets of apps. You can't just randomly pick and call it a benchmark. The methodology and the point is to measure a simple test against other phones without adding too many variables. I get what you want, but it's a bit like saying "I wish your test suite tested my exact configuration" and that just isn't logical from a test perspective.

jjj - Tuesday, March 8, 2016 - link

What i want is results with some relevance. The results have no relevance as they are, as actual usage is significantly different. In actual usage the rankings change because the core configs are so different. The difference is like what VW had in testing vs road conditions, huge difference.

To obtain relevant results you need somewhat realistic scenarios with a methodology that doesn't ignore big things that can turn the rankings upside down. Remember that the entire point of bigLITTLE is power and these background tasks are just the right thing for the little cores.

retrospooty - Tuesday, March 8, 2016 - link

Relevant to who? I use zero social media apps and have no news feeds running at all until I launch feedly. Relevant to you is not relevant to everyone or even to most people. This site is a fairly high traffic site (for tech anyhow) and they have to test for the many, not the few. The methodology is sound. I see what you want and why you want it, but it doesn't work for "the many".

jjj - Tuesday, March 8, 2016 - link

Relevant to the average user. I don't use social at all but that has no relevance as the tests should be relevant to the bulk of the users. And the bulk of the users do use those (that's a verifiable fact) and more. This methodology just favors fewer cores and penalizes bigLITTLE.

retrospooty - Tuesday, March 8, 2016 - link

It's still too unpredictable. One person's Facebook feed may go nuts all day while another's is relatively calm. This is also why specific battery ratings are never given by manufacturers... Because usage varies too much. This is why sites test a (mostly) controllable methodology against other phones to see which fares the best. I find it highly useful and when you get into the nuts and bolts, it's necessary. If you had a bunch of phones and actually started trying to test as you mentioned you would find a can of worms and inconsistent results at the end of your work...

jjj - Wednesday, March 9, 2016 - link

"It's still too unpredictable" - that's the case with browsing usage too, even more so there but you can try to select a load that is representative. You might have forgotten that i said simulate such apps, there wouldn't be any difference between runs. Yes testing battery life is complex and there are a bunch of other things that could be done for better results but these apps are pretty universal and a rather big drain on resources. They could be ignored if we didn't have so many core configs but we do and that matters. Complexity for the sake of it is not a good idea but complexity that results in much better results is not something we should be afraid of.

10 years later smartphone benchmarking is just terrible. All synthetic and many of those apps are not even half decent. Even worse, after last year's mess 99% of reviews made no attempt to look at throttling. That wouldn't have happened in PC even 10 years ago.

retrospooty - Wednesday, March 9, 2016 - link

I think you are a little too hung up on benchmarks. It is just a sampling, an attempt at measuring with the goal to compare to others to help people decide, but what really matters is what it does and how well it does it. I find it highly useful even if not exact to my own usage. If unit A lasts 20 hours and unit B lasts 16 hours in the standard tests, but I know my own usage gets 80% of what standard is I can estimate my usage will be 16 hours on unit A and 12.8 hours on unit B (give or take). It really doesn't need to be more complicated than that since testing batteries is not an exact science, not even on PC/laptops as usage varies there just the same. That is why there are no exact guarantees. "Up to X hours" is a standard explanation. It is what it is.
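
The scaling estimate in the comment above can be written out as a short sketch. The function name `estimate_runtime` and the dictionary labels are hypothetical, chosen only for illustration; the 20-hour and 16-hour figures and the 80% factor are the commenter's own example numbers, not measured results.

```python
def estimate_runtime(standard_hours: float, personal_factor: float) -> float:
    """Scale a standardized battery-test result by a personal usage factor.

    Hypothetical helper: personal_factor is the fraction of the standard
    result a given user typically sees (0.8 means 80%).
    """
    if standard_hours < 0 or personal_factor < 0:
        raise ValueError("hours and factor must be non-negative")
    return standard_hours * personal_factor


# Example figures taken from the comment, not from any measurement.
standard_results = {"unit A": 20.0, "unit B": 16.0}
personal_factor = 0.8

estimates = {
    name: estimate_runtime(hours, personal_factor)
    for name, hours in standard_results.items()
}

for name, hours in estimates.items():
    print(f"{name}: about {hours:.1f} hours")
```

Because the same factor is applied to every device, this kind of estimate always preserves the ranking from the standard test. The objection raised earlier in the thread is that the factor may differ from device to device depending on core configuration, in which case a single multiplier would not capture a change in ranking.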