GeForce 6200 TurboCache: PCI Express Made Useful

by Derek Wilson on December 15, 2004 9:00 AM EST- Posted in

- GPUs

Architecting for Latency Hiding

RAM is cheap. But it's not free. Making and selling extremely inexpensive video cards means as few components as possible. It also means as simple a board as possible. Fewer memory channels, fewer connections, and less chance for failure. High volume parts need to work, and they need to be very cheap to produce. The fact that TurboCache parts can get by with either 1 or 2 32-bit RAM chips is key in its cost effectiveness. This probably puts it on a board with a few fewer layers than higher end parts, which can have 256-bit connections to RAM.But the only motivation isn't saving costs. NVIDIA could just stick 16MB on a board and call it a day. The problem that NVIDIA sees with this is the limitations that would be placed on the types of applications end users would be able to run. Modern games require framebuffers of about 128MB in order to run at 1024x768 and hold all the extra data that modern games require. This includes things like texture maps, normal maps, shadow maps, stencil buffers, render targets, and everything else that can be read from or draw into by a GPU. There are some games that just won't run unless they can allocate enough space in the framebuffer. And not running is not an acceptable solution. This brings us to the logical combination of cost savings and compatibility: expand the framebuffer by extending it over the PCI Express bus. But as we've already mentioned, main memory appears much "further" away than local memory, so it takes much longer to either get something from or write something to system RAM.

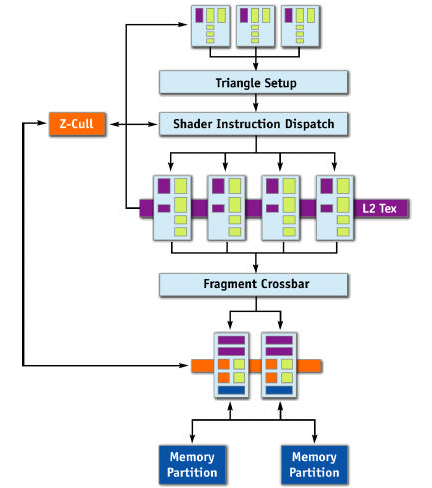

Let's take a look at the NV4x architecture without TurboCache first, and then talk about what needs to change.

The most obvious thing that will need to be added in order to extend the framebuffer to system memory is a connection from the ROPs to system memory. This will allow the TurboCache based chip to draw straight from the ROPs into things like back or stencil buffers in system RAM. We would also want to connect the pixel pipelines to system RAM directly. Not only do we need to read textures in normally, but we may also want to write out textures for dynamic effects.

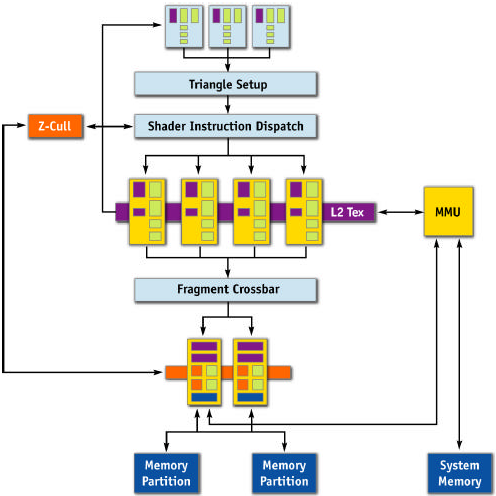

Here's what NVIDIA has offered for us today:

The parts in yellow have been rearchitected to accommodate the added latency of working with system memory. The only part that we haven't talked about yet is the Memory Management Unit. This block handles the requests from the pixel pipelines and ROPs and acts as the interface to system memory from the GPU. In NVIDIA's words, it "allows the GPU to seamlessly allocate and de-allocate surfaces in system memory, as well as read and write to that memory efficiently." This unity works in tandem with part of the Forceware driver called the TurboCache Manager, which allocates and balances system and local graphics memory. The MMU provides the system level functionality and hardware interface from GPU to system and back while the TurboCache Manager handles all the "intelligence" and "efficiency" behind the operations.

As far as memory management goes, the only thing that is always required to be on local memory is the front buffer. Other data often ends up being stored locally, but only the physical display itself is required to have known, reliable latency. The rest of local memory is treated as a something like the framebuffer and a cache to system RAM at the same time. It's not clear as to the architecture of this cache - whether it's write-back, write-through, has a copy of the most recently used 16MBx or 32MBs, or is something else altogether. The fact that system RAM can be dynamically allocated and deallocated down to nothing indicates that the functionality of local graphics memory as a cache is very adaptive.

NVIDIA hasn't gone into the details of how their pixel pipes and ROPs have been rearchitected, but we have a few guesses.

It's all about pipelining and bandwidth. A GPU needs to draw hundreds of thousands of independent pixels every frame. This is completely different from a CPU, which is mostly built around dependant operations where one instruction waits on data from another. NVIDIA has touted the NV4x as a "superscalar" GPU, though they haven't quite gone into the details of how many pipeline stages it has in total. The trick to hiding latency is to make proper use of bandwidth by keeping a high number of pixels "in flight" and not waiting for the data to come back to start work on the next pixel.

If our pipeline is long enough, our local caches are large enough, and our memory manager is smart enough, we get cycle of processing pixels and reading/writing data over the bus such that we are always using bandwidth and never waiting. The only down time should be the time that it takes to fill the pipeline for the first frame, which wouldn't be too bad in the grand scheme of things.

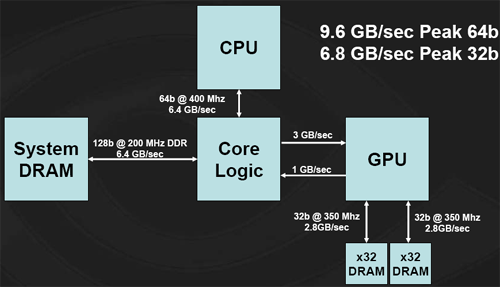

The big uncertainty is the chipset. There are no guarantees on when the system will get data back from system memory to the GPU. Worst case latency can be horrible. It's also not going to be easy to design around worst case latencies, as NVIDIA is a competitor to other chipset makers who aren't going to want to share that kind of information. The bigger the guess at the latency that they need to cover, the more pixels NVIDIA needs to keep in flight. Basically, covering more latency is equivalent to increasing die size. There is some point of diminishing returns for NVIDIA. But the larger presence that they have on the chipset side, the better off they'll be with their TurboCache parts as well. Even on the 915 chipset from Intel, bandwith is limited across the PCI Express bus. Rather than a full 4GB/s up and 4GB/s down, Intel offers only 3GB/s up and 1GB/s down, leaving the TurboCache architecture with this:

Graphics performance is going to be dependant on memory bandwidth. The majority of the bandwidth that the graphics card has available comes across the PCI Express bus, and with a limited setup such as this, the 6200 TuboCache will see a performance hit. NVIDIA has reported seeing something along the lines of a 20% performance improvement by moving from a 3-down, 1-up architecture to a 4-down, 4-up system. We have yet to verify these numbers, but it is not unreasonable to imagine this kind of impact.

The final thing to mention is system performance. NVIDIA only maps free idle pages from RAM using "an approved method for Windows XP." It doesn't lock anything down, and everything is allocated and freed on they fly. In order to support 128MB of framebuffer, 512MB of system RAM must be installed. With more system RAM, the card can map more framebuffer. NVIDIA chose 512MB as a minimum because PCI Express OEM systems are shipping with no less. Under normal 2D operation, as there is no need to store any more data than can fit in local memory, no extra system resources will be used. Thus, there should be no adverse system level performance impact from TurboCache.

43 Comments

View All Comments

paulsiu - Tuesday, March 1, 2005 - link

I am not sure I like this product at the price point. If it was $50, then it would make sense, but as another poster pointed out, the older and faster 6200 with real memory is about $10 more.The marketing is also deceptive. 6200 Turbo cache sounds like it would be faster than the 6200.

In addition, this so call innovative use of system memory sounds like nothing more than integrated video. OK, it's faster, but aren't you increasing cpu load.

The review also use an Athlon 64 4000+, I am doubtful that users who buy an A64 4000+ is going to skip on the video card.

Paul

guarana - Thursday, January 27, 2005 - link

I was forced to go for a 6200TC 64MB (up to 256Mb) solution about a week ago. Had to upgrade my MoBo to a PCI-X version and had to get the cheapest card that i could find in the PCI-X flavour.I must say its a lot better than the FX5200 card i used to have ... I am running it with only 256Mb of system RAM so its not running at optimal performance , but i can run UT2003 with everything set to HIGH and in a 1280x1024 rez :)

A few stutters when the game actually starts , but after about 10seconds , the game runs smooth and without any issues ... dont know the exact FPS though :)

I score about 12000 points on 3dMark2001 with stock clocks (yeah 3DM2001 old, but its all i could download over-night)

Will let you know what happens when i finally get another 256Mb in the damn thing.

Jeff7181 - Wednesday, December 22, 2004 - link

I don't like this... why would I want the one that costs over $100 when I can get the 6200 for $110-210 that has it's own dedicated memory and performs better. It's stupid to replace the current 6200 with this pile. It would be fine as a $50-75 card, or for use in a laptop, or a HTPC... but don't replace the current 6200 with this.icarus4586 - Friday, December 17, 2004 - link

I have a laptop with a 64MB Mobility Radeon 9600 (350MHz GPU, 466MHz DDR MHz 128bit RAM), and I can run Far Cry at 1280x800, high settings, Doom 3 1024x768 high settings, Halo 1024x768 high settings, Half-Life 2 1280x800 high settings, all at around 30fps.This is, obviously, an AGP solution. I don't really know how it does it. I was very surprised at what it could pull off, especially the high resolutions, with only 64MB onboard.

What's going on?

Rand - Friday, December 17, 2004 - link

Have you heard whether the limited PCI-E X16 bandwidth of the I915 is true for the I925/825XE chipsets also?Also, I'm curious whether you've done any testing on the nForce 4 with only one DIMM so as to limit the system bandwidth and get some indication of how the GeForce6200TC scales in performance with greater/lesser system memory bandwidth available?

Rand - Friday, December 17, 2004 - link

DerekWilson-"As far as I understand Hypermemory, it is not capable of rendering directly to system memory."

In the past ATI has indicated all of the R300 derived cores are capable of writing directly to a texture in system memory.

At the very least HyperMemory implementation on the Radeon Express 200G chipset must be able to do so, as ATI supports implementations without any local RAM they have to be capable of rendering to system memory to operate.

The only difference I've noticed in the respective implementations thus far is that nVidia's Turbocache lowest local bus size if 32-bit, whereas ATI's implementation only supports as low as 64bit so the smallest local RAM they can use is 32MB. (Well, they can use no local RAM also, though that would obviously be considerably slower)

Rand - Friday, December 17, 2004 - link

DerekWilson - Thursday, December 16, 2004 - link

And you can bet that NVIDIA's Intel chipset will have a nice, speedy, optimized for SLI and TurboCache PCIe implimentation as well.PrinceGaz - Thursday, December 16, 2004 - link

Yeah, this does all seem to make some sort of sense now. But not much sense as I can't see why Intel would delibrately limit the bandwidth of the PCIe bus they were pushing so heavily. Unless the 925 chipset has a full bi-directional 4GB/s, and the 3 down/1 up is something they decided to impose on the cheaper 915 to differentiate it from the high-end 925.I guess it's safe to assume nVidia implemented bi-directional 4GB/s in the nForce4, given that they were also working on graphics cards that would be dependent on PCIe bandwidth. And unless there was a good reason for VIA, ATI, and SiS not to do so; I would imagine the K8T890, RX480/RS480, and SiS756 will also be full 4GB/s both ways.

DerekWilson - Thursday, December 16, 2004 - link

NVIDIA tells us its a limitation of 915. Looking back, they also heavily indicated that "some key chipsets" would support the same bandwidth as NVIDIA's own bridge solution at the 6 series launch. If you remember, their solution was really a 4GB total bandwidth solution (overclocked AGP 8x to "16x" giving half the PCIe bandwidth) ... Their diagrams all showed a 3 down 1 up memory flow. But they didn't explicitly name 915 at the time.