A Timely Discovery: Examining Our AMD 2nd Gen Ryzen Results

by Ian Cutress & Ryan Smith on April 25, 2018 11:15 AM EST

Conclusion: It Changes Our Results

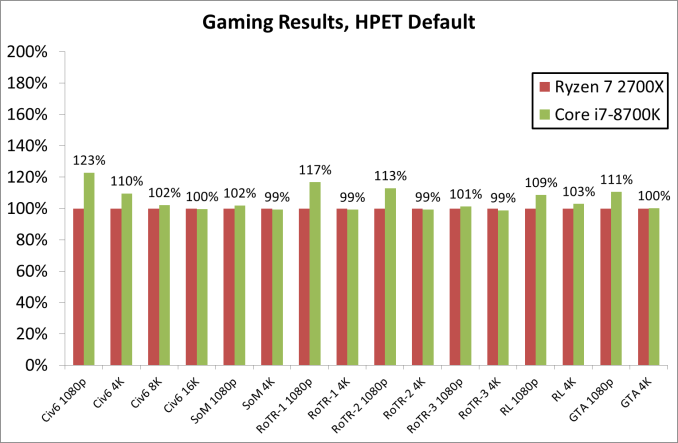

When we first published our Ryzen 2000-series review, with HPET forced as the timer in the operating system, our results broadly showed the new processors leading the pack. In light of the audit, and especially given how the Intel gaming results have changed, the data now paints a different picture.

At 1080p, the Core i7-8700K has a clear lead in most titles, although that lead largely vanishes when moving to 4K, except in Civilization. Ultimately, for any user pushing the pixel count, the chips are for the most part at parity in our tests. AMD’s claims for the Ryzen 2000 launch were more focused on 1440p gaming; however, it is clear that there is still a margin that benefits Intel at the most popular resolutions, such as 1080p.

Why This Matters, and How AnandTech is Set to Test in the Future

The interesting thing to come out of both Intel and AMD is that they do not seem to worry whether HPET is enabled or not. Regardless of the advice in the past, both companies seem to be satisfied when HPET is enabled in the BIOS and indifferent when HPET is forced in the OS. For AMD, the result change was slight but notable. For Intel we saw substantial drops in performance when HPET was the forced timer, and removing that lock improved performance.

It would be odd to hear if Intel are not seeing these results internally, and I would expect them to be including an explicit mention of ensuring the HPET settings in the operating system in every testing guide. Or Intel's thinking could be that because HPET not being forced is the default OS position, they might not see it as a setting they need to explicitly mention in their reviewer guides. Unfortunately, this opens up possibilities when it comes to overclocking software interfering with how the timers are being used.

As noted above, overclocking and monitoring tools like Ryzen Master request a restart when used for the first time in order to make sufficient changes to the system to run correctly. Some of this software will be forcing HPET in the BCD in order to enable what it needs to do, and the adjustment is unlikely to be explicitly mentioned in the request to restart. In a standard review, it is typically expected that each system will have a fresh OS and fresh software install, such that each system is tested as if it were new. For any user looking to tune the system, this is the point where potential software issues could occur. A reviewer who analyzes the software bundled with the system before testing, rather than after, could end up with significantly different results. It can create a conundrum, as has clearly been the case for us.

Moving forward, the immediate goal here at AnandTech is to ensure that our readers have the most up-to-date and correct results, particularly for our Ryzen 2000-series review. As a result, we are taking a few steps both immediately and in the future to correct our data, update our Ryzen 2000-series review, and to prevent this issue going forward.

First and foremost, we have decided that force-enabling HPET is not how we want to test systems, as this is non-default behavior. While it has an important role in extreme overclocking, where it is used to verify accurate timing, ultimately it was akin to taking a sledgehammer to crack an egg for our testing - we need to be testing systems at stock. So all further CPU testing going forward will be using HPET's default behavior, and we have even put checks in our scripts to ensure this is now the case.
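For readers who want a similar safeguard, here is a minimal sketch of what such a check might look like. It is not our actual test script. It assumes a Windows system where the forced timer shows up as a `useplatformclock` entry in the output of `bcdedit /enum {current}`, and that the value is printed as `Yes` when HPET is forced; the function names `hpet_forced` and `read_bcd_current` are hypothetical.

```python
import subprocess


def hpet_forced(bcd_text: str) -> bool:
    """Return True if a BCD listing shows useplatformclock enabled.

    Assumption: the listing has one "name  value" pair per line, and a
    forced platform clock appears as "useplatformclock  Yes".
    """
    for line in bcd_text.splitlines():
        parts = line.split()
        if len(parts) >= 2 and parts[0].lower() == "useplatformclock":
            return parts[1].lower() in ("yes", "true")
    # No entry at all means the OS default (HPET not forced).
    return False


def read_bcd_current() -> str:
    """Fetch the current boot entry listing.

    Windows only, and bcdedit needs an elevated (administrator) prompt.
    """
    result = subprocess.run(
        ["bcdedit", "/enum", "{current}"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


# Example use at the start of a benchmark run (Windows, elevated):
#     if hpet_forced(read_bcd_current()):
#         raise SystemExit("HPET is forced in the BCD; refusing to benchmark")
```

Keeping the parsing separate from the `bcdedit` call means the check can be exercised against saved listings without touching a live system.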

As a result we are retracting our existing results for all of the processors we used in the Ryzen 2000-series review. This goes for both the review and for Bench. All of these products will be updated with revised results using the default HPET behavior just as soon as the updated data is available over the course of the next week. In fact we're already in the process of running this updated testing, which we've used for this article and uploaded to Bench.

The end goal here is to cover most of the popular processors from the previous few generations on our existing 2017 benchmark suite in order to fully update and republish our Ryzen 2000-series review. Meanwhile, because the results in that review are still being updated, the conclusion for that review is also being retracted. We don't anticipate updated results meaningfully changing that conclusion, but it is inappropriate to have a conclusion remain published until we have all of the data we need.

Longer-term, because this issue goes back further than just the Ryzen 2000-series review and we are already on the cusp of organizing our 2018 CPU benchmarking suite, we're also accelerating our rollout of that suite. After replacing the data for key hardware on that 2017 test suite, we will be rolling out the 2018 update in earnest. The 2018 CPU benchmarking suite will move to the latest software and drivers, along with a change-up in games (F1 2017, Shadow of War, Far Cry 5; we have also had requests for Deus Ex). Our 2018 suite will require that Spectre and Meltdown patches are in place for the systems we test, to ensure that everyone has access to the latest data.

(ed: It should be noted that this only affects Ian's CPU review data; Brett and Nate run different tools in their laptop and GPU reviews respectively)

Overall we expect to be done collecting data to finish and update the Ryzen 2000-series review next week. After that, it will take some time to roll out the 2018 CPU benchmarking suite data, but that should only be on the order of weeks assuming that there are no further surprises (ed: knock on wood).

We also would like to give all of our readers and colleagues a sincere thank you for assisting with this analysis. We continually strive to publish the best possible data, so your input is and always will be invaluable for finding patterns and oddities we may have missed.

Finally, while we're on the subject of timers, we'd like to throw out an open-ended question to everyone: given what we've found, should the use/requirement of HPET in software be made clearer? Or is there a risk of that information being more confusing than helpful? One of the issues we grappled with in writing this article is that while HPET can have a performance impact, it's also not necessarily wrong to use it given its unique accuracy. So we're interested in hearing from all of you on how you think the use of HPET should be documented, so that users aren't caught off-guard by the potential performance impact.

Update: 04/26

HPET and Invariant TSC

Since publishing this follow up, several readers have reached out about their experiences with timers, as well as offering deeper explanations of some of the key points in this article. I will attempt to cover some of them here.

The main on-die CPU timer is the Time Stamp Counter (TSC), which was one of the main timers in single core systems. With the movement to multi-core, HPET became the new, more accurate timer that, as described, can protect against clock drift. HPET was preferred to TSC, but can take 10-100x longer to be probed, due to its location on the chipset. The industry however is moving back towards TSC through an Invariant TSC (ITSC), which is a version of TSC that is stable through CPU frequency changes and C-state changes. The ITSC is accessed through the RDTSC instruction, which can be used simultaneously by both the kernel and user code if permitted (unlike HPET, which is a locked timer), and is sufficient for multi-core systems. And although this method still has the RTC bias issue, the lower latency is favoured by all, except overclockers adjusting the platform's 100 MHz base frequency.

TL;DR: HPET can take 1000s of cycles to read, and reading it with multiple cores compounds the issue. Invariant TSC, as a core instruction, is a potential solution with lower latency already in use.
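As a rough way to see the cost of a timer read on your own system, the sketch below times a tight loop of calls to Python's `time.perf_counter_ns()`. This is only an illustration: which hardware timer backs that call depends on the OS and its configuration, the number includes Python interpreter overhead, and the helper name `mean_timer_call_ns` is our own. Comparing the figure with and without HPET forced should still show the relative difference.

```python
import time


def mean_timer_call_ns(samples: int = 200_000) -> float:
    """Average wall time per timer read, in nanoseconds.

    Includes loop and interpreter overhead, so treat the value as an
    upper bound on the cost of the underlying timer read.
    """
    start = time.perf_counter_ns()
    for _ in range(samples):
        time.perf_counter_ns()  # the timer read being measured
    end = time.perf_counter_ns()
    return (end - start) / samples


# Report which OS clock Python is using and its advertised resolution.
info = time.get_clock_info("perf_counter")
print(f"clock: {info.implementation}, resolution: {info.resolution} s")
print(f"~{mean_timer_call_ns():.0f} ns per timer call")
```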

“There is a HPET Bug, No Intel is Not Cheating” and TimerBench

Matthias from Overclockers.at reached out to me and linked me to his article on how they have previously encountered the issue. The article is a nice read, and well worth clicking through:

The HPET bug: What it is and what it isn't

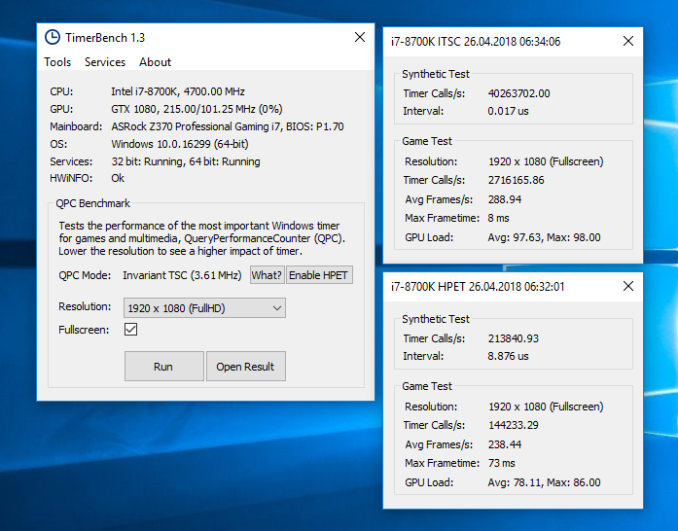

Matthias explains how, during their X299 testing, they experienced slowdown in their game benchmarks and pinpointed the problem to HPET. (We also had similar issues, and didn’t post results, but never got to the bottom of the issue.) As a result, the team over at Overclockers.at developed a tool called TimerBench in order to determine the effect of HPET. As noted, HPET has a much longer latency, but is more accurate.

In the results from overclockers.at one metric stood out: moving from Broadwell-E to Skylake-X meant that the number of theoretical peak HPET calls per second reduced from 1.4 million to 0.2 million – the latency to make a HPET call suddenly became 7x longer with Skylake-X. TimerBench, the tool developed, provides an Unreal 4.7.2 scene and measures timer calls between a system running a game, and one without.
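The 7x figure follows directly from the two call rates. The short calculation below makes the step explicit, under the simplifying assumption that the peak rate comes from back-to-back calls on one thread, so the per-call latency is just the reciprocal of the rate.

```python
def latency_us(calls_per_second: float) -> float:
    """Per-call latency in microseconds, assuming back-to-back calls."""
    return 1e6 / calls_per_second


broadwell_e_us = latency_us(1.4e6)  # 1.4 million HPET calls per second
skylake_x_us = latency_us(0.2e6)    # 0.2 million HPET calls per second
ratio = skylake_x_us / broadwell_e_us

print(f"Broadwell-E: {broadwell_e_us:.2f} us per HPET call")
print(f"Skylake-X:   {skylake_x_us:.2f} us per HPET call")
print(f"Ratio:       {ratio:.1f}x")
```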

For our results, we used TimerBench on each system with a GTX 1080 in 1920x1080 mode, running fullscreen.

With the HPET timer, the i7-8700K system went from 214k timer calls per second outside of a game down to 144k timer calls per second, which is about the same fraction as with the ITSC timer. The big difference however is the frame rate, decreasing from 289 FPS with ITSC to 238 FPS with HPET, as well as the average GPU load, down from 97.6% to 78.1%. This is shown in the maximum frame time as well.

TimerBench 1.3: GTX 1080 at 1920x1080 (timer calls per second, in millions)

| System | ITSC: Calls OS | ITSC: Calls Game | HPET: Calls OS | HPET: Calls Game | FPS (ITSC) | FPS (HPET) | Avg GPU Load (ITSC) | Avg GPU Load (HPET) |
|---|---|---|---|---|---|---|---|---|
| Desktop: GTX 1080 at 1920x1080 | | | | | | | | |
| Ryzen 7 1800X | 27.7m | 2.0m | 0.4m | 0.3m | 283 | 279 | 96% | 95% |
| Core i7-8700K | 40.3m | 2.7m | 0.2m | 0.1m | 289 | 238 | 98% | 78% |
| Core i7-7820X | 35.5m | 2.4m | 0.2m | 0.1m | 285 | 252 | 95% | 83% |
| Core i7-6700K | 36.1m | 2.3m | 0.2m | 0.1m | 286 | 258 | 96% | 85% |
| Core i7-6950X* | 91.8m | 1.3m | 1.1m | 0.6m | 285 | 262 | 98% | 96% |
| Mobile: MX 150 at 800x600 | | | | | | | | |
| Core i7-8550U | 34.3m | 0.9m | 0.2m | 0.06m | 148 | 137 | - | - |

* No Spectre/Meltdown Patches

When I correlate this data with the systems I have currently running, we see that the AMD Ryzen 7 1800X system is not particularly affected, but all of our Intel systems are: Skylake-S, Skylake-X, Coffee Lake, and even our mobile device. What is clear is that the HPET timer is causing performance degradation, which shows up as a lower average GPU load. If the GPU is waiting on the same timing delays caused by HPET, this would lower the overall GPU load.

So this interesting correlation leads me to think that maybe this issue, aside from potential Spectre/Meltdown related points, is related to the chipset. HPET circuits are normally found on the chipset/southbridge, and in this case Intel has a wide HSIO chipset design in all the systems tested. As the chipset is, among other things, a PCIe switch, it has various buffers to deal with the data coming in and out. The effect of this wide chipset and its buffers might be part of the HPET issue. I need to go dig out an older system.
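To put a number on "not particularly affected", the sketch below computes the relative frame rate drop for each system, using only the FPS columns of the TimerBench table above. The helper `fps_drop_percent` is our own name, and these are single-run figures, so small differences should be read with care.

```python
# (system, FPS with ITSC, FPS with HPET forced), copied from the table
results = [
    ("Ryzen 7 1800X", 283, 279),
    ("Core i7-8700K", 289, 238),
    ("Core i7-7820X", 285, 252),
    ("Core i7-6700K", 286, 258),
    ("Core i7-6950X", 285, 262),
    ("Core i7-8550U", 148, 137),
]


def fps_drop_percent(fps_itsc: float, fps_hpet: float) -> float:
    """Frame rate lost when HPET is forced, as a percentage of the ITSC result."""
    return 100.0 * (fps_itsc - fps_hpet) / fps_itsc


drops = {name: fps_drop_percent(itsc, hpet) for name, itsc, hpet in results}

# List the systems from most to least affected.
for name, drop in sorted(drops.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:15s} {drop:5.1f}% lower FPS with HPET forced")
```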

242 Comments


Dr. Swag - Wednesday, April 25, 2018 - link

It looks like you guys are re running all the benchmarks in the original review then, right? I see that the results look to be changed and less CPUs are on the lists (since you haven't rerun them all, I assume)

Ryan Smith - Wednesday, April 25, 2018 - link

Correct. We knew at the start of the Ryzen 2 review what benchmarks and what products we wanted to include; this timer issue hasn't changed that.

freaqiedude - Wednesday, April 25, 2018 - link

So would it be fair to say that Intel’s HPET implementation is potentially buggy? It seems to cause a disproportionate performance hit.

chrcoluk - Wednesday, April 25, 2018 - link

no its just that TSC + lapic is the way to go, There is a reason thats the default in windows and other modern OS's.

DanNeely - Wednesday, April 25, 2018 - link

It suggests that their implementation could probably be made less impactful than it currently is; but that high precision timers have had a performance impact has been known for a long time. In its guise as the multi-media timer in Windows over a decade ago the official MS docs recommended using lesser timing sources in lieu of it whenever possible because it would affect your system.

What's new to the general tech site reading public is that there are apparently significant differences in the size of the impact between different CPU families.

Tamz_msc - Wednesday, April 25, 2018 - link

But is there a 'real' performance impact or does default HPET behavior simply introduce a fudge factor that alters how the tools report the numbers? Is there a way to verify the results externally?

eddman - Wednesday, April 25, 2018 - link

I'm wondering about the same thing. Do the games' frame rate really change (they get smoother or vice versa) or the timer just messes up the numbers reported by benchmarks and the games' actual frame rate that reaches the display doesn't change?

rahvin - Wednesday, April 25, 2018 - link

I'd be more concerned that Intel has found a way to make the timer report false benchmarks that are higher than they actually are. I'd also be curious if the graphics card/cpu combination is potentially at fault.

Nvidia has been shown to cheat in the past on benchmarks by turning off features in certain games that are used for benchmarking to boost the score. Is Intel doing something similar?

Rob_T - Wednesday, April 25, 2018 - link

I came across a similar issue on VMware, where a virtual machine's clock would drift out of time synchronisation. The cause of this was that VMWare uses a software based clock and when a host was under heavy CPU load the VM's clock wouldn't get enough CPU resource to keep it updated accurately. This resulted in time running 'slowly' on the virtual machine.

Under normal circumstances this kind of time drift issue would be handled by the Network Time Protocol daemon slewing the time back to accuracy; the problem is the maximum slew rate possible is limited to 500 parts-per-million (PPM). Under peak loads we were observing the VM's clock running slow by anywhere up to a third. This far outweighed the ability of the NTP slew mechanism to bring the time back to accuracy.

If this issue has the same root cause, the software based timers would start to run slowly when the system is under heavy load. Therefore more work could be completed in a 'second' due to its increased duration. It would be interesting to know if the highest discrepancy were also the ones with the largest CPU loads? Looking at the gaming graphs on page 4 the biggest differences are at 1080p which suggests this might be the case.

oleyska - Wednesday, April 25, 2018 - link

You also had Idle issue with windows servers where time would drift., the high load I've never heard of in our company we have thousands upon thousands of vm's using vmware though.