Mega Memory: Crucial Ships 128 GB DDR4-2666 Modules for Servers at $3999 per Unit

by Anton Shilov on December 2, 2017 11:00 AM EST

Posted in: Memory, Crucial, Micron, DDR4, Servers, Skylake-SP, Xeon Scalable, LRDIMM, DDR4-2666

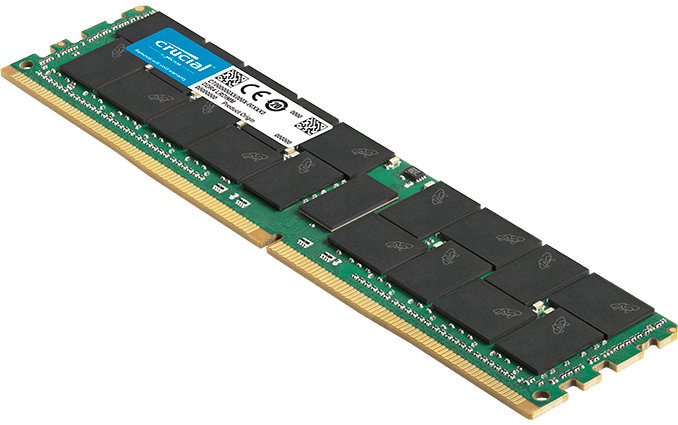

Crucial has started shipments of its fastest and highest-density server-class memory modules to date. Crucial’s 128 GB DDR4-2666 LRDIMMs are compatible with the latest memory-dense servers. These modules should be usable in both AMD EPYC systems and Intel Xeon systems; however, Crucial states that they are optimized for Intel’s Xeon Scalable CPUs (Skylake-SP) launched earlier this year, and are aimed at mission-critical, RAM-dependent applications. Due to the complexity of such LRDIMMs, and because of their positioning as super-dense memory, the price is very high.

Crucial’s 128 GB LRDIMMs are rated to operate at a 2666 MT/s interface speed with CL22 timings at 1.2 V. The module is based on Micron’s 8 Gb DRAM ICs, which are made using 20 nm process technology and assembled into 4Hi stacks using TSVs. The LRDIMM uses 36 such stacks. Stacking naturally makes the organization of the module very complex: we are dealing with an octal-ranked LRDIMM featuring two physical ranks, each with four logical ranks. Making such a module run at 2666 MT/s is a challenge, so it ends up running at relatively high latencies (higher than the CL17 – CL20 specified by JEDEC for DDR4-2666). This can somewhat diminish the benefits of the relatively high data rate, but it is not surprising given the need to keep the modules stable.
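To put the CL22 rating in perspective, CAS latency in cycles can be converted to nanoseconds. The sketch below uses the usual relation that DDR memory transfers data twice per clock, so one clock cycle lasts 2000 / (data rate in MT/s) nanoseconds. The function name is illustrative, and the nominal 2666 MT/s figure is used as-is.

```python
def cas_latency_ns(cas_cycles: int, data_rate_mts: float) -> float:
    """Convert a CAS latency given in clock cycles to nanoseconds.

    DDR transfers data on both clock edges, so the clock frequency is
    data_rate / 2 MHz and one cycle lasts 2000 / data_rate nanoseconds.
    """
    return cas_cycles * 2000.0 / data_rate_mts


# Compare the JEDEC range quoted in the article (CL17 to CL20)
# with the CL22 rating of the 128 GB LRDIMM.
for cl in (17, 20, 22):
    print(f"CL{cl} at 2666 MT/s: {cas_latency_ns(cl, 2666):.1f} ns")
```

By this estimate, CL22 costs roughly 1.5 ns more than CL20 and just under 4 ns more than CL17 at the same data rate.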

The key advantage of 128 GB LRDIMMs is their density. For example, in a dual-socket Xeon Scalable platform using the -M suffixed processors, each socket with 12 memory slots can double its maximum memory to 1.5 TB from 768 GB by using 128 GB LRDIMMs instead of 64 GB LRDIMMs. For DRAM-dependent applications, such as large databases, holding everything in memory is the most important factor for performance. Obviously, such a performance advantage comes at a price.

Specifications of Crucial's Server 128 GB DDR4-2666 LRDIMM

| Part Number | Module Capacity | Latencies | Voltage | Organization |
| --- | --- | --- | --- | --- |
| CT128G4ZFE426S | 128 GB | CL22 | 1.2 V | Octal Ranked |
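The "Octal Ranked" entry and the 128 GB capacity can be cross-checked against the stack counts quoted earlier. This is a rough sketch under two assumptions that Crucial does not state: a standard 72-bit ECC layout (64 data bits plus 8 ECC bits), and one logical rank per die in each 4Hi stack.

```python
# Figures taken from the article.
DIE_DENSITY_GBIT = 8     # Micron 8 Gb DRAM ICs
DIES_PER_STACK = 4       # 4Hi TSV stacks
STACKS_PER_MODULE = 36
PHYSICAL_RANKS = 2

# Assumptions (not stated by Crucial): a standard ECC DIMM layout,
# and each die in a stack acting as one logical rank.
DATA_BITS = 64
ECC_BITS = 8
LOGICAL_RANKS_PER_PHYSICAL = DIES_PER_STACK

total_dies = STACKS_PER_MODULE * DIES_PER_STACK          # dies on the module
raw_capacity_gb = total_dies * DIE_DENSITY_GBIT // 8     # gigabits -> gigabytes
usable_capacity_gb = raw_capacity_gb * DATA_BITS // (DATA_BITS + ECC_BITS)
total_ranks = PHYSICAL_RANKS * LOGICAL_RANKS_PER_PHYSICAL

print(f"dies: {total_dies}")
print(f"raw capacity: {raw_capacity_gb} GB (including ECC)")
print(f"usable capacity: {usable_capacity_gb} GB")
print(f"ranks: {total_ranks}")
```

Under these assumptions the numbers line up with the listing: 144 dies hold 144 GB in total, of which 128 GB is data, spread across eight ranks.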

According to Crucial, production of 128 GB LRDIMMs involves 34 discrete stages with over 100 tests and verifications, making them particularly expensive to manufacture. These costs are then passed on to customers buying such modules. The company sells a single 128 GB DDR4-2666 module online for $3,999, but server makers naturally get them at different rates based on quantity and support. At the retail price, fully populating a Xeon-SP system would cost about $48k per socket in memory alone, while an EPYC system at 2 TB per CPU would come to about $64k per socket. At these rates, spending $13k or $4k on a CPU suddenly becomes a much smaller part of the initial hardware cost (which is some justification for high-priced CPUs). At the Xeon-SP launch, Intel stated that fewer than 5% of its customers would use high memory capacity server configurations, though given how big the server market is, that is still a sizeable portion.
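The per-socket figures above are simple multiplication, sketched below. The 12-slot count for Xeon-SP comes from the article; the 16-slot count for EPYC is inferred from the 2 TB per CPU figure (2048 GB / 128 GB). Pairing the $13k CPU price with the 12-slot configuration is an assumption made only for illustration, and the function names are hypothetical.

```python
MODULE_PRICE_USD = 3999   # retail price per module, from the article
MODULE_CAPACITY_GB = 128


def memory_capacity_gb(slots: int) -> int:
    """Total memory per socket when every slot holds a 128 GB module."""
    return slots * MODULE_CAPACITY_GB


def memory_cost_usd(slots: int) -> int:
    """Retail cost of filling every slot on one socket."""
    return slots * MODULE_PRICE_USD


def cpu_share(cpu_price_usd: int, slots: int) -> float:
    """Fraction of the CPU-plus-memory cost that goes to the CPU."""
    return cpu_price_usd / (cpu_price_usd + memory_cost_usd(slots))


# 12 slots per socket is from the article; 16 is inferred from 2 TB per CPU.
for name, slots in (("Xeon-SP", 12), ("EPYC", 16)):
    print(f"{name}: {memory_capacity_gb(slots)} GB, ${memory_cost_usd(slots):,} per socket")

# Illustrative pairing only: a $13k CPU with the 12-slot configuration.
print(f"CPU share of CPU+memory cost: {cpu_share(13000, 12):.0%}")
```

With these inputs, a fully populated socket spends several times more on memory than on the processor itself.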

Crucial says that its 128 GB LRDIMMs are compatible with Xeon Scalable-based servers from various OEMs as well as software like Microsoft SQL and SAP HANA. We would expect them to be compatible with AMD EPYC servers too; however, Crucial drives home the point about being optimized for Intel. Each module is tested individually to ensure maximum reliability for mission-critical applications. Specific support for these modules will be down to OEMs, as with other memory.

Related Reading

- Intel Unveils the Xeon Scalable Processor Family: Skylake-SP in Bronze, Silver, Gold and Platinum

- Crucial Announces DDR4-2666 DIMMs for Upcoming Server Platforms

- Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

- Dual Xeon Scalable Overclocking: ASUS WS C621E 'Sage' Workstation Motherboard Announced

- DDR4 Haswell-E Scaling Review: 2133 to 3200 with G.Skill, Corsair, ADATA and Crucial

- ASRock Rack Announces EP2C612D24 and 4L: Dual Socket Haswell-EP with 24 DDR4 Slots

Source: Crucial

28 Comments


milkod2001 - Saturday, December 2, 2017 - link

Wonder what's the production cost per stick, like 20 bucks?

T1beriu - Saturday, December 2, 2017 - link

Reading is hard.

III-V - Saturday, December 2, 2017 - link

$20 is incredibly imbecilic, but as far as "reading" goes, there's no mention of cost in this article. And it'd be kind of crazy for anyone to divulge that information.

mjeffer - Saturday, December 2, 2017 - link

They also have to engineer what will be a complex, low volume part. That adds to the cost. To be honest I didn't find $3999 to be that bad considering the costs of other enterprise parts.

Barilla - Sunday, December 3, 2017 - link

I'm not sure it can be considered a low volume part, sure - few customers will be buying these, but those that will? They'll order hundreds, or even thousands at a time.

menting - Sunday, December 3, 2017 - link

hundreds and thousands of these modules are still considered low volume in the world of DRAM modules.

evanrich - Saturday, December 2, 2017 - link

there are more than 20$ worth of parts on that stick as is, let alone mfg costs.

yuhong - Saturday, December 2, 2017 - link

Main cost is producing the 4H TSV chips used. I think Samsung has been producing them for a while now.

III-V - Saturday, December 2, 2017 - link

A 8 Gb die is trading at ~$9.75 (daily high) right now (and that's a slower speed -- 2133/2400). I don't know what the margins are, but they're not terribly high considering ram is a commodity. We'll just ignore that since it's an unknown and we can only go off market value.

The packages use 4 dies, and if we assume Micron's magic and can make those without any additional production cost, that's $39 per package. There are 36 packages, or 144 dies on this DIMM.

That comes out to $1404 for the dies/packages themselves. The PCB, passives, and that other die aren't very expensive, probably < $10.

And as I pointed out, that price is based off a slower speed. That's going to add a lot to the cost (50%? double?). On top of that, you have retail markup (let's say, 30%?), testing, assembly, marketing, distribution costs.

Samus - Sunday, December 3, 2017 - link

That's a pretty good analysis. I would have guessed 300% margins but you are right in that it's probably closer to 200% considering the octal stacking and complexity of producing a stick reliably (a lot of binning and verification)