GlobalFoundries and AMD Announce First 14nm FinFET Sample Production Success

by Ryan Smith on November 5, 2015 9:00 PM EST

Posted in: CPUs, AMD, GlobalFoundries, APUs, GPUs, fabrication, 14nm

GlobalFoundries, AMD’s former chip manufacturing arm, is a fab that has seen some hard times. After being spun off from AMD in 2009, the company has encountered repeated trouble releasing new manufacturing nodes in a timely manner, culminating in the company canceling their internally developed 14XM FinFET process. Charting a new course, in 2014 the company opted to license Samsung’s 14nm FinFET process, and in some much-needed good news for the company, today they and AMD are announcing that they have successfully fabbed AMD’s first 14nm sample chip.

Today’s announcement, which comes by way of AMD, notes that the fab has produced their first 14nm FinFET LPP sample for AMD. The overall nature of the announcement is somewhat vague – GlobalFoundries isn’t really defining what “successful” means – though presumably this means AMD has received working samples back from the fab. Overall the message from the two companies is clear: they are making progress on bringing up 14nm manufacturing at GlobalFoundries ahead of mass production in 2016.

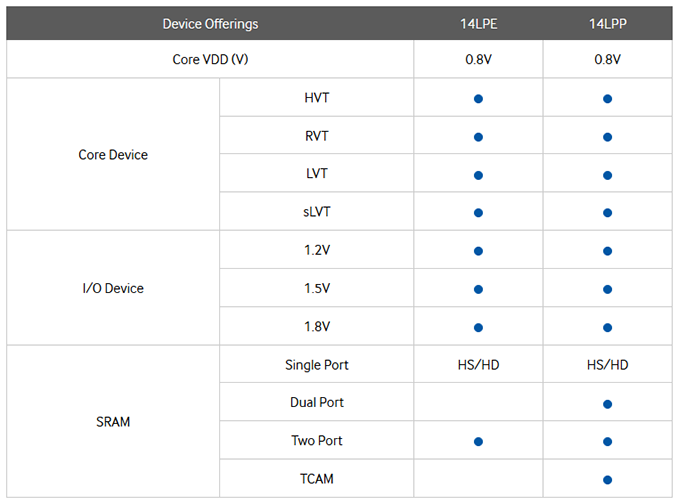

Of particular importance in today’s announcement is the node being used; the sample chips were fabbed on 14nm Low Power Plus (14LPP), which is Samsung’s (and now GlobalFoundries’) second-generation 14nm FinFET design. Relative to the earlier 14nm Low Power Early (14LPE) design, 14LPP is a refined process designed to offer roughly 10% better performance, and going forward will be the process we expect all newer chips to be produced on. So in the long run, this will be GlobalFoundries’ principal FinFET process.

Samsung Brochure on 14LPE vs. 14LPP

AMD for their part has already announced that they have taped out several 14LPP designs for GlobalFoundries, so a good deal of their future success hinges on their long-time partner bringing 14LPP to market in a timely manner. For today’s announcement AMD is not disclosing what chip was successfully fabbed, so it’s not clear if this was a CPU, APU, or GPU, though with GlobalFoundries a CPU/APU is more likely. No matter what the chip, though, this is a welcome development for AMD; as we have seen time and time again with chips from Intel, Samsung, and Apple, a properly implemented FinFET design can significantly cut down on leakage and boost the power/performance curve, which will help AMD become more competitive with their already FinFET-enabled competition.

Finally, looking at the expected timetables, GlobalFoundries’ production plans call for their 14LPP process to enter the early ramp-up phase this quarter, with full-scale production starting in 2016. Similarly, in today’s announcement AMD reiterated that they will be releasing products in 2016 based on GlobalFoundries’ 14LPP process.

Source: AMD

58 Comments


mayankleoboy1 - Friday, November 6, 2015

Their GPUs are not embarrassing

silverblue - Friday, November 6, 2015

Nor is Carrizo, for all intents and purposes. Imagine being told that your new product, which uses half the power of the old one for the same, if not greater, performance, is "embarrassing". And that's at 15W.

gijames1225 - Friday, November 6, 2015

What they managed to do with Carrizo is really impressive. If they finally have access to a process node that isn't four years old, I'm hopeful AMD can at least get back on their feet a bit.

Refuge - Monday, November 9, 2015

If only they could get them put into some fucking laptops!!!! Gah!

Samus - Friday, November 6, 2015

No, they're really good, but not competitively priced.

looncraz - Saturday, November 7, 2015

They're priced just fine. In the real world, you can't get a 980Ti for less than $750 that comes with a cooler as nice as the one that comes with the $650 Fury X.

If water cooling doesn't matter to you that much, buy the standard Fury for $100 less and lose almost no performance.

The problem I have grabbing one is the lack of dual DVI-D. The display connectivity choices are abysmal. Hopefully AMD has learned from the backlash over their choices... or someone makes a good Fury card with Dual DVI-D connections.

markbanang - Saturday, November 7, 2015

If a lack of DVI-D is your only problem, why not invest in a couple of £10 DisplayPort to DVI-D adaptors? I have a full set of DP adapters for work, because we have no standard for conference room projectors, and you might need to connect by DVI, HDMI or even VGA.

Valantar - Sunday, November 8, 2015

I for one applaud them for ditching obsolete standards sooner rather than later. Especially given the amazing versatility of DP and the wealth of reasonably priced adapters that can be found.

Sticking a DVI port on the Fury X would have been silly and odd given the otherwise forward-looking nature of the card.

If you can afford a $650 GPU, you can afford a $20 adapter dongle.

David_K - Thursday, November 12, 2015

Actually, for people with 1440p these adapters won't work, as they only support up to 1080p. Adapters from dual-link DVI to DisplayPort that actually use the full dual-link bandwidth, so you could use your old 1440p monitor that does not have a native DisplayPort connector, are expensive. They are active, so I am sure they also add latency.

medi03 - Monday, November 9, 2015

AMD's mid-range GPUs rock, I mean R9 380 and R9 390.

380 has much better perf than 960, while consuming marginally more power in games (7-30W).

In the high end, Fury is a mixed bag, and I don't care about the lower end, so I didn't follow it.