The AMD A8-7670K APU Review: Aiming for Rocket League

by Ian Cutress on November 18, 2015 8:00 AM EST

Rocket League

Hilariously simple pick-up-and-play games are great fun. I'm a massive fan of the Katamari franchise for that reason: pressing start on a controller and rolling around, picking up things to get bigger, is extremely simple. Until we get a PC version of Katamari that I can benchmark, we'll focus on Rocket League, as recent noise around its accessibility has me interested.

Rocket League combines the elements of pick-up-and-play, allowing users to jump into a game with other people (or bots) to play football with cars with zero rules. The title is built on Unreal Engine 3, which is somewhat old at this point, but it allows users to run the game on super-low-end systems while still taxing the big ones. Since the release earlier in 2015, it has sold over 5 million copies and seems to be a fixture at LANs and game shows — and I even saw it being played at London’s MCM Expo (aka Comic Con) this year. Users who train get very serious, playing in teams and leagues with very few settings to configure, and everyone is on the same level. As a result, Rocket League could be a serious contender for a future title for e-sports tournaments (if it ever becomes free or almost free, which seems to be a prerequisite) when features similar to DOTA on watching high-profile games or league integration are added. (To any of the developers who may be reading this: You could make the game free and offer pay-for skins or credits for watching an official league match – it wouldn’t diminish the quality of actual gameplay in any way.)

Obligatory shot of Markiplier on Rocket League (caution, coarse language in the link)

Based on these factors, plus the fact that it is an extremely fun title to load and play, we set out to find the best way to benchmark it. Unfortunately, automatic benchmark modes for games are few and far between. In speaking with some indie developers as well as big studios, we learned that, for there to be a benchmark mode, the game has to be designed with a benchmark in mind from the beginning, because adding an automated one at a later date can be almost impossible. Some developers seem to realize this as their (first major) title is near completion, whereas large game studios don't care at all, even though a good benchmark mode will ensure its presence in many technical reviews, increasing awareness of them and answering a number of performance-related questions for the community automatically. Partly because of this, but also on the basis that it is built on the Unreal 3 engine, Rocket League does not have a benchmark mode. In this case, we have to develop a consistent run and record the frame rate.

Developing a consistent run for frame-rate analysis can be difficult without a "trace." A trace ensures that random numbers are fixed and that the same plot occurs each time. The Source engine was very good for this when we used to have Portal benchmarks, and even Battlefield 2 did it reasonably well. But when a trace is unavailable, we have to deal with non-player characters that use random action generators in sports-like titles. When faced with a task, an AI function will typically have a weighted set of options for what it should do, and will then generate a random number that usually selects option A, might select option B and, 1 time in 100, selects uncharacteristic option C. We've dealt with random AI tasks before, though. For example, any racing benchmark that uses the Ego engine (such as DiRT, DiRT 2, DiRT Rally, GRID, GRID Autosport and any official F1 title this decade) runs a race over a fixed number of laps, representing what can happen in an actual race. While you don't get the same frames being rendered, the overall frame-rate profile of a long benchmark run should have both high- and low-fps moments and end up similar when all possible variables you can fix are fixed. As long as you don’t report the absolute minimum frame rate, the averages (mean or median) and percentiles should align appropriately.
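To make the weighted-option idea concrete, here is a minimal Python sketch. It is not how Rocket League or any engine mentioned here actually implements its AI; the option names, the weights and the `pick_actions` helper are all assumptions made up for illustration. It shows why fixing the random seed (which is effectively what a trace does) gives a repeatable sequence of decisions.

```python
import random

# Hypothetical weights: A is the usual choice, B is occasional,
# and C is the uncharacteristic 1-in-100 option.
OPTIONS = ["A", "B", "C"]
WEIGHTS = [0.89, 0.10, 0.01]


def pick_actions(seed, count):
    """Return `count` weighted-random choices from a generator seeded with `seed`."""
    rng = random.Random(seed)  # private generator, so the seed fully fixes the sequence
    return rng.choices(OPTIONS, weights=WEIGHTS, k=count)


# Two runs with the same seed make identical decisions, like replaying a trace.
first_run = pick_actions(seed=42, count=1000)
second_run = pick_actions(seed=42, count=1000)
same_decisions = first_run == second_run
```

Without a fixed seed, each run makes a different sequence of choices, which is why we lean on long runs and aggregate statistics rather than frame-by-frame comparisons.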

With Rocket League, there is no benchmark mode, so we have to perform a series of automated actions. We take the following approach: Using Fraps to record the time taken to show each frame (and the overall frame rates), we use an automation tool to set up a consistent 4v4 bot match on easy, with the system applying a series of inputs throughout the run, such as switching camera angles and driving around. It turns out that this method is nicely indicative of a real bot match, driving up walls, boosting and even putting in the odd assist, save and/or goal, as weird as that sounds for an automated set of commands. To maintain consistency, the commands we apply are not random but time-fixed, and we also keep the map the same (Denham Park) and the car customization constant. We start recording just after a match starts, and record for 4 minutes of game time, with average frame rates, 99th percentile and frame times all provided.
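For readers who want to reproduce the numbers, the following is a rough Python sketch of how the average frame rate and a 99th-percentile figure can be derived from per-frame times. It assumes the frame log provides a cumulative timestamp in milliseconds for each frame, and it assumes "99th percentile" means the frame time at the 99th percentile (nearest-rank method) converted to fps. The function names are our own for this example, not part of Fraps.

```python
import math


def frame_durations(timestamps_ms):
    """Convert cumulative frame timestamps (ms) into per-frame durations (ms)."""
    return [later - earlier for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]


def average_fps(durations_ms):
    """Mean frame rate: frames rendered divided by total time in seconds."""
    return 1000.0 * len(durations_ms) / sum(durations_ms)


def percentile_fps(durations_ms, pct=99.0):
    """Frame rate at the pct-th percentile of frame times (nearest-rank method)."""
    ordered = sorted(durations_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100.0))
    return 1000.0 / ordered[rank - 1]
```

Reading the result: a 99th-percentile value of 34 fps computed this way would mean 99% of frames were delivered at 34 fps or faster.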

The graphics settings for Rocket League come in four broad, generic settings: Low, Medium, High and High FXAA. There are advanced settings in place for shadows and details; however, for these tests, we keep to these four. Actually, due to an odd quirk with Rocket League, in most resolutions, only Low and High will generate different results. The title doesn’t require much in the way of GPU resources at times depending on the resolution (720p vs. 4K), and in our testing, the FXAA mode for High gave the same results as High, while in any resolution below 1920x1080, the Low and Medium results were equivalent. Our initial tests went through all four generic settings at 720p, 900p and 1080p to determine what would be a good metric for integrated graphics settings.

At this point, it is worth mentioning a small issue with Rocket League regarding frame rates. By default, the game is capped at 60 fps for a variety of reasons, including game consistency and a hybrid form of power saving, allowing the system to sleep if it can produce and dump frames. Removing this cap requires editing the TASystemSettings.ini file and setting the AllowPerFrameSleep parameter to False. Doing this lifts the cap, although some users of earlier versions have reported some camera issues in certain configurations. Our testing has not shown any issues resulting from an uncapped frame rate. Also, there is now a way to force MSAA, although we are not using it for this test.
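As an illustration of that change, here is a small Python sketch that flips a `key=value` line in the text of an .ini file. Only the file name and the AllowPerFrameSleep parameter come from our testing notes; the `set_ini_flag` helper and the `SomeOtherSetting` line are hypothetical, and the file's location and the rest of its contents are deliberately not shown.

```python
def set_ini_flag(ini_text, key, value):
    """Return ini_text with every `key=...` line rewritten as `key=value`."""
    updated = []
    for line in ini_text.splitlines():
        name, separator, _ = line.partition("=")
        if separator and name.strip() == key:
            updated.append(f"{key}={value}")
        else:
            updated.append(line)  # leave every other line untouched
    return "\n".join(updated)


# Placeholder content; SomeOtherSetting is not a real parameter.
sample = "AllowPerFrameSleep=True\nSomeOtherSetting=1"
uncapped = set_ini_flag(sample, "AllowPerFrameSleep", "False")
```

Back up the original file before changing it, since the game may rewrite its settings files.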

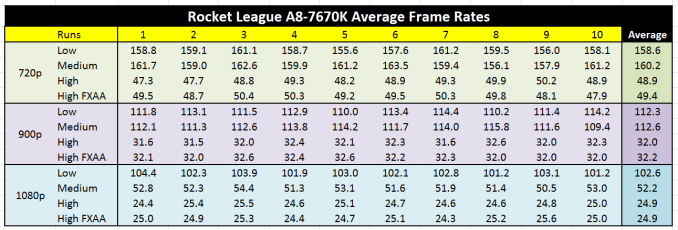

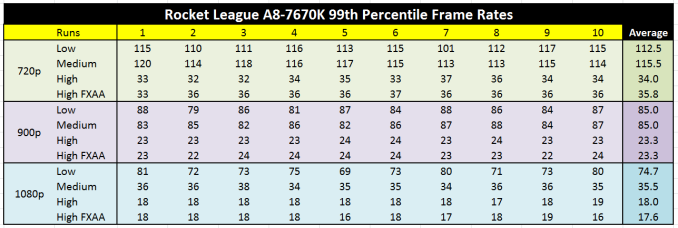

Thus, with our test, we did a sweep of 1280x720, 1600x900 and 1920x1080 at each of the four graphics settings, at 10 runs apiece. That's 120 games of football/soccer, at 4 minutes of frame recording each (plus 90 seconds for automated setup to load the game and select the right match). Because Fraps also lets us extract frame time data, we can analyze percentile profiles as well. The following results show each of the 10 runs at each setting, with a final average at the end (click through to see the full table in high resolution).
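The size of that sweep is easy to check. This short Python sketch enumerates the resolution and quality combinations described above and totals the recording and setup time. It counts only the 4 minutes of recording and 90 seconds of setup per run, so treat the result as a lower bound on the wall-clock time.

```python
from itertools import product

RESOLUTIONS = ["1280x720", "1600x900", "1920x1080"]
SETTINGS = ["Low", "Medium", "High", "High FXAA"]
RUNS_PER_CONFIG = 10
RECORD_SECONDS = 4 * 60  # frame recording per run
SETUP_SECONDS = 90       # automated game load and match selection

configs = list(product(RESOLUTIONS, SETTINGS))  # 3 x 4 = 12 combinations
total_runs = len(configs) * RUNS_PER_CONFIG
total_hours = total_runs * (RECORD_SECONDS + SETUP_SECONDS) / 3600
```

That works out to 120 runs and 11 hours of automated play per processor.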

For most users, the golden value of either 60 fps or 30 fps matters a great deal, depending on the scenario. Currently, our game tests use settings designed to allow a good integrated GPU (or R7 240-like discrete GPU) to achieve either 30 fps on the average or 30 fps on the 99th percentile, with some approaching a 60-fps average. In this case, the integrated graphics for the A8-7670K feels best at one of the following:

- 1280x720 High: 48.8 fps average, 34.0 fps at 99th

- 1600x900 High: 32.0 fps average, 23.3 fps at 99th

- 1920x1080 Medium: 52.2 fps average, 35.5 fps at 99th

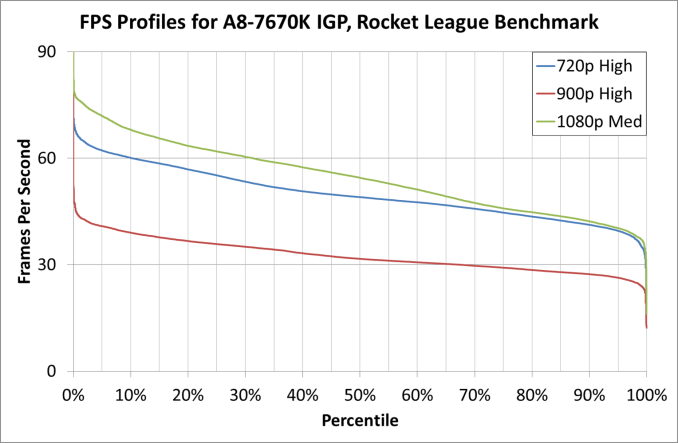

Here's what these look like on a frame-rate profile chart, indicating where the frame rate typically lies.

This graph shows that the 900p High line hits 30 fps only 67% of the time, clearly taking it out of the running. With the other two, we are comfortably in the 30-fps zone for just about the whole benchmark, but the 60-fps numbers are interesting — at 1080p and Medium settings, about 32% of the frames are over 60 fps, compared to only 10% of the frames at 720p High.
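The percentages quoted above are shares of frames at or above a given frame rate. As a sketch of how such a figure can be computed, assuming a list of per-frame durations in milliseconds and counting frames rather than weighting by time (the function name is ours):

```python
def share_at_or_above(durations_ms, fps_threshold):
    """Percentage of frames delivered at or above fps_threshold."""
    limit_ms = 1000.0 / fps_threshold  # longest frame time that still meets the threshold
    hits = sum(1 for duration in durations_ms if duration <= limit_ms)
    return 100.0 * hits / len(durations_ms)
```

Evaluating this at 30 fps and 60 fps for each run gives the two points on the profile chart that matter most for playability.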

Numbers aside, look at the images below for quality and clarity, and see what you think. I've added in 900p as well, for completeness.

There's no doubt about it: At High settings, the game looks nicer. Colors and lighting are more vibrant. But this is countered by better edge compensation at the higher resolutions, making it easier to see what is in front of you at medium to long distances, as well as giving the game a smoother feel in general.

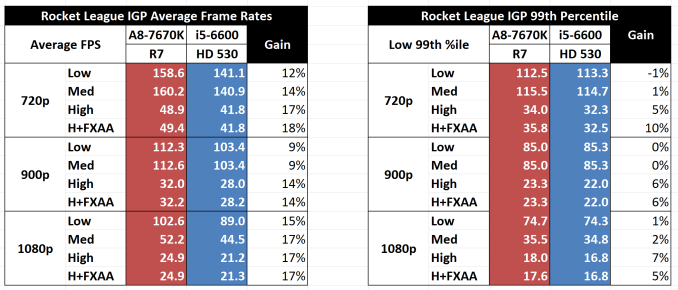

Because this is a new test, we are still testing it with other CPUs, and it'll make a full appearance next year in our 2016 benchmark update. But for now, we have an i5-6600 processor (one of Intel’s latest Skylake 65W parts for a future review) tested at all the resolution and graphics combinations. Here, we use both processors at their JEDEC memory supported frequencies (A8-7670K at DDR3-2133, i5-6600 at DDR4-2133).

On average, the A8-7670K in this comparison produces 14% better frame rates, with 720p and 1080p getting the best jumps for the more strenuous graphics settings. The 99th-percentile figures also favor the A8-7670K, this time by an average of 4%, but still in its favor when the graphics settings move from low to high.

For our 2016 CPU benchmark tests, these results suggest that 1280x720 at High or 1920x1080 at Medium will most likely be our CPU-focused benchmarks on integrated graphics going forward. If enough 4K monitors come my way, we can also add some 4K High comparisons for extreme graphics situations.

154 Comments

BurntMyBacon - Thursday, November 19, 2015 - link

@Ian Cutress: "It's never an issue of lack of interest or subversion, just procurement (and ensuring we can communicate with the manufacturer at the point of testing)."

[sarcasm]I thought Intel and all other computer hardware manufacturers were required by LAW to send their products to Anandtech for prelaunch approval where Supreme Tech Justice Cutress and his colleagues pronounce life or death on potential products. ;' ) [/sarcasm]

Seriously though, I'd say you are doing fine despite the real world procurement and scheduling issues getting in the way.

flabber - Wednesday, November 18, 2015 - link

I can't agree any more with the point that power consumption is the least of my concerns. While there is a significant difference between a 250W TDP CPU and a 50W TDP CPU, where one would have to factor in the cost of cooling and a PSU, 100W is quite manageable. A 500W PSU is more than adequate for just about any current system. However, I am aware that decreased power consumption is an objective in all consumer products and will be seen in upcoming computer components. (Ironically for mobile components, my 2009 Blackberry with a 1150mAh battery can still run for a couple days before I need to charge it.)

My rig is equipped with an A10-5800k (2012) and a year old R9-290X (2013). Everyday tasks, such as using a spreadsheet, word processor, citation management or occasional image editing, can't be improved in any noticeable way. With regard to gaming, I can't be bothered to upgrade the motherboard and CPU to a superior Intel alternative. A few more frames per second won't make a game with poor storyline any better, nor will an enjoyable game become any better.

Pissedoffyouth - Thursday, November 19, 2015 - link

If you have 5800 you should look at getting a kaveri for better performance and lower power consumption

Gadgety - Thursday, November 19, 2015 - link

"AMD's first talking point is, of course, price. AMD considers their processors very price-competitive"

No kidding, I got the A8-7600 for my kid and its integrated graphics is comparable to the i7 Iris Pro, where the i7 is 5x more expensive. So for 20% of the price we get to enjoy graphics galore. Put it on Asrock's A88X M-ITX motherboard and it outputs 4K cinema. No graphics card means it's compact so we use a tiny chassis, perfect HTPC, and useable for the type of light gaming the kids do.

Gadgety - Thursday, November 19, 2015 - link

@Ian Cutress The performance parity, and sometimes superiority, of the A8-7670k compared to the A10-7850k, and also to the A10-7870k, I guess could be attributable to driver improvements. Did you use the same drivers, or updated versions? If it's improved drivers, this would likely also improve across the APU-range.

Ian Cutress - Thursday, November 19, 2015 - link

Same drivers for each. I lock a set of drivers down every test-bed refresh, so in this case it would be 15.4 beta, which is getting a bit old now. Kaveri Refresh does have some minor internal improvements as well I imagine, internal bus frequencies perhaps. There's always a small amount of volatility in the benchmark, depending on what heat density or board issue you might have. Looking back, we haven't always used the same motherboard on the APUs just due to timing (but all A88X), and even though we do some overriding on power profiles it can be difficult to compensate for motherboard manufacturer non-user exposed firmware optimisations on the memory buses.

Come the next year test-bed refresh (with DX12 relevant titles hopefully), I'll be going back and redoing them all. That should clear out the cobwebs on the latest drivers and updates, providing a new base.

milli - Thursday, November 19, 2015 - link

Ian Cutress, how is the review of your grandparents' laptop coming along? :)

I'm waiting for that Carrizo review.

zlandar - Thursday, November 19, 2015 - link

I don't see the point of being so cheap that you are unwilling to spend more for a superior i3-5 and discrete video card. Why would you chain yourself to a dead-end cpu/gpu integrated combo and motherboard that isn't very good to start with?

Plenty of people have pointed out how well Intel's CPUs have held up since Sandy Bridge. I'm still using a 2600k and have upgraded my video card 3 times. If you are on a tight gaming budget it makes a lot more sense to buy 2nd-3rd tier last gen video cards coupled with a good cpu you don't need to upgrade.

BurntMyBacon - Thursday, November 19, 2015 - link

@zlandar "Why would you chain yourself to a dead-end cpu/gpu integrated combo and motherboard that isn't very good to start with?"

Aren't just about all laptops deadend with respect to CPU/GPU? (Particularly in the Carrizo price range)

Tons of laptops are sold without discrete GPUs and no option to upgrade. Why should this matter to a Carrizo review (clearly laptop in this request)?

Ian Cutress - Thursday, November 19, 2015 - link

Something special in the works. After SC15 finishes, I'll be digesting the mountain of data I have. :)