GeForce + Radeon: Previewing DirectX 12 Multi-Adapter with Ashes of the Singularity

by Ryan Smith on October 26, 2015 10:00 AM EST

Ashes GPU Performance: Single & Mixed 2012 GPUs

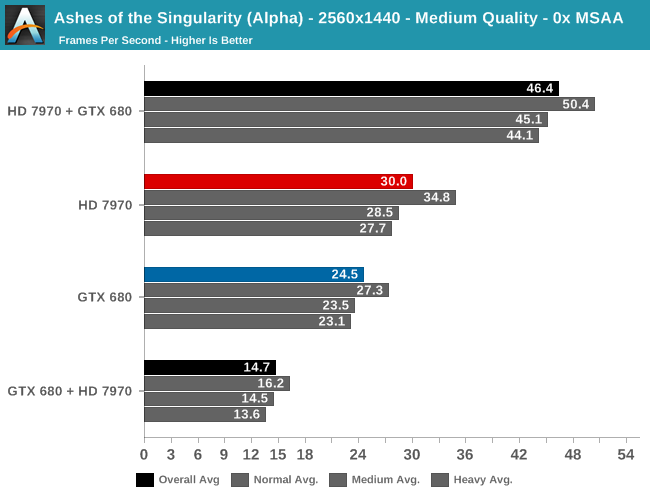

While Ashes’ multi-GPU support sees solid performance gains with current-generation high-end GPUs, we wanted to see if those gains would extend to older DirectX 12 GPUs. To that end we’ve put the GeForce GTX 680 and the Radeon HD 7970 through a similar test, running the Ashes benchmark at 2560x1440 with Medium image quality and no MSAA.

First off, unlike our high-end GPUs there’s a distinct performance difference between our AMD and NVIDIA cards. The Radeon HD 7970 performs 22% better here, just averaging 30fps to the GTX 680’s 24.5fps. So right off the bat we’re entering an AFR setup with a moderately unbalanced set of cards.

Once we do turn on AFR, two very different things happen. The GTX 680 + HD 7970 setup is an outright performance regression, with performance down 40% from the single GTX 680. On the other hand, the HD 7970 + GTX 680 setup sees an unexpectedly good performance gain from AFR, picking up a further 55% over the single HD 7970 to reach 46.4fps.
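As a quick sanity check on the percentages above, the arithmetic can be reproduced from the quoted frame rates. This is only a sketch: the variable and function names are illustrative, and the frame rate for the regressed GTX 680-led setup is derived from the quoted 40% figure rather than taken from a reported measurement.

```python
# Average frame rates quoted in the text (2560x1440, Medium quality, no MSAA).
HD7970_FPS = 30.0
GTX680_FPS = 24.5
HD7970_LEAD_AFR_FPS = 46.4   # HD 7970 leading, GTX 680 following
GTX680_LEAD_DROP = 0.40      # quoted regression with the GTX 680 leading


def percent_change(new: float, old: float) -> float:
    """Relative change of `new` versus `old`, in percent."""
    return (new - old) / old * 100.0


# Gap between the two single cards, and the AFR gain over the single HD 7970.
single_card_gap = percent_change(HD7970_FPS, GTX680_FPS)
afr_gain = percent_change(HD7970_LEAD_AFR_FPS, HD7970_FPS)

# Derived, not measured: frame rate implied by a 40% drop from the single GTX 680.
implied_regressed_fps = GTX680_FPS * (1.0 - GTX680_LEAD_DROP)

print(f"HD 7970 vs GTX 680 (single cards): {single_card_gap:+.1f}%")
print(f"HD 7970 + GTX 680 AFR vs single HD 7970: {afr_gain:+.1f}%")
print(f"Implied GTX 680 + HD 7970 AFR frame rate: {implied_regressed_fps:.1f} fps")
```

The first two values round to the 22% and 55% quoted in the text. The implied figure for the GTX 680-led pairing works out to under 15fps, which is what makes that ordering a clear regression rather than just a weak gain.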

As this test covers a smaller number of combinations, it’s not clear where the bottlenecks are, but it’s nonetheless very interesting how we get such widely different results depending on which card is in the lead. In the GTX 680 + HD 7970 setup, either the GTX 680 is a bad leader or the HD 7970 is a bad follower, and this leaves the setup spinning its proverbial wheels. By contrast, letting the HD 7970 lead and the GTX 680 follow sees a bigger performance gain than we would have expected for a moderately unbalanced setup with a pair of cards that were never known for their efficient PCIe data transfers. So long as you let the HD 7970 lead, at least in this case you could absolutely get away with a mixed GPU pairing of older GPUs.
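One way to put a number on "moderately unbalanced" is a deliberately simple AFR model. The model is an assumption of this sketch, not something the benchmark reports: frames alternate strictly 1:1 between the two cards, and there is no transfer or synchronization overhead. Under those assumptions the pair cannot run faster than twice the slower card. The function name is hypothetical.

```python
def ideal_afr_fps(fps_a: float, fps_b: float) -> float:
    """Upper bound for strict 1:1 alternate frame rendering.

    Assumption: each card renders every other frame, so the pair completes
    two frames in the time the slower card needs for one.
    """
    return 2.0 * min(fps_a, fps_b)


HD7970_FPS = 30.0      # single-card average quoted in the text
GTX680_FPS = 24.5      # single-card average quoted in the text
MEASURED_AFR = 46.4    # HD 7970 leading, GTX 680 following

ceiling = ideal_afr_fps(HD7970_FPS, GTX680_FPS)
efficiency = MEASURED_AFR / ceiling

print(f"Idealized AFR ceiling: {ceiling:.1f} fps")
print(f"Measured result as a share of that ceiling: {efficiency:.0%}")
```

Under this simplified model the HD 7970-led result lands close to the ceiling set by the slower GTX 680, which fits the observation that the gain is larger than expected. The model says nothing about why the reverse ordering regresses.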

180 Comments

IKeelU - Monday, October 26, 2015

We've come a hell of a long way since Voodoo SLI. Leaving it up to developers is most definitely a good thing, and I'm not just saying that as hindsight on the article. We'll always be better off not depending on a small cadre of developers in Nvidia/AMD's driver departments determining SLI performance optimizations. Based on what I'm reading here, the field should be much more open. I can't wait to see how different dev houses deal with these challenges.

lorribot - Monday, October 26, 2015

Generally speaking leaving it up to developers is a bad thing, you will end up with lots of fragmentation, patchy/incomplete implementation and a whole new level of instability, that is why DirectX came about in the first place. I just hope this doesn't break more than it can fix.

We need an old school 50% upgrade to the hardware capability to deliver 4K at reasonable price point, but I don't see that coming any time soon judging by the last 3 or 4 years of small incremental steps.

All of this is the industry recognising its inability to deliver hardware and wringing every last drop of performance from the existing equipment/nodes/architecture.

McDamon - Tuesday, October 27, 2015

Really? I'm a developer, so I'm biased, but to me, leaving it up to the developer is what drives the innovation in this space. DirectX, much like OpenGL, was conceived to homologate APIs and devices. Glide and such. In fact, as is obvious, both APIs have moved away from the fixed function pipeline to a programmable model to allow for developer flexibility, not hinder it. Sure, there will be challenges for the first few tries with the new model, but that's why companies hire smart people right?

CiccioB - Tuesday, October 27, 2015

Slow incremental steps during the last 3-4 years? You probably are speaking about AMD only, as nvidia has made great progress from GTX680 to GTX980Ti both in terms of performance and power consumption. All of this on the same PP.

loguerto - Sunday, November 1, 2015

You are hugely underestimating the GCN architecture, nvidia might have had a jump from kepler to maxwell in terms of efficiency (in part by cutting down the double precision performance), but still with the same slightly improved GCN architecture amd competes in dx11 and often outperforms the maxwells in the latest dx12 benchmarks. And when I say that I invite everyone to look at the entire GPU lineup and not only the 980ti vs fury x benchmarks.

IKeelU - Tuesday, October 27, 2015

Your first statement is pretty entirely wrong: a) we already have fragmentation in the form of different hardware manufacturers and driver streams, b) common solutions will be created in the form of licensed engines, c) the people currently solving these problems *are* developers, they just work for Nvidia and AMD, instead of those directly affected by the quality of end-product (game companies). Your contention that solutions should be closed off only really works when there's a clearly dominant and common solution to the problem. As we've learned over the last 15 years, there simply isn't. Every game release triggers a barrage of optimizations from the various driver teams. That code is totally out of scope - it should be managed by the concerned game company, not Nvidia/AMD/Intel.

callous - Monday, October 26, 2015

why not test with intel APU + fury? It's more of a mainstream configuration than 2 video cards

Refuge - Tuesday, October 27, 2015

I believe it is too large of a performance gap, it would just hamstring the Fury.

nagi603 - Monday, October 26, 2015

nVidia already forcefully disabled using an nvidia card as a PhysX add-in card with an AMD main GPU. When will they try to disable this extra feature?

silverblue - Tuesday, October 27, 2015

They may already have; then again, there could be a legitimate reason for the less than stellar performance with an AMD card as the slave.