The IBM POWER8 Review: Challenging the Intel Xeon

by Johan De Gelas on November 6, 2015 8:00 AM EST

Posted in: IT Computing, CPUs, Enterprise, Enterprise CPUs, IBM, POWER, POWER8

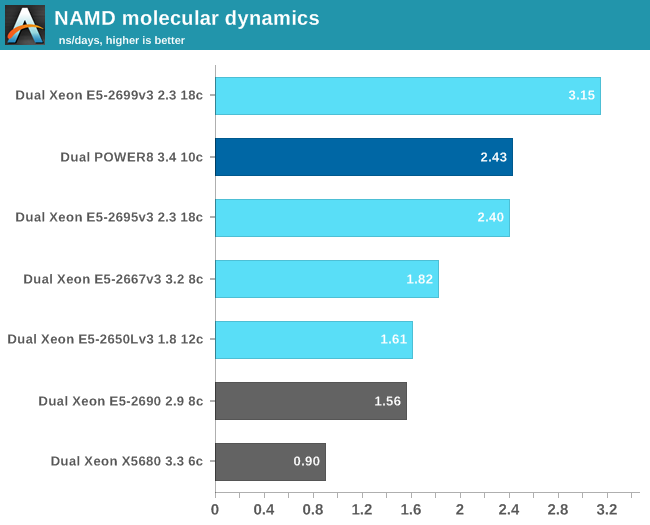

Floating Point: NAMD

After quite a bit of trouble, we managed to port a real floating point application to our POWER8 system: NAMD.

Developed by the Theoretical and Computational Biophysics Group at the University of Illinois Urbana-Champaign, NAMD is a set of parallel molecular dynamics codes for extreme parallelization on thousands of cores. NAMD is also part of SPEC CPU2006 FP.

We used the "NAMD_2.10_Linux-x86_64-multicore" binary for our Xeons. Since there was no LE Linux version for POWER8, we built our own. We got it working with the g++ compiler and used these settings:

-O3 -mcpu=power8 -ftree-vectorize -mpopcntd

These settings should push GCC to generate as much VSX (Vector Scalar eXtension) code as possible. We used the most popular benchmark load, apoa1 (Apolipoprotein A1). The results are expressed in simulated nanoseconds per wall-clock day.
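To make the metric concrete, here is a minimal sketch of how "simulated nanoseconds per wall-clock day" follows from the per-step timing that NAMD logs typically report (seconds per step, or days per nanosecond). The function names and the 1 fs default timestep are assumptions for illustration; the timestep actually used depends on the benchmark configuration, and the sample values are hypothetical rather than taken from this review.

```python
SECONDS_PER_DAY = 86_400
FS_PER_NS = 1_000_000  # femtoseconds in one nanosecond


def ns_per_day(seconds_per_step: float, timestep_fs: float = 1.0) -> float:
    """Convert wall-clock seconds per MD step into simulated ns per day.

    timestep_fs is the simulated time covered by one step, in femtoseconds.
    The 1.0 fs default is an assumption, not a value stated in the review.
    """
    if seconds_per_step <= 0 or timestep_fs <= 0:
        raise ValueError("seconds_per_step and timestep_fs must be positive")
    steps_per_day = SECONDS_PER_DAY / seconds_per_step
    return steps_per_day * timestep_fs / FS_PER_NS


def ns_per_day_from_days_per_ns(days_per_ns: float) -> float:
    """The two common ways of stating the result are reciprocals."""
    if days_per_ns <= 0:
        raise ValueError("days_per_ns must be positive")
    return 1.0 / days_per_ns


if __name__ == "__main__":
    # Hypothetical timing: 0.0864 s per step at a 1 fs timestep.
    print(f"{ns_per_day(0.0864):.2f} ns/day")
```

Higher is better for this metric: halving the time per step, or doubling the timestep at the same cost per step, doubles the score.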

To put this in perspective: an early Xeon Phi (7120 1.2 GHz) scores about 4.4, while a top NVIDIA GPU with CUDA-based NAMD can score 20 or more. So it is clear that this kind of software will be run mostly on GPU-accelerated servers.

But it is nonetheless a real-world HPC benchmark. The IBM POWER8 is once again on par with the Xeon E5-2695v3. The NAMD binary does not seem to leverage AVX2, as the Xeon E5-2667 (16 cores) does not outperform the Xeon E5-2690 (AVX) by a large margin.

146 Comments


Kevin G - Saturday, November 7, 2015

If all you do is just mount the network volume to use the data, then likely nothing at all. While binaries do have to be modified, the file systems themselves are written to store data in a single consistent manner. If you're wondering more if there would be some overhead in translating from LE to BE to work in memory, conceptually the answer is yes, but I'd predict it would be rather small and dwarfed by the time to transfer data over a network. I'd be curious to see the results.

Ultimately I'd be more concerned with kernel modules for various peripherals when switching between LE and BE versions. Considering that POWER has been BE for a few generations and you did your initial testing using LE, availability shouldn't be an issue. You've been using the version which should have had the most problems in this regard.

spikebike - Friday, November 6, 2015

So basically POWER is somewhat competitive with Intel's WORST price/perf chips, which also happen to have the worst memory bandwidth/CPU. Seems nowhere close for the more reasonable $400-$650 Xeons like the D-1520/1540 or the E5-2620 and E5-2630. Sure, IBM has better memory bandwidth than the worst Intels, but if you want more memory bandwidth per $ or per core then get the E5-2620.

JohanAnandtech - Saturday, November 7, 2015

It is definitely not an alternative for applications where performance/watt is important. As you mentioned, Intel offers a much better range of SKUs. But for transactional databases and data mining (traditional or unstructured), I see the POWER8 as a very potent challenger. When you are handling a few hundred gigabytes of data, you want your memory to be reliable. Intel will then steer you to the E7 range, and that is where the POWER8 can make a difference: filling the niche between E5 and E7.

nils_ - Wednesday, November 11, 2015

Especially if you're running software that doesn't easily scale out very well, these are very competitive. And nowadays even MySQL will scale up nicely to many, many cores.

Gigaplex - Friday, November 6, 2015

"Less important, but still significant is the fact that IBM uses SAS disks, which increase the cost of the storage system, especially if you want lots of them."

The Dell servers I've used had SAS controllers, and every SAS controller I've dealt with supported using SATA drives. I'm pretty sure SATA compatibility is in the SAS specification. In fact, the Dell R730 quoted in this review supports SAS drives. There shouldn't be anything stopping you from using the same drives in both servers.

JohanAnandtech - Saturday, November 7, 2015

You are absolutely right about SATA drives being compatible with a SAS controller. However, afaik IBM gives you only the choice between their own rather expensive SAS drives and SSDs. And maybe I have overlooked it, but in general Dell only lets you choose between SATA and SSDs. And this has been the trend for a while: SATA if you want to keep costs low, SSDs for everything else.

TomWomack - Sunday, November 8, 2015

And mounting a storage server made out of commodity hardware over a couple of lanes of 10Gbit Ethernet if you don't want to pay the exotic-hardware-supplier's markup on disc.

Gunbuster - Friday, November 6, 2015

SAP and IBM AIX servers... I guess if you want to blow out your entire IT budget in one easy decision...

Jake Hamby - Friday, November 6, 2015

I forgot to mention: VMX is better known as AltiVec (it's also called "Velocity Engine" by Apple). It's a very nice SIMD extension that was supported by Apple's G4 (Motorola/Freescale 7400/7450) and G5 (IBM PPC 970) Macs, as well as the PPC game consoles.

It would be interesting to compare the Linux VMX crypto acceleration to code written to use the newer native AES & other instructions. In x86 terms, it'd be like SSE-optimized AES vs. the AES-NI instructions.

Oxford Guy - Saturday, November 7, 2015

I had a dual 450 MHz G4 system and AltiVec was quite amazing in iTunes when doing encoding. Between the second processor and AltiVec, putting things into ALAC was very fast (in comparison with other machines at the time like the G3 and the AMD machines I had).