The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

Generational Tests on the i7-6700K: Legacy, Office and Web Benchmarks

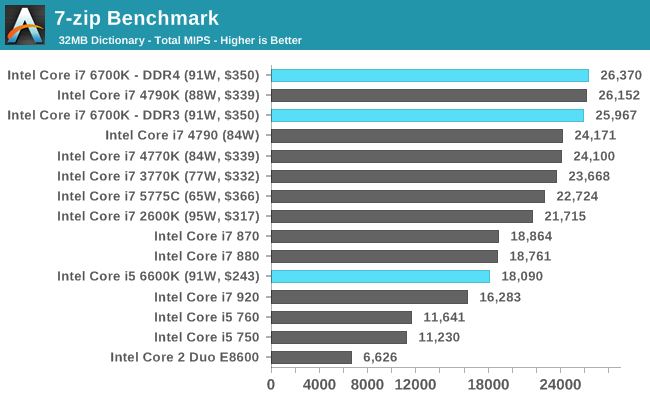

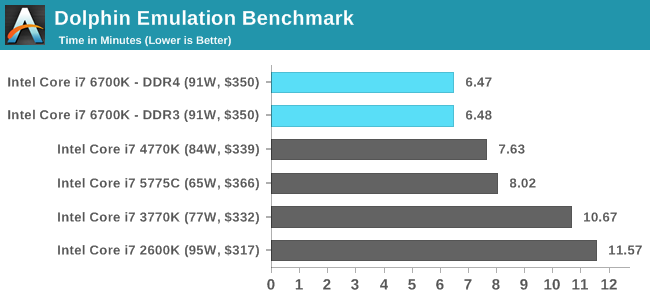

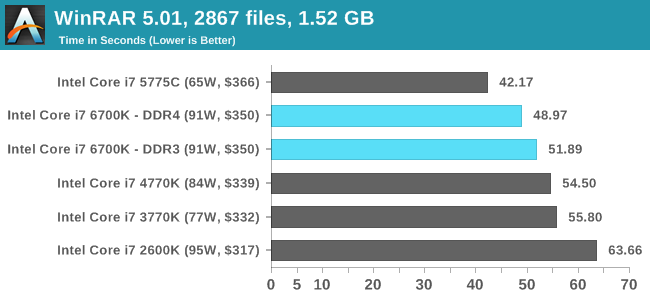

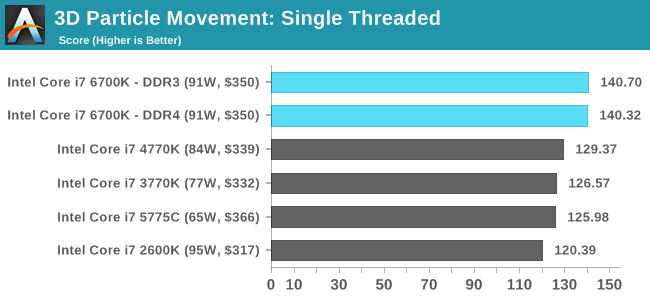

Moving on to the generational tests: as in our last Broadwell review, I want to dedicate a few pages to looking specifically at how stock-speed processors have performed with each generation Intel has released. For this, each CPU is left at stock, DRAM is set to DDR3-1600 (or DDR4-2133 for Skylake in DDR4 mode), and we run the full line of CPU tests at our disposal.

Legacy

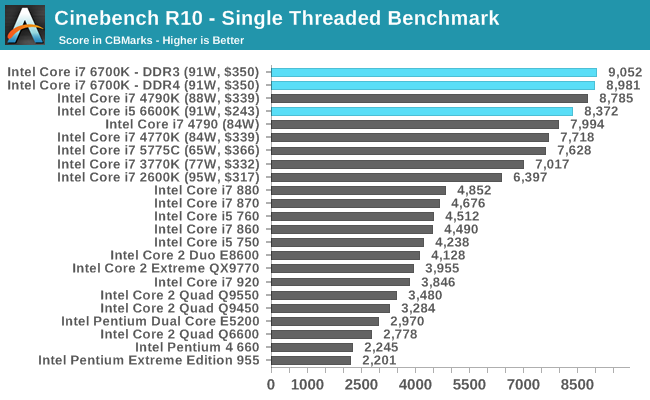

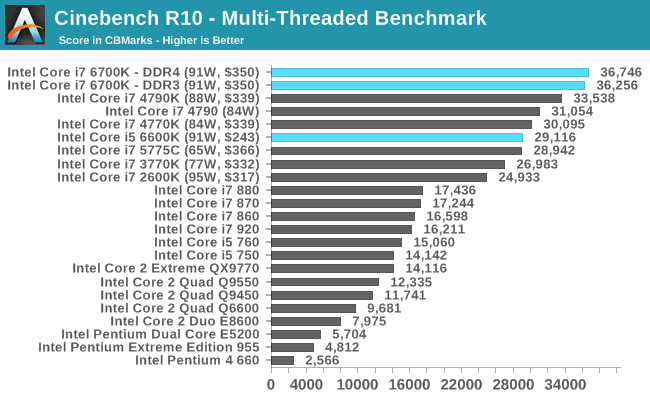

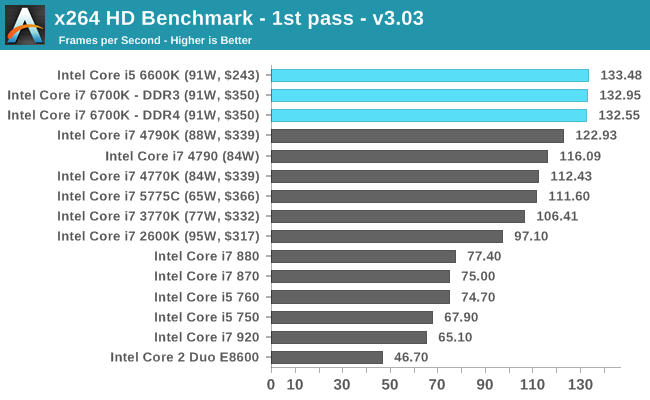

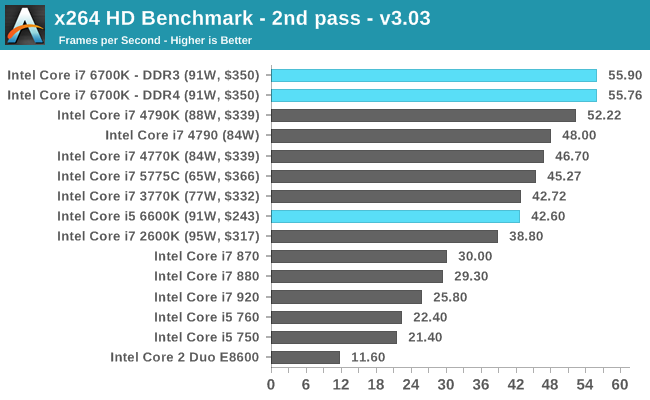

Some users will notice that our benchmark database, Bench, keeps data on the CPUs we’ve tested going back over a decade, along with the benchmarks we were running back then. A few of these benchmarks, such as Cinebench R10, we do still run on the new CPUs as well, although for the sake of brevity and relevance we tend not to put this data in the review. Here are a few of those numbers too.

Even with the older tests that might not include any new instruction sets, the Skylake CPUs sit on top of the stack.

Office Performance

The dynamics of CPU Turbo modes, both Intel and AMD, can cause concern in environments with a variably threaded workload. There is also the added issue of keeping the motherboard consistent, since motherboard manufacturers may add their own boosting technologies over the ones that Intel would prefer they used. In order to remain consistent, we implement a single OS-level high performance mode on all the CPUs we test, which should override any motherboard manufacturer performance mode.

Dolphin Benchmark

Many emulators are bound by single-threaded CPU performance, and general reports tended to suggest that Haswell provided a significant boost to emulator performance. This benchmark runs a Wii program that raytraces a complex 3D scene inside the Dolphin Wii emulator. Performance on this benchmark is a good proxy for the speed of Dolphin CPU emulation, which is an intensive single-core task using most aspects of a CPU. Results are given in minutes, where the Wii itself scores 17.53 minutes.

WinRAR 5.0.1

Our WinRAR test from 2013 has been updated to the version of WinRAR that was current at the start of 2014. We compress a set of 2867 files across 320 folders totalling 1.52 GB in size: 95% of these files are small, typical website files, and the rest (90% of the total size) are short 30-second 720p videos.
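To give a feel for how lopsided that file set is, here is a rough back-of-the-envelope breakdown in Python. It only uses the figures quoted above; the decimal unit conversion (1 GB = 1000 MB) and the rounding of the file counts are my assumptions, so treat the outputs as approximate rather than as the exact composition of the test set.

```python
# Figures quoted in the text above.
TOTAL_FILES = 2867
TOTAL_SIZE_GB = 1.52
SMALL_FILE_SHARE = 0.95   # fraction of the file count that is small website files
VIDEO_SIZE_SHARE = 0.90   # fraction of the total size taken up by the videos


def workload_breakdown(total_files=TOTAL_FILES, total_gb=TOTAL_SIZE_GB,
                       small_share=SMALL_FILE_SHARE,
                       video_size_share=VIDEO_SIZE_SHARE):
    """Estimate file counts and average file sizes for the two file classes.

    Assumes decimal units (1 GB = 1000 MB = 1,000,000 KB).
    """
    small_files = round(total_files * small_share)
    video_files = total_files - small_files
    video_gb = total_gb * video_size_share
    small_gb = total_gb - video_gb
    return {
        "small_files": small_files,
        "video_files": video_files,
        "avg_video_mb": video_gb * 1000 / video_files,
        "avg_small_kb": small_gb * 1_000_000 / small_files,
    }


if __name__ == "__main__":
    for name, value in workload_breakdown().items():
        print(f"{name}: {value:.1f}")
```

Under those assumptions the set works out to roughly 2,700 small files averaging a few tens of kilobytes each, against roughly 140 videos of a little under 10 MB each, so the test mixes many tiny files with a handful of large ones.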

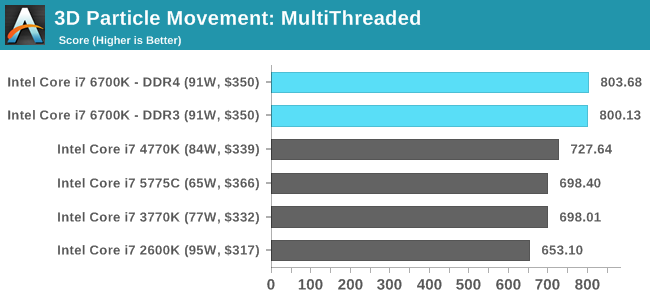

3D Particle Movement

3DPM is a self-penned benchmark, taking basic 3D movement algorithms used in Brownian Motion simulations and testing them for speed. High floating point performance, MHz and IPC win in the single thread version, whereas the multithread version has to handle the threads and loves more cores.
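For readers unfamiliar with this kind of workload, the following is a minimal Python sketch of a 3D random-walk kernel of the general sort used in Brownian motion simulations. It is not the 3DPM source: the function names, the unit-length step, and the uniform-direction sampling method are all illustrative assumptions.

```python
import math
import random


def random_unit_step(rng):
    """Return a step of length 1 in a uniformly random 3D direction.

    Sampling z uniformly in [-1, 1] and the azimuth uniformly in [0, 2*pi)
    gives a uniform distribution over the unit sphere.
    """
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(phi), r * math.sin(phi), z


def walk_particles(n_particles, n_steps, seed=0):
    """Move each particle n_steps times from the origin; return final positions."""
    rng = random.Random(seed)
    positions = []
    for _ in range(n_particles):
        x = y = z = 0.0
        for _ in range(n_steps):
            dx, dy, dz = random_unit_step(rng)
            x += dx
            y += dy
            z += dz
        positions.append((x, y, z))
    return positions


if __name__ == "__main__":
    final = walk_particles(n_particles=1000, n_steps=100, seed=42)
    mean_dist = sum(math.sqrt(x * x + y * y + z * z) for x, y, z in final) / len(final)
    print(f"mean distance from origin: {mean_dist:.2f}")
```

The inner loop is almost entirely floating point arithmetic, which is why clock speed and IPC matter in a single-threaded run. Each particle is independent of the others, so a multithreaded variant can hand a slice of the particles to each thread.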

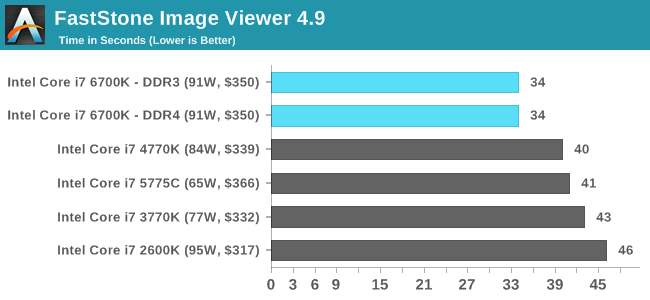

FastStone Image Viewer 4.9

FastStone is the program I use to perform quick or bulk actions on images, such as resizing, adjusting for color and cropping. In our test we take a series of 170 images in various sizes and formats and convert them all into 640x480 .gif files, maintaining the aspect ratio. FastStone does not use multithreading for this test, and results are given in seconds.
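As a small illustration of what "maintaining the aspect ratio" means for a 640x480 target, the sketch below computes the output dimensions for a given source image. It assumes the image is scaled to fit inside a 640x480 bounding box and that smaller images are scaled up as well; FastStone's actual behavior and rounding may differ, and the function name is hypothetical.

```python
def fit_within(width, height, max_width=640, max_height=480):
    """Scale (width, height) to fit inside the bounding box, keeping aspect ratio.

    Assumption: a single scale factor is applied to both axes, chosen so that
    neither output dimension exceeds the box.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    scale = min(max_width / width, max_height / height)
    # Guard against a zero-sized axis for extremely thin images.
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    for size in [(1920, 1080), (1000, 2000), (640, 480)]:
        print(size, "->", fit_within(*size))
```

A 16:9 source is limited by the width of the box and a tall portrait source by its height, so most outputs are smaller than 640x480 on one axis.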

Web Benchmarks

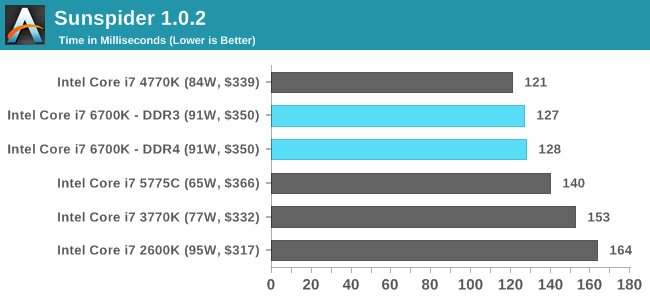

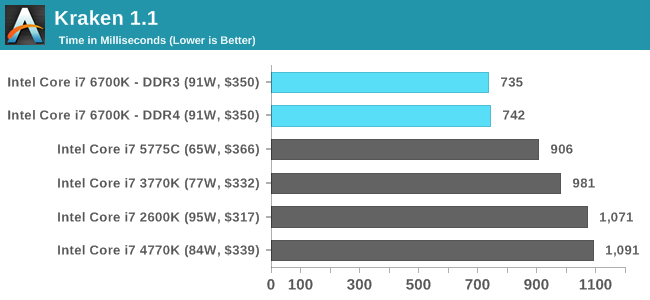

On lower-end processors, general usability is a big factor in the experience, especially as we move into the HTML5 era of web browsing. For our web benchmarks, we take four well-known tests with Chrome 35 as a consistent browser.

Sunspider 1.0.2

Mozilla Kraken 1.1

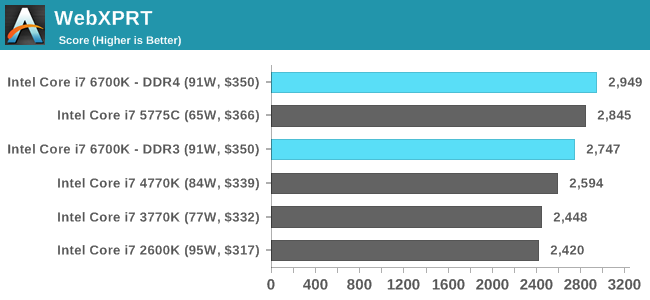

WebXPRT

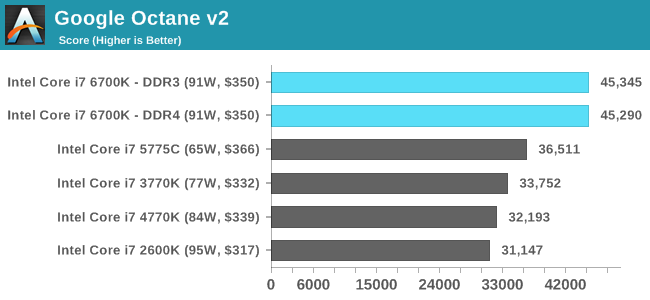

Google Octane v2

477 Comments

jwcalla - Wednesday, August 5, 2015

I kind of agree. I think I'm done with paying for a GPU I'm never going to use.

jardows2 - Wednesday, August 5, 2015

If you don't overclock, buy a Xeon E3. i7 performance at i5 price, without integrated GPU.

freeskier93 - Wednesday, August 5, 2015

Except the GPU is still there, it's just disabled. So yes, the E3 is a great CPU for the price (I have one) but you're still paying for the GPU because the silicon is still there, you're just not paying as much.

MrSpadge - Wednesday, August 5, 2015

Dude, an Intel CPU does not get cheaper if it's cheaper to produce. Their prices are only weakly linked to the production costs.

AnnonymousCoward - Saturday, August 8, 2015

That is such a good point. The iGPU might cost Intel something like $1.

Vlad_Da_Great - Wednesday, August 5, 2015

Haha, nobody cares about you @jjj. Integrating GPU with CPU saves money not to mention space and energy. Instead of paying $200 for the CPU and buy dGPU for another 200-300, you get them both on the same die. OEM's love that. If you don't want to use them just disable the GPU and buy 200W from AMD/NVDA. And it appears now the System memory will come on the CPU silicon as well. INTC wants to exterminate everything, even the cockroaches in your crib.

Flunk - Wednesday, August 5, 2015

Your generational tests look like they could have come from different chips in the same series. Intel isn't giving us much reason to want to upgrade. They could have at least put out a 8-core consumer chip. It isn't even that much more die space to do so.

BrokenCrayons - Wednesday, August 5, 2015

With Skylake's Camera Pipeline, I should be able to apply a sepia filter to my selfies faster than ever before while saving precious electricity that will let me purchase a little more black eyeliner and those skull print leg warmers I've always wanted. Of course, if it doesn't, I'm going to be really upset with them and refuse to run anything more modern than a 1Giga-Pro VIA C3 at 650 MHz because it's the only CPU on the market that is gothic enough pending the lack of much needed sepia support in Skylake.

name99 - Wednesday, August 5, 2015

And BrokenCrayons wins the Daredevil award for most substantial lack of vision regarding how computers can be used in the future.

For augmented reality to become a thing we need to, you know, actually be able to AUGMENT the image coming in through the camera...

Today on the desktop (where it can be used to prototype algorithms, and for Surface type devices). Tomorrow in Atom, and (Intel hopes), giving them some sort of edge over ARM (though good luck with that --- I expect by the time this actually hits Atom, every major ARM vendor will have something comparable but superior).

Beyond things like AR, Apple TODAY uses CoreImage in a variety of places to handle their UI (eg the Blur and Vibrancy effects in Yosemite). I expect they will be very happy to use new GPU extensions that do this with lower power, and that same lower power will extend to all users of the CI APIs.

Without knowing EXACTLY what Camera Pipeline is providing, we're in no position to judge.

BrokenCrayons - Friday, August 7, 2015

I was joking.