The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync vs. G-SYNC Performance

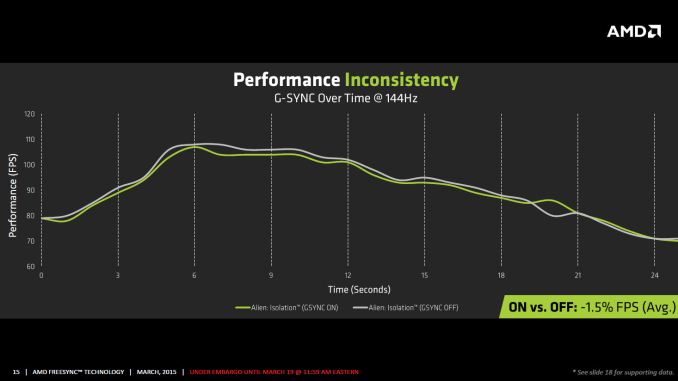

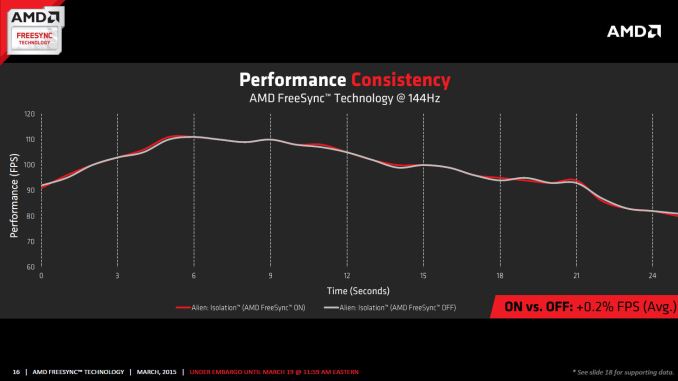

One item that piqued our interest during AMD’s presentation was a claim that there’s a performance hit with G-SYNC but none with FreeSync. NVIDIA has said as much in the past, though they also noted at the time that they were "working on eliminating the polling entirely", so things may have changed. Even so, the difference was generally quite small – less than 3%, or basically not something you would notice without capturing frame rates. AMD did some testing, however, and presented the following two slides:

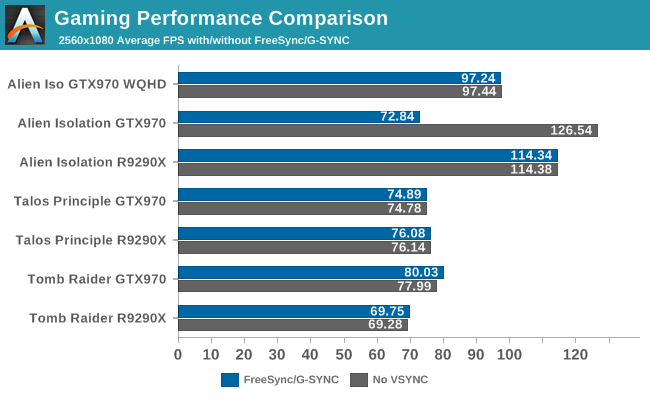

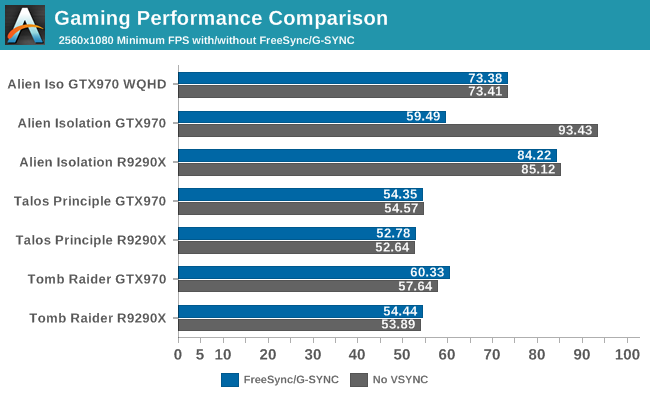

It’s probably safe to say that AMD is splitting hairs when they show a 1.5% performance drop in one specific scenario compared to a 0.2% performance gain, but we wanted to see if we could corroborate their findings. Having tested plenty of games, we already know that most games – even those with built-in benchmarks that tend to be very consistent – will have minor differences between benchmark runs. So we picked three games with deterministic benchmarks and ran with and without G-SYNC/FreeSync three times. The games we selected are Alien Isolation, The Talos Principle, and Tomb Raider. Here are the average and minimum frame rates from three runs:

Except for a glitch with testing Alien Isolation using a custom resolution, our results basically don’t show much of a difference between enabling/disabling G-SYNC/FreeSync – and that’s what we want to see. While AMD's slides showed a performance drop with Alien Isolation using G-SYNC, we weren’t able to reproduce that in our testing; in fact, we even showed a measurable 2.5% performance increase with G-SYNC and Tomb Raider. But again let’s be clear: 2.5% is not something you’ll notice in practice. FreeSync meanwhile shows results that are well within the margin of error.
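For readers who want to run the same kind of comparison on their own logs, here is a minimal Python sketch of the arithmetic involved: average and minimum frame rates across three runs, the percent change with variable refresh enabled, and a check of whether that change falls inside the run-to-run spread. This is not the tooling used for the results above. The function names are hypothetical, the frame rate samples are made-up placeholders rather than our measurements, and treating the spread of per-run averages as the "margin of error" is a simplifying assumption.

```python
from statistics import mean


def summarize(runs):
    """Return (average FPS, minimum FPS) across several benchmark runs.

    Each run is a list of frame rate samples from one pass of the benchmark.
    """
    avg = mean(mean(run) for run in runs)
    low = min(min(run) for run in runs)
    return avg, low


def percent_change(baseline, value):
    """Percent difference of value relative to baseline."""
    return (value - baseline) / baseline * 100.0


def run_to_run_spread(runs):
    """Spread of the per-run averages, as a percentage of their mean."""
    avgs = [mean(run) for run in runs]
    return (max(avgs) - min(avgs)) / mean(avgs) * 100.0


# Hypothetical placeholder samples, NOT measured data: three runs per setting.
vrr_off = [[60.0, 62.0, 58.0], [61.0, 63.0, 59.0], [59.0, 61.0, 57.0]]
vrr_on = [[60.5, 62.5, 58.5], [61.0, 63.5, 59.0], [59.5, 61.0, 57.5]]

off_avg, off_min = summarize(vrr_off)
on_avg, on_min = summarize(vrr_on)

delta = percent_change(off_avg, on_avg)
noise = max(run_to_run_spread(vrr_off), run_to_run_spread(vrr_on))
within_noise = abs(delta) <= noise

print(f"VRR off: avg {off_avg:.1f} FPS, min {off_min:.1f} FPS")
print(f"VRR on:  avg {on_avg:.1f} FPS, min {on_min:.1f} FPS")
print(f"Change: {delta:+.2f}% (run-to-run spread {noise:.2f}%)")
print("Within run-to-run variation" if within_noise else "Outside run-to-run variation")
```

With only three runs per setting, a difference smaller than the spread between runs of the same setting can't be distinguished from noise, which is why sub-1% deltas in either direction shouldn't be read as a real gain or loss.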

What about that custom resolution problem on G-SYNC? We used the ASUS ROG Swift with the GTX 970, and we thought it might be useful to run the same resolution as the LG 34UM67 (2560x1080). Unfortunately, that didn’t work so well with Alien Isolation – the frame rates plummeted with G-SYNC enabled for some reason. Tomb Raider had a similar issue at first, but when we created additional custom resolutions with multiple refresh rates (60/85/100/120/144 Hz) the problem went away; we couldn't ever get Alien Isolation to run well with G-SYNC using our custom resolution, however. We’ve notified NVIDIA of the glitch, but note that when we tested Alien Isolation at the native WQHD setting the performance was virtually identical, so this only seems to affect performance with custom resolutions and it is also game specific.

For those interested in a more detailed graph of the frame rates of the three runs (six total per game and setting, three with and three without G-SYNC/FreeSync), we’ve created a gallery of the frame rates over time. There’s so much overlap that mostly the top line is visible, but that just proves the point: there’s little difference other than the usual minor variations between benchmark runs. And in one of the games, Tomb Raider, even using the same settings shows a fair amount of variation between runs, though the average FPS is pretty consistent.

350 Comments

JarredWalton - Thursday, March 19, 2015 - link

Try counting again: LG 29", LG 34", BenQ 27", Acer 27" -- that's four. Thanks for playing. And the Samsung displays are announced and coming out later this month or early next. For NVIDIA, there are six displays available, and one coming next month (though I guess it's available overseas). I'm not aware of additional G-SYNC displays that have been announced, so there's our six/seven. I guess maybe we can count the early moddable LCDs from ASUS (and BenQ?) and call it 8/9 if you really want to stretch things.

I'm not saying G-SYNC is bad, but the proprietary nature and price are definitely not benefits for the consumer. FreeSync may not be technically superior in every way (or at least, the individual implementations in each LCD may not be as good), but open and less expensive frequently wins out over closed and more expensive.

chizow - Friday, March 20, 2015 - link

@Jarred, thanks for playing, but you're still wrong. There's 8 G-Sync panels on the market, and even adding the 1 for AMD that's still double, so how that is "nearly caught up" is certainly an interesting lens.

Nvidia also has panels in the works, including 2 new, major breakthroughs like the Acer 1440p IPS, 1st 144Hz, 1440p, IPS VRR panel and the Asus ROG Swift 4K IPS, 1st 4K IPS VRR monitor. So yes, while AMD is busy "almost catching up" with low end panels, Nvidia and their partners are continuing to pioneer the tech.

As for FreeSync bringing up the low end, I personally think it would be great if Nvidia adopted AdaptiveSync for their low end solutions and continued to support G-Sync as their premium solution. It would be great for the overwhelming majority of the market that owns Nvidia already, and would be one less reason for anyone to buy a Radeon card.

TheJian - Sunday, March 22, 2015 - link

You sure have a lot of excuses. This is beta 1.0, it's the lcd's fault (pcper didn't think so), assumption that open/free (this isn't free, $50 by your own account for freesync, which is the same as $40-60 for the gsync module right?, you even admit they're hiking prices at the vendor side for $100+) is frequently the winner. Ummm, tell that to CUDA and NV's generally more expensive cards. There is a reason they have pricing power (they are better), and own 70% discrete and ~75% workstation market. I digress...

anubis44 - Tuesday, March 24, 2015 - link

@chizow:"I'll bet on the market leader that holds a commanding share of the dGPU market, consistently provides the best graphics cards, great support and features, and isn't riddled with billions in debt with a gloomy financial outlook."

You mean you'll bet on the crooked, corrupt, anti-competitive, money-grubbing company that doesn't compensate their customers when they rip them off (bumpgate), and has no qualms about selling them a bill of goods (GTX970 has 4GB ram! Well, 3.5GB of 'normal' speed ram, and .5GB of much slower, shitty ram.), likes to pay off game-makers to throw in trivial nVidia proprietary special effects (Batman franchise and PhysX, I'm looking right at you)? That company? Ok, you keep supporting the rip-off GPU maker, and see how this all ends for you.

chizow - Tuesday, March 24, 2015 - link

@Anubis44: Yeah again, I don't bother with any of that noise. The GTX 970 I bought for my wife in Dec had no adverse impact from the paper spec change made a few months ago, it is still the same fantastic value and perf it was the day it launched.

But yes I am sure ignoramuses like yourself are quick to dismiss all the deceptive and downright deceitful things AMD has said in the past about FreeSync, now that we know it's not really Free, can't be implemented with a firmware flash, does in fact require additional hardware, and doesn't even work with many of AMD's own GPUs. And how about CrossFireX? How long did AMD steal money from end-users like yourself on a solution that was flawed and broken for years on end, even denying there was a problem until Nvidia and the press exposed it with that entire runtframe FCAT fiasco?

And bumpgate? LMAO. AMD fanboys need to be careful who they point the finger at, especially in the case of AMD there's usually 4 more fingers pointed back at them. How about that Llano demand overstatement lawsuit still ongoing that specifically names most of AMD's exec board, including Read? How about that Apple extended warranty and class action lawsuit regarding the same package/bump issues on AMD's MacBook GPUs?

LOL it's funny because idiots like you think "money-grubbing" is some pejorative and greedy companies are inherently evil, but then you look at AMD's financial woes and you understand they can only attract the kind of cheap, ignorant and obtusely stubborn customers LIKE YOU who won't even spend top dollar on their comparably low-end offerings. Then you wonder why AMD is always in a loss position, bleeding money from every orifice, saddled in debt. Because you're waiting for that R9 290 to have a MIR and drop from $208.42 to $199.97 before you crack that dusty wallet open and fork out your hard-earned money.

And when there is actually a problem with AMD product, you would rather make excuses for them and sweep those problems under the rug, rather than demand better product!

So yes, in the end, you and AMD deserve one another, for as long as it lasts anyways.

Yojimbo - Thursday, March 19, 2015 - link

HD-DVD was technically superior and higher cost? It seems Blu-ray/HD-DVD is a counterexample to what you are saying, but you include it in the list to your favor. Laserdisc couldn't record whereas VCRs could. Minidisc was smaller and offered recording, but CD-R came soon after and then all it had was the smaller size. Finally MP3 players came along and did away with it.

There's another difference in this instance, though, which doesn't apply to any of those situations that I am aware of (other than minidisc): G-Sync/FreeSync are linked to an already installed user base of requisite products. (Minidisc was going up against CD libraries, although people could copy those. In any case, minidisc wasn't successful and was going AGAINST an installed user base.) NVIDIA has a dominant position in the installed GPU base, which is probably exactly the reason that NVIDIA chose to close off G-Sync and the "free" ended up being in FreeSync.

Assuming variable refresh catches on, if after some time G-Sync monitors are still significantly more expensive than FreeSync ones, it could become a problem for NVIDIA and they may have to either work to reduce the price or support FreeSync.

chizow - Thursday, March 19, 2015 - link

Uh, HD-DVD was the open standard there guy, and it lost to the proprietary one: Blu-Ray. But there's plenty of other instances of proprietary winning out and dominating, let's not forget Windows vs. Linux, DX vs. OpenGL, CUDA vs. OpenCL, list goes on and on.

Fact remains, people will pay more for the better product, and better means better results, better support. I think Nvidia has shown time and again, that's where it beats AMD, and their customers are willing to pay more for it.

See: Broken Day 15 CF FreeSync drivers as exhibit A.

at80eighty - Thursday, March 19, 2015 - link

Keep paddling away, son. The ship isn't sinking at all

chizow - Thursday, March 19, 2015 - link

If you buy a FreeSync monitor you will get 2-3 paddles for every 1 on a G-Sync panel. That will certainly help you pedal faster.

http://www.pcper.com/image/view/54234?return=node%...

at80eighty - Friday, March 20, 2015 - link

oh hey great example - no ghosting there at all. brilliant!