Intel SSD 750 PCIe SSD Review: NVMe for the Client

by Kristian Vättö on April 2, 2015 12:00 PM EST

Random Read Performance

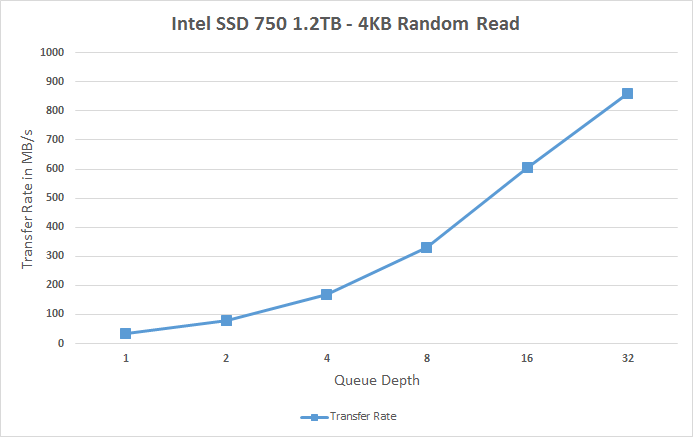

One of the major changes in our 2015 test suite is the synthetic Iometer tests we run. In the past we tested just one or two queue depths, but real world workloads always contain a mix of different queue depths, as shown by our Storage Bench traces. To get the full scope of performance, I'm now testing various queue depths starting from one and going all the way up to 32. I'm not testing every single queue depth, only enough of them to show how the throughput scales with the queue depth. I'm using exponential scaling, meaning that the tested queue depths increase in powers of two (i.e. 1, 2, 4, 8...).

Read tests are conducted on a full drive because that is the only way to ensure that the results are valid (testing with an empty drive can substantially inflate the results and in reality the data you are reading is always valid rather than full of zeros). Each queue depth is tested for three minutes and there is no idle time between the tests.
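As a rough illustration of the sweep described above, the Python sketch below builds the list of queue depths (powers of two up to 32) and the back-to-back three-minute time windows. The function name `build_sweep` and its parameters are assumptions made for this example; they are not part of Iometer or of the actual test configuration.

```python
def build_sweep(max_qd=32, seconds_per_qd=180):
    """Return (queue_depth, start_second, end_second) for each step.

    Queue depths double from 1 up to max_qd, and each step starts
    immediately after the previous one ends (no idle time).
    """
    plan = []
    qd = 1
    start = 0
    while qd <= max_qd:
        end = start + seconds_per_qd
        plan.append((qd, start, end))
        start = end
        qd *= 2  # exponential scaling: 1, 2, 4, 8, ...
    return plan


for qd, start, end in build_sweep():
    print(f"QD{qd}: {start}s to {end}s")
```

With the defaults this yields six steps (QD1 through QD32), so one full sweep takes 18 minutes per drive.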

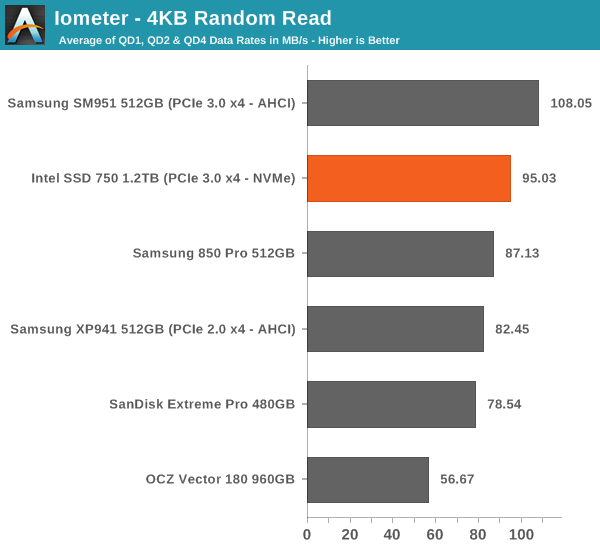

I'm also reporting two metrics now. For the bar graph, I've taken the average of QD1, QD2 and QD4 data rates, which are the most relevant queue depths for client workloads. This allows for easy and quick comparison between drives. In addition to the bar graph, I'm including a line graph, which shows the performance scaling across all queue depths. To keep the line graphs readable, each drive has its own graph, which can be selected from the drop-down menu.
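The bar graph figure is a plain arithmetic mean of three data points. A minimal sketch, assuming the per-queue-depth data rates are available as a dictionary; the helper name `low_qd_average` and the sample numbers are made up for illustration and are not measured results:

```python
def low_qd_average(rate_by_qd, low_qds=(1, 2, 4)):
    """Average the data rates at the low queue depths used for the bar graph."""
    return sum(rate_by_qd[qd] for qd in low_qds) / len(low_qds)


# Hypothetical data rates in MB/s, keyed by queue depth (not real results).
sample_rates = {1: 40.0, 2: 80.0, 4: 150.0, 8: 280.0, 16: 500.0, 32: 800.0}

print(low_qd_average(sample_rates))  # (40 + 80 + 150) / 3 = 90.0
```

The higher queue depths are ignored for the bar graph on purpose; they only show up in the per-drive line graphs.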

I'm also plotting power for SATA drives and will be doing the same for PCIe drives as soon as I have the system set up properly. Our datalogging multimeter logs power consumption every second, so I report the average for every queue depth to see how the power scales with the queue depth and performance.
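The per-queue-depth power figure can be sketched in the same way. The example below assumes the multimeter log is a flat list with one reading in watts per second, recorded across the whole sweep with no gaps, and that each queue depth runs for the same number of seconds. The function name `average_power_per_qd` and the log layout are assumptions for this example, not a description of the actual logging setup.

```python
def average_power_per_qd(samples_w, depths, seconds_per_qd=180):
    """Split a one-reading-per-second power log into per-queue-depth averages."""
    averages = {}
    for i, qd in enumerate(depths):
        # Readings that fall inside this queue depth's time window.
        window = samples_w[i * seconds_per_qd:(i + 1) * seconds_per_qd]
        if len(window) < seconds_per_qd:
            raise ValueError(f"log too short for QD{qd}")
        averages[qd] = sum(window) / len(window)
    return averages


# Tiny hypothetical log: 3 seconds per queue depth instead of 180.
log = [2.0, 2.0, 2.0, 3.0, 3.0, 3.0]
print(average_power_per_qd(log, depths=[1, 2], seconds_per_qd=3))
```

Because there is no idle time between steps, the first few readings of each window may still reflect the previous queue depth; a more careful version could skip them.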

Despite having NVMe, the SSD 750 doesn't bring any improvements to low queue depth random read performance. Theoretically NVMe should be able to improve low QD random read performance because it adds less overhead compared to the AHCI software stack, but ultimately it's the NAND performance that's the bottleneck, although 3D NAND will improve that by a bit.


The performance does scale nicely, though, and at a queue depth of 32 the SSD 750 is able to hit over 200K IOPS. It's capable of delivering even more than that because, unlike AHCI, NVMe can support more than 32 commands in the queue, but since client workloads rarely go above QD32 I see no point in testing higher queue depths just for the sake of high numbers.

Random Write Performance

Write performance is tested in the same way as read performance, except that the drive is in a secure erased state and the LBA span is limited to 16GB. We already test performance consistency separately, so a secure erased drive and a limited LBA span ensure that the results here represent peak performance rather than sustained performance.

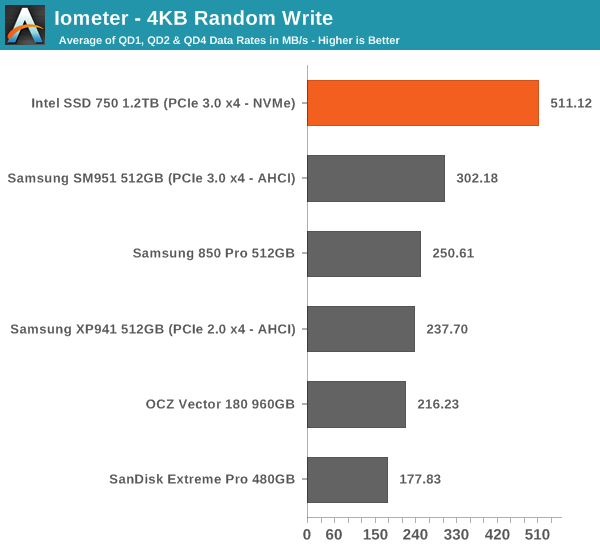

In random write performance the SSD 750 dominates the other drives. It seems Intel's random IO optimization really shows up here because the SM951 doesn't even come close. Obviously the lower latency of NVMe helps tremendously and since the SSD 750 features full power loss protection it can also cache more data in DRAM without the risk of data loss, which yields substantial performance gains.


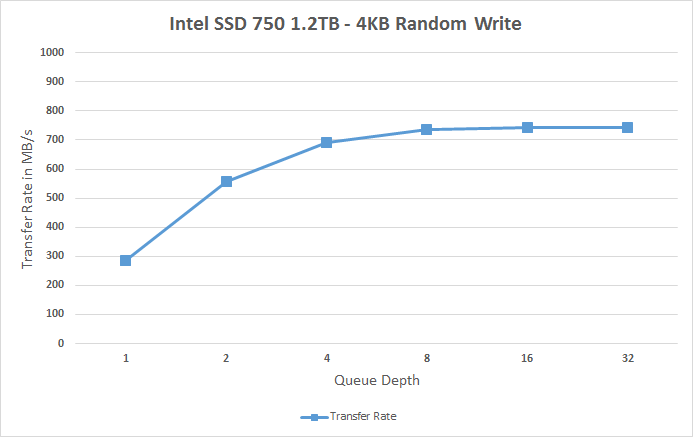

The SSD 750 also scales very efficiently and doesn't stop scaling until a queue depth of 8. Note how big the difference is at queue depths of 1 and 2 -- for any random write centric workload the SSD 750 is an absolute killer.

132 Comments


kaisellgren - Friday, May 1, 2015 - link

Do not forget the Fiji 390x!

dzezik - Saturday, May 7, 2016 - link

who needs chipset for PCIe if You have 40 lanes directly from CPU. it is step back in the configuration. it was big step ahead to put memory and PCIe to CPU. the chipset is useless.

zrav - Thursday, April 2, 2015 - link

>It's again a bit disappointing that the SSD 750 isn't that well optimized for sequential IO because there's practically no scaling at all

That's a weird conclusion. I'd say it is quite impressive that the drive almost reaches peak throughput at QD 1 already. Requiring higher QD to achieve more throughput is not a positive characteristic. But if that matters depends on the usage scenario ofc.

Kristian Vättö - Thursday, April 2, 2015 - link

It's impressive that the performance is almost the same regardless of queue depth, but I don't find 1.2GB/s to be very impressive for a 1.2TB PCIe drive.

futrtrubl - Thursday, April 2, 2015 - link

Unfortunately your use of un-normalised standard deviation for performance consistency makes them a barrier to understanding. A 1000 IOPS drive with 5% variance is going to have lower standard deviation and by the way you have presented it "better consistency" than a 10000 IOPS drive with 1% variance.

Kristian Vättö - Thursday, April 2, 2015 - link

Any suggestions for improving the metric? Perhaps divide by the average IOPS or its square root to take that into account as well?

futrtrubl - Thursday, April 2, 2015 - link

Yes, I think dividing by the average IOPs would be perfect. You could even x100 to get it to a sort of percentage deviation.

bricko - Saturday, April 4, 2015 - link

Here is test and review of the new 750, what is up with boot time...its SLOWEST of 14 drives. Everything else is great, but boot time. The Plextor M6 is 15 seconds, the 750 is 34 sec....ideas

http://techreport.com/review/28050/intel-750-serie...

Ethos Evoss - Saturday, April 4, 2015 - link

Plextor SSDs - BEST

bricko - Saturday, April 4, 2015 - link

Its only slow on the boot time, otherwise it beats ALL other ssd on different loads and tests , by 2 - 3 times....odd it seems