Samsung Releases Firmware Update to Fix the SSD 840 EVO Read Performance Bug

by Kristian Vättö on October 16, 2014 2:40 PM EST

The news of Samsung's SSD 840 EVO read performance degradation started circulating around the Internet about a month ago. Shortly after, Samsung announced that it had found a fix and that a firmware update would be released on October 15th. Samsung kept its promise and delivered the update yesterday through its website (download here).

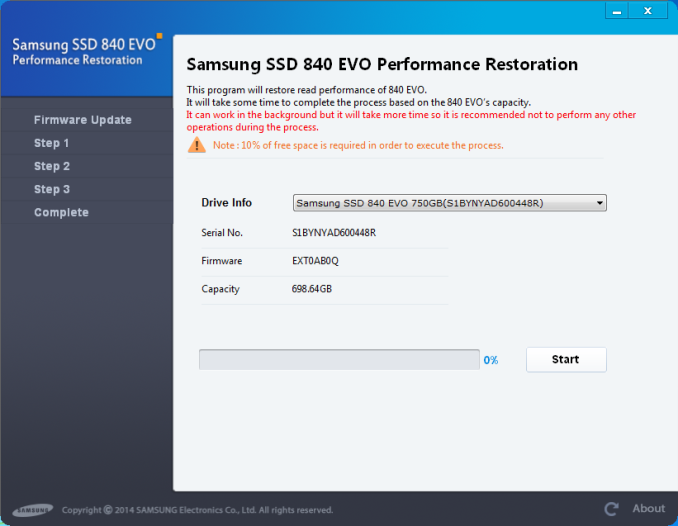

The fix is actually a bit more than just a firmware update. Because the bug specifically affects the read speed of old data, simply flashing the firmware isn't enough: the data on the drive has to be rewritten for the changes in the new firmware to take effect. Thus the fix comes in the form of a separate tool, which Samsung calls Performance Restoration Software.

For now the tool is limited to the 840 EVO (both 2.5" and mSATA) and only works under Windows. An OS-independent tool for Mac and Linux users will be available later this month, but there is currently no word on whether the 'vanilla' 840 and the OEM versions will get the update. Samsung told me that it has only seen the issue in the 840 EVO, although user reports suggest that the 'vanilla' 840 is affected as well. I'll provide an update as soon as I hear more from Samsung.

The performance restoration process itself is simple and doesn't require any input from the user once started. Basically, the tool first updates the firmware and asks you to shut down the system once the update has completed. On the next startup the tool runs the actual three-step restoration process, although unfortunately I don't have any further information about what these steps actually do. What I do know is that all data on the drive will be rewritten, so the process can take a while depending on how much data is stored on the drive. The process isn't destructive if it completes successfully, but since there is always a risk of data loss when updating firmware, I strongly recommend making sure you have an up-to-date backup of your data before starting.
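Since every stored byte gets rewritten, the run time scales with how much data is on the drive. As a rough back-of-the-envelope sketch, assuming the tool reads and then rewrites each byte exactly once at constant speed, the duration can be estimated as follows. The function name and the throughput figures in the example are hypothetical placeholders, not Samsung specifications.

```python
def estimate_rewrite_seconds(used_gb, read_mb_s, write_mb_s):
    """Estimate seconds to read and then rewrite `used_gb` of stored data.

    ASSUMPTION: every byte is read once and written once at constant,
    hypothetical throughputs; decimal units (1GB = 1000MB) as vendors use.
    """
    if used_gb < 0 or read_mb_s <= 0 or write_mb_s <= 0:
        raise ValueError("used_gb must be >= 0 and throughputs must be > 0")
    used_mb = used_gb * 1000
    return used_mb / read_mb_s + used_mb / write_mb_s


if __name__ == "__main__":
    secs = estimate_rewrite_seconds(used_gb=200, read_mb_s=100, write_mb_s=400)
    print(f"{secs:.0f} s (about {secs / 60:.0f} minutes)")
```

With 200GB of data, an assumed 100MB/s for reading the slowed-down old data and 400MB/s for writing, this works out to 2,500 seconds, or roughly 42 minutes.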

The restoration tool has a few limitations, though. First, it requires at least 10% free space or it won't run at all, and the only way around this is to delete files or move them to another drive before running the tool. Second, only the NTFS file system is supported at this stage, so Mac and Linux users will have to wait for the DOS version of the tool, which is scheduled to be available by the end of this month. Third, the tool doesn't support RAID arrays, meaning that if you are running two or more 840 EVOs in a RAID array, you'll need to delete the array and switch back to AHCI mode before the tool can be run. Any hardware encryption (TCG Opal 2.0 and eDrive) must be disabled too.
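The preconditions above can be summarized as a simple pre-flight checklist. The sketch below is purely illustrative: `DriveState` and `preflight_problems` are hypothetical names that are not part of Samsung's tool, and only the 10% threshold, NTFS, RAID and encryption rules come from the requirements described above.

```python
from dataclasses import dataclass


@dataclass
class DriveState:
    """Hypothetical snapshot of an 840 EVO; field names are illustrative only."""
    capacity_bytes: int
    free_bytes: int
    filesystem: str
    in_raid_array: bool
    hardware_encryption_enabled: bool


def preflight_problems(d):
    """Return a list of blockers; an empty list means the tool could run."""
    problems = []
    min_free = -(-d.capacity_bytes // 10)  # ceil(10% of capacity), in bytes
    if d.free_bytes < min_free:
        problems.append(f"free up at least {(min_free - d.free_bytes) / 1e9:.1f} GB")
    if d.filesystem.upper() != "NTFS":
        problems.append("only NTFS is supported by the Windows tool")
    if d.in_raid_array:
        problems.append("delete the RAID array and switch back to AHCI mode")
    if d.hardware_encryption_enabled:
        problems.append("disable hardware encryption (TCG Opal 2.0 / eDrive)")
    return problems
```

For example, a 250GB drive with only 20GB free would report that another 5.0GB needs to be freed before the tool could run.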

Regarding driver and platform support, the tool supports both Intel and AMD chipsets and storage drivers as well as Microsoft's native AHCI driver. The only limitation concerns AMD storage drivers: the driver must be the latest version, or alternatively you can temporarily switch to the Microsoft driver by uninstalling the AMD one. Samsung has a detailed installation guide that walks through the driver switch along with the rest of the performance restoration process.

Explaining the Bug

Given how widespread the issue is, there has been quite a bit of speculation about what causes the read performance to degrade over time. I didn't officially post my theory here, although I did tweet about it and also mentioned it in the comments of the original news post. It turns out that my theory was pretty much spot on, as Samsung has now disclosed some details about the source of the bug.

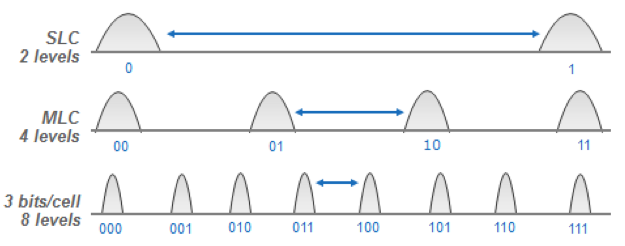

As most of you likely know already, NAND works by storing a charge in the floating gate. The amount of charge determines the voltage state of the cell, which in turn translates to the bit output. Reading a cell basically works by sensing the cell's threshold voltage, which is done by stepping up the read voltage applied to the cell until the cell responds.
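To make the read process more concrete, here is a minimal sketch of sensing a TLC cell: three bits per cell means eight voltage states separated by seven read reference voltages. The reference voltages are hypothetical values, and the Gray-code mapping from state to bits is an assumption for illustration; real drives use vendor-specific levels and mappings.

```python
# Hypothetical read reference voltages (volts) separating the 8 TLC states.
READ_REFS = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]


def sense_state(vth, refs=READ_REFS):
    """Step the read voltage up through the references until the cell conducts.

    A cell conducts once the applied voltage exceeds its threshold voltage
    (vth), so the index of the first conducting step is the voltage state.
    """
    for state, ref in enumerate(refs):
        if ref > vth:  # cell responds at this step
            return state
    return len(refs)  # never responded: highest voltage state


def state_to_bits(state):
    """Map a state (0-7) to 3 bits using a Gray code (an assumed mapping)."""
    return format(state ^ (state >> 1), "03b")


if __name__ == "__main__":
    for vth in (0.2, 1.2, 3.7):
        s = sense_state(vth)
        print(vth, s, state_to_bits(s))
```

A Gray code is used in this sketch so that adjacent voltage states differ by a single bit, which limits the damage when a cell is misread as its neighbor state.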

However, the cell charge is subject to multiple variables over time. Electron leakage through the tunnel oxide reduces the cell charge over time and may result in a change in the voltage state. Neighboring cells also have an impact through cell-to-cell interference in the form of floating gate coupling, which is strongest when a neighboring (or merely nearby) cell is being programmed. This affects the charge in the cell, and the effect accumulates the longer the cell goes without being erased and reprogrammed (i.e. more neighbor cell programs = more interference = bigger shift in cell charge).
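A toy model helps show why this drift eventually causes misreads. In this sketch, leakage lowers the cell's voltage in proportion to data age and each neighbor program nudges it upward. Both coefficients and the reference voltages are invented for illustration and are not measured 840 EVO values; real drift is neither linear nor this simple.

```python
import bisect

READ_REFS = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]  # hypothetical TLC references (V)
LEAK_V_PER_MONTH = 0.05             # hypothetical: leakage lowers the voltage
COUPLING_V_PER_NEIGHBOR_PGM = 0.01  # hypothetical: each neighbor program raises it


def sense_state(vth, refs=READ_REFS):
    """Voltage state = number of read references at or below the cell voltage."""
    return bisect.bisect_right(refs, vth)


def drifted_vth(v_programmed, months, neighbor_programs):
    """Toy linear drift model combining leakage and cell-to-cell interference."""
    return (v_programmed
            - LEAK_V_PER_MONTH * months
            + COUPLING_V_PER_NEIGHBOR_PGM * neighbor_programs)


if __name__ == "__main__":
    v = 1.75  # programmed in the middle of state 3's window (1.5V to 2.0V)
    print(sense_state(drifted_vth(v, 0, 0)))   # fresh
    print(sense_state(drifted_vth(v, 6, 0)))   # six months of leakage
    print(sense_state(drifted_vth(v, 0, 40)))  # 40 neighbor programs
```

With these made-up numbers, a cell programmed in the middle of state 3 reads correctly when fresh, reads as state 2 after six months of leakage, and reads as state 4 after 40 neighbor programs.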

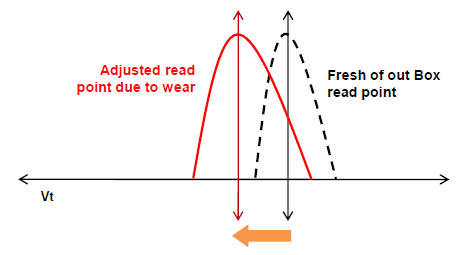

Because cell voltage change is an inherent characteristic of NAND, all SSDs and other NAND-based devices use NAND management algorithms that take these changes into account. The algorithm is designed to adjust the voltage states based on these variables (in reality there are far more than the two I mentioned above) so that the cell can be read and programmed efficiently.
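One simple way a management algorithm could account for such drift is to shift the read reference voltages according to the age of the data. The sketch below assumes a linear leakage rate and hypothetical reference voltages; it illustrates the general idea only and is not Samsung's algorithm, whose details have not been disclosed.

```python
import bisect

BASE_REFS = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]  # hypothetical TLC references (V)
LEAK_V_PER_MONTH = 0.05  # hypothetical linear leakage rate


def sense_state(vth, refs):
    """Voltage state = number of read references at or below the cell voltage."""
    return bisect.bisect_right(refs, vth)


def compensated_refs(months_since_program, leak_v_per_month=LEAK_V_PER_MONTH):
    """Shift every reference down by the drift expected for data of this age."""
    shift = leak_v_per_month * months_since_program
    return [r - shift for r in BASE_REFS]


if __name__ == "__main__":
    aged_vth = 1.75 - LEAK_V_PER_MONTH * 6  # state-3 cell after six months
    print(sense_state(aged_vth, BASE_REFS))            # uncompensated read
    print(sense_state(aged_vth, compensated_refs(6)))  # age-aware read
```

The compensation here is deliberately naive: a real algorithm has to weigh many more variables than age alone, and if its estimate of the drift is off, the references land in the wrong place.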

In the case of the 840 EVO, there was an error in the algorithm that resulted in an aggressive read-retry process when reading old data. TLC NAND needs more sophisticated NAND management because its voltage states are distributed more closely together. At the same time, the wear-leveling algorithms need to be as efficient as possible (i.e. write as little as possible to save P/E cycles), which is why the bug only exists on the 840 and 840 EVO, Samsung's TLC-based drives. I suspect that the algorithm didn't properly take the change in cell voltage into account, which resulted in incorrect read points, so the read process had to be repeated multiple times before the cell would return the correct value. Obviously a read takes longer if it has to be performed multiple times, so user performance suffered as a result.
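The cost of read-retry can be sketched as follows. In this hypothetical model, the controller reads a page, and if ECC cannot correct the errors it lowers the read references by a fixed step and tries again, with each attempt adding a full page-read latency. The latency, ECC limit, step size, drift and retry policy are all assumptions, and the list of stored states stands in for ECC, which in a real drive detects errors without knowing the original data.

```python
import bisect

BASE_REFS = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]  # hypothetical TLC read references (V)
T_READ_US = 60  # hypothetical latency of one page read, in microseconds
ECC_LIMIT = 2   # hypothetical: max cell errors ECC can correct per page


def sense(vth, refs):
    """Return the voltage state: the number of references at or below vth."""
    return bisect.bisect_right(refs, vth)


def program_center(state):
    """Hypothetical programmed voltage: the middle of the state's 0.5V window."""
    return 0.25 + 0.5 * state


def read_with_retry(stored_states, vths, start_offset=0.0, step=0.05, max_attempts=16):
    """Read a page, lowering all references by `step` after each ECC failure.

    `stored_states` stands in for ECC here; a real controller relies on the
    ECC decoder to report failure instead. Returns (attempts, latency_us).
    """
    offset = start_offset
    for attempt in range(1, max_attempts + 1):
        refs = [r - offset for r in BASE_REFS]
        errors = sum(sense(v, refs) != s for v, s in zip(vths, stored_states))
        if errors <= ECC_LIMIT:
            return attempt, attempt * T_READ_US
        offset += step  # retry with the references shifted further down
    raise RuntimeError("uncorrectable page after max_attempts reads")


if __name__ == "__main__":
    states = [3, 5, 1, 6, 2, 4, 0, 7]
    fresh = [program_center(s) for s in states]
    aged = [v - 0.32 for v in fresh]  # hypothetical drift after months of storage
    print(read_with_retry(states, fresh))                    # fresh data
    print(read_with_retry(states, aged))                     # stale references
    print(read_with_retry(states, aged, start_offset=0.30))  # drift-aware start
```

With these numbers, fresh data reads in one attempt (60µs), while data that has drifted by 0.32V takes three attempts (180µs) when reads start from the default references, but only one if the starting offset already accounts for the drift. This illustrates how a mis-tuned algorithm multiplies read latency for old data.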

Unfortunately I don't have an 840 EVO that fits the criteria for the bug (i.e. a drive with data that is several months old), so I couldn't test more than the restoration process itself (which was smooth, by the way). However, PC Perspective's and The Tech Report's tests confirm that the tool restores performance to the original speeds. It's too early to say whether the update fixes the issue in the long term, but Samsung assured that the update does actually fix the NAND management algorithm and should thus be a permanent fix.

The EVO has been the most popular retail SSD so far, so it's great to see Samsung providing a fix in such a short time. None of the big SSD manufacturers have been able to avoid widespread bugs (remember the 8MB bug in the Intel SSD 320 and the 5,000-hour bug in the Crucial m4?), and I have to give Samsung credit for handling this one well. In the end, this bug never resulted in data loss, so it was more of an annoyance than a real threat.

95 Comments

djdes - Wednesday, October 22, 2014

Only works for NTFS. I shouldn't have to install Windows or put it in a Windows machine to solve a problem. It's a drive, not a winmodem.

xkiller213 - Thursday, October 16, 2014

that's it, no more Samsung SSDs for me: they won't release an update for the 840, which suffers from the same problem...

Impulses - Thursday, October 16, 2014

Would be nice if Kristian could press them for an answer here, though I'm sure he's trying. They sold a lot of those vanilla 840s for a year...

Kristian Vättö - Friday, October 17, 2014

I'm definitely pushing them for a fix

Per Hansson - Monday, October 20, 2014

Yeah, the fix really needs to be released for the 840 Vanilla too! Here is a pic from a 120GB 840 Vanilla belonging to a friend of mine:

http://img.techpowerup.org/141020/27-september-201...

Coup27 - Friday, October 17, 2014

I upgraded our entire company to vanilla 840's.

konradsa - Thursday, October 16, 2014

Agree. I am glad I have an EVO and got the upgrade. But if I had a vanilla 840, I would be pretty upset now as well.

konradsa - Thursday, October 16, 2014

Nice article, but if changing the read parameters fixes the problem, why does all the data still need to be rewritten? Either Samsung (also) changed the way the writes are done, or they only rewrite the data now to cover up the symptoms.

hojnikb - Thursday, October 16, 2014

It's quite possible that they are now doing much more aggressive static wear levelling, so when the drive is used regularly, this effect doesn't present itself. I have my doubts about the whole algorithm f*ckup.

fenix840 - Thursday, October 16, 2014

I keep seeing this theory that Samsung is refusing to acknowledge and fix the same problem in the "vanilla" 840s because they are so-called "dead" products (not being sold anymore), the idea being that they only fixed the 840 EVOs because they still had units they needed to move. Can this possibly be so? Surely they wouldn't burn all of the non-Pro 840 owners out there. That's bad for business, and they're trying to generate brand loyalty. If they could fix the 840 EVOs so easily, surely they would do the same for the vanillas. So that leads me to believe that either they can't fix the 840s or they truly aren't affected by the bug.