Intel Xeon E5-2687W v3 and E5-2650 v3 Review: Haswell-EP with 10 Cores

by Ian Cutress on October 13, 2014 10:00 AM EST. Posted in CPUs, IT Computing, Intel, Xeon, Enterprise, Enterprise CPUs

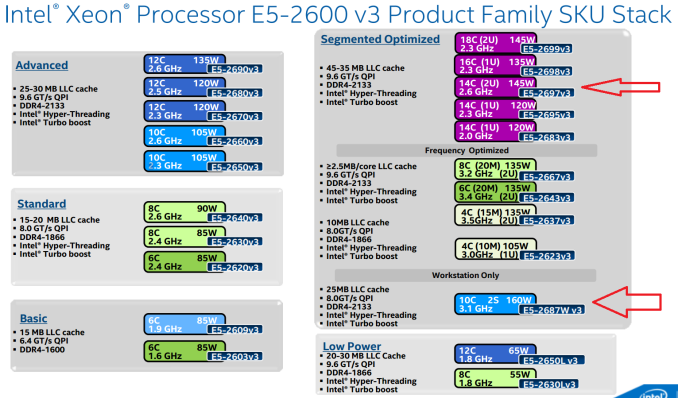

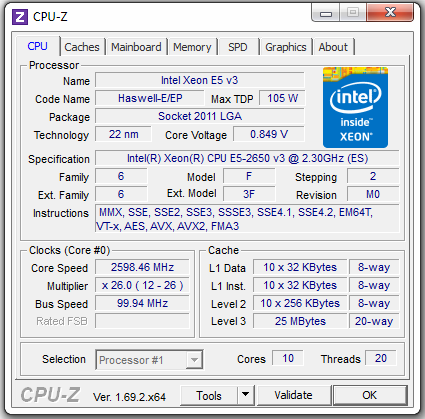

During September we managed to get hold of some Haswell-EP samples for a quick run through our testing suite. The Xeon E5 v3 range extends beyond that of the E5 v2 with the new architecture, support for DDR4 and more SKUs with more cores. These are generally split into several markets including workstation, server, low power and high performance, with a few SKUs dedicated for communications or off-map SKUs with different levels of support. Today we are testing two 10 core models, the Xeon E5-2687W v3 and the Xeon E5-2650 v3.

Intel Xeon E5 v3: The Information

Our initial Haswell-EP coverage from Johan was super extensive and well worth a read for anyone interested in the Xeon platform. My focus here will be light in comparison, mentioning key points that as an ex-workstation user I find interesting. This will be the first of several reviews on the Xeon processors, which we have split up to focus more on each area.

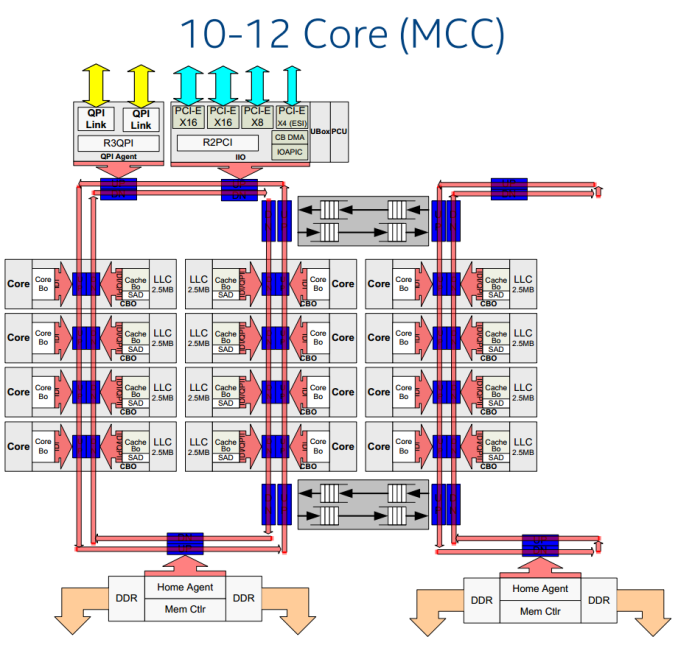

The core layouts for each of the different levels of processor are from three designs, emulating the single and dual ring bus type arrangements depending on the number of cores in each SKU. As with the Xeon E5 v2 processors, the big block of cache is in the middle of the cores and data is transferred via the ring bus. From the core designs, pairs of cores can be disabled to make lower core count CPUs, and much like the previous generation, some low core / high cache models might be possible.

In the 10-12 core image above we essentially get two classes of cores – one in the big stack to the left and another to the right. The processor is designed to treat all cores equally, although the Cluster on Die snoop mode new to E5 v3 will organize the cache data into what acts like two big sections in a NUMA-style arrangement. This allows data relevant to cores that need it to stay close and hopefully reduce read/write latencies, but is all transparent to the user. Johan goes into more detail on this front in his review.

This column arrangement is also why we do not see the progressive jump in cores we would expect. In the consumer space, we have had 1, 2, 4, 6 and 8 cores, and one might expect 12 and 16 on the horizon, but 10, 14 and 18 seem a little off-kilter, along with the 15-core design from Ivy Bridge-EP. Using this column design, Intel has to balance the number of cores per ring and the number of cores per column. In the large 18 core design there are 10 cores in the secondary ring and six in a single column. Ideally fewer columns would be preferable; however, more rings allow data to transfer more frequently. It becomes a bit of a balance in terms of design, efficiency, performance and yield at the end of the day, especially when dealing with up to 5.69B transistors in 662 mm².

CPU Specification Comparison

| CPU | Node | Cores | GPU | Transistor Count (Schematic) | Die Size |
|---|---|---|---|---|---|
| **Server CPUs** | | | | | |
| Intel Haswell-EP 14-18C | 22nm | 14-18 | N/A | 5.69B | 662mm² |
| Intel Haswell-EP 10C-12C | 22nm | 6-12 | N/A | 3.84B | 492mm² |
| Intel Haswell-EP 6C-8C | 22nm | 4-8 | N/A | 2.6B | 354mm² |
| Intel Ivy Bridge-EP 12C-15C | 22nm | 10-15 | N/A | 4.31B | 541mm² |
| Intel Ivy Bridge-EP 10C | 22nm | 6-10 | N/A | 2.89B | 341mm² |
| **Consumer CPUs** | | | | | |
| Intel Haswell-E 8C | 22nm | 8 | N/A | 2.6B | 356mm² |
| Intel Haswell GT2 4C | 22nm | 4 | GT2 | 1.4B | 177mm² |
| Intel Haswell ULT GT3 2C | 22nm | 2 | GT3 | 1.3B | 181mm² |
| Intel Ivy Bridge-E 6C | 22nm | 6 | N/A | 1.86B | 257mm² |
| Intel Ivy Bridge 4C | 22nm | 4 | GT2 | 1.2B | 160mm² |
| Intel Sandy Bridge-E 6C | 32nm | 6 | N/A | 2.27B | 435mm² |
| Intel Sandy Bridge 4C | 32nm | 4 | GT2 | 995M | 216mm² |
| Intel Lynnfield 4C | 45nm | 4 | N/A | 774M | 296mm² |
| AMD Trinity 4C | 32nm | 4 | 7660D | 1.303B | 246mm² |
| AMD Vishera 8C | 32nm | 8 | N/A | 1.2B | 315mm² |

Intel should be offering certain configurations with more L3 cache, given that in their press materials the one they labelled '10C-12C' will actually be offered as a cut down to six cores for release. These CPUs, whichever way you slice them, are still massive.
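To put "massive" into numbers, the short Python sketch below works out transistor density for the server dies in the table above. The figures are the schematic transistor counts and die sizes quoted in this article; the function name and the MTr/mm² unit are our own choices for illustration, and schematic counts are only a rough guide to real layout density.

```python
# Schematic transistor counts (billions) and die sizes (mm^2),
# copied from the CPU Specification Comparison table in this article.
SERVER_DIES = {
    "Haswell-EP 14-18C": (5.69, 662),
    "Haswell-EP 10C-12C": (3.84, 492),
    "Haswell-EP 6C-8C": (2.6, 354),
    "Ivy Bridge-EP 12C-15C": (4.31, 541),
    "Ivy Bridge-EP 10C": (2.89, 341),
}


def density_mtr_per_mm2(transistors_billion, area_mm2):
    """Return density in millions of transistors per mm^2 (illustrative helper)."""
    return transistors_billion * 1000 / area_mm2


if __name__ == "__main__":
    for name, (count, area) in SERVER_DIES.items():
        print(f"{name}: {density_mtr_per_mm2(count, area):.2f} MTr/mm^2")
```

On these numbers all five server dies land between roughly 7 and 9 million transistors per mm², which is what we would expect given that they share the same 22nm node.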

Today our review revolves around two of the 10 core options from Intel.

Intel Xeon E5 v3 SKU Comparison

| Xeon E5 | Cores/Threads | TDP | Clock Speed (GHz) | Price |
|---|---|---|---|---|
| **High Performance (35-45MB LLC)** | | | | |
| 2699 v3 | 18/36 | 145W | 2.3-3.6 | $4115 |
| 2698 v3 | 16/32 | 135W | 2.3-3.6 | $3226 |
| 2697 v3 | 14/28 | 145W | 2.6-3.6 | $2702 |
| 2695 v3 | 14/28 | 120W | 2.3-3.3 | $2424 |
| **"Advanced" (20-30MB LLC)** | | | | |
| 2690 v3 | 12/24 | 135W | 2.6-3.5 | $2090 |
| 2680 v3 | 12/24 | 120W | 2.5-3.3 | $1745 |
| 2660 v3 | 10/20 | 105W | 2.6-3.3 | $1445 |
| 2650 v3 | 10/20 | 105W | 2.3-3.0 | $1167 |
| **Midrange (15-25MB LLC)** | | | | |
| 2640 v3 | 8/16 | 90W | 2.6-3.4 | $939 |
| 2630 v3 | 8/16 | 85W | 2.4-3.2 | $667 |
| 2620 v3 | 6/12 | 85W | 2.4-3.2 | $422 |
| **Frequency optimized (10-20MB LLC)** | | | | |
| 2687W v3 | 10/20 | 160W | 3.1-3.5 | $2141 |
| 2667 v3 | 8/16 | 135W | 3.2-3.6 | $2057 |
| 2643 v3 | 6/12 | 135W | 3.4-3.7 | $1552 |
| 2637 v3 | 4/8 | 135W | 3.5-3.7 | $996 |
| **Budget (15MB LLC)** | | | | |
| 2609 v3 | 6/6 | 85W | 1.9 | $306 |
| 2603 v3 | 6/6 | 85W | 1.6 | $213 |
| **Power Optimized (20-30MB LLC)** | | | | |
| 2650L v3 | 12/24 | 65W | 1.8-2.5 | $1329 |
| 2630L v3 | 8/16 | 55W | 1.8-2.9 | $612 |

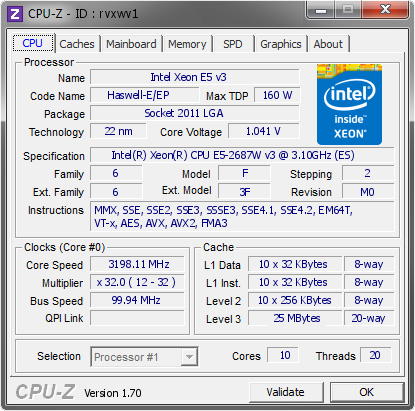

The E5-2687W v3 is an interesting model of the bunch, particularly due to the importance of the E5-2687W v2 from the previous generation. The v2 version was lauded due to the difference in peak frequencies compared to the higher core count models, but this changes with Haswell-EP.

For Ivy Bridge-EP:

- The 8-core E5-2687W v2 gave 3.6 GHz in full-load, TDP of 150W for $2108,

- The 12 core E5-2697 v2 gave 3.0 GHz in full-load, TDP of 130W for $2614

With Haswell-EP:

- The 10-core E5-2687W v3 gives 3.2 GHz for 160W at $2141,

- The 14-core E5-2697 v3 gives 3.1 GHz for 145W at $2702 or

- The 18-core E5-2699 v3 gives 2.8 GHz for 145W at $4115

If we compare the difference between the E5-2687W and E5-2697, first with v2 and then v3, the new Haswell ‘W for Workstation’ CPU looks a little less enticing. With the v2 CPUs it was a clear trade-off between cores and frequency, and software that favored clock speed benefited from the W part's much higher full-load frequency. With v3, that gap has largely closed.

To make matters worse for the E5-2687W v3, if we compare single thread speeds, the E5-2697 v3 reaches 3.6 GHz compared to the E5-2687W v3 at 3.5 GHz, which puts the W processor at a disadvantage.
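The shift is easier to see with a little arithmetic. The Python sketch below takes the core counts and full-load frequencies quoted in the lists above and computes two things for each generation: how much faster the W part clocks under full load, and the ratio of aggregate "core-GHz" (cores multiplied by full-load frequency). Core-GHz is a crude proxy that we are assuming purely for illustration; it ignores IPC, cache, memory bandwidth and AVX clock behavior.

```python
# (cores, full-load GHz) as quoted in this article for each processor.
CHIPS = {
    "E5-2687W v2": (8, 3.6),
    "E5-2697 v2": (12, 3.0),
    "E5-2687W v3": (10, 3.2),
    "E5-2697 v3": (14, 3.1),
}


def compare(w_part, many_core_part):
    """Return (full-load frequency advantage of the W part,
    W part's aggregate core-GHz as a fraction of the many-core part's)."""
    w_cores, w_ghz = CHIPS[w_part]
    m_cores, m_ghz = CHIPS[many_core_part]
    freq_advantage = w_ghz / m_ghz - 1
    throughput_ratio = (w_cores * w_ghz) / (m_cores * m_ghz)
    return freq_advantage, throughput_ratio


if __name__ == "__main__":
    for w, m in [("E5-2687W v2", "E5-2697 v2"), ("E5-2687W v3", "E5-2697 v3")]:
        adv, ratio = compare(w, m)
        print(f"{w} vs {m}: {adv:+.1%} full-load clock, "
              f"{ratio:.0%} of the aggregate core-GHz")
```

By this rough measure the v2 W part traded about a fifth of the aggregate throughput for a 20% clock advantage, whereas the v3 W part gives up more aggregate throughput for a clock advantage of only around 3%.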

It is worth noting that Intel puts these two processors in different parts of the product stack, so technically they should not be 'competing' against each other:

The E5-2687W v3 is firmly for Workstations only, rather than servers, whereas the E5-2697 v3 should end up in 2U servers.

The other processor in this review, the E5-2650 v3, sits in the ‘Advanced’ section in the SKU stack, giving 2.6 GHz at load or 3.0 GHz for single threaded speed, but lists at only 105W for $1166 tray price.

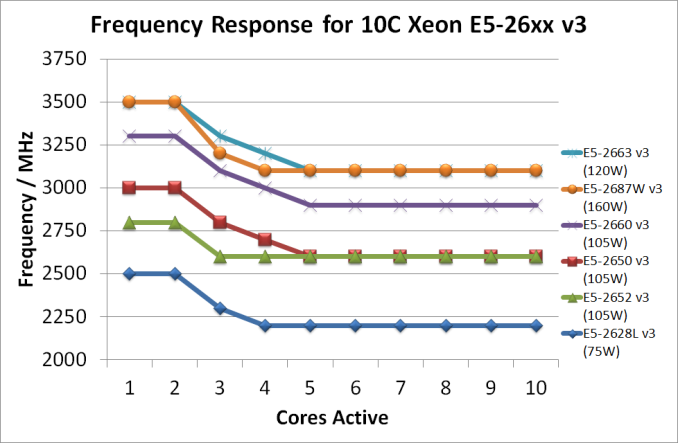

Using this information and a few SKUs that are off-roadmap, the turbo modes of the 10 core processors are:

All the 10 core processors reach their full-core turbo when five cores are in use, and are on the top turbo frequency when one or two cores are active.
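As a rough sketch of that rule in Python: the function below returns the frequency range a 10 core part could be running at for a given number of active cores, using only the end points stated in the text. The function name is hypothetical, and the intermediate bins for three and four active cores are not given in the text, so the sketch only bounds them rather than guessing a value.

```python
def turbo_bounds(active_cores, top_ghz, all_core_ghz, total_cores=10):
    """Return (min_ghz, max_ghz) for the given number of active cores.

    Assumes only what the article states: top turbo with one or two
    active cores, full-core turbo from five active cores upward.
    """
    if not 1 <= active_cores <= total_cores:
        raise ValueError("active_cores must be between 1 and total_cores")
    if active_cores <= 2:
        return (top_ghz, top_ghz)
    if active_cores >= 5:
        return (all_core_ghz, all_core_ghz)
    # Three or four active cores: somewhere between the two end points.
    return (all_core_ghz, top_ghz)


if __name__ == "__main__":
    # E5-2687W v3: 3.5 GHz top turbo, 3.2 GHz full-core turbo (per this article).
    for n in (1, 3, 5, 10):
        print(n, turbo_bounds(n, top_ghz=3.5, all_core_ghz=3.2))
```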

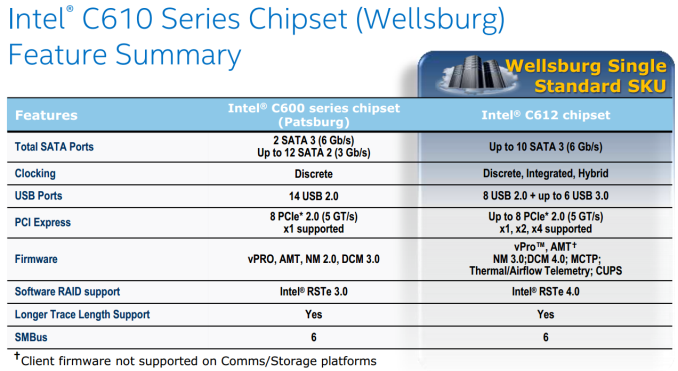

The Chipset

When we reviewed a pair of the E5 v2 processors back in March, the main server based chipsets at the time revolved around the C600 series, codename ‘Patsburg’. For the v3 processors, this moves to the C610 series, also known as Wellsburg. The C612 chipset is the primary server component at this point, offering many of the features we have already seen in our X99 reviews:

- Up to 10 SATA 6 Gbps ports,

- 6 ports of USB 3.0,

- 8 ports of USB 2.0,

- Up to 8 PCIe 2.0 lanes, with x1/x2/x4 supported

New features for C610 series include:

- Reduced TDP, Average Power and Package (now 7W, 25mm x 25mm)

- Intel SVT

- USB 3.0 XHCI Debug

- Support for MCTP Protocol and End Points

- Support for Management Traffic over DMI

- SPI Enhancements

Intel vPro, SPS 3.0, RSTe and CAS are also supported.

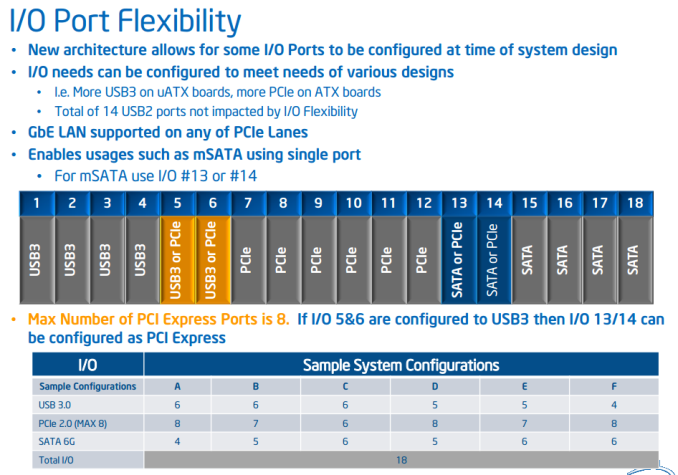

For the SATA/USB3/PCIe bandwidth combinations, Intel has implemented an extended form of Flex IO. It looks much the same as on Z87 and Z97, but offers 22 rather than 18 differential signal pairs. A certain number of these pairs are fixed to USB3 / PCIe / SATA but two pairs are muxed:

This slide shows 18 signal pairs, although I mentioned 22. This is because the last four are from a secondary AHCI controller giving four more SATA 6 Gbps ports. Like X99, the downside of these secondary SATA ports is that they are not RAID capable due to limitations within the silicon.

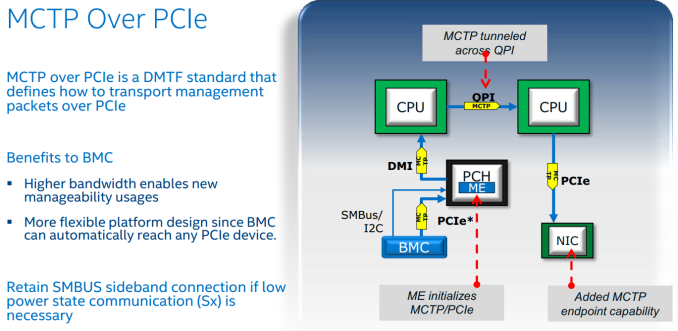

MCTP over PCIe is also an interesting new addition to Wellsburg, allowing cross CPU communication from controllers attached to the other side of the system:

The DRAM

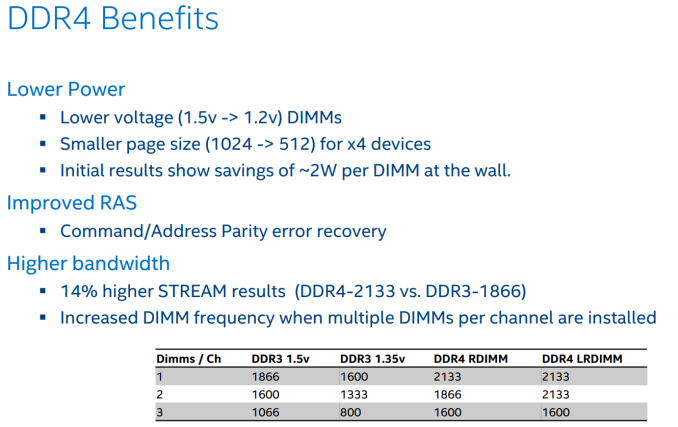

We still have a consumer class DDR4 review in the works, but the upgrade from DDR3 to DDR4 for Haswell-EP is more significant. The decrease in power consumption is often listed as the easiest-to-explain benefit, giving an approximate 2W saving at-the-wall per memory module:

One important aspect of DDR4 will be the higher memory frequency, especially when more DIMMs per channel are installed. It might also come to pass that some server motherboard manufacturers will end up supporting the DDR4-2133 at 3DPC, similar to some efforts made with Patsburg.
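To give a feel for what the roughly 2W per-module saving mentioned above adds up to, here is a small Python sketch for a fully populated system. The configuration is an assumption for illustration: two sockets, four memory channels per socket and three DIMMs per channel. The function name is our own, and the 2W figure is the approximate at-the-wall number quoted above rather than a measurement of ours.

```python
def ddr4_wall_saving(sockets, channels_per_socket, dimms_per_channel,
                     saving_per_dimm_w=2.0):
    """Return (number of DIMMs, estimated at-the-wall saving in watts)."""
    dimms = sockets * channels_per_socket * dimms_per_channel
    return dimms, dimms * saving_per_dimm_w


if __name__ == "__main__":
    # Assumed example: dual socket, quad channel, 3DPC.
    dimms, saving_w = ddr4_wall_saving(sockets=2, channels_per_socket=4,
                                       dimms_per_channel=3)
    print(f"{dimms} DIMMs -> roughly {saving_w:.0f} W saved at the wall")
```

For that assumed configuration the estimate is 24 modules and roughly 48W, which starts to matter once it is multiplied across a rack.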

In a lot of the Intel materials we received, non-ECC UDIMM support is not often listed with the new Haswell-EP CPUs, but we can confirm that in our testing, all of our CPUs worked with standard consumer grade UDIMMs.

27 Comments


JarredWalton - Monday, October 13, 2014 - link

For ten cores I wouldn't expect a huge bump over the "minimum guaranteed" speed. It's one thing to boost a few cores by a large amount, but the whole problem with multi-core designs is that if you load up all the cores then either you have massive power consumption or you need to curtail the clocks. Honestly, running ten cores at 100% and still hitting 3.1GHz is impressive in my book -- and it still consumes up to 160W.

Carl Bicknell - Monday, October 13, 2014 - link

I got my numbers a bit wrong: the 2687W is 3.1 GHz default and 3.2 GHz all cores on turbo, according to Wikipedia.

That's disappointing.

Apart from anything else, they've managed to get their best 12 (yes twelve!) core CPU (E5-2690 v3) to operate at 3.1 GHz turbo all cores in a 135 W design.

With two fewer cores and an extra 25 watts I'd hope for more than a mere 100 MHz performance.

NovoRei - Monday, October 13, 2014 - link

Ian, could you comment on performance with pure AVX2 and mixed AVX instructions and where the W version stands?

Thanks.

Laststop311 - Monday, October 13, 2014 - link

4100 for an 18 core ill take 2

ruthan - Tuesday, October 14, 2014 - link

I would like to see benchmarks of some of those low power 6/12 or 12/24, 55W and 65W models.

pokazene_maslo - Tuesday, October 14, 2014 - link

Is it possible to override turbo boost to force all cores to run at maximum turbo frequency? (E5-2687W-v3 running all cores at 3.5GHz)

alpha754293 - Tuesday, October 14, 2014 - link

Well, the thing with these "big" multicore systems is no different than testing a large SMP system. You have to use programs for applications where it makes sense to use them. For engineering analyses and simulations, even HOW a problem is divided up (from a single, much larger problem) can have an impact on not only the speed of the analysis/simulation, but also the accuracy of the simulation, and you have to have a pretty sound understanding of the math and physics involved in order to make the best determination.

And for some applications, you CAN have TOO many cores (where you've divided up a problem so much that each piece is now so small that it can't fully load a core anymore, and the process of dividing and re-assembling the results takes an extremely large amount of time). (You can run into that with some FEA analysis.)

I was working with Johan and studying a whole slew of parameters using LS-DYNA to see how the various ways of decomposing a problem can have an impact on the crash test simulation results, and how swap performance means EVERYTHING when it comes to mechanical engineering simulations.

mapesdhs - Thursday, October 16, 2014 - link

Oddly enough this can be the case with animation rendering as well. I know a movie studio which uses a system that can exclude cores from a render pipeline so there is more RAM and cache bandwidth available with a fewer number of cores. This can matter because sometimes complex film renders can use huge amounts of data. Someone at SPI told me one frame of a big movie can involve 500GB of data.

Interesting how the same issue can crop up in such widely different fields.

Ian.

RAMdiskSeeker - Tuesday, October 14, 2014 - link

Could you please test these motherboards for supporting ECC unbuffered DIMMs, reporting that ECC is active, and overclocking potential with ECC DIMMs? It would be good to know whether Xeon chips on non-server motherboards can use ECC.

nutral - Tuesday, October 14, 2014 - link

What still is strange to me is that there is still no workstation cpu focused on a workstation with single threaded software. Wouldn't an i7 cpu still be much faster than this workstation cpu?