Samsung Acknowledges the SSD 840 EVO Read Performance Bug - Fix Is on the Way

by Kristian Vättö on September 19, 2014 3:23 PM EST

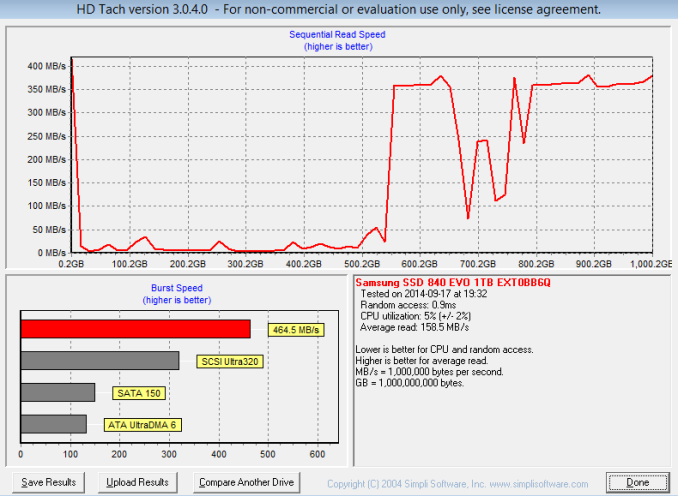

Over the last couple of weeks, numerous reports of low read performance on the Samsung SSD 840 and 840 EVO have surfaced around the Internet. The most extensive one is probably a forum thread over at Overclock.net, which was started about a month ago and currently has over 600 replies. For those who are not aware of the issue, there is a bug in the 840 EVO that causes the read performance of old blocks of data to drop dramatically, as the HD Tach graph below illustrates. The odd part is that the bug only seems to affect LBAs that hold old data (more than a month old); freshly written data reads at full speed, which also explains why the issue was not discovered until now.

Source: @p_combe

I just got off the phone with Samsung and the good news is that they are aware of the problem and have presumably found the source of it. The engineers are now working on an updated firmware to fix the bug and as soon as the fix has been validated, the new firmware will be distributed to end-users. Unfortunately there is no ETA for the fix, but obviously it is in Samsung's best interest to provide it as soon as possible.

Update 9/27: Samsung just shed some light on the timeline and the fixed firmware is scheduled to be released to the public on October 15th.

I do not have any further details about the nature of the bug at this point, but we will be getting more details early next week, so stay tuned. It is a good sign that Samsung acknowledges the bug and that a fix is in the works, but for now I would advise against buying the 840 EVO until there is a resolution for the issue.

116 Comments


themeinme75 - Friday, September 19, 2014 - link

I have Samsung Magician installed, and it pretty much updates the drive when a new firmware comes out; you just have to click OK and let the computer reboot.

stickmansam - Friday, September 19, 2014 - link

I would wait a while for a fix; if not, the MX100 is a good choice.

TheWrongChristian - Monday, September 22, 2014 - link

Seriously? You think that even in this degraded state, streaming music files will exhaust the bandwidth available? There's no need to be a drama queen about it!

People forget why SSDs feel fast. It's mostly because they're low latency. As in, you do a read, and 0.1ms later the data is available, instead of the ~10ms it takes an HDD to get the same data. 40 vs 400 MB/s makes very little difference at the random IO level for most workloads. And for most sequential workloads, like your streaming files, the bandwidth requirements are low (for an SSD or even an HDD).

Chill. If you haven't noticed yet, you probably never will. It's probably only been noticed on overclockers forums because these people like doing their (synthetic) benchmarks and noticed the issues as a result.

MacDude2112 - Monday, September 22, 2014 - link

@TheWrongChristian FYI I am NOT streaming "music files," I am WRITING music compositions, which involves reading thousands, if not tens of thousands, of small music samples at one time from all over the drive. Thus I'm not being a drama queen here - you just simply don't understand what it is I do. :-) (If you'd like me to explain it further, let me know so you don't make the same assumption again.) So again, this bug could be a show stopper for me and my work!

Nexing - Monday, September 22, 2014 - link

This is the typical error made when estimating the computing needs of the AUDIO sector. Even techs would be surprised to learn that in several large areas of audio, current PC offerings are still far below regular usage needs.

Have a look at a detailed post about a common latency problem in one of the most basic uses of a PC in audio, recording while trying to monitor the signal:

"System latency: Through the chip is 0.7 msec to 0.8 msec per direction, which makes at least 1.4 msec for a roundtrip. Analog-digital ADC / DAC converters add 0.6 msec each way, for a 1.2 msec roundtrip. A big part of the delay is added by the OS and your computer. It could depend on the BIOS, drivers and different programs running. The PC / Mac needs at least two buffers per direction to copy the data from the FireWire to the memory and from the memory to the ASIO buffers. The data from FireWire to memory takes 0.6 msec. A PC / Mac is only able to copy the data to or from ASIO buffers at a minimum of 1 msec. So the best result ideally is 3.2 msec. This means that the lowest latency with such a system and standard Windows or Mac low-level drivers is probably 5.8 msec (best case)." (Link interpreted as spam by this site.)
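One possible reading of the quoted figures, sketched in Python: the 3.2 msec "ideal" matches the sum of the chip roundtrip, the converter roundtrip, and the FireWire-to-memory copy. That grouping is an interpretation, not something the quoted post spells out, and the post does not itemize how it gets from 3.2 msec to 5.8 msec, so the sketch stops at the first number. The constant and function names are made up for illustration.

```python
# Figures taken from the quoted post, stored as integer microseconds
# so the sum is exact (no floating-point rounding).
CHIP_PER_DIRECTION_US = 700       # low end of the quoted 0.7-0.8 msec
CONVERTER_PER_DIRECTION_US = 600  # ADC / DAC, each way
FIREWIRE_TO_MEMORY_US = 600       # FireWire to memory copy


def hardware_floor_us():
    """Sum the quoted hardware-side delays (assumed grouping, see lead-in)."""
    chip_roundtrip = 2 * CHIP_PER_DIRECTION_US            # 1.4 msec
    converter_roundtrip = 2 * CONVERTER_PER_DIRECTION_US  # 1.2 msec
    return chip_roundtrip + converter_roundtrip + FIREWIRE_TO_MEMORY_US


print(hardware_floor_us() / 1000, "msec")
```

Anything the OS, drivers, and ASIO buffering add comes on top of this floor, which is the quoted post's point.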

And that is not the end of the story; every piece of audio software that works with plug-ins, such as the so-called DAW (the digital version of the old recording studio), musicians' performance software, DJ software, composing tools, etc., routinely introduces additional latencies. Increased, yes, if frequent visits to the hard drive/SSD are required.

The options for pro audio users are only two: either allow for high latencies (when <10ms of summed latency is very difficult to achieve) or get audio glitches, which in other words is audible and obviously recordable audio corruption.

Bear in mind that >5ms of audio latency is already audible and on some occasions unbearable for performers and audio people.

In the global view, SSDs have meant an advancement in this matter, but cannot solve the overall audio latency problem. Another advancement is the lower total latency Thunderbolt brings as compared to USB or even the more professional FireWire connector. There are a few brands pioneering this switch.

However, the processing power needs of audio are only starting to be addressed and are thus far from satisfied. Without going into detail, let me share this picture: audio users tend to have a central piece of software (for studio, performance/composing, DJing, live mixing, etc.), typically a DAW, which is akin to a stream of multiple simultaneous runs of audio, from 4 tracks to over 64, and each of them may pass through different additional software (plug-ins, e.g. equalizers, reverbs, limiters, effects, and a long, ever-increasing etc.).

So far audio users have had to limit the number of those plugins applied or the count of tracks utilized, or even the basic DAW functions applied, despite the fact of using state of the art PCs.

These simple audio needs still remain elusive in related PC tech discussions.

TheWrongChristian - Tuesday, September 23, 2014 - link

To quote: "(aka streaming music samples for composition)"

So, I was basing my assumptions on your original post. I didn't assume it was a single music stream. A single 16-bit, 96 kHz, stereo sample is what, <400KB/s? 40MB/s leaves you lots of bandwidth for many, many tracks, more tracks (100 perhaps?) than *you* could handle in realtime. And if you're not mixing in realtime, then isn't it a moot point?
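The arithmetic behind that estimate can be checked with a short Python sketch. It assumes uncompressed PCM audio and decimal megabytes (40 MB/s = 40,000,000 bytes/s); the function and variable names are made up for illustration.

```python
def pcm_bytes_per_second(bit_depth, sample_rate_hz, channels):
    """Data rate of one uncompressed PCM stream, in bytes per second."""
    return (bit_depth // 8) * sample_rate_hz * channels


# One 16-bit, 96 kHz, stereo stream.
per_stream = pcm_bytes_per_second(16, 96_000, 2)

# Degraded sequential read speed discussed in the thread (decimal MB assumed).
degraded_read = 40 * 1000 * 1000

# How many such streams fit into that bandwidth.
max_streams = degraded_read // per_stream

print(per_stream, "bytes/s per stream")
print(max_streams, "simultaneous streams at 40 MB/s")
```

This only covers raw sequential bandwidth. It says nothing about the cost of many small random reads, which is the case the composers in this thread describe.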

There's been no indication that this bug has affected latency in any measurable way.

I'd be interested to know if you're already hit by the bug, but simply haven't noticed. Do a benchmark dump of all your small samples, and see what sort of bandwidth you get, then copy all the samples to a new directory, and repeat the test to see if it improves. If so, and you've not otherwise noticed any problems, then the problem simply doesn't affect you.
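A minimal sketch of that test in Python, assuming the samples live in ordinary files under one directory. The function name and the example paths are hypothetical, and this is a rough check rather than a proper benchmark tool.

```python
import os
import time


def read_throughput(directory, chunk_size=1024 * 1024):
    """Read every file under `directory` once.

    Returns (total_bytes, elapsed_seconds).
    """
    total_bytes = 0
    start = time.perf_counter()
    for root, _dirs, files in os.walk(directory):
        for name in files:
            with open(os.path.join(root, name), "rb") as f:
                while True:
                    chunk = f.read(chunk_size)
                    if not chunk:
                        break
                    total_bytes += len(chunk)
    elapsed = time.perf_counter() - start
    return total_bytes, elapsed


# Example usage (hypothetical paths):
#   total, seconds = read_throughput("D:/samples_old")
#   print(total / 1e6 / seconds, "MB/s")
#   ...copy the samples to a new directory, then run it again on the copy.
```

One caveat: files that were just copied or recently read may be served from the operating system's cache in RAM rather than from the SSD, so a reboot between the copy and the second measurement gives a fairer comparison.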

Sorry if the tone came across as disrespectful, however.

composer1 - Wednesday, September 24, 2014 - link

I have to agree, there is a general ignorance among the IT crowd about what is involved in a modern computer-based music composition studio. Never mind hundreds of samples of audio, we are talking THOUSANDS at once through hundreds of tracks, especially if you are writing for full virtual orchestra (as most film composers are). Pile on top of this the insane deadlines typical in audio post-production and you can start to see why composers need massive amounts of computing power. Not only do we have to be able to recall within minutes a session that uses 48+GB of RAM, we also have to be able to play our instruments in real-time with zero audio artifacts. Even with two PCs linked together in a master-slave configuration, each with six-core 4.2 GHz i7s, 128 GB of RAM, and all SATA 3 SSD drives, I still come up against the limit of what my studio is able to do (usually the bottleneck is the random read speed). That's why composers like Hans Zimmer have *farms* of computers and a team of tech people at the ready to take care of them. Anything more than 50ms of total latency, from the time I play a note on my keyboard to the time I hear a note, is unacceptable.

Nexing - Tuesday, September 30, 2014 - link

And that is high up the ladder. Whereas a simple DJ with the best laptop out there will add a plug-in and try to sync an external instrument... under 5ms (in order to not have conflicting beats on air) and the regular DJ software will crash when a linear phase plug-in is selected.

The performance is simply not there yet.

iamezza - Friday, September 19, 2014 - link

I haven't heard of or experienced this problem on the original 840 (non-EVO), so it seems unlikely that it is a problem with the TLC NAND itself. It seems to just be a problem or bug in the EVO firmware.

hojnikb - Saturday, September 20, 2014 - link

There are reports of slowdown even on the 840 basic, so it's definitely something with TLC.