The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Crysis: Warhead

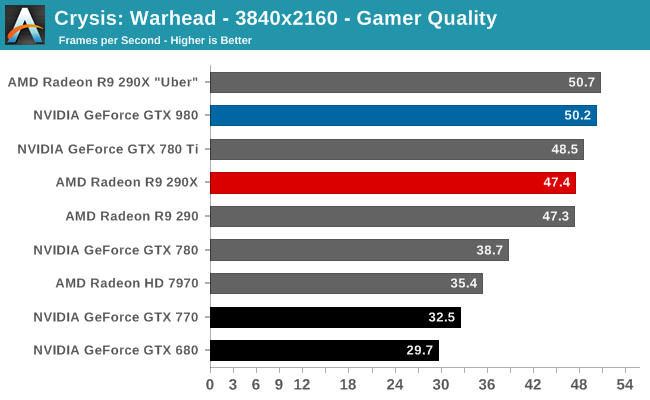

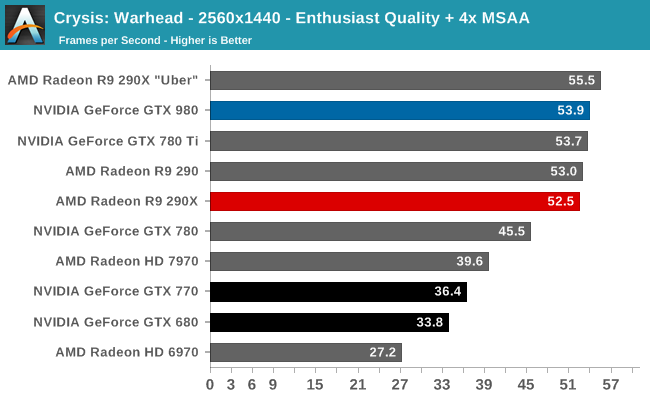

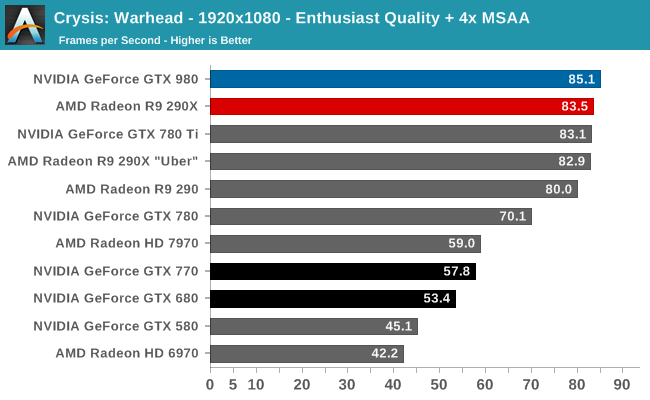

Up next is our legacy title for 2014, Crysis: Warhead. The stand-alone expansion to 2007’s Crysis, Crysis: Warhead is over 5 years old yet can still beat most systems down. Crysis was intended to be future-looking as far as performance and visual quality go, and it has clearly achieved that. We’ve only now reached the point where single-GPU cards can hit 60fps at 1920 with 4xAA, never mind 2560 and beyond.

At the launch of the GTX 680, Crysis: Warhead was rather punishing of the GTX 680’s decreased memory bandwidth versus GTX 580. The GTX 680 was faster than the GTX 580, but the gains weren’t as great as what we saw elsewhere. For this reason, the fact that the GTX 980 can hold a 60% lead over the GTX 680 is particularly important: it means that NVIDIA’s 3rd generation delta color compression is working and working well. This has allowed NVIDIA to overcome quite a bit of memory bandwidth bottlenecking in this game and push performance higher.

That said, since GTX 780 Ti has a full 50% more memory bandwidth, it’s telling that GTX 780 Ti and GTX 980 are virtually tied in this benchmark. Crysis: Warhead will gladly still take what memory bandwidth it can get from NVIDIA cards.
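For readers who want to check the bandwidth figures behind that comparison, here is a minimal sketch of the arithmetic. It assumes the usual formula for theoretical peak bandwidth (bus width in bytes times per-pin data rate) and uses rounded reference-card specifications that are not stated on this page; the function and table names are purely illustrative. Raw bandwidth also says nothing about compression, which is exactly why the GTX 980 can keep up here.

```python
# Theoretical peak memory bandwidth = (bus width in bits / 8) * data rate in Gbps.
# Bus widths and data rates are rounded reference-card specs (an assumption,
# not figures taken from this page). Color compression is not modeled.

def peak_bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Return theoretical peak memory bandwidth in GB/s."""
    return bus_width_bits / 8 * data_rate_gbps

# name -> (memory bus width in bits, effective per-pin data rate in Gbps)
CARDS = {
    "GTX 680": (256, 6.0),
    "GTX 780 Ti": (384, 7.0),
    "GTX 980": (256, 7.0),
    "R9 290X": (512, 5.0),
}

def bandwidth_table():
    """Map each card name to its theoretical peak bandwidth in GB/s."""
    return {name: peak_bandwidth_gbs(*spec) for name, spec in CARDS.items()}

if __name__ == "__main__":
    table = bandwidth_table()
    base = table["GTX 980"]
    for name, bw in table.items():
        # Show each card's bandwidth relative to the GTX 980.
        print(f"{name:<11} {bw:6.1f} GB/s  ({bw / base:.2f}x GTX 980)")
```

Under these assumed specs the GTX 780 Ti comes out at 1.5 times the GTX 980's raw bandwidth, which matches the "full 50% more" figure above.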

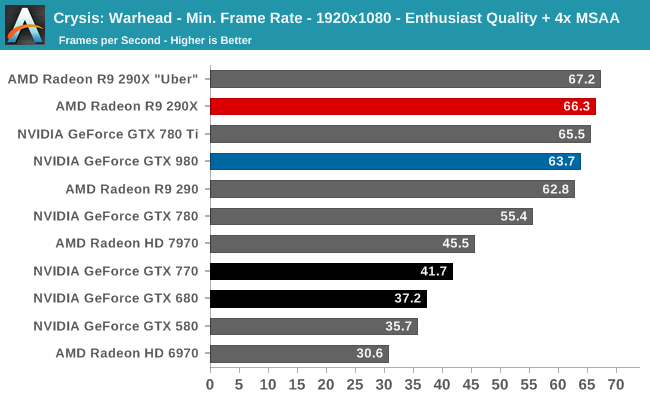

Otherwise against AMD cards this is the other game where GTX 980 can’t cleanly defeat R9 290XU. These cards are virtually tied, with AMD edging out NVIDIA in two of three tests. Given their differing architectures I’m hesitant to say this is a memory bandwidth factor as well, but if it were then R9 290XU has a very big memory bandwidth advantage going into this.

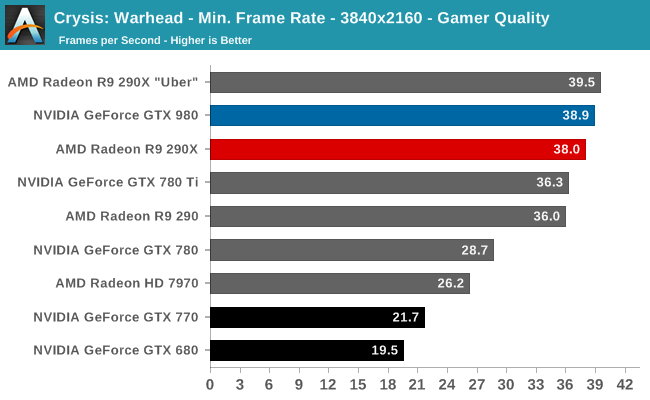

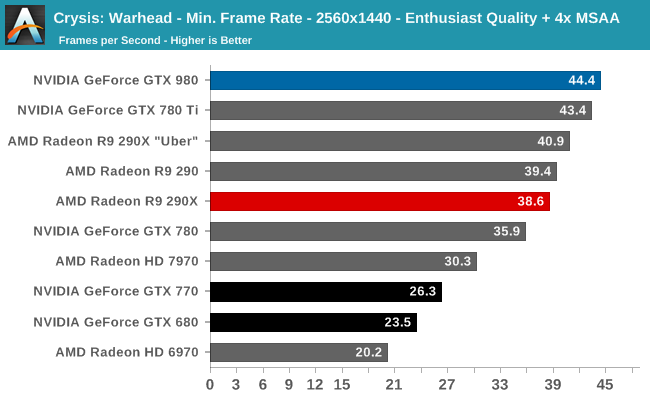

When it comes to minimum framerates the story is much the same, with the GTX 980 and AMD trading places. Though it’s interesting to note that the GTX 980 is doing rather well against the GTX 680 here; that memory bandwidth advantage would appear to really be paying off with minimum framerates.

274 Comments

Frenetic Pony - Friday, September 19, 2014 - link

This is the most likely thing to happen, as the transition to 14nm takes place for intel over the next 6 months those 22nm fabs will sit empty. They could sell capacity at a similar process to TSMC's latest while keeping their advantage at the same time.

nlasky - Friday, September 19, 2014 - link

Intel uses the same Fabs to produce 14nm as it does to produce 22nm

lefty2 - Friday, September 19, 2014 - link

I can see Nvidia switching to Intel's 14nm, however Intel charges a lot more than TSMC for its foundry services (because they want to maintain their high margins). That would mean it's only economical for the high end cards

SeanJ76 - Friday, September 19, 2014 - link

What a joke!!!! 980GTX doesn't even beat the previous year's 780ti??? LOL!! Think I'll hold on to my 770 SC ACX Sli that EVGA just sent me for free!!

Margalus - Friday, September 19, 2014 - link

uhh, what review were you looking at? or are you dyslexic and mixed up the results between the two cards?

eanazag - Friday, September 19, 2014 - link

Nvidia would get twice as many GPUs per wafer on a 14nm process than 28nm. Maxwell at 14nm would blow Intel integrated and AMD out of the water in performance and power usage. That simply isn't the reality. Samsung has better than 28nm processes also. This type of partnership would work well for Nvidia and AMD to partner with Samsung on their fabs. It makes more sense than Intel because Intel views Nvidia as a threat and competitor. There are reasons GPUs are still on 28nm and it is beyond process availability.

astroidea - Friday, September 19, 2014 - link

They'd actually get four times more since you have to consider the squared area. 14^2*4=28^2

emn13 - Saturday, September 20, 2014 - link

Unfortunately, that's not how it works. A 14nm process isn't simply a 28nm process scaled by 0.5; different parts are scaled differently, and so the overall die area savings aren't that simple to compute. In a sense, the concept of a "14nm" process is almost a bit of a marketing term, since various components may still be much larger than 14nm. And of course, the same holds for TSMC's 28nm process... so a true comparison would require more knowledge than you or I have, I'm sure :-) - I'm not sure if intel even releases the precise technical details of how things are scaled in the first place.

bernstein - Friday, September 19, 2014 - link

no because intel is using their 22nm for haswell parts... the cpu transition ends in a year with the broadwell xeon-ep... at which point almost all the fabs will either be upgraded or upgrading to 14nm and the rest used to produce chipsets and other secondary dies

nlasky - Saturday, September 20, 2014 - link

yes but they use the same fabs for both processes