The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Battlefield 4

The latest addition to our benchmark suite, and our current major multiplayer action game, is Battlefield 4, DICE’s 2013 multiplayer military shooter. After a rocky start, Battlefield 4 has finally reached a point where it’s stable enough for benchmark use, giving us the ability to profile one of the most popular and strenuous shooters out there. These benchmarks are from single player mode, and based on our experience our rule of thumb is that multiplayer framerates will dip to half our single player framerates, which means a card needs to average at least 60fps here if it’s to hold up in multiplayer.
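As a rough illustration of that rule of thumb, the sketch below halves a single player average to estimate multiplayer performance. The function names and the `dip_factor` parameter are hypothetical, and the implied multiplayer floor of about 30fps (60 × 0.5) is derived from the rule rather than stated in the text.

```python
def estimated_multiplayer_fps(single_player_fps: float, dip_factor: float = 0.5) -> float:
    """Estimate multiplayer fps, assuming it dips to half the single player average."""
    return single_player_fps * dip_factor


def holds_up_in_multiplayer(single_player_fps: float,
                            required_single_player_fps: float = 60.0) -> bool:
    """Apply the 60fps single player bar used as the multiplayer headroom target."""
    return single_player_fps >= required_single_player_fps


if __name__ == "__main__":
    # Illustrative inputs only; these are not measured results from the charts.
    for fps in (45.0, 60.0, 75.0):
        print(fps, estimated_multiplayer_fps(fps), holds_up_in_multiplayer(fps))
```

The halving factor is kept as a parameter because it is an experience-based estimate, not a measured constant.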

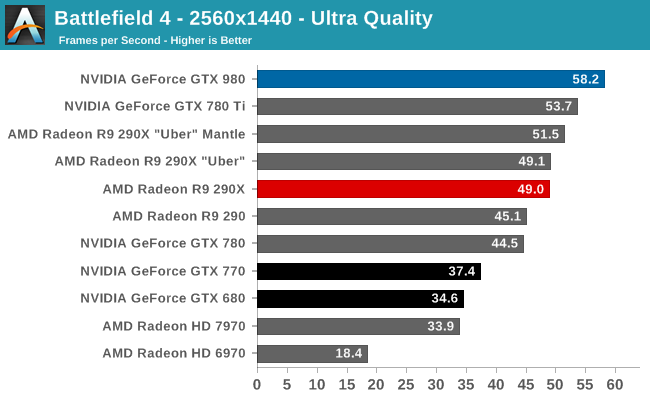

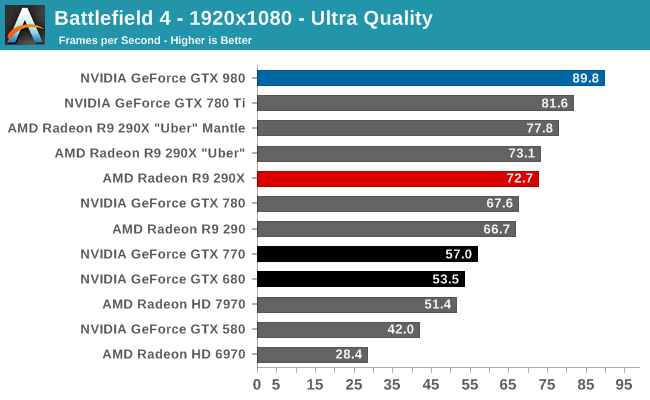

Battlefield 4 is one of our tougher games, especially with the bar set at 60fps to give us enough headroom for multiplayer performance. To that end the GTX 980 turns in another solid performance, though the dream of averaging 60fps at 1440p Ultra is going to have to wait just a bit longer.

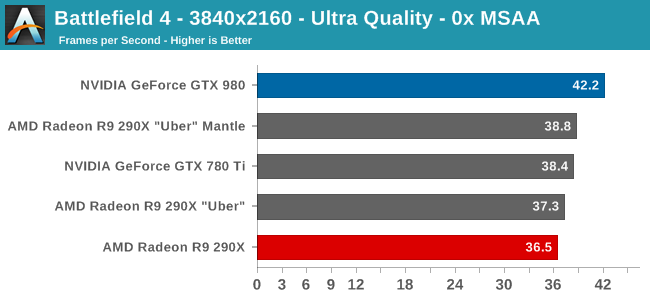

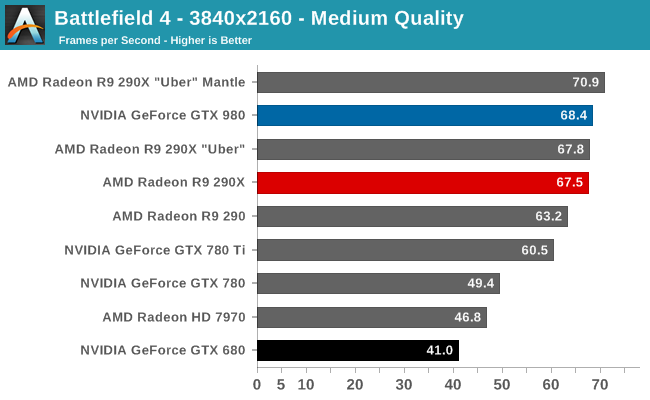

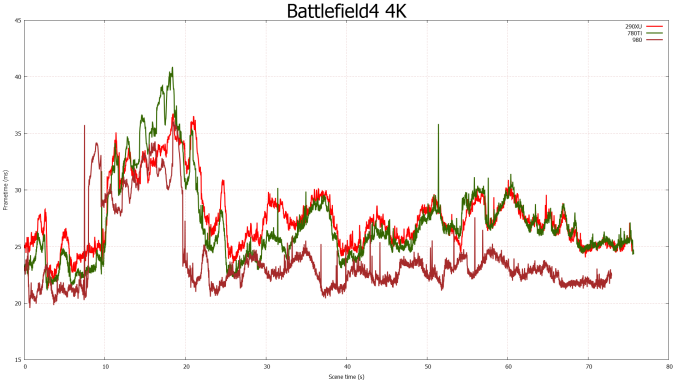

Overall on a competitive basis the GTX 980 looks very strong. It improves on the GTX 780 Ti by 8-13%, on the GTX 780 by 30% or more, and on the GTX 680 by 66%. Similarly it fares well against AMD’s cards – even with their Mantle performance advantage – with the exception of one case: 4K at Medium quality. With maximum quality settings, the GTX 980 can outperform AMD’s best by around 15% at all resolutions. But at 4K Medium, where the shader overhead is lower, the R9 290XU gets to pull ahead thanks to Mantle. At this point NVIDIA is losing by just 4%, but it goes to show how close the race between these two cards is going to be at times and why AMD is never too far behind NVIDIA in several of these games.

In any case for Ultra quality you’re looking at the GTX 980 being enough for 1080p and even 1440p if you flex the 60fps rule a bit. 4K at these settings though is going to be the domain of multi-GPU setups.

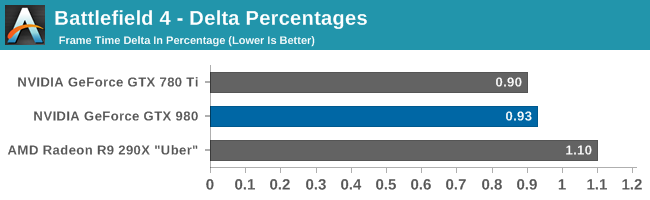

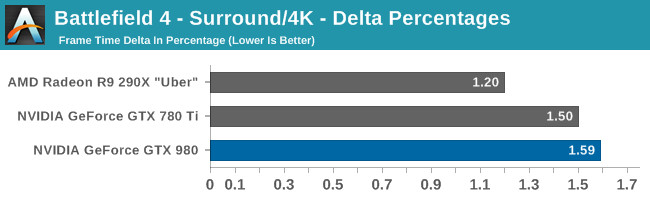

Meanwhile delta percentage performance is extremely strong here. Everyone, including the GTX 980, is well below 3%.
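For readers unfamiliar with the metric, here is a minimal sketch of how a delta percentage could be computed from a frame time log. It assumes the metric is the average absolute difference between consecutive frame times divided by the average frame time; that definition is an assumption, since this page does not spell it out. The function name and sample values are hypothetical.

```python
from typing import Sequence


def delta_percentage(frame_times_ms: Sequence[float]) -> float:
    """Average consecutive frame time difference as a percentage of the average frame time."""
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times to compute a delta")
    # Absolute change from each frame to the next.
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    mean_delta = sum(deltas) / len(deltas)
    mean_frame_time = sum(frame_times_ms) / len(frame_times_ms)
    return 100.0 * mean_delta / mean_frame_time


if __name__ == "__main__":
    # Hypothetical frame times in milliseconds, not data from this review.
    print(delta_percentage([16.6, 16.8, 16.5, 16.7, 16.6]))
```

Under this definition a perfectly even run of frame times scores 0%, and larger frame-to-frame swings push the figure up.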

274 Comments

garadante - Sunday, September 21, 2014 - link

What might be interesting is doing a comparison of video cards for a specific framerate target to (ideally, perhaps it wouldn't actually work like this?) standardize the CPU usage and thus CPU power usage across greatly differing cards. And then measure the power consumed by each card. In this way, couldn't you get a better example of

garadante - Sunday, September 21, 2014 - link

Whoops, hit tab twice and it somehow posted my comment. Continued: couldn't you get a better example of the power efficiency for a particular card and then meaningful comparisons between different cards? I see lots of people mentioning how the 980 seems to be drawing far more watts than its rated TDP (and I'd really like someone credible to come in and state how heat dissipated and energy consumed are related. I swear they're the exact same number as any energy consumed by transistors would, after everything, be released as heat, but many people disagree here in the comments and I'd like a final say). Nvidia can slap whatever TDP they want on it and it can be justified by some marketing mumbo jumbo. Intel uses their SDPs, Nvidia using a 165 watt TDP seems highly suspect. And please, please use a nonreference 290X in your reviews, at least for a comparison standpoint. Hasn't it been proven that having cooling that isn't garbage and runs the GPU closer to high 60s/low 70s can lower power consumption (due to leakage?) something on the order of 20+ watts with the 290X? Yes there's justification in using reference products but let's face it, the only people who buy reference 290s/290Xs were either launch buyers or people who don't know better (there's the blower argument but really, better case exhaust fans and nonreference cooling destroys that argument).

So basically I want to see real, meaningful comparisons of efficiencies for different cards at some specific framerate target to standardize CPU usage. Perhaps even monitoring CPU usage over the course of the test and reporting average, minimum, peak usage? Even using monitoring software to measure CPU power consumption in watts (as I'm fairly sure there are reasonably accurate ways of doing this already, as I know CoreTemp reports it as it's probably just voltage*amperage, but correct me if I'm wrong) and again reporting average, minimum, peak usage would be handy. It would be nice to see if Maxwell is really twice as energy efficient as GCN1.1 or if it's actually much closer. If it's much closer all these naysayers prophesizing AMD's doom are in for a rude awakening. I wouldn't put it past Nvidia to use marketing language to portray artificially low TDPs.
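The comparison proposed above could be sketched roughly as follows: cap every card at the same framerate target, log GPU and CPU power over the run, then report average, minimum, and peak draw plus frames delivered per joule. Everything here is hypothetical, including the `PowerLog` type, the helper names, and the sample wattages; none of it is measured data or an existing tool's API.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Sequence


@dataclass
class PowerLog:
    card: str                    # label for the card under test
    target_fps: float            # framerate cap shared by every card in the comparison
    gpu_watts: Sequence[float]   # sampled GPU power draw over the run
    cpu_watts: Sequence[float]   # sampled CPU power draw over the run


def watts_from_sensor(volts: float, amps: float) -> float:
    """Electrical power as voltage times current, as the comment suggests."""
    return volts * amps


def summarize(samples: Sequence[float]) -> Dict[str, float]:
    """Average, minimum, and peak of a series of power samples."""
    return {"average": mean(samples), "minimum": min(samples), "peak": max(samples)}


def frames_per_joule(log: PowerLog) -> float:
    """Frames per second divided by joules per second gives frames per joule."""
    return log.target_fps / mean(log.gpu_watts)


if __name__ == "__main__":
    # Made-up samples purely to show the shape of the report.
    log = PowerLog("card_a", 60.0, [150.0, 170.0, 160.0], [50.0, 60.0, 70.0])
    print(log.card, summarize(log.gpu_watts), summarize(log.cpu_watts))
    print("frames per joule:", frames_per_joule(log))
```

Holding the framerate constant means the efficiency figure depends only on average power, which is the point of the proposal; whether CPU load really equalizes across cards at a fixed cap would still need to be checked from the CPU summary.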

silverblue - Sunday, September 21, 2014 - link

Apparently, compute tasks push the power usage way up; stick with gaming and it shouldn't.

fm123 - Friday, September 26, 2014 - link

Don't confuse TDP with power consumption, they are not the same thing. TDP is for designing the thermal solution to maintain the chip temperature. If there is more headroom in the chip temperature, then the system can operate faster, consuming more power.

"Intel defines TDP as follows: The upper point of the thermal profile consists of the Thermal Design Power (TDP) and the associated Tcase value. Thermal Design Power (TDP) should be used for processor thermal solution design targets. TDP is not the maximum power that the processor can dissipate. TDP is measured at maximum TCASE"

https://www.google.com/url?sa=t&source=web&...

NeatOman - Sunday, September 21, 2014 - link

I just realized that the GTX 980 has a TDP of 165 watts, my Corsair CX430 watt PSU is almost overkill!, that's nuts. That's even enough room to give the whole system a very good stable overclock. Right now I have a pair of HD 7850's @ stock speed and a FX-8320 @ 4.5GHz, good thing the Corsair puts out over 430 watts perfectly clean :)

Nfarce - Sunday, September 21, 2014 - link

While a good power supply, you are leaving yourself little headroom with 430W. I'm surprised you are getting away with it with two 7850s and not experiencing system crashes.

ET - Sunday, September 21, 2014 - link

The 980 is an impressive feat of engineering. Fewer transistors, fewer compute units, less power and better performance... NVIDIA has done a good job here. I hope that AMD has some good improvements of its own under its sleeve.

garadante - Sunday, September 21, 2014 - link

One thing to remember is they probably save a -ton- of die area/transistors by giving it only what, 1/32 double precision rate? I wonder how competitive in terms of transistors/area an AMD GPU would be if they gutted double precision compute and went for a narrower, faster memory controller.

Farwalker2u - Sunday, September 21, 2014 - link

I am looking forward to your review of the GTX 970 once you have a compatible sample in hand.

I would like to see the results of the Folding @ Home benchmarks. It seems that this site is the only one that consistently uses that benchmark in its reviews.

As a "Folder" I'd like to see any indication that the GTX 970, at a cost of $330 and drawing fewer watts than a GTX 780, may outproduce both the 780 ($420 - $470) and the 780Ti ($600). I will be studying the Folding @ Home: Explicit, Single Precision chart which contains the test results of the GTX 970.

Wolfpup - Monday, September 22, 2014 - link

Wow, this is impressive stuff. 10% more performance from 2/3 the power? That'll be great for desktops, but of course even better for notebooks. Very impressed they could pull off that kind of leap on the same process!

They've already managed to significantly bump up the top end mobile part from GTX 680 -> 880, but within a year or so I bet they can go quite a bit higher still.

Oh well, it was nice having a top of the line mobile GPU for a while LOL

If 28nm hit in 2012 though, doesn't that make 2015 its third year? At least 28nm seems to be a really good process, vs all the issues with 90/65nm, etc., since we're stuck on it so long.

Isn't this Moore's Law hitting the constraints of physical reality though? We're taking longer and longer to get to progressively smaller shrinks in die size, it seems like...

Oh well, 22nm's been great with Intel and 28's been great with everyone else!