The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Company of Heroes 2

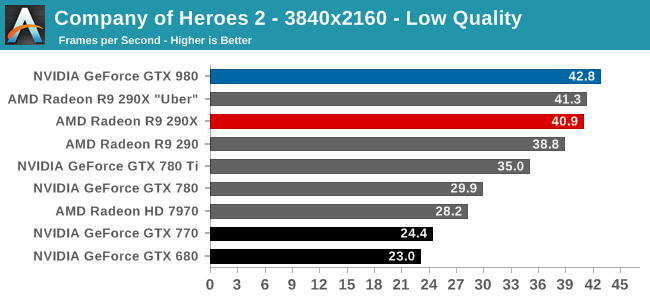

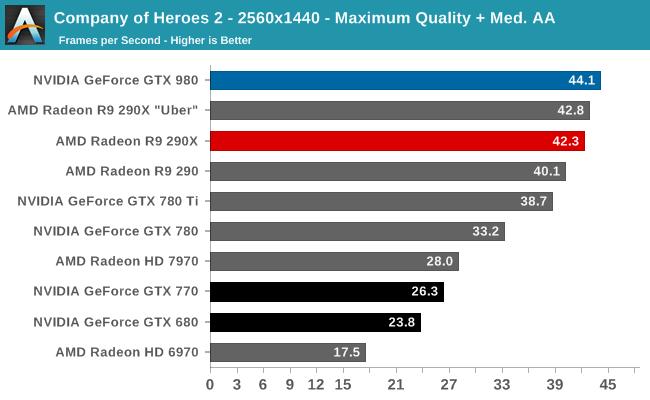

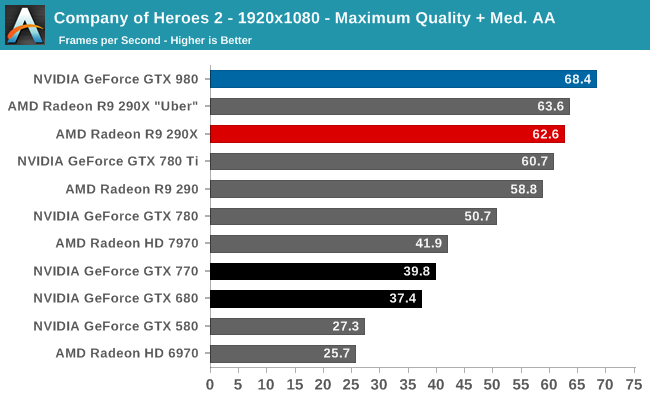

The second benchmark in our suite is Relic Games’ Company of Heroes 2, the developer’s World War II Eastern Front themed RTS. For Company of Heroes 2, Relic was kind enough to put together a very strenuous built-in benchmark captured from one of the most demanding, snow-bound maps in the game, giving us a great look at CoH2’s performance at its worst. Consequently, if a card can do well here, it should have no trouble throughout the rest of the game.

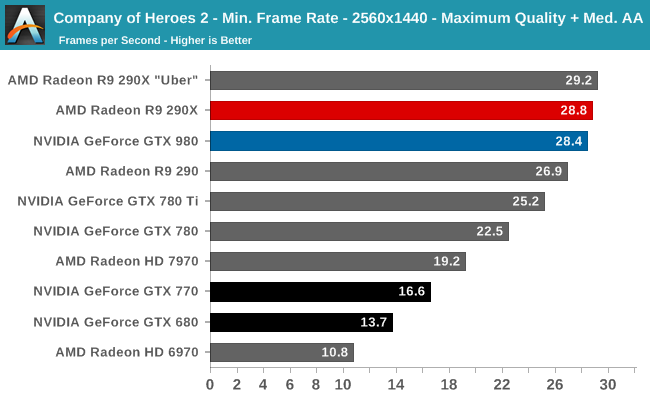

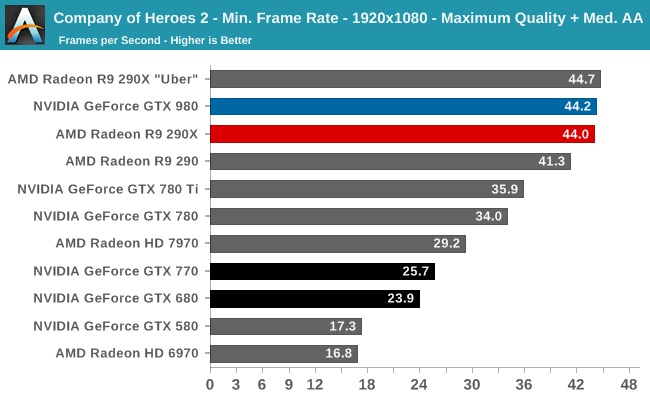

Since CoH2 is not AFR compatible, the best performance you’re going to get out of it is whatever you can get out of a single GPU, in which case the GTX 980 is the fastest card out there for this game. AMD’s R9 290XU does hold up well though; the GTX 980 may have a lead, but AMD is never more than a few percent behind at 4K and 1440p. The lead over the GTX 780 Ti, on the other hand, is much more substantial at 13% to 22%. So NVIDIA has finally taken this game back from AMD, as it were.

Elsewhere, against the GTX 680 this is another very good performance for the GTX 980, with a performance advantage of over 80%.

On an absolute basis, at these settings you’re looking at an average framerate in the 40s, which for an RTS will be a solid performance.

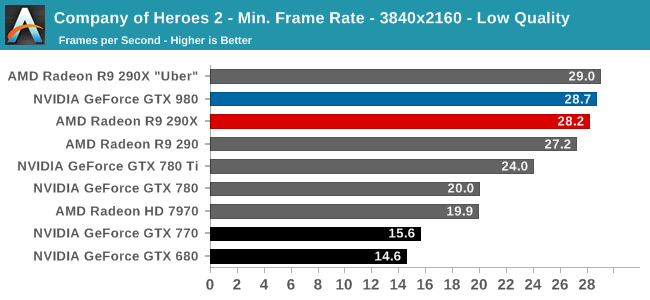

However, when it comes to minimum framerates, the GTX 980 can’t quite stay on top. In every case it is edged out by the R9 290XU by a fraction of a frame per second. AMD seems to weather the hardest drops in framerates just a bit better than NVIDIA does, though neither card can quite hold the line at 30fps at 1440p and 4K.

274 Comments

nathanddrews - Friday, September 19, 2014 - link

http://www.pcper.com/files/review/2014-09-18/power...

kron123456789 - Friday, September 19, 2014 - link

Different tests, different results. That's nothing new.

kron123456789 - Friday, September 19, 2014 - link

But, i still think that Nvidia isn't understated TDP of the 980 and 970.

Friendly0Fire - Friday, September 19, 2014 - link

Misleading. If a card pumps out more frames (which the 980 most certainly does), it's going to drive up requirements for every other part of the system, AND it's going to obviously draw its maximum possible power. If you were to lock the framerate to a fixed value that all GPUs could reach the power savings would be more evident.

Also, TDP is the heat generation, as has been said earlier here, which is correlated but not equal to power draw. Heat is waste energy, so the less heat you put out the more energy you actually use to work. All this means is that (surprise surprise) the Maxwell 2 cards are a lot more efficient than AMD's GCN.

shtldr - Wednesday, September 24, 2014 - link

"TDP is the heat generation, as has been said earlier here, which is correlated but not equal to power draw."

The GPU is a system which consumes energy. Since the GPU does not use that energy to create mass (materialization) or chemical bonds (battery), where the energy goes is easily observed from the outside.

1) waste heat

2) moving air mass through the heatsink (fan)

3) signalling over connects (PCIe and monitor cable)

4) EM waves

5) degradation/burning out of card's components (GPU silicon damage, fan bearing wear etc.)

And that's it. The 1) is very dominant compared to the rest. There's no "hidden" work being done by the card. It would be against the law of conservation of energy (which is still valid, as far as I know).

Frenetic Pony - Friday, September 19, 2014 - link

That's a misunderstanding of what TDP has to do with desktop cards. Now for mobile stuff, that's great. But the bottleneck for "Maxwell 2" isn't in TDP, it's in clockspeeds. Meaning the efficiency argument is useless if the end user doesn't care.

Now, for certain fields the end user cares very much. Miners have apparently all moved onto ASIC stuff, but for other compute workloads any end user is going to choose NVIDIA currently, just to save on their electricity bill. For the consumer end user, TDP doesn't matter nearly as much unless you're really "Green" conscious or something. In that case AMD's 1 year old 290x competes on price for performance, and whatever AMD's update is it will do better.

It's hardly a death knell of AMD, not the best thing considering they were just outclassed for corporate type compute work. But for your typical consumer end user they aren't going to see any difference unless they're a fanboy one way or another, and why bother going after a strongly biased market like that?

pendantry - Friday, September 19, 2014 - link

While it's a fair argument that unless you're environmentally inclined the energy savings from lower TDP don't matter, I'd say a lot more people do care about reduced noise and heat. People generally might not care about saving $30 a year on their electricity bill, but why would you choose a hotter noisier component when there's no price or performance benefit to that choice?

AMD GPUs now mirror the CPU situation where you can get close to performance parity if you're willing to accept a fairly large (~100W) power increase. Without heavy price incentives it's hard to convince the consumer to tolerate what is jokingly termed the "space heater" or "wind turbine" inconvenience that the AMD product presents.
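As a rough check on how the "$30 a year" and "~100W" figures in the comment above relate, here is a back-of-envelope sketch. The usage hours and the electricity price are illustrative assumptions, not numbers from the review or the comment, and the function names are hypothetical.

```python
def annual_cost(extra_watts: float, hours_per_day: float, price_per_kwh: float) -> float:
    """Yearly cost of drawing `extra_watts` more power for `hours_per_day` every day."""
    extra_kwh_per_year = extra_watts / 1000 * hours_per_day * 365
    return extra_kwh_per_year * price_per_kwh


def hours_for_cost(target_cost: float, extra_watts: float, price_per_kwh: float) -> float:
    """Daily hours under load at which the extra draw costs `target_cost` per year."""
    return target_cost / (extra_watts / 1000 * 365 * price_per_kwh)


if __name__ == "__main__":
    # Assumed values: 100 W extra draw, 4 hours of gaming a day, $0.12 per kWh.
    print(f"{annual_cost(100, 4, 0.12):.2f} per year")
    # Daily load hours needed for the difference to reach $30 a year at that price.
    print(f"{hours_for_cost(30, 100, 0.12):.1f} hours per day")
```

The result scales linearly with the price per kWh, so a reader in a high-tax electricity market would see a proportionally larger figure for the same usage.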

Laststop311 - Friday, September 19, 2014 - link

actually the gpu's from amd do not mirror the cpu situation at all. amd's fx 9xxx with the huge tdp and all gets so outperformed by even the i7-4790k on almost everything and the 8 core i7-5960x obliterates it in everything, the performance of its cpu's are NOT close to intels performance even with 100 extra watts. At least with the GPU's the performance is close to nvidias even if the power usage is not.

TLDR amd's gpu situation does not mirror its cpu situation. cpu situation is far worse.

Laststop311 - Friday, September 19, 2014 - link

I as a consumer greatly care about the efficiency and tdp and heat and noise not just the performance. I do not like hearing my PC. I switched to all noctua fans, all ssd storage, and platinum rated psu that only turns on its fan over 500 watts load. The only noise coming from my PC is my radeon 5870 card basically. So the fact this GPU is super quiet means no matter what amd does performance wise if it cant keep up noise wise they lose a sale with me as i'm sure many others.

And im not a fanboy of either company i chose the 5870 over the gtx 480 when nvidia botched that card and made it a loud hot behemoth. And i'll just as quickly ditch amd for nvidia for the same reason.

Kvaern - Friday, September 19, 2014 - link

"For the consumer end user, TDP doesn't matter nearly as much unless you're really "Green""

Or live in a country where taxes make up 75% of your power bill