AMD Radeon R9 285 Review: Feat. Sapphire R9 285 Dual-X OC

by Ryan Smith on September 10, 2014 2:00 PM EST

Tonga’s Microarchitecture - What We’re Calling GCN 1.2

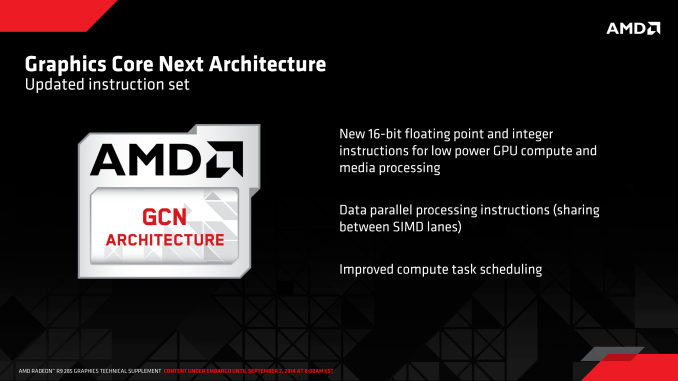

As we alluded to in our introduction, Tonga brings with it the next revision of AMD’s GCN architecture. This is the second such revision to the architecture, the last revision (GCN 1.1) having been rolled out in March of 2013 with the launch of the Bonaire-based Radeon HD 7790. In the case of Bonaire, AMD chose to keep the details of GCN 1.1 close to the chest, only going in-depth for the launch of the high-end Hawaii GPU later in the year. The launch of GCN 1.2, on the other hand, is going to see AMD meeting enthusiasts half-way: we aren’t getting Hawaii-level details on the architectural changes, but we are getting an itemized list of the new features (or at least the features AMD is willing to talk about) along with a short description of what each feature does. Consequently, while Tonga may be a lateral product from a performance standpoint, it is going to be very important to AMD’s future.

But before we begin, we do want to quickly remind everyone that the GCN 1.2 name, like GCN 1.1 before it, is unofficial. AMD does not publicly name these microarchitectures outside of development, preferring instead to treat the entire Radeon 200 series as relatively homogeneous and to call out feature differences where it makes sense. In lieu of an official name, and based on the iterative nature of these enhancements, we’re going to use GCN 1.2 to summarize the feature set.

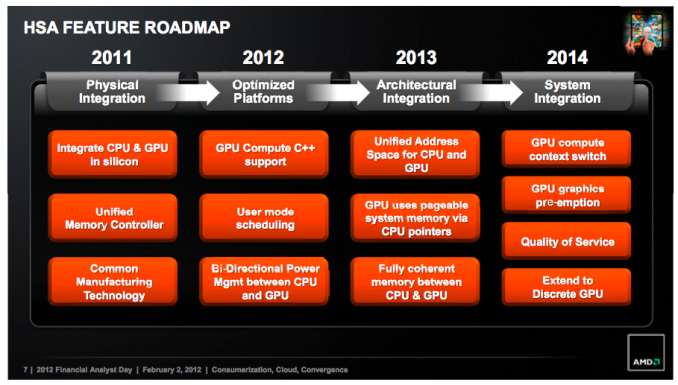

AMD's 2012 APU Feature Roadmap. AKA: A Brief Guide To GCN

To kick things off we’ll pull out this old chestnut one last time: AMD’s HSA feature roadmap from their 2012 financial analysts’ day. Given HSA’s tight dependence on GPUs, this roadmap has offered a useful high-level overview of some of the features each successive generation of AMD GPU architecture would bring with it, and with the launch of the GCN 1.2 architecture we have finally reached what we believe is the last step in AMD’s roadmap: System Integration.

It’s no surprise then that one of the first things we find on AMD’s list of features for the GCN 1.2 instruction set is “improved compute task scheduling”. One of AMD’s major goals for their post-Kaveri APU was to improve the performance of HSA through various forms of overhead reduction, including faster context switching (something GPUs have always been poor at) and even GPU pre-emption. All of this would fit under the umbrella of “improved compute task scheduling” in AMD’s roadmap, though to be clear, with AMD only meeting us half-way on the architecture side, they aren’t going into this level of detail just yet.

Meanwhile GCN 1.2’s other instruction set improvements are quite interesting. The entry for 16-bit FP and Integer operations is actually quite telling, as it includes a very important keyword: low power. Briefly, PC GPUs have been centered around 32-bit mathematical operations for a number of years now, ever since advances in desktop technology and transistor density eliminated the need for 16-bit/24-bit partial precision operations. All things considered, 32-bit operations are preferred from a quality standpoint as they are accurate enough for many compute tasks and virtually all graphics tasks, which is why PC GPUs were limited to (or at least optimized for) partial precision operations for only a relatively short period of time.
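To make the accuracy trade-off a bit more concrete, here is a small sketch that round-trips a few values through IEEE 754 half precision (binary16, the usual 16-bit FP format) and single precision (binary32) using Python's standard `struct` module, whose `e` and `f` format codes pack those two formats. It illustrates the number formats in general, not anything specific to GCN's hardware, and the helper names are our own.

```python
import struct

def to_fp16(x: float) -> float:
    """Round-trip x through IEEE 754 binary16 (half precision)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_fp32(x: float) -> float:
    """Round-trip x through IEEE 754 binary32 (single precision)."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

# binary16 keeps 11 significant bits, so above 2048 only every other integer exists.
print(to_fp16(2049.0), to_fp32(2049.0))  # 2048.0 2049.0

# Relative error when storing 0.1 in each format.
for name, rounded in (("fp16", to_fp16(0.1)), ("fp32", to_fp32(0.1))):
    print(name, rounded, abs(rounded - 0.1) / 0.1)
```

Roughly speaking, binary16 carries about three significant decimal digits against about seven for binary32, which is often enough for a pixel's color but too coarse for many compute workloads.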

However, 16-bit operations are still alive and well on the SoC (mobile) side. SoC GPUs are in many ways a 5-10 year old echo of PC GPUs in features and performance, while in other ways they’re outright unique. In the case of SoC GPUs there is an extreme sensitivity to power consumption that PCs have never had to contend with, so while SoC GPUs can use 32-bit operations, they will in some circumstances favor 16-bit operations for power efficiency purposes. Despite the accuracy limitations of lower precision, if a developer knows they don’t need the greater accuracy, then falling back to 16-bit means saving power and, depending on the architecture, also improving performance if multiple 16-bit operations can be scheduled alongside each other.
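One common way an architecture can schedule multiple 16-bit operations alongside each other is packed math: two binary16 values share one 32-bit register, and a single instruction operates on both halves. The sketch below emulates that general idea in Python. The function names are hypothetical, each sum is computed in double precision and then rounded back to binary16, and nothing here describes GCN 1.2's actual instruction encoding, which AMD's feature list does not cover.

```python
import struct
from typing import Tuple

def pack_half2(lo: float, hi: float) -> int:
    """Pack two binary16 values into one 32-bit word: lo in bits 0-15, hi in bits 16-31."""
    (lo_bits,) = struct.unpack("<H", struct.pack("<e", lo))
    (hi_bits,) = struct.unpack("<H", struct.pack("<e", hi))
    return (hi_bits << 16) | lo_bits

def unpack_half2(word: int) -> Tuple[float, float]:
    """Split a 32-bit word back into its two binary16 halves."""
    (lo,) = struct.unpack("<e", struct.pack("<H", word & 0xFFFF))
    (hi,) = struct.unpack("<e", struct.pack("<H", (word >> 16) & 0xFFFF))
    return lo, hi

def packed_add_f16(a: int, b: int) -> int:
    """Hypothetical packed add: one 'instruction' performs two independent 16-bit adds.
    Each sum is computed in double precision, then rounded to binary16 when repacked."""
    a_lo, a_hi = unpack_half2(a)
    b_lo, b_hi = unpack_half2(b)
    return pack_half2(a_lo + b_lo, a_hi + b_hi)

x = pack_half2(1.5, 2.0)
y = pack_half2(0.25, 3.0)
print(hex(x), unpack_half2(packed_add_f16(x, y)))  # 0x40003e00 (1.75, 5.0)
```

On real hardware the benefit would come from doing both adds in one issue slot and moving half as many bits through registers; a Python emulation of course gains neither, and only shows the data layout.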

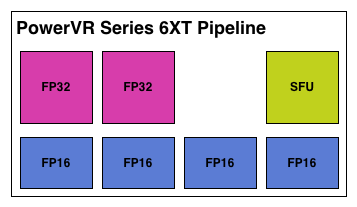

Imagination's PowerVR Series 6XT: An Example of An SoC GPU With FP16 Hardware

To that end, the fact that AMD is taking the time to focus on 16-bit operations within the GCN instruction set is interesting, but not unexpected. If AMD were to develop SoC-class processors and wanted to use their own GPUs, then natively supporting 16-bit operations would be a logical addition to the instruction set for such a product. The power savings would be helpful for getting GCN into even smaller form factors, and with so many other GPUs supporting special 16-bit execution modes, it would help make GCN competitive with those other products.

Finally, data parallel instructions are the feature we know the least about. SIMDs can already be described as data parallel (one instruction operating on multiple data elements in parallel), but obviously AMD intends to go past that. Our best guess is that AMD has found both a way and a need to have 2 SIMD lanes operate on the same piece of data, though why they would want to do this and what the benefits may be are not clear at this time.
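To illustrate the distinction being drawn here, the sketch below contrasts a conventional SIMD operation, where every lane works only on its own element, with a hypothetical cross-lane operation in which one lane reads data held by another lane. It then shows one well-known reason such cross-lane access can be useful: a Hillis-Steele parallel prefix sum completes in log2(N) steps. This is purely our own illustration of the guess above; the function names and lane counts are assumptions, not AMD's instructions.

```python
import operator
from typing import Callable, List

def simd_apply(op: Callable[[float, float], float], a: List[float], b: List[float]) -> List[float]:
    """Conventional SIMD: one operation, each lane touches only its own pair of elements."""
    return [op(x, y) for x, y in zip(a, b)]

def cross_lane_shift_add(lanes: List[float], shift: int) -> List[float]:
    """Hypothetical cross-lane op: lane i adds the value held by lane i - shift.
    Lanes with no source lane (i < shift) keep their own value."""
    return [v + (lanes[i - shift] if i >= shift else 0.0) for i, v in enumerate(lanes)]

def prefix_sum(lanes: List[float]) -> List[float]:
    """Hillis-Steele inclusive scan: ceil(log2(N)) cross-lane steps instead of N - 1 serial adds."""
    shift = 1
    while shift < len(lanes):
        lanes = cross_lane_shift_add(lanes, shift)
        shift *= 2
    return lanes

print(simd_apply(operator.mul, [1.0, 2.0, 3.0, 4.0], [10.0, 10.0, 10.0, 10.0]))
print(prefix_sum([1.0] * 16))  # 16 lanes: 4 steps (shifts of 1, 2, 4, 8)
```

The point of the comparison is that the first function never needs to see a neighbor's data, while the scan depends entirely on lanes exchanging values.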

86 Comments

chizow - Thursday, September 11, 2014

No issues with Boost once you slap a third party cooler and blow away rated TDP, sure :D

But just as I said, AMD's rated specs were bogus, in reality we see that:

1) the 285 is actually slower than the 280 it replaces, even in highly overclocked factory configurations (original point about theoretical performance, debunked)

2) the TDP advantages of the 285 go away, at 190W target TDP AMD trades performance for efficiency, just as I stated. Increasing performance through better cooling results in higher TDP, lower efficiency, to the point it is negligible compared to the 280.

It's obvious AMD wanted the 285 to look good on paper, saying hey look, it's only 190W TDP, when in actual shipping configurations (which also make it look better due to factory OCs and 3rd party coolers), it draws power closer to the 250W 280 and barely matches its performance levels.

In the end one has to wonder why AMD bothered. Sure it's cheaper for them to make, but this part is a downgrade for anyone who bought a Tahiti-based card in the last 3 years (yes, it's nearly 3 years old already!).

bwat47 - Thursday, September 11, 2014

I think the main reason for this card was to bring things up to par when it comes to features. The 280 (and 280X) were rebadged older high-end cards (7950, 7970), and while these offered great value when it came to raw performance, they are lacking features that the rest of the 200 series will support, such as FreeSync and TrueAudio, and this feature discrepancy was confusing to customers. It makes sense to introduce a new card that brings things up to par when it comes to features. I bet they will release a 285X to replace the 280X as well.

chizow - Thursday, September 11, 2014

Marginal features alone aren't enough to justify this card's cost, especially in the case of FreeSync which still isn't out of proof-of-concept phase, and TrueAudio, which is unofficially vaporware status.

If AMD released this card last year at Hawaii/Bonaire's launch at this price point OR released it nearly 12 months late now at a *REDUCED* price point, it would make more sense. But releasing it now at a significant premium (+20%, the 280 is selling for $220, the 285 for $260) compared to the nearly 3 year old ASIC it struggles to match makes no sense. There is no progress there, and I think the market will agree there.

If it isn't obvious now that this card can't compete in the market, it will become painfully obvious when Nvidia launches their new high-end Maxwell parts as expected next month. The 980 and 970 will drive down the price on the 780, 290/X and 770, but the real 285 killer will be the 960 which will most likely be priced in this $250-300 range while offering better than 770/280X performance.

Alexvrb - Thursday, September 11, 2014

You just can't admit to being wrong. It maintains boost fine - end of story. That's what I disagreed with you on in the first place. No boost issues - the 290 series had thermal problems. Slapping a different cooler doesn't raise TDP, it just removes obstacles towards reaching that TDP. With an inadequate cooler, you're getting temp-throttled before you ever reach rated TDP. Ask Ryan, he'll set you straight.

On top of this, depending on which model 285 you test, some of them eat significantly less power than an equivalent 280. Not all board partners did an equal job. Look at different reviews of different models and you'll see different results. Also, performance is better than I figured it would be, and in most cases it is slightly faster than 280. Which again, I never figured would happen and never claimed.

chizow - Friday, September 12, 2014

Who cares what you disagreed with? The point you took issue was a corollary to the point I was making, which turned out to be true about the theoreticals being misstated and inaccurate when basing any conclusion about the 285 being faster than the 280.

As we have seen:

1) The 285 barely reaches parity but in doing so, it requires a significant overclock which forces it to blow past its rated 190W TDP and actually draws closer to the 250W TDP of the 280.

2) It requires a 3rd party cooler similar to the one that was also necessary in keeping the 290/X temps in check in order to achieve its Boost clocks.

As for Ryan setting me straight, lmao, again, his temp tests and subtext already prove me to be correct:

"Note that even under FurMark, our worst case (and generally unrealistic) test, the card only falls by less than 20MHz to 900MHz sustained."

So it does indeed throttle down to 900MHz even with the cap taken off its 190W rated TDP and a more efficient cooler. *IF* it was limited to a 190W hard TDP target *OR* it was forced to use the stock reference cooler, it is highly likely it would indeed have problems maintaining its Boost, just as I stated. AMD's reference specs trade performance for efficiency, once performance is increased that efficiency is reduced and its TDP increases.

Alexvrb - Friday, September 12, 2014

Look, I get it, you're an Nvidia fanboy. But at least you admitted you were wrong, in your own way, finally. It sustains boost fine. Furmark makes a lot of cards throttle - including Maxwell! Whoops! Should we start saying that Maxwell can't hold boost because it throttles in Furmark? No, because that would be idiotic. I think Maxwell is a great design.

However, so is Tonga. Read THG's review of the 285. Not only does it slightly edge out the 280 on average performance, but it uses substantially less power. Like, 40W less. I'm not sure what Sapphire (the card reviewed here) is doing wrong exactly - the Gigabyte Windforce OC is fairly miserly and has similar clocks.

chizow - Saturday, September 13, 2014

LOL Nvidia fanboy, that's rich coming from the Captain of the AMD Turd-polishing Patrol. :D

I didn't admit I was wrong, because my statement to any non-idiot was never dependent on maintaining Boost in the first place, but I am glad to see not only is 285 generally slower than the 280 without significant overclocks, it still has trouble maintaining Boost despite higher TDP than the rated 190W and a better than reference cooler.

You could certainly say Maxwell doesn't hold boost because it throttles in Furmark, but that would prove once and for all you really have no clue what you are talking about since every Nvidia card they introduced since they invented Boost has no problems whatsoever hitting their rated Boost speeds even with the stock reference blower designs. The difference of course, is that Nvidia takes a conservative approach to their Boost ratings that they know all their cards can hit under all conditions, unlike AMD which takes the "good luck/cherry picked" approach (see: R290/290X launch debacle).

And finally about other reviews lol, for every review that says the 285 is better than the 280 in performance and power consumption, there is at least 1 more that echoes the sentiments of this one. The 285 barely reaches parity and doesn't consume meaningfully less power in doing so. But keep polishing that turd! This is an ASIC only a true AMD fanboy could love some 3 years after the launch of the chip it is set to replace.

chizow - Saturday, September 13, 2014

Oh and just to prove I can admit when I am wrong, you are right, Maxwell did throttle and fail to meet its Boost speeds for Furmark, but these are clearly artificially imposed driver limitations as Maxwell showed it can easily OC to 1300MHz and beyond:

http://www.anandtech.com/show/7764/the-nvidia-gefo...

http://www.anandtech.com/show/7854/nvidia-geforce-...

Regardless, any comparisons of this chip to Maxwell are laughable, Maxwell introduced same performance at nearly 50% reduction in TDP or inversely, nearly double the performance at the same TDP all at a significantly reduced price point on the same process node.

What does Tonga bring us? 95-105% of R9 280's performance at 90% TDP and 120% of the price almost 3 years later? Who would be happy with this level of progress?

Nvidia is set to introduce their performance midrange GM104-based cards next week, do you think ANYONE is going to draw parallels between those cards and Tonga? We already know what Maxwell is capable of and it set the bar extremely high, so if GTX 970 and 980 come anywhere close to those increases in performance and efficiency, this part is going to look even worse than it does now.

Alexvrb - Saturday, September 13, 2014

You were wrong about it being unable to hold boost, you claimed that GCN 1.1 can't hold boost despite clear evidence to the contrary. Silly. Then you were wrong about Maxwell and Furmark - though you kind of admitted you were wrong.

Regarding that being a "driver limitation" you can clock a GPU to the moon, and it's fine until it gets a heavy load. However most users won't even know they're being throttled. I had this same discussion YEARS ago with a Pentium 4 guy. You can overclock all you want - when you load it up heavy, it's a whole new game. In that case the user never noticed until I showed him his real clocks while running a game.

Tonga averages a few % higher performance, dumps less heat into your case, and uses less power. Aside from this Sapphire Dual X, most 285 cards seem to use quite a bit less power, run cool and quiet. With all that being said, I think the 280 and 290 series carry a much better value in AMD's lineup. I'm certainly not a fanboy, you're much closer to claiming that title. I've actually used mostly Nvidia cards over the years. I've also used graphics from 3dfx, PowerVR, and various integrated solutions. My favorite cards over the years were a trusty Kyro II and a GeForce 3 vanilla, which was passively cooled until I got ahold of it. Ah those were the days.

chizow - Monday, September 15, 2014

No, I said being a GCN 1.1 part meant it was *more likely* to not meet its Boost targets, thus overstating its theoretical performance relative to the 280. And based on the GCN 1.1 parts we had already seen, this is true, it was MORE LIKELY to not hit its Boost targets due to AMD's ambiguous and non-guaranteed Boost speeds. None of this disproved my original point that the 285's theoreticals were best-case and the 280's were worst-case and as these reviews have shown, the 280 would be faster than the 285 in stock configurations. It took an overclocked part with 3rd party cooling and higher TDP (closer to the 280) for it to reach relative parity with the 280.

Tonga BARELY uses any less power and in some cases, uses more, is on par with the part it replaces, and costs MORE than the predecessor part it replaces. What do you think would happen if Nvidia tried to do the same later this week with their new Maxwell parts? It would be a complete and utter disaster.

Stop trying to put lipstick on a pig, if you are indeed as unbiased as you say you are you can admit there is almost no progress at all with this part and it simply isn't worth defending. Yes I favor Nvidia parts but I have used a variety in the past as well including a few highly touted ATI/AMD parts like the 9700pro and 5850. I actually favored 3dfx for a long time until they became uncompetitive and eventually bankrupt, but now I prefer Nvidia because much of their enthusiast/gamer spirit lives on and it shows in their products.