Testing SATA Express And Why We Need Faster SSDs

by Kristian Vättö on March 13, 2014 7:00 AM EST

Posted in: Storage, SSDs, Asus, SATA, SATA Express

What Is SATA Express?

Officially, SATA Express (SATAe from now on) is part of the SATA 3.2 standard. It's not a new command or signaling protocol but merely a specification for a connector that combines traditional SATA and PCIe signals into one connector. As a result, SATAe is fully compatible with all existing SATA drives and cables; the only real difference is that the same connector (although not the same SATA cable) can also be used with PCIe SSDs.

As SATAe is just a different connector for PCIe, it supports both the PCIe 2.0 and 3.0 standards. I believe most solutions will rely on PCH PCIe lanes for SATAe (like the ASUS board we have), so until Intel upgrades the PCH PCIe to 3.0, SATAe will be limited to ~780MB/s. It's of course possible for motherboard OEMs to route the PCIe for SATAe from the CPU, enabling 3.0 speeds and up to ~1560MB/s of bandwidth, but obviously the PCIe interface of the SSD needs to be 3.0 as well. The SandForce, Marvell, and Samsung designs are all 2.0 but at least OCZ is working on a 3.0 controller that is scheduled for next year.
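For a rough sense of where those figures come from, here is a back-of-the-envelope sketch in Python. It assumes a two-lane link (SATAe carries up to two PCIe lanes) and a flat ~79% protocol efficiency on top of the line encoding. The efficiency value and the function name `link_bandwidth_mb_s` are assumptions for illustration, not numbers taken from the spec.

```python
# Rough usable bandwidth of a PCIe link, as used by a SATA Express drive.
# Assumptions (not from the article): two lanes, and a flat ~79% protocol
# efficiency standing in for packet and framing overhead.

# Per-lane signaling rate in GT/s and line-encoding efficiency per generation.
PCIE_GENERATIONS = {
    "2.0": (5.0, 8 / 10),      # 8b/10b encoding
    "3.0": (8.0, 128 / 130),   # 128b/130b encoding
}


def link_bandwidth_mb_s(gen, lanes=2, protocol_efficiency=0.79):
    """Estimate usable bandwidth in MB/s for a PCIe generation and lane count."""
    rate_gt_s, encoding = PCIE_GENERATIONS[gen]
    # GT/s -> payload bits/s after encoding -> bytes/s -> MB/s, times lanes.
    raw_mb_s = rate_gt_s * 1e9 * encoding / 8 / 1e6 * lanes
    return raw_mb_s * protocol_efficiency


if __name__ == "__main__":
    for gen in ("2.0", "3.0"):
        print(f"PCIe {gen} x2: ~{link_bandwidth_mb_s(gen):.0f} MB/s")
```

With the assumed efficiency, both results land within a couple of percent of the ~780MB/s and ~1560MB/s figures quoted above; a different overhead assumption would shift them accordingly.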

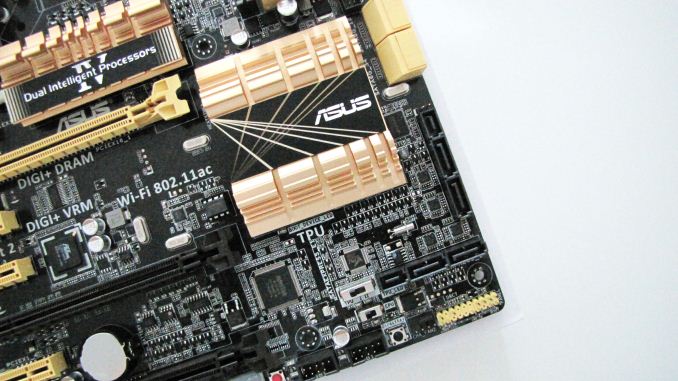

The board ASUS sent us has two SATAe ports, as you can see in the image above. The port is similar to what you should find in a final product once SATAe starts shipping. Notice that the motherboard connector is basically just two SATA ports and a small additional connector, and the SATA ports work normally when using a standard SATA cable. It's only when the connector meets the special SATAe cable that the PCIe magic starts happening.

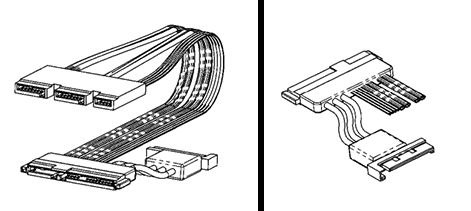

ASUS mentioned that the cable is not a final design and may change before retail availability. I suspect we'll see one larger cable instead of three separate ones for aesthetic and cable management reasons. As there are no SATAe drives available yet, our cable has the same connector on both ends, and the connection to a PCIe drive is provided with the help of a separate SATAe daughterboard. In the final design, the other end of the cable will be similar to the current SATA layout (data+power), so it will plug straight into a drive.

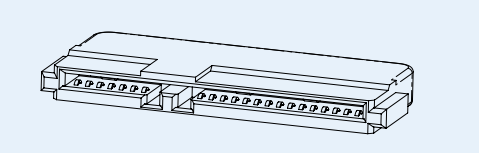

That looks like the female part to the SATA connector in your SSD, doesn't it?

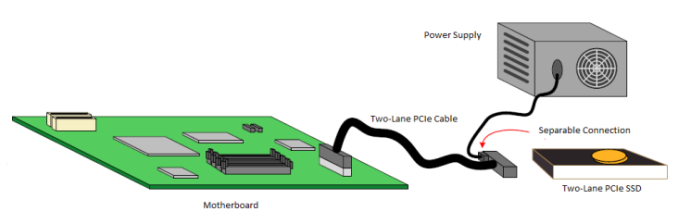

Unlike regular PCIe, SATAe does not provide power. This was a surprise for me because I expected SATAe to fully comply with the PCIe spec, which provides up to 25W for x2 and x4 devices. I'm guessing the cable assembly would have become too expensive with the inclusion of power and not all SATA-IO members are happy even with the current SATAe pricing (about $1 in bulk per cable compared to $0.30 for normal SATA cables). As a result, SATAe drives will still source their power straight from the power supply. The SATAe connector is already quite large (about the same size as SATA data + power), so instead of a separate power connector we'll likely see something that looks like this:

In other words, the SATAe cable has a power input, which can be either 15-pin SATA or Molex depending on the vendor. The above is just SATA-IO's example/suggestion. They haven't actually made any standard for the power implementation, and hence we may see some creative workarounds from OEMs.

131 Comments

Khenglish - Thursday, March 13, 2014

That 2.8 µs you found is driver interface overhead from an interface that doesn't even exist yet. You need to add this to the access latency of the drive itself to get the real latency.

Real-world SSD read latency for tiny 4K data blocks is roughly 900 µs on the fastest drives.

It would take an 18000 meter cable to add even 10% to that.
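The cable-length figure can be checked with a few lines of Python. This is only a sketch: it assumes signals propagate at about two-thirds of the speed of light in the cable (a typical ballpark for copper, but it varies with construction), and the helper names are made up for illustration.

```python
# Propagation delay of a cable compared with drive access latency.
# Assumption: signal speed is about 0.67c; real cables vary.
SPEED_OF_LIGHT_M_S = 299_792_458
VELOCITY_FACTOR = 0.67  # assumed, not from the comment


def cable_delay_us(length_m, velocity_factor=VELOCITY_FACTOR):
    """One-way propagation delay in microseconds for a cable of length_m."""
    return length_m / (SPEED_OF_LIGHT_M_S * velocity_factor) * 1e6


def length_for_delay_m(delay_us, velocity_factor=VELOCITY_FACTOR):
    """Cable length in meters that adds delay_us of one-way delay."""
    return delay_us * 1e-6 * SPEED_OF_LIGHT_M_S * velocity_factor


if __name__ == "__main__":
    drive_latency_us = 900               # figure quoted in the comment
    budget_us = 0.10 * drive_latency_us  # 10% of that latency
    print(f"{length_for_delay_m(budget_us):.0f} m adds {budget_us:.0f} us")
    print(f"a 1 m cable adds {cable_delay_us(1) * 1000:.1f} ns")
```

Under that assumption the 10% budget works out to roughly 18 km of cable, in line with the figure above, while a 1 m cable adds only a few nanoseconds.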

willis936 - Thursday, March 13, 2014

Show me a consumer PHY that can transmit 8Gbps over 100m on cheap copper and I'll eat my hat.

Khenglish - Thursday, March 13, 2014

The problem with long cables is attenuation, not latency. Cables can only be around 50m long before you need a repeater.

mutercim - Friday, March 14, 2014

Electrons have mass, they can't ever travel at the speed of light, no matter the medium. The signal itself would move at the speed of light (in vacuum), but that's a different thing.

/pedantry

Visual - Friday, March 14, 2014

It's a common misconception, but electrons don't actually need to travel the length of the cable for a signal to travel through it.

In layman's terms, you don't need to send an electron all the way to the other end of the cable, you just need to make the electrons that are already there react in a certain way as to register a required voltage or current.

So a signal is a change in voltage, or a change in the electromagnetic fields, and that travels at the speed of light (no, not in vacuum, in that medium).

AnnihilatorX - Friday, March 14, 2014

Just to clarify, it is like pushing a tube full of tennis balls from one end. Assuming the tennis balls are all rigid so deformation is negligible, the 'cause and effect' making the tennis ball on the other end move will travel at the speed of light.

R3MF - Thursday, March 13, 2014

having 24x PCIe 3.0 lanes on AMD's Kaveri looks pretty far-sighted right now.

jimjamjamie - Thursday, March 13, 2014

if they got their finger out with a good x86 core the APUs would be such an easy sell

MrSpadge - Thursday, March 13, 2014

Re: "Why Do We Need Faster SSDs"

Your power consumption argument ignores one fact: if you use the same controller, NAND and firmware, it costs you x Wh to perform a read or write operation. If you simply increase the interface speed and hence perform more of these operations per time, you also increase the energy required per time, i.e. power consumption. In your example the faster SSD wouldn't continue to draw 3 W with the faster interface: assuming a 30% throughput increase, expecting a power draw of 4 W would be reasonable.

Obviously there are also system components actively waiting for that data. So if the data arrives faster (due to lower latency & higher throughput) they can finish the task quicker and race to sleep. This counterbalances some of the actual NAND power draw increases, but won't negate it completely.

Kristian Vättö - Thursday, March 13, 2014

"If you simply increase the interface speed and hence perform more of these operations per time, you also increase the energy required per time, i.e. power consumption."

The number of IO operations is a constant here. A faster SSD does not mean that the overall number of operations will increase because ultimately that's up to the workload. Assuming that is the same in both cases, the faster SSD will complete the IO operations faster and will hence spend more time idling, resulting in less power drawn in total.

Furthermore, a faster SSD does not necessarily mean higher power draw. As the graph on page one shows, PCIe 2.0 increases baseline power consumption by only 2% compared to SATA 6Gbps. Given that SATA 6Gbps is a bottleneck in current SSDs, more processing power (and hence more power) is not required to make a faster SSD. You are right that it may result in higher NAND power draw, though, because the controller will be able to take better advantage of parallelism (more NAND in use = more power consumed).

I understand the example is not perfect, as in the real world the number of variables is through the roof. However, the idea was to debunk the claim that PCIe SSDs are just a marketing trick. They are that too, but ultimately there are gains that will reach the average user as well.
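The disagreement in this exchange comes down to energy being power multiplied by time. Below is a minimal Python sketch of that trade-off, assuming a fixed workload and a fixed observation window. Only the 3 W, 4 W, 30% and 2% figures come from the comments; the workload size, window, throughput and idle power are made-up values, and applying the 2% figure to the drive's active power is itself an assumption.

```python
def energy_joules(workload_mb, throughput_mb_s, active_w, idle_w, window_s):
    """Energy over a fixed window: busy during the transfer, idle afterwards."""
    active_s = workload_mb / throughput_mb_s
    if active_s > window_s:
        raise ValueError("workload does not finish within the window")
    idle_s = window_s - active_s
    return active_w * active_s + idle_w * idle_s


# Hypothetical scenario values (assumptions, not measurements).
WORKLOAD_MB = 50_000
WINDOW_S = 600
IDLE_W = 0.5
BASE_MB_S = 500
FAST_MB_S = BASE_MB_S * 1.3  # 30% faster interface

# Baseline drive at 3 W active.
baseline = energy_joules(WORKLOAD_MB, BASE_MB_S, 3.0, IDLE_W, WINDOW_S)
# Faster drive if active power rises by only 2%.
small_increase = energy_joules(WORKLOAD_MB, FAST_MB_S, 3.0 * 1.02, IDLE_W, WINDOW_S)
# Faster drive if active power rises to 4 W.
large_increase = energy_joules(WORKLOAD_MB, FAST_MB_S, 4.0, IDLE_W, WINDOW_S)

if __name__ == "__main__":
    print(f"baseline:        {baseline:.0f} J")
    print(f"+2% active power: {small_increase:.0f} J")
    print(f"4 W active power: {large_increase:.0f} J")
```

With these assumed inputs the drive with 2% higher active power ends up below the baseline and the 4 W drive slightly above it, so which argument holds depends on how much active power actually rises with the faster interface.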