The Intel Xeon E7 v2 Review: Quad Socket, Up to 60 Cores/120 Threads

by Johan De Gelas on February 21, 2014 6:00 AM EST

Posted in: IT Computing, Intel, Xeon, Ivy Bridge EX, server, Brickland

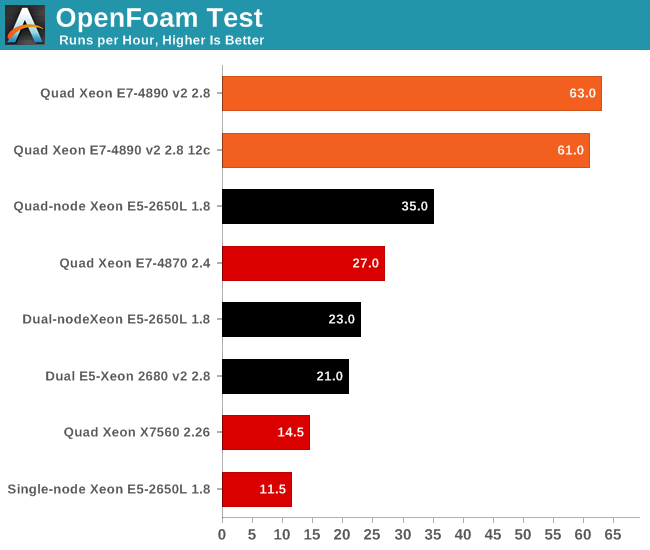

OpenFoam

Several of our readers have already suggested that we look into OpenFoam. That's easier said than done, as good benchmarking means you have to master the software to some degree. Luckily, my lab was able to work with the professionals of Actiflow. Actiflow specialises in combining aerodynamics and product design. Calculating aerodynamics involves the use of CFD software, and Actiflow uses OpenFoam to accomplish this. To give you an idea of what these skilled engineers can do, they worked with Ferrari to improve the underbody airflow of the Ferrari 599 and increase its downforce.

The Ferrari 599: an improved product thanks to OpenFoam.

We were allowed to use one of their test cases as a benchmark, but we are not allowed to discuss the specific solver. All tests were done on OpenFoam 2.2.1 and openmpi-1.6.3.
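For readers who want to set up a comparable run: a parallel OpenFoam job is normally started through Open MPI's `mpirun` with the solver's `-parallel` flag, after the case has been split into subdomains with `decomposePar`. The sketch below only assembles that command line. The solver name, the rank counts, and the hostfile name are placeholders, since the solver and the exact launch parameters used for these tests are not disclosed.

```python
from typing import List, Optional


def build_openfoam_command(solver: str, ranks: int,
                           hostfile: Optional[str] = None) -> List[str]:
    """Assemble an mpirun command line for a decomposed OpenFoam case.

    `solver` is a placeholder: the solver used in the review is not public.
    `ranks` must match numberOfSubdomains in the case's decomposeParDict.
    `hostfile` is only needed when the ranks are spread over several nodes.
    """
    if ranks < 1:
        raise ValueError("ranks must be at least 1")
    cmd = ["mpirun", "-np", str(ranks)]
    if hostfile is not None:
        cmd += ["--hostfile", hostfile]
    # -parallel tells the OpenFoam solver to run on the decomposed case
    cmd += [solver, "-parallel"]
    return cmd


# Assumed example: one rank per physical core on a single 60-core machine
single_box = build_openfoam_command("<solver>", 60)

# Assumed example: 64 ranks spread over four nodes listed in a file "hosts"
cluster = build_openfoam_command("<solver>", 64, hostfile="hosts")

print(" ".join(single_box))
print(" ".join(cluster))
```

On a single shared-memory server all ranks communicate through memory, while the hostfile variant sends part of the MPI traffic over the network, which is where the interconnect starts to matter.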

Many CFD calculations do not scale well on clusters, unless you use InfiniBand. InfiniBand switches are quite expensive and even then there are limits to scaling. We do not have an InfiniBand switch in the lab, unfortunately. Although it's not as low latency as InfiniBand, we do have a good 10G Ethernet infrastructure, which performs rather well.

So we added a fifth configuration to our testing: the quad-node Intel Server System H2200JF. The only CPU that we have eight of right now is the Xeon E5-2650L 1.8GHz. Yes, it is not perfect, but this is the start of our first clustered HPC benchmark. This way we can get an idea of whether or not the Xeon E7 v2 platform can replace a complete quad-node cluster system and at the same time offer much higher RAM capacity.

The results are pretty amazing: the quad Xeon E7-4890 v2 runs circles around our quad-node HPC cluster. Even if we were to outfit it with 50% higher clocked Xeons, the quad Xeon E7 v2 would still be the winner. Of course, there is no denying that our quad-node cluster is a lot cheaper to buy. Even with an InfiniBand switch, an HPC cluster with dual socket servers is a lot cheaper than a quad socket Intel Xeon E7 v2.
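To put the "50% higher clocked" remark in perspective, here is a rough back-of-the-envelope sketch that compares aggregate cores times base clock. It is a crude proxy, not a performance model: it ignores microarchitecture, memory bandwidth, turbo, and interconnect effects. The core counts and clocks are assumptions taken from Intel's public specifications (8 cores at 1.8GHz for the E5-2650L, and the 15-core 2.8GHz top E7 v2 model), not measurements from this review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Config:
    name: str
    sockets: int
    cores_per_socket: int
    base_ghz: float

    def core_ghz(self, clock_scale: float = 1.0) -> float:
        """Aggregate cores x base clock, optionally with scaled clocks."""
        return (self.sockets * self.cores_per_socket
                * self.base_ghz * clock_scale)


# Four dual-socket nodes = eight CPUs (assumed: 8 cores, 1.8GHz each)
cluster = Config("4 nodes x 2 Xeon E5-2650L", 8, 8, 1.8)
# Quad-socket E7 v2 (assumed: 15 cores, 2.8GHz each)
quad_e7 = Config("4 x Xeon E7 v2", 4, 15, 2.8)

print(f"{cluster.name}: {cluster.core_ghz():.1f} core-GHz")
print(f"{cluster.name} at +50% clock: {cluster.core_ghz(1.5):.1f} core-GHz")
print(f"{quad_e7.name}: {quad_e7.core_ghz():.1f} core-GHz")
```

By this crude measure a hypothetical cluster with 50% higher clocks would land in the same range as the quad E7 v2, so if the E7 v2 still wins, the difference plausibly comes from something other than raw compute, such as the latency of the links between the CPUs.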

However, this bodes well for the soon to be released Xeon E5-46xx v2 parts. QPI links are even lower latency than InfiniBand. But since we do not have a lot of HPC testing experience, we'll leave it up to our readers to discuss this in more detail.

Another interesting detail is that the Xeon E5-2650L at 1.8GHz is about twice as fast as a Xeon L5650. We found AVX code inside OpenFoam 2.2.1, so we assume that this is one of the cases where AVX improves FP performance tremendously. Seasoned OpenFoam users, let us know whether this is an accurate assessment.
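One common way to check a binary for AVX code is to disassemble it and look for the 256-bit `ymm` registers, which only AVX-family instructions use. The sketch below illustrates that idea on disassembly text; it is not necessarily the method used for this review, and the sample lines are made up for illustration. Note that it undercounts: 128-bit AVX instructions operate on `xmm` registers and are not caught by this pattern.

```python
import re

# ymm0..ymm15 are the 256-bit registers introduced with AVX
YMM_REGISTER = re.compile(r"\bymm(?:1[0-5]|[0-9])\b")


def count_avx_lines(disassembly: str) -> int:
    """Count disassembly lines that reference a 256-bit ymm register."""
    return sum(1 for line in disassembly.splitlines()
               if YMM_REGISTER.search(line))


# Illustrative text in the style of `objdump -d` output (not real data)
sample = """\
  4004d0:\t66 0f 58 c1          \taddpd  %xmm1,%xmm0
  4004d4:\tc5 fd 58 c1          \tvaddpd %ymm1,%ymm0,%ymm0
  4004d8:\tc5 fd 59 c2          \tvmulpd %ymm2,%ymm0,%ymm0
  4004dc:\tc3                   \tretq
"""

print(count_avx_lines(sample), "lines reference ymm registers")
```

In practice the text would come from running a disassembler such as `objdump -d` on the OpenFoam shared libraries. Finding such instructions only shows that AVX code paths exist, not how much of the run time is spent in them.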

125 Comments

Kevin G - Friday, February 21, 2014 - link

And a quick addition: There will indeed be a quick adoption to Haswell-EX not because of AVX2 or DDR4 but rather transactional memory support (TSX). For the large databases and applications these systems are targeted at, TSX should prove to be helpful.

TiGr1982 - Friday, February 21, 2014 - link

I agree, TSX should make a lot of sense for these E7's - they have a huge core count and huge shared memory at the same time.

Schmide - Friday, February 21, 2014 - link

I think your L3 latency numbers are off. I think typical Intel L3 latencies are 30-40 clocks ~3-4ns.

Schmide - Friday, February 21, 2014 - link

Oops my bad i miss used the calculator. Ignore.

dylan522p - Friday, February 21, 2014 - link

No power consumption numbers?

JohanAnandtech - Saturday, February 22, 2014 - link

Coming... we had to run lots of tests in parallel, so it was not possible to make sure all systems were similar. Also we should test with workloads that require a lot more memory to get an idea.

mslasm - Friday, February 21, 2014 - link

Note that E7-8857 v2 has 12 cores but no HT, so only has 12 threads as well (see http://ark.intel.com/products/75254/Intel-Xeon-Pro...). Thus it is not equivalent to a 3Ghz E7-4860V2, as 4860 has HT for a total of 24 threads.

Also, there must be a typo either in the graph or in the text on the "single thread" integer performance test: "Opteron ... at 2.4GHz would deliver about 2481 MIPs", while - according to the graph - it already delivers 2636 @ 2.3Ghz.

JohanAnandtech - Saturday, February 22, 2014 - link

Good point. There is little gain from HT in OpenFoam, but it will influence the LZMA benchmarks. So the OpenFoam findings are still valid, but not the LZMA. The kernel compile is somewhat in between.

JohanAnandtech - Saturday, February 22, 2014 - link

I will rerun the benchmarks without HT to check.mslasm - Saturday, February 22, 2014 - link

Thanks! I did not mean to imply HT matters "a lot", but it may influence some (and I admit I don't know much about how your benchmarks behave, other than parallel LZMA which I worked a lot with) - so it just does not sound right to outright call it equivalent, and I wish AT only has statements anyone can just trust :)