The Xbox One - Mini Review & Comparison to Xbox 360/PS4

by Anand Lal Shimpi on November 20, 2013 8:00 AM EST

Performance - An Update

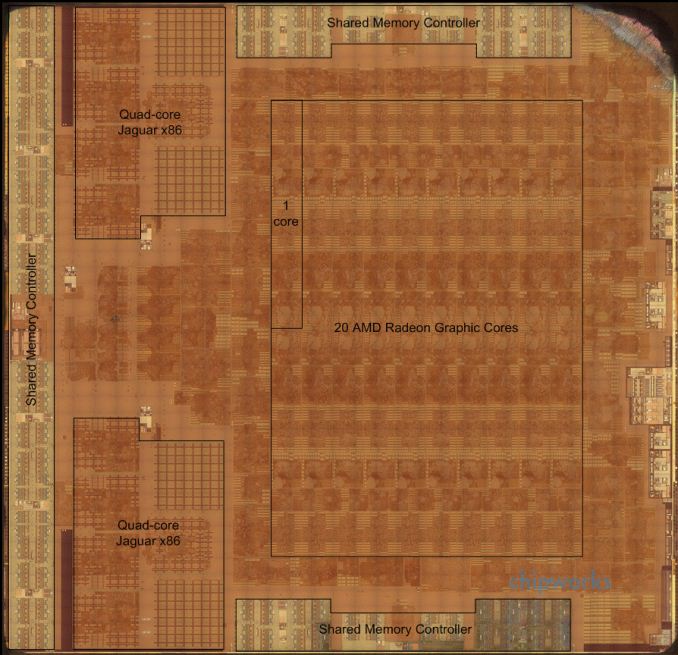

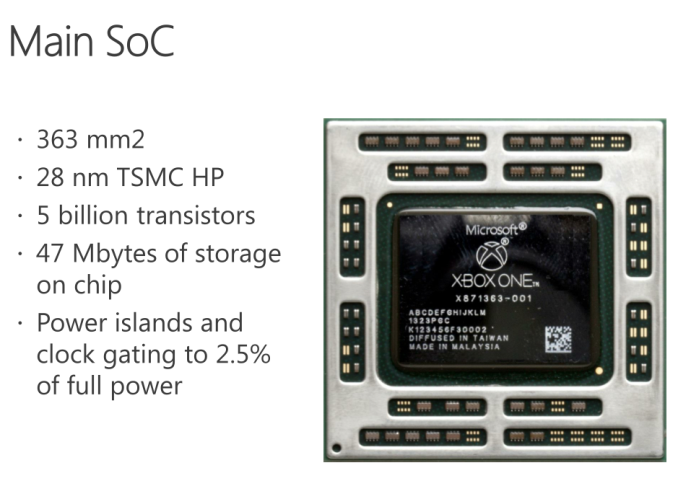

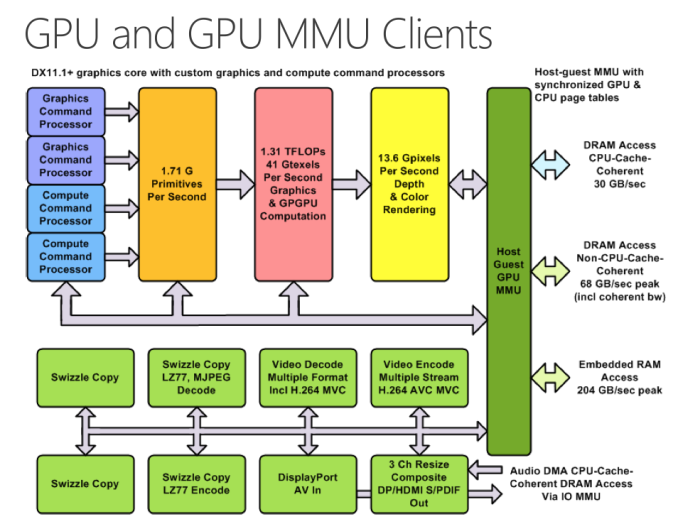

The Chipworks PS4 teardown last week told us a lot about what’s happened between the Xbox One and PlayStation 4 in terms of hardware. It turns out that Microsoft’s silicon budget was actually a little larger than Sony’s, at least for the main APU. The Xbox One APU is a 363mm^2 die, compared to 348mm^2 for the PS4’s APU. Both use a similar 8-core Jaguar CPU (2 x quad-core islands), but they feature different implementations of AMD’s Graphics Core Next GPUs. Microsoft elected to implement 12 compute units, two geometry engines and 16 ROPs, while Sony went for 18 CUs, two geometry engines and 32 ROPs. How did Sony manage to fit more compute and ROP partitions into a smaller die area? By not including any eSRAM on-die.

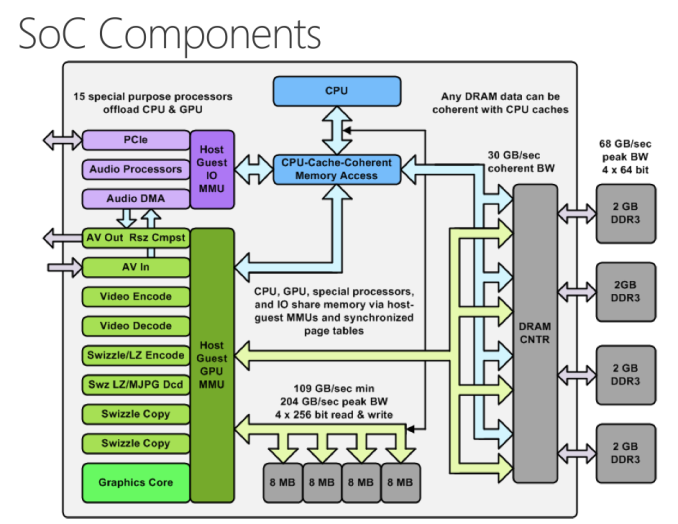

While both APUs implement a 256-bit wide memory interface, Sony chose to use GDDR5 memory running at a 5.5GHz data rate. Microsoft stuck to more conventionally available DDR3 memory running at less than half the speed (2133MHz data rate). To make up for the bandwidth deficit, Microsoft included 32MB of eSRAM on its APU to alleviate some of the GPU’s bandwidth needs. The eSRAM is accessible in 8MB chunks and offers a total of 204GB/s of bandwidth (102GB/s in each direction). The eSRAM is designed for GPU access only; CPU access requires a copy to main memory.
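The system memory bandwidth figures in the spec table follow directly from bus width and data rate. A minimal sketch of that arithmetic, assuming peak bandwidth is simply bytes per transfer times transfers per second (the function name is ours, chosen for illustration):

```python
# Peak DRAM bandwidth = (bus width in bytes) x (data rate in transfers/s).
# The data rates are the ones quoted in the article (e.g. DDR3 at 2133MT/s).

def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mts: float) -> float:
    """Return peak bandwidth in GB/s (decimal, 10^9 bytes per second)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mts * 1e6 / 1e9


configs = {
    "Xbox 360, 128-bit GDDR3 @ 1400MT/s": (128, 1400),
    "Xbox One, 256-bit DDR3 @ 2133MT/s": (256, 2133),
    "PS4, 256-bit GDDR5 @ 5500MT/s": (256, 5500),
}

for name, (width, rate) in configs.items():
    print(f"{name}: {peak_bandwidth_gbs(width, rate):.1f} GB/s")
```

This prints 22.4, 68.3 and 176.0 GB/s, matching the table. These are theoretical peaks; effective bandwidth after overhead is lower on every platform.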

Unlike Intel’s Crystalwell, the eSRAM isn’t a cache; instead it’s mapped to a specific address range in memory. And unlike the embedded DRAM in the Xbox 360, the eSRAM in the One can hold more than just a render target or Z-buffer. Virtually any type of GPU-accessible surface or buffer can now be stored in eSRAM (e.g. z-buffer, G-buffer, stencil buffers, shadow buffer, etc…). Developers could also choose to store important textures in eSRAM; nothing requires it to hold one of these buffers, just whatever data the developer finds most important. It’s also possible for a single surface to be split between main memory and eSRAM.
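Because the eSRAM is an explicitly addressed pool rather than a cache, deciding what lives there is the developer’s job. The sketch below is a hypothetical greedy planner (`Placement` and `place_surfaces` are invented names, not an Xbox One SDK API) that fills the 32MB in priority order and lets an overflowing surface straddle eSRAM and main memory. It ignores the 8MB chunk granularity, and the surface sizes are illustrative assumptions.

```python
from dataclasses import dataclass

ESRAM_BYTES = 32 * 1024 * 1024  # 32MB of on-die eSRAM, per the article


@dataclass
class Placement:
    name: str
    esram_bytes: int  # bytes mapped into the eSRAM address range
    dram_bytes: int   # bytes left in main (DDR3) memory


def place_surfaces(surfaces, capacity=ESRAM_BYTES):
    """Hypothetical greedy planner. `surfaces` is a list of (name, size_in_bytes)
    in the developer's priority order. Each surface takes as much of the remaining
    eSRAM as it can; any overflow stays in main memory, so one surface may be
    split across both pools. Chunk granularity is ignored for simplicity."""
    remaining = capacity
    plan = []
    for name, size in surfaces:
        in_esram = min(size, remaining)
        remaining -= in_esram
        plan.append(Placement(name, in_esram, size - in_esram))
    return plan


# Illustrative sizes (assumptions): 1080p render targets and a 2048x2048 shadow map
W, H = 1920, 1080
surfaces = [
    ("color (RGBA8)", W * H * 4),
    ("depth (32-bit)", W * H * 4),
    ("G-buffer normals (RGBA16F)", W * H * 8),
    ("shadow map (32-bit)", 2048 * 2048 * 4),
]

for p in place_surfaces(surfaces):
    print(f"{p.name}: {p.esram_bytes} B in eSRAM, {p.dram_bytes} B in DRAM")
```

With these sizes the first three targets fit entirely, and only 376,832 bytes of the shadow map land in eSRAM while the rest stays in DDR3, which is the split-surface case described above.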

Obviously, placing important buffers and other frequently used data here can reduce demands on the memory interface, which should help Microsoft get by with only ~68GB/s of system memory bandwidth. Microsoft has claimed publicly that actual bandwidth to the eSRAM is somewhere in the 140 - 150GB/s range, which is likely equal to the effective memory bandwidth (after overhead/efficiency losses) of the PS4’s GDDR5 memory interface. The difference is that on the Xbox One you only get that bandwidth to your most frequently used data. It’s still not clear to me what effective memory bandwidth looks like on the Xbox One; I suspect it’s still a bit lower than on the PS4, but after talking with Ryan Smith (AT’s Senior GPU Editor) I’m now wondering if memory bandwidth isn’t really the issue here.
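One way to see why Xbox One bandwidth depends so heavily on what ends up in eSRAM is a deliberately idealized model: a fraction of GPU memory traffic goes to eSRAM, the rest goes to DDR3, both pools transfer in parallel, and whichever finishes last sets the time. The 145GB/s eSRAM figure is an assumption (the midpoint of Microsoft’s claimed range), and the model ignores the 32MB capacity limit, CPU traffic on DDR3, copies, and DDR3’s own efficiency losses, so real numbers will be lower.

```python
DDR3_GBS = 68.3    # peak system memory bandwidth from the spec table
ESRAM_GBS = 145.0  # assumption: midpoint of Microsoft's claimed 140-150GB/s


def blended_bandwidth(esram_fraction, esram_gbs=ESRAM_GBS, dram_gbs=DDR3_GBS):
    """Idealized effective bandwidth (GB/s) when `esram_fraction` of the GPU's
    traffic is served from eSRAM and the rest from DDR3, with both pools busy
    at the same time. Total time is set by the slower of the two transfers."""
    if not 0.0 <= esram_fraction <= 1.0:
        raise ValueError("esram_fraction must be in [0, 1]")
    # Seconds needed to move 1GB of total traffic through each pool
    t_esram = esram_fraction / esram_gbs
    t_dram = (1.0 - esram_fraction) / dram_gbs
    return 1.0 / max(t_esram, t_dram)


for f in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"{f:.0%} of traffic in eSRAM -> {blended_bandwidth(f):.1f} GB/s")
```

At 0% the GPU is limited to DDR3 alone; the model only approaches its best case when a large, well-chosen share of traffic actually hits the eSRAM.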

Microsoft Xbox One vs. Sony PlayStation 4 Spec Comparison

| | Xbox 360 | Xbox One | PlayStation 4 |
|---|---|---|---|
| CPU Cores/Threads | 3/6 | 8/8 | 8/8 |
| CPU Frequency | 3.2GHz | 1.75GHz | 1.6GHz |
| CPU µArch | IBM PowerPC | AMD Jaguar | AMD Jaguar |
| Shared L2 Cache | 1MB | 2 x 2MB | 2 x 2MB |
| GPU Cores | | 768 | 1152 |
| GCN Geometry Engines | | 2 | 2 |
| GCN ROPs | | 16 | 32 |
| GPU Frequency | | 853MHz | 800MHz |
| Peak Shader Throughput | 0.24 TFLOPS | 1.31 TFLOPS | 1.84 TFLOPS |
| Embedded Memory | 10MB eDRAM | 32MB eSRAM | - |
| Embedded Memory Bandwidth | 32GB/s | 102GB/s bi-directional (204GB/s total) | - |
| System Memory | 512MB 1400MHz GDDR3 | 8GB 2133MHz DDR3 | 8GB 5500MHz GDDR5 |
| System Memory Bus | 128-bits | 256-bits | 256-bits |
| System Memory Bandwidth | 22.4 GB/s | 68.3 GB/s | 176.0 GB/s |
| Manufacturing Process | | 28nm | 28nm |
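The peak shader throughput row can be reproduced from the CU counts and GPU clocks. A short sketch, assuming the standard GCN layout of 64 shader lanes per compute unit (which is how 12 and 18 CUs become the 768 and 1152 "GPU cores" above) and counting a fused multiply-add as two FLOPs:

```python
# GCN compute unit: 4 SIMD16 units = 64 shader lanes ("GPU cores" in the table).
LANES_PER_CU = 64
# Each lane can issue one fused multiply-add per clock, counted as 2 FLOPs.
FLOPS_PER_LANE_PER_CLOCK = 2


def peak_tflops(compute_units: int, clock_mhz: float) -> float:
    """Theoretical peak single-precision throughput in TFLOPS."""
    lanes = compute_units * LANES_PER_CU
    return lanes * FLOPS_PER_LANE_PER_CLOCK * clock_mhz * 1e6 / 1e12


print(f"Xbox One: {peak_tflops(12, 853):.2f} TFLOPS")  # 768 lanes @ 853MHz
print(f"PS4:      {peak_tflops(18, 800):.2f} TFLOPS")  # 1152 lanes @ 800MHz
```

This gives 1.31 and 1.84 TFLOPS, in line with the table. The Xbox 360’s Xenos GPU is not GCN, so the same formula does not apply to its 0.24 TFLOPS figure.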

To accommodate the eSRAM on die, Microsoft not only had to go with a 12 CU GPU configuration, it also had to drop to 16 ROPs (half the PS4’s count). The ROPs (render outputs/raster operations pipes) are responsible for final pixel output, and at the resolutions these consoles are targeting, having only 16 ROPs makes the Xbox One the odd man out compared to PC GPUs. AMD’s GPUs targeting 1080p typically come with 32 ROPs, which is where the PS4 is, but the Xbox One ships with half that. The difference in raw shader performance (12 CUs vs 18 CUs) can definitely show up in games that run more complex lighting routines and other long shader programs on each pixel, but the more recent reports of resolution differences between Xbox One and PS4 games at launch are likely the result of the One being ROP bound. This is probably why Microsoft claimed it saw a bigger increase in realized performance from raising the GPU clock from 800MHz to 853MHz than from adding two extra CUs. The ROPs operate at the GPU clock, so in a ROP-bound scenario a higher GPU clock improves performance more than additional compute hardware does.
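The clock-versus-CUs argument can be made concrete with a simplified throughput model. The assumptions here are ours: each ROP outputs one pixel per clock (real ROP rates vary with pixel format, blending and MSAA), and each CU delivers 64 lanes x 2 FLOPs per clock. The sketch compares Microsoft’s two options against the original 12 CU, 800MHz configuration.

```python
# Simplified model (assumption): one pixel per ROP per clock; 64 lanes x 2 FLOPs
# (FMA) per CU per clock. Real ROP throughput depends on format, blending, MSAA.

def pixel_fill_gpix(rops: int, clock_mhz: float) -> float:
    """Peak pixel fill rate in Gpixels/s."""
    return rops * clock_mhz / 1000.0


def shader_gflops(cus: int, clock_mhz: float) -> float:
    """Peak shader throughput in GFLOPS."""
    return cus * 64 * 2 * clock_mhz / 1000.0


def gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old` (0.1 means +10%)."""
    return new / old - 1.0


# Xbox One before the clock bump: 12 CUs, 16 ROPs, 800MHz
base = dict(cus=12, rops=16, clock=800)
options = {
    "GPU clock 800MHz -> 853MHz": dict(cus=12, rops=16, clock=853),
    "two extra CUs (14 CUs @ 800MHz)": dict(cus=14, rops=16, clock=800),
}

for label, cfg in options.items():
    rop = gain(pixel_fill_gpix(cfg["rops"], cfg["clock"]),
               pixel_fill_gpix(base["rops"], base["clock"]))
    alu = gain(shader_gflops(cfg["cus"], cfg["clock"]),
               shader_gflops(base["cus"], base["clock"]))
    print(f"{label}: fill rate {rop:+.1%}, shader {alu:+.1%}")

print(f"Xbox One fill rate: {pixel_fill_gpix(16, 853):.1f} Gpix/s")  # 13.6
print(f"PS4 fill rate:      {pixel_fill_gpix(32, 800):.1f} Gpix/s")  # 25.6
```

Under this model the clock bump raises both fill rate and shader throughput by about 6.6%, while two extra CUs add roughly 17% shader throughput but leave fill rate unchanged. If a game is ROP bound, only the first option helps.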

The PS4's APU - Courtesy Chipworks

Microsoft’s admission that the Xbox One dev kits have 14 CUs does make me wonder what the Xbox One die looks like. Chipworks found that the PS4’s APU actually features 20 CUs, despite only exposing 18 to game developers. I suspect those last two are there for defect mitigation, to increase effective yields in the case of bad CUs, and I wonder if the same isn’t true for the Xbox One.
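Why spare CUs help yields can be illustrated with a simple binomial model. This is a sketch under hypothetical assumptions: each CU is independently defective with a made-up 2% probability, and defects elsewhere on the die are ignored. It compares requiring all 18 of 18 CUs to work against requiring any 18 of 20.

```python
from math import comb


def yield_with_spares(total_cus: int, needed_cus: int, p_defect: float) -> float:
    """Probability that at least `needed_cus` of `total_cus` CUs are defect-free,
    assuming each CU is independently defective with probability `p_defect`.
    Defects outside the CUs are ignored."""
    p_good = 1.0 - p_defect
    return sum(
        comb(total_cus, k) * p_good**k * p_defect**(total_cus - k)
        for k in range(needed_cus, total_cus + 1)
    )


P_DEFECT = 0.02  # hypothetical per-CU defect probability, for illustration only
print(f"18 of 18 CUs must work: {yield_with_spares(18, 18, P_DEFECT):.1%}")
print(f"18 of 20 CUs must work: {yield_with_spares(20, 18, P_DEFECT):.1%}")
```

The actual defect rates for either APU aren’t public; the point is only that tolerating two bad CUs makes a die far more likely to be sellable.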

At the end of the day, Microsoft appears to have ended up with its GPU configuration not for silicon cost reasons, but for platform power/cost and component availability reasons. Sourcing DDR3 is much easier than sourcing high density GDDR5. Sony obviously managed to launch with a ton of GDDR5 just fine, but I can understand why Microsoft would have been hesitant to go down that route in the planning stages of Xbox One. To put some numbers in perspective, Sony has shipped 1 million PS4s thus far. That's 16 million GDDR5 chips, or 7.6 Petabytes of RAM. Had both Sony and Microsoft tried to do this, I do wonder if GDDR5 supply would've become a problem. That's a ton of RAM in a very short period of time. The only other major consumers of GDDR5 are video cards, and only a narrow list of the cards sold in the last couple of months would ever use that RAM.
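The GDDR5 figures above can be checked with a few lines of arithmetic. The per-chip size is derived from the article’s own numbers (8GB per console spread across 16 chips gives 512MB per device); the 7.6 figure corresponds to binary petabytes (PiB), while in decimal units the same total is about 8.6PB.

```python
CONSOLES_SHIPPED = 1_000_000  # PS4s shipped so far, per the article
CHIPS_PER_CONSOLE = 16        # implied by 16 million chips / 1 million consoles
CHIP_BYTES = 512 * 2**20      # 8GB / 16 chips = 512MB (4Gb) per device

chips = CONSOLES_SHIPPED * CHIPS_PER_CONSOLE
total_bytes = chips * CHIP_BYTES

print(f"GDDR5 devices: {chips:,}")
print(f"Binary petabytes (PiB): {total_bytes / 2**50:.2f}")
print(f"Decimal petabytes (PB): {total_bytes / 1e15:.2f}")
```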

Microsoft will obviously have an easier time scaling its platform down over the years (eSRAM should shrink nicely on smaller process geometries), but that’s not a concern to the end user unless Microsoft chooses to aggressively pass along cost savings.

286 Comments

epyclytus - Wednesday, November 20, 2013

wow. your dream console might not even be that much more powerful than what is already in the ps4. and your vram configuration is worse since it only has 1GB of GDDR5. i went for the fences with my specs because it is all made up of dreams.

Subyman - Wednesday, November 20, 2013

I wonder how the rise of DDR3 prices has affected MS. I'm sure they purchased contracts at fixed prices a while ago, but going forward it seems DDR3 prices aren't much better than GDDR5 prices right now. The cost savings may not have been worth it looking at the current marketplace.

Morawka - Wednesday, November 20, 2013

i'm so tired of these companies putting a big cache on the chip's die to negate poorly chosen memory interfaces. apple did it with the A7 in the iphone and ipad, and now Microsoft is doing it with the XBone.

Just spend the die space on a beefy memory interface and call it a day. Sure, the memory interface is going to take up more space on the chip, but it's better than wasting even MORE space on eSRAM/Cache.

Apple could have just put in a beefy memory controller and called it a day; instead they put in 4 MB of cache, which takes tons of die space and served as a stopgap solution.

Microsoft could have just gone with GDDR5 and called it a day, but instead went with DDR3 and wasted tons of die space on eSRAM.

sigh, just beef these things up and call it a day, especially if these are going to be on the market for the next 8 years.

blacks329 - Saturday, November 30, 2013

While your complaints are valid, Apple will probably address them within 12 months with the A8, so I don't see it as that big of a problem for them. On the X1 side, they gambled wrong and we're kind of stuck with it until ~2020.

Braumin - Wednesday, November 20, 2013

I wonder how much of the cross-platform comparison results come down to time constraints, i.e. the Xbox just didn't get optimized very well. Unfortunately it looks slightly harder to code for. I'll be curious to see how this goes moving forward. Do games like Forza 5 also have the aliasing problems? Other reviews have just said that it looks great.

Also - Anand - you've outdone yourself. Your preview is better than most reviews I've seen.

GTVic - Wednesday, November 20, 2013

"One" or "the One" is not a good shorthand/nickname. I prefer XBone or X-Bone.

piroroadkill - Wednesday, November 20, 2013

I thought it was Xbox 180 after all their U-Turns...

djboxbaba - Wednesday, November 20, 2013

hahaha awesome

mikato - Monday, November 25, 2013

Why is everyone abbreviating "box" and not "one"? Like XbOne or XbO or Xb1. And the capitalization. All I read when I see this is "X Bone". I'll just call it that now. I guess there are difficulties with confusion with the original Xbox? The Scion xB? lol. I have an xB and owners call the current model the xB2.

prophet001 - Wednesday, November 20, 2013

"Those concerned about their privacy will be happy to know that Kinect isn’t required for use." As opposed to those people who don't care about a video camera watching their living room 24 hours a day.

My word people. Wake up.