The Haswell Review: Intel Core i7-4770K & i5-4670K Tested

by Anand Lal Shimpi on June 1, 2013 10:00 AM EST

Memory

Haswell got an updated memory controller that’s supposed to do a great job of running at very high frequencies. Corsair was kind enough to send over some of its Vengeance Pro memory with factory DDR3-2400 XMP profiles. I have to say, the experience was quite possibly the simplest memory overclocking I’ve ever encountered. Ivy Bridge was pretty decent at higher speeds, but Haswell is a different beast entirely.

Although I used DDR3-2400 for most of my testing, Corsair’s Vengeance Pro line is available in frequencies rated all the way up to 2933MHz.

Platform

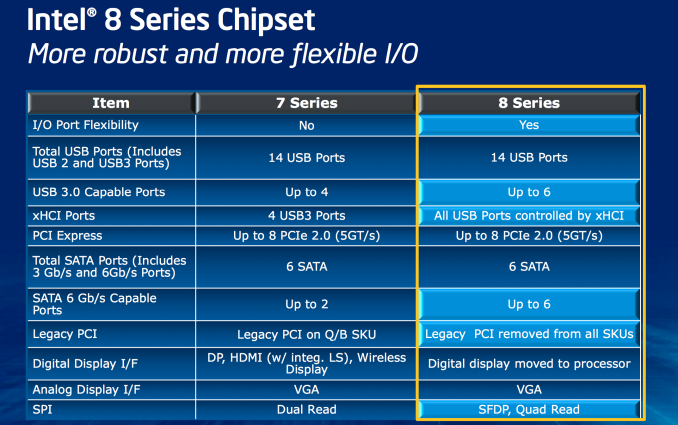

Haswell features a new socket (LGA-1150). Fundamental changes to power delivery made it impossible to maintain backwards compatibility with existing LGA-1155 sockets. Alongside the new socket come Intel’s new 8-series chipsets.

At a high level the 8-series chipsets bring support for up to six SATA 6Gbps ports and six USB 3.0 ports. It’s taken Intel far too long to move beyond two 6Gbps SATA ports, so this is a welcome change. With 8-series Intel also finally got rid of legacy PCI support.

Overclocking

Despite most of the voltage regulation being moved on-package, motherboards still expose all of the same voltage controls that you’re used to from previous platforms. Haswell’s FIVR does increase the thermal footprint of the chip itself, which is why TDPs went up from 77W to 84W at the high-end for LGA-1150 SKUs. Combine higher temperatures under the heatspreader with a more mobile-focused chip design, and overclocking is going to depend on yield and luck of the draw more than it has in the past.

Haswell doesn’t change the overclocking limits put in place with Sandy Bridge. Standard CPUs are frequency locked, while K-series parts ship fully unlocked. A new addition is the ability to adjust BCLK to one of three pre-defined straps (100/125/167MHz). The BCLK adjustment gives you a little more flexibility when overclocking, but you still need a K-SKU to take advantage of the options.
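To make the strap arithmetic concrete, here is a rough sketch that assumes the usual relationship of core clock = BCLK x CPU multiplier. The strap values are the nominal ones quoted above; the function names and the multiplier range are hypothetical, chosen only for illustration, and real boards will expose their own limits.

```python
# Nominal BCLK straps named in the article, in MHz.
BCLK_STRAPS_MHZ = (100, 125, 167)


def core_clock_ghz(bclk_mhz: float, multiplier: int) -> float:
    """Core clock in GHz, assuming core clock = BCLK (MHz) x multiplier."""
    return bclk_mhz * multiplier / 1000


def options_in_range(low_ghz: float, high_ghz: float, multipliers=range(8, 81)):
    """List (strap, multiplier, GHz) combinations that land in [low_ghz, high_ghz].

    The default multiplier range is an illustrative assumption, not a
    documented limit of any particular CPU or motherboard.
    """
    results = []
    for strap in BCLK_STRAPS_MHZ:
        for mult in multipliers:
            ghz = core_clock_ghz(strap, mult)
            if low_ghz <= ghz <= high_ghz:
                results.append((strap, mult, round(ghz, 3)))
    return results


if __name__ == "__main__":
    # Enumerate strap/multiplier pairs for a 4.3GHz - 4.7GHz target window.
    for strap, mult, ghz in options_in_range(4.3, 4.7):
        print(f"{strap} MHz x {mult} = {ghz} GHz")
```

The point of the sketch is simply that the higher straps reach the same target window with lower multipliers and in coarser steps, which is where the extra flexibility comes from.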

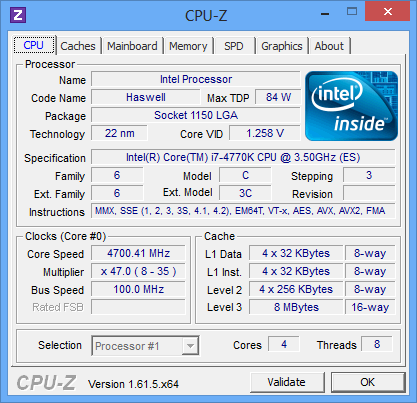

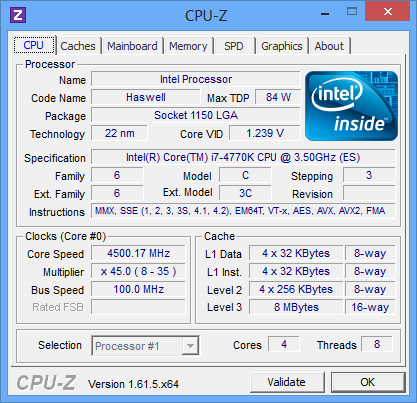

In terms of overclocking success on standard air cooling you should expect anywhere from 4.3GHz - 4.7GHz at somewhere in the 1.2 - 1.35V range. At the higher end of that spectrum you need to be sure to invest in a good cooler as you’re more likely to bump into thermal limits if you’re running on stable settings.

210 Comments


bji - Monday, June 3, 2013 - link

+10 false dichotomy. Look it up.

kenjiwing - Saturday, June 1, 2013 - link

Any reviews comparing this gen to a 980x??

Ryan Smith - Saturday, June 1, 2013 - link

It's available in Bench. http://www.anandtech.com/bench/Product/836?vs=142

owikh84 - Saturday, June 1, 2013 - link

4560K??? Not 4770K & 4670K?

karasaj - Saturday, June 1, 2013 - link

4670K is the Haswell equivalent of a 3570K.

hellcats - Saturday, June 1, 2013 - link

I read with some concern that the TSX instructions aren't going to be available on all SKUs. This is the main thing that I've been looking forward to on Haswell! Not providing the capability across the family is reminiscent of the 486SX/DX debacle. TSX could be huge for game physics as it would allow for far more consistent scaling. I know it is supposed to be backwards compatible, but what's the point of coding to it if it isn't always there?

zanon - Saturday, June 1, 2013 - link

Agreed, TSX is one of the most interesting parts of Haswell so I'm sorry not to see it get more discussion. And as you say (and like with VT-d or other tech) I think Intel is being stupid and self-defeating by trying to make it an artificial differentiator. Unlike general basics of a chip such as clock rate, cache, hyperthreading or raw execution resources, these sorts of features are only as valuable as the software that's coded for them, and nothing kills adoption amongst developers like "well maybe it'll be there but maybe not." If they can't depend on it, then it's not worth spending much extra time with, and that tremendously limits what it can be used for. That principle shows up over and over; it's why consoles can typically hold their own for so long. Even though on paper they get creamed, in reality developers are actually able to aim for 100% usage of all resources because there will never be any question about what is available.

For features like this Intel should aim for as broad adoption as possible, or what's the point? They can differentiate just fine with pure performance, power, and physical properties. Disappointing as always.

penguin42 - Saturday, June 1, 2013 - link

Agreed! I'd also be interested in seeing performance comparisons with a transactionally optimised piece of code.

Johnmcl7 - Saturday, June 1, 2013 - link

Definitely, I was a bit puzzled reading the review to find barely a mention of TSX when I thought it was meant to be one of the groundbreaking new features on Haswell. Even if there was only a synthetic benchmark for now it would be extremely interesting to see if it works anything like as well as promised.

John

bji - Sunday, June 2, 2013 - link

TSX is so esoteric in its applicability that I think you'd be very hard pressed to a) find a benchmark that could actually exercise it in a meaningful way and b) have any expectation that this benchmark would translate into any actual perceived performance gain in any application run by 99.999% of users.

In other words - TSX is only going to help performance in some very rare and obscure types of software that "normal" users will never even come close to using, let alone caring about the performance of.

However, I am intrigued by your speculation that TSX will be beneficial for physics simulation, which I guess could translate to perceivable performance increases for software that end users might actually use in the form of game physics. I found a paper that described techniques for using transactional memory to improve performance for physics simulation but it only found a 27% performance increase, which is not exactly earth shattering (I wouldn't call it "huge for game physics" personally).