NVIDIA GeForce GTX 770 Review: The $400 Fight

by Ryan Smith on May 30, 2013 9:00 AM EST

Compute

Jumping into compute, we aren’t expecting too much here. Outside of DirectCompute, GK104 is generally a poor compute GPU, and other than its clockspeed increase the GTX 770 doesn’t have much going for it.

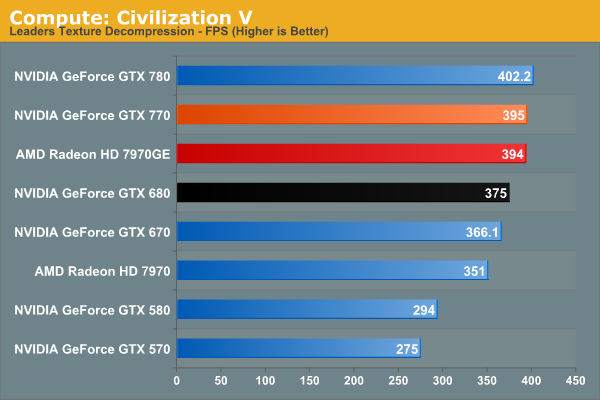

As always, we'll start with our DirectCompute game example, Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of its texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. While DirectCompute is used in many games, this is one of the only games with a benchmark that can isolate the use of DirectCompute and its resulting performance.

Civilization V at least shows that NVIDIA’s DirectCompute performance is up to snuff in this case. As with the GTX 780, though, we’re reaching the limits of what this benchmark can measure, simply because modern cards have become so fast.

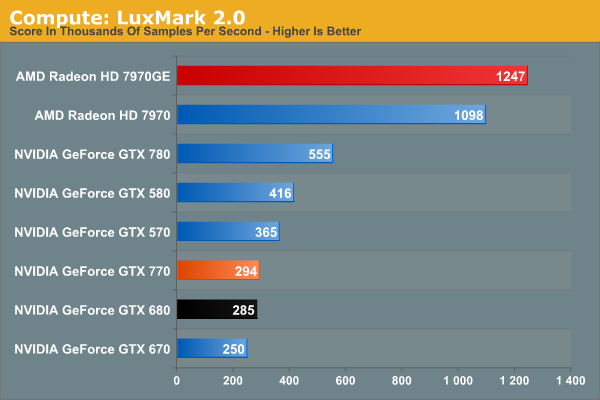

Our next benchmark is LuxMark 2.0, the official benchmark of SmallLuxGPU 2.0. SmallLuxGPU is an OpenCL-accelerated ray tracer that is part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years, as ray tracing maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

Moving on to a more general compute task, we get a reminder of how poor GK104 is here. GTX 770 can beat the slower GK104 products, and that’s it. Even GTX 570 is faster, never mind the massive lead that 7970GE holds.

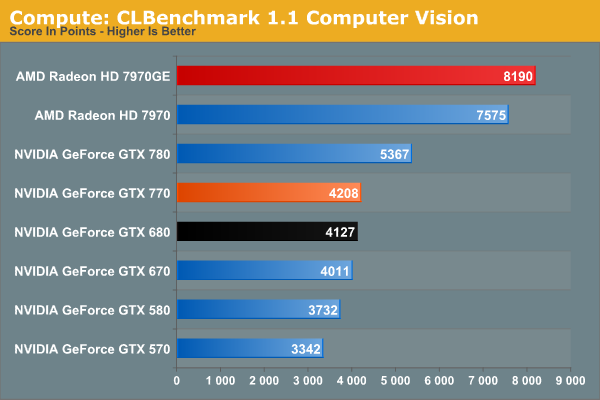

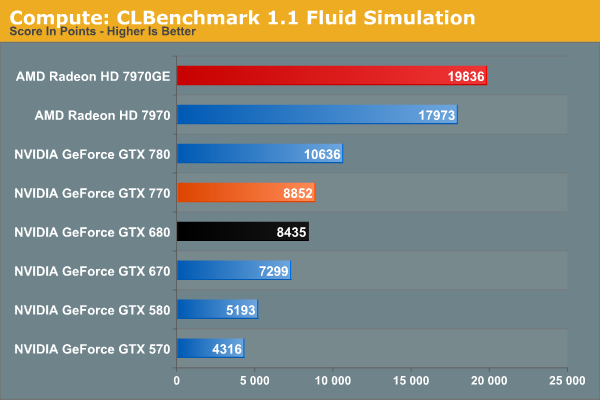

Our 3rd benchmark set comes from CLBenchmark 1.1. CLBenchmark contains a number of subtests; we’re focusing on the most practical of them, the computer vision test and the fluid simulation test. The former is a useful proxy for computer imaging tasks where systems are required to parse images and identify features (e.g. humans), while fluid simulations are common in professional graphics work and games alike.

CLBenchmark paints GTX 770 in a better light than LuxMark, but not by a great deal. The gains over the GTX 680 are minuscule since these benchmarks aren’t memory bandwidth limited, and the gap between it and the 7970GE is nothing short of enormous.

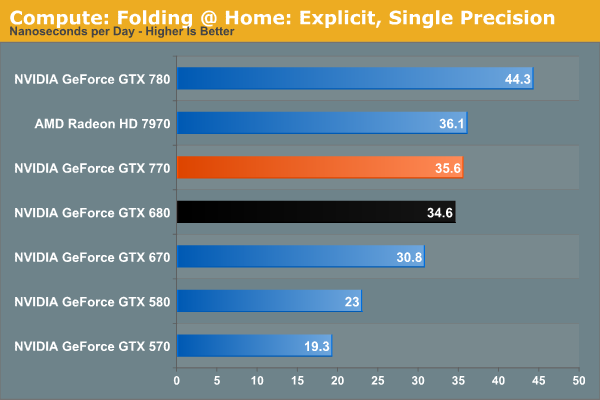

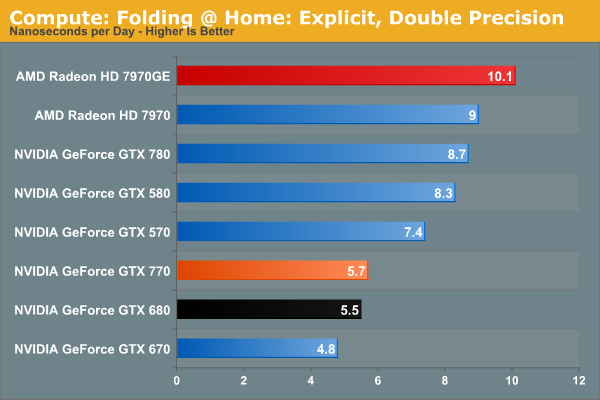

Moving on, our 4th compute benchmark is FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, as Folding @ Home has moved exclusively to OpenCL this year with FAHCore 17.

Recent core improvements in Folding @ Home continue to pay off for NVIDIA. In single precision the GTX 770 is just fast enough to hang with the 7970 vanilla, though the 7970GE is still over 10% faster. Double precision on the other hand is entirely in AMD’s favor thanks to GK104’s very poor FP64 performance.
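The size of the FP64 gap is easier to see in theoretical peak numbers than in benchmark scores. The sketch below is a back-of-the-envelope calculation, not a measurement, and every figure in it is an assumption taken from reference specifications: 1536 shaders at a 1085MHz boost clock for GTX 770, 2048 shaders at 1050MHz for the 7970GE, two FLOPs per shader per clock (one fused multiply-add), and FP64 rates of 1/24 for GK104 and 1/4 for Tahiti. Actual FAHBench throughput also depends on the workload, drivers, and sustained clocks.

```python
def peak_gflops(shaders: int, clock_mhz: float, rate: float = 1.0) -> float:
    """Theoretical peak GFLOPS: shaders x 2 FLOPs/clock (one FMA) x clock (GHz) x precision rate."""
    return shaders * 2 * clock_mhz / 1000 * rate


# Assumed reference specs: (shader count, boost clock in MHz, FP64:FP32 rate).
cards = {
    "GTX 770 (GK104)": (1536, 1085, 1 / 24),
    "Radeon HD 7970 GHz Edition (Tahiti)": (2048, 1050, 1 / 4),
}

for name, (shaders, clock, fp64_rate) in cards.items():
    fp32 = peak_gflops(shaders, clock)             # single precision peak
    fp64 = peak_gflops(shaders, clock, fp64_rate)  # double precision peak
    print(f"{name}: FP32 {fp32:.0f} GFLOPS, FP64 {fp64:.0f} GFLOPS")
```

On these assumptions the 7970GE's FP32 peak is only about 1.3x that of the GTX 770, while its FP64 peak is roughly 7.7x, which is consistent with the lopsided double precision results.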

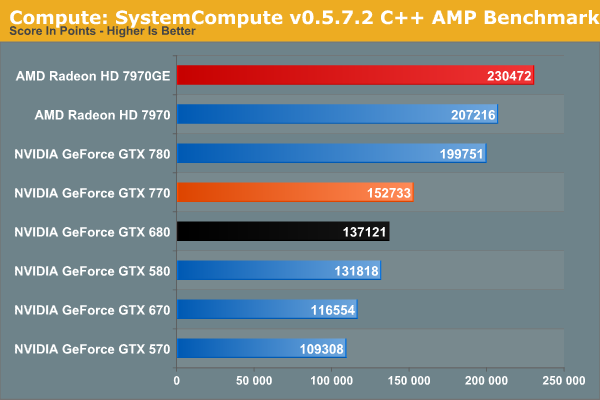

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, as described in this previous article, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

Unlike our other compute benchmarks, SystemCompute is at least a little bit memory bandwidth sensitive, so GTX 770 pulls ahead of GTX 680 by 11%. Otherwise, as in every other compute benchmark, AMD’s cards fare far better here.
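A quick way to sanity-check the bandwidth explanation is to compare the 11% SystemCompute gain against how much each resource actually grew. This sketch assumes reference-card specifications (GDDR5 at about 6 Gbps on GTX 680 versus about 7 Gbps on GTX 770, both on a 256-bit bus, and boost clocks of 1058MHz versus 1085MHz); factory-overclocked boards will differ, and the comparison is only indicative.

```python
def peak_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin data rate (Gbps) x bus width (bits) / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8


# Assumed reference-card specs; factory-overclocked boards will differ.
gtx680_bw = peak_bandwidth_gbs(6.008, 256)  # GDDR5 at ~6 Gbps on a 256-bit bus
gtx770_bw = peak_bandwidth_gbs(7.010, 256)  # GDDR5 at ~7 Gbps on the same bus width

bandwidth_gain = gtx770_bw / gtx680_bw - 1
core_clock_gain = 1085 / 1058 - 1  # assumed reference boost clocks in MHz (GTX 770 vs GTX 680)
observed_gain = 0.11               # SystemCompute lead quoted in the review

print(f"Peak bandwidth: GTX 680 {gtx680_bw:.1f} GB/s, GTX 770 {gtx770_bw:.1f} GB/s")
print(f"Bandwidth gain {bandwidth_gain:.1%}, boost clock gain {core_clock_gain:.1%}, "
      f"SystemCompute gain {observed_gain:.0%}")
```

The 11% gain lands well above the roughly 2.6% boost-clock bump but below the roughly 16.7% bandwidth increase, which is at least consistent with SystemCompute being partly bandwidth bound.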

117 Comments


Enkur - Thursday, May 30, 2013

Why is there a picture of Xbox One in the article when it's mentioned nowhere?

Razorbak86 - Thursday, May 30, 2013

The 2GB Question & The Test: "The wildcard in all of this will be the next-generation consoles, each of which packs 8GB of RAM, which is quite a lot of RAM for video operations even after everything else is accounted for. With most PC games being ports of console games, there’s a decent risk of 2GB cards being undersized when used with high resolutions and the highest quality art assets. The worst case scenario is only that these highest quality assets may not be usable at playable performance, but considering the high performance of every other aspect of GTX 770 that would be a distinct and unfortunate bottleneck."

kilkennycat - Thursday, May 30, 2013

NONE of the release offerings (May 30) of the GTX 770 on Newegg have the Titan cooler, regardless of the pictures in this article and on the GTX 7xx main page on Newegg!!!! And there's no bundled software to "ease the pain" and perhaps help mentally deaden the fan noise... and this product takes more power than the GTX 680. Early buyers beware!!

geok1ng - Thursday, May 30, 2013

"Having 2GB of RAM doesn’t impose any real problems today, but I’m left to wonder for how much longer that’s going to be true. The wildcard in all of this will be the next-generation consoles, each of which packs 8GB of RAM, which is quite a lot of RAM for video operations even after everything else is accounted for. With most PC games being ports of console games, there’s a decent risk of 2GB cards being undersized when used with high resolutions and the highest quality art assets."

Last week a noob posted something like that on the 780 review. It was decimated by a slew of tech geeks' comments afterward. I am surprised to see the same kind of reasoning in a text written by an AT expert.

All AT reviewers know by now that the next consoles will use an AMD APU with graphics muscle (almost) comparable to a 6670 (a 5670 in the PS4's case, thanks to GDDR5). So what Mr. Ryan Smith is stating is that an "8GB" 6670 can perform better than a 2GB 770 in video operations?

I am well aware that Mr. Ryan Smith is over-qualified to help AT readers revisit this old legend of graphics memory:

How little is too little?

And please, let us not start flaming about memory usage: most modern OSes and gaming engines use available RAM dynamically, so seeing a game use 90%+ of available graphics memory does not imply, at all, that the game would run faster if we doubled the graphics memory. Often the opposite is true.

As soon as 4GB versions of the 770 launch, AT should pit them against the 2GB 770 and the 3GB 7970. Or we could go back and re-read the tests done months ago when the 4GB versions of the 680 came out: only at triple-screen resolutions and insane levels of AA would we see any theoretical advantage of 3-4GB over 2GB, which is largely impractical since most games can't run at those resolutions and AA levels on a single card anyway.

I think NVIDIA did it right (again): 2GB is enough for today, and we won't see next-gen consoles running triple-screen resolutions at 16xAA+. 2GB means a lower BoM, which is good for profit and price competition, and less energy consumption, which is good for card temps and max OC results.

Enkur - Thursday, May 30, 2013

I can't believe AT is mixing up unified graphics and system memory on consoles with the dedicated RAM of a graphics card. It doesn't make sense.

Egg - Thursday, May 30, 2013

PS4 has 8GB of GDDR5 and a GPU somewhat close to a 7850. I don't know where you got your facts from.

geok1ng - Thursday, May 30, 2013

Just to start the flame war: the next consoles will not run on monolithic GPUs, but on twin Jaguar cores. So when you see those 768/1152 GPU core numbers, remember these are "crossfired" cores. And in both consoles the GPU is running at a mere 800MHz, hence the comparison with the 5670/6670, 480-shader cards at 800MHz. It is widely accepted that console games are developed for the lowest common denominator, in this case the Xbox One's DDR3 memory. Even if we make the huge assumption that dual Jaguar cores running in tandem can work similarly to a 7850 (1024 cores at 860MHz) in a PS4 (which is a huge leap of faith, looking back at how badly AMD fared in previous CrossFire attempts using integrated GPUs like these Jaguar cores), the question remains the same:

Does an 8GB 7850 give us better graphical results than a 2GB 770, for any gaming application in the foreseeable future?

Please don't bring up 4K on me: both consoles will be using HDMI, not DisplayPort, and no, they won't be able to drive games across 3 screens. This "next-gen consoles will have more video RAM than high-end GPUs in PCs, so their games will be better" line reminds me of the old "1GB DDR2 cards are better than 256MB DDR3 cards for future games" scam.

Ryan Smith - Thursday, May 30, 2013

We're aware of the difference. A good chunk of that unified memory is going to be consumed by the OS, the application, and other things that typically reside on the CPU in a PC. But we're still expecting games to be able to load 3GB+ in assets, which would be a problem for 2GB cards.

iEATu - Thursday, May 30, 2013

Why are you guys using FXAA in benchmarks as high-end as these? Especially in games like BF3, where you have FPS over 100. 4x AA for 1080p and 2x for 1440p. No question those look better than FXAA...

Ryan Smith - Thursday, May 30, 2013

In BF3 we're testing both FXAA and MSAA. Otherwise most of our other tests are MSAA, except for Crysis 3 which is FXAA only for performance reasons.