NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Sleeping Dogs

Another Square Enix game, Sleeping Dogs is one of the few open world games to be released with any kind of benchmark, giving us a rare opportunity to test an open world game. Like most console ports, Sleeping Dogs’ base assets are not especially demanding, but the game makes up for it with an interesting anti-aliasing implementation: a mix of FXAA and SSAA that at its highest settings does an impeccable job of removing jaggies. However, because SSAA effectively renders the game world multiple times over, it can also require a very powerful video card to drive these high AA modes.
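The cost of supersampling scales with the number of samples shaded per output pixel. The following is a minimal sketch under an idealized SSAA model in which every sample is fully shaded, ignoring the FXAA pass and fixed per-frame costs. The function names are hypothetical, and the sample counts are illustrative rather than Sleeping Dogs' actual AA settings:

```python
import math


def ssaa_render_resolution(width: int, height: int, samples: int) -> tuple[int, int]:
    """Internal render size for `samples` shaded samples per output pixel.

    Hypothetical model: samples are spread evenly over both axes, so each
    axis is scaled by sqrt(samples) (2x2 ordered-grid SSAA -> samples=4).
    """
    if samples < 1:
        raise ValueError("samples must be >= 1")
    axis_scale = math.sqrt(samples)
    return round(width * axis_scale), round(height * axis_scale)


def ssaa_shaded_samples(width: int, height: int, samples: int) -> int:
    """Pixel-shading work per frame, ignoring FXAA and fixed per-frame costs."""
    if samples < 1:
        raise ValueError("samples must be >= 1")
    return width * height * samples


if __name__ == "__main__":
    native = ssaa_shaded_samples(2560, 1440, 1)
    for samples in (1, 2, 4):
        work = ssaa_shaded_samples(2560, 1440, samples)
        w, h = ssaa_render_resolution(2560, 1440, samples)
        print(f"{samples} samples/pixel: ~{w}x{h} internal, {work / native:.0f}x shading work")
```

Under this model, 4 samples per pixel at 2560x1440 means shading a 5120x2880 image every frame, four times the native work, which is why high SSAA modes lean so heavily on shader throughput.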

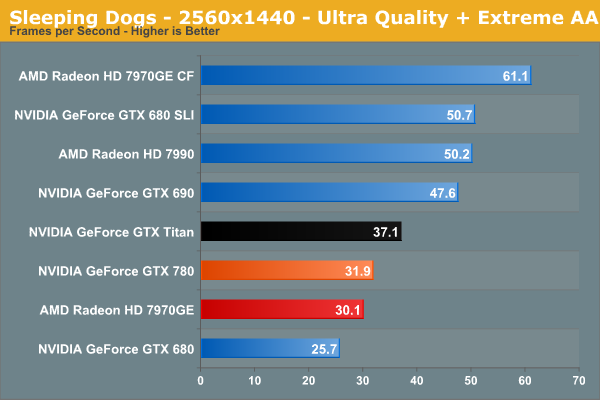

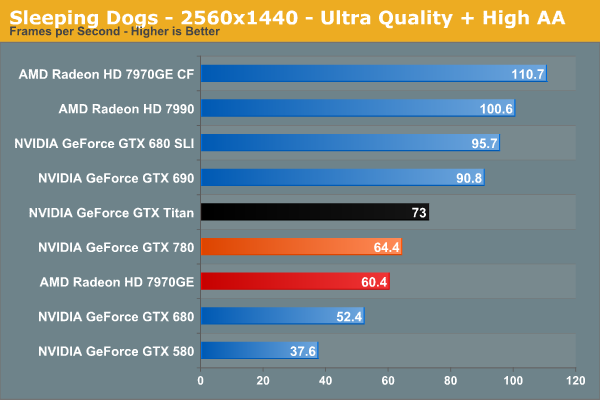

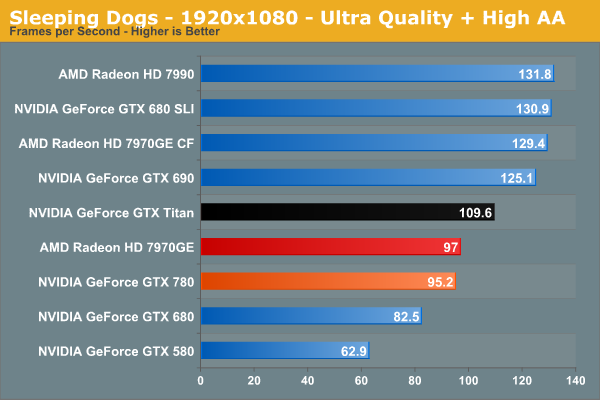

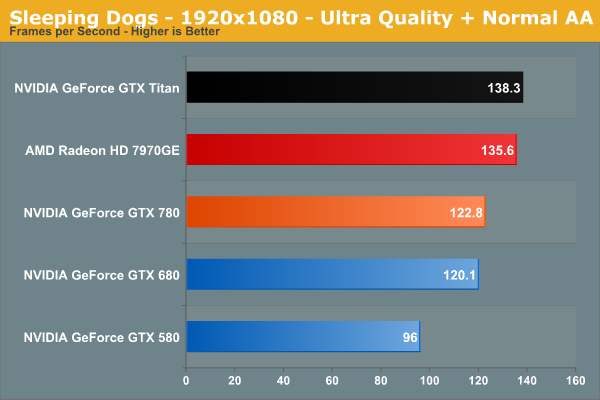

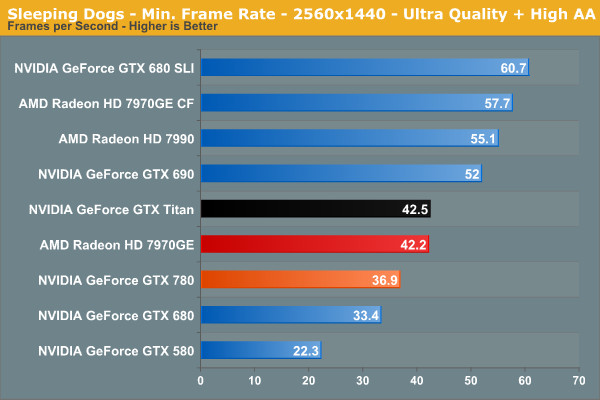

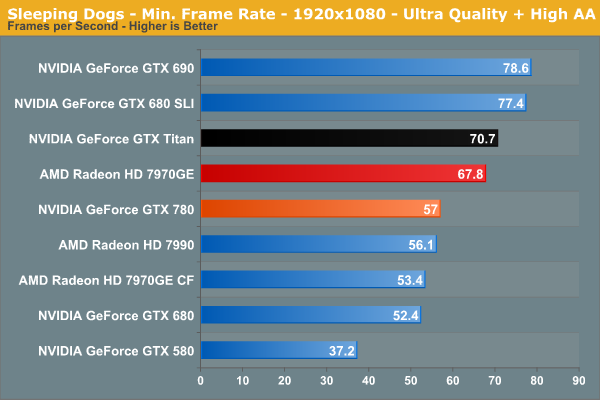

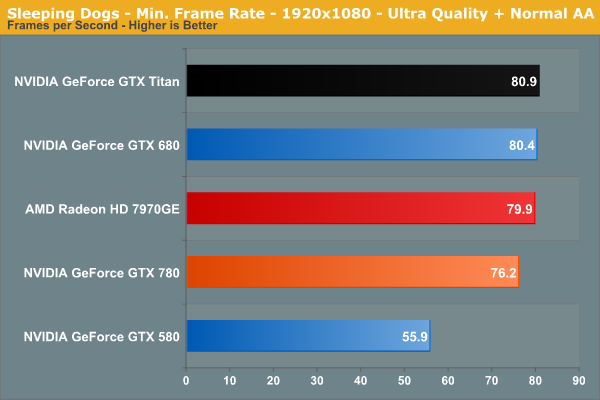

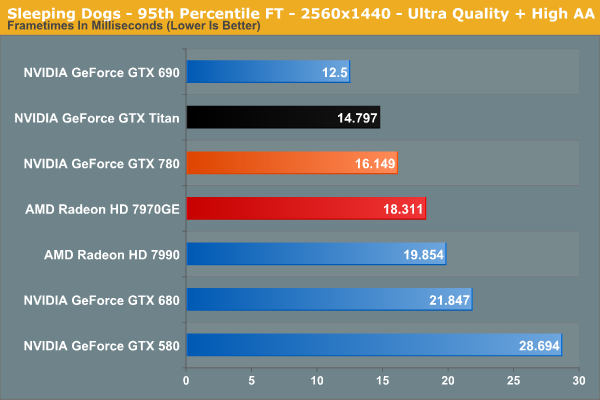

Sleeping Dogs is another game where the GTX 780 fills a gap, but it falls closer to the 7970GE than NVIDIA would like to see. At 64.4fps it’s fast enough to crash past 60fps at 2560 with high AA, but it’s a narrower win for the GTX 780, which beats the GTX 680 by 23% but the 7970GE by just 7%. Meanwhile the GTX 780 trails the GTX Titan by 12%.
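Percentage comparisons like these depend on which card is treated as the baseline, and the text does not state the baseline for each figure. A minimal sketch of the two usual conventions, with hypothetical helper names and hypothetical framerates rather than the charted results:

```python
def percent_lead(a_fps: float, b_fps: float) -> float:
    """How much faster A is than B, measured against B: (A/B - 1) * 100."""
    return (a_fps / b_fps - 1.0) * 100.0


def percent_deficit(a_fps: float, b_fps: float) -> float:
    """How much slower A is than B, measured against B: (1 - A/B) * 100."""
    return (1.0 - a_fps / b_fps) * 100.0


def baseline_from_lead(a_fps: float, lead_pct: float) -> float:
    """Back-calculate B's framerate from A's framerate and A's lead over B."""
    return a_fps / (1.0 + lead_pct / 100.0)


if __name__ == "__main__":
    # Hypothetical cards: the same pair of results reads as a 10% lead one way
    # and a ~9.1% deficit the other way, depending on which card is the baseline.
    print(f"lead:    {percent_lead(110.0, 100.0):.1f}%")
    print(f"deficit: {percent_deficit(100.0, 110.0):.1f}%")
```

Because the baseline changes, a card that leads another by X% trails it by less than X% when the comparison is flipped; for gaps of roughly 10 to 12%, the two readings differ by about a percentage point.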

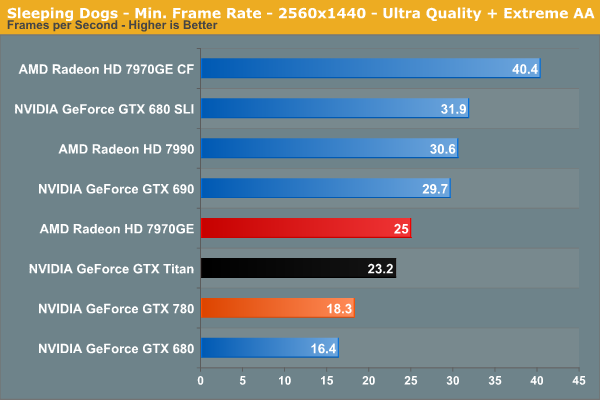

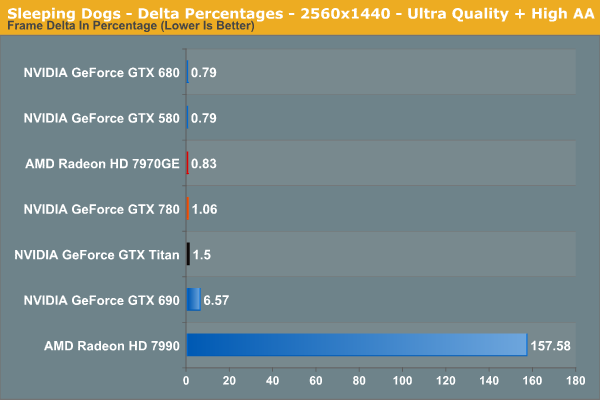

The minimum framerates, though not bad on their own, do the GTX 780 no favors, and here we see the GTX 780 fall behind the 7970GE by over 10%. Interestingly, this is just about an all-around worst-case scenario for the GTX 780: it trails the GTX Titan by almost the full 15% theoretical shader/texture performance gap, and its lead over the GTX 680 is only 10%. Sleeping Dogs’ use of SSAA in its higher anti-aliasing modes is very hard on the shaders, and this is a prime example of where the GTX 780’s weak spot will be relative to the GTX Titan.

155 Comments


milkman001 - Friday, May 24, 2013

FYI, on the "Total War: Shogun 2" page, you have the 2560x1440 graph posted twice.

JDG1980 - Saturday, May 25, 2013

I don't think that the release of this card itself is problematic for Titan owners. Everyone knows that GPU vendors start at the top and work their way down with new silicon, so this shouldn't have come as much of a surprise.

What I do find problematic is their refusal to push out BIOS-based fan controller improvements to Titan owners. *That* comes off as a slap in the face. When someone spends $1000 on a new video card, they deserve top-notch service and updates.

inighthawki - Saturday, May 25, 2013

The typical swapchain format is something like R8G8B8A8, and the alpha channel is usually ignored (0xFF is typically written), because it is of no use to the OS: the swapchain will not alpha blend with the rest of the GUI. You can create a 24-bit format, but it's very likely that, for performance reasons, the driver will allocate it as if it were a 32-bit format and simply not write to the upper 8 bits. The hardware is often only capable of writing to 32-bit aligned addresses, so it's more beneficial for the hardware to just waste 8 bits of data than to do any fancy shifting to read or write each pixel. I've actually seen cases where some drivers will allocate 8-bit formats as 32-bit formats, using 4x the space the user thought they were allocating.

jeremyshaw - Saturday, May 25, 2013

As a current GTX 580 owner running at 2560x1440, I don't feel any need to upgrade, especially for compute performance. I think I'll hold out for at least one more generation before deciding.

ahamling27 - Saturday, May 25, 2013

As a GTX 560 Ti owner, I am chomping at the bit to pick up an upgrade. The Titan was out of the question, but the 780 looks a lot better at 65% of the cost for 90% of the performance. The only thing holding me back is that I'm still on Z67 with a 2600K overclocked to 4.5 GHz. I don't see a need to rebuild my entire system, as it's almost on par with Z77/3770. The problem is that I'm still on PCIe 2.0, and I'm worried that it would bottleneck a 780.

Considering that the 780 is aimed at those of us with 5xx or older cards, it wouldn't make sense if we had to abandon our platform just to upgrade our graphics card. So could you maybe test a 780 on PCIe 2.0 vs. 3.0 and let us know whether it's going to bottleneck on 2.0?

Ogdin - Sunday, May 26, 2013

There will be no bottleneck.

mapesdhs - Sunday, May 26, 2013

Ogdin is right, it shouldn't be a bottleneck. And with a decent air cooler, you ought to be able to get your 2600K to 5.0 GHz, so you have some headroom there as well.

Lastly, you say you have a GTX 560 Ti. Are you aware that adding a 2nd card will give performance akin to a GTX 670? And two 560 Tis oc'd are better than a stock 680 (VRAM capacity notwithstanding, i.e. I'm assuming you have a 1GB card). Here's my 560 Ti SLI at stock:

http://www.3dmark.com/3dm11/6035982

and oc'd:

http://www.3dmark.com/3dm11/6037434

So, if you don't want the expense of an all-new card at the cost level of a 780 for a while, but do want some extra performance in the meantime, then just get a 2nd 560 Ti (good prices on eBay these days); it will run very nicely indeed. My two Tis were only 177 UKP total, less than half the cost of a 680, though at the moment I just run them at stock speed since I don't need the extra from an oc. The only caveat is VRAM, but that shouldn't be too much of an issue unless you're running at 2560x1440, etc.

Ian.

ahamling27 - Wednesday, May 29, 2013

Thanks for the reply! I thought about SLI, but ultimately the 1 GB of VRAM is really going to hurt going forward. I'm not going to grab a 780 right away, because I want to see what custom models come out in the next few weeks. Although EVGA's ACX cooler looks nice, I just want to see some performance numbers before I bite the bullet.

Thanks again!

inighthawki - Tuesday, May 28, 2013

Your comment is inaccurate. Just because a game requires "only 512MB" of video RAM doesn't mean that's all it'll use. Video memory can be streamed in on the fly from the hard drive as needed, so a game can easily use a lot more as a performance optimization if it wants to. I would not be the least bit surprised to see games on next-gen consoles using WAY more video memory than regular memory. Running a game that "requires" 512MB of VRAM on a GPU with 4GB of VRAM gives it 3.5GB more storage to cache higher resolution textures.

AmericanZ28 - Tuesday, May 28, 2013

NVIDIA=FAIL....AGAIN! The 780 performs on par with a 7970GE, yet the GE costs $100 LESS than the 680 and $250 LESS than the 780.