Choosing a Gaming CPU: Single + Multi-GPU at 1440p, April 2013

by Ian Cutress on May 8, 2013 10:00 AM EST

One question when building or upgrading a gaming system is which CPU to choose - does it matter if I have a quad core from Intel, or a quad module from AMD? Perhaps something simpler will do the trick, and I can spend the difference on the GPU. What if you are running a multi-GPU setup, does the CPU have a bigger effect? This was the question I set out to help answer.

A few things before we start:

This set of results is by no means extensive or exhaustive. For the sake of expediency I could not select 10 different gaming titles across a variety of engines and then test them in seven or more different configurations per game and per CPU, nor could I test every different CPU made. As a result, on the gaming side, I limited myself to one resolution, one set of settings, and four very regular testing titles that offer time demos: Metro 2033, DiRT 3, Civilization V and Sleeping Dogs. This is obviously not Skyrim, Battlefield 3, Crysis 3 or Far Cry 3, which may be more relevant in your setup.

The arguments for and against time demo testing as well as the arguments for taking FRAPs values of sequences are well documented (time demos might not be representative vs. consistency and realism of FRAPsing a repeated run across a field), however all of our tests can be run on home systems to get a feel for how a system performs. Below is a discussion regarding AI, one of the common usages for a CPU in a game, and how it affects the system. Out of our benchmarks, DiRT 3 plays a game, including AI in the result, and the turn-based Civilization V has no concern for direct AI except for time between turns.

All this combines with my unique position as the motherboard senior editor here at AnandTech – the position gives me access to a wide variety of motherboard chipsets, lane allocations and a fair number of CPUs. GPUs are not necessarily in large supply on my side of the reviewing area, but ASUS and ECS have provided my test beds with HD7970s and GTX580s respectively, which have been an essential part of my test bed for 12 and 21 months. The task set before me in this review would be almost a career in itself if we were to expand to more GPUs and more multi-GPU setups. Thus testing up to 4x 7970 and up to 2x GTX 580 is a more than reasonable place to start.

Where It All Began

The most important point to note is how this set of results came to pass. Several months ago I came across a few sets of testing by other review websites that floored me – simple CPU comparison tests for gaming which were spreading like wildfire among the forums, and some results contradicted the general prevailing opinion on the topic. These results were pulling all sorts of lurking forum users out of the woodwork to have an opinion, and being the well-adjusted scientist I am, I set forth to confirm the results were, at least in part, valid.

What came next was a shock – some had no real explanation of the hardware setups. While the basic overview of hardware was supplied, there was no run down of settings used, and no attempt to justify the findings which had obviously caused quite a stir. Needless to say, I felt stunned by the lack of detailed testing, as well as by both the results and a lot of the conversation that followed, particularly from avid fans of Team Blue and Team Red. I planned to right this wrong the best way I know how – with science!

The other reason for pulling together the results in this article is perhaps the one I originally started with – the need to update drivers every so often. Since the Ivy Bridge release, I have been using Catalyst 12.3 and GeForce 296.10 WHQL on my test beds. This causes problems – older drivers are not optimized, readers sometimes complain if older drivers are used, and new games cannot be added to the test bed because they might not scale correctly due to the older drivers. So while there are some reviews on the internet that update drivers between testing and keep the old numbers (leading to skewed results), taking time out to retest a number of platforms for more data points solely on the new drivers is a large undertaking.

For example, testing new drivers over six platforms (CPU/motherboard combinations) would mean: six platforms, four games, seven different GPU configurations, ~10 minutes per test plus 2+ hours to set up each platform and install a new OS/drivers/set up benchmarks. That makes 40+ hours of solid testing (if all goes without a second lost here or there), or just over a full working week – more if I also test the CPU performance for a computational benchmark update, or exponentially more if I include multiple resolutions and setting options.
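As a rough check on that figure, the arithmetic can be written out. This is only a back-of-envelope sketch: it uses the numbers quoted above, takes "~10 minutes" and "2+ hours" at face value, and the function name `estimate_hours` is made up for illustration.

```python
def estimate_hours(platforms, games, gpu_configs,
                   minutes_per_test, setup_hours_per_platform):
    """Return (benchmark hours, setup hours, total hours) for one retest pass."""
    benchmark_hours = platforms * games * gpu_configs * minutes_per_test / 60
    setup_hours = platforms * setup_hours_per_platform
    return benchmark_hours, setup_hours, benchmark_hours + setup_hours


# Figures taken from the paragraph above; the setup time is the stated lower bound.
bench, setup, total = estimate_hours(
    platforms=6, games=4, gpu_configs=7,
    minutes_per_test=10, setup_hours_per_platform=2,
)
print(f"benchmarks: {bench:.0f} h, setup: {setup:.0f} h, total: {total:.0f} h")
```

With these inputs the benchmark runs account for 28 hours and the platform setup for 12, which is where the 40+ hour figure comes from.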

If this is all that is worked on that week, it means no new content – so it happens rarely, perhaps once a year or before a big launch. This time was now, and when I started this testing, I was moving to Catalyst 13.1 and GeForce 310.90, which by the time this review goes live will have already been superseded! In reality, I have been slowly working on this data set for the best part of 10 weeks while also reviewing other hardware (but keeping those reviews with consistent driver comparisons). In total this review encapsulates 24 different CPU setups, with up to 6 different GPU configurations, meaning 430 data points, 1375 benchmark loops and over 51 hours in just GPU benchmarks alone, without considering setup time or driver issues.

What Does the CPU do in a Game?

A lot of game developers use customized versions of game engines, such as the EGO engine for driving games or the Unreal engine. The engine provides the underpinnings for a lot of the code, and the optimizations therein. The engine also decides what in the game gets offloaded onto the GPU.

Imagine the code that makes up the game as a linear sequence of events. In order to go through the game quickly, we need the fastest single core processor available. Of course, games are not like this – lots of the game can be parallelized, such as vector calculations for graphics. These were of course the first to be moved from CPU to the GPU. Over time, more parts of the code have made the move – physics and compute being the main features in recent months and years.

The GPU is good at independent, simple tasks – calculating which color is in which pixel is an example of this, along with additional processing and post-processing features (FXAA and so on). If a task is linear, it lives on the CPU, such as loading textures into memory or negotiating which data to transfer between the memory and the GPUs. The CPU also takes control of independent complex tasks, as the CPU is the one that can make complicated logic analysis.

Very few parts of a game come under this heading of ‘independent yet complex’. Anything suitable for the GPU but not ported over will be here, and the big one usually quoted is artificial intelligence. Deciding where an NPC is going to run, shoot or fly could be considered a very complex set of calculations, ideal for fast CPUs. The counter argument is that games have had complex AI for years – the number of times I personally was destroyed by a Dark Sim on Perfect Dark on the N64 is testament to either my uselessness or the fact that complex AI can be configured with not much CPU power. AI is unlikely to be a limiting factor in frame rates due to CPU usage.

What is most likely going to be the limiting factor is how the CPU can manage data. As engines evolve, they try to move data between the CPU, memory and GPUs less often – if textures can be kept on the GPU, then they will stay there. But some engines are not as perfect as we would like them to be, resulting in the CPU as the limiting factor. As CPU performance increases, and those that write the engines in which games are made understand the ecosystem, CPU performance should be less of an issue over time. All roads point towards the PS4 of course, and its 8-core Jaguar processor. Is this all that is needed for a single GPU, albeit in an HSA environment?

Multi-GPU Testing

Another angle I wanted to test beyond most other websites is multi-GPU. There is content online dealing mostly with single GPU setups, with a few for dual GPU. Even though the number of multi-GPU users is actually quite small globally, the enthusiast markets are clearly geared for it. We get motherboards with support for four GPU cards; we have cases that will support a dual processor board as well as four double-height GPUs. Then there are GPUs being released with two sets of silicon on a PCB, wrapped in a double or triple width cooler.

More often than not on a forum, people will ask ‘what GPU for $xxx’ and some of the suggestions will be towards two GPUs at half the budget, as it commonly offers more performance than a single GPU if the game and the drivers all work smoothly (at the cost of power, heat, and bad driver scenarios). The ecosystem supports multi-GPU setups, so I felt it right to test at least one four-way setup. Although with great power comes great responsibility – there was no point testing 4-way 7970s on 1080p.

Typically in this price bracket, users will go for multi-monitor setups, along the lines of 5760x1080, or big monitor setups like 1440p, 1600p, or the mega-rich might try 4K. Ultimately the high end enthusiast, with cash to burn, is going to gravitate towards 4K, and I cannot wait until that becomes a reality. So for a median point in all of this, we are testing at 1440p and maximum settings. This will put the strain on our Core 2 Duo and Celeron G465 samples, but should be easy pickings for our multi-processor, multi-GPU beast of a machine.

A Minor Problem In Interpreting Results

Throughout testing for this review, there were clearly going to be some issues to consider. Chief among these is the question of consistency, and in particular what to do if something like Metro 2033 decides to have an ‘easy’ run which reports 3% higher than normal. For that specific example we get around this by double testing, as the easy run typically appears in the first batch – so we run two or three batches of four and disregard the first batch.

The other, perhaps bigger, issue is interpreting results. If I get 40.0 FPS on a Phenom II X4-960T, 40.1 FPS on an i5-2500K, and then 40.2 FPS on a Phenom II X2-555 BE, does that make the results invalid? The important points to recognize here are statistics and system state.

System State: We have all had times booting a PC when it feels sluggish, but this sluggish behavior disappears on reboot. The same thing can occur with testing, and usually happens as a result of bad initialization or a bad cache optimization routine at boot time. As a result, we try and spot these circumstances and re-run. With more time we would take 100 different measurements of each benchmark, with reboots, and cross out the outliers. Time constraints outside of academia unfortunately do not give us this opportunity.

Statistics: System state aside, frame rate values will often fluctuate around an average. This will mean (depending on the benchmark) that the result could be +/- a few percentage points on each run. So what happens if you have a run of four time demos, and each of them is +2% above the ‘average’ FPS? From the outside, as you will not know the true average, you cannot say if it is valid as the data set is extremely small. If we take more runs, we can find the variance (the technical version of the term), the standard deviation, and perhaps represent the mean, median and mode of a set of results.
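To make those terms concrete, here is a minimal sketch using Python's standard `statistics` module. The FPS values are invented for illustration and are not results from this review; `summarize` is a hypothetical helper name.

```python
import statistics


def summarize(fps_runs):
    """Summary statistics for a handful of benchmark runs."""
    return {
        "n": len(fps_runs),
        "mean": statistics.mean(fps_runs),
        "median": statistics.median(fps_runs),
        # Sample variance and standard deviation (n - 1 in the denominator),
        # the usual choice when the runs are a small sample of all possible runs.
        "variance": statistics.variance(fps_runs),
        "stdev": statistics.stdev(fps_runs),
    }


# Hypothetical four-run batch; NOT measured data.
runs = [40.0, 40.8, 39.6, 40.4]
for name, value in summarize(runs).items():
    print(f"{name}: {value:.3f}")
```

With only four runs the standard deviation is itself poorly determined, which is the point made above: the fewer the runs, the less the reported average can be trusted.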

As always, the main constraint in articles like these is time – the quicker to publish, the less testing, the larger the error bars and the higher likelihood that some results are going to be skewed because it just so happened to be a good/bad benchmark run. So the example given above of the X2-555 getting a better result is down to interpretation – each result might be +/- 0.5 FPS on average, and because they are all pretty similar we are actually more GPU limited. So it is more whether the GPU has a good/bad run in this circumstance.

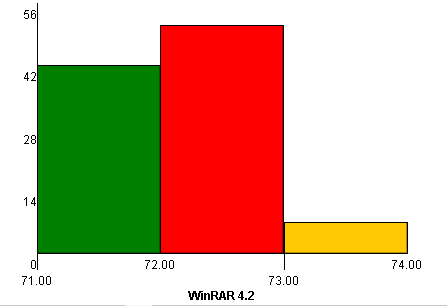

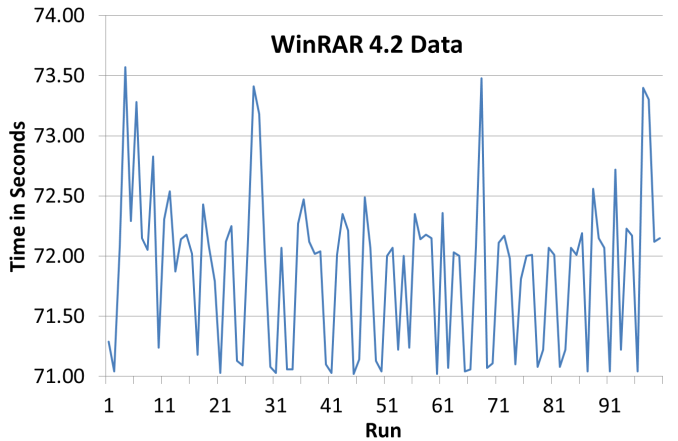

For this example, I batched 100 runs of my common WinRAR test in motherboard testing, on an i5-2500K CPU with a Maximus V Formula. Results varied between 71 seconds and 74 seconds, with a large gravitation towards the lower end. To represent this statistically, we normally use a histogram, which separates the results up into ‘bins’ (e.g. 71.00 seconds to 71.25 seconds) of how accurate the final result has to be. Here is an initial representation of the data (time vs. run number), and a few histograms of that data, using a bin size of 1.00 s, 0.75s, 0.5s, 0.33s, 0.25s and 0.1s.

As we get down to the lower bin sizes, there is a pair of large groupings of results between ~71 seconds and ~ 72 seconds. The overall average/mean of the data is 71.88 due to the outliers around 74 seconds, with the median at 72.04 seconds and standard deviation of 0.660. What is the right value to report? Overall average? Peak? Average +/- standard deviation? With the results very skewed around two values, what happens if I do 1-3 runs and get ~71 seconds and none around ~72 seconds?
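The binning step itself is simple enough to sketch. The snippet below is an illustration only: the timings are made up (they are not the 100 WinRAR runs), and `histogram` is a hypothetical helper rather than the tool used to produce the charts.

```python
import math
from collections import Counter


def histogram(samples, bin_width, origin=0.0):
    """Count samples per bin; each bin covers [start, start + bin_width)."""
    counts = Counter()
    for x in samples:
        counts[math.floor((x - origin) / bin_width)] += 1
    # Key each bin by its lower edge, rounded to hide floating point noise.
    return {round(origin + i * bin_width, 6): counts[i] for i in sorted(counts)}


# Invented timings in seconds, clustered near 71 s and 72 s with one outlier.
times = [71.1, 71.2, 71.3, 71.9, 72.0, 72.1, 72.1, 74.0]
for width in (1.0, 0.5, 0.25):
    print(width, histogram(times, width, origin=71.0))
```

Running it with progressively smaller `bin_width` values shows the same effect as the charts: wide bins merge the two clusters, while narrow bins separate them at the cost of fewer samples per bin.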

Statistics is clearly a large field, and without a large sample size, most numbers can be one-off results that are not truly reflective of the data. It is important to ask yourself every time you read a review with a result – how many data points went into that final value, and what analysis was performed?

For this review, we typically take four runs of our GPU tests each, except Civilization V which is extremely consistent +/- 0.1 FPS. The result reported is the average of those four values, minus any results we feel are inconsistent. At times runs have been repeated in order to confirm the value, but this will not be noted in the results.

The Bulldozer Challenge

Another purpose of this article was to tackle the problem surrounding Bulldozer and its derivatives, such as Piledriver and thus all Trinity APUs. The architecture is such that Windows 7, by default, does not accurately assign new threads to new modules – the ‘freshly installed’ stance is to double up on threads per module before moving to the next. By installing a pair of Windows Updates (which do not show in Windows Update automatically), we get an effect called ‘core parking’, which assigns the first series of threads each to its own module, giving it access to a pair of INT and an FP unit, rather than having pairs of threads competing for the prize. This affects variable threaded loading the most, particularly from 2 to 2N-2 threads where N is the number of modules in the CPU (thus 2 to 6 threads in an FX-8150). It should come as no surprise that games fall into this category, so we want to test with and without the entire core parking features in our benchmarks.
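The 2 to 2N-2 range can be checked with a toy model. This is a deliberately simplified sketch, not a description of the actual Windows scheduler: it assumes each module holds at most two threads, and the two policy functions (`fill_first`, `spread_first`) are hypothetical names for the behaviors described above.

```python
def fill_first(threads, modules):
    """'Freshly installed' behavior: double up on a module before moving on."""
    if threads > 2 * modules:
        raise ValueError("toy model allows at most two threads per module")
    load = [0] * modules
    for t in range(threads):
        load[t // 2] += 1
    return load


def spread_first(threads, modules):
    """Updated behavior: one thread per module first, then double up."""
    if threads > 2 * modules:
        raise ValueError("toy model allows at most two threads per module")
    load = [0] * modules
    for t in range(threads):
        load[t % modules] += 1
    return load


def shared_modules(load):
    """Number of modules where two threads compete for the shared resources."""
    return sum(1 for n in load if n >= 2)


modules = 4  # e.g. an FX-8150, per the text
for threads in range(1, 2 * modules + 1):
    a = shared_modules(fill_first(threads, modules))
    b = shared_modules(spread_first(threads, modules))
    print(threads, "threads: shared modules", a, "vs", b)
```

In this model the two policies differ in the number of contended modules only for 2 to 6 threads on a four-module part, which matches the range quoted above.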

Hurdles with NVIDIA and 3-Way SLI on Ivy Bridge

Users who have been keeping up to date with motherboard options on Z77 will understand that there are several ways to put three PCIe slots onto a motherboard. The majority of sub-$250 motherboards will use three PCIe slots in a PCIe 3.0 x8/x8 + PCIe 2.0 x4 arrangement (meaning x8/x8 from the CPU and x4 from the chipset), allowing either two-way SLI or three-way Crossfire. Some motherboards will use a different Ivy Bridge lane allocation option such that we have a PCIe 3.0 x8/x4/x4 layout, giving three-way Crossfire but only two-way SLI. In fact in this arrangement, fitting the final x4 with a sound/raid card disables two-way SLI entirely.

This is due to a not widely publicized requirement of SLI – it needs at least an x8 lane allocation in order to work (either PCIe 2.0 or 3.0). Anything less than this on any GPU and you will be denied in the software. So putting in that third card will cause the second slot to drop to x4, disabling two-way SLI. There are motherboards that have a switch to change to x8/x8 + x4 in this scenario, but we are still capped at two-way SLI.
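The rule as described here reduces to a one-line check. The sketch below only encodes the x8 minimum stated above; `sli_allowed` is a hypothetical helper, not an NVIDIA API, and the real driver may apply further conditions.

```python
MIN_SLI_LANES = 8  # per the text: every GPU needs at least an x8 link


def sli_allowed(gpu_lanes):
    """True if two or more GPUs are present and each has at least x8."""
    return len(gpu_lanes) >= 2 and all(n >= MIN_SLI_LANES for n in gpu_lanes)


# Lane widths seen by each GPU in the layouts discussed above.
print(sli_allowed([8, 8]))     # two GPUs on an x8/x8 split
print(sli_allowed([8, 8, 4]))  # third GPU on the chipset x4 slot
print(sli_allowed([8, 4]))     # x8/x4/x4 board with the last slot occupied
```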

The only way to go onto 3-way or 4-way SLI is via a PLX 8747 enabled motherboard, which greatly increases the cost of a motherboard build. This should be kept in mind when dealing with the final results.

Power Usage

It has come to my attention that even if the results were to come out X > Y, some users may call out that the better processor draws more power, which at the end of the day costs more money if you add it up over a year. For the purposes of this review, we are of the opinion that if you are gaming on a budget, then high-end GPUs such as the ones used here are not going to be within your price range.

Simple fun gaming can be had on a low resolution, limited detail system for not much money – for example at a recent LAN I went to I enjoyed 3-4 hours of TF2 fun on my AMD netbook with integrated HD3210 graphics, even though I had to install the ultra-low resolution texture pack and mods to get 30+ FPS. But I had a great time, and thus the beauty of high definition graphics of the bigger systems might not be of concern as long as the frame rates are good.

But if you want the best, you will pay for the best, even if it comes at the electricity cost. Budget gaming is fine, but this review is designed to focus on 1440p with maximum settings, which is not a budget gaming scenario.

Format Of This Article

On the next couple of pages, I will be going through in detail our hardware for this review, including CPUs, motherboards, GPUs and memory. Then we will move to the actual hardware setups, with CPU speeds and memory timings (with motherboards that actually enable XMP) detailed. Also important to note are the motherboards being used – for completeness I have tested several CPUs in two different motherboards because of GPU lane allocations.

We are living in an age where PCIe switches and additional chips are used to expand GPU lane layouts, so much so that there are up to 20 different configurations for Z77 motherboards alone. Sometimes the lane allocation makes a difference, and it can make a large difference using three or more GPUs (x8/x4/x4 vs. x16/x8/x8 with PLX), even with the added latency sometimes associated with the PCIe switches. Our testing over time will include the majority of the PCIe lane allocations on modern setups, but for our first article we are looking at the major ones we are likely to come across.

The results pages will start with a basic CPU analysis, running through my regular motherboard tests on the CPU. This should give us a feel for how much power each CPU has in dealing with mathematics and real world tests, both for integer operations (important on Bulldozer/Piledriver/Radeon) and floating point operations (where Intel/NVIDIA seem to perform best).

We will then move to each of our four gaming titles in turn, in our six different GPU configurations. As mentioned above, in GPU limited scenarios it may seem odd if a sub-$100 CPU is higher than one north of $300, but we hope to explain the tide of results as we go.

I hope this will be an ongoing project here at AnandTech, and over time we can add more CPUs, 4K testing, perhaps even show four-way Titan should that be available to us. The only danger is that on a driver or game change, it takes another chunk of time to get data! Any suggestions of course are greatly appreciated – drop me an email at ian@anandtech.com. Our next port of call will most likely be Haswell, which I am very much looking forward to testing.

242 Comments

TheQweaker - Friday, May 10, 2013 - link

Just in case, here is a pointer to the nVidia GPU AI Path finding in the developer zone: https://developer.nvidia.com/gpu-ai-path-finding

And here is the title of a 2011 GPU AI Planning paper (research; not yet in a game): "Exploiting the Computational Power of the Graphics Card: Optimal State Space Planning on the GPU". You should be able to find the PDF on the web.

My 2 cents is that it's a good topic for a final paper.

-- The Qweaker.

yougotkicked - Friday, May 10, 2013 - link

Thanks again, I think I will be doing GPU AI as my final paper, probably try to implement the A* family as massively parallel, or maybe a local beam search using hundreds of hill-climbing threads.

TheQweaker - Saturday, May 11, 2013 - link

Nice project.

2 more cents.

Keep it simple is the best advice. It's better to have a running algorithm than none, even if it's slow.

Also, ask your advisor whether he'd want you to compare with a CPU implementation of yours in order to evaluate the pros and cons between your sequential implementation and your // implementation. I did NOT write "evaluate gains from seq to //" as GPU programming is currently not fully understood, probably even not by nVidia engineers.

Finally, here is book title: "CUDA Programming: A Developer's Guide to Parallel Computing with GPUs". But there are many others these days.

OK. That w

TheQweaker - Saturday, May 11, 2013 - link

as my last post.

-- The Qweaker.

(sorry for the cut, I wrongly clicked on submit)

yougotkicked - Monday, May 13, 2013 - link

thanks a lot for all your input, I intend to evaluate not only the advantages of GPU computing, but its weak points as well, so I'll be sure to demonstrate the differences between a sequential algorithm, a parallel CPU algorithm, and a massively parallel GPU algorithm.

Azusis - Wednesday, May 8, 2013 - link

Could you test the Q6600 and i7-920 in your next roundup? I have many PC gaming friends, and we all seem to have a Q6600, i7-920, or 2500k in our rigs. Thanks! Great job on the article.

IanCutress - Wednesday, May 8, 2013 - link

I have a Q9400 coming in soon from family - Getting one of the Nehalem/Westmere range is definitely on my to-do list for the next update :)

sonofgodfrey - Thursday, May 9, 2013 - link

I too have a Q6600, but it would be interesting to see the high end (non-extreme edition) Core 2s as well: E8600 & Q9650. Just for yucks, perhaps a socket 775 Pentium 4 could also make an appearance? :)

gonks - Wednesday, May 8, 2013 - link

i knew it from some time ago, but this proves once again that it's time to upgrade my good old c2d (conroe) E6600 @ 3.2Ghz

Quizzical - Wednesday, May 8, 2013 - link

You've got a lot of data there. And it's good data if your main purpose is to compare a Radeon HD 7970 to a GeForce GTX 580. Unfortunately, most of it is worthless if you're trying to isolate CPU performance, which is the ostensible purpose of the article. You've gone far out of your way to try to make games GPU-limited so that you wouldn't be able to tell what the various CPUs can do when they're the main limiting factors.

Loosely, the CPU has to do any work to run a game that isn't done by the GPU. The contents of this can vary wildly from game to game. Unless you're using DirectX 11 multithreaded rendering, only one thread can communicate with the video card at a time. But that one rendering thread mostly consists of passing data to the video card, so you don't do much in the way of real computations there. You do sort some things so that you don't have to switch programs, textures, and so forth more often than necessary, though you can have a separate sorting thread if you're (probably unreasonably) worried that this is going to mean too much work for the rendering thread.

Actually determining what data needs to be passed to the video card can comprise the bulk of the CPU work that a game needs to do. But this portion is mostly trivial to scale to as many threads as you care to--at least within reason. It's a completely straightforward producer-consumer queue with however many "producer" threads you want and the rendering thread as the single "consumer" thread that takes the data set up by other threads and passes it along to the video card.
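A minimal sketch of that producer-consumer arrangement follows, in Python purely for readability. Everything in it is a stand-in: `run_frame` is a hypothetical name, the "draw" tuples replace real per-object setup work, and appending to a list replaces the call that hands data to the video card. Python threads also share one interpreter lock, so this shows the structure rather than a real speedup.

```python
import queue
import threading


def run_frame(objects, producer_count=4):
    """Several producer threads prepare work; one rendering thread consumes it."""
    work = queue.Queue()
    submitted = []  # stands in for data passed along to the video card

    def producer(chunk):
        for obj in chunk:
            work.put(("draw", obj))  # placeholder for real per-object setup

    def consumer():
        while True:
            item = work.get()
            if item is None:  # sentinel: all producers have finished
                break
            submitted.append(item)

    render_thread = threading.Thread(target=consumer)
    render_thread.start()

    # Split the scene between producers; any partition would do.
    chunks = [objects[i::producer_count] for i in range(producer_count)]
    producers = [threading.Thread(target=producer, args=(c,)) for c in chunks]
    for p in producers:
        p.start()
    for p in producers:
        p.join()

    work.put(None)
    render_thread.join()
    return submitted


print(len(run_frame(list(range(100)))), "items submitted")
```

Only the consumer ever touches `submitted`, mirroring the constraint that a single thread talks to the video card, while the number of producers can be changed freely.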

Not quite all of the work of setting up data for the GPU is trivial to break into as many threads as necessary, though. At the start of a new frame, you have to figure out exactly where the camera is going to go in that frame. This is likely going to be very fast (e.g., tens or hundreds of microseconds), but it does need to be done before you go compute where everything else is relative to the camera.

While I haven't programmed AI, I'd expect that you could likewise break it up into as many threads as you cared to, as you could "save" the state of the game at some instant in time and have separate threads compute what all AI has to do based on the state of the game at that moment, without needing to know anything about what other game characters were choosing at the same time. Some games are heavy on AI computations, while online games may do essentially no AI computations client-side, so this varies wildly from game to game.
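The snapshot idea can be sketched as follows. This is a toy under stated assumptions, not how any particular engine works: the world is a 1-D line, the rule in `decide` is invented, and `ai_step` is a hypothetical name.

```python
from concurrent.futures import ThreadPoolExecutor


def decide(snapshot, npc_id):
    """Toy rule: step one unit toward the player, reading only the snapshot."""
    position = snapshot["npcs"][npc_id]
    player = snapshot["player"]
    if position < player:
        return position + 1
    if position > player:
        return position - 1
    return position


def ai_step(state, workers=4):
    """Compute every NPC's next position from one frozen copy of the state."""
    snapshot = {"player": state["player"], "npcs": dict(state["npcs"])}
    ids = list(snapshot["npcs"])
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each decision depends only on the snapshot, so order does not matter.
        new_positions = list(pool.map(lambda i: decide(snapshot, i), ids))
    return {"player": state["player"], "npcs": dict(zip(ids, new_positions))}


state = {"player": 5, "npcs": {"a": 0, "b": 9, "c": 5}}
print(ai_step(state))
```

Because no worker writes to the snapshot, the result is the same whatever the number of workers, which is what makes this kind of work easy to spread across cores.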

A game engine may do a lot of other things besides these, such as processing inputs, loading data off of the hard drive, sending data over the Internet, or whatever. Some such things can't be readily scaled to many CPU cores, but if you count by CPU work necessary, few games will have all that much stuff to do other than setting up data for the GPU and computing AI.

But most of the work that a CPU has to do doesn't care what graphical settings you're using. Anything that isn't part of the graphics engine certainly doesn't care. The only parts of the CPU side of a game engine that care what monitor resolution you're using are likely to be a handful of lines to set the resolution when you change it and a few lines to check whether an object is off the camera and therefore doesn't need to be processed in that particular frame--and culling such objects is likely done mostly to save on the GPU load. Any settings that can be adjusted in video drivers (e.g., anti-aliasing or anisotropic filtering) are done almost entirely on the video card and carry a negligible CPU load.

Thus, if you're trying to isolate CPU performance, you turn down or off settings that don't affect the CPU load. In particular, you want a very low monitor resolution, no anti-aliasing, no anisotropic filtering, and no post-processing effects of any sort. Otherwise, you're just making the game mostly GPU bound, and end up with data that looks like most of what you've collected.

Furthermore, even if you do the measurements properly, there's also the question of whether the games you've chosen are representative of what most people will play. If you grab the games that you usually benchmark for video card reviews, then you're going out of your way to pick games that are unrepresentative. Tech sites like this that review hardware tend to gravitate toward badly-coded games that aren't representative of most of the games that people will play. If this video card gets 200 frames per second at max settings in one game and that video card gets 300, what's the difference in real-world game experience? If you want to differentiate between different video cards, you need games that are more demanding, and simply being really inefficient is one way to do that.

Of course, if you were trying to see how different CPUs affect performance in a mostly GPU-limited game, that can be interesting in an esoteric sense. It would probably tend to favor high single-threaded performance because the only differences you'd be able to pick out are due to things that happen between frames, which is the time that the video card is most likely to be forced to wait on the CPU briefly.

But if you were trying to do that, why not just use a Radeon HD 5450? The question answers itself.

If you would like to get some data that will be more representative of how games handle CPUs, then you'll need to do some things very differently. For starters, use just a single powerful GPU, to avoid any CrossFire or SLI weirdness. A GeForce GTX Titan is ideal, but a Radeon HD 7970 or GeForce GTX 680 would be fine. For that matter, if you're not stupid about picking graphical settings, something weaker like a Radeon HD 7870 or GeForce GTX 660 would probably work just fine. But you need to choose the graphical settings intelligently, by turning down or off any graphical settings that don't affect CPU load. In particular, anti-aliasing, anisotropic filtering, and all post-processing effects should be completely off. Use a fairly low monitor resolution; certainly no higher than 1920x1080, and you could make a good case for 1366x768.

And then don't pick your usual set of games that you use to do video card reviews. You chose those games precisely because they're outliers that won't give a good gauge of CPU performance, so they'll sabotage your measurements if you're trying to isolate CPU performance. Rather, pick games that you rejected from doing video card reviews because they were unable to distinguish between video cards very well. If the results are that in a typical game, this processor can deliver 200 frames per second and that one can do 300, then so be it. If a Core i5-3570K and an FX-6300 can deliver hundreds of frames per second in most games (as is likely if the game runs well on, say, a 2 GHz Core 2 Duo), then you shouldn't shy away from that conclusion.