AMD Comments on GPU Stuttering, Offers Driver Roadmap & Perspective on Benchmarking

by Ryan Smith on March 26, 2013 2:28 AM EST

For as long as I can remember talking about video cards and GPU performance at AnandTech, there has been debate over the type of benchmarks used to represent that performance. In the old days, the debate was mostly manufacturer driven. Curiously enough, the discourse usually fired up when one manufacturer was at a significant deficit in GPU performance. NVIDIA made a big deal about moving away from timedemos and average frame rates during the early GeForce FX (NV30) days, when its cards might have delivered a decent gaming experience but were slaughtered in most benchmarks. Even Intel advocated for a shift away from most CPU bound gaming benchmarks back during the early years of the Pentium 4 - again, for obvious reasons.

It’s a shame that these revolutions in gaming performance testing were always associated with underperforming products (and later dropped once the product stack improved in the next generation or two). It’s a shame because there has always been merit in introducing additional metrics in order to provide the most complete picture when it came to gaming performance.

The issue lay mostly dormant over the past several years. Every now and then there’d be a new attempt to revolutionize GPU performance testing, but most failed to gain widespread traction for one reason or another. Broad repeatability, one of the basic tenets of the scientific method, was usually cast aside in pursuit of a lot of these new attempts at performance testing - which ultimately limited acceptance.

A year and a half ago, Scott Wasson over at the Tech Report did something no one since Dr. Pabst was able to do: he actually brought about a revolution in the 3D game benchmarking scene.

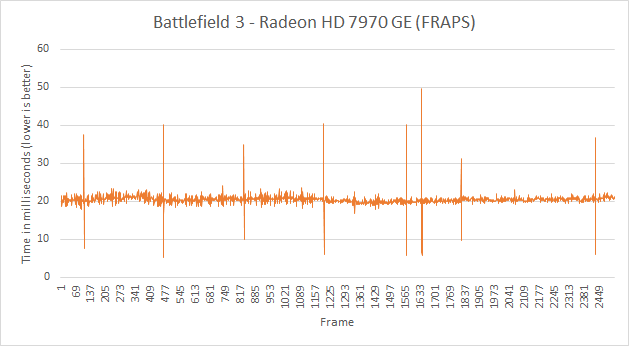

The approach seemed ridiculously simple - we’ve all had the tools for so very long. Scott used FRAPS to record frame times, and would calculate how long every frame in a benchmark took to render. By focusing on individual frame latencies, Scott’s method could better characterize the little hiccups and stutters that would get smoothed out in an average frame rate. With the new method came a bunch of nifty graphs, and the world changed.
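To make the difference between an average frame rate and per-frame latencies concrete, here is a minimal sketch in Python. It assumes a FRAPS-style log of cumulative frame timestamps in milliseconds. The function names (`frame_times`, `percentile`, `summarize`), the 25 ms spike threshold, and both sample runs are invented for illustration; this is not the Tech Report's actual tooling or data.

```python
import math
from itertools import accumulate
from statistics import mean


def frame_times(timestamps_ms):
    """Turn cumulative frame timestamps (ms) into per-frame durations (ms)."""
    return [later - earlier for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(timestamps_ms, spike_ms=25):
    """Summarize one run: average FPS plus a few frame-time statistics."""
    times = frame_times(timestamps_ms)
    return {
        "avg_fps": 1000.0 / mean(times),
        "p99_ms": percentile(times, 99),
        "worst_ms": max(times),
        "spikes": sum(1 for t in times if t > spike_ms),  # frames slower than spike_ms
    }


# Invented data: 100 frames per run, and both runs take 2000 ms in total.
smooth = list(accumulate([20] * 100, initial=0))      # every frame takes 20 ms
stutter = list(accumulate([10, 30] * 50, initial=0))  # frames alternate 10 ms / 30 ms

for name, run in (("smooth", smooth), ("stutter", stutter)):
    print(name, summarize(run))
```

Both hypothetical runs average 50 frames per second, yet the 99th percentile frame time is 20 ms for the smooth run and 30 ms for the alternating one, and half of the alternating run's frames exceed the 25 ms threshold. That gap is the kind of information an average frame rate hides.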

The methodology wasn’t perfect, as FRAPS lacks a holistic view of the 3D rendering pipeline, but it did reveal some surprising issues (in addition to spawning further work that uncovered even more issues on the multi-GPU front). Interestingly enough, many of the issues uncovered by this focus on frame times/latency seemed to primarily impact AMD hardware.

AMD remained curiously quiet as to exactly why its hardware and drivers were so adversely impacted by these new testing methods. While our own foray into evolving GPU testing will come later this week, we had the opportunity to sit down with AMD to understand exactly what’s been going on.

Although the result is neither strictly a defense nor merely an explanation of what we’ve been seeing over the past year, AMD wanted to sit down and better explain its position. This includes both why AMD’s products have been impacted in the manner they were, and why at the same time (and not unlike NVIDIA) AMD is worried about FRAPS being given more weight than it should be. Ultimately AMD believes that it’s to the benefit of buyers and journalists alike to better understand just what is happening, why it’s happening, and just what the most common tools can measure and are actually measuring.

What follows is based on our meeting with some of AMD's graphics hardware and driver architects, where they went into depth on all of these issues. In the following pages we’ll get into a high-level explanation of how the Windows rendering pipeline works, why this leads to single-GPU issues, why this leads to multi-GPU issues, and what various tools can measure and see in the rendering process.

103 Comments

mi1stormilst - Tuesday, March 26, 2013

All of us will benefit from the light shed on the subject with better testing and companies paying closer attention to issues and workarounds related to the subject. Still we would not even be talking about better testing methods right now without the attention it got from The Tech Report. I look forward to more sites implementing some type of real world testing methods that result in a true user experience evaluation. I reread the article and still stand by my original conclusion. The Tech Report gets credit, but rather than stopping there this article seems to attack their methodology when they themselves had already admitted that it was less than perfect. To date there are still not better tools being used for reviews and The Tech Report still got the point across with what was available. I am a huge fan of what they did over there as I could not pinpoint why my AMD experience was less than optimal. It forced me to retire my 6950 early, grab a very affordable 660 OC and enjoy a much smoother game experience. This is my first nVidia card since my trusty 4200ti and I am not looking back until AMD is on par with nVidia in the stuttering department...it was literally making me motion sick )-:

SPBHM - Tuesday, March 26, 2013

"holding back one frame but not another can sometimes make the frame display evenly, but from a simulation step only a few milliseconds after the previous step"

wouldn't this also happen with the single GPU "heartbeat stuttering"?

BrightCandle - Tuesday, March 26, 2013

Yes it would, which is exactly the problem with the heartbeat pattern that AMD's problem causes. You can deliver the frames evenly out to the monitor but their contents have a noticeable stutter due to the graphics driver accepting the frames unevenly. The heartbeat is a sign of a real problem without a doubt, all non-smooth frame time captures are. What they are not is a sign that the DVI monitor is seeing frames at those periods, but then no one ever said that was what was being measured anyway.

The best way to think about it is that this is the problem going into the pipeline, measuring the output also needs to be done to get the smoothness on the output. Only with both can you understand the impact. We have half the picture, and that half is accurately measured by fraps.

Gunbuster - Tuesday, March 26, 2013

Design and launch a product. Ignore user feedback.

Did we forget about those people with $2000 laptops sporting AMD mobile card drivers that didn't work correctly for over a year due to some bug with the graphics switching MUX? This seems to be a pattern that revolves around AMD software people being wholly out of their depth, overworked, or just not caring. They don’t even seem to be able to figure out when they have a fix. The laptop GPU story here on AT was presented as AMD sending over beta drivers and asking “Did we fix it this time?”

rootheday - Tuesday, March 26, 2013

One minor correction to the description of the submission of commands through the stack - the DirectX runtime under Windows Vista and later does NOT accumulate a frame's worth of draw calls before sending them to the UMD. I believe it sends state and draw calls to the UMD immediately.

The UMD accumulates commands in the command buffer and flushes them to the KMD either when a present call occurs, when the command buffer is full, or when the application requests to read back the results of enqueued rendering (Map/Lock/read Query result).

It used to be true under Windows XP that the dx runtime accumulated calls and dispatched them to the driver - but that is because in XP, the driver ran in kernel mode and it was too expensive to make the user mode->kernel mode transition on every "SetState", etc call.

tynopik - Tuesday, March 26, 2013

"frame latter than it would have" -> later (pg 3)

cactusdog - Tuesday, March 26, 2013

As a long time ATI/AMD fan this report doesn't fill me with confidence. It appears AMD is using anandtech for their public relations spin on the stuttering issue. I don't blame anandtech for running the story, AMD's comments are newsworthy and anandtech deserves credit for being honest about AMD's intentions. On the negative side, the explanation about fraps not being an effective tool only needs to be said once, it seems (by the number of times it was mentioned) that AMD's message is to make sure everyone knows Fraps is not accurate, but doesn't explain why Nvidia performs better.

On the issue, it sounds like AMD is conceding and preparing us for much of the same. Nowhere in the explanation do they mention why Nvidia performs better in the latency tests, other than to say it's not what the end user is seeing. Well I disagree, users have been complaining about stuttering for years. I just don't believe that AMD have never looked into this issue before. Also with the multi-gpu stuttering. It has been an issue since crossfire/SLI first appeared and nothing has really happened there.

I'm a fan of AMD cards but I use both brands and personally I have noticed Nvidia do a better job with latency and general responsiveness in game, whereas ATI/AMD has the edge with image quality. It's subtle, and probably not something the average user notices but a lot of people do notice. If AMD can solve this issue they would sell many more cards but by the sounds of this article, it's too big and complex for them to solve completely without major work. Hence the excuses. Nvidia has to play by the same rules, the same OS etc and they do a better job at latency/stuttering, hopefully AMD can fix it enough to at least perform as well as an NVidia card.

WaltC - Tuesday, March 26, 2013

"NVIDIA made a big deal about moving away from timedemos and average frame rates during the early GeForce FX (NV30) days, when its cards might have delivered a decent gaming experience but were slaughtered in most benchmarks."

Well, that's not really what happened at all...;) The chip "slaughtering" everything nVidia made in those days was the ATi R300. Seems rather strange to tell just half of that story. And the problem nVidia had with benchmarks wasn't technical--it was that nVidia was found to be actively cheating in 3dMark (camera on rails), among other cheats/shortcuts/optimizations in their drivers. The benchmarks told a story nVidia couldn't abide, and that was how much better the R300 was than anything nVidia had at the time. R300 was in every sense a revolution in the 3d gpu markets, blowing everything else away. All gpus on the market today are descended from R300 (just as all Intel and AMD x86 cpus are descended from AMD's original 64-bit Opterons.) nVidia did eventually own up to all of it, right before cancelling the nV30 after a month or two in production, however. People kept publishing proof after proof of what nVidia was doing until finally the company said "uncle." nVidia has been a better company since, imo. At least, its products are certainly better.

I'm using a single ATi gpu and over the last few years I have to say that I haven't seen any stuttering worth mentioning. Whenever I have seen stuttering it is usually due to some software condition or other, and rectified by the appropriate patch. I do appreciate your pointing out that Fraps isn't perfect and I think TR should stop pretending that it is. Fraps as you point out was never intended to measure this kind of latency and so using it to produce data other than frame-rate data is an "off-label" use of the program, imo. And also as you point out, I use vsync more often than not.

Really, though, I would loathe seeing AMD optimizing its drivers just to look better in TR's off-label Fraps usage...!...;) Let's hope that doesn't happen as I got quite a belly full of that sort of thing back in the nV30 days--enough to last me a lifetime.

beginner99 - Tuesday, March 26, 2013

How can FRAPS detect any vendor-specific stuttering if it injects itself before the gpu-driver is called?

The second thing is that v-sync is just crap. I'm not a professional gamer, not even close but in certain games turning it off made me a much better player and the difference is huge. Even more annoyingly it was not directly noticeable. I did not "feel" anything changed. Except that my stats were better. Tearing and stuttering: no issue for me so far.

DanNeely - Tuesday, March 26, 2013

The timing at the point it's measuring is normally blocked until the queue the GPU is feeding from has an open slot?