AMD Comments on GPU Stuttering, Offers Driver Roadmap & Perspective on Benchmarking

by Ryan Smith on March 26, 2013 2:28 AM EST

Just What Is Stuttering?

Now that we’ve seen a high-level overview of the rendering pipeline, we can dive into the subject of stuttering itself.

What is stuttering? In practice it’s any rendering anomaly that occurs that causes the time between frames to noticeably vary. This is admittedly a very generic definition, but it’s also a definition necessary to encompass all the different causes of stuttering.

We’ll get into specific scenarios of single-GPU and multi-GPU stuttering in the following pages, but briefly, stuttering can occur at several different points in the rendering pipeline. If the GPU takes longer to render a frame than expected – keeping in mind it’s impossible to accurately predict rendering times ahead of time – then that would result in stuttering. If a driver takes too long to prepare a frame for the GPU, backing up the rendering pipeline, that would result in stuttering. If a game simulation step takes too long and dispatches a frame later than it would have, or simply finds itself waiting too long before Windows lets it submit the next frame, that would result in stuttering. And if the CPU/OS is too busy to service an application or driver as soon as it would like, that would result in stuttering. The point of all of this being that stuttering and other pacing anomalies can occur at different points of the rendering pipeline, and become the responsibility of different hardware and software components.

Complicating all of this is the fact that Windows is not a real-time operating system, meaning that Windows cannot guarantee that it will execute any given command within a certain period of time. Essentially, Windows will get around to it when it can. In order to achieve the kind of millisecond-level response time that applications and drivers need to ensure smoothness, Windows has to be overprovisioned to make sure it has excess resources. This is part of the reason the context queue exists in the first place: to serve as a buffer for when Windows can’t get the next frame passed down quickly enough.

Ultimately, while Windows will make a best effort to get things done on time, the fact of the matter is that between the OS and the fact that PCs are composed of widely varied hardware, the software/hardware stack makes it virtually impossible to eliminate stuttering. Through careful profiling and optimization it’s possible to get very close, but as the PC is not a fixed platform, developers cannot count on any frame or any specific draw call being completed within a certain amount of time. For that kind of rendering pipeline consistency we’d have to look towards fixed platforms such as game consoles.

Moving on, stuttering is usually – though not always – a problem particular to gaming with v-sync disabled. When v-sync is enabled it places a hard floor on how often frames are presented to the user. For a typical 60Hz monitor this would mean there would be an interval of no shorter than 16.6ms, and in multiples of 16.6ms beyond that.

The significance of this is that if a game can consistently simulate and render at more than 60fps, v-sync effectively limits it to 60fps: once the context queue fills up, the application is blocked from submitting any further frames until the next scheduled frame is displayed. This fixed 16.6ms cycle makes it very easy to schedule frames and will typically minimize any stuttering. Of course v-sync also adds latency to the process since we’re now waiting on the GPU buffer to swap.
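To put numbers on the v-sync behavior described above, here is a minimal sketch of how a frame's display interval gets rounded up to a whole number of refresh periods. It is a deliberately simplified model of our own: it assumes a 60Hz display, treats each frame in isolation, and ignores the context queue and triple buffering. The function name `vsync_display_interval_ms` is hypothetical and not part of any real API.

```python
import math

REFRESH_HZ = 60.0
REFRESH_INTERVAL_MS = 1000.0 / REFRESH_HZ  # roughly 16.67 ms at 60Hz


def vsync_display_interval_ms(render_time_ms, refresh_interval_ms=REFRESH_INTERVAL_MS):
    """Round a frame's render time up to the next whole refresh period.

    Simplified model (an assumption for illustration): a finished frame
    always waits for the next refresh before it can be shown.
    """
    # The small epsilon keeps floating-point noise from pushing an exact
    # multiple of the refresh interval into the following refresh.
    periods = max(1, math.ceil(render_time_ms / refresh_interval_ms - 1e-9))
    return periods * refresh_interval_ms


for render_ms in (10.0, 20.0, 40.0):
    shown = vsync_display_interval_ms(render_ms)
    print(f"{render_ms:.0f} ms render -> {shown:.2f} ms on screen")
# 10 ms render -> 16.67 ms on screen
# 20 ms render -> 33.33 ms on screen
# 40 ms render -> 50.00 ms on screen
```

Under this model a frame that misses a refresh by even a few milliseconds is held for a full extra period, which is why intervals with v-sync enabled fall on multiples of the refresh interval.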

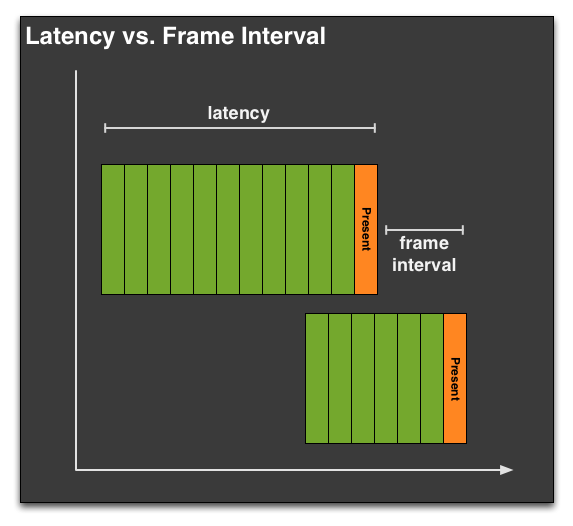

Throwing a few more definitions out before we move on, it’s important we differentiate between latency and the frame interval. Though latency gets thrown around as the time between frames, within the world of computer science and graphics that is not accurate, as latency has a different definition. Latency in this case is how long the entire rendering pipeline takes from start to end – from the moment the user clicks to the moment the first frame showing a response is displayed to the user. Most readers are probably more familiar with this concept as input lag, as latency in the rendering pipeline is a significant component of input lag.

Latency is closely related to, but not identical to, the frame interval. Unlike latency, the frame interval is merely the time between frames, typically defined as the time (interval) between frames being displayed at the end of the rendering pipeline by the GPU performing a buffer swap. The two usually track each other, but thanks to the context queue it’s possible (and sometimes even likely) for a frame to go through the rendering pipeline with a high latency, while still being displayed at a consistent frame interval. For that matter the opposite can also happen.

When we’re looking at stuttering, what we’re really looking at is the frame interval rather than the latency. It’s possible to measure the latency separately, but whether it’s a software tool like FRAPS or something brute-force such as using a high-speed camera to measure the time between frames, what we’re seeing is the frame interval or a derivation thereof. The context queue means that the frame interval is not equivalent to the latency.
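The distinction between the two measurements can be sketched with a few lines of arithmetic. The timestamps below are made-up values chosen for illustration, not measurements, and the helper names are our own. The frame interval only needs the display times, while latency also needs to know when each frame's input was sampled.

```python
def frame_intervals_ms(display_times_ms):
    """Time between consecutive frames reaching the display."""
    return [later - earlier
            for earlier, later in zip(display_times_ms, display_times_ms[1:])]


def latencies_ms(input_times_ms, display_times_ms):
    """Time from each frame's input being sampled to that frame being displayed."""
    return [shown - sampled
            for sampled, shown in zip(input_times_ms, display_times_ms)]


# Hypothetical timestamps in milliseconds for four frames.
input_times = [0, 10, 40, 50]      # when the game sampled input for each frame
display_times = [50, 67, 83, 100]  # when each frame was actually shown

print(frame_intervals_ms(display_times))         # [17, 16, 17]
print(latencies_ms(input_times, display_times))  # [50, 57, 43, 50]
```

In this made-up trace the frame interval is nearly constant while latency swings between 43ms and 57ms, which is the kind of divergence the context queue makes possible. A tool that only records display or present times would report the first list and never see the second.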

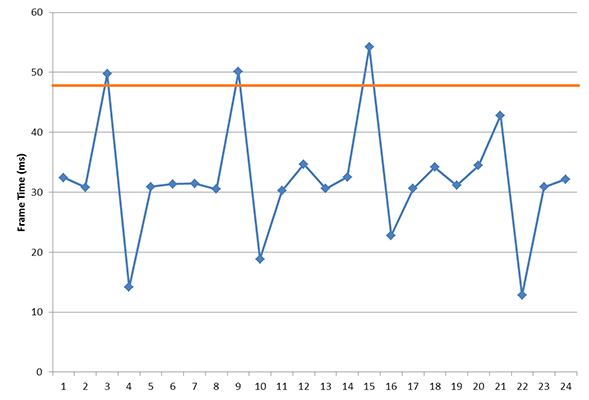

Finally, in our definition of stuttering we also need to somehow define when stuttering becomes apparent. Like input lag and other visual phenomena, there exists a point where stuttering is or isn’t visible to any given user. As we’ve already established that it’s virtually impossible to eliminate stuttering entirely on a variable platform like the PC, stuttering will always be with us to some degree, particularly if v-sync is disabled.

The problem is that this threshold is going to vary from person to person, and as such the idea of what an acceptable amount of stuttering would be is also going to vary depending on who you ask. If a frame takes 5ms longer than the previous, is that going to be noticeable? 10ms? 30ms? And what if this is at 30fps versus 60fps?
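To make those questions concrete, the sketch below expresses an extra delay as a fraction of the nominal frame interval, and flags frames whose interval jumps by more than a chosen threshold. The threshold values are arbitrary placeholders, since there is no agreed-upon cutoff, and the function names are hypothetical.

```python
def relative_jump(extra_ms, fps):
    """Extra frame time as a fraction of the nominal frame interval at a given frame rate."""
    nominal_ms = 1000.0 / fps
    return extra_ms / nominal_ms


def flag_stutter(intervals_ms, threshold_ms):
    """Indices of frames whose interval exceeds the previous interval by more than threshold_ms."""
    return [i for i in range(1, len(intervals_ms))
            if intervals_ms[i] - intervals_ms[i - 1] > threshold_ms]


# The same 5 ms of extra frame time is a larger relative change at 60fps than at 30fps.
print(f"{relative_jump(5, 60):.0%}")  # 30%
print(f"{relative_jump(5, 30):.0%}")  # 15%

# Made-up frame intervals in milliseconds; what gets flagged depends entirely on the threshold.
intervals = [16, 17, 30, 16, 22]
print(flag_stutter(intervals, threshold_ms=10))  # [2]
print(flag_stutter(intervals, threshold_ms=5))   # [2, 4]
```

The same trace produces one flagged frame or two depending on where the line is drawn, which is the measurement side of the problem. Whether a viewer would notice either of them is a separate question that this kind of arithmetic cannot answer.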

The $64K question: where is the cutoff for "good enough" stutter?

In our discussion with AMD, AMD brought up a very simple but very important point: while we can objectively measure instances of stuttering with the right tools, we cannot objectively measure the impact of stuttering on the user. We can make suggestions for what’s acceptable and set common-sense guidelines for how much of a variance is too much – similar to how 60fps is the commonly accepted threshold for smooth gameplay – but nothing short of a double-blind trial will tell us whether any given instance of stuttering is noticeable to any given individual.

AMD didn’t have all of the answers to this one, and frankly neither do we. Variance will always exist and so some degree of stuttering will always be present. The only point we can really make is the same point AMD made to us, which is that stuttering is only going to matter when it impacts the user. If the user cannot see stuttering then stuttering should no longer be an issue, even if we can measure some small degree of stuttering still occurring. Like input lag, framerates, and other aspects of rendering, there is going to be a point where stuttering can become “good enough” for most users.

103 Comments

Tuvok86 - Tuesday, March 26, 2013 - link

This is a great victory for all of the tech press. When people started complaining about stuttering years ago we were only dreaming of getting so much attention from GPU brands.

I still remember someone constantly saying "micro-stuttering doesn't exist", I wonder how they feel now that they enjoy the fps and smoothness benefits.

In any case I praise constructive journalism that triggered a significant leap in the technology.

BrightCandle - Tuesday, March 26, 2013 - link

One important fact I feel is missing in your treatment of what it is Fraps is measuring and why it's more representative of problems than you and AMD think it is. For some reason everyone who makes this argument that Fraps isn't very useful seems to skip this one, but it's really really important.

Fraps measures at the present call and that isn't a random choice, because the present call has a few different modes of operation, but all games use blocking mode. What that means is that if the context queue is full (which it normally is) then the game thread is held up waiting for that present call to complete. Subsequent present calls are regulated by the GPU's driver in this case, as the thread is held, and only when the driver chooses to accept the completion of that frame can the game's thread continue. Since Fraps is measuring this it can see when the driver is accepting frames in an uneven fashion, so while you might see even frames presented to the monitor due to the buffering there is still a knock-on effect.

Game simulations produce particular frames of their simulation, sometimes in the same thread as the present call and sometimes in a different thread. Regardless, they use the release of the present call as the end of their rendering step, and that allows another frame to be started or delivered. So if the present calls are coming back unevenly the game simulation itself will stutter as it tries to produce as many simulation steps as the rendering is producing. If the present calls are stuttering there is a feedback loop into the game simulation that causes it to stutter too.

It's this feedback loop on the rendering and game simulation which causes much of the problem, and it starts in the GPU driver. It might very well be caused by Windows, but the big difference we see in the manufacturers' solutions tells us that it's almost entirely the manufacturer's fault when it happens and impacts gameplay.

So quite rightly fraps does not measure stuttering out to the screen, it measures the GPUs regulation of the frame rate of the game rendering and its simulation and that does cause real stuttering, both of the subsequent present calls and the game simulation.

Of course pcperspective have now shown that AMD's SLI stuttering out the DVI port is considerably worse than Fraps, so much so they considered what they are doing is a cheat as the frames aren't real. But you need both perspectives, the output and the input to the pipeline, to see the impact on the game. It's not just the frames themselves that have to be regulated to be smooth, it's also the game simulation that must run smoothly, and it is regulated by the handling of the context queue.

JPForums - Tuesday, March 26, 2013 - link

There are two things you need to keep in mind:

1) Nvidia also agrees with the limitation of FRAPS. In fact, IIRC they were the first to voice the issue that FRAPS recordings are in the wrong place and can only infer what actually needs to be recorded. The author is correct, when Ati and Nvidia agree, we should at least pay attention.

2) Though your points are AFAIK correct and well articulated, they still point to the issue of FRAPS inferring, rather than recording, the targeted information. The difference is, rather than consistency of output frames, you are looking for consistency of simulation steps. I agree that this is a metric that really needs to be covered. In fact, I would even go as far as matching simulation steps to their corresponding frame times to expose issues when short steps are accompanied by long frames or vice versa.

Unfortunately, FRAPS can't measure any of this directly and even for your points proves to be limited to inference. That said, until a reviewer gets tools that can reveal this information, inference via FRAPS is better than no information at all. Pcperspective's comments on AMD's stuttering issues are related (as they state) to crossfire setups. I could see the differences between CF and SLI in blind tests (though SLI also has some microstutter) and this only confirms it. The runt frames only add fuel to the fire. I'm open to using AMD in single GPU builds, but only use Nvidia for multiGPU builds. Perhaps this will change in July, but I'm guessing there will still be plenty of work to do.

JPForums - Tuesday, March 26, 2013 - link

I should probably expand a little on what I consider a limitation of FRAPS for stutter caused by simulation steps. FRAPS inserts itself at the output of the render and is therefore subject to a variable delay between the simulator time step through the render. Important information can still be inferred, like simulation stutter in AMD's heartbeat waveform. However, I'd still rather get a timestamp directly at the output of the simulator rather than at the output of the renderer, if it ever becomes an option. Unfortunately, that would probably require cooperation with the game developer, so I'm not sure that will ever happen.

tipoo - Tuesday, March 26, 2013 - link

The third page makes me wonder, just how much would a real time operating system improve performance? QNX on BB10 is real time, the PS4 OS may be too.

juampavalverde - Tuesday, March 26, 2013 - link

Time to update the GPU review template guys... At least copy&paste PCPer and TechReport methods.

cjb110 - Tuesday, March 26, 2013 - link

Sounds like there's a market for a tool then, something that does what GPUView does but in a simpler manner (like Fraps presents).

drbaltazar - Tuesday, March 26, 2013 - link

Sadly the issue they find isn't exactly caused by the GPU! It is at the OS end! Data fragmentation at various levels is often the cause, and this happens everywhere, from the processor cache level to the server cache level! MS says it doesn't matter! They're wrong! It affects everything related to image quality. Bufferbloat also is the main problem. MTU, UDP fragmentation, multithreading and RSS fragmentation etc etc etc! Oh, they say they can reconstruct the data in the proper manner that won't impact performance or quality! Again MS is either wrong or unknowing of the problems these various issues cause. I haven't even started on the GPU side yet! All that data manipulation etc is the main issue! How to fix it? Mm! Probably use an official standard limit like the 1460 for MTU and add UDP to that also so that it is also at 1460. (Just a random example, because these will need to be tweaked. Why? So that packets don't get fragmented anywhere in the computer or the server.) Or they tell people how to make it happen, because right now not many have 1080p quality even though most have a 1080p monitor. So imagine if AMD is using Windows ideas to tweak their GPU? Like .NET 4 etc! (Yep, it becomes a nightmare.) Hopefully they'll fix this, but all sides have been in a race for performance. (Wouldn't want to sell an equally performing W8 instead of W7. It wouldn't sell!) I am all for getting better performance but not at the expense of subpixel quality of graphics. Nvidia is probably better because they noticed MS's error and have worked to avoid the OS mistake by using standard and proper ways. I ain't saying MS is wrong, maybe they can really fragment packets and have everything being fine and dandy looking in 1080p. But I will tell you this. In most areas of computing it feels like this: the OS is saying 255.0.0 and at the other end for some reason it's like our old phone game, at the other end what is being done isn't at all what the OS said at the beginning (and vice versa). Hopefully these ideas of new data mining and testing tools will go deeper and test what is actually going on in our computer, network and server datapath so they all can work together. Because right now? Our games look 1080i even though we are all set at 1080p.

mi1stormilst - Tuesday, March 26, 2013 - link

I love you guys, but this article comes off a bit like sour grapes. The Tech Report dove into this issue head first and admitted from the beginning the testing methods may not be perfect. They have continued to be clear on this and you made no mention of the high speed video tests that they performed. The bottom line is The Tech Report is primarily responsible for getting AMD to get on the ball with this issue. Regardless of AMD's bag of excuses and their sudden clarity on the best methods for testing we would not be where we are without the solid work of The Tech Report. I feel that if the FRAPS method of testing was sufficient for bringing these issues to light then a job well done. The situation will only improve from there and Scott Wasson and company deserve more praise than this sour attempt of an article to discredit the good work they have done. If that was not your intention then I apologize, but it comes off as such.

brybir - Tuesday, March 26, 2013 - link

I did not see it this way at all. Instead, I read it as TechReport started a trend in evaluating stuttering that most were not looking for, and that while there is some merit to their methods, there are other better ways of evaluating the issue. I did not see any effort to hide, obscure, or otherwise show "sour grapes" to them for their testing.

As to the merit of the article, if AMD, Nvidia, and Anandtech folks all agree that the methods used by TechReport are okay but could be improved upon with better tools, then the end result will be better for everyone. Much as standard benchmarking software has evolved a lot over the last decade, the benchmarking for this type of testing will change dramatically as people find interesting and new ways to really get in depth with the issue and generate data that is easy to aggregate and report. I think that is a net benefit for all of us!